airhacks

airhacks.com communication repository

Stars: 69

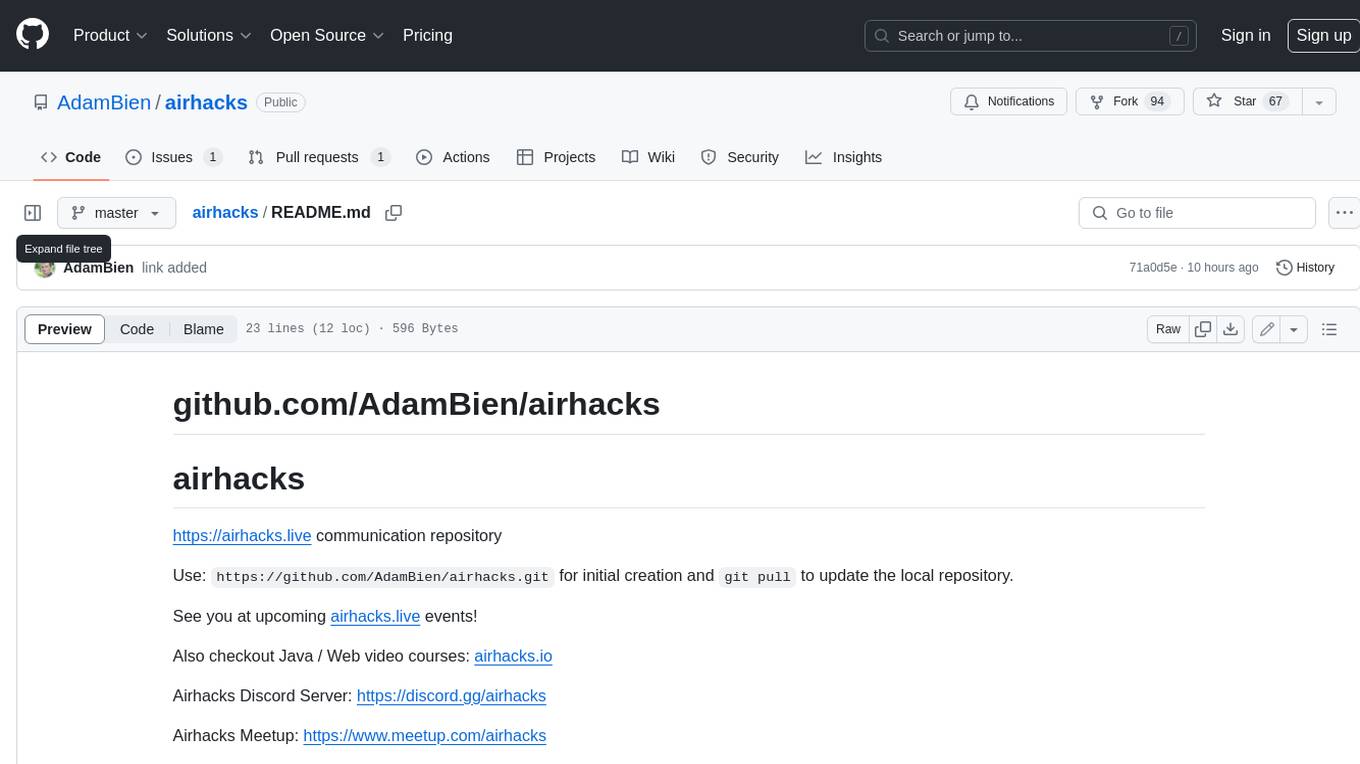

This is the communication repository for airhacks.live events. Use `https://github.com/AdamBien/airhacks.git` for the initial clone and `git pull` to keep the local repository up to date. Airhacks Discord Server: https://discord.gg/airhacks, Airhacks Meetup: https://www.meetup.com/airhacks, Adam Bien / Airhacks links: https://airhacks.industries

README:

https://airhacks.live communication repository

Use `https://github.com/AdamBien/airhacks.git` for the initial clone and `git pull` to update the local repository.
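
The clone-then-pull workflow described above can be sketched in shell as follows. This is a minimal sketch that assumes `git` is installed; the target directory name `airhacks` and the helper function `sync_airhacks` are hypothetical and do not come from the README.

```shell
#!/bin/sh
# Repository URL taken from the README.
REPO_URL="https://github.com/AdamBien/airhacks.git"
# Assumption: local directory name; the README does not specify one.
TARGET_DIR="airhacks"

# Hypothetical helper: clone on first use, pull on later runs.
sync_airhacks() {
  if [ -d "$TARGET_DIR/.git" ]; then
    # A local copy already exists: fetch and merge the latest changes.
    git -C "$TARGET_DIR" pull
  else
    # No local copy yet: create it from the remote.
    git clone "$REPO_URL" "$TARGET_DIR"
  fi
}
```

Calling `sync_airhacks` either creates the local copy or brings an existing one up to date, so the same command can be reused before each event.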

See you at upcoming airhacks.live events!

Also check out the Java / Web video courses: airhacks.io

Airhacks Discord Server: https://discord.gg/airhacks

Airhacks Meetup: https://www.meetup.com/airhacks

Adam Bien / Airhacks links: https://airhacks.industries

https://github.com/mukel/llama3.java

https://docs.anthropic.com/en/docs/build-with-claude/embeddings

https://platform.openai.com/tokenizer

https://docs.langchain4j.dev/tutorials/tools/

https://docs.anthropic.com/en/docs/build-with-claude/tool-use

https://github.com/jbellis/jvector

https://central.sonatype.com/artifact/dev.langchain4j/langchain4j-document-parser-apache-tika

Similar Open Source Tools

gcloud-aio

This repository contains the shared codebase for two projects: gcloud-aio and gcloud-rest. gcloud-aio is built for Python 3's asyncio, while gcloud-rest is a threadsafe requests-based implementation. It provides clients for Google Cloud services such as Auth, BigQuery, Datastore, KMS, PubSub, Storage, and Task Queue. Users can install the library using pip and refer to the documentation for usage details. Developers can contribute to the project by following the contribution guide.

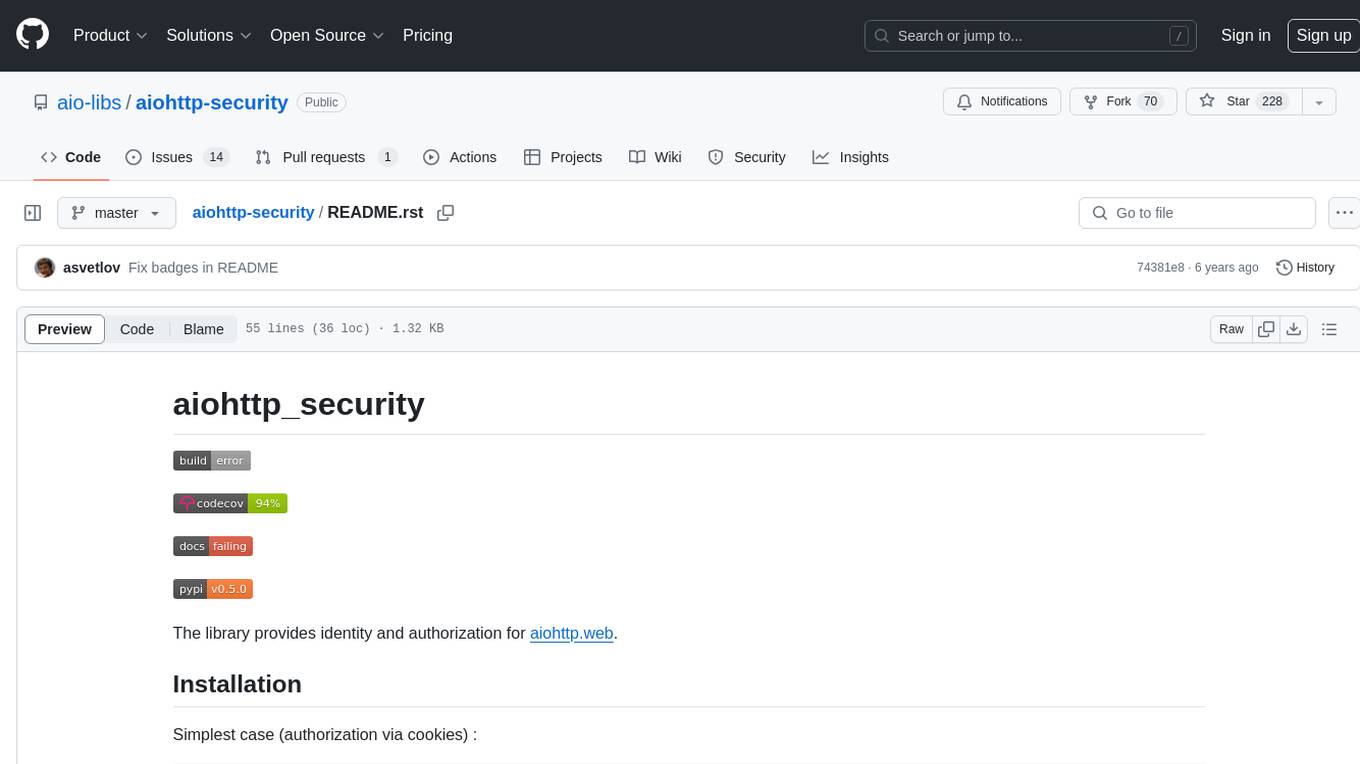

aiohttp-security

aiohttp_security is a library that provides identity and authorization for aiohttp.web. It offers features for handling authorization via cookies and supports aiohttp-session. The library includes examples for basic usage and database authentication, along with demos in the demo directory. For development, the library requires installation of specific requirements listed in the requirements-dev.txt file. aiohttp_security is licensed under the Apache 2 license.

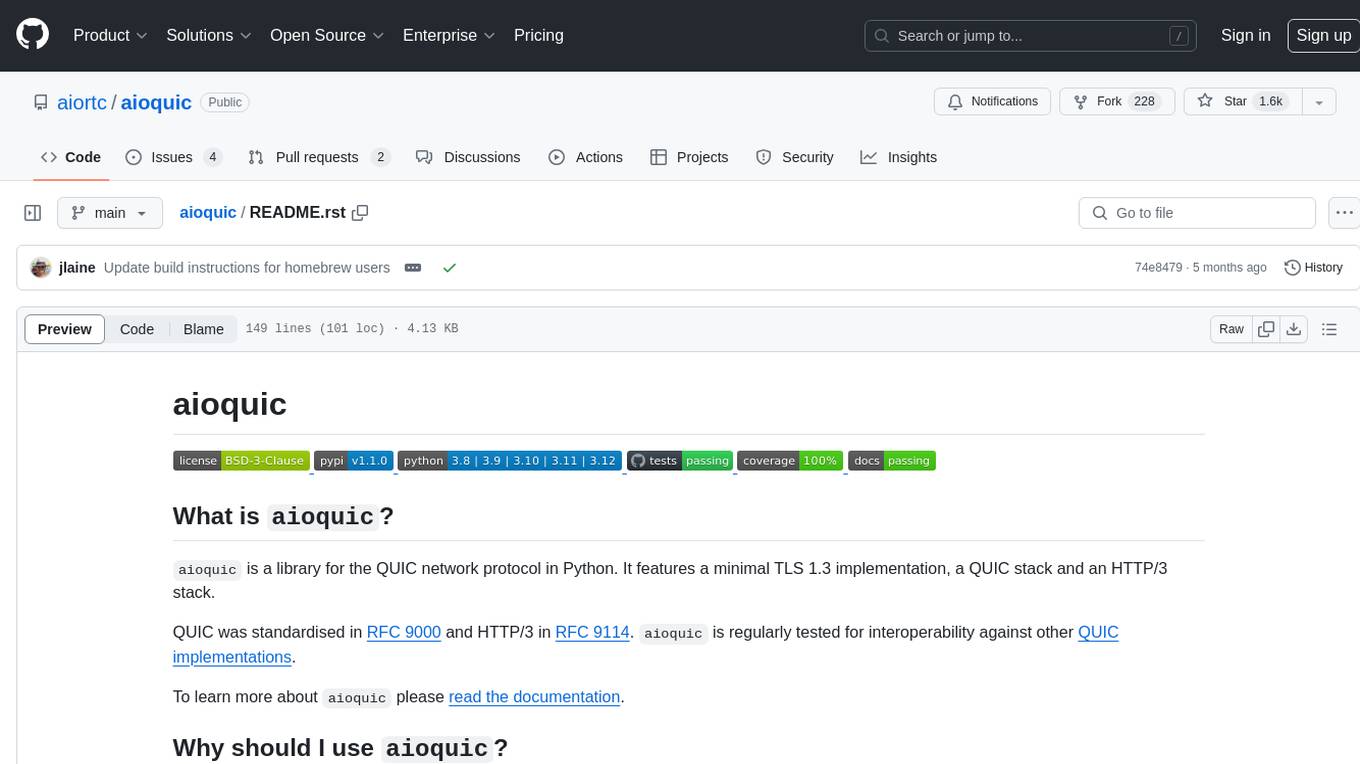

aioquic

aioquic is a Python library for the QUIC network protocol, featuring a minimal TLS 1.3 implementation, a QUIC stack, and an HTTP/3 stack. It is designed to be embedded into Python client and server libraries supporting QUIC and HTTP/3, with IPv4 and IPv6 support, connection migration, NAT rebinding, logging TLS traffic secrets and QUIC events, server push, WebSocket bootstrapping, and datagram support. The library follows the 'bring your own I/O' pattern for QUIC and HTTP/3 APIs, making it testable and integrable with different concurrency models.

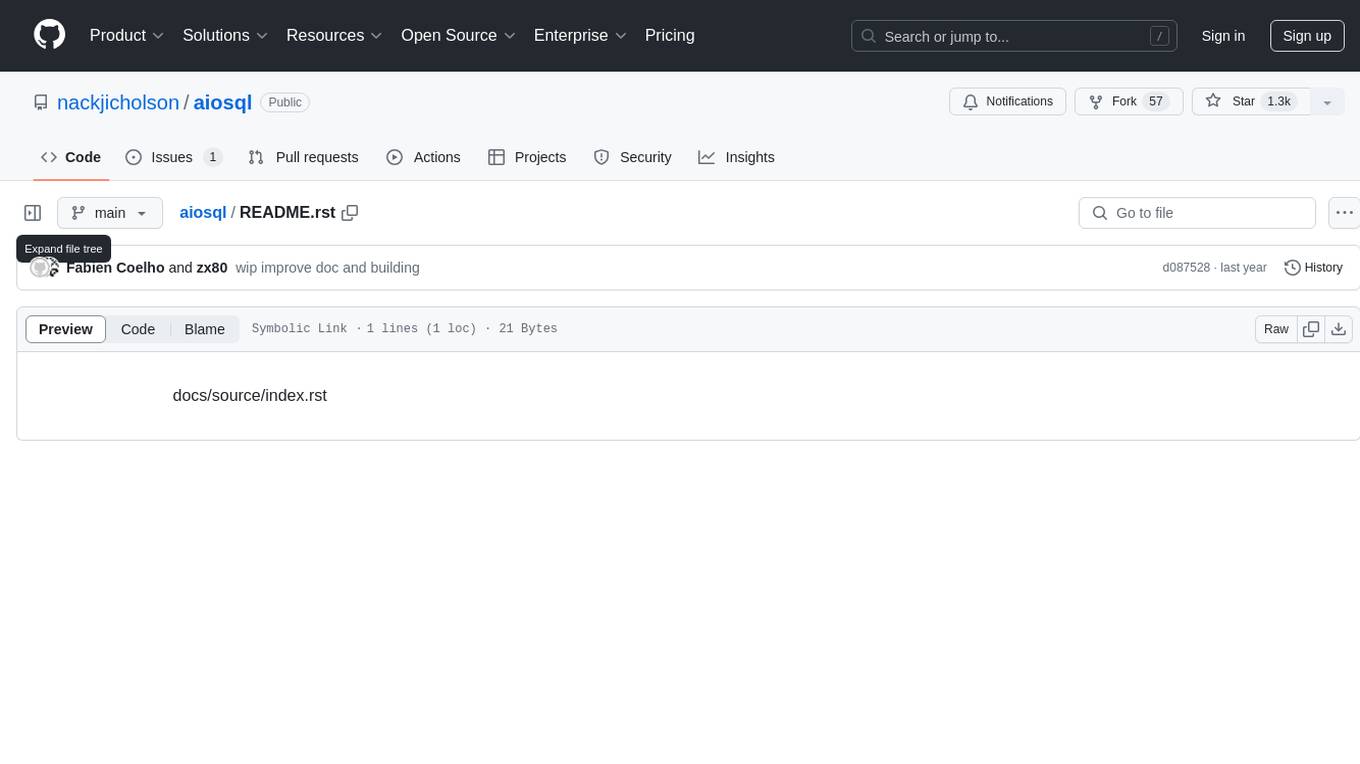

aiosql

aiosql is a Python module that lets you organize SQL statements in .sql files and load them into your Python application as callable methods. It supports database drivers for SQLite, PostgreSQL, MySQL, MariaDB, and DuckDB. The project is a port of Kris Jenkins' yesql library to the Python ecosystem, so the same SQL code can be reused in SQL GUIs or CLI tools. With aiosql, you can write, version control, comment, and run SQL code in files that remain usable like any other SQL file. It supports PEP 249 and asyncio-based drivers, enabling users to execute parametric SQL queries from Python methods.

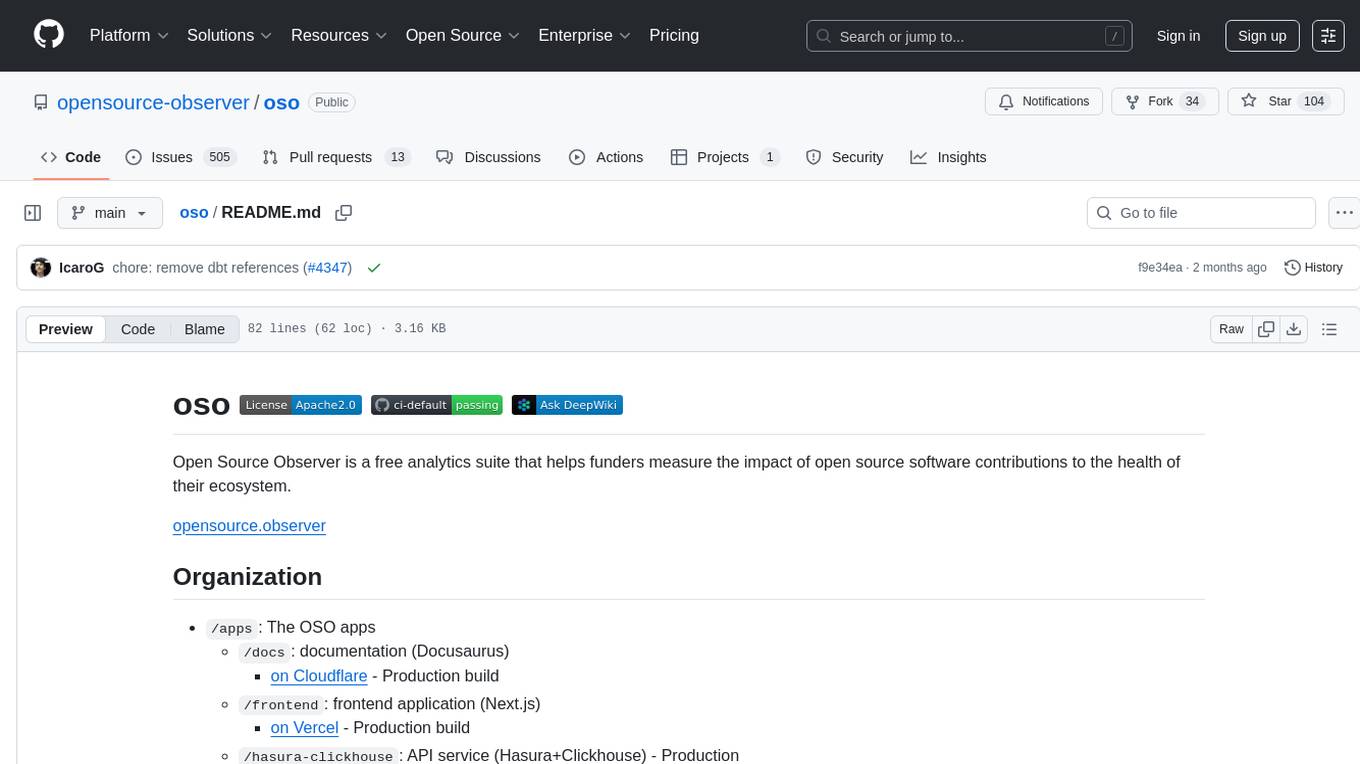

oso

Open Source Observer is a free analytics suite that helps funders measure the impact of open source software contributions to the health of their ecosystem. The repository contains various subprojects such as OSO apps, documentation, frontend application, API services, Docker files, common libraries, utilities, GitHub app for validating pull requests, Helm charts for Kubernetes, Kubernetes configuration, Terraform modules, data warehouse code, Python utilities for managing data, OSO agent, Dagster configuration, sqlmesh configuration, Python package for pyoso, and other tools to manage warehouse pipelines.

company-research-agent

Agentic Company Researcher is a multi-agent tool that generates comprehensive company research reports by utilizing a pipeline of AI agents to gather, curate, and synthesize information from various sources. It features multi-source research, AI-powered content filtering, real-time progress streaming, dual model architecture, modern React frontend, and modular architecture. The tool follows an agentic framework with specialized research and processing nodes, leverages separate models for content generation, uses a content curation system for relevance scoring and document processing, and implements a real-time communication system via WebSocket connections. Users can set up the tool quickly using the provided setup script or manually, and it can also be deployed using Docker and Docker Compose. The application can be used for local development and deployed to various cloud platforms like AWS Elastic Beanstalk, Docker, Heroku, and Google Cloud Run.

openai-kotlin

OpenAI Kotlin API client is a Kotlin client for OpenAI's API with multiplatform and coroutines capabilities. It allows users to interact with OpenAI's API using the Kotlin programming language. The client supports features such as models, chat, images, embeddings, files, fine-tuning, moderations, audio, assistants, threads, messages, and runs. It also provides guides on getting started, chat and function calls, file sources, and assistants. Sample apps are available for reference, and troubleshooting guides are provided for common issues. The project is open source and licensed under the MIT license, and it accepts contributions from the community.

aioconsole

aioconsole is a Python package that provides an asynchronous console and interfaces for asyncio. It offers asynchronous equivalents to input, print, exec, and code.interact; an interactive loop running the asynchronous Python console; customization and running of command line interfaces using argparse; stream support to serve interfaces instead of using standard streams; and the apython script to access asyncio code at runtime without modifying the sources. The package requires Python version 3.8 or higher and can be installed from PyPI or GitHub. It allows users to run Python files or modules with a modified asyncio policy, replacing the default event loop with an interactive loop. aioconsole is useful when users need to interact with asyncio code in a console environment.

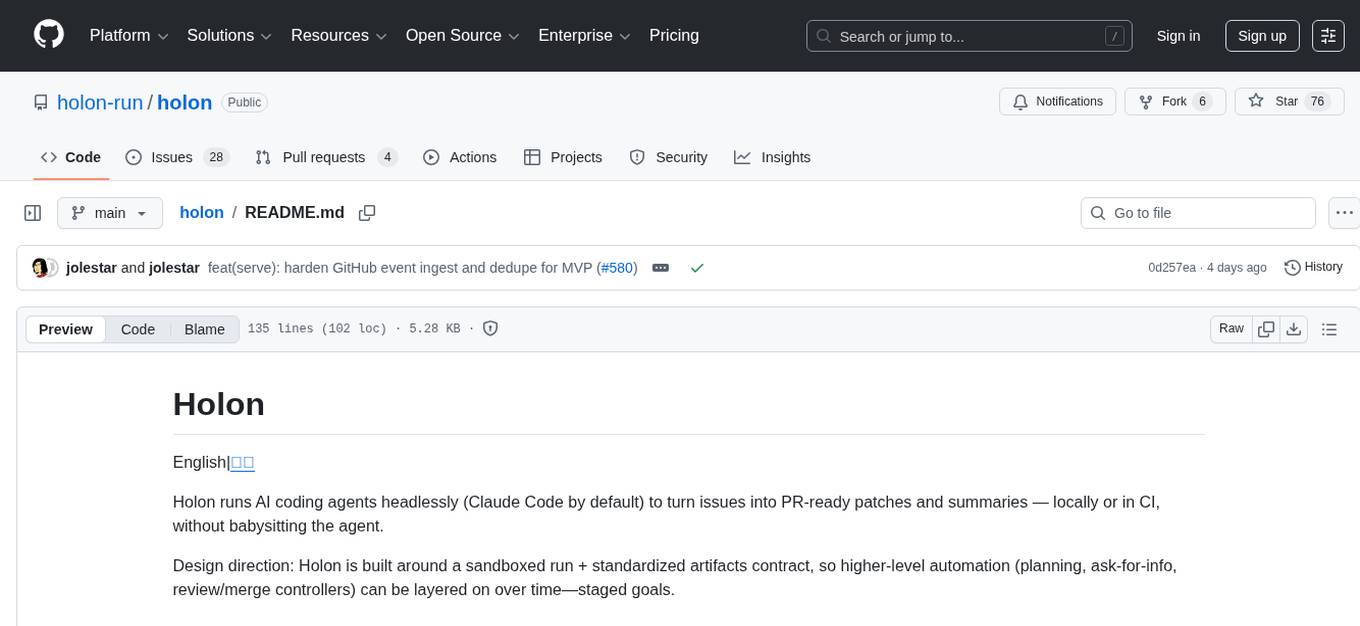

holon

Holon is a tool that runs AI coding agents headlessly to automate the process of turning issues into PR-ready patches and summaries. It provides a sandboxed execution environment with standardized artifacts, allowing for deterministic and repeatable runs. Users can easily create or update PRs, manage state persistence, and customize agent bundles. Holon can be used locally or in CI environments, offering seamless integration with GitHub Actions.

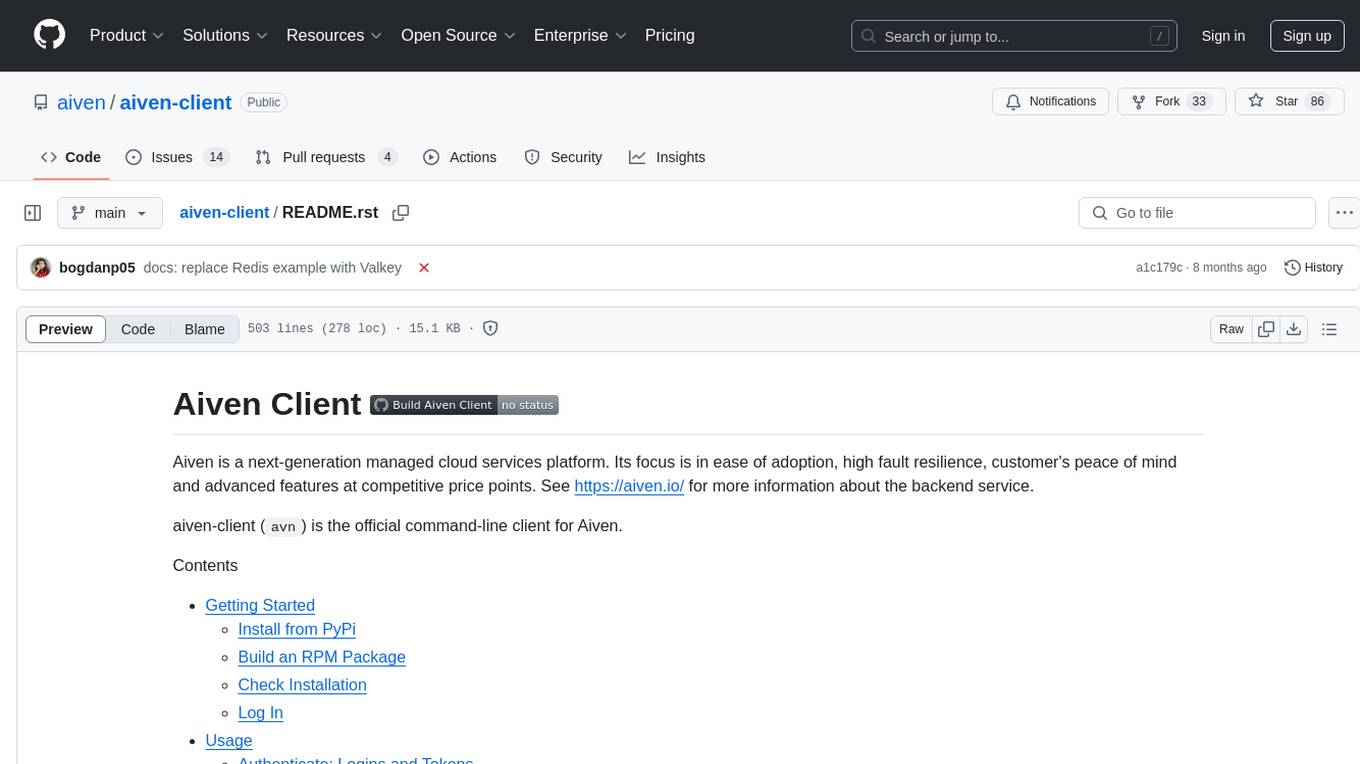

aiven-client

Aiven Client is the official command-line client for Aiven, a next-generation managed cloud services platform. It focuses on ease of adoption, high fault resilience, customer's peace of mind, and advanced features at competitive price points. The client allows users to interact with Aiven services through a command-line interface, providing functionalities such as authentication, project management, service exploration, service launching, service integrations, custom files management, team management, OAuth2 client configuration, autocomplete support, and auth helpers for connecting to services. Users can perform various tasks related to managing cloud services efficiently using the Aiven Client.

nuxt-llms

Nuxt LLMs automatically generates llms.txt markdown documentation for Nuxt applications. It provides runtime hooks to collect data from various sources and generate structured documentation. The tool allows customization of sections directly from nuxt.config.ts and integrates with Nuxt modules via the runtime hooks system. It generates two documentation formats: llms.txt for concise structured documentation and llms_full.txt for detailed documentation. Users can extend documentation using hooks to add sections, links, and metadata. The tool is suitable for developers looking to automate documentation generation for their Nuxt applications.

aiohttp-debugtoolbar

aiohttp_debugtoolbar provides a debug toolbar for aiohttp web applications. It is a port of pyramid_debugtoolbar and offers basic functionality such as basic panels, intercepting redirects, pretty printing exceptions, an interactive python console, and showing source code. The library is still in early development stages and offers various debug panels for monitoring different aspects of the web application. It is a useful tool for developers working with aiohttp to debug and optimize their applications.

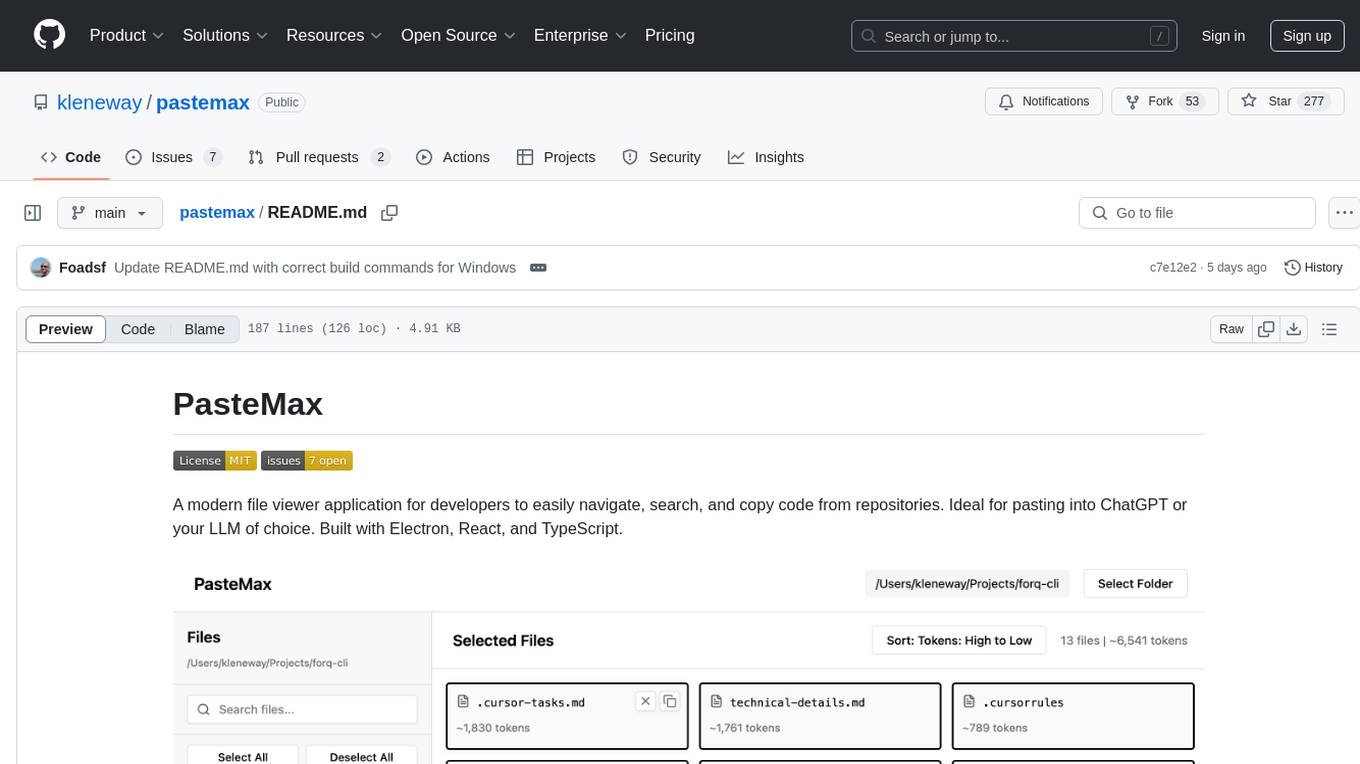

pastemax

PasteMax is a modern file viewer application designed for developers to easily navigate, search, and copy code from repositories. It provides features such as file tree navigation, token counting, search capabilities, selection management, sorting options, dark mode, binary file detection, and smart file exclusion. Built with Electron, React, and TypeScript, PasteMax is ideal for pasting code into ChatGPT or other language models. Users can download the application or build it from source, and customize file exclusions. Troubleshooting steps are provided for common issues, and contributions to the project are welcome under the MIT License.

AirCasting

AirCasting is a platform for gathering, visualizing, and sharing environmental data. It aims to provide a central hub for environmental data, making it easier for people to access and use this information to make informed decisions about their environment.

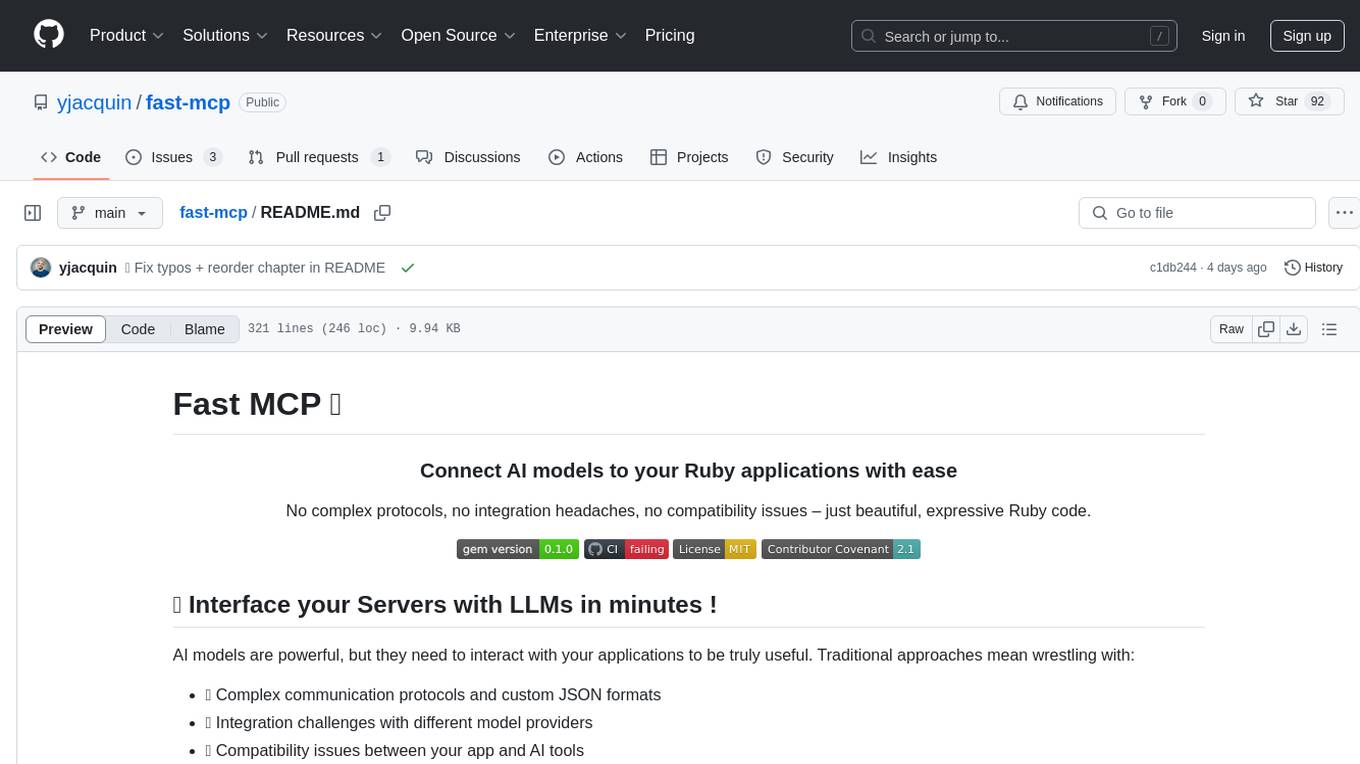

fast-mcp

Fast MCP is a Ruby gem that simplifies the integration of AI models with your Ruby applications. It provides a clean implementation of the Model Context Protocol, eliminating complex communication protocols, integration challenges, and compatibility issues. With Fast MCP, you can easily connect AI models to your servers, share data resources, choose from multiple transports, integrate with frameworks like Rails and Sinatra, and secure your AI-powered endpoints. The gem also offers real-time updates and authentication support, making AI integration a seamless experience for developers.