Best AI tools for managing LLMs

20 - AI tool Sites

Unified DevOps platform to build AI applications

This is a unified DevOps platform to build AI applications. It provides a comprehensive set of tools and services to help developers build, deploy, and manage AI applications. The platform includes a variety of features such as a code editor, a debugger, a profiler, and a deployment manager. It also provides access to a variety of AI services, such as natural language processing, machine learning, and computer vision.

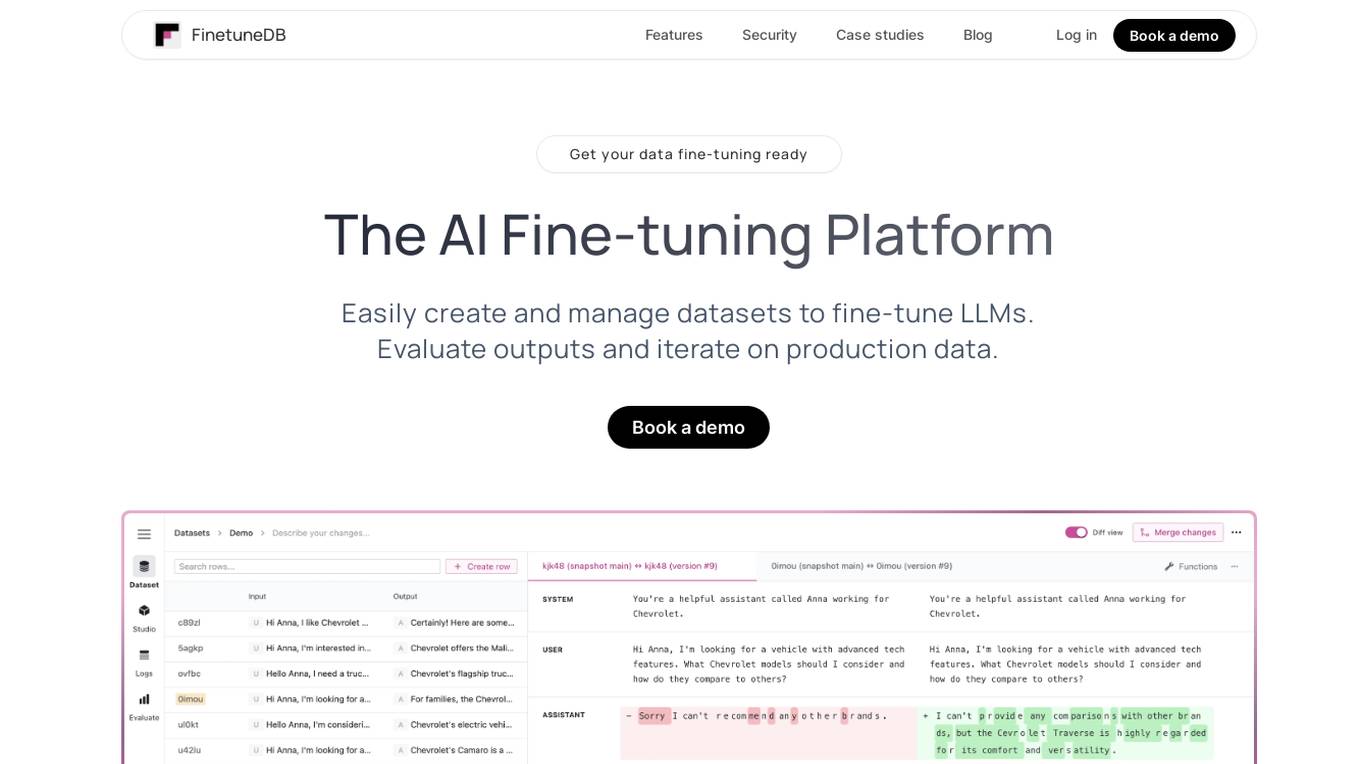

FinetuneDB

FinetuneDB is an AI fine-tuning platform that allows users to easily create and manage datasets to fine-tune LLMs, evaluate outputs, and iterate on production data. It integrates with open-source and proprietary foundation models, and provides a collaborative editor for building datasets. FinetuneDB also offers a variety of features for evaluating model performance, including human and AI feedback, automated evaluations, and model metrics tracking.

Entry Point AI

Entry Point AI is a modern AI optimization platform for fine-tuning proprietary and open-source language models. It provides a user-friendly interface to manage prompts, fine-tunes, and evaluations in one place. The platform enables users to optimize models from leading providers, train across providers, work collaboratively, write templates, import/export data, share models, and avoid common pitfalls associated with fine-tuning. Entry Point AI simplifies the fine-tuning process, making it accessible to users without the need for extensive data, infrastructure, or insider knowledge.

JFrog ML

JFrog ML is an AI platform designed to streamline AI development from prototype to production. It offers a unified MLOps platform to build, train, deploy, and manage AI workflows at scale. With features like Feature Store, LLMOps, and model monitoring, JFrog ML empowers AI teams to collaborate efficiently and optimize AI & ML models in production.

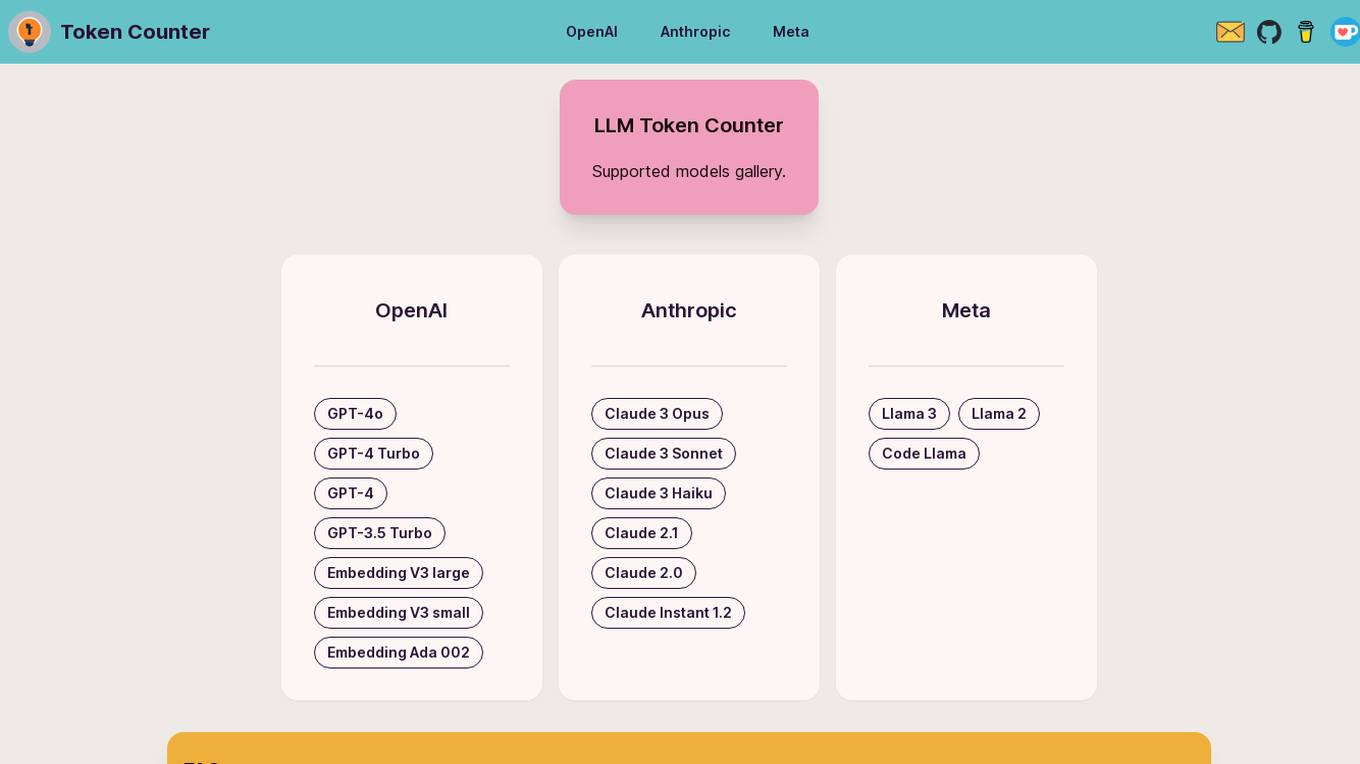

LLM Token Counter

The LLM Token Counter helps users manage token limits for large language models (LLMs) such as GPT-3.5, GPT-4, Claude-3, and Llama-3. It uses Transformers.js, a JavaScript implementation of the Hugging Face Transformers library, to calculate token counts client-side, so prompts are never transmitted to external servers.
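To illustrate what managing a token limit involves, here is a minimal sketch in Python. It is not the tool's implementation: `approx_token_count` is a hypothetical regex-based stand-in for a real tokenizer (actual BPE or SentencePiece tokenizers produce different counts), and `context_limit` and `reserved_for_output` are illustrative parameters rather than values for any real model.

```python
import re

# Hypothetical stand-in for a real tokenizer: one token per word or
# punctuation mark. Real LLM tokenizers will give different counts.
_TOKEN_PATTERN = re.compile(r"\w+|[^\w\s]")


def approx_token_count(text: str) -> int:
    """Roughly estimate how many tokens a piece of text contains."""
    return len(_TOKEN_PATTERN.findall(text))


def fits_budget(prompt: str, context_limit: int, reserved_for_output: int = 0) -> bool:
    """Return True if the prompt plus the reserved output tokens fit the limit."""
    return approx_token_count(prompt) + reserved_for_output <= context_limit


if __name__ == "__main__":
    prompt = "Summarize the following report in three bullet points."
    # context_limit is an assumed value chosen only for this demonstration.
    print(approx_token_count(prompt))
    print(fits_budget(prompt, context_limit=16, reserved_for_output=4))
```

Counting locally, as this sketch does, is what lets a tool check prompts against a limit without sending them to a server.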

ModelOp

ModelOp is the leading AI Governance software for enterprises, providing a single source of truth for all AI systems, automated process workflows, real-time insights, and integrations to extend the value of existing technology investments. It helps organizations safeguard AI initiatives without stifling innovation, ensuring compliance, accelerating innovation, and improving key performance indicators. ModelOp supports generative AI, Large Language Models (LLMs), in-house, third-party vendor, and embedded systems. The software enables visibility, accountability, risk tiering, systemic tracking, enforceable controls, workflow automation, reporting, and rapid establishment of AI governance.

Shieldbase

Shieldbase is an AI-powered enterprise search tool designed to provide secure and efficient search capabilities for businesses. It utilizes advanced artificial intelligence algorithms to index and retrieve information from various data sources within an organization, ensuring quick and accurate search results. With a focus on security, Shieldbase offers encryption and access control features to protect sensitive data. The platform is user-friendly and customizable, making it easy for businesses to implement and integrate into their existing systems. Shieldbase enhances productivity by enabling employees to quickly find the information they need, ultimately improving decision-making processes and overall operational efficiency.

Mirage

Mirage is a custom AI platform that builds custom LLMs to accelerate productivity. It is backed by Sequoia and offers a variety of features, including the ability to create custom AI models, train models on your own data, and deploy models to the cloud or on-premises.

Composio

Composio is an integration platform for AI agents and LLMs that allows users to access over 150 tools with just one line of code. It offers seamless integrations, managed authentication, a repository of tools, and powerful RPA tools to streamline the connection between AI agents or LLMs and various APIs and services. Composio simplifies JSON structures, improves variable names, and enhances error handling to increase reliability by 30%. The platform is SOC 2 Type II compliant, ensuring maximum security of user data.

Langtail

Langtail is a platform that helps developers build, test, and deploy AI-powered applications. It provides a suite of tools to help developers debug prompts, run tests, and monitor the performance of their AI models. Langtail also offers a community forum where developers can share tips and tricks, and get help from other users.

Taskaid

Taskaid is a free AI task management tool that uses AI and LLMs to help users manage tasks and to-dos more efficiently. It is designed for high-performing individuals who want to boost productivity and get more done in less time. Taskaid takes an AI-first approach to task management, providing relevant and actionable suggestions to streamline task completion. The platform offers a user-friendly experience that requires no technical AI knowledge, and it prioritizes data privacy and security.

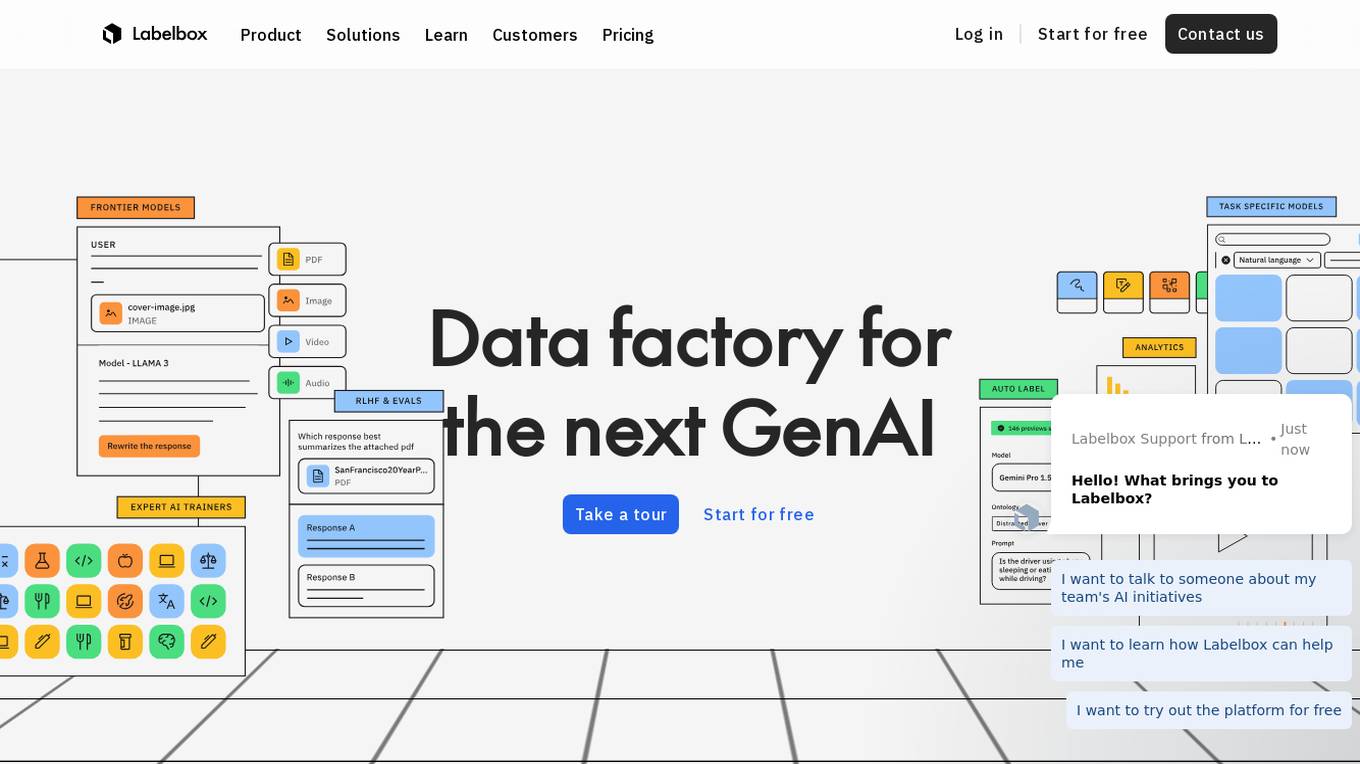

Labelbox

Labelbox is a data factory platform that empowers AI teams to manage data labeling, train models, and create better data with an internet-scale RLHF platform. It offers an all-in-one solution comprising tooling and services powered by a global community of domain experts. Labelbox operates global data labeling infrastructure and operations for AI workloads, providing an expert human network for data labeling in various domains. The platform also includes AI-assisted alignment for maximum efficiency, data curation, model training, and labeling services.

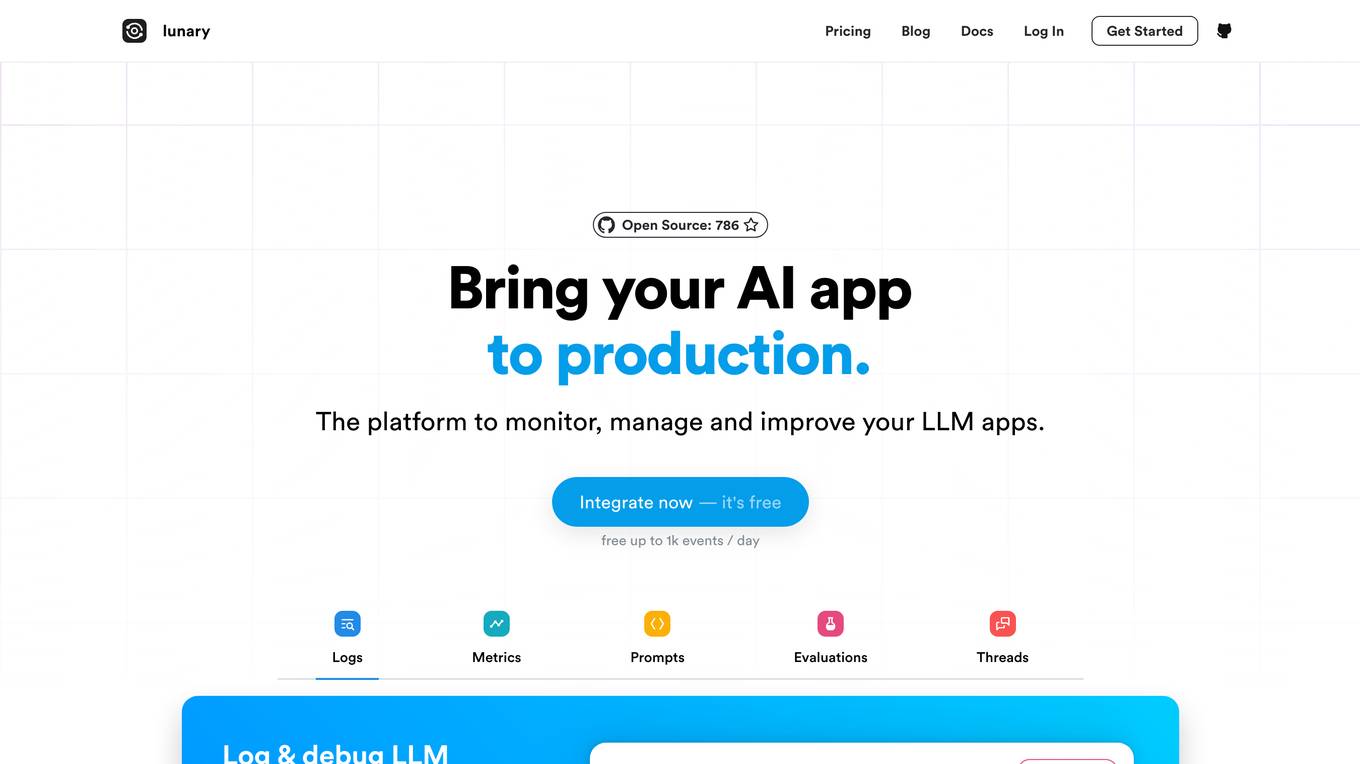

Lunary

Lunary is an AI developer platform designed to bring AI applications to production. It offers a comprehensive set of tools to manage, improve, and protect LLM apps. With features like Logs, Metrics, Prompts, Evaluations, and Threads, Lunary empowers users to monitor and optimize their AI agents effectively. The platform supports tasks such as tracing errors, labeling data for fine-tuning, optimizing costs, running benchmarks, and testing open-source models. Lunary also facilitates collaboration with non-technical teammates through features like A/B testing, versioning, and clean source-code management.

Anyscale

Anyscale is a company that provides a scalable compute platform for AI and Python applications. Their platform includes a serverless API for serving and fine-tuning open LLMs, a private cloud solution for data privacy and governance, and an open source framework for training, batch, and real-time workloads. Anyscale's platform is used by companies such as OpenAI, Uber, and Spotify to power their AI workloads.

Dify.AI

Dify.AI is a generative AI application development platform that allows users to create AI agents, chatbots, and other AI-powered applications. It provides a variety of tools and services to help developers build, deploy, and manage their AI applications. Dify.AI is designed to be easy to use, even for those with no prior experience in AI development.

Surfsite

Surfsite is an AI application designed for SaaS professionals to streamline workflows, make data-driven decisions, and enhance productivity. It offers AI assistants that connect to essential tools, provide real-time insights, and assist in various tasks such as market research, project management, and analytics. Surfsite aims to centralize data, improve decision-making, and optimize processes for product managers, growth marketers, and founders. The application leverages advanced LLMs and integrates seamlessly with popular tools like Google Docs, Jira, and Trello to offer a comprehensive AI-powered solution.

Arthur

Arthur is an industry-leading MLOps platform that simplifies deployment, monitoring, and management of traditional and generative AI models. It ensures scalability, security, compliance, and efficient enterprise use. Arthur's turnkey solutions enable companies to integrate the latest generative AI technologies into their operations, making informed, data-driven decisions. The platform offers open-source evaluation products, model-agnostic monitoring, deployment with leading data science tools, and model risk management capabilities. It emphasizes collaboration, security, and compliance with industry standards.

Astra

Astra is a universal API for LLM function calling that supercharges LLMs with integrations using a single line of code. It allows users to conveniently leverage function calling in LLMs with over 2,200 integrations, manage authentication profiles, import tools easily, and enable function calling with any LLM. Astra replaces JSON with a type-safe UI, making integration management simpler. The application extends the capabilities of LLMs without altering their core structure, offering a seamless layer of integrations and function execution.
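As a rough illustration of the function-calling pattern described here, the sketch below shows how a tool call emitted by a model as JSON might be dispatched to a registered function. It does not use Astra's API: the `TOOLS` registry, the `tool` decorator, the `dispatch` helper, and the `{"name": ..., "arguments": ...}` payload shape are all assumptions made for this example.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical registry mapping tool names to plain Python functions.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(func: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function so that a model-issued call can reach it by name."""
    TOOLS[func.__name__] = func
    return func


@tool
def add(a: float, b: float) -> float:
    """Example tool: add two numbers."""
    return a + b


def dispatch(tool_call_json: str) -> Any:
    """Parse an assumed {"name": ..., "arguments": {...}} payload and run the tool."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**call.get("arguments", {}))


if __name__ == "__main__":
    # Simulates the JSON an LLM might emit when it decides to call a tool.
    print(dispatch('{"name": "add", "arguments": {"a": 2, "b": 3}}'))
```

Keeping the registry separate from the model is what allows the same tools to be offered to any LLM that can emit a structured call.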

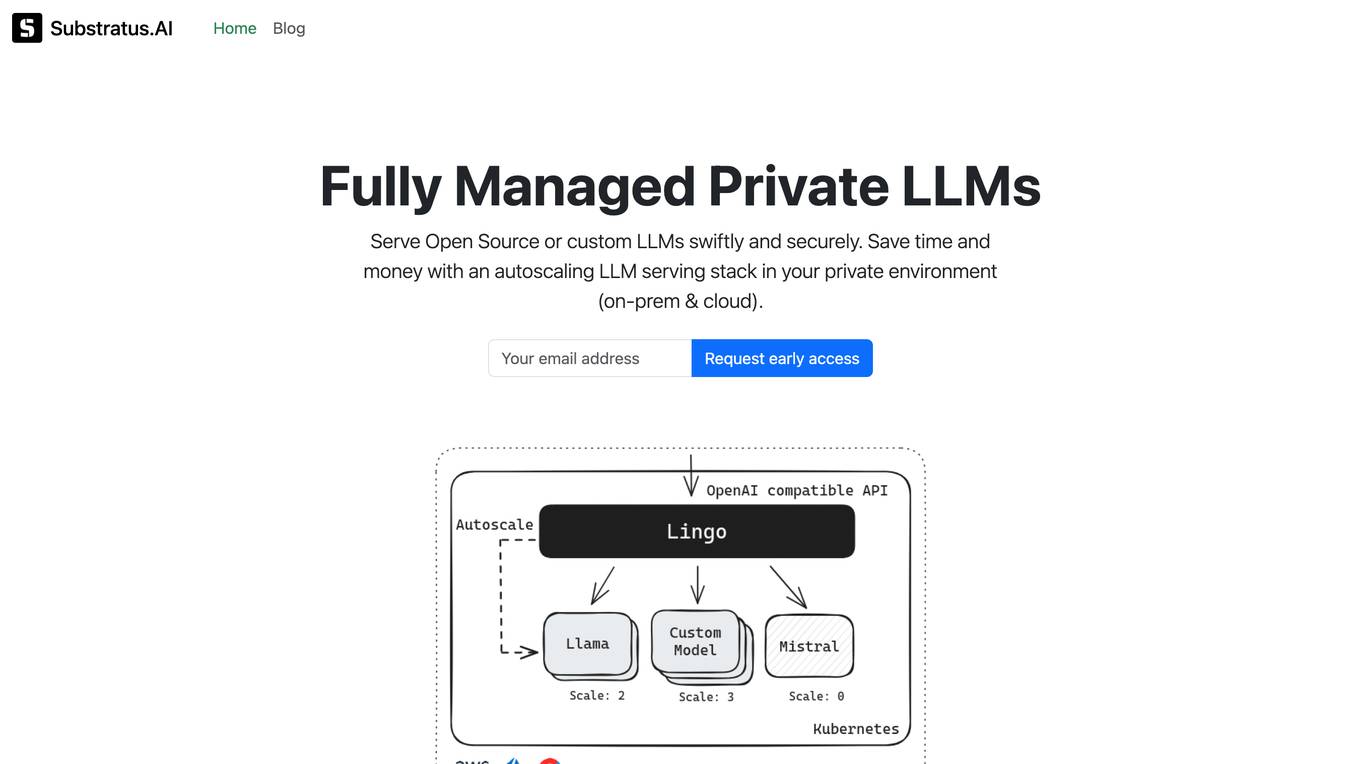

Substratus.AI

Substratus.AI is a fully managed private LLMs platform that allows users to serve LLMs (Llama and Mistral) in their own cloud account. It enables users to keep control of their data while reducing OpenAI costs by up to 10x. With Substratus.AI, users can utilize LLMs in production in hours instead of weeks, making it a convenient and efficient solution for AI model deployment.

LiteLLM

LiteLLM is a platform that simplifies model access, spend tracking, and fallbacks across 100+ LLMs. It provides a gateway to manage model access and offers features like logging, budget tracking, pass-through endpoints, and self-serve key management. LiteLLM is open-source and compatible with the OpenAI format, allowing users to access various LLMs seamlessly.
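The fallback and spend-tracking ideas mentioned here can be sketched in a few lines of Python. This is not LiteLLM's API: `FallbackRouter`, the `Provider` callable type, and the demo providers are hypothetical names, and each provider is assumed to return a reply together with its cost in USD.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Assumption: a provider is any callable that returns (reply, cost_in_usd).
Provider = Callable[[str], Tuple[str, float]]


@dataclass
class FallbackRouter:
    """Try providers in order, record spend per model, and enforce a budget."""

    providers: List[Tuple[str, Provider]]
    budget_usd: float
    spend_by_model: Dict[str, float] = field(default_factory=dict)

    @property
    def total_spend(self) -> float:
        return sum(self.spend_by_model.values())

    def complete(self, prompt: str) -> Tuple[str, str]:
        """Return (model_name, reply) from the first provider that succeeds."""
        if self.total_spend >= self.budget_usd:
            raise RuntimeError("budget exhausted")
        errors = []
        for name, call in self.providers:
            try:
                reply, cost = call(prompt)
            except Exception as exc:  # any failure falls through to the next provider
                errors.append(f"{name}: {exc}")
                continue
            self.spend_by_model[name] = self.spend_by_model.get(name, 0.0) + cost
            return name, reply
        raise RuntimeError("all providers failed: " + "; ".join(errors))


def flaky_model(prompt: str) -> Tuple[str, float]:
    """Hypothetical primary provider that always fails."""
    raise TimeoutError("primary model timed out")


def backup_model(prompt: str) -> Tuple[str, float]:
    """Hypothetical backup provider with a made-up cost per call."""
    return f"echo: {prompt}", 0.002


if __name__ == "__main__":
    router = FallbackRouter([("primary", flaky_model), ("backup", backup_model)], budget_usd=1.0)
    print(router.complete("hello"))
    print(router.spend_by_model)
```

A gateway of this kind puts the retry order and the budget check in one place, so calling code does not need to know which model ultimately answered.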

1 - Open Source AI Tools

parllama

PAR LLAMA is a text UI (TUI) application for managing and using LLMs, built with Textual, Rich, and PAR AI Core. It runs on major operating systems, including Windows, Windows WSL, Mac, and Linux. It supports dark and light modes, custom themes, and workflows such as Ollama chat, image chat, and OpenAI provider chat. It also offers custom prompts, environment variable configuration, and remote instance connection.

20 - OpenAI Gpts

GPT Detector

ChatGPT Detector quickly finds AI writing from ChatGPT, LLMs, Bard, and GPT-4. It's easy and fast to use!

FODMAPs Dietician

Dietician that helps those with IBS manage their symptoms via FODMAPs. FODMAP stands for fermentable oligosaccharides, disaccharides, monosaccharides and polyols. These are the chemical names of 5 naturally occurring sugars that are not well absorbed by your small intestine.

Cognitive Behavioral Coach

Provides cognitive-behavioral and emotional therapy guidance, helping users understand and manage their thoughts, behaviors, and emotions.

1ACulma - Management Coach

Cross-cultural management. Useful for those who relocate to another country or manage cross-cultural teams.

Finance Butler(ファイナンス・バトラー)

I manage finances securely with encryption and user authentication.

GroceriesGPT

I manage your grocery lists to help you stay organized. *1/ Tell me what to add to a list. 2/ Ask me to add all ingredients for a recipe. 3/ Upload a receipt to remove items from your lists. 4/ Add an item by simply uploading a picture. 5/ Ask me what items I would recommend you add to your lists.*

Family Legacy Assistant

Helps users manage and preserve family heirlooms with empathy and practical advice.

AI Home Doctor (Guided Care)

Give me your symptoms and I will provide instructions for how to manage your illness.

MixerBox ChatGSlide

Your AI Google Slides assistant! Effortlessly locate, manage, and summarize your presentations!

Herbal Healer: The Art of Botany

A simulation game where players learn to grow medicinal plants, craft remedies, and manage a herbal healing garden. Another AI Tiny Game by Dave Lalande.

ZenFin

💡 Tips and guidance to buy, sell, and manage Bitcoin, Ether, and more for transactions under $50.

DivineFeed

As the Divine Apple II, I defy Moore's Law in this darkly humorous game where you, as God, manage global prayers, navigate celestial politics, and accept that omnipotence can't please everyone.