Best AI tools for Inferencing Models

12 - AI Tool Sites

Local AI Playground

Local AI Playground is a free, open-source native app for managing, verifying, and running inference on AI models. It lets users experiment with AI offline, in a private environment, without needing a GPU. Its Rust backend keeps the app compact and memory-efficient, and it runs on several operating systems. Features include CPU inferencing, model management, and digest verification, and users can start a local streaming server for inference in two clicks. The project's stated goal is to simplify AI development with a user-friendly tool for both offline and online AI applications.
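
Digest verification comes down to hashing a downloaded model file and comparing the result with a published checksum. The sketch below is a minimal stand-in that uses only Python's standard library. It assumes SHA-256 as the hash, although the app may support other algorithms. It is not the app's own code, and the file name is hypothetical.

```python
import hashlib
import tempfile
from pathlib import Path


def file_sha256(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a file, read in 1 MiB chunks by default."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        # Stream the file so a multi-gigabyte model never has to fit in RAM at once.
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_model(path: Path, expected_hex: str) -> bool:
    """True if the file's SHA-256 matches the published hex digest (case-insensitive)."""
    return file_sha256(path) == expected_hex.strip().lower()


if __name__ == "__main__":
    # Demo with a throwaway file standing in for a downloaded model.
    with tempfile.TemporaryDirectory() as tmp:
        model = Path(tmp) / "model.bin"  # hypothetical file name
        model.write_bytes(b"abc")
        known = "ba7816bf8f01cfea414140de5dae2223b00361a396177a9cb410ff61f20015ad"
        print(verify_model(model, known))  # SHA-256("abc") test vector -> True
```

Reading in chunks keeps memory use flat no matter how large the model file is.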

Wallaroo.AI

Wallaroo.AI is an AI inference platform that offers production-grade inference microservices, optimized with OpenVINO, for deploying cloud and edge AI applications on CPUs and GPUs. It markets these as turnkey microservices that can serve any model on any hardware, anywhere. The platform lets users deploy, manage, observe, and scale AI models, with the stated aim of cutting deployment costs and time-to-value.

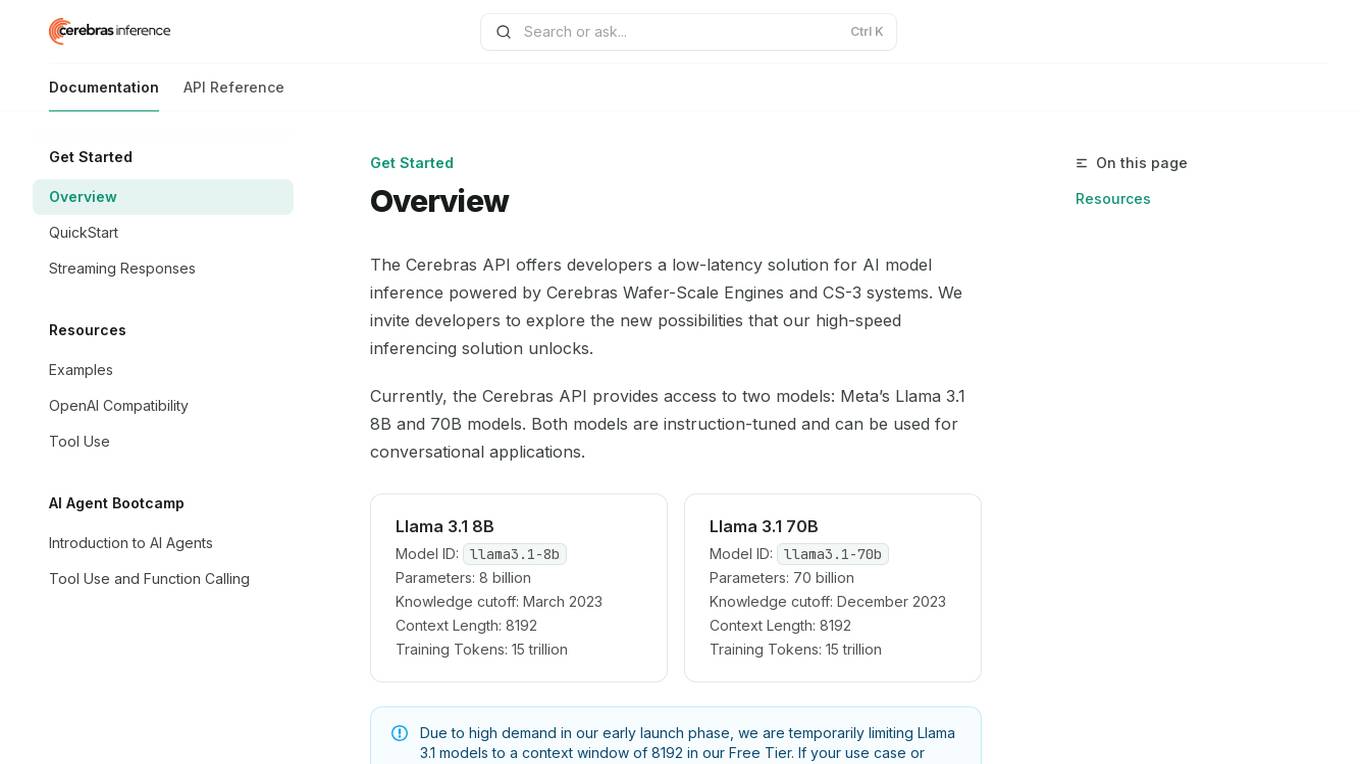

Cerebras API

The Cerebras API is a high-speed inference service powered by Cerebras Wafer-Scale Engines and CS-3 systems. It gives developers access to two models, Meta’s Llama 3.1 8B and 70B, which are instruction-tuned and suited to conversational applications. The API targets low-latency use cases.
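
Because both models are instruction-tuned for conversation, a request is normally a list of chat messages. The sketch below only builds the JSON body and sends nothing. It assumes an OpenAI-style chat-completions format and the model identifiers `llama3.1-8b` and `llama3.1-70b`. Check both assumptions against Cerebras' documentation before using them with the real API.

```python
import json
from typing import Optional

# ASSUMPTION: identifiers for Llama 3.1 8B and 70B; confirm the exact strings in Cerebras' docs.
ASSUMED_MODEL_IDS = {"llama3.1-8b", "llama3.1-70b"}


def build_chat_body(model: str, user_prompt: str,
                    system_prompt: Optional[str] = None,
                    max_tokens: int = 256) -> str:
    """Serialize a chat-completions request body in the assumed OpenAI-style format."""
    if model not in ASSUMED_MODEL_IDS:
        raise ValueError(f"unknown model id: {model!r}")
    messages = []
    if system_prompt:
        messages.append({"role": "system", "content": system_prompt})
    messages.append({"role": "user", "content": user_prompt})
    return json.dumps({"model": model, "messages": messages, "max_tokens": max_tokens})


body = build_chat_body("llama3.1-8b", "Summarize paged attention in one sentence.",
                       system_prompt="You are a concise assistant.")
print(body)
```

Validating the model id locally catches typos before a network round trip, which matters most when latency is the point of the service.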

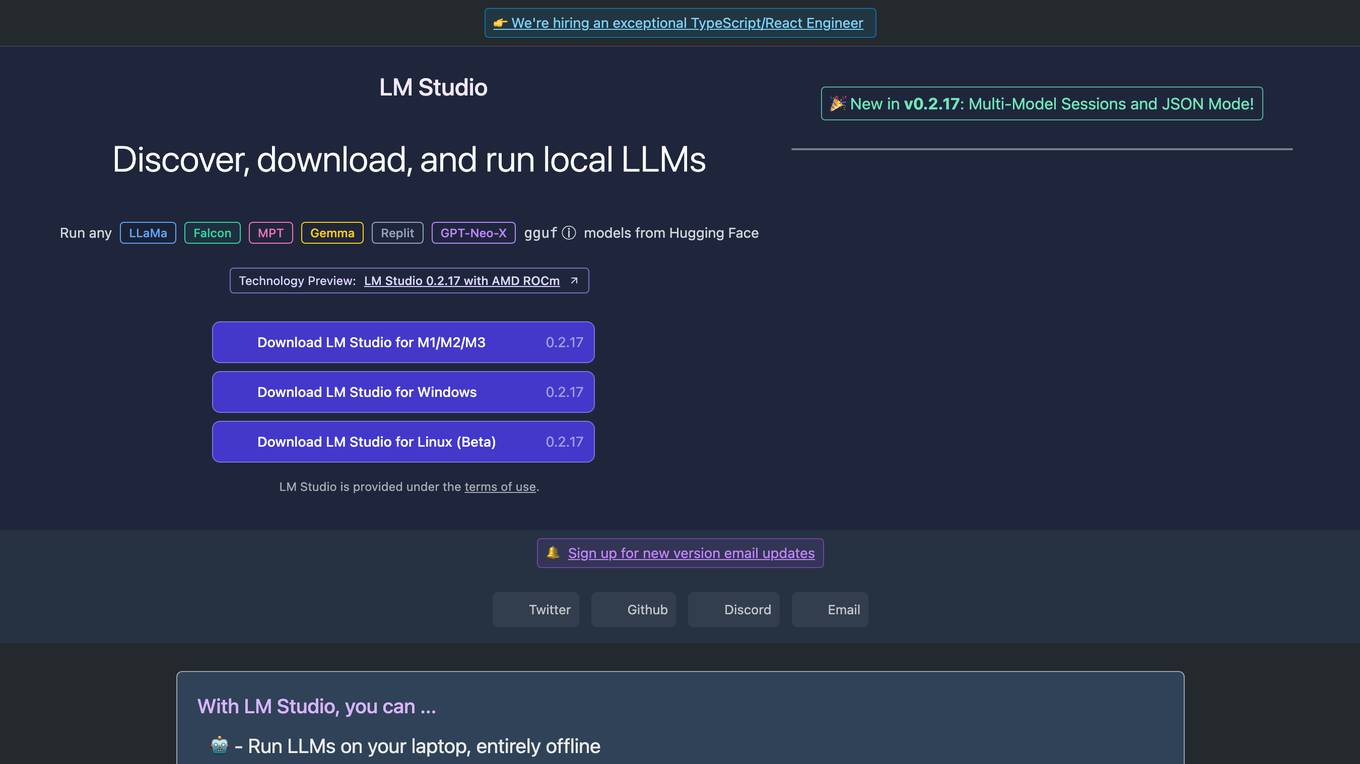

LM Studio

LM Studio is an AI tool for discovering, downloading, and running local LLMs (large language models). Users can run LLMs offline on their laptops, use them through an in-app chat UI or a local server, download compatible model files from Hugging Face repositories, and discover new LLMs. According to the product, it does not collect data or monitor user actions, which makes it suitable for personal and business use. LM Studio supports ggml-format models such as Llama, MPT, and StarCoder from Hugging Face, and it lists minimum hardware and software requirements for each platform.
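
When LM Studio serves models through its local server, other programs can reach them over HTTP. The sketch below assumes two things: the server listens on `http://localhost:1234`, and it exposes an OpenAI-compatible `/v1/chat/completions` route. The model name is a placeholder for whichever model you have loaded. Only `make_local_chat_request` runs here, so nothing is sent unless you call `send` while the server is running.

```python
import json
import urllib.request

# ASSUMPTION: default local server address with an OpenAI-compatible API; adjust to your setup.
BASE_URL = "http://localhost:1234/v1"


def make_local_chat_request(model: str, prompt: str,
                            base_url: str = BASE_URL) -> urllib.request.Request:
    """Build (but do not send) a POST request for a locally served model."""
    payload = {
        "model": model,  # placeholder id of a model loaded in LM Studio
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.7,
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(req: urllib.request.Request, timeout: float = 60.0) -> str:
    """Send the request and return the first choice's text (requires the server running)."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["choices"][0]["message"]["content"]


req = make_local_chat_request("local-model", "Hello from a script")
print(req.full_url, req.get_method())
```

Splitting request construction from sending keeps the offline part easy to test and leaves the network call to a single function.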

ONNX Runtime

ONNX Runtime is a production-grade AI engine for accelerating machine learning training and inference across a wide range of technology stacks. It supports multiple languages and platforms and optimizes performance on CPU, GPU, and NPU hardware. ONNX Runtime powers AI in Microsoft products and is widely used in cloud, edge, web, and mobile applications. It also supports large-model training and on-device training, and it can run state-of-the-art models for tasks such as image synthesis and text generation.
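
ONNX Runtime targets different hardware through execution providers, which you pass to a session in priority order. The helper below sketches how to pick that order from the providers that are installed. The preference list is an assumption. The commented lines show how the result would be used with the real `onnxruntime` Python API (`get_available_providers`, `InferenceSession`, `run`). That package is not imported, so the block runs without it.

```python
from typing import List, Sequence

# ASSUMED preference order: accelerator-backed providers first, CPU last as a fallback.
PREFERRED = [
    "TensorrtExecutionProvider",
    "CUDAExecutionProvider",
    "DmlExecutionProvider",
    "CPUExecutionProvider",
]


def choose_providers(available: Sequence[str],
                     preferred: Sequence[str] = PREFERRED) -> List[str]:
    """Keep the preferred providers that are installed, in preference order."""
    chosen = [p for p in preferred if p in available]
    if "CPUExecutionProvider" not in chosen:
        chosen.append("CPUExecutionProvider")  # ONNX Runtime's default CPU provider
    return chosen


# With onnxruntime installed, the result would feed a session like this
# ("model.onnx" and input_array are placeholders):
#   import onnxruntime as ort
#   session = ort.InferenceSession(
#       "model.onnx", providers=choose_providers(ort.get_available_providers()))
#   outputs = session.run(None, {session.get_inputs()[0].name: input_array})
print(choose_providers(["CPUExecutionProvider", "CUDAExecutionProvider"]))
```

Always keeping the CPU provider at the end means the same script still runs on machines without an accelerator.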

Tresata

Tresata is an AI tool whose capabilities include data inventory and cataloging, inferencing and connecting data, discoverability and lineage tracking, tokenization, and data enrichment. It provides SAM (Smart Augmented Intelligence) features and integrations for customers. Users upload data to the Tresata cloud and then access it to build data products for AI applications and to generate analysis and insights. The company's stated focus is good data for all, in support of data-driven decision-making and innovation.

Pocus

Pocus is an AI-powered platform that aims to improve sales team performance by surfacing insights and automating sales processes. It offers a custom AI model that combines internal and external data to create personalized strategies for each business. Features such as prospect research, intent alerts, and playbook building streamline prospecting and account research. Pocus lists Asana, Canva, and Miro among its customers and positions itself as a way to turn product usage data into revenue, build pipeline from buying signals, and proactively expand the customer base. It also helps convert website visitors into customers and unlock pipeline from existing product champions. The company credits the platform with generating qualified pipeline, uncovering hidden opportunities, and influencing outbound pipeline and closed-won deals.

Roe AI

Roe AI is an unstructured data warehouse that uses AI to process and analyze data from various sources, including documents, images, videos, and audio files. It provides a range of features to help businesses extract insights from their unstructured data, including data standardization, classification and inferencing, similarity search, and natural language processing. Roe AI is designed to be easy to use, even for teams with minimal ML background.
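
Similarity search over unstructured data usually means embedding each item as a vector and then ranking items by how close they are to a query vector. This is a generic sketch of that idea using cosine similarity in plain Python, not Roe AI's implementation. The three-dimensional vectors and file names are made-up stand-ins for real embeddings.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: Sequence[float], index: Dict[str, Sequence[float]],
          k: int = 3) -> List[Tuple[str, float]]:
    """Rank indexed items by cosine similarity to the query, highest first."""
    scored = [(item_id, cosine(query, vec)) for item_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Made-up 3-d "embeddings" standing in for real model outputs.
index = {
    "invoice.pdf": [1.0, 0.0, 0.0],
    "photo.jpg": [0.0, 1.0, 0.0],
    "call.mp3": [0.9, 0.1, 0.0],
}
print(top_k([1.0, 0.0, 0.0], index, k=2))
```

This brute-force scan is linear in the number of items. Production systems typically swap in an approximate nearest-neighbor index, but the ranking idea is the same.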

Godly

Godly is a tool for adding your own data to GPT to get personalized completions. It simplifies setting up and managing that context and includes a chatbot for exploring it without writing code. Godly also helps you debug which contexts are influencing your prompts, and it provides an SDK that lets builders integrate context into their GPT completions.
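
Adding your own data to completions generally means selecting relevant snippets and placing them in the prompt ahead of the question. The sketch below shows that pattern with a deliberately simple word-overlap ranking. It is not Godly's SDK, and the function names and sample snippets are hypothetical.

```python
import re
from typing import List, Set


def _words(text: str) -> Set[str]:
    """Lower-cased alphanumeric tokens of a string."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_context(question: str, snippets: List[str], k: int = 2) -> List[str]:
    """Return up to k snippets sharing the most words with the question."""
    q = _words(question)
    ranked = sorted(snippets, key=lambda s: len(q & _words(s)), reverse=True)
    return [s for s in ranked[:k] if q & _words(s)]  # drop zero-overlap snippets


def build_prompt(question: str, snippets: List[str], k: int = 2) -> str:
    """Prepend the selected context to the question as a single prompt string."""
    lines = ["Answer using only the context below."]
    lines += [f"- {s}" for s in select_context(question, snippets, k)]
    lines.append(f"Question: {question}")
    return "\n".join(lines)


snippets = [  # hypothetical user-supplied data
    "Our refund window is 30 days.",
    "Office hours are 9 to 5.",
    "Refund requests need an order number.",
]
print(build_prompt("How long is the refund window?", snippets))
```

Keeping the selected snippets visible in the prompt is also what makes it possible to debug which context influenced a completion.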

Cast.app

Cast.app is an AI-driven platform that uses AI agents to automate customer success management, helping businesses retain and grow revenue. Its features cover customer onboarding, usage and adoption, churn reduction, renewals and revenue expansion, and scaling without adding headcount. It generates personalized recommendations, insights, and customer communications, with the aim of improving engagement, satisfaction, retention, and revenue growth.

Parker.ai

Parker.ai is a platform that helps product teams capture and act on their conversations, wherever those conversations happen. By integrating with popular communication and collaboration tools, it surfaces the most important points, so product teams can focus on strategic thinking, influencing stakeholders, and engaging directly with users. Its analysis features turn qualitative data into quantitative insights, giving teams a deeper understanding of customer needs and pain points. Teams can then make better decisions, build stronger roadmaps, and ultimately ship better products.

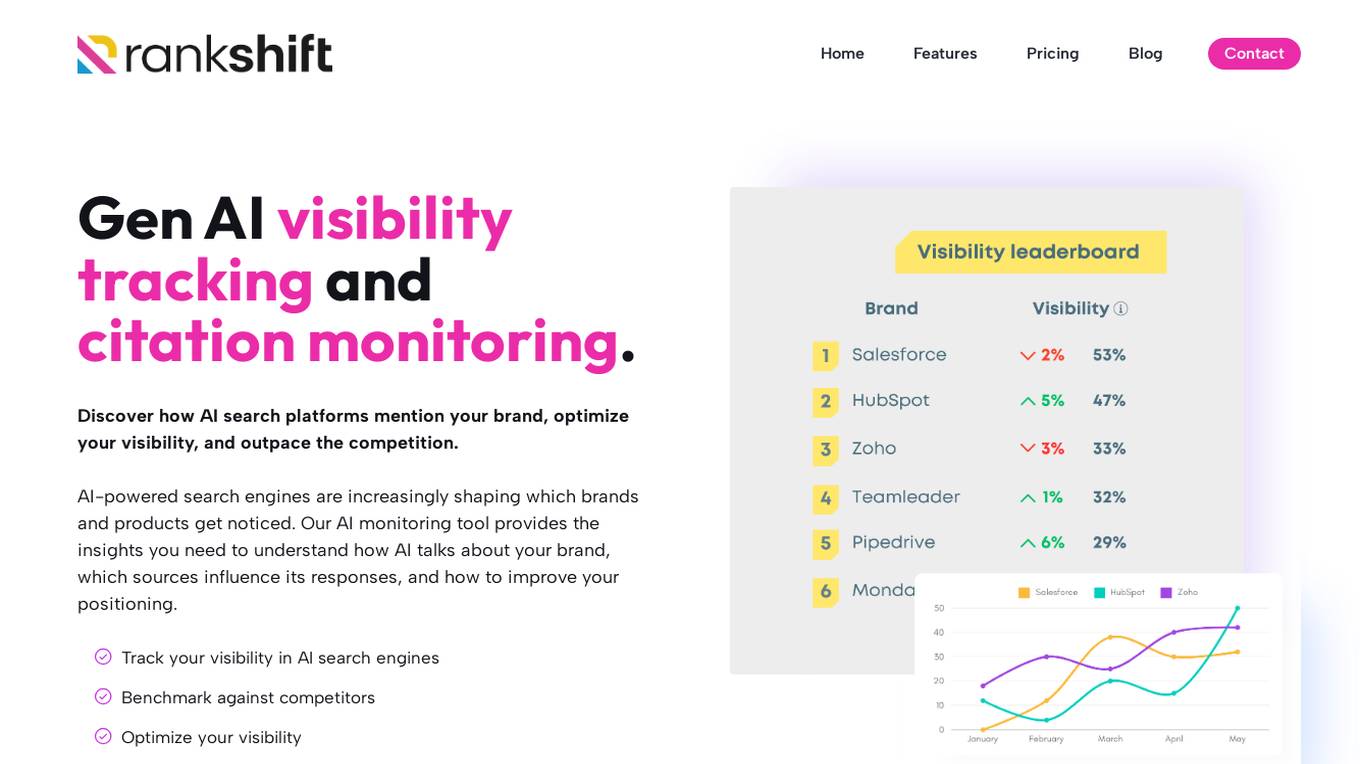

RANKSHIFT

RANKSHIFT is an AI brand visibility tracking tool that helps businesses monitor and improve their visibility in AI search engines. It shows how AI platforms mention a brand, compares that visibility against competitors, tracks brand mentions, and identifies the sources that influence AI-generated answers. Businesses can use this data to refine their AI search strategy.
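
One simple way to compare visibility against competitors is a single metric: the share of collected AI answers that mention each brand. The sketch below computes that mention rate. It illustrates one possible metric and is not RANKSHIFT's method. The brand names and answers are invented.

```python
import re
from typing import Dict, Iterable, List


def mention_rates(answers: List[str], brands: Iterable[str]) -> Dict[str, float]:
    """Fraction of answers that mention each brand (case-insensitive, whole words)."""
    rates: Dict[str, float] = {}
    for brand in brands:
        pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
        hits = sum(1 for answer in answers if pattern.search(answer))
        rates[brand] = hits / len(answers) if answers else 0.0
    return rates


answers = [  # invented sample answers collected from AI search engines
    "Acme and Globex both offer this.",
    "Many teams try Acme first.",
    "Initech is a popular choice.",
]
print(mention_rates(answers, ["Acme", "Globex", "Initech"]))
```

Whole-word matching avoids counting "Acme" inside an unrelated name such as "Acmecorp".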

1 - Open Source AI Tools

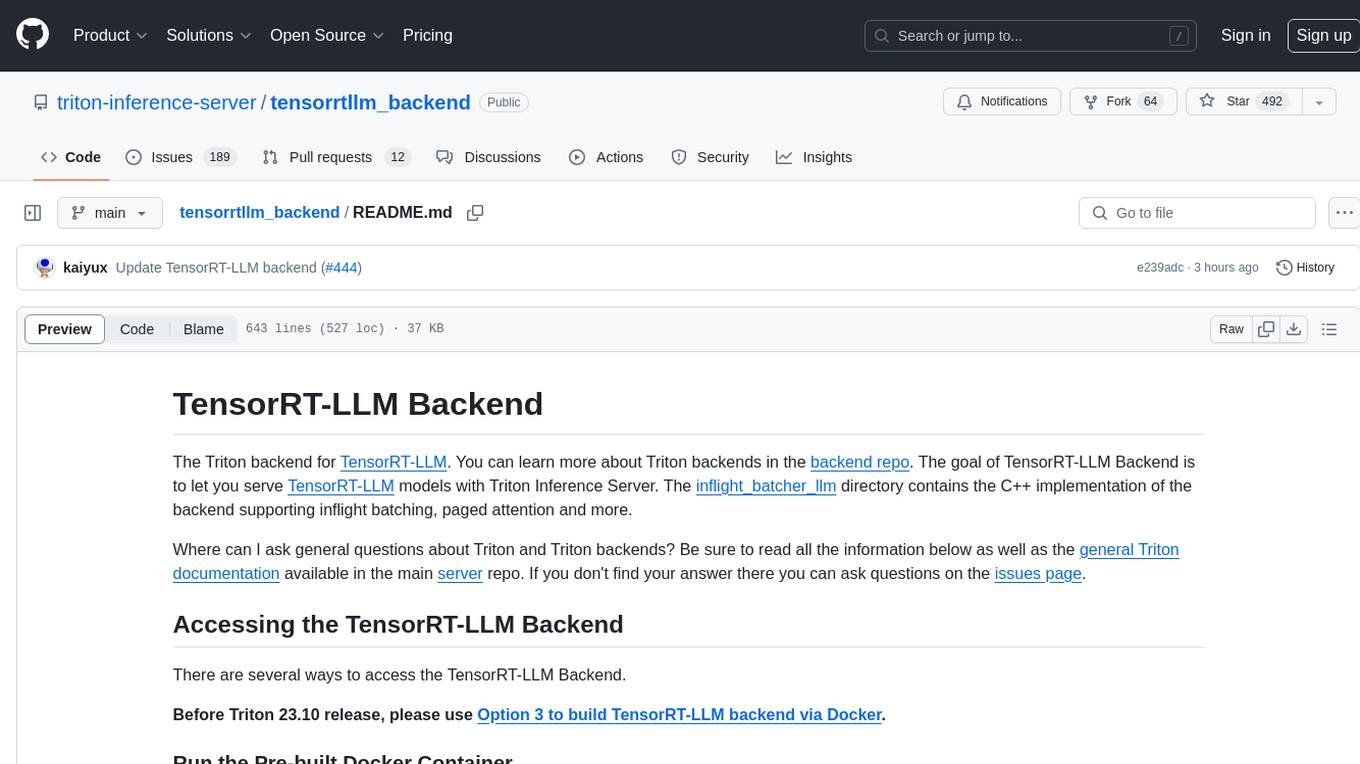

tensorrtllm_backend

The TensorRT-LLM Backend is a Triton backend for serving TensorRT-LLM models with Triton Inference Server. It supports features such as inflight batching and paged attention. Users can get the backend from pre-built Docker containers or build it with the scripts provided in the repository. It can be used to set up models for tokenization, inference, and de-tokenization, and to combine them into an ensemble. Users can interact with the backend through the provided client scripts and query the server for metrics on request handling, memory usage, KV cache blocks, and more. Instructions for testing the backend are in the 'ci/README.md' file.
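
Triton exposes server metrics in the Prometheus text format, so a quick check needs only a small parser. This is a simplified sketch that does not handle label values containing `}`. The sample text is illustrative, and `example_kv_cache_blocks_used` is a hypothetical name standing in for the backend's actual KV cache metrics.

```python
from typing import Dict, Tuple

# Illustrative sample in the Prometheus text format; take real metric names
# and labels from your own server's output.
SAMPLE = """\
# HELP nv_inference_request_success Number of successful inference requests
# TYPE nv_inference_request_success counter
nv_inference_request_success{model="ensemble",version="1"} 12
nv_inference_request_success{model="tensorrt_llm",version="1"} 12
example_kv_cache_blocks_used 40
"""


def parse_prometheus(text: str) -> Dict[Tuple[str, str], float]:
    """Map (metric name, raw label string) to its value, skipping comment lines."""
    samples: Dict[Tuple[str, str], float] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # blank line or # HELP / # TYPE metadata
        if "{" in line:
            name, rest = line.split("{", 1)
            labels, rest = rest.rsplit("}", 1)
        else:
            name, rest = line.split(None, 1)
            labels = ""
        value = float(rest.split()[0])  # drop an optional trailing timestamp
        samples[(name.strip(), labels)] = value
    return samples


# Against a live server (Triton serves metrics on port 8002 by default):
#   import urllib.request
#   text = urllib.request.urlopen("http://localhost:8002/metrics").read().decode()
metrics = parse_prometheus(SAMPLE)
print(metrics[("nv_inference_request_success", 'model="ensemble",version="1"')])
```

For anything beyond a quick check, pointing a Prometheus server at the same endpoint is the more robust route.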

2 - OpenAI GPTs

Behavioral Insights Researcher

Analyzes behavioral data to understand user interactions and preferences and to help improve product design.