Awesome-explainable-AI

A collection of research materials on explainable AI/ML

Stars: 1343

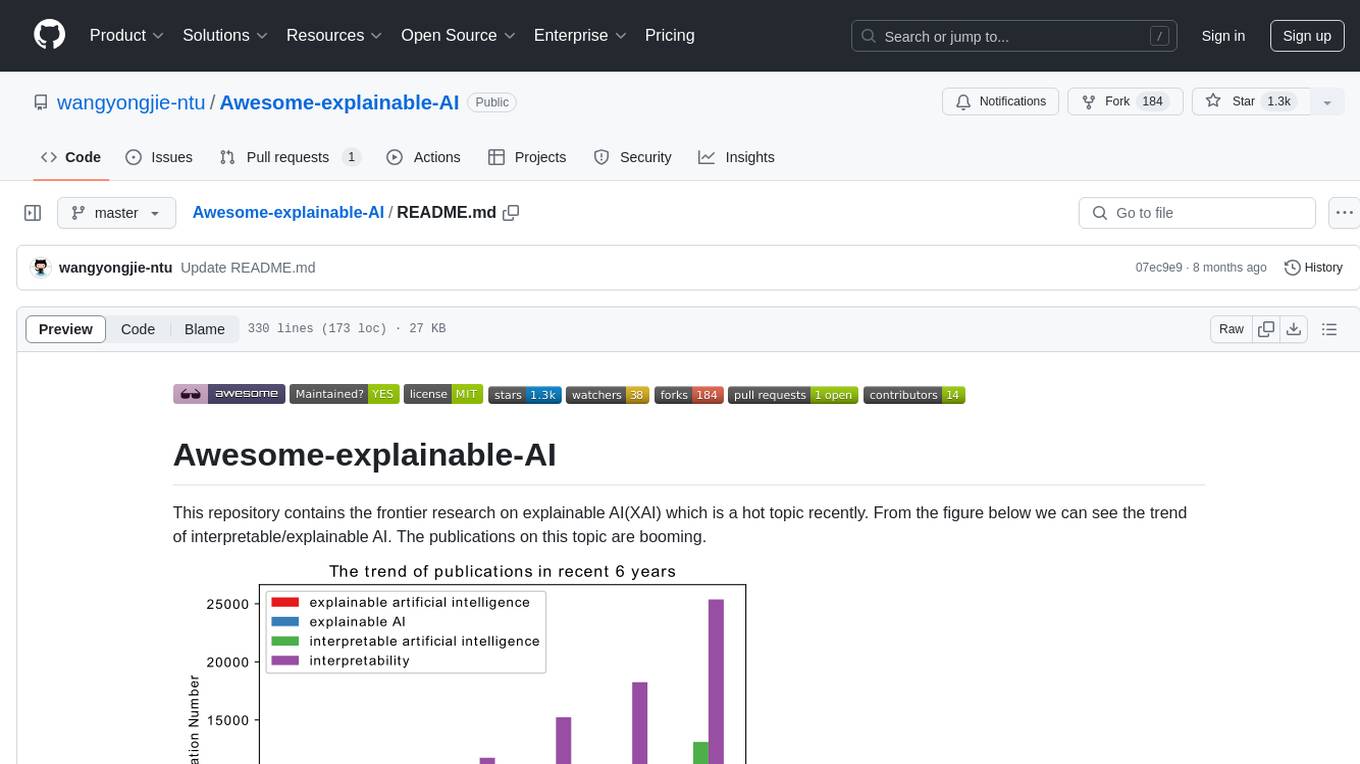

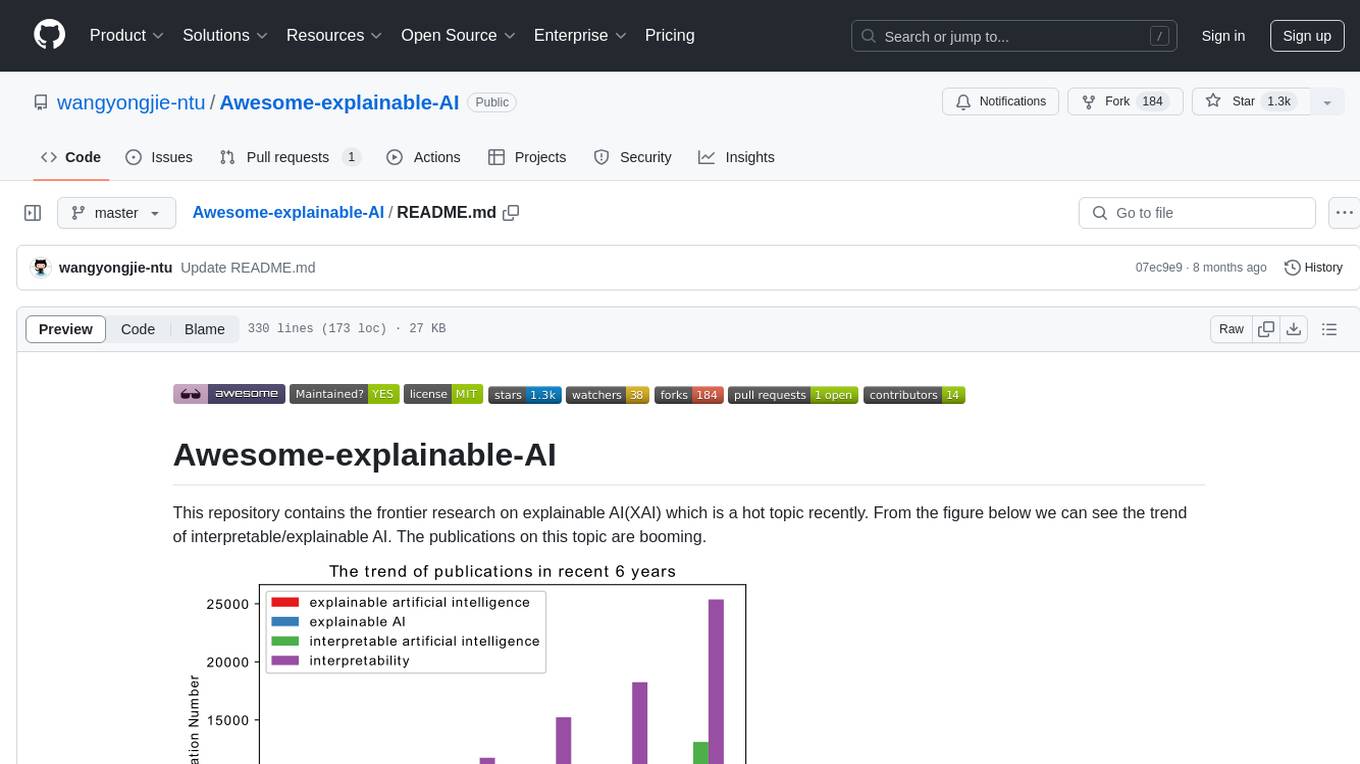

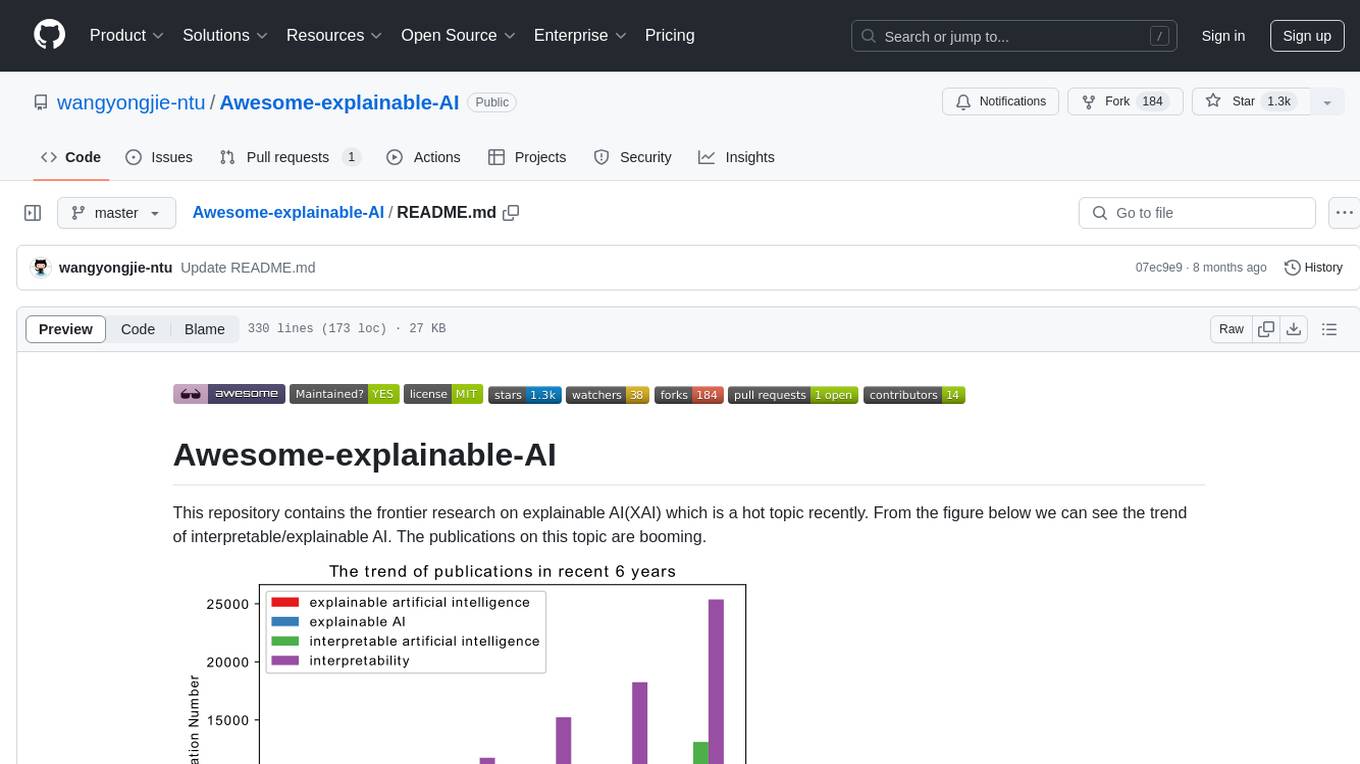

This repository contains frontier research on explainable AI (XAI), a hot topic in the field of artificial intelligence. It includes trends, use cases, survey papers, books, open courses, papers, and Python libraries related to XAI. The repository aims to organize and categorize publications on XAI, provide evaluation methods, and list various Python libraries for explainable AI.

README:

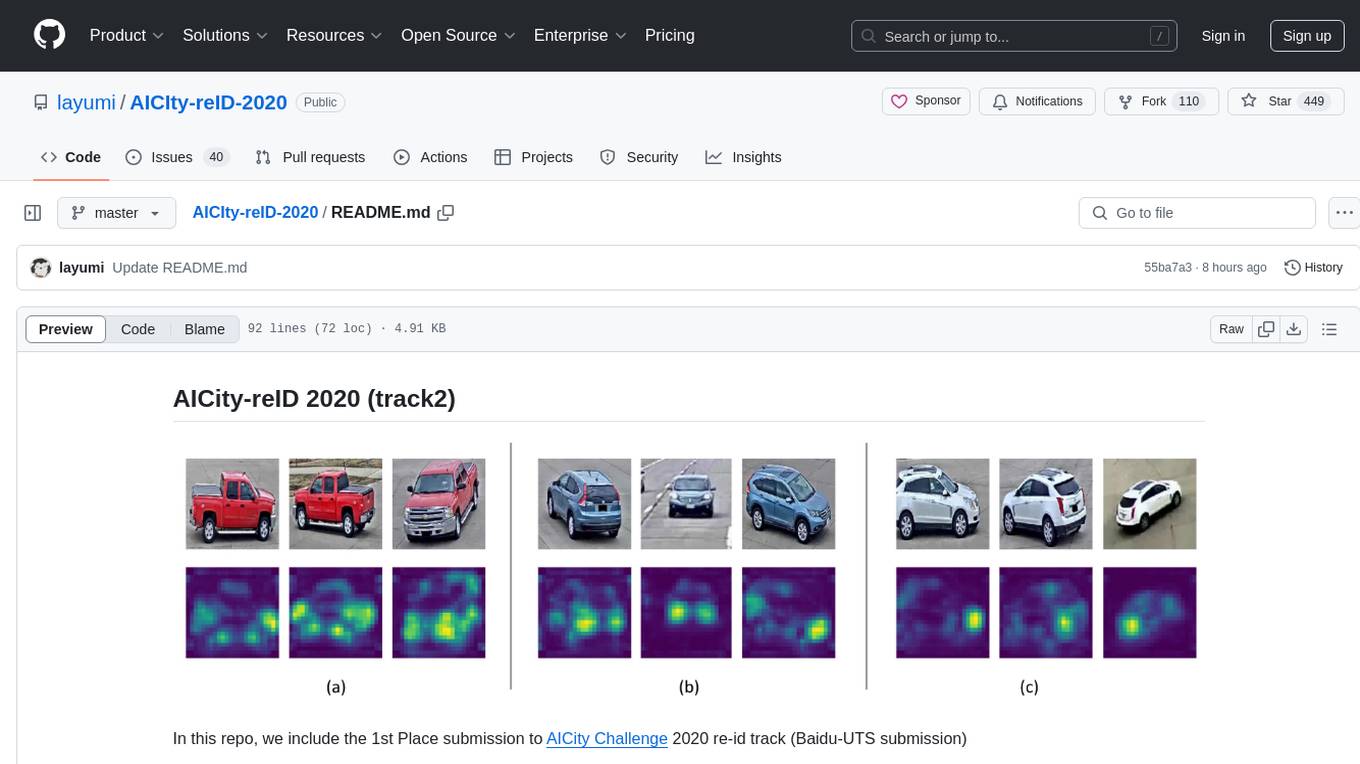

This repository contains the frontier research on explainable AI(XAI) which is a hot topic recently. From the figure below we can see the trend of interpretable/explainable AI. The publications on this topic are booming.

The figure below illustrates several use cases of XAI. Here we also divide the publications into serveal categories based on this figure. It is challenging to organise these papers well. Good to hear your voice!

Benchmarking and Survey of Explanation Methods for Black Box Models, DMKD 2023

Post-hoc Interpretability for Neural NLP: A Survey, ACM Computing Survey 2022

Explainable Artificial Intelligence (XAI): What we know and what is left to attain Trustworthy Artificial Intelligence, Information fusion 2023

Explainable Biometrics in the Age of Deep Learning, Arxiv preprint 2022

Explainable AI (XAI): Core Ideas, Techniques and Solutions, ACM Computing Survey 2022

A Review of Taxonomies of Explainable Artificial Intelligence (XAI) Methods, FaccT 2022

From Anecdotal Evidence to Quantitative Evaluation Methods: A Systematic Review on Evaluating Explainable AI, ArXiv preprint 2022. Corresponding website with collection of XAI methods

Interpretable machine learning:Fundamental principles and 10 grand challenges, Statist. Survey 2022

Teach Me to Explain: A Review of Datasets for Explainable Natural Language Processing, NeurlIPS 2021

Pitfalls of Explainable ML: An Industry Perspective, Arxiv preprint 2021

Explainable Machine Learning in Deployment, FAT 2020

The elephant in the interpretability room: Why use attention as explanation when we have saliency methods, EMNLP Workshop 2020

A Survey of the State of Explainable AI for Natural Language Processing, AACL-IJCNLP 2020

Interpretable Machine Learning – A Brief History, State-of-the-Art and Challenges, Communications in Computer and Information Science 2020

A brief survey of visualization methods for deep learning models from the perspective of Explainable AI, Information Visualization 2020

Explaining Explanations in AI, ACM FAT 2019

Machine learning interpretability: A survey on methods and metrics, Electronics, 2019

A Survey on Explainable Artificial Intelligence (XAI): Towards Medical XAI, IEEE TNNLS 2020

Interpretable machine learning: definitions, methods, and applications, Arxiv preprint 2019

Visual Analytics in Deep Learning: An Interrogative Survey for the Next Frontiers, IEEE Transactions on Visualization and Computer Graphics, 2019

Explainable Explainable Artificial Intelligence (XAI): Concepts, taxonomies, opportunities and challenges toward responsible AI, Information Fusion, 2019

Explanation in artificial intelligence: Insights from the social sciences, Artificial Intelligence 2019

Evaluating Explanation Without Ground Truth in Interpretable Machine Learning, Arxiv preprint 2019

Explanation in Human-AI Systems: A Literature Meta-Review Synopsis of Key Ideas and Publications and Bibliography for Explainable AI, DARPA XAI literature Review 2019

A survey of methods for explaining black box models, ACM Computing Surveys, 2018

Explaining Explanations: An Overview of Interpretability of Machine Learning, IEEE DSAA, 2018

Peeking Inside the Black-Box: A Survey on Explainable Artificial Intelligence (XAI), IEEE Access, 2018

Explainable artificial intelligence: A survey, MIPRO, 2018

The Mythos of Model Interpretability: In machine learning, the concept of interpretability is both important and slippery, ACM Queue 2018

How Convolutional Neural Networks See the World — A Survey of Convolutional Neural Network Visualization Methods, Mathematical Foundations of Computing 2018

Explainable Artificial Intelligence: Understanding, Visualizing and Interpreting Deep Learning Models, Arxiv 2017

Towards A Rigorous Science of Interpretable Machine Learning, Arxiv preprint 2017

Explaining Explanation, Part 1: Theoretical Foundations, IEEE Intelligent System 2017

Explaining Explanation, Part 2: Empirical Foundations, IEEE Intelligent System 2017

Explaining Explanation, Part 3: The Causal Landscape, IEEE Intelligent System 2017

Explaining Explanation, Part 4: A Deep Dive on Deep Nets, IEEE Intelligent System 2017

An accurate comparison of methods for quantifying variable importance in artificial neural networks using simulated data, Ecological Modelling 2004

Review and comparison of methods to study the contribution of variables in artificial neural network models, Ecological Modelling 2003

Explainable Artificial Intelligence (xAI) Approaches and Deep Meta-Learning Models, Advances in Deep Learning Chapter 2020

Explainable AI: Interpreting, Explaining and Visualizing Deep Learning, Springer 2019

Explanation in Artificial Intelligence: Insights from the Social Sciences, 2017 arxiv preprint

Visualizations of Deep Neural Networks in Computer Vision: A Survey, Springer Transparent Data Mining for Big and Small Data 2017

Explanatory Model Analysis Explore, Explain and Examine Predictive Models

Interpretable Machine Learning A Guide for Making Black Box Models Explainable

Limitations of Interpretable Machine Learning Methods

Interpretability and Explainability in Machine Learning, Harvard University

We mainly follow the taxonomy in the survey paper and divide the XAI/XML papers into the several branches.

Faithfulness Tests for Natural Language Explanations, ACL 2023

OpenXAI: Towards a Transparent Evaluation of Model Explanations, Arxiv 2022

From Anecdotal Evidence to Quantitative Evaluation Methods: A Systematic Review on Evaluating Explainable AI, ArXiv preprint 2022. Corresponding website with collection of XAI methods

Towards Better Understanding Attribution Methods, CVPR 2022

Evaluations and Methods for Explanation through Robustness Analysis, arxiv preprint 2020

Evaluating and Aggregating Feature-based Model Explanations, IJCAI 2020

Sanity Checks for Saliency Metrics, AAAI 2020

A benchmark for interpretability methods in deep neural networks, NIPS 2019

What Do Different Evaluation Metrics Tell Us About Saliency Models?, TPAMI 2018

Methods for interpreting and understanding deep neural networks, Digital Signal Processing 2017

Evaluating the visualization of what a Deep Neural Network has learned, IEEE Transactions on Neural Networks and Learning Systems 2015

AIF360: https://github.com/Trusted-AI/AIF360,

AIX360: https://github.com/IBM/AIX360,

Anchor: https://github.com/marcotcr/anchor, scikit-learn

Alibi: https://github.com/SeldonIO/alibi

Alibi-detect: https://github.com/SeldonIO/alibi-detect

BlackBoxAuditing: https://github.com/algofairness/BlackBoxAuditing, scikit-learn

Brain2020: https://github.com/vkola-lab/brain2020, Pytorch, 3D Brain MRI

Boruta-Shap: https://github.com/Ekeany/Boruta-Shap, scikit-learn

casme: https://github.com/kondiz/casme, Pytorch

Captum: https://github.com/pytorch/captum, Pytorch,

cnn-exposed: https://github.com/idealo/cnn-exposed, Tensorflow

ClusterShapley: https://github.com/wilsonjr/ClusterShapley, Sklearn

DALEX: https://github.com/ModelOriented/DALEX,

Deeplift: https://github.com/kundajelab/deeplift, Tensorflow, Keras

DeepExplain: https://github.com/marcoancona/DeepExplain, Tensorflow, Keras

Deep Visualization Toolbox: https://github.com/yosinski/deep-visualization-toolbox, Caffe,

dianna: https://github.com/dianna-ai/dianna, ONNX,

Eli5: https://github.com/TeamHG-Memex/eli5, Scikit-learn, Keras, xgboost, lightGBM, catboost etc.

explabox: https://github.com/MarcelRobeer/explabox, ONNX, Scikit-learn, Pytorch, Keras, Tensorflow, Huggingface

explainx: https://github.com/explainX/explainx, xgboost, catboost

ExplainaBoard: https://github.com/neulab/ExplainaBoard,

ExKMC: https://github.com/navefr/ExKMC, Python,

Facet: https://github.com/BCG-Gamma/facet, sklearn,

Grad-cam-Tensorflow: https://github.com/insikk/Grad-CAM-tensorflow, Tensorflow

GRACE: https://github.com/lethaiq/GRACE_KDD20, Pytorch

Innvestigate: https://github.com/albermax/innvestigate, tensorflow, theano, cntk, Keras

imodels: https://github.com/csinva/imodels,

InterpretML: https://github.com/interpretml/interpret

interpret-community: https://github.com/interpretml/interpret-community

Integrated-Gradients: https://github.com/ankurtaly/Integrated-Gradients, Tensorflow

Keras-grad-cam: https://github.com/jacobgil/keras-grad-cam, Keras

Keras-vis: https://github.com/raghakot/keras-vis, Keras

keract: https://github.com/philipperemy/keract, Keras

Lucid: https://github.com/tensorflow/lucid, Tensorflow

LIT: https://github.com/PAIR-code/lit, Tensorflow, specified for NLP Task

Lime: https://github.com/marcotcr/lime, Nearly all platform on Python

LOFO: https://github.com/aerdem4/lofo-importance, scikit-learn

modelStudio: https://github.com/ModelOriented/modelStudio, Keras, Tensorflow, xgboost, lightgbm, h2o

M3d-Cam: https://github.com/MECLabTUDA/M3d-Cam, PyTorch,

NeuroX: https://github.com/fdalvi/NeuroX, PyTorch,

neural-backed-decision-trees: https://github.com/alvinwan/neural-backed-decision-trees, Pytorch

Outliertree: https://github.com/david-cortes/outliertree, (Python, R, C++),

InterpretDL: https://github.com/PaddlePaddle/InterpretDL, (Python PaddlePaddle),

polyjuice: https://github.com/tongshuangwu/polyjuice, (Pytorch),

pytorch-cnn-visualizations: https://github.com/utkuozbulak/pytorch-cnn-visualizations, Pytorch

Pytorch-grad-cam: https://github.com/jacobgil/pytorch-grad-cam, Pytorch

PDPbox: https://github.com/SauceCat/PDPbox, Scikit-learn

py-ciu:https://github.com/TimKam/py-ciu/,

PyCEbox: https://github.com/AustinRochford/PyCEbox

path_explain: https://github.com/suinleelab/path_explain, Tensorflow

Quantus: https://github.com/understandable-machine-intelligence-lab/Quantus, Tensorflow, Pytorch

rulefit: https://github.com/christophM/rulefit,

rulematrix: https://github.com/rulematrix/rule-matrix-py,

Saliency: https://github.com/PAIR-code/saliency, Tensorflow

SHAP: https://github.com/slundberg/shap, Nearly all platform on Python

Shapley: https://github.com/benedekrozemberczki/shapley,

Skater: https://github.com/oracle/Skater

TCAV: https://github.com/tensorflow/tcav, Tensorflow, scikit-learn

skope-rules: https://github.com/scikit-learn-contrib/skope-rules, Scikit-learn

TensorWatch: https://github.com/microsoft/tensorwatch.git, Tensorflow

tf-explain: https://github.com/sicara/tf-explain, Tensorflow

Treeinterpreter: https://github.com/andosa/treeinterpreter, scikit-learn,

torch-cam: https://github.com/frgfm/torch-cam, Pytorch,

WeightWatcher: https://github.com/CalculatedContent/WeightWatcher, Keras, Pytorch

What-if-tool: https://github.com/PAIR-code/what-if-tool, Tensorflow

XAI: https://github.com/EthicalML/xai, scikit-learn

Xplique: https://github.com/deel-ai/xplique, Tensorflow,

https://github.com/jphall663/awesome-machine-learning-interpretability,

https://github.com/lopusz/awesome-interpretable-machine-learning,

https://github.com/pbiecek/xai_resources,

https://github.com/h2oai/mli-resources,

https://github.com/AstraZeneca/awesome-explainable-graph-reasoning,

https://github.com/utwente-dmb/xai-papers,

https://github.com/samzabdiel/XAI,

Need your help to re-organize and refine current taxonomy. Thanks very very much!

I appreciate it very much if you could add more works related to XAI/XML to this repo, archive uncategoried papers or anything to enrich this repo.

If any questions, feel free to drop me an email([email protected]). Welcome to discuss together.

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for Awesome-explainable-AI

Similar Open Source Tools

Awesome-explainable-AI

This repository contains frontier research on explainable AI (XAI), a hot topic in the field of artificial intelligence. It includes trends, use cases, survey papers, books, open courses, papers, and Python libraries related to XAI. The repository aims to organize and categorize publications on XAI, provide evaluation methods, and list various Python libraries for explainable AI.

Building-a-Small-LLM-from-Scratch

This tutorial provides a comprehensive guide on building a small Large Language Model (LLM) from scratch using PyTorch. The author shares insights and experiences gained from working on LLM projects in the industry, aiming to help beginners understand the fundamental components of LLMs and training fine-tuning codes. The tutorial covers topics such as model structure overview, attention modules, optimization techniques, normalization layers, tokenizers, pretraining, and fine-tuning with dialogue data. It also addresses specific industry-related challenges and explores cutting-edge model concepts like DeepSeek network structure, causal attention, dynamic-to-static tensor conversion for ONNX inference, and performance optimizations for NPU chips. The series emphasizes hands-on practice with small models to enable local execution and plans to expand into multimodal language models and tensor parallel multi-card deployment.

Awesome-RL-based-LLM-Reasoning

This repository is dedicated to enhancing Language Model (LLM) reasoning with reinforcement learning (RL). It includes a collection of the latest papers, slides, and materials related to RL-based LLM reasoning, aiming to facilitate quick learning and understanding in this field. Starring this repository allows users to stay updated and engaged with the forefront of RL-based LLM reasoning.

LightLLM

LightLLM is a lightweight library for linear and logistic regression models. It provides a simple and efficient way to train and deploy machine learning models for regression tasks. The library is designed to be easy to use and integrate into existing projects, making it suitable for both beginners and experienced data scientists. With LightLLM, users can quickly build and evaluate regression models using a variety of algorithms and hyperparameters. The library also supports feature engineering and model interpretation, allowing users to gain insights from their data and make informed decisions based on the model predictions.

FuseAI

FuseAI is a repository that focuses on knowledge fusion of large language models. It includes FuseChat, a state-of-the-art 7B LLM on MT-Bench, and FuseLLM, which surpasses Llama-2-7B by fusing three open-source foundation LLMs. The repository provides tech reports, releases, and datasets for FuseChat and FuseLLM, showcasing their performance and advancements in the field of chat models and large language models.

OpenNARS-for-Applications

OpenNARS-for-Applications is an implementation of a Non-Axiomatic Reasoning System, a general-purpose reasoner that adapts under the Assumption of Insufficient Knowledge and Resources. The system combines the logic and conceptual ideas of OpenNARS, event handling and procedure learning capabilities of ANSNA and 20NAR1, and the control model from ALANN. It is written in C, offers improved reasoning performance, and has been compared with Reinforcement Learning and means-end reasoning approaches. The system has been used in real-world applications such as assisting first responders, real-time traffic surveillance, and experiments with autonomous robots. It has been developed with a pragmatic mindset focusing on effective implementation of existing theory.

Awesome-local-LLM

Awesome-local-LLM is a curated list of platforms, tools, practices, and resources that help run Large Language Models (LLMs) locally. It includes sections on inference platforms, engines, user interfaces, specific models for general purpose, coding, vision, audio, and miscellaneous tasks. The repository also covers tools for coding agents, agent frameworks, retrieval-augmented generation, computer use, browser automation, memory management, testing, evaluation, research, training, and fine-tuning. Additionally, there are tutorials on models, prompt engineering, context engineering, inference, agents, retrieval-augmented generation, and miscellaneous topics, along with a section on communities for LLM enthusiasts.

intro_pharma_ai

This repository serves as an educational resource for pharmaceutical and chemistry students to learn the basics of Deep Learning through a collection of Jupyter Notebooks. The content covers various topics such as Introduction to Jupyter, Python, Cheminformatics & RDKit, Linear Regression, Data Science, Linear Algebra, Neural Networks, PyTorch, Convolutional Neural Networks, Transfer Learning, Recurrent Neural Networks, Autoencoders, Graph Neural Networks, and Summary. The notebooks aim to provide theoretical concepts to understand neural networks through code completion, but instructors are encouraged to supplement with their own lectures. The work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

AI-System-School

AI System School is a curated list of research in machine learning systems, focusing on ML/DL infra, LLM infra, domain-specific infra, ML/LLM conferences, and general resources. It provides resources such as data processing, training systems, video systems, autoML systems, and more. The repository aims to help users navigate the landscape of AI systems and machine learning infrastructure, offering insights into conferences, surveys, books, videos, courses, and blogs related to the field.

llm_illustrated

llm_illustrated is an electronic book that visually explains various technical aspects of large language models using clear and easy-to-understand images. The book covers topics such as self-attention structure and code, absolute position encoding, KV cache visualization, transformers composition, and a relationship graph of participants in the Dartmouth Conference. The progress of the book is less than 10%, and readers can stay updated by following the WeChat official account and replying 'learn large models through images'. The PDF layout and Latex formatting are still being adjusted.

AICIty-reID-2020

AICIty-reID 2020 is a repository containing the 1st Place submission to AICity Challenge 2020 re-id track by Baidu-UTS. It includes models trained on Paddlepaddle and Pytorch, with performance metrics and trained models provided. Users can extract features, perform camera and direction prediction, and access related repositories for drone-based building re-id, vehicle re-ID, person re-ID baseline, and person/vehicle generation. Citations are also provided for research purposes.

IvyGPT

IvyGPT is a medical large language model that aims to generate the most realistic doctor consultation effects. It has been fine-tuned on high-quality medical Q&A data and trained using human feedback reinforcement learning. The project features full-process training on medical Q&A LLM, multiple fine-tuning methods support, efficient dataset creation tools, and a dataset of over 300,000 high-quality doctor-patient dialogues for training.

AutoPatent

AutoPatent is a multi-agent framework designed for automatic patent generation. It challenges large language models to generate full-length patents based on initial drafts. The framework leverages planner, writer, and examiner agents along with PGTree and RRAG to craft lengthy, intricate, and high-quality patent documents. It introduces a new metric, IRR (Inverse Repetition Rate), to measure sentence repetition within patents. The tool aims to streamline the patent generation process by automating the creation of detailed and specialized patent documents.

For similar tasks

Awesome-explainable-AI

This repository contains frontier research on explainable AI (XAI), a hot topic in the field of artificial intelligence. It includes trends, use cases, survey papers, books, open courses, papers, and Python libraries related to XAI. The repository aims to organize and categorize publications on XAI, provide evaluation methods, and list various Python libraries for explainable AI.

pytorch-grad-cam

This repository provides advanced AI explainability for PyTorch, offering state-of-the-art methods for Explainable AI in computer vision. It includes a comprehensive collection of Pixel Attribution methods for various tasks like Classification, Object Detection, Semantic Segmentation, and more. The package supports high performance with full batch image support and includes metrics for evaluating and tuning explanations. Users can visualize and interpret model predictions, making it suitable for both production and model development scenarios.

AwesomeResponsibleAI

Awesome Responsible AI is a curated list of academic research, books, code of ethics, courses, data sets, frameworks, institutes, newsletters, principles, podcasts, reports, tools, regulations, and standards related to Responsible, Trustworthy, and Human-Centered AI. It covers various concepts such as Responsible AI, Trustworthy AI, Human-Centered AI, Responsible AI frameworks, AI Governance, and more. The repository provides a comprehensive collection of resources for individuals interested in ethical, transparent, and accountable AI development and deployment.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features: * Self-contained, with no need for a DBMS or cloud service. * OpenAPI interface, easy to integrate with existing infrastructure (e.g Cloud IDE). * Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.