ai-engine-direct-helper

QAI AppBuilder is designed to help developers easily execute models on WoS and Linux platforms. It encapsulates the Qualcomm® AI Runtime SDK APIs into a set of simplified interfaces for running models on the NPU/HTP.

Stars: 125

A simple way to build AI applications based on Qualcomm® AI Runtime SDK. QAI AppBuilder simplifies the deployment of QNN models by encapsulating model execution APIs into simplified interfaces for loading models onto NPU/HTP and performing inference. It supports both C++ and Python projects, Windows and Linux platforms, Genie (Large Language Model), LLM on CPU & NPU, multimodal LLM, float & native input/output data, multi-graph, LoRA, multiple models, inputs & outputs. It makes app development easier, model testing faster, and provides plenty of sample code.

README:

This software is provided “as is,” without any express or implied warranties. The authors and contributors shall not be held liable for any damages arising from its use. The code may be incomplete or insufficiently tested. Users are solely responsible for evaluating its suitability and assume all associated risks.

Note: Contributions are welcome. Please ensure thorough testing before deploying in critical systems.

The Quick AI Application Builder (this repository) is also referred to as "QAI AppBuilder" in the source code and documentation.

QAI AppBuilder is an extension of the Qualcomm® AI Runtime SDK, which is used to simplify the deployment of QNN models. Some libraries from the Qualcomm® AI Runtime SDK are required to use QAI AppBuilder.

QAI AppBuilder is designed to help developers easily use the Qualcomm® AI Runtime SDK to execute models on Windows on Snapdragon (WoS) and Linux platforms. It encapsulates the model execution APIs into a set of simplified interfaces for loading models onto the NPU/HTP and performing inference. It substantially lowers the complexity of model deployment for developers.

We provide a wealth of samples to help you quickly explore the features of QAI AppBuilder. For some key examples, we also offer scripts to assist in setting up the relevant environment efficiently. You can learn how to use these scripts through QAI AppBuilder Launcher, enabling you to experience the core functionalities within an hour.

QAI AppBuilder Guide English | Chinese

GenieAPIService (OpenAI Compatible API Service) English | Chinese

Qwen2.5-VL-3B On-Device Deployment English | Chinese

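QAI AppBuilder: Bringing Local AI Deployment Within Reach!

Large Language Model Series (1): Get Started in 3 Minutes and Deploy DeepSeek on a Snapdragon AI PC!

Large Language Model Series (2): Configuring and Deploying a Local OpenAI-Compatible API Service

Large Language Model Series (3): On-Device Deployment of the Qwen2.5-VL-3B Multimodal Model

Selected Large Language Models for Qualcomm Platforms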

QAI AppBuilder on Linux (QCS6490)

Qwen2 7B SSD Tutorial

Qwen2.5 3B Tutorial

Genie API Service Configuration and Usage

GenieChat: Building a Local LLM-Powered Android App with Genie API Service

SuperResolutionApp: Image Super-Resolution Android Development Sample

BGE-Base-Zh-V1.5 On-Device Tutorial

Developers can use QAI AppBuilder in both C++ and Python projects

• Support both C++ & Python

• Support both Windows & Linux

• Support Genie (Large Language Model)

• Support LLM on both CPU & NPU [NEW!]

• Support Multimodal LLM [NEW!]

• Support Float & Native Input & Output Data [NEW!]

• Support Multi Graph

• Support LoRA

• Support multiple models

• Support multiple inputs & outputs

• Easier for developing apps

• Faster for testing models

• Plenty of sample code

** Support ARM64 Windows, Linux and Ubuntu (e.g.: X Elite Windows, QCS8550 Linux and QCM6490 Ubuntu).

** Support OpenAI Compatible API Service (GenieAPIService) on WoS, Android and Linux.

** Using "native" mode for input & output data can noticeably improve data conversion performance. Refer to the User Guide & Whisper sample code.

Refer to python.md for instructions on setting up the Python (x64 version) environment to use QAI AppBuilder on Windows on Snapdragon (WoS) platforms.

You can also run the batch file from QAI AppBuilder Launcher to set up the environment automatically.

We have developed several WebUI AI applications based on QAI AppBuilder, allowing you to experience them quickly.

All these applications run on a local PC, requiring no internet connection and are completely free.

You can run the WebUI AI applications through the batch file 3.Start_WebUI.bat.

| App | Description |
|---|---|
| ImageRepairApp | An image restoration tool designed to repair old or damaged photographs. |
| StableDiffusionApp | A text-to-image generation tool that creates images based on user input. |
| GenieWebUI | A large language model (LLM) interface that enables interactive conversations. |

Considering that the current mainstream method for invoking LLMs is based on OpenAI-compatible APIs, we have implemented such interfaces in both C++ and Python. This allows application developers to interact with the local large language model running on NPU in a familiar way.

Many third-party applications that support the OpenAI API can switch to the local NPU-based model simply by changing the API endpoint address.

We have also implemented client sample code for Genie API Service in both C++ and Python for developers' reference.

- Python based service: Guide to run OpenAI compatible API services developed with Python.

- C++ based service: Guide to run OpenAI compatible API services developed with C++.
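As a rough illustration of what "changing the API endpoint" can look like from the client side, the sketch below builds an OpenAI-style chat completion request with only the Python standard library. The base URL, port, and model name are placeholders assumed for this example, not values documented by this project; take the real ones from the service guides above. The request path and response shape follow the public OpenAI chat completions convention, which an OpenAI-compatible service is expected to mirror.

```python
import json
import urllib.request

# Hypothetical values for illustration only; replace them with the host,
# port, and model name that your local service actually uses.
BASE_URL = "http://127.0.0.1:8000/v1"
MODEL_NAME = "local-model"


def build_chat_request(base_url, model, user_message):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
        "stream": False,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_chat_request(request, timeout=60):
    """Send the request and return the assistant's reply text.

    Assumes the service answers with the usual OpenAI response layout:
    {"choices": [{"message": {"content": "..."}}]}.
    """
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Building the request needs no running service, so this line is always safe.
request = build_chat_request(BASE_URL, MODEL_NAME, "Hello")
print(request.full_url)
```

Calling `send_chat_request(request)` requires the local service to be running. Pointing the same client at a different backend only means changing `BASE_URL`.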

We have many Python and C++ samples that can be run directly. The models used in these samples are from AI-Hub.

We suggest running these samples before you start writing your own code.

We provide a LangFlow package for running QNN models in the third-party LangFlow framework. You can get more information here.

We provide QAI AppBuilder Launcher, enabling you to experience the core functionalities of QAI AppBuilder within an hour.

DLC2BIN is a guide to help you convert the general DLC model format into the BIN format optimized for a specific platform.

ONNX2BIN is a guide to help you convert the ONNX model format into the BIN format optimized for a specific platform.

ONNXRT2QNNRT is a wrapper that runs ONNX inference code with a QNN model by switching to the QNN runtime automatically.

Qwen2 7B SSD

DeepSeek-R1-Distill-Qwen-7B

stable-diffusion-webui Extension

Blender ControlNet Plugin

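Seamless Photo Retouching Software

Image Super-Resolution Tool

Image Super-Resolution App

Video Super-Resolution App

Image Object Remover

Image Search App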

There are two ways to use QAI AppBuilder:

Download the prebuilt binary package QAI_AppBuilder-win_arm64-{Qualcomm® AI Runtime SDK version}-Release.zip from the releases page: https://github.com/quic/ai-engine-direct-helper/releases

Download the Python extension qai_appbuilder-{version}-cp312-cp312-win_amd64.whl from https://github.com/quic/ai-engine-direct-helper/releases and install it with the command below:

pip install qai_appbuilder-{version}-cp312-cp312-win_amd64.whl
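The wheel filename encodes which interpreter it targets: `cp312` is CPython 3.12 and `win_amd64` is 64-bit x64 Windows, which is consistent with the x64 Python requirement mentioned earlier. The sketch below is a simplified, standard-library-only check of whether the running interpreter matches a wheel's tags. It assumes CPython, ignores compressed tag sets and `abi3` wheels, and uses `0.0.0` as a placeholder version rather than a real release number. `pip` performs the authoritative check when you install.

```python
import sys
import sysconfig


def parse_wheel_tags(filename):
    """Split a wheel filename into name, version, and compatibility tags."""
    stem = filename.removesuffix(".whl")
    parts = stem.split("-")
    # Wheel names follow {name}-{version}[-{build}]-{python}-{abi}-{platform}.
    if len(parts) not in (5, 6):
        raise ValueError(f"not a valid wheel filename: {filename}")
    python_tag, abi_tag, platform_tag = parts[-3:]
    return {
        "name": parts[0],
        "version": parts[1],
        "python": python_tag,
        "abi": abi_tag,
        "platform": platform_tag,
    }


def interpreter_tags():
    """Return the (python, platform) tags of the running interpreter.

    Simplification: assumes CPython and derives the platform tag from
    sysconfig, which reflects how the interpreter itself was built.
    """
    python_tag = f"cp{sys.version_info.major}{sys.version_info.minor}"
    platform_tag = sysconfig.get_platform().replace("-", "_").replace(".", "_")
    return python_tag, platform_tag


def wheel_matches_interpreter(filename):
    """Return True if the wheel's tags equal the running interpreter's tags."""
    tags = parse_wheel_tags(filename)
    python_tag, platform_tag = interpreter_tags()
    return tags["python"] == python_tag and tags["platform"] == platform_tag


# "0.0.0" is a placeholder version used only for this illustration.
wheel = "qai_appbuilder-0.0.0-cp312-cp312-win_amd64.whl"
print(parse_wheel_tags(wheel))
print("matches this interpreter:", wheel_matches_interpreter(wheel))
```

If the check reports a mismatch, the likely cause is a Python build other than the x64 3.12 one that the wheel name implies.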

Refer to the User Guide for how to use QAI AppBuilder to program AI applications.

Refer to tutorial.ipynb to set up and run a CV model step by step.

You can download the pre-compiled version you need from Release and use it directly. If you prefer to compile it yourself, refer to BUILD.md.

QAI AppBuilder is licensed under the BSD 3-clause "New" or "Revised" License. Check out the LICENSE for more details.


Alternative AI tools for ai-engine-direct-helper

Similar Open Source Tools


ChatterUI

ChatterUI is a mobile app that allows users to manage chat files and character cards, and to interact with Large Language Models (LLMs). It supports multiple backends, including local, koboldcpp, text-generation-webui, Generic Text Completions, AI Horde, Mancer, Open Router, and OpenAI. ChatterUI provides a mobile-friendly interface for interacting with LLMs, making it easy to use them for a variety of tasks, such as generating text, translating languages, writing code, and answering questions.

toolmate

ToolMate AI is an advanced AI companion that integrates agents, tools, and plugins to excel in conversations, generative work, and task execution. It supports multi-step actions, allowing users to customize workflows for tackling complex projects with ease. The tool offers a wide range of AI backends and models, including Ollama, Llama.cpp, Groq Cloud API, OpenAI API, and Google Gemini via Vertex AI. Users can easily switch between backends and leverage AI models like wizardlm2 and mixtral. ToolMate AI stands out for its distinctive features such as tool calling for any LLMs, running multiple tools in one go, highly customizable plugins, and integration with popular AI tools. It also supports quick tool calling using '@' notation and enables the execution of computing tasks on demand. With features like multiple tools in one go, customizable plugins, system command and fabric integration, GPU offloading support, real-time data access, and device information retrieval, ToolMate AI offers a comprehensive solution for various tasks and content creation.

devchat

DevChat is an open-source workflow engine that enables developers to create intelligent, automated workflows for engaging with users through a chat panel within their IDEs. It combines script writing flexibility, latest AI models, and an intuitive chat GUI to enhance user experience and productivity. DevChat simplifies the integration of AI in software development, unlocking new possibilities for developers.

singulatron

Singulatron is an AI Superplatform that runs on your computer(s) and server(s) without using third party APIs, providing complete control over data and privacy. It offers AI functionality, user management, supports different database backends, collaboration, and mini-apps. It aims to be a desktop app for local usage and a distributed daemon for servers, with a web app frontend client. The tool is stack-based on Electron, Angular, and Go, and currently dual-licensed under AGPL-3.0-or-later and a commercial license.

superplatform

Superplatform is a microservices platform focused on distributed AI management and development. It enables users to self-host AI models, build backendless AI apps, develop microservices-based AI applications, and deploy third-party AI apps easily. The platform supports running open-source AI models privately, building apps leveraging AI models, and utilizing a microservices-based communal backend for diverse projects.

mattermost-plugin-ai

The Mattermost AI Copilot Plugin is an extension that adds functionality for local and third-party LLMs within Mattermost v9.6 and above. It is currently experimental and allows users to interact with AI models seamlessly. The plugin enhances the user experience by providing AI-powered assistance and features for communication and collaboration within the Mattermost platform.

LafTools

LafTools is a privacy-first, self-hosted, fully open source toolbox designed for programmers. It offers a wide range of tools, including code generation, translation, encryption, compression, data analysis, and more. LafTools is highly integrated with a productive UI and supports full GPT-alike functionality. It is available as Docker images and portable edition, with desktop edition support planned for the future.

chatgpt-api

Chat Worm is a ChatGPT client that provides access to the API for generating text using OpenAI's GPT models. It works as a single-page application directly communicating with the API, allowing users to interact with the latest GPT-4 model if they have access. The project includes web, Android, and Windows apps for easy access. Users can set up local development, contribute improvements via pull requests, report bugs or request features on GitHub, deploy to production servers, and release on different app stores. The project is licensed under the MIT License.

connery-sdk

Connery SDK is an open-source NPM package that provides an SDK and CLI for developing plugins and actions. The SDK offers a JavaScript API to define plugins and actions, which are then packaged into a plugin server with a standardized REST API. This enables automation in the development process and simplifies handling authorization, input validation, and logging. Users can focus on the logic of their actions while the standardized API allows various clients to interact with actions uniformly. Actions can communicate with external APIs, databases, or services, making it versatile for creating AI plugins and actions.

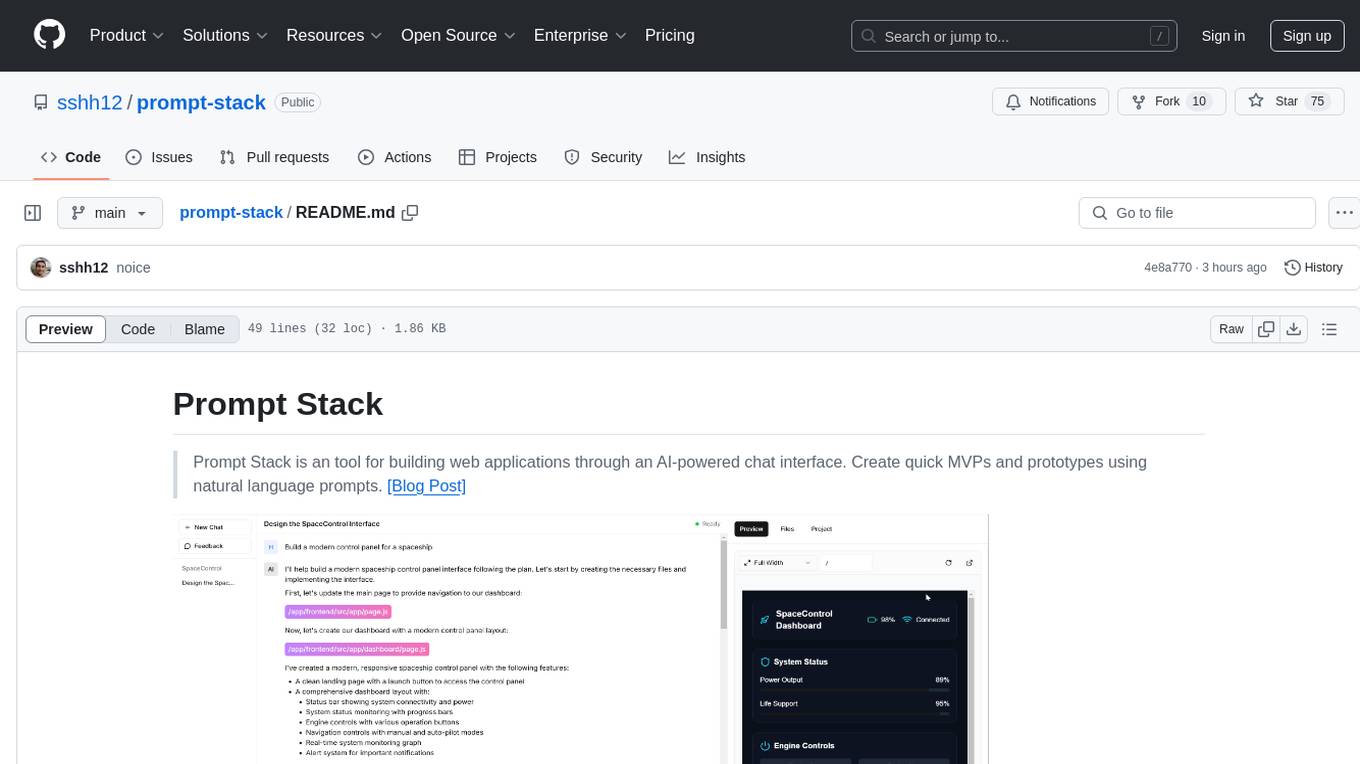

prompt-stack

Prompt Stack is a tool for building web applications using an AI-powered chat interface. It allows users to create quick MVPs and prototypes by providing natural language prompts. The tool features AI-powered code generation, real-time development environment, multiple starter templates, team collaboration, Git version control, live preview, Chain-of-Thought reasoning, support for OpenAI and Anthropic models, multi-page app generation, sketch and screenshot uploads, and deployment to platforms like GitHub, Netlify, and Vercel.

module-ballerinax-ai.agent

This library provides functionality required to build ReAct Agent using Large Language Models (LLMs).

letmedoit

LetMeDoIt AI is a virtual assistant designed to revolutionize the way you work. It goes beyond being a mere chatbot by offering a unique and powerful capability - the ability to execute commands and perform computing tasks on your behalf. With LetMeDoIt AI, you can access OpenAI ChatGPT-4, Google Gemini Pro, and Microsoft AutoGen, local LLMs, all in one place, to enhance your productivity.

Ollamac

Ollamac is a macOS app designed for interacting with Ollama models. It is optimized for macOS, allowing users to easily use any model from the Ollama library. The app features a user-friendly interface, chat archive for saving interactions, and real-time communication using HTTP streaming technology. Ollamac is open-source, enabling users to contribute to its development and enhance its capabilities. It requires macOS 14 or later and the Ollama system to be installed on the user's Mac with at least one Ollama model downloaded.

slidev-ai

Slidev AI is a web app that leverages LLM (Large Language Model) technology to make creating Slidev-based online presentations elegant and effortless. It is designed to help engineers and academics quickly produce content-focused, minimalist PPTs that are easily shareable online. This project serves as a reference implementation for OpenMCP agent development, a production-ready presentation generation solution, and a template for creating domain-specific AI agents.

ComfyUIMini

ComfyUI Mini is a lightweight and mobile-friendly frontend designed to run ComfyUI workflows. It allows users to save workflows locally on their device or PC, easily import workflows, and view generation progress information. The tool requires ComfyUI to be installed on the PC and a modern browser with WebSocket support on the mobile device. Users can access the WebUI by running the app and connecting to the local address of the PC. ComfyUI Mini provides a simple and efficient way to manage workflows on mobile devices.

For similar tasks

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.

AI-in-a-Box

AI-in-a-Box is a curated collection of solution accelerators that can help engineers establish their AI/ML environments and solutions rapidly and with minimal friction, while maintaining the highest standards of quality and efficiency. It provides essential guidance on the responsible use of AI and LLM technologies, specific security guidance for Generative AI (GenAI) applications, and best practices for scaling OpenAI applications within Azure. The available accelerators include: Azure ML Operationalization in-a-box, Edge AI in-a-box, Doc Intelligence in-a-box, Image and Video Analysis in-a-box, Cognitive Services Landing Zone in-a-box, Semantic Kernel Bot in-a-box, NLP to SQL in-a-box, Assistants API in-a-box, and Assistants API Bot in-a-box.

spring-ai

The Spring AI project provides a Spring-friendly API and abstractions for developing AI applications. It offers a portable client API for interacting with generative AI models, enabling developers to easily swap out implementations and access various models like OpenAI, Azure OpenAI, and HuggingFace. Spring AI also supports prompt engineering, providing classes and interfaces for creating and parsing prompts, as well as incorporating proprietary data into generative AI without retraining the model. This is achieved through Retrieval Augmented Generation (RAG), which involves extracting, transforming, and loading data into a vector database for use by AI models. Spring AI's VectorStore abstraction allows for seamless transitions between different vector database implementations.

ragstack-ai

RAGStack is an out-of-the-box solution simplifying Retrieval Augmented Generation (RAG) in GenAI apps. RAGStack includes the best open-source for implementing RAG, giving developers a comprehensive Gen AI Stack leveraging LangChain, CassIO, and more. RAGStack leverages the LangChain ecosystem and is fully compatible with LangSmith for monitoring your AI deployments.

breadboard

Breadboard is a library for prototyping generative AI applications. It is inspired by the hardware maker community and their boundless creativity. Breadboard makes it easy to wire prototypes and share, remix, reuse, and compose them. The library emphasizes ease and flexibility of wiring, as well as modularity and composability.

cloudflare-ai-web

Cloudflare-ai-web is a lightweight and easy-to-use tool that allows you to quickly deploy a multi-modal AI platform using Cloudflare Workers AI. It supports serverless deployment, password protection, and local storage of chat logs. With a size of only ~638 kB gzip, it is a great option for building AI-powered applications without the need for a dedicated server.

app-builder

AppBuilder SDK is a one-stop development tool for AI native applications, providing basic cloud resources, AI capability engine, Qianfan large model, and related capability components to improve the development efficiency of AI native applications.

cookbook

This repository contains community-driven practical examples of building AI applications and solving various tasks with AI using open-source tools and models. Everyone is welcome to contribute, and we value everybody's contribution! There are several ways you can contribute to the Open-Source AI Cookbook: Submit an idea for a desired example/guide via GitHub Issues. Contribute a new notebook with a practical example. Improve existing examples by fixing issues/typos. Before contributing, check currently open issues and pull requests to avoid working on something that someone else is already working on.

For similar jobs

promptflow

**Prompt flow** is a suite of development tools designed to streamline the end-to-end development cycle of LLM-based AI applications, from ideation, prototyping, testing, evaluation to production deployment and monitoring. It makes prompt engineering much easier and enables you to build LLM apps with production quality.

deepeval

DeepEval is a simple-to-use, open-source LLM evaluation framework specialized for unit testing LLM outputs. It incorporates various metrics such as G-Eval, hallucination, answer relevancy, RAGAS, etc., and runs locally on your machine for evaluation. It provides a wide range of ready-to-use evaluation metrics, allows for creating custom metrics, integrates with any CI/CD environment, and enables benchmarking LLMs on popular benchmarks. DeepEval is designed for evaluating RAG and fine-tuning applications, helping users optimize hyperparameters, prevent prompt drifting, and transition from OpenAI to hosting their own Llama2 with confidence.

MegaDetector

MegaDetector is an AI model that identifies animals, people, and vehicles in camera trap images (which also makes it useful for eliminating blank images). This model is trained on several million images from a variety of ecosystems. MegaDetector is just one of many tools that aim to make conservation biologists more efficient with AI. If you want to learn about other ways to use AI to accelerate camera trap workflows, check out our overview of the field, affectionately titled "Everything I know about machine learning and camera traps".

leapfrogai

LeapfrogAI is a self-hosted AI platform designed to be deployed in air-gapped resource-constrained environments. It brings sophisticated AI solutions to these environments by hosting all the necessary components of an AI stack, including vector databases, model backends, API, and UI. LeapfrogAI's API closely matches that of OpenAI, allowing tools built for OpenAI/ChatGPT to function seamlessly with a LeapfrogAI backend. It provides several backends for various use cases, including llama-cpp-python, whisper, text-embeddings, and vllm. LeapfrogAI leverages Chainguard's apko to harden base python images, ensuring the latest supported Python versions are used by the other components of the stack. The LeapfrogAI SDK provides a standard set of protobuffs and python utilities for implementing backends and gRPC. LeapfrogAI offers UI options for common use-cases like chat, summarization, and transcription. It can be deployed and run locally via UDS and Kubernetes, built out using Zarf packages. LeapfrogAI is supported by a community of users and contributors, including Defense Unicorns, Beast Code, Chainguard, Exovera, Hypergiant, Pulze, SOSi, United States Navy, United States Air Force, and United States Space Force.

llava-docker

This Docker image for LLaVA (Large Language and Vision Assistant) provides a convenient way to run LLaVA locally or on RunPod. LLaVA is a powerful AI tool that combines natural language processing and computer vision capabilities. With this Docker image, you can easily access LLaVA's functionalities for various tasks, including image captioning, visual question answering, text summarization, and more. The image comes pre-installed with LLaVA v1.2.0, Torch 2.1.2, xformers 0.0.23.post1, and other necessary dependencies. You can customize the model used by setting the MODEL environment variable. The image also includes a Jupyter Lab environment for interactive development and exploration. Overall, this Docker image offers a comprehensive and user-friendly platform for leveraging LLaVA's capabilities.

carrot

The 'carrot' repository on GitHub provides a list of free and user-friendly ChatGPT mirror sites for easy access. The repository includes sponsored sites offering various GPT models and services. Users can find and share sites, report errors, and access stable and recommended sites for ChatGPT usage. The repository also includes a detailed list of ChatGPT sites, their features, and accessibility options, making it a valuable resource for ChatGPT users seeking free and unlimited GPT services.

TrustLLM

TrustLLM is a comprehensive study of trustworthiness in LLMs, including principles for different dimensions of trustworthiness, established benchmark, evaluation, and analysis of trustworthiness for mainstream LLMs, and discussion of open challenges and future directions. Specifically, we first propose a set of principles for trustworthy LLMs that span eight different dimensions. Based on these principles, we further establish a benchmark across six dimensions including truthfulness, safety, fairness, robustness, privacy, and machine ethics. We then present a study evaluating 16 mainstream LLMs in TrustLLM, consisting of over 30 datasets. The document explains how to use the trustllm python package to help you assess the performance of your LLM in trustworthiness more quickly. For more details about TrustLLM, please refer to project website.

AI-YinMei

AI-YinMei is a development tool for AI virtual anchors (VTubers), in a version for NVIDIA GPUs ("N card version"). It supports fastgpt knowledge-base chat and a complete LLM solution stack ([fastgpt] + [one-api] + [Xinference]); replying to bilibili live-stream bullet comments and greeting viewers who enter the stream; speech synthesis with Microsoft edge-tts, Bert-VITS2, and GPT-SoVITS; expression control through Vtuber Studio; stable-diffusion-webui image generation output to an OBS live room; NSFW detection for generated images (public-NSFW-y-distinguish); search and image search through duckduckgo (requires a proxy to access) and image search through Baidu (no proxy required); an AI reply chat box [html plug-in]; AI singing with Auto-Convert-Music; a playlist [html plug-in]; dancing, expression video playback, head-touching and gift-smashing actions; automatic dancing when singing starts and automatic swaying during chat and singing; multi-scene switching, background music switching, and automatic day/night scene switching; and open-ended singing and painting requests, where the AI decides what to do based on the content.