ScreenAgent

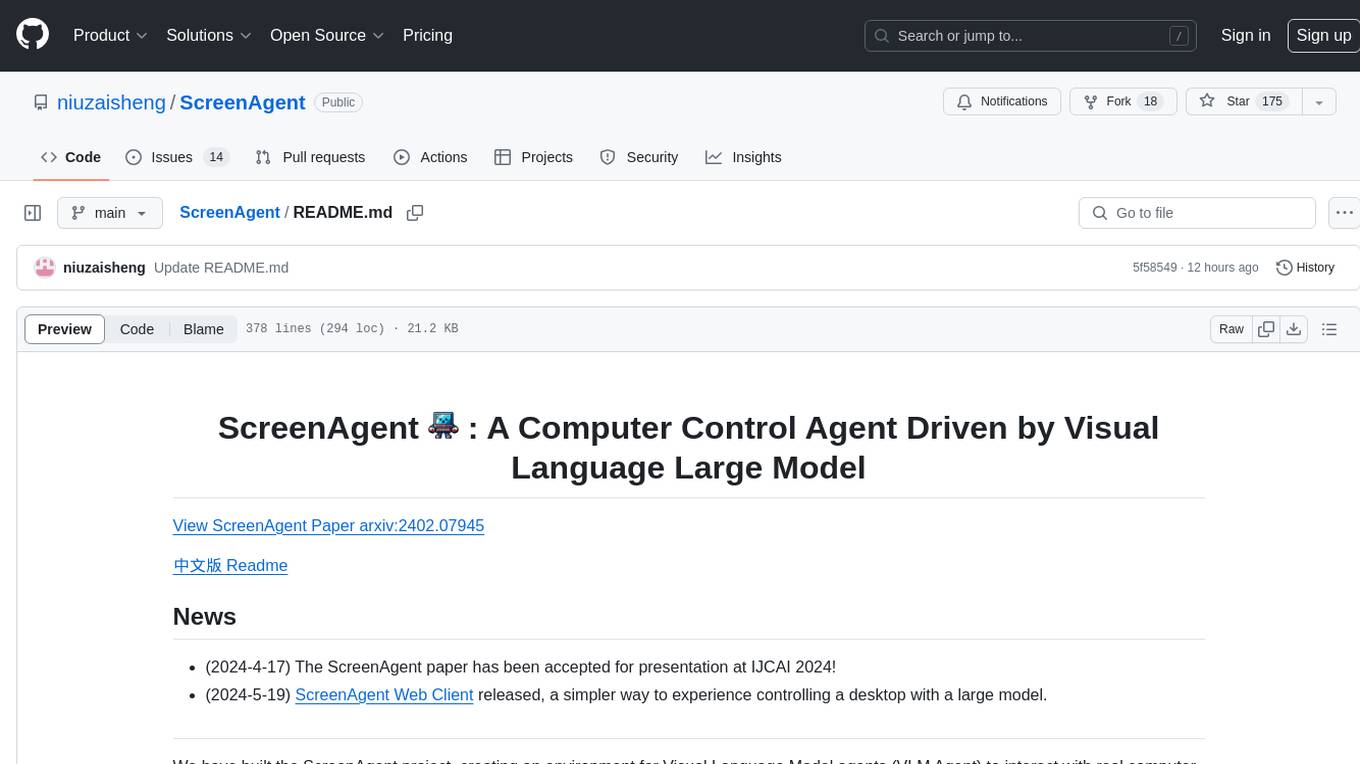

ScreenAgent: A Computer Control Agent Driven by Visual Language Large Model

ScreenAgent is a project focused on creating an environment for Visual Language Model agents (VLM Agent) to interact with real computer screens. The project includes designing an automatic control process for agents to interact with the environment and complete multi-step tasks. It also involves building the ScreenAgent dataset, which collects screenshots and action sequences for various daily computer tasks. The project provides controller client code, configuration files, and model training code so that users can control a desktop with a large model.

README:

View ScreenAgent Paper arxiv:2402.07945

- (2024-4-17) The ScreenAgent paper has been accepted for presentation at IJCAI 2024!

- (2024-5-19) ScreenAgent Web Client released, a simpler way to experience controlling a desktop with a large model.

We have built the ScreenAgent project, creating an environment for Visual Language Model agents (VLM Agent) to interact with real computer screens. In this environment, the agent can observe screenshots and manipulate the GUI by outputting mouse and keyboard operations. We have also designed an automatic control process, which includes planning, action, and reflection stages, guiding the agent to continuously interact with the environment and complete multi-step tasks. In addition, we have built the ScreenAgent dataset, which collects screenshots and action sequences when completing various daily computer tasks.

To guide the VLM Agent to interact continuously with the computer screen, we have built a process that includes "planning-execution-reflection". In the planning phase, the Agent is asked to break down the user task into subtasks. In the execution phase, the Agent will observe the screenshot and give specific mouse and keyboard actions to execute the subtasks. The controller will execute these actions and feed back the execution results to the Agent. In the reflection phase, the Agent will observe the execution results, judge the current state, and choose to continue execution, retry, or adjust the plan. This process will continue until the task is completed.
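The phase transitions described above can be sketched as a small state machine. This is a minimal illustration, not the controller's actual code: the names `Phase`, `Verdict`, and `next_phase` are hypothetical, and the three verdicts simply mirror the "continue, retry, or adjust the plan" choices of the reflection phase.

```python
from enum import Enum


class Phase(Enum):
    PLANNING = "planning"
    EXECUTION = "execution"
    REFLECTION = "reflection"
    DONE = "done"


class Verdict(Enum):
    # Hypothetical labels for the three outcomes of the reflection phase.
    CONTINUE = "continue"  # subtask succeeded, move on to the next one
    RETRY = "retry"        # repeat the current subtask
    REPLAN = "replan"      # go back to planning


def next_phase(phase, verdict=None, subtasks_left=0):
    """Return the phase that follows `phase`.

    `verdict` is only consulted after reflection. `subtasks_left` is the
    number of subtasks still to do once the current one has succeeded.
    """
    if phase is Phase.PLANNING:
        return Phase.EXECUTION
    if phase is Phase.EXECUTION:
        return Phase.REFLECTION
    if phase is Phase.REFLECTION:
        if verdict is Verdict.REPLAN:
            return Phase.PLANNING
        if verdict is Verdict.RETRY:
            return Phase.EXECUTION
        if verdict is Verdict.CONTINUE:
            return Phase.EXECUTION if subtasks_left > 0 else Phase.DONE
        raise ValueError("the reflection phase requires a verdict")
    return Phase.DONE
```

Keeping the transition logic in a pure function makes it easy to test separately from screenshot capture and model calls.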

We designed the Agent's action space with reference to the VNC remote desktop connection protocol, so it consists only of the most basic mouse and keyboard operations. Most mouse click operations require the Agent to give the exact screen coordinates. Compared with calling specific APIs to complete tasks, this method is more universal and can be applied to various desktop operating systems and applications.
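To make the idea of a low-level action space concrete, here is a minimal sketch of how such actions could be represented and checked. The class names, fields, and the `validate` helper are assumptions for illustration only; the project's real action definitions may differ.

```python
from dataclasses import dataclass
from typing import Tuple, Union


@dataclass(frozen=True)
class MouseClick:
    # Absolute pixel coordinates on the remote screen (hypothetical fields).
    x: int
    y: int
    button: str = "left"


@dataclass(frozen=True)
class KeyPress:
    key: str


@dataclass(frozen=True)
class TypeText:
    text: str


Action = Union[MouseClick, KeyPress, TypeText]


def validate(action: Action, screen_size: Tuple[int, int]) -> Action:
    """Return the action unchanged, or raise ValueError for an invalid click."""
    width, height = screen_size
    if isinstance(action, MouseClick):
        if not (0 <= action.x < width and 0 <= action.y < height):
            raise ValueError(
                f"click at ({action.x}, {action.y}) is outside {width}x{height}"
            )
        if action.button not in ("left", "middle", "right"):
            raise ValueError(f"unknown mouse button: {action.button}")
    return action
```

Because clicks carry exact coordinates, checking them against the screen resolution (for example the 1024x768 used by the Docker image) catches out-of-range model outputs before they are sent to the VNC Server.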

Teaching the Agent to use a computer is not simple. It requires a combination of abilities, including task planning, image understanding, visual positioning, and tool use. For this reason, we manually annotated the ScreenAgent dataset, which covers a variety of daily computer tasks, including file operations, web browsing, and gaming and entertainment. Each session is built according to the "planning-execution-reflection" process described above.

The project mainly includes the following parts:

ScreenAgent
├── client # Controller client code
│   ├── prompt # Prompt template
│   ├── config.yml # Controller client configuration file template
│   └── tasks.txt # Task list
├── data # Contains the ScreenAgent dataset and other vision positioning related datasets
├── model_workers # VLM inferencer
└── train # Model training code

First, prepare the desktop operating system to be controlled and install a VNC Server on it, such as TightVNC. Alternatively, you can use a Docker container with a GUI. We have prepared a container, niuniushan/screenagent-env. You can use the following command to pull and start this container:

docker run -d --name ScreenAgent -e RESOLUTION=1024x768 -p 5900:5900 -p 8001:8001 -e VNC_PASSWORD=<VNC_PASSWORD> -e CLIPBOARD_SERVER_SECRET_TOKEN=<CLIPBOARD_SERVER_SECRET_TOKEN> -v /dev/shm:/dev/shm niuniushan/screenagent-env:latest

Replace <VNC_PASSWORD> with your new VNC password, and <CLIPBOARD_SERVER_SECRET_TOKEN> with your clipboard service password. Keyboard input of long strings of text or Unicode characters relies on the clipboard. If the clipboard service is not enabled, you can only enter ASCII strings by pressing keys in sequence, and you cannot enter Chinese or other Unicode characters. This image already contains a clipboard service, which listens on port 8001 by default. You need to set a password to protect your clipboard service. niuniushan/screenagent-env is built on fcwu/docker-ubuntu-vnc-desktop. You can find more information about this image here.

If you want to use an existing desktop environment, such as Windows, Linux Desktop, or any other desktop environment, you need to run any VNC Server and note its IP address and port number. If you want to enable the clipboard service, please perform the following steps in your desktop environment:

# Install dependencies

pip install fastapi pydantic uvicorn pyperclip

# Set password in environment variable

export CLIPBOARD_SERVER_SECRET_TOKEN=<CLIPBOARD_SERVER_SECRET_TOKEN>

# Start clipboard service

python client/clipboard_server.py

clipboard_server.py will listen on port 8001 and receive the (text) instruction for keyboard input of long strings of text from the controller.

With the clipboard service running, you can test whether it works properly, for example:

curl --location 'http://localhost:8001/clipboard' \
--header 'Content-Type: application/json' \
--data '{
"text":"Hello world",
"token":"<CLIPBOARD_SERVER_SECRET_TOKEN>"
}'

If it works correctly, you will receive a response of {"success": True, "message": "Text copied to clipboard"}.
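The same check can be made from Python using only the standard library. This is a sketch that mirrors the curl call above: the `/clipboard` path and the `text` and `token` fields come from that example, while the function names are hypothetical and not part of the project.

```python
import json
import urllib.request


def build_clipboard_request(text, token, base_url="http://localhost:8001"):
    """Build (but do not send) the POST request for the clipboard service."""
    payload = json.dumps({"text": text, "token": token}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/clipboard",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_to_clipboard(text, token, base_url="http://localhost:8001", timeout=5.0):
    """Send the text to a running clipboard service and return the parsed reply."""
    request = build_clipboard_request(text, token, base_url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Calling `send_to_clipboard("Hello world", "<CLIPBOARD_SERVER_SECRET_TOKEN>")` requires the service to be running. Building the request in a separate function lets you inspect it without a network connection.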

If you encounter an error "Pyperclip could not find a copy/paste mechanism for your system.", please add an environment variable specifying the X server location before running python client/clipboard_server.py:

export DISPLAY=:0.0

Please adjust according to your system environment. If you still encounter errors, please refer to here.

Please fill in the above information in the remote_vnc_server item of the configuration file client/config.yml.

Next, run the controller, which has three responsibilities. First, it connects to the VNC Server, collects screenshots, and sends mouse and keyboard commands. Second, it maintains an internal state machine that implements the automatic control process of planning, action, and reflection, guiding the agent to interact continuously with the environment. Third, it constructs complete prompts from the prompt word templates, sends them to the large model inference API, and parses the control commands in the model's reply. The controller is a PyQt5-based program, so you need to install some dependencies:

pip install -r client/requirements.txt

Then choose a VLM as the Agent. We provide inferencers for four models in model_workers: GPT-4V, LLaVA-1.5, CogAgent, and ScreenAgent. You can also implement an inferencer yourself or use a third-party API; refer to the code in client/interface_api to implement a new API call interface.

Please refer to the llm_api part of client/config.yml to prepare the configuration file, and keep only one model under llm_api.

llm_api:
  # Select ONE of the following models to use:
  GPT4V:
    model_name: "gpt-4-vision-preview"
    openai_api_key: "<YOUR-OPENAI-API-KEY>"
    target_url: "https://api.openai.com/v1/chat/completions"
  LLaVA:
    model_name: "LLaVA-1.5"
    target_url: "http://localhost:40000/worker_generate"
  CogAgent:
    target_url: "http://localhost:40000/worker_generate"
  ScreenAgent:
    target_url: "http://localhost:40000/worker_generate"
  # Common settings for all models
  temperature: 1.0
  top_p: 0.9
  max_tokens: 500
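A quick way to catch a configuration that still lists several models is to check the parsed llm_api mapping before starting the controller. The sketch below assumes the YAML has already been loaded into a Python dict and that the common settings sit under llm_api next to the model entries. `select_model` and `COMMON_KEYS` are hypothetical names, and the controller itself may read the file differently.

```python
# Keys treated as shared settings rather than model entries (assumed from
# the template above).
COMMON_KEYS = {"temperature", "top_p", "max_tokens"}


def select_model(llm_api):
    """Split a parsed `llm_api` mapping into (model_name, model_cfg, common).

    Raises ValueError unless exactly one model entry is present.
    """
    models = {k: v for k, v in llm_api.items() if k not in COMMON_KEYS}
    common = {k: v for k, v in llm_api.items() if k in COMMON_KEYS}
    if len(models) != 1:
        raise ValueError(
            f"expected exactly one model under llm_api, found {sorted(models)}"
        )
    name, cfg = next(iter(models.items()))
    return name, cfg, common
```

For example, a mapping that contains only the ScreenAgent entry plus the three common settings returns `"ScreenAgent"`, its `target_url`, and the sampling parameters. A mapping that still contains both GPT4V and LLaVA raises an error.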

To use GPT-4V, set llm_api to GPT4V in client/config.yml and fill in your OpenAI API key. Keep an eye on your account balance.

To use LLaVA-1.5, refer to the LLaVA project to download and prepare the model, for example:

git clone https://github.com/haotian-liu/LLaVA.git

cd LLaVA

conda create -n llava python=3.10 -y

conda activate llava

pip install --upgrade pip # enable PEP 660 support

pip install -e .

model_workers/llava_model_worker.py provides a non-streaming output inferencer for LLaVA-1.5. You can copy it to llava/serve/model_worker and start it with the following command:

cd llava

python -m llava.serve.llava_model_worker --host 0.0.0.0 --port 40000 --worker http://localhost:40000 --model-path liuhaotian/llava-v1.5-13b --no-register

Please refer to the CogVLM project to download and prepare the CogAgent model. Download the sat version of the CogAgent weights cogagent-chat.zip from here, unzip it and place it in the train/saved_models/cogagent-chat directory.

train/cogagent_model_worker.py provides a non-streaming output inferencer for CogAgent. You can start it with the following command:

cd train

RANK=0 WORLD_SIZE=1 LOCAL_RANK=0 python ./cogagent_model_worker.py --host 0.0.0.0 --port 40000 --from_pretrained "saved_models/cogagent-chat" --bf16 --max_length 2048

ScreenAgent is trained based on CogAgent. Download the sat format weight file ScreenAgent-2312.zip from here, unzip it and place it in train/saved_models/ScreenAgent-2312. You can start it with the following command:

cd train

RANK=0 WORLD_SIZE=1 LOCAL_RANK=0 python ./cogagent_model_worker.py --host 0.0.0.0 --port 40000 --from_pretrained "./saved_models/ScreenAgent-2312" --bf16 --max_length 2048

After the preparation is complete, you can run the controller:

cd client

python run_controller.py -c config.yml

The controller interface is as follows. You need to double-click to select a task from the left side first, then press the "Start Automation" button. The controller will automatically run according to the plan-action-reflection process. The controller will collect the current screen image, fill in the prompt word template, send the image and complete prompt words to the large model inferencer, parse the reply from the large model inferencer, send mouse and keyboard control commands to the VNC Server, and repeat the process.
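One iteration of that loop can be sketched with the five steps passed in as callables. This is only an outline of the data flow described above. `run_step` and its parameter names are hypothetical, and the real controller wires these steps into its PyQt5 interface and state machine.

```python
from typing import Any, Callable, List


def run_step(
    capture_screen: Callable[[], Any],
    build_prompt: Callable[[], str],
    query_model: Callable[[Any, str], str],
    parse_reply: Callable[[str], List[Any]],
    send_action: Callable[[Any], None],
) -> List[Any]:
    """Run one capture, prompt, infer, parse, act cycle and return the actions sent."""
    image = capture_screen()            # current screen image from the VNC Server
    prompt = build_prompt()             # filled-in prompt word template
    reply = query_model(image, prompt)  # reply text from the model inferencer
    actions = parse_reply(reply)        # mouse and keyboard commands in the reply
    for action in actions:
        send_action(action)             # forward each command to the VNC Server
    return actions
```

Passing the steps in as functions keeps the loop independent of any particular model or VNC library, so a different inferencer can be swapped in by changing only `query_model`.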

If the screen is stuck, try pressing the "Re-connect" button. The controller will try to reconnect to the VNC Server.

All datasets and dataset processing code are in the data directory. We used three existing datasets: COCO2014, Rico & widget-caption, and Mind2Web.

We used COCO 2014 validation images as the training dataset for visual positioning capabilities. You can download COCO 2014 train images from here. The annotation information we used here is refcoco, split by unc.

├── COCO
    ├── prompts # Prompt word templates for training the Agent's visual positioning capabilities
    ├── train2014 # COCO 2014 train
    └── annotations # COCO 2014 annotations

Rico is a dataset that contains a large number of screenshots and widget information of Android applications. You can download the "1. UI Screenshots and View Hierarchies (6 GB)" part of the Rico dataset from here. The file name is unique_uis.tar.gz. Please put the unzipped combined folder in the data/Rico directory.

widget-caption is an annotation of widget information based on Rico. Please clone the https://github.com/google-research-datasets/widget-caption project under data/Rico.

The final directory structure is as follows:

├── Rico
    ├── prompts # Prompt word templates for training the Agent's visual positioning capabilities
    ├── combined # Rico dataset screenshots
    └── widget-caption
        ├── split
        │   ├── dev.txt
        │   ├── test.txt
        │   └── train.txt
        └── widget_captions.csv

Mind2Web is a web browsing dataset collected on real websites. You need to download the original dataset and process it. First, use the globus tool to download the original web screenshots from here. The folder is named raw_dump and should be placed in the data/Mind2Web/raw_dump directory. Then use the following command to process the dataset:

cd data/Mind2Web

python convert_dataset.py

This code will download the processed form of the osunlp/Mind2Web dataset from huggingface datasets, so make sure you have a stable network connection. This step also translates the English instructions into Chinese, so you need to call your own translation API in data/Mind2Web/translate.py.

The directory structure is as follows:

├── Mind2Web
    ├── convert_dataset.py
    ├── translate.py
    ├── prompts # Prompt word templates for training the Agent's web browsing capabilities
    ├── raw_dump # Mind2Web raw_dump downloaded from globus
    └── processed_dataset # Created by convert_dataset.py

ScreenAgent is the dataset annotated in this paper, divided into training and testing sets. The directory structure is as follows:

├── data
    ├── ScreenAgent
        ├── train
        │   ├── <session id>
        │   │   ├── images
        │   │   │   ├── <timestamp-1>.jpg
        │   │   │   └── ...
        │   │   ├── <timestamp-1>.json
        │   │   └── ...
        │   ├── ...
        └── test

The meaning of each field in the json file:

- session_id: Session ID

- task_prompt: Overall goal of the task

- task_prompt_en: Overall goal of the task (En)

- task_prompt_zh: Overall goal of the task (Zh)

- send_prompt: Complete prompt sent to the model

- send_prompt_en: Complete prompt sent to the model (En)

- send_prompt_zh: Complete prompt sent to the model (Zh)

- LLM_response: Original reply text given by the model, i.e., the rejected response in RLHF

- LLM_response_editer: Reply text after manual correction, i.e., the chosen response in RLHF

- LLM_response_editer_en: Reply text after manual correction (En)

- LLM_response_editer_zh: Reply text after manual correction (Zh)

- video_height, video_width: Height and width of the image

- saved_image_name: Screenshot filename, under each session's images folder

- actions: Action sequence parsed from LLM_response_editer
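Given the directory layout and fields above, the records can be read with the standard library alone. The sketch below walks one split directory, pairs each JSON record with its screenshot, and turns a record into a chosen/rejected pair. `iter_records` and `to_preference_pair` are hypothetical helper names, and the code assumes every JSON file holds a single record with the fields listed above.

```python
import json
from pathlib import Path


def iter_records(split_dir):
    """Yield (record, image_path) for each JSON file under a split directory.

    Expects the layout <split_dir>/<session id>/<timestamp>.json with
    screenshots in <split_dir>/<session id>/images/.
    """
    for session_dir in sorted(Path(split_dir).iterdir()):
        if not session_dir.is_dir():
            continue
        for json_path in sorted(session_dir.glob("*.json")):
            record = json.loads(json_path.read_text(encoding="utf-8"))
            image_path = session_dir / "images" / record["saved_image_name"]
            yield record, image_path


def to_preference_pair(record):
    """Map a record to a prompt with its corrected and original replies."""
    return {
        "prompt": record["send_prompt"],
        "chosen": record["LLM_response_editer"],  # manually corrected reply
        "rejected": record["LLM_response"],       # original model reply
    }
```

For example, `for record, image_path in iter_records("data/ScreenAgent/train")` visits every annotated step of the training split.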

If you want to train your own model, or reproduce the ScreenAgent model, please prepare the above datasets first, and check all dataset paths in the train/dataset/mixture_dataset.py file. If you only want to use part of the datasets or add new datasets, please modify the make_supervised_data_module function in train/dataset/mixture_dataset.py. Please download the sat version of the CogAgent weights cogagent-chat.zip from here, unzip it and place it in the train/saved_models/ directory.

You need to pay attention to and check the following files:

train
├── data -> ../data
├── dataset
│   └── mixture_dataset.py
├── finetune_ScreenAgent.sh
└── saved_models
    └── cogagent-chat # unzip cogagent-chat.zip
        ├── 1
        │   └── mp_rank_00_model_states.pt
        ├── latest
        └── model_config.json

Please modify the parameters in train/finetune_ScreenAgent.sh according to your device situation, then run:

cd train

bash finetune_ScreenAgent.sh

Finally, if you want to merge the weights from sat distributed training into a single weight file, please refer to the train/merge_model.sh code. Make sure that the model parallelism size MP_SIZE in this file matches WORLD_SIZE in train/finetune_ScreenAgent.sh. Change the parameter after --from-pretrained to the checkpoint location stored during training. The merged weight file will be saved in the train/saved_models/merged_model folder.

- [ ] Provide huggingface transformers weights.

- [ ] Simplify the design of the controller, provide a no render mode.

- [ ] Integrate Gym.

- [ ] Add skill libraries to support more complex function calls.

- Mobile-Agent: Autonomous Multi-Modal Mobile Device Agent with Visual Perception http://arxiv.org/abs/2401.16158

- UFO: A UI-Focused Agent for Windows OS Interaction http://arxiv.org/abs/2402.07939

- ScreenAI: A Vision-Language Model for UI and Infographics Understanding http://arxiv.org/abs/2402.04615

- AppAgent: Multimodal Agents as Smartphone Users http://arxiv.org/abs/2312.13771

- CogAgent: A Visual Language Model for GUI Agents http://arxiv.org/abs/2312.08914

- Screen2Words: Automatic Mobile UI Summarization with Multimodal Learning http://arxiv.org/abs/2108.03353

- A Real-World WebAgent with Planning, Long Context Understanding, and Program Synthesis http://arxiv.org/abs/2307.12856

- Comprehensive Cognitive LLM Agent for Smartphone GUI Automation http://arxiv.org/abs/2402.11941

- Towards General Computer Control: A Multimodal Agent for Red Dead Redemption II as a Case Study https://arxiv.org/abs/2403.03186

- Scaling Instructable Agents Across Many Simulated Worlds link to technical report

- Android in the Zoo: Chain-of-Action-Thought for GUI Agents http://arxiv.org/abs/2403.02713

- OmniACT: A Dataset and Benchmark for Enabling Multimodal Generalist Autonomous Agents for Desktop and Web http://arxiv.org/abs/2402.17553

- Improving Language Understanding from Screenshots http://arxiv.org/abs/2402.14073

- AndroidEnv: A Reinforcement Learning Platform for Android http://arxiv.org/abs/2105.13231

- SeeClick: Harnessing GUI Grounding for Advanced Visual GUI Agents https://arxiv.org/abs/2401.10935

- AgentStudio: A Toolkit for Building General Virtual Agents https://arxiv.org/abs/2403.17918

- ReALM: Reference Resolution As Language Modeling https://arxiv.org/abs/2403.20329

- AutoWebGLM: Bootstrap And Reinforce A Large Language Model-based Web Navigating Agent https://arxiv.org/abs/2404.03648

- Octopus v2: On-device language model for super agent https://arxiv.org/pdf/2404.01744.pdf

- Mobile-Env: An Evaluation Platform and Benchmark for LLM-GUI Interaction https://arxiv.org/abs/2305.08144

- OSWorld: Benchmarking Multimodal Agents for Open-Ended Tasks in Real Computer Environments https://arxiv.org/abs/2404.07972

- Ferret-UI: Grounded Mobile UI Understanding with Multimodal LLMs https://arxiv.org/abs/2404.05719

- VisualWebBench: How Far Have Multimodal LLMs Evolved in Web Page Understanding and Grounding? https://arxiv.org/abs/2404.05955

- OS-Copilot: Towards Generalist Computer Agents with Self-Improvement https://arxiv.org/abs/2402.07456

@article{niu2024screenagent,
  title={ScreenAgent: A Vision Language Model-driven Computer Control Agent},
  author={Runliang Niu and Jindong Li and Shiqi Wang and Yali Fu and Xiyu Hu and Xueyuan Leng and He Kong and Yi Chang and Qi Wang},
  year={2024},
  eprint={2402.07945},
  archivePrefix={arXiv},
  primaryClass={cs.HC}
}

Code License: MIT

Dataset License: Apache-2.0

Model License: The CogVLM License
