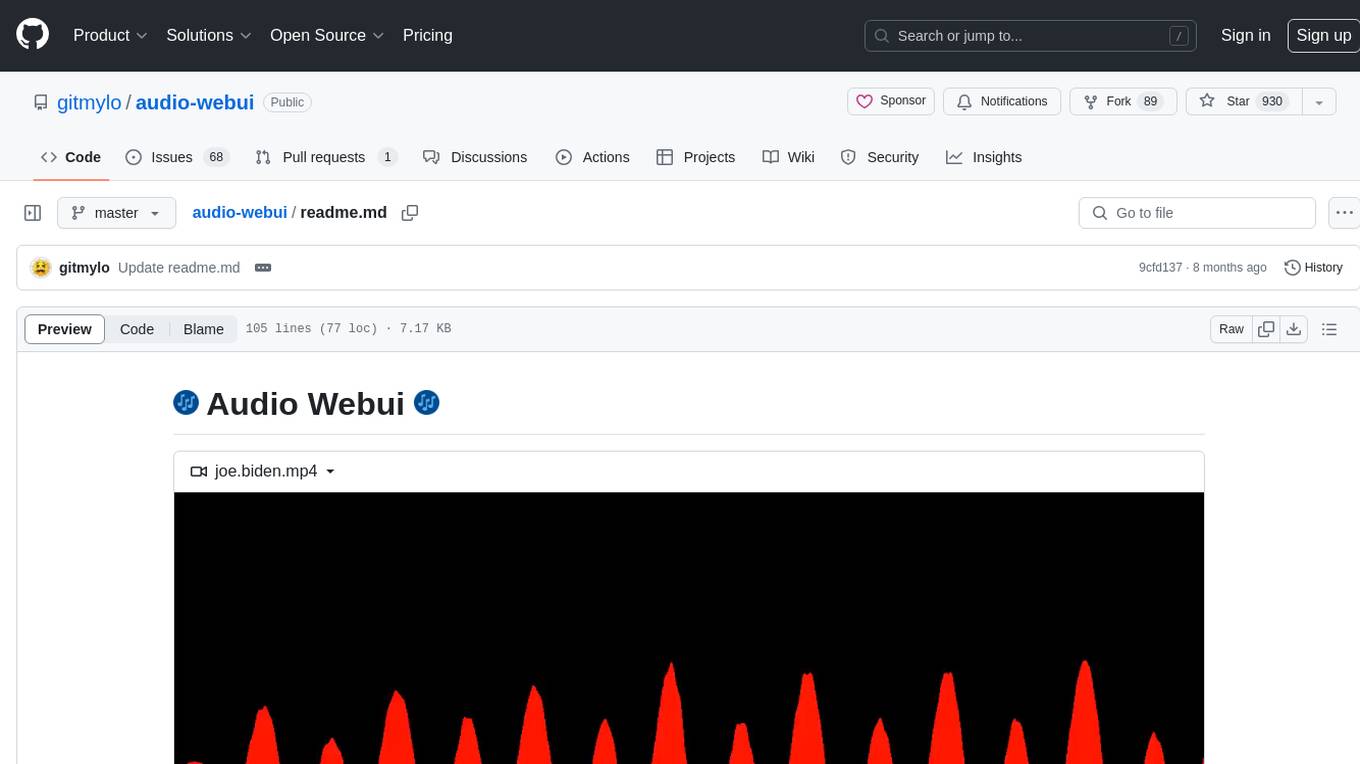

audio-webui

A webui for different audio related Neural Networks

Stars: 930

Audio Webui is a tool designed to provide a user-friendly interface for audio processing tasks. It supports automatic installers, Docker deployment, local manual installation, Google Colab integration, and common command line flags. Users can easily download, install, update, and run the tool for various audio-related tasks. The tool requires Python 3.10, Git, and ffmpeg for certain features. It also offers extensions for additional functionalities.

README:

https://github.com/gitmylo/audio-webui/assets/36931363/c285b4dc-63cf-4b1c-895d-9723a2cbf91e

This code works on python 3.10 (lower versions don't support "|" type annotations, and i believe 3.11 doesn't have support for the TTS library currently).

You also need to have Git installed, you might already have it, run git --version in a console/terminal to see if you already have it installed.

Some features require ffmpeg to be installed.

On Windows, you need to have visual studio C++ build tools installed.

- Extensions

Automatic installers! (Download)

- Put the installer in a folder

- Run the installer for your operating system.

- Now run the webui's install script. Follow the steps at 📦 Installing

Links to community audio-webui docker projects

- https://github.com/LajaSoft/audio-webui-docker (Docker compose which downloads jacen92's fork)

- https://github.com/jacen92/audio-webui-docker (Fork of audio-webui which includes docker compose)

It is recommended to use git to download the webui, using git allows for easy updating.

To download using git, run git clone https://github.com/gitmylo/audio-webui in a console/terminal

Installation is done automatically in a venv when you run run.bat or run.sh (.bat on Windows, .sh on Linux/MacOS).

To update,

run update.bat on windows, update.sh on linux/macos

OR run git pull in the folder your webui is installed in.

Running should be as simple as running run.bat or run.sh depending on your OS.

Everything should get installed automatically.

If there's an issue with running, please create an issue

| Name | Args | Short | Usage | Description |

|---|---|---|---|---|

| --skip-install | [None] | -si | -si | Skip installing packages |

| --skip-venv | [None] | -sv | -sv | Skip creating/activating venv, also skips install. (for advanced users) |

| --no-data-cache | [None] | [None] | --no-data-cache | Don't change the default dir for huggingface_hub models. (This might fix some models not loading) |

| --launch | [None] | [None] | --launch | Automatically open the webui in your browser once it launches. |

| --share | [None] | -s | -s | Share the gradio instance publicly |

| --username | username (str) | -u, --user | -u username | Set the username for gradio |

| --password | password (str) | -p, --pass | -p password | Set the password for gradio |

| --theme | theme (str) | [None] | --theme "gradio/soft" | Set the theme for gradio |

| --listen | [None] | -l | -l | Listen a server, allowing other devices within your local network to access the server. (or outside if port forwarded) |

| --port | port (int) | [None] | --port 12345 | Set a custom port to listen on, by default a port is picked automatically |

moved to a separate readme

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for audio-webui

Similar Open Source Tools

audio-webui

Audio Webui is a tool designed to provide a user-friendly interface for audio processing tasks. It supports automatic installers, Docker deployment, local manual installation, Google Colab integration, and common command line flags. Users can easily download, install, update, and run the tool for various audio-related tasks. The tool requires Python 3.10, Git, and ffmpeg for certain features. It also offers extensions for additional functionalities.

palico-ai

Palico AI is a tech stack designed for rapid iteration of LLM applications. It allows users to preview changes instantly, improve performance through experiments, debug issues with logs and tracing, deploy applications behind a REST API, and manage applications with a UI control panel. Users have complete flexibility in building their applications with Palico, integrating with various tools and libraries. The tool enables users to swap models, prompts, and logic easily using AppConfig. It also facilitates performance improvement through experiments and provides options for deploying applications to cloud providers or using managed hosting. Contributions to the project are welcomed, with easy ways to get involved by picking issues labeled as 'good first issue'.

floneum

Floneum is a graph editor that makes it easy to develop your own AI workflows. It uses large language models (LLMs) to run AI models locally, without any external dependencies or even a GPU. This makes it easy to use LLMs with your own data, without worrying about privacy. Floneum also has a plugin system that allows you to improve the performance of LLMs and make them work better for your specific use case. Plugins can be used in any language that supports web assembly, and they can control the output of LLMs with a process similar to JSONformer or guidance.

auto-dev

AutoDev is an AI-powered coding wizard that supports multiple languages, including Java, Kotlin, JavaScript/TypeScript, Rust, Python, Golang, C/C++/OC, and more. It offers a range of features, including auto development mode, copilot mode, chat with AI, customization options, SDLC support, custom AI agent integration, and language features such as language support, extensions, and a DevIns language for AI agent development. AutoDev is designed to assist developers with tasks such as auto code generation, bug detection, code explanation, exception tracing, commit message generation, code review content generation, smart refactoring, Dockerfile generation, CI/CD config file generation, and custom shell/command generation. It also provides a built-in LLM fine-tune model and supports UnitEval for LLM result evaluation and UnitGen for code-LLM fine-tune data generation.

OpenAiTx

OpenAiTx is a language auto-translate tool designed for GitHub project readme & wiki. It uses premium-grade LLM for one-time translation and makes the results freely accessible to the open-source community. The tool supports Google/Bing multiple languages SEO search, which regular client translate tools cannot do. It is free and open source forever, allowing project maintainers to save time by submitting once and auto-updating in the future. OpenAiTx provides users with different style options for language display badges or text, making it easy to integrate into readme files. Contributors can participate by forking the project, cloning it, choosing a script in their preferred language, filling in their AI token, running it, and creating a pull request. It is important not to upload personal tokens for security reasons. The tool is specifically designed for GitHub markdown and aims to help users translate their projects efficiently.

beeai-framework

BeeAI Framework is a versatile tool for building production-ready multi-agent systems. It offers flexibility in orchestrating agents, seamless integration with various models and tools, and production-grade controls for scaling. The framework supports Python and TypeScript libraries, enabling users to implement simple to complex multi-agent patterns, connect with AI services, and optimize token usage and resource management.

openlit

OpenLIT is an OpenTelemetry-native GenAI and LLM Application Observability tool. It's designed to make the integration process of observability into GenAI projects as easy as pie – literally, with just **a single line of code**. Whether you're working with popular LLM Libraries such as OpenAI and HuggingFace or leveraging vector databases like ChromaDB, OpenLIT ensures your applications are monitored seamlessly, providing critical insights to improve performance and reliability.

EvoAgentX

EvoAgentX is an open-source framework for building, evaluating, and evolving LLM-based agents or agentic workflows in an automated, modular, and goal-driven manner. It enables developers and researchers to move beyond static prompt chaining or manual workflow orchestration by introducing a self-evolving agent ecosystem. The framework includes features such as agent workflow autoconstruction, built-in evaluation, self-evolution engine, plug-and-play compatibility, comprehensive built-in tools, memory module support, and human-in-the-loop interactions.

pr-agent

PR-Agent is a tool that helps to efficiently review and handle pull requests by providing AI feedbacks and suggestions. It supports various commands such as generating PR descriptions, providing code suggestions, answering questions about the PR, and updating the CHANGELOG.md file. PR-Agent can be used via CLI, GitHub Action, GitHub App, Docker, and supports multiple git providers and models. It emphasizes real-life practical usage, with each tool having a single GPT-4 call for quick and affordable responses. The PR Compression strategy enables effective handling of both short and long PRs, while the JSON prompting strategy allows for modular and customizable tools. PR-Agent Pro, the hosted version by CodiumAI, provides additional benefits such as full management, improved privacy, priority support, and extra features.

outspeed

Outspeed is a PyTorch-inspired SDK for building real-time AI applications on voice and video input. It offers low-latency processing of streaming audio and video, an intuitive API familiar to PyTorch users, flexible integration of custom AI models, and tools for data preprocessing and model deployment. Ideal for developing voice assistants, video analytics, and other real-time AI applications processing audio-visual data.

cocoindex

CocoIndex is the world's first open-source engine that supports both custom transformation logic and incremental updates specialized for data indexing. Users declare the transformation, CocoIndex creates & maintains an index, and keeps the derived index up to date based on source update, with minimal computation and changes. It provides a Python library for data indexing with features like text embedding, code embedding, PDF parsing, and more. The tool is designed to simplify the process of indexing data for semantic search and structured information extraction.

MooER

MooER (摩耳) is an LLM-based speech recognition and translation model developed by Moore Threads. It allows users to transcribe speech into text (ASR) and translate speech into other languages (AST) in an end-to-end manner. The model was trained using 5K hours of data and is now also available with an 80K hours version. MooER is the first LLM-based speech model trained and inferred using domestic GPUs. The repository includes pretrained models, inference code, and a Gradio demo for a better user experience.

pipeline

Pipeline is a Python library designed for constructing computational flows for AI/ML models. It supports both development and production environments, offering capabilities for inference, training, and finetuning. The library serves as an interface to Mystic, enabling the execution of pipelines at scale and on enterprise GPUs. Users can also utilize this SDK with Pipeline Core on a private hosted cluster. The syntax for defining AI/ML pipelines is reminiscent of sessions in Tensorflow v1 and Flows in Prefect.

opik

Comet Opik is a repository containing two main services: a frontend and a backend. It provides a Python SDK for easy installation. Users can run the full application locally with minikube, following specific installation prerequisites. The repository structure includes directories for applications like Opik backend, with detailed instructions available in the README files. Users can manage the installation using simple k8s commands and interact with the application via URLs for checking the running application and API documentation. The repository aims to facilitate local development and testing of Opik using Kubernetes technology.

computer

Cua is a tool for creating and running high-performance macOS and Linux VMs on Apple Silicon, with built-in support for AI agents. It provides libraries like Lume for running VMs with near-native performance, Computer for interacting with sandboxes, and Agent for running agentic workflows. Users can refer to the documentation for onboarding and explore demos showcasing the tool's capabilities. Additionally, accessory libraries like Core, PyLume, Computer Server, and SOM offer additional functionality. Contributions to Cua are welcome, and the tool is open-sourced under the MIT License.

Open-Interface

Open Interface is a self-driving software that automates computer tasks by sending user requests to a language model backend (e.g., GPT-4V) and simulating keyboard and mouse inputs to execute the steps. It course-corrects by sending current screenshots to the language models. The tool supports MacOS, Linux, and Windows, and requires setting up the OpenAI API key for access to GPT-4V. It can automate tasks like creating meal plans, setting up custom language model backends, and more. Open Interface is currently not efficient in accurate spatial reasoning, tracking itself in tabular contexts, and navigating complex GUI-rich applications. Future improvements aim to enhance the tool's capabilities with better models trained on video walkthroughs. The tool is cost-effective, with user requests priced between $0.05 - $0.20, and offers features like interrupting the app and primary display visibility in multi-monitor setups.

For similar tasks

audio-webui

Audio Webui is a tool designed to provide a user-friendly interface for audio processing tasks. It supports automatic installers, Docker deployment, local manual installation, Google Colab integration, and common command line flags. Users can easily download, install, update, and run the tool for various audio-related tasks. The tool requires Python 3.10, Git, and ffmpeg for certain features. It also offers extensions for additional functionalities.

Easy-Voice-Toolkit

Easy Voice Toolkit is a toolkit based on open source voice projects, providing automated audio tools including speech model training. Users can seamlessly integrate functions like audio processing, voice recognition, voice transcription, dataset creation, model training, and voice conversion to transform raw audio files into ideal speech models. The toolkit supports multiple languages and is currently only compatible with Windows systems. It acknowledges the contributions of various projects and offers local deployment options for both users and developers. Additionally, cloud deployment on Google Colab is available. The toolkit has been tested on Windows OS devices and includes a FAQ section and terms of use for academic exchange purposes.

Audio-Upscaler

Audio Upscaler (AudioSR) is a powerful tool designed to enhance the fidelity of audio files, regardless of type or sampling rates. It leverages cutting-edge super-resolution techniques to upscale audio signals, resulting in superior quality output. The tool is versatile, handling all types of audio content, easy to use with a simple interface, and ensures high fidelity output with enhanced clarity and detail.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features: * Self-contained, with no need for a DBMS or cloud service. * OpenAPI interface, easy to integrate with existing infrastructure (e.g Cloud IDE). * Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.