Best AI tools for < Persist Http Responses >

6 - AI tool Sites

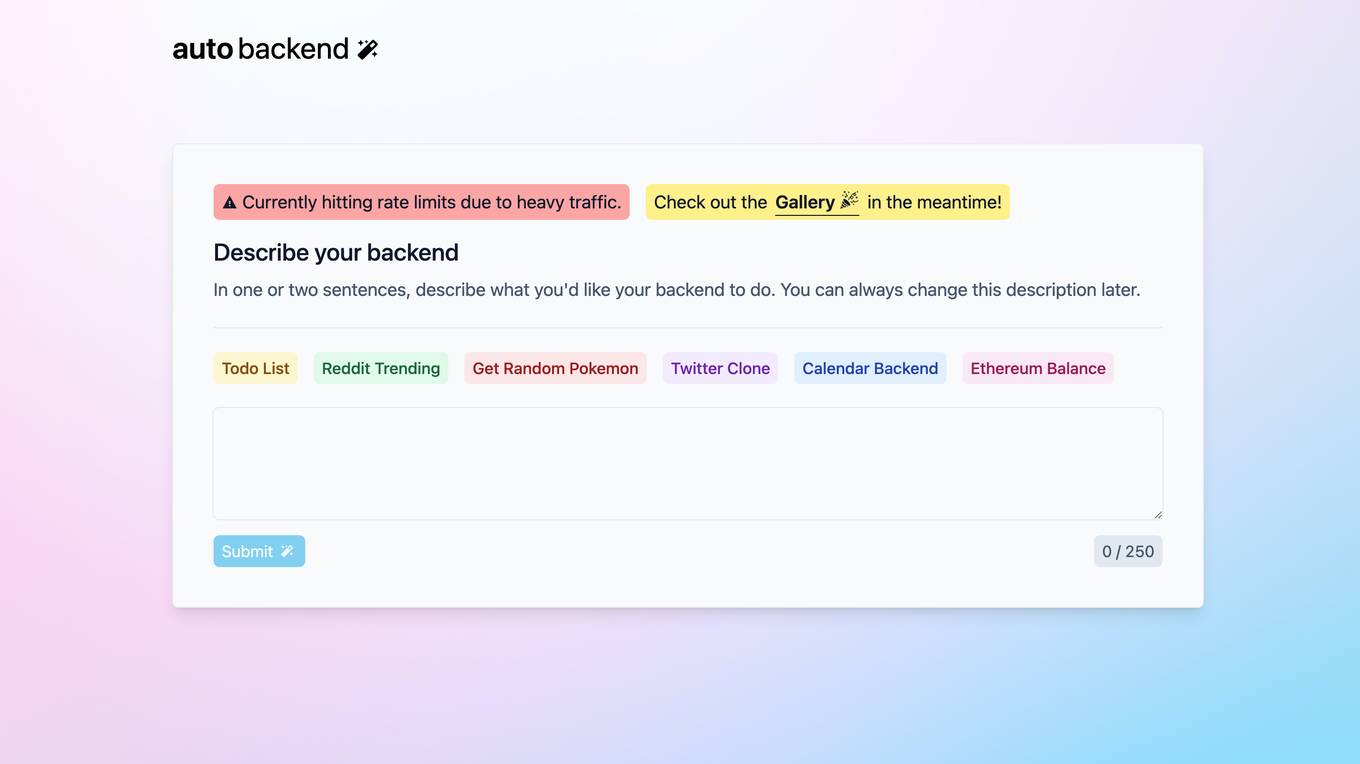

autobackend.dev

autobackend.dev is currently unavailable: the page does not load and shows an HTTP error message. Users who encounter this are advised to reload the page and to contact the site owner if the problem persists.

ai2page.com

ai2page.com is currently unavailable: the page does not load and shows an HTTP ERROR 436 message. Users who encounter this are advised to reload the page and to contact the site owner if the problem persists.

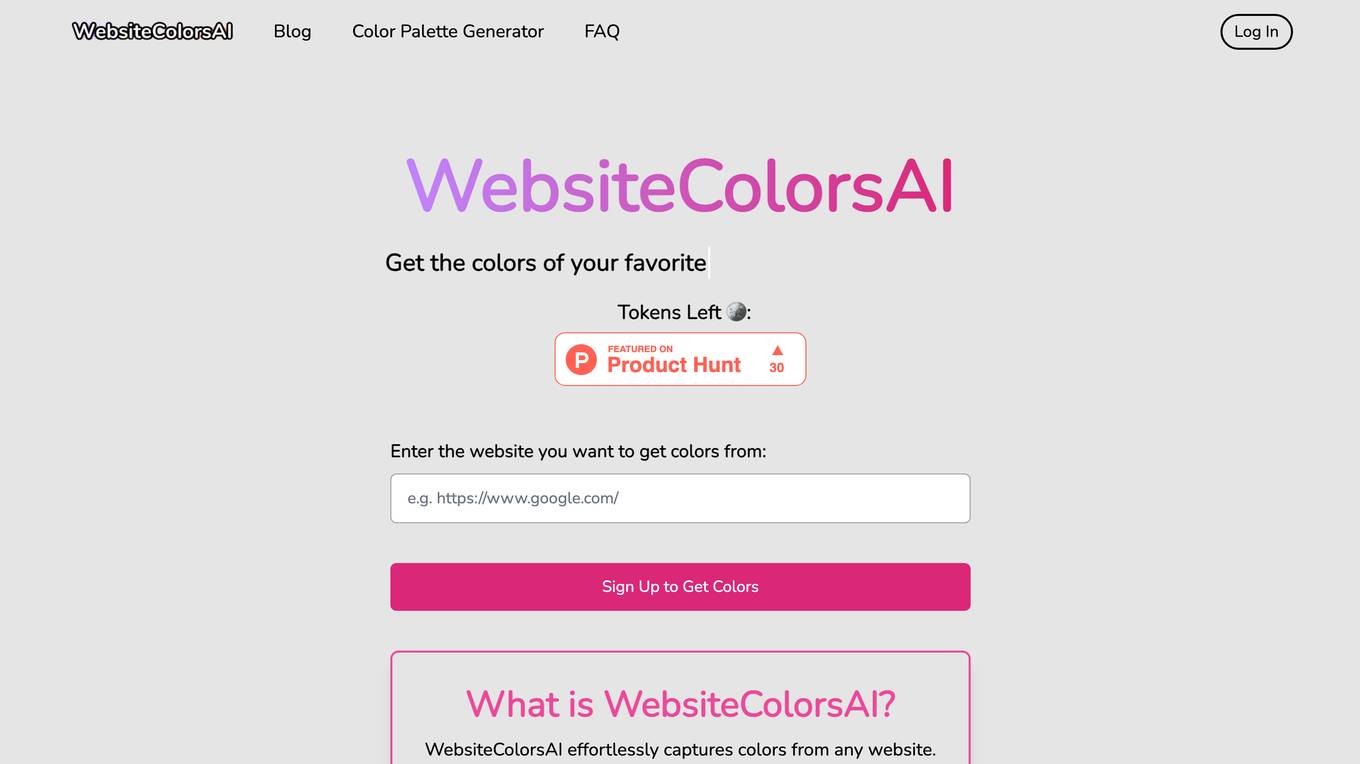

Websitecolorsai

Websitecolorsai.com is currently unavailable: the page does not load and shows an HTTP ERROR 436 message. Users who encounter this are advised to reload the page and to contact the site owner if the problem persists.

Dachi.chat

Dachi.chat is currently unavailable: the page does not load and shows an HTTP ERROR 436 message. Users who encounter this are advised to reload the page and to contact the site owner if the problem persists.

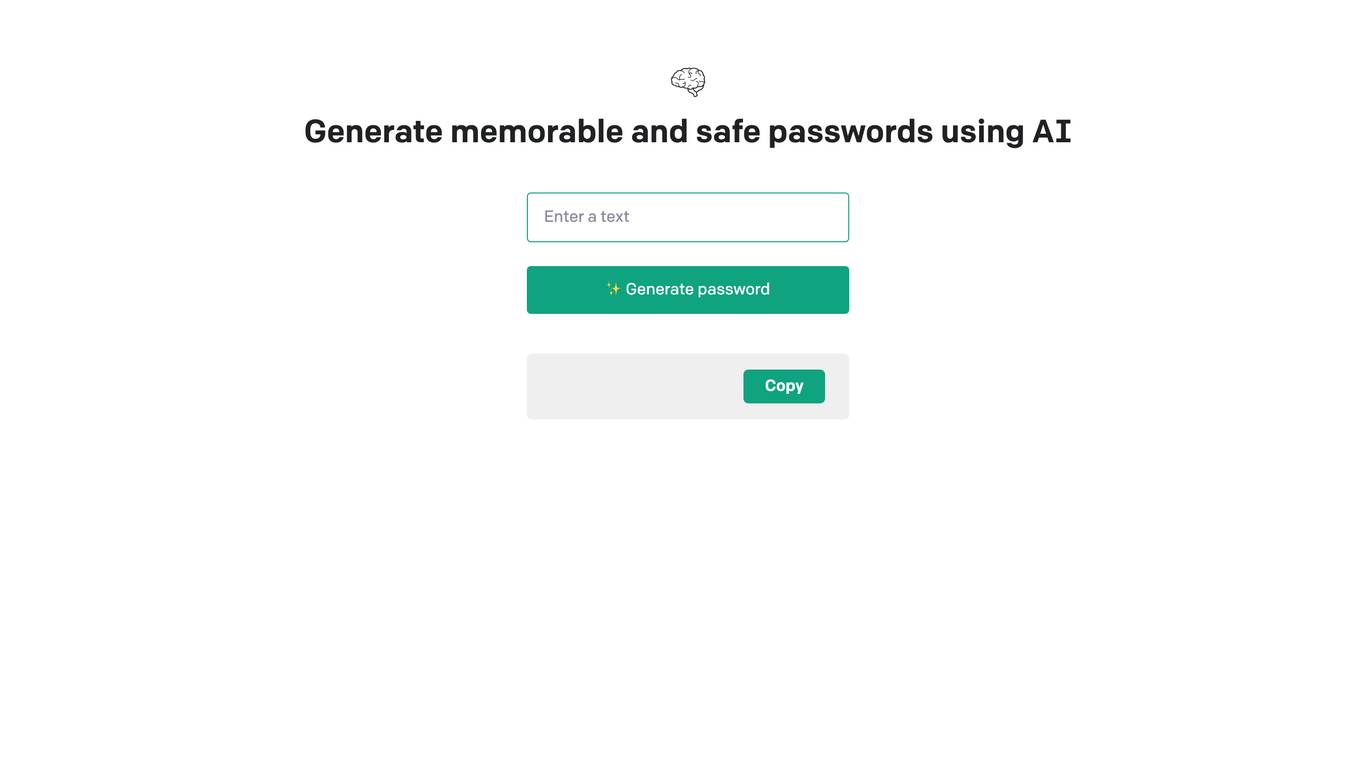

aipass.site

aipass.site is currently unreachable because of a connection timeout. Error code 522 indicates that the connection between Cloudflare's network and the origin web server timed out. Visitors are advised to wait a few minutes and try again. If the issue persists, the site owner should contact their hosting provider for assistance. The site appears to use Cloudflare for performance and security.

Avatarcraft.ai

Avatarcraft.ai is currently unreachable because of a connection issue. Error code 522 indicates a timeout between Cloudflare's network and the origin web server, so the page cannot be displayed. Visitors are advised to wait a few minutes and try again. If the issue persists, the site owner should contact their hosting provider for assistance. The error may be caused by resource constraints on the origin server. The site appears to use Cloudflare for performance and security.
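
The "wait and try again" advice for 522 errors can be automated on the client side. The following is a minimal sketch, not something any of the listed sites provide: `fetch_with_retry`, its parameters, and the injected `fetch` callable (which is assumed to return an HTTP status code) are all hypothetical names chosen for illustration.

```python
import time
from typing import Callable


def fetch_with_retry(
    fetch: Callable[[], int],
    retries: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Call `fetch` again while it keeps returning 522, up to `retries` extra times.

    `fetch` is a hypothetical callable returning an HTTP status code.
    The wait doubles after each failed attempt (exponential backoff).
    """
    status = fetch()
    for attempt in range(retries):
        if status != 522:
            break  # anything other than a 522 timeout is returned as-is
        sleep(base_delay * 2 ** attempt)
        status = fetch()
    return status
```

Passing `sleep` as a parameter keeps the function easy to exercise without real delays. In practice `base_delay` would likely be much larger, since the error pages suggest waiting a few minutes.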

1 - Open Source AI Tools

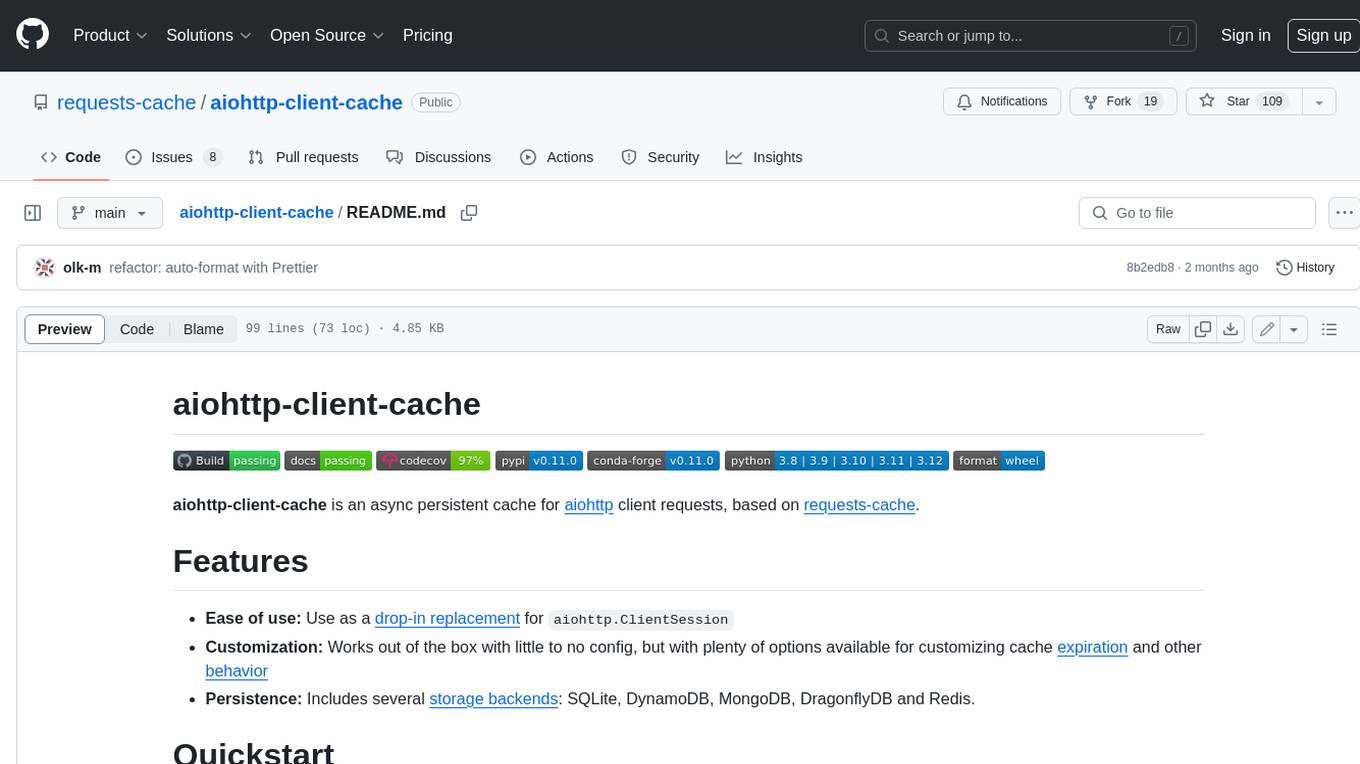

aiohttp-client-cache

aiohttp-client-cache is an asynchronous persistent cache for aiohttp client requests, based on requests-cache. It is easy to use and customizable, and it offers several storage backends, including SQLite, DynamoDB, MongoDB, DragonflyDB, and Redis.
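
To illustrate what persisting HTTP responses in a SQLite backend means, here is a minimal standard-library sketch. It is not aiohttp-client-cache's API or implementation: the `SQLiteResponseCache` class, its table layout, and the injected `fetch` callable are assumptions made for this example, and the sketch is synchronous and omits expiration.

```python
import sqlite3
from typing import Callable


class SQLiteResponseCache:
    """Hypothetical cache that stores response bodies in SQLite, keyed by URL."""

    def __init__(self, path: str = ":memory:") -> None:
        # Pass a file path instead of ":memory:" to keep entries across runs.
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS responses (url TEXT PRIMARY KEY, body BLOB)"
        )
        self.db.commit()

    def get(self, url: str, fetch: Callable[[str], bytes]) -> bytes:
        """Return the stored body for `url`, calling `fetch` only on a cache miss."""
        row = self.db.execute(
            "SELECT body FROM responses WHERE url = ?", (url,)
        ).fetchone()
        if row is not None:
            return row[0]
        body = fetch(url)
        self.db.execute(
            "INSERT INTO responses (url, body) VALUES (?, ?)", (url, body)
        )
        self.db.commit()
        return body
```

A caller would supply its own `fetch` function that performs the real request. The second lookup of the same URL is then served from the database without touching the network.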