Best AI tools for Partition Data

1 - AI Tool Sites

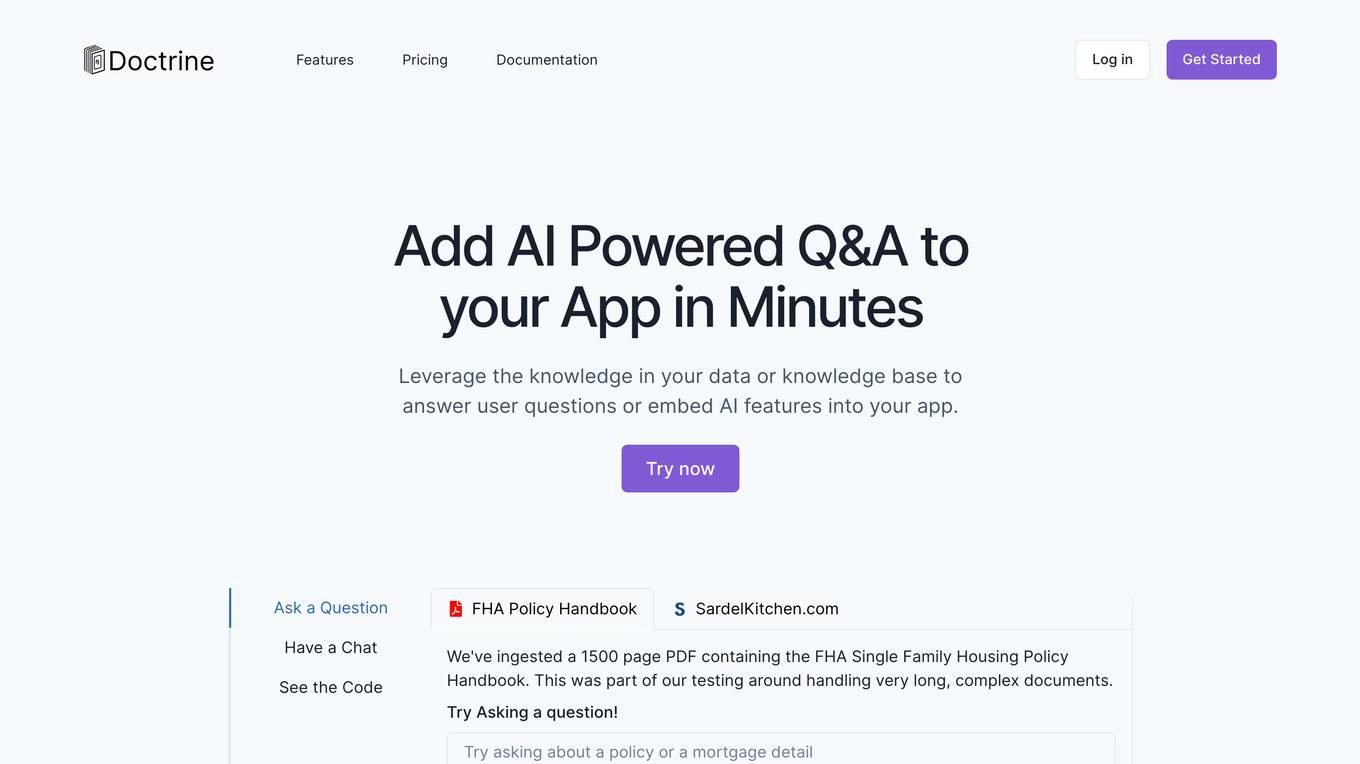

Doctrine

Doctrine is an application that lets users add AI-powered Q&A features to their apps in minutes. It draws on data or knowledge bases to answer user questions or to power embedded AI features. It can ingest content from sources such as websites, documents, and images, which simplifies knowledge extraction and makes it easier to integrate AI capabilities into applications.

site: 305

0 - Open Source AI Tools

No tools available

0 - OpenAI Gpts

No tools available