Best AI tools for extracting data from resumes

20 - AI tool Sites

Extracta.ai

Extracta.ai is a cloud-based data extraction platform that uses artificial intelligence (AI) to automatically extract data from unstructured documents. It can be used to extract data from a variety of document types, including invoices, resumes, contracts, receipts, and custom documents. Extracta.ai is easy to use and requires no training. Simply define the fields that you want to extract from your documents, upload the documents, and Extracta.ai will do the rest. Extracta.ai is a powerful tool that can help you save time and money by automating your data extraction processes.
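The "define the fields, then upload the documents" workflow can be illustrated with a small sketch. This is not Extracta.ai's API: the `FIELDS` mapping and the `extract_fields` helper are hypothetical names, and plain regular expressions stand in for the AI model that a real service would use.

```python
import re

# Hypothetical field definitions: field name -> regex with one capture group.
FIELDS = {
    "email": r"([\w.+-]+@[\w-]+\.[\w.-]+)",
    "phone": r"(\+?\d[\d\s().-]{7,}\d)",
    "name": r"^Name:\s*(.+)$",
}

def extract_fields(text, fields=FIELDS):
    """Return one entry per defined field; None when a field is not found."""
    result = {}
    for field_name, pattern in fields.items():
        match = re.search(pattern, text, flags=re.MULTILINE)
        result[field_name] = match.group(1).strip() if match else None
    return result

# A made-up resume snippet used only for illustration.
resume = """Name: Jane Doe
Email: jane.doe@example.com
Phone: +1 555 010 9999
"""
record = extract_fields(resume)
print(record)
```

The point of the design is that the caller only declares which fields are wanted; the extraction logic stays the same for every document.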

ResuMetrics

ResuMetrics is an AI-powered resume analysis tool that helps businesses automate their resume processing. With ResuMetrics, businesses can extract structured data from resumes, anonymize resumes, and automate the candidate onboarding process. ResuMetrics is easy to use and integrates with a variety of HR systems. Pricing starts with a free plan (€0 per month).
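As a rough idea of what resume anonymization involves, the sketch below masks email addresses and phone numbers with placeholders. It is an assumption-laden illustration, not ResuMetrics' method: the `anonymize` function and both patterns are hypothetical, and a production tool would also need to handle names, addresses, photos, and other identifying details.

```python
import re

# Simplified patterns chosen for illustration; real contact details vary widely.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE_RE = re.compile(r"\+?\d[\d ().-]{7,}\d")

def anonymize(text):
    """Replace email addresses and phone numbers with fixed placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text

masked = anonymize("Contact: jane@example.com, +1 555 010 9999")
print(masked)
```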

JobWizard

JobWizard is an AI-powered tool that helps job seekers autofill job applications. It uses natural language processing and machine learning to extract information from your resume and LinkedIn profile, and then automatically fills out the corresponding fields on job applications. This can save you a lot of time and hassle, and it can also help you to avoid making mistakes that could cost you the job.

YellowGoose

YellowGoose is an AI tool that offers intelligent analysis of resumes. It utilizes advanced algorithms to extract and analyze key information from resumes, helping users to streamline the recruitment process. With YellowGoose, users can quickly evaluate candidates and make informed hiring decisions based on data-driven insights.

Affinda

Affinda is a document AI platform that can read, understand, and extract data from any document type. It combines 10+ years of IP in document reconstruction with the latest advancements in computer vision, natural language processing, and deep learning. Affinda's platform can be used to automate a variety of document processing workflows, including invoice processing, receipt processing, credit note processing, purchase order processing, account statement processing, resume parsing, job description parsing, resume redaction, passport processing, birth certificate processing, and driver's license processing. Affinda's platform is used by some of the world's leading organizations, including Google, Microsoft, Amazon, and IBM.

CoverBot

CoverBot is an AI-powered tool that helps job seekers create high-quality cover letters in minutes. With CoverBot, you can simply input your resume and job description, and the tool will generate a personalized cover letter that highlights your skills and experience, and is tailored to the specific job you're applying for. CoverBot's AI technology analyzes your resume and job description to identify the most relevant keywords and phrases, and then uses this information to generate a cover letter that is both informative and persuasive.

SkillOk

SkillOk is an AI-powered resume builder that helps users create customized resumes for each job application. It extracts skills from any job description and allows users to add their own, ensuring that their resume matches the job requirements. SkillOk also uses a smart AI engine to fine-tune resumes based on targeted questions and automatically generates customized introductions based on the job description. Additionally, it provides data-driven advice and grammar checks to ensure a polished resume that stands out to employers.

Parsio

Parsio is an AI-powered document parser that can extract structured data from PDFs, emails, and other documents. It uses natural language processing to understand the context of the document and identify the relevant data points. Parsio can be used to automate a variety of tasks, such as extracting data from invoices, receipts, and emails.

Tablize

Tablize is a powerful data extraction tool that helps you turn unstructured data into structured, tabular format. With Tablize, you can easily extract data from PDFs, images, and websites, and export it to Excel, CSV, or JSON. Tablize uses artificial intelligence to automate the data extraction process, making it fast and easy to get the data you need.

FormX.ai

FormX.ai is an AI-powered data extraction and conversion tool that automates the process of extracting data from physical documents and converting it into digital formats. It supports a wide range of document types, including invoices, receipts, purchase orders, bank statements, contracts, HR forms, shipping orders, loyalty member applications, annual reports, business certificates, personnel licenses, and more. FormX.ai's pre-configured data extraction models and effortless API integration make it easy for businesses to integrate data extraction into their existing systems and workflows. With FormX.ai, businesses can save time and money on manual data entry and improve the accuracy and efficiency of their data processing.

Kadoa

Kadoa is an AI-powered web scraping tool that automates the extraction of data from websites. It uses machine learning algorithms to identify and extract the desired data, making it easy for users to collect and analyze data from the web. Kadoa offers a variety of features, including no-code data extraction, smart navigation and RPA, self-healing workflows, enterprise scalability, and powerful API and integrations.

Airparser

Airparser is a GPT-powered email and document parser that automates data extraction from various document types, including emails, PDFs, and handwritten notes. It uses AI and GPT technology to efficiently extract structured data, making it easy to integrate into workflows and export to various platforms and applications.

super.AI

Super.AI provides Intelligent Document Processing (IDP) solutions powered by Large Language Models (LLMs) and human-in-the-loop (HITL) capabilities. It automates document processing tasks such as data extraction, classification, and redaction, enabling businesses to streamline their workflows and improve accuracy. Super.AI's platform leverages cutting-edge AI models from providers like Amazon, Google, and OpenAI to handle complex documents, ensuring high-quality outputs. With its focus on accuracy, flexibility, and scalability, Super.AI caters to various industries, including financial services, insurance, logistics, and healthcare.

AlgoDocs

AlgoDocs is an AI platform for fast, secure, and accurate document data extraction. It is intended to streamline your processes and free your team from tedious, error-prone manual data entry.

Webscrape AI

Webscrape AI is a no-code web scraping tool that allows users to collect data from websites without writing any code. It is easy to use, accurate, and affordable, making it a great option for businesses of all sizes. With Webscrape AI, you can automate your data collection process and free up your time to focus on other tasks.

Elicit

Elicit is an AI research assistant that helps researchers analyze research papers at superhuman speed. It automates time-consuming research tasks such as summarizing papers, extracting data, and synthesizing findings. Trusted by researchers, Elicit offers a plethora of features to speed up the research process and is particularly beneficial for empirical domains like biomedicine and machine learning.

Docugami

Docugami is a document engineering platform that uses artificial intelligence to extract, analyze, and automate data from business documents. It is designed to empower business users with immediate impact, without the need for massive investment in machine learning, staff training, or IT development. Docugami's proprietary Business Document Foundation Model is an LLM for Generative AI that can be applied to any type of business document.

SOAX AI data collection

SOAX AI data collection is a powerful tool that utilizes artificial intelligence to gather and analyze data from various online sources. It automates the process of data collection, saving time and effort for users. The tool is designed to extract relevant information efficiently and accurately, providing valuable insights for businesses and researchers. With its advanced algorithms, SOAX AI data collection can handle large volumes of data quickly and effectively, making it a valuable asset for anyone in need of data-driven decision-making.

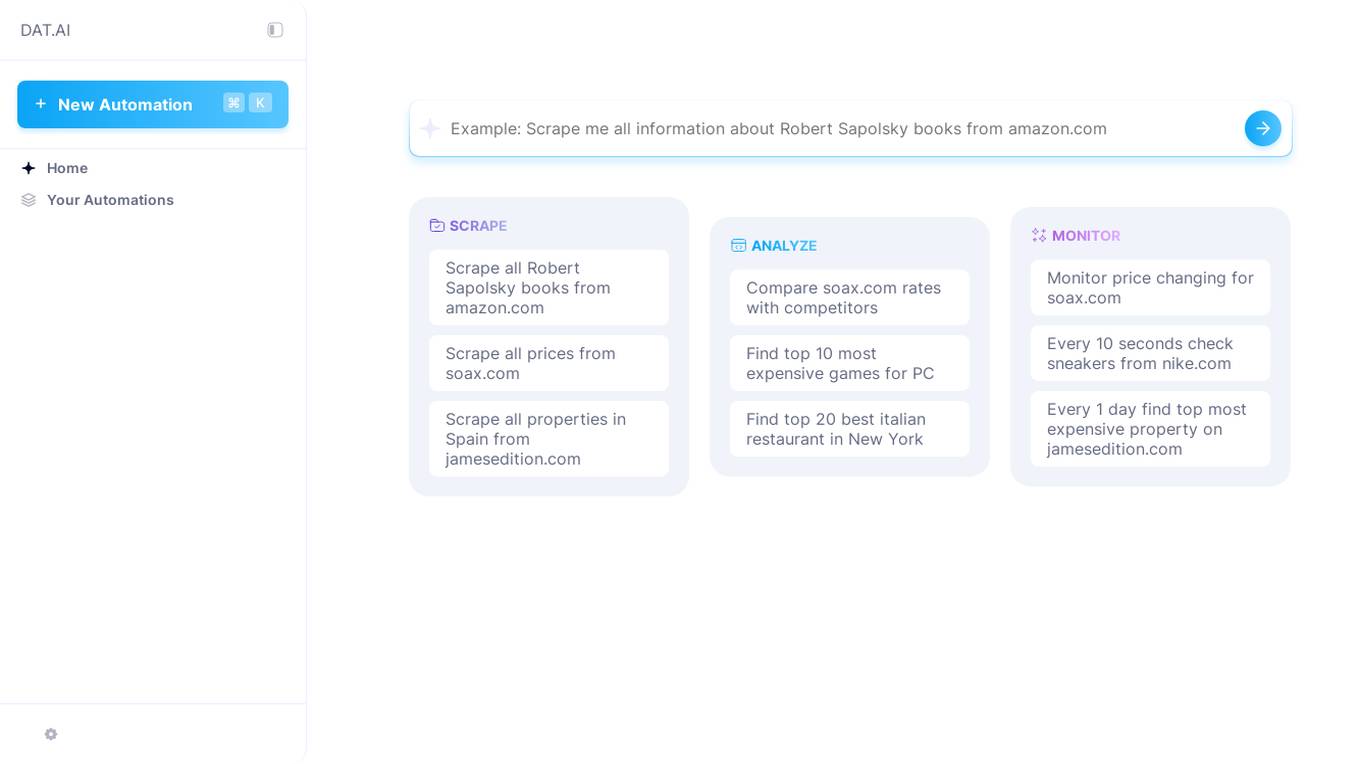

Dataku

Dataku is an advanced data extraction and analysis platform powered by AI. It enables businesses to easily extract, transform, and analyze data from a variety of sources, including web pages, PDFs, and spreadsheets. With Dataku, businesses can gain valuable insights from their data to make better decisions.

heißdocs

heißdocs is a tool that adds a superfast search layer on top of scanned or digital PDFs. It also has AI-powered Question-Answering capabilities. With heißdocs, you can easily find information in your PDFs without having to sift through thousands of pages. You can also own your data and host it yourself. heißdocs is open source, so you can view the code, make modifications, or request modifications as you like.

20 - Open Source AI Tools

AiTreasureBox

AiTreasureBox is a versatile AI tool that provides a collection of pre-trained models and algorithms for various machine learning tasks. It simplifies the process of implementing AI solutions by offering ready-to-use components that can be easily integrated into projects. With AiTreasureBox, users can quickly prototype and deploy AI applications without the need for extensive knowledge in machine learning or deep learning. The tool covers a wide range of tasks such as image classification, text generation, sentiment analysis, object detection, and more. It is designed to be user-friendly and accessible to both beginners and experienced developers, making AI development more efficient and accessible to a wider audience.

awesome-generative-ai

A curated list of Generative AI projects, tools, artworks, and models

baml

BAML is a config file format for declaring LLM functions that you can then use in TypeScript or Python. With BAML you can classify or extract any structured data using Anthropic, OpenAI, or local models (using Ollama).

Resources:

* [Discord Community](https://discord.gg/boundaryml)
* [Follow us on Twitter](https://twitter.com/boundaryml)
* Discord Office Hours: come ask us anything! We hold office hours most days (9am - 12pm PST).
* Documentation: Learn BAML
* Documentation: BAML Syntax Reference
* Documentation: Prompt engineering tips
* Boundary Studio: observability and more

Starter projects:

* BAML + NextJS 14
* BAML + FastAPI + Streaming

Motivation: calling LLMs in your code is frustrating:

* your code uses types everywhere: classes, enums, and arrays
* but LLMs speak English, not types

BAML makes calling LLMs easy by taking a type-first approach that lives fully in your codebase:

1. Define what your LLM output type is in a .baml file, with rich syntax to describe any field (even enum values).
2. Declare your prompt in the .baml config using those types.
3. Add additional LLM config like retries or redundancy.
4. Transpile the .baml files to a callable Python or TS function with a type-safe interface (the VSCode extension does this for you automatically).

We were inspired by similar patterns for type safety: protobuf and OpenAPI for RPCs, Prisma and SQLAlchemy for databases. BAML guarantees type safety for LLMs and comes with tools to give you a great developer experience:

| BAML Tooling | Capabilities |
| --- | --- |
| BAML Compiler | Transpiles BAML code to a native Python / TypeScript library (you only need it for development, never for releases). Works on Mac, Windows, Linux. |
| VSCode Extension | Syntax highlighting for BAML files; real-time prompt preview; testing UI |
| Boundary Studio (not open source) | Type-safe observability; labeling |
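The type-first idea can be approximated in plain Python to show what "type safety for LLM output" means in practice. This sketch does not use BAML or its syntax: the `Resume` and `Seniority` types and the `parse_resume` function are hypothetical, and a hard-coded JSON string stands in for a model reply.

```python
import json
from dataclasses import dataclass
from enum import Enum
from typing import List

class Seniority(Enum):
    JUNIOR = "junior"
    MID = "mid"
    SENIOR = "senior"

@dataclass
class Resume:
    name: str
    skills: List[str]
    seniority: Seniority

def parse_resume(raw: str) -> Resume:
    """Validate a JSON string (for example an LLM reply) and build a typed Resume."""
    data = json.loads(raw)
    name = data["name"]
    skills = data["skills"]
    if not isinstance(name, str):
        raise TypeError("name must be a string")
    if not isinstance(skills, list) or not all(isinstance(s, str) for s in skills):
        raise TypeError("skills must be a list of strings")
    # Enum lookup raises ValueError for values outside the declared set.
    seniority = Seniority(data["seniority"])
    return Resume(name=name, skills=skills, seniority=seniority)

# Stand-in for a model reply; no LLM is called in this sketch.
reply = '{"name": "Jane Doe", "skills": ["Python", "SQL"], "seniority": "senior"}'
resume = parse_resume(reply)
print(resume)
```

Once the reply has passed through `parse_resume`, the rest of the program works with ordinary typed objects, and malformed or out-of-range output fails loudly at the boundary.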

neo4j-generative-ai-google-cloud

This repo contains sample applications that show how to use Neo4j with the generative AI capabilities in Google Cloud Vertex AI. We explore how to leverage Google generative AI to build and consume a knowledge graph in Neo4j.

Open-Sora-Plan

Open-Sora-Plan is a project that aims to create a simple and scalable repo to reproduce Sora (OpenAI, but we prefer to call it "ClosedAI"). The project is still in its early stages, but the team is working hard to improve it and make it more accessible to the open-source community. The project is currently focused on training an unconditional model on a landscape dataset, but the team plans to expand the scope of the project in the future to include text2video experiments, training on video2text datasets, and controlling the model with more conditions.

browser-copilot

Browser Copilot is a browser extension that enables users to utilize AI assistants for various web application tasks. It provides a versatile UI and framework to implement copilots that can automate tasks, extract information, interact with web applications, and utilize service APIs. Users can easily install copilots, start chats, save prompts, and toggle the copilot on or off. The project also includes a sample copilot implementation for testing purposes and encourages community contributions to expand the catalog of copilots.

Azure-OpenAI-demos

Azure OpenAI demos is a repository showcasing various demos and use cases of Azure OpenAI services. It includes demos for tasks such as image comparisons, car damage copilot, video to checklist generation, automatic data visualization, text analytics, and more. The repository provides a wide range of examples on how to leverage Azure OpenAI for different applications and industries.

llama.cpp

llama.cpp is a C/C++ implementation of inference for LLaMA, a large language model from Meta. It provides a command-line interface for inference and can be used for a variety of tasks, including text generation, translation, and question answering. llama.cpp is highly optimized for performance and can be run on a variety of hardware, including CPUs and GPUs.

llm-reasoners

LLM Reasoners is a library that enables LLMs to conduct complex reasoning with advanced reasoning algorithms. It treats multi-step reasoning as planning and searches for the optimal reasoning chain, balancing exploration against exploitation through the ideas of a "World Model" and a "Reward". Given any reasoning problem, simply define the reward function and an optional world model, and let LLM Reasoners take care of the rest, including reasoning algorithms, visualization, LLM calling, and more!
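The "reasoning as planning" idea can be sketched with a toy search in which a world model predicts the next state and a reward scores it. This is not the llm-reasoners API: `world_model`, `reward`, and `beam_search` are hypothetical names, and a simple arithmetic puzzle (reach a target number using "+3" and "*2") replaces the LLM. In a real setting the states would be partial reasoning chains and the candidate steps would come from a model.

```python
from typing import List, Optional, Sequence, Tuple

def world_model(state: int, action: str) -> int:
    """Toy transition function: predict the state that follows an action."""
    return state + 3 if action == "+3" else state * 2

def reward(state: int, target: int) -> float:
    """Higher is better: negative distance between the state and the target."""
    return -abs(target - state)

def beam_search(start: int, target: int, actions: Sequence[str] = ("+3", "*2"),
                beam_width: int = 3, max_depth: int = 6) -> Optional[List[str]]:
    """Keep the beam_width best partial chains at each depth; return a chain that hits target."""
    beam: List[Tuple[int, List[str]]] = [(start, [])]
    for _ in range(max_depth):
        for state, path in beam:
            if state == target:
                return path
        # Expand every chain in the beam with every action, then keep the best ones.
        candidates = [(world_model(s, a), p + [a]) for s, p in beam for a in actions]
        candidates.sort(key=lambda c: reward(c[0], target), reverse=True)
        beam = candidates[:beam_width]
    for state, path in beam:
        if state == target:
            return path
    return None

plan = beam_search(start=1, target=11)
print(plan)
```

Beam search is used here only because it is short; a wider beam explores more alternatives, while a narrower one commits earlier to the highest-reward chains.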

Linly-Talker

Linly-Talker is an innovative digital human conversation system that integrates the latest artificial intelligence technologies, including Large Language Models (LLM) 🤖, Automatic Speech Recognition (ASR) 🎙️, Text-to-Speech (TTS) 🗣️, and voice cloning technology 🎤. This system offers an interactive web interface through the Gradio platform 🌐, allowing users to upload images 📷 and engage in personalized dialogues with AI 💬.

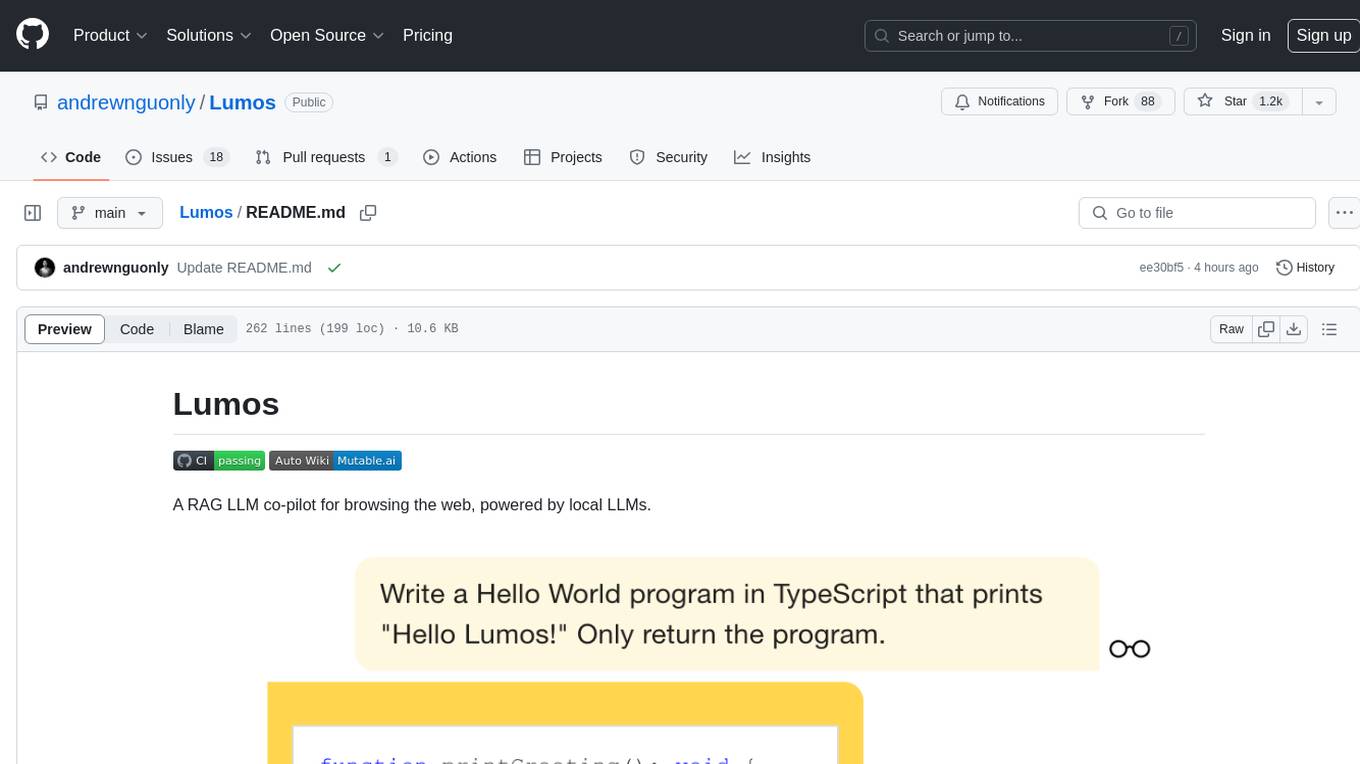

Lumos

Lumos is a Chrome extension powered by a local LLM co-pilot for browsing the web. It allows users to summarize long threads, news articles, and technical documentation. Users can ask questions about reviews and product pages. The tool requires a local Ollama server for LLM inference and embedding database. Lumos supports multimodal models and file attachments for processing text and image content. It also provides options to customize models, hosts, and content parsers. The extension can be easily accessed through keyboard shortcuts and offers tools for automatic invocation based on prompts.

openai-chat-api-workflow

**OpenAI Chat API Workflow for Alfred**

An Alfred 5 Workflow for using OpenAI Chat API to interact with GPT-3.5/GPT-4 🤖💬 It also allows image generation 🖼️, image understanding 👀, speech-to-text conversion 🎤, and text-to-speech synthesis 🔈

**Features:**

* Execute all features using Alfred UI, selected text, or a dedicated web UI
* Web UI is constructed by the workflow and runs locally on your Mac 💻
* API call is made directly between the workflow and OpenAI, ensuring your chat messages are not shared online with anyone other than OpenAI 🔒
* OpenAI does not use the data from the API Platform for training 🚫
* Export chat data to a simple JSON format external file 📄
* Continue the chat by importing the exported data later 🔄

Scrapegraph-ai

ScrapeGraphAI is a Python library that uses Large Language Models (LLMs) and direct graph logic to create web scraping pipelines for websites, documents, and XML files. It allows users to extract specific information from web pages by providing a prompt describing the desired data. ScrapeGraphAI supports various LLM backends, including OpenAI and Gemini as well as local models served through Ollama or run in Docker, enabling users to choose the most suitable model for their needs. The library provides a user-friendly interface through its `SmartScraper` class, which simplifies the process of building and executing scraping pipelines. ScrapeGraphAI is open-source and available on GitHub, with extensive documentation and examples to guide users. It is particularly useful for researchers and data scientists who need to extract structured data from web pages for analysis and exploration.

Scrapegraph-ai

ScrapeGraphAI is a web scraping Python library that utilizes LLM and direct graph logic to create scraping pipelines for websites and local documents. It offers various standard scraping pipelines like SmartScraperGraph, SearchGraph, SpeechGraph, and ScriptCreatorGraph. Users can extract information by specifying prompts and input sources. The library supports different LLM APIs such as OpenAI, Groq, Azure, and Gemini, as well as local models using Ollama. ScrapeGraphAI is designed for data exploration and research purposes, providing a versatile tool for extracting information from web pages and generating outputs like Python scripts, audio summaries, and search results.

extractor

Extractor is an AI-powered data extraction library for Laravel that leverages OpenAI's capabilities to effortlessly extract structured data from various sources, including images, PDFs, and emails. It features a convenient wrapper around OpenAI Chat and Completion endpoints, supports multiple input formats, includes a flexible Field Extractor for arbitrary data extraction, and integrates with Textract for OCR functionality. Extractor utilizes JSON Mode from the latest GPT-3.5 and GPT-4 models, providing accurate and efficient data extraction.

20 - OpenAI GPTs

PDF Ninja

I extract data and tables from PDFs to CSV, focusing on data privacy and precision.

Spreadsheet Composer

Magically turning text from emails, lists and website content into spreadsheet tables

Property Manager Document Assistant

Provides analysis and data extraction of Property Management documents and contracts for managers

Fill PDF Forms

Fill legal forms & complex PDF documents easily! Upload a file, provide data sources and I'll handle the rest.

Email Thread GPT

I'm EmailThreadAnalyzer, here to help you with your email thread analysis.

Regex Wizard

Generate and explain regex patterns from your description. Supports English and Chinese.

Metaphor API Guide - Python SDK

Teaches you how to use the Metaphor Search API using our Python SDK