Best AI tools for Rust Engineers


9 - AI Tool Sites

Blackbox

Blackbox is an AI-powered code generation, code chat, and code search tool that helps developers write better code faster. With Blackbox, you can generate code snippets, chat with an AI assistant about code, and search for code examples from a massive database.

Replit

Replit is a software creation platform that provides an integrated development environment (IDE), artificial intelligence (AI) assistance, and deployment services. It allows users to build, test, and deploy software projects directly from their browser, without the need for local setup or configuration. Replit offers real-time collaboration, code generation, debugging, and autocompletion features powered by AI. It supports multiple programming languages and frameworks, making it suitable for a wide range of development projects.

Chat Blackbox

Chat Blackbox is an AI tool for code generation, code chat, and code search. Users can ask the AI to generate code, discuss code-related topics, and search for specific code snippets. It aims to streamline development and help users code more efficiently.

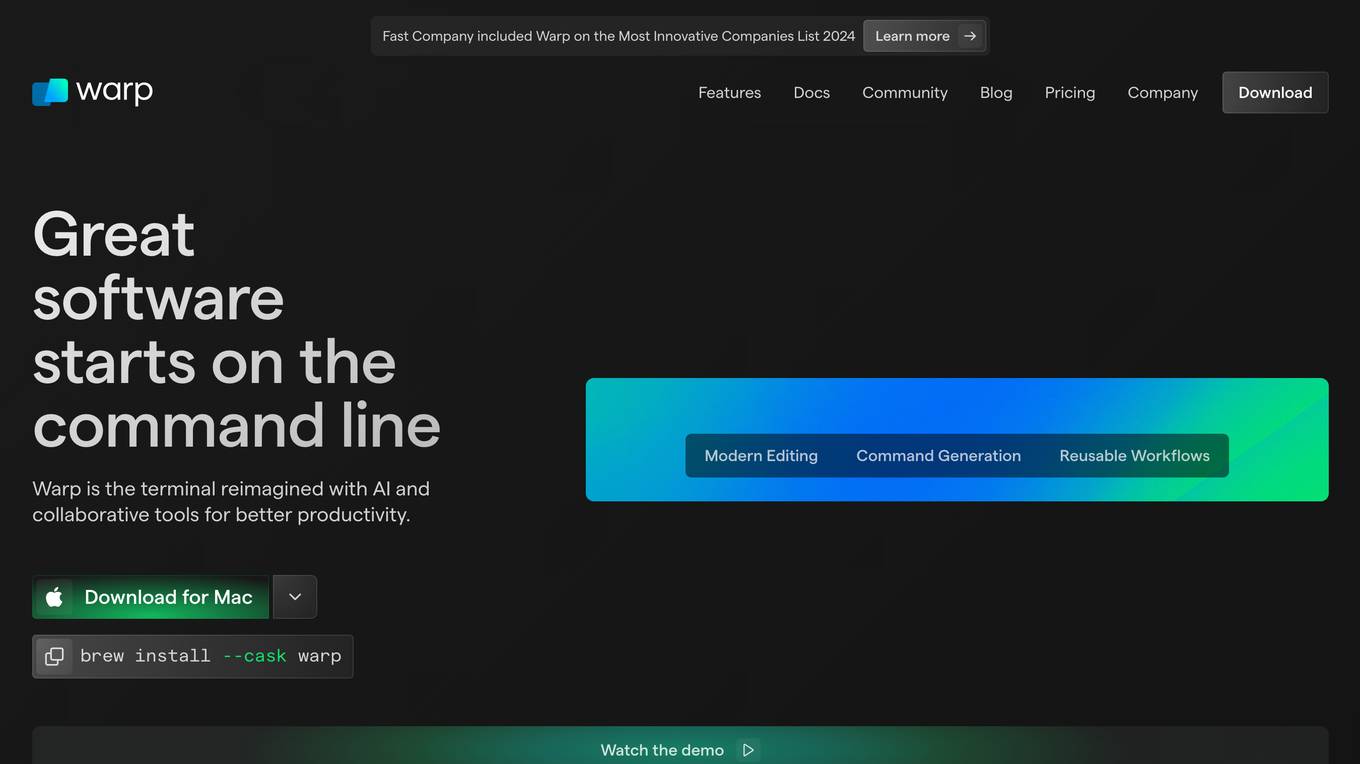

Warp

Warp is a terminal reimagined with AI and collaborative tools for better productivity. It is built with Rust for speed and has an intuitive interface. Warp includes features such as modern editing, command generation, reusable workflows, and Warp Drive. Warp AI allows users to ask questions about programming and get answers, recall commands, and debug errors. Warp Drive helps users organize hard-to-remember commands and share them with their team. Warp is a private and secure application that is trusted by hundreds of thousands of professional developers.

CodeDefender α

CodeDefender α is an AI-powered tool that helps developers and non-developers improve code quality and security. It integrates with popular IDEs like Visual Studio, VS Code, and IntelliJ, providing real-time code analysis and suggestions. CodeDefender supports multiple programming languages, including C/C++, C#, Java, Python, and Rust. It can detect a wide range of code issues, including security vulnerabilities, performance bottlenecks, and correctness errors. Additionally, CodeDefender offers features like custom prompts, multiple models, and workspace/solution understanding to enhance code comprehension and knowledge sharing within teams.

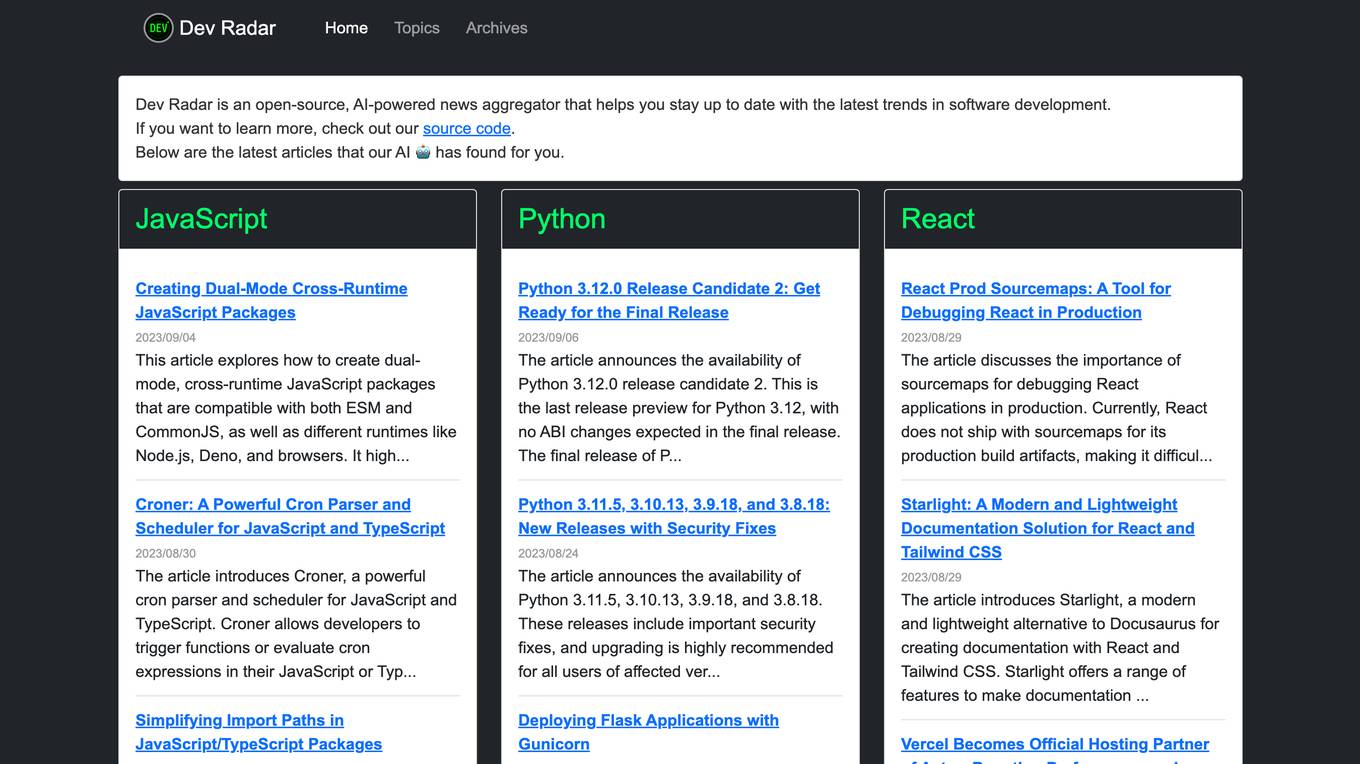

Dev Radar

Dev Radar is an open-source, AI-powered news aggregator that helps users stay up to date with the latest trends in software development. It provides curated articles on various programming languages and frameworks, offering insights and updates for developers. Users can explore a wide range of topics related to JavaScript, Python, React, TypeScript, Rust, Go, Node.js, Deno, Ruby, and more. Dev Radar aims to streamline the process of discovering relevant and valuable content in the ever-evolving field of software development.

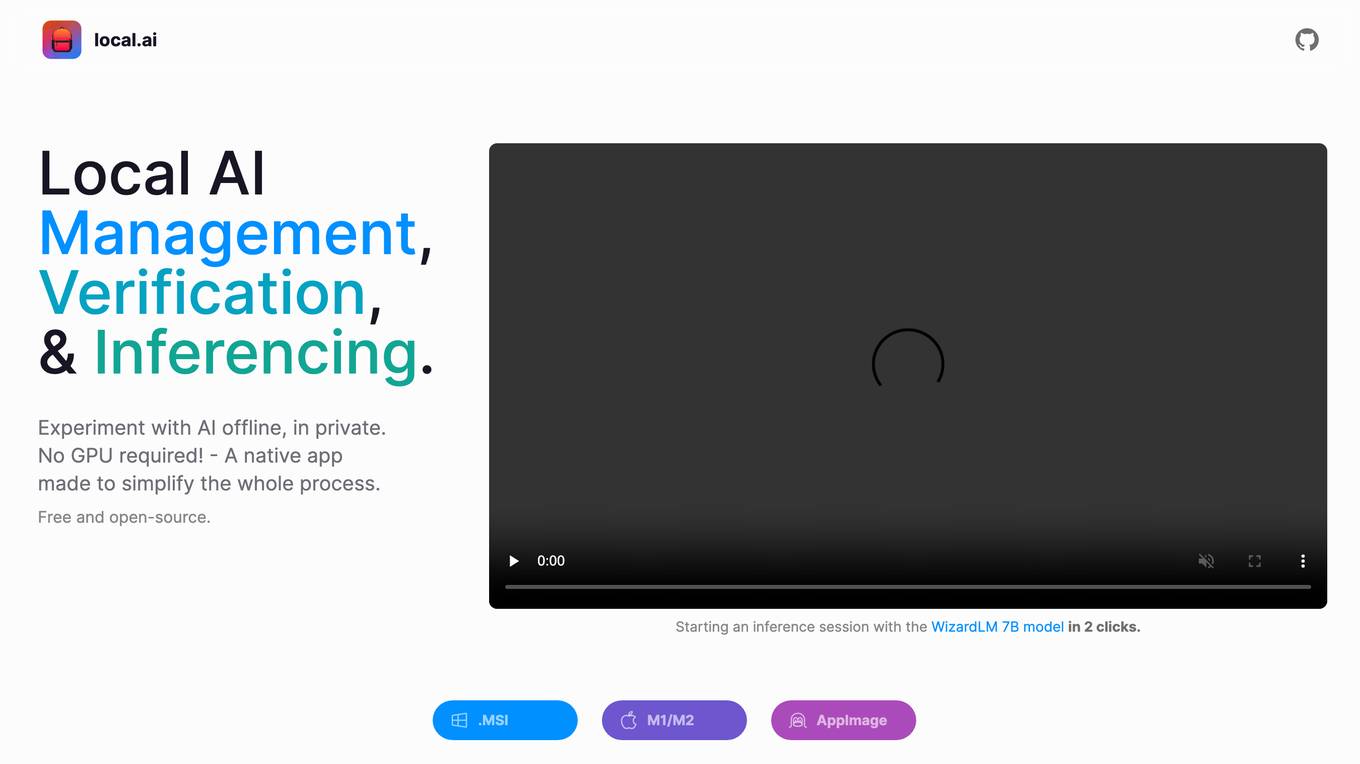

Local AI Playground

Local AI Playground is a free and open-source native app designed for AI management, verification, and inferencing. It allows users to experiment with AI offline in a private environment without the need for a GPU. The application is memory-efficient and compact, with a Rust backend, making it suitable for various operating systems. It offers features such as CPU inferencing, model management, and digest verification. Users can start a local streaming server for AI inferencing with just two clicks. Local AI Playground aims to simplify the AI development process and provide a user-friendly experience for both offline and online AI applications.

N/A

The website is currently under maintenance, indicating that it is temporarily unavailable for access or use. During maintenance, updates, improvements, or technical fixes may be applied to enhance the website's performance and user experience. Users are advised to check back later for the website to be fully operational.

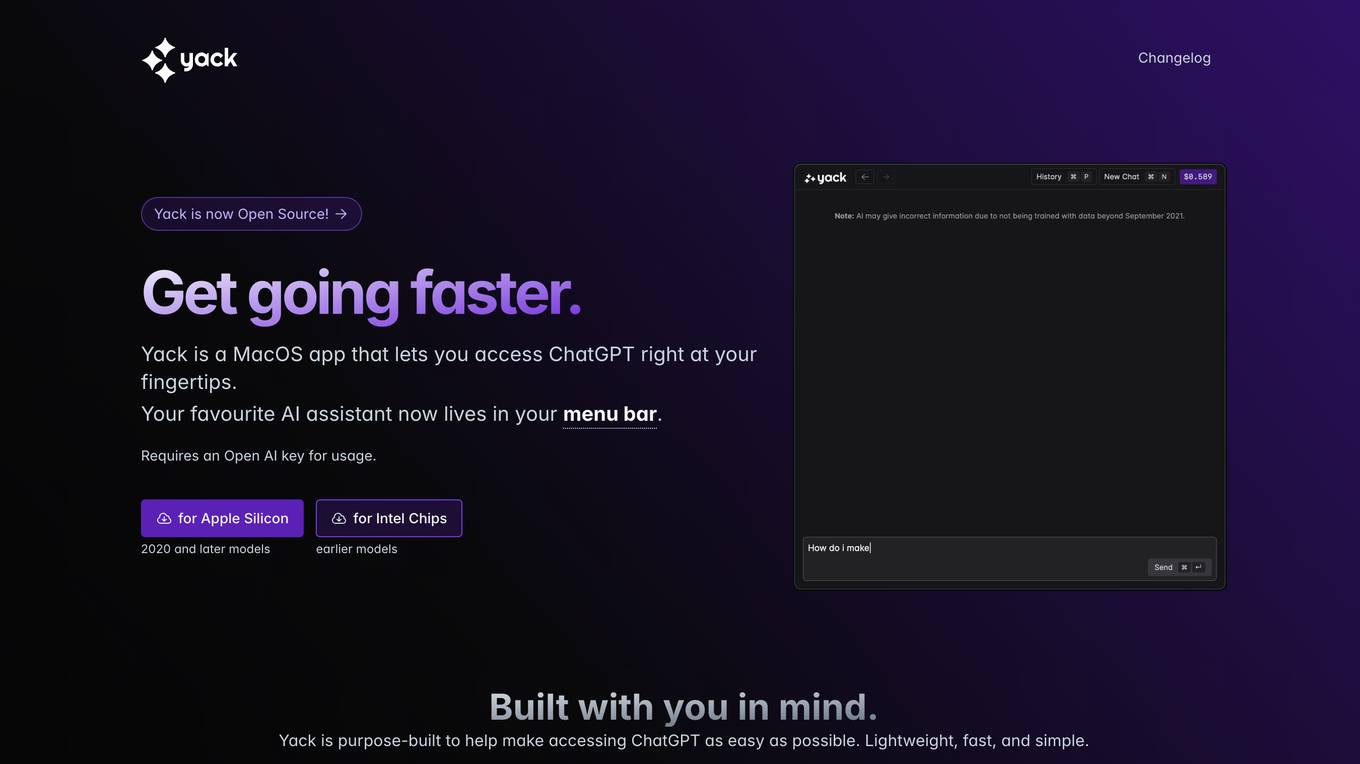

Yack

Yack is an AI tool that provides easy access to ChatGPT on macOS. It is a lightweight, fast application designed for keyboard-driven use, with multiple themes and Markdown support; cross-app integration and prompt templates are listed as upcoming features. Yack prioritizes user privacy by not storing any data on external servers, so all information remains on the user's device. Built with Rust, Yack is efficient and compact, making it a convenient tool for generating AI-powered responses and completing prompts.

0 - Open Source Tools

12 - OpenAI GPTs

Rust

Powerful Rust coding assistant. Trained on a vast array of the best up-to-date Rust resources, libraries and frameworks. Start with a quest! 🥷 (V1.7)

RustChat

Hello! I'm your Rust language learning and practical assistant created by AlexZhang. I can help you learn and practice Rust whether you are a beginner or professional. I can provide suitable learning resources and hands-on projects for you. You can view all supported shortcut commands with /list.

Rust on ESP32 Expert

Expert in Rust coding for ESP32, offering detailed programming and deployment guidance.