docs

Documentation for Lovable

Stars: 164

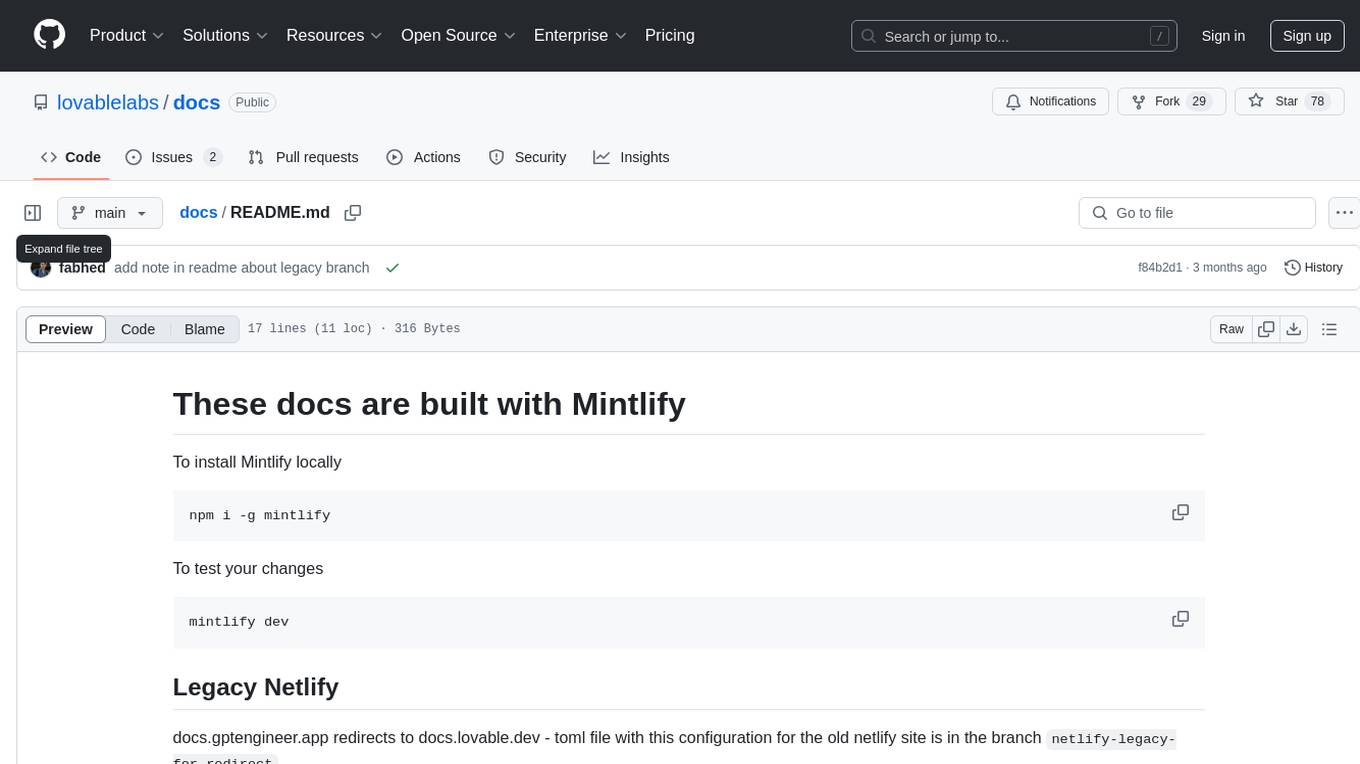

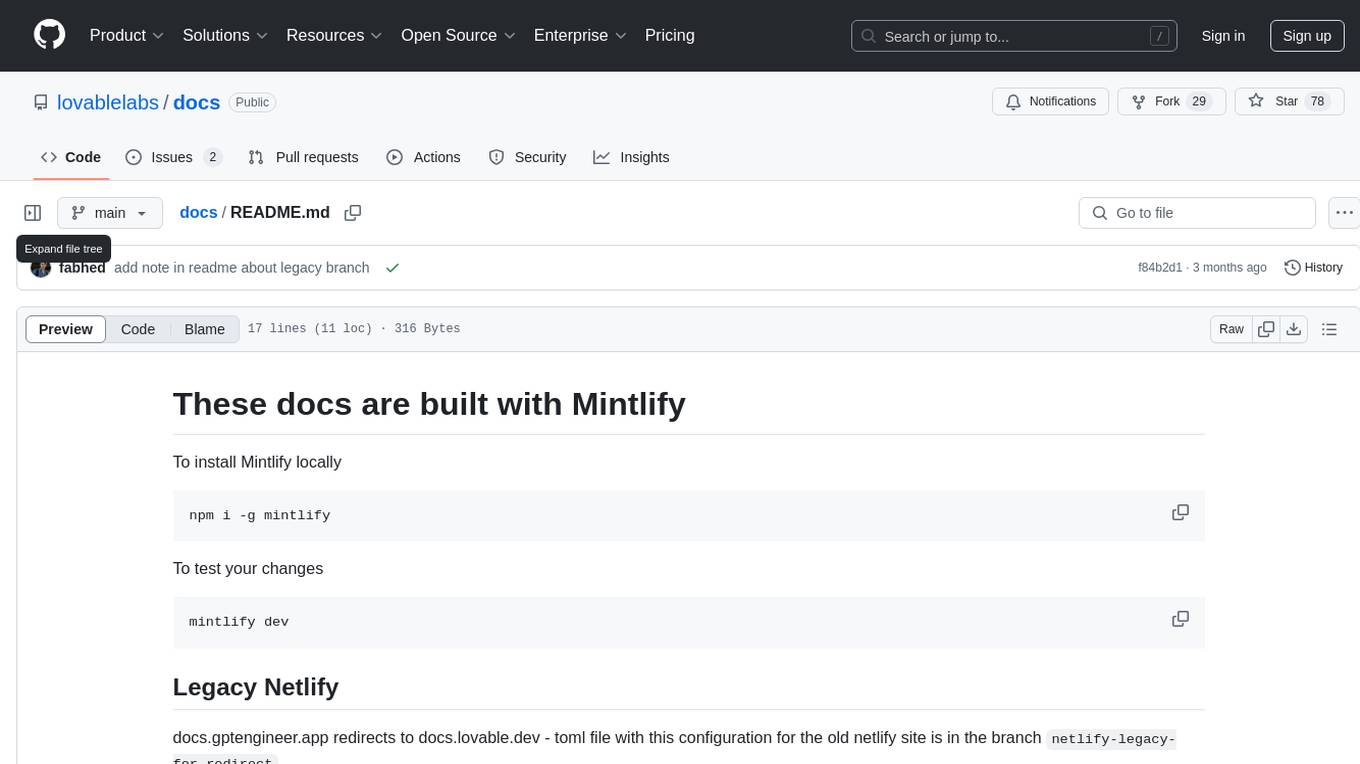

This repository contains the documentation for Lovable, which is built with Mintlify. It explains how to install Mintlify locally and preview changes, and notes that the old Netlify site redirects to the new one through a toml configuration file.

README:

To install Mintlify locally:

    npm i -g mintlify

To test your changes:

    mintlify dev

docs.gptengineer.app redirects to docs.lovable.dev. The toml file with this redirect configuration for the old Netlify site is in the branch `netlify-legacy-for-redirect`.
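For orientation, a domain-level redirect like this is usually written in a `netlify.toml` along the lines below. This is a minimal sketch and not the file from the `netlify-legacy-for-redirect` branch. The wildcard path, the `:splat` placeholder, the 301 status, and `force = true` are assumptions about how such a redirect would typically be set up.

```toml
# Hypothetical netlify.toml for the legacy site (assumed, not copied from the branch).
[[redirects]]
  # Match every path on the old docs domain.
  from = "https://docs.gptengineer.app/*"
  # Send each request to the same path on the new domain.
  to = "https://docs.lovable.dev/:splat"
  # Permanent redirect (assumed).
  status = 301
  # Apply the rule even if a file exists at the requested path.
  force = true
```

With a rule like this, a request for a page on the old domain would land on the same path on docs.lovable.dev.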

Alternative AI tools for docs

Similar Open Source Tools

docs

This repository contains documentation for a tool called Mintlify. It provides instructions on how to install Mintlify locally and test changes. Additionally, it includes information on redirecting from an old Netlify site to a new one using a toml file configuration. The documentation is built using Mintlify.

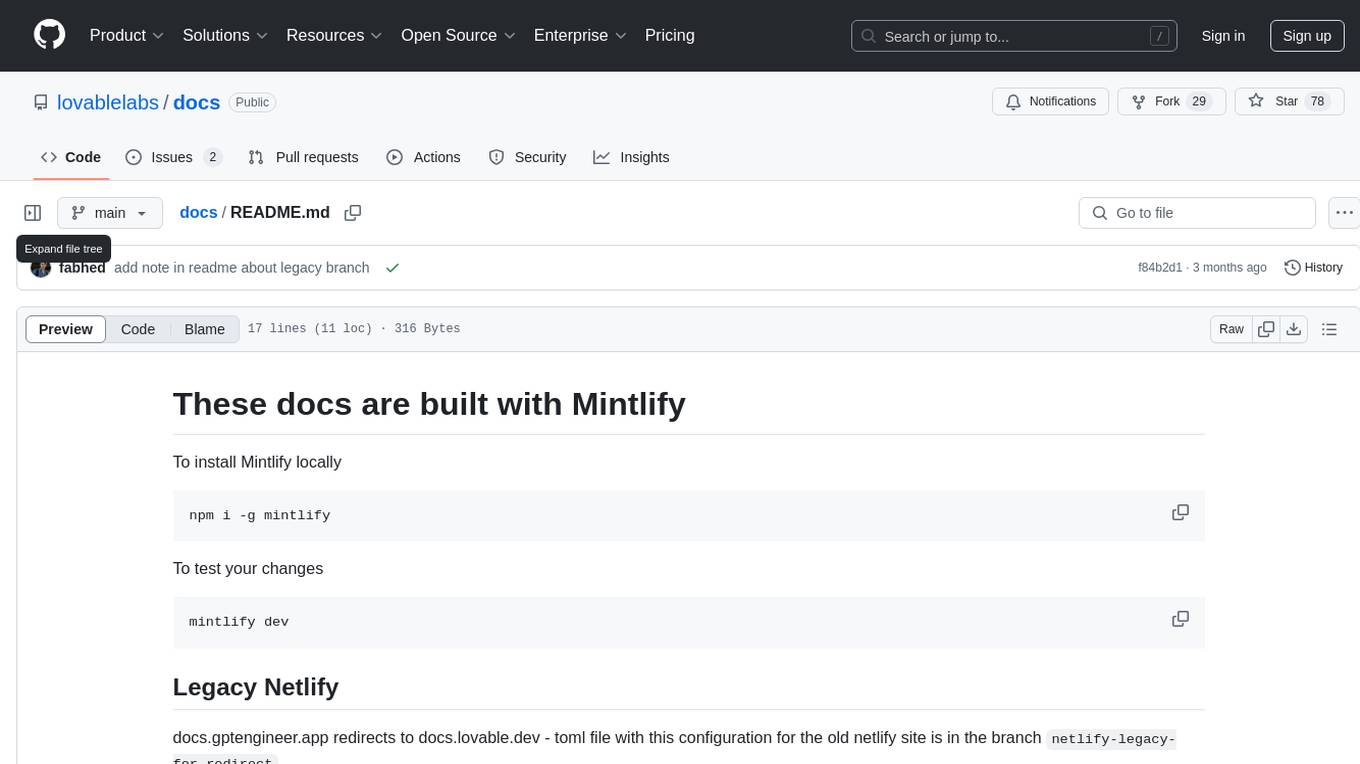

airflow-site

This repository contains the source code for the Apache Airflow website, including directories for archived documentation versions, landing pages, license templates, and the Sphinx theme. To work on the site locally, users need to install coreutils, Node.js, NPM, and HUGO, and run specific scripts provided in the repository. Contributors can refer to the contributor's guide for detailed instructions on how to contribute to the website.

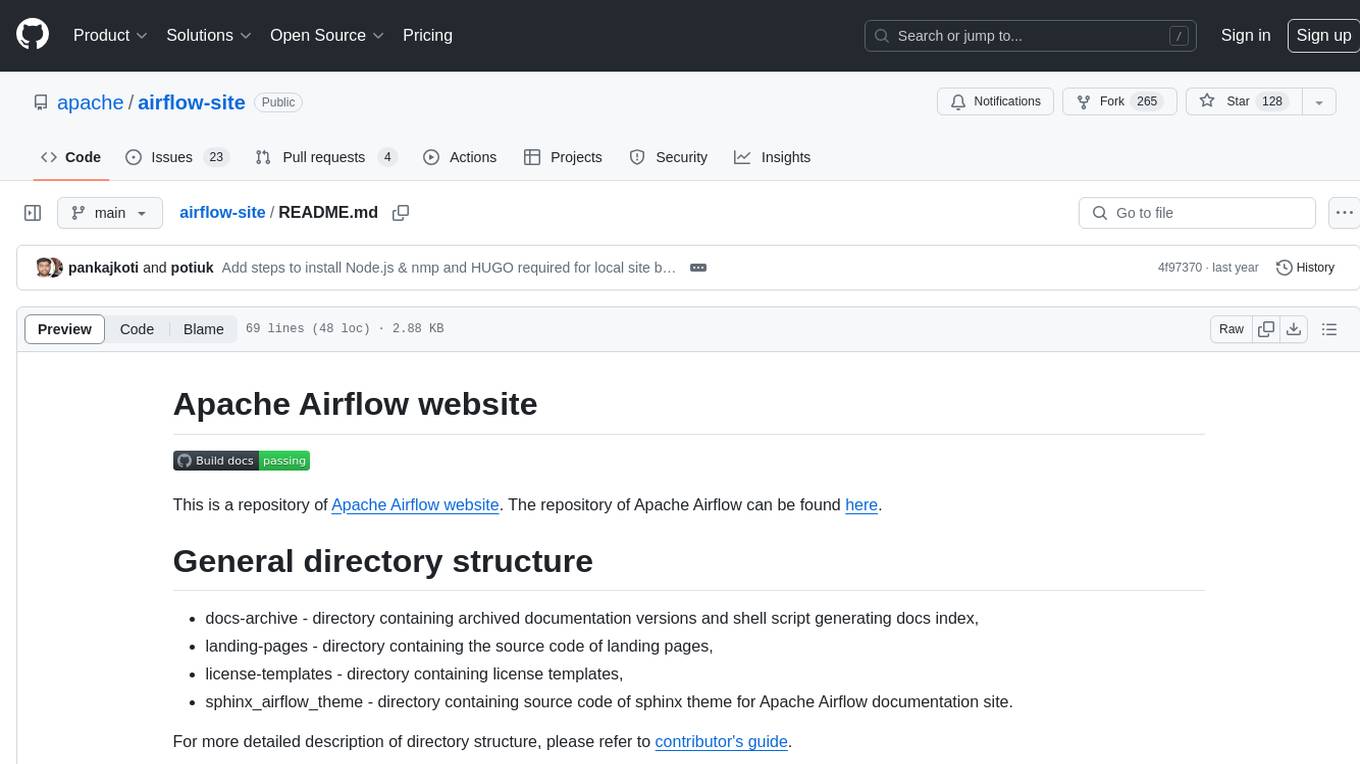

Roo-Code-Docs

Roo Code Docs is a website built using Docusaurus, a modern static website generator. It serves as a documentation platform for Roo Code, accessible at https://docs.roocode.com. The website provides detailed information and guides for users to navigate and utilize Roo Code effectively. With a clean and user-friendly interface, it offers a seamless experience for developers and users seeking information about Roo Code.

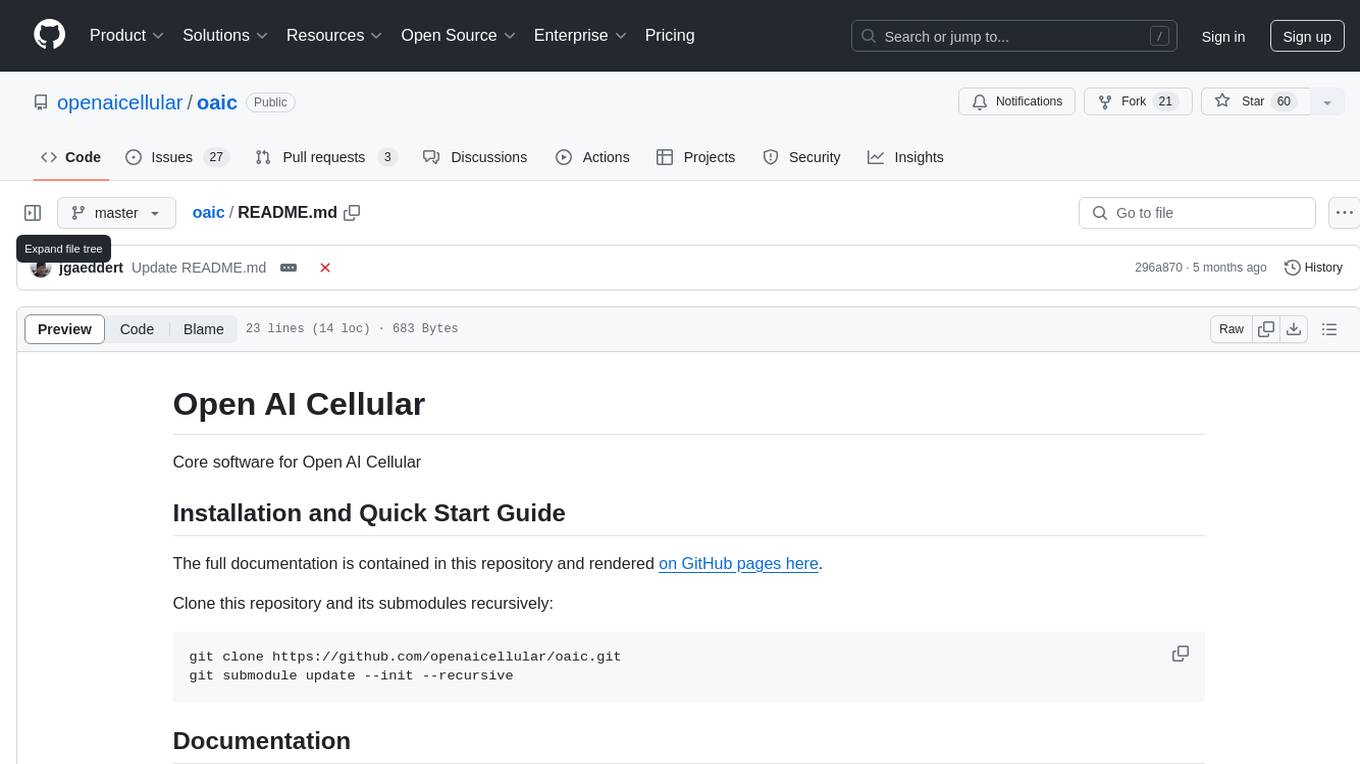

oaic

oaic is the core software repository for Open AI Cellular (OAIC). It documents installation, a quick start guide, and usage. The repository contains submodules, and building the core documentation requires Sphinx with the Read the Docs theme. The built documentation is written to the 'docs/build/html' directory.

shipstation

ShipStation is an AI-based website and agents generation platform that optimizes landing page websites and generic connect-anything-to-anything services. It enables seamless communication between service providers and integration partners, offering features like user authentication, project management, code editing, payment integration, and real-time progress tracking. The project architecture includes server-side (Node.js) and client-side (React with Vite) components. Prerequisites include Node.js, npm or yarn, Anthropic API key, Supabase account, Tavily API key, and Razorpay account. Setup instructions involve cloning the repository, setting up Supabase, configuring environment variables, and starting the backend and frontend servers. Users can access the application through the browser, sign up or log in, create landing pages or portfolios, and get websites stored in an S3 bucket. Deployment to Heroku involves building the client project, committing changes, and pushing to the main branch. Contributions to the project are encouraged, and the license encourages doing good.

brokk

Brokk is a code assistant tool named after the Norse god of the forge. It is designed to understand code semantically, enabling LLMs to work effectively on large codebases. Users can sign up at Brokk.ai, install jbang, and follow instructions to run Brokk. The tool uses Gradle with Scala support and requires JDK 21 or newer for building. Brokk aims to enhance code comprehension and productivity by providing semantic understanding of code.

WhiskeyAI

WhiskeyAI is a Next.js project that serves as a starting point for developing web applications. It includes a development server for live previewing changes and utilizes next/font for optimizing and loading the Geist font family. The project encourages contributions and feedback from users, providing resources for learning Next.js and deploying applications on the Vercel platform.

NeoHaskell

NeoHaskell is a newcomer-friendly and productive dialect of Haskell. It aims to be easy to learn and use, while also powerful enough for app development with minimal effort and maximum confidence. The project prioritizes design and documentation before implementation, with ongoing work on design documents for community sharing.

just-the-browser

Just the Browser is a tool designed to help users remove AI features, telemetry data reporting, sponsored content, product integrations, and other annoyances from desktop web browsers. The project provides configuration files for popular web browsers, documentation for installation and modification, and easy installation scripts. Additionally, it includes a static site generator for justthebrowser.com, built with Eleventy and Simple.css, with icons from Bootstrap Icons. Users can subscribe to updates via the RSS/Atom releases feed on GitHub.

FlowTest

FlowTestAI describes itself as the world's first GenAI-powered open-source Integrated Development Environment (IDE) for crafting, visualizing, and managing API-first workflows. It runs as a desktop app that works with the local file system, which keeps data private and lets teams collaborate through version control systems. Platform-specific binaries are available for macOS, with Windows and Linux versions in development. It also provides a CLI for running API workflows from the command line, which supports automation and CI/CD processes.

vespa

Vespa is a platform that performs operations such as selecting a subset of data in a large corpus, evaluating machine-learned models over the selected data, organizing and aggregating it, and returning it, typically in less than 100 milliseconds, all while the data corpus is continuously changing. It has been in development for many years and is used on a number of large internet services and apps which serve hundreds of thousands of queries from Vespa per second.

airavata

Apache Airavata is a software framework for executing and managing computational jobs on distributed computing resources. It supports local clusters, supercomputers, national grids, academic and commercial clouds. Airavata utilizes service-oriented computing, distributed messaging, and workflow composition. It includes a server package with an API, client SDKs, and a general-purpose UI implementation called Apache Airavata Django Portal.

pyvespa

Vespa is a scalable open-source serving engine that enables users to store, compute, and rank big data at user serving time. Pyvespa provides a Python API to Vespa, allowing users to create, modify, deploy, and interact with running Vespa instances. The library's primary purpose is to facilitate faster prototyping and familiarization with Vespa features.

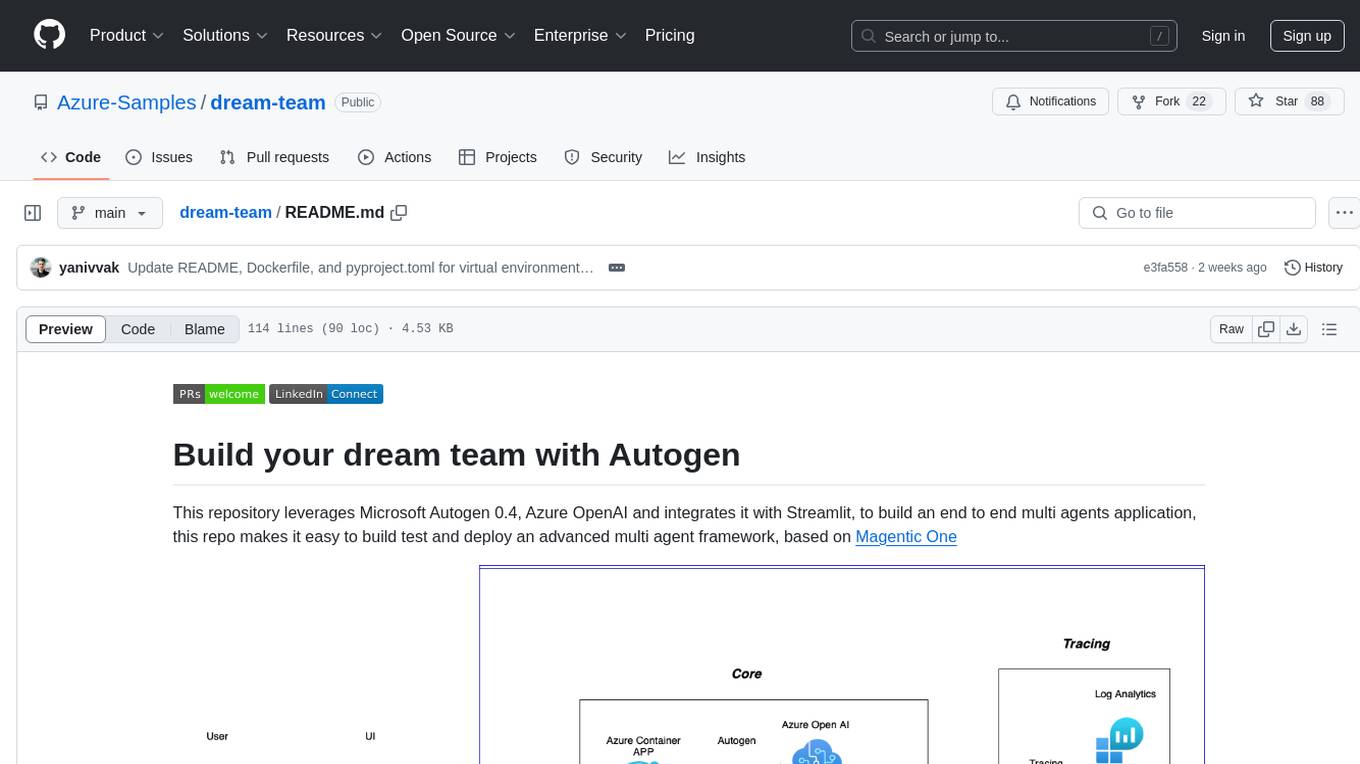

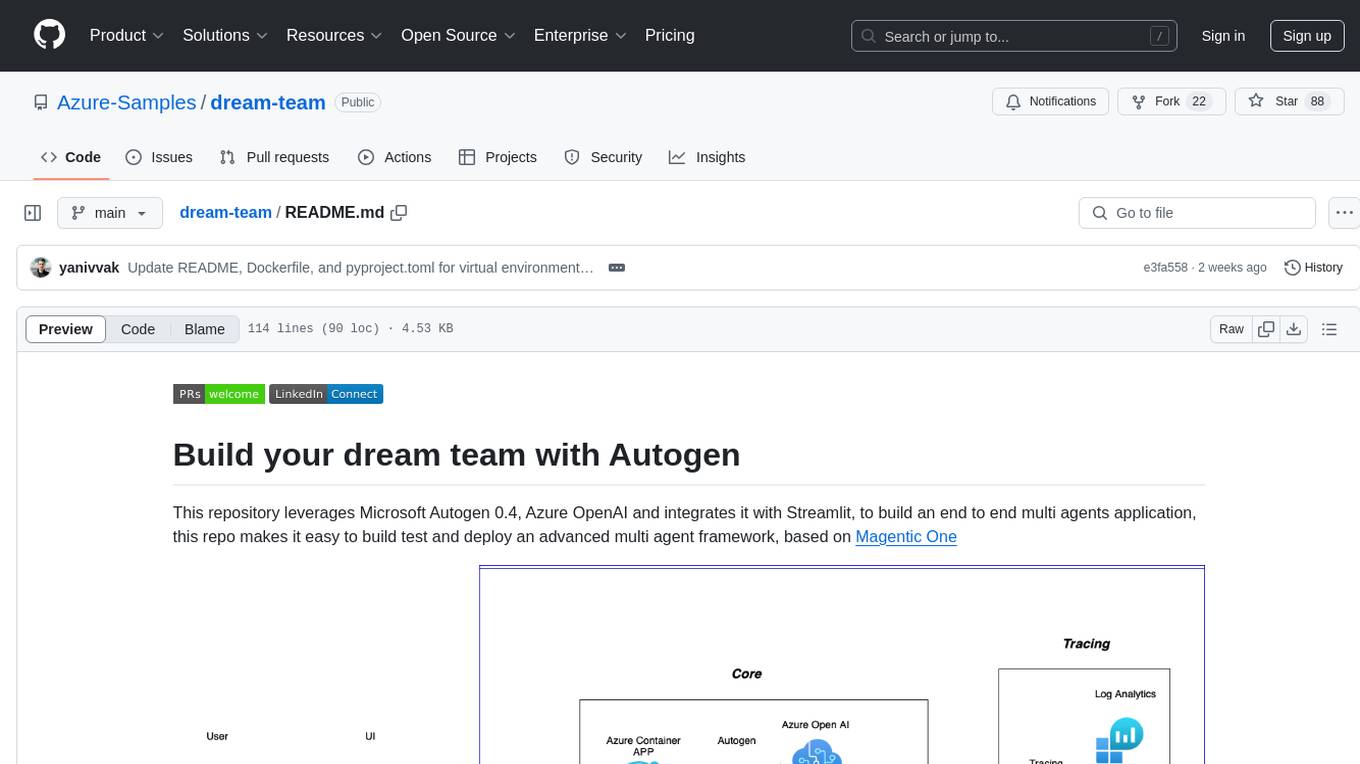

dream-team

Build your dream team with Autogen is a repository that leverages Microsoft Autogen 0.4, Azure OpenAI, and Streamlit to create an end-to-end multi-agent application. It provides an advanced multi-agent framework based on Magentic One, with features such as a friendly UI, single-line deployment, secure code execution, managed identities, and observability & debugging tools. Users can deploy Azure resources and the app with simple commands, work locally with virtual environments, install dependencies, update configurations, and run the application. The repository also offers resources for learning more about building applications with Autogen.

n8n-docs

n8n is an extendable workflow automation tool that enables you to connect anything to everything. It is open-source and can be self-hosted or used as a service. n8n provides a visual interface for creating workflows, which can be used to automate tasks such as data integration, data transformation, and data analysis. n8n also includes a library of pre-built nodes that can be used to connect to a variety of applications and services. This makes it easy to create complex workflows without having to write any code.

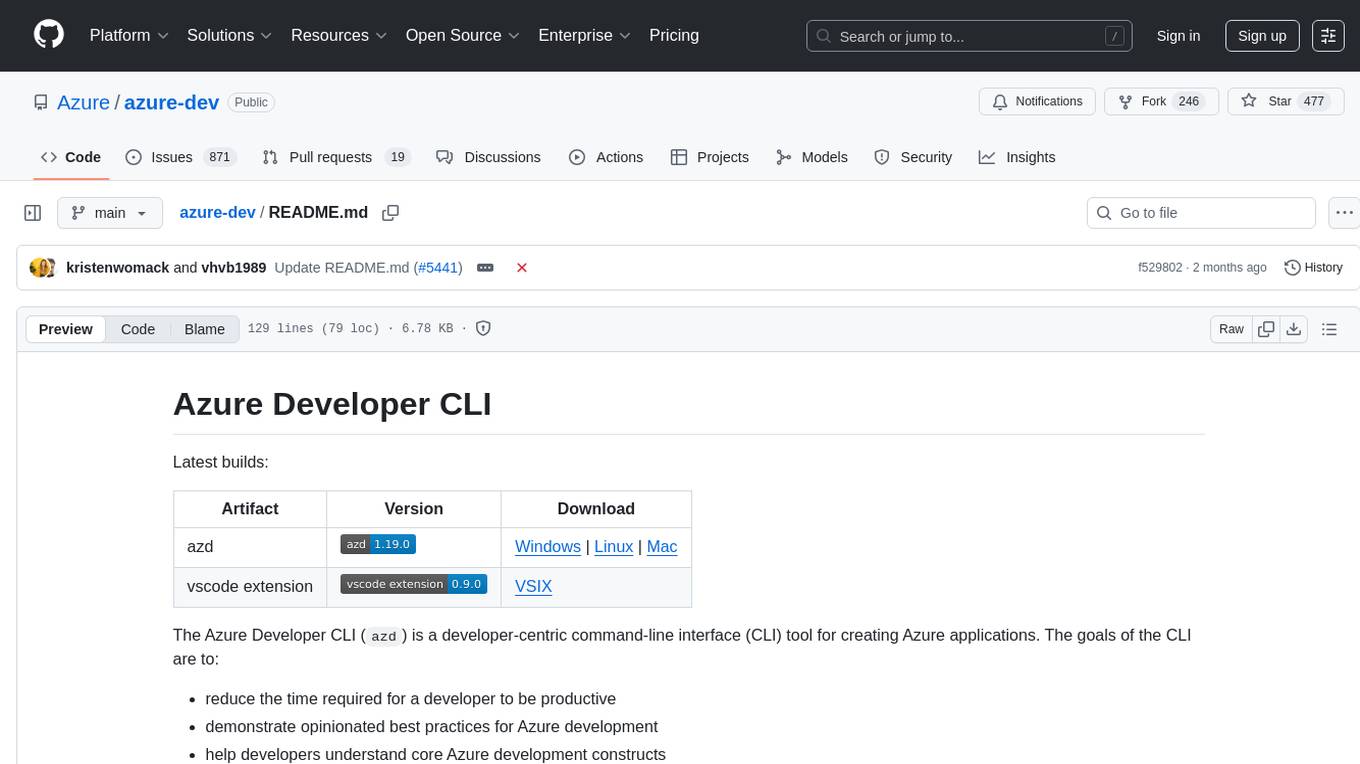

azure-dev

The Azure Developer CLI (`azd`) is a developer-centric command-line interface (CLI) tool for creating Azure applications. It aims to reduce the time required for a developer to be productive, demonstrate best practices for Azure development, and help developers understand core Azure development constructs. The CLI requires code repositories to adhere to specific conventions. It supports shell completion for `bash`, `zsh`, `fish`, and `powershell`. The software may collect information about users and their use of the software for service improvement. Telemetry collection is on by default but can be opted out by setting the environment variable `AZURE_DEV_COLLECT_TELEMETRY` to `no`. Contributions are welcome, and contributors need to agree to a Contributor License Agreement (CLA). The project has adopted the Microsoft Open Source Code of Conduct. The tool is licensed under Azure Developer CLI Templates Trust Notice.

For similar tasks

oaic

oaic is the core software repository for Open AI Cellular (OAIC). It documents installation, a quick start guide, and usage. The repository contains submodules, and building the core documentation requires Sphinx with the Read the Docs theme. The built documentation is written to the 'docs/build/html' directory.

docs

This repository contains documentation for a tool called Mintlify. It provides instructions on how to install Mintlify locally and test changes. Additionally, it includes information on redirecting from an old Netlify site to a new one using a toml file configuration. The documentation is built using Mintlify.

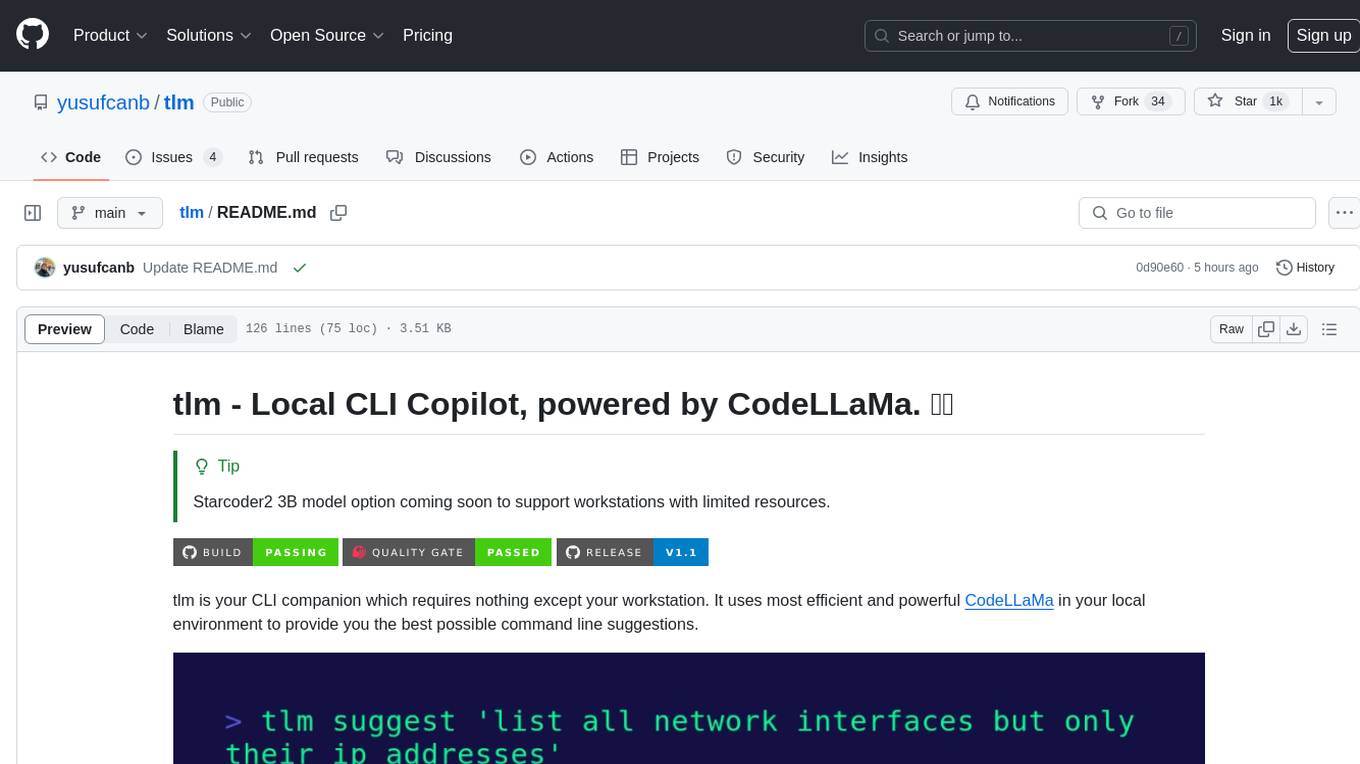

tlm

tlm is a local CLI copilot tool powered by CodeLLaMa, providing efficient command line suggestions without the need for an API key or internet connection. It works on macOS, Linux, and Windows, with automatic shell detection for Powershell, Bash, and Zsh. The tool offers one-liner generation and command explanation, and can be installed via an installation script or using Go Install. Ollama is required to download necessary models, and the tool can be easily deployed and configured. Contributors are welcome to enhance the tool's functionality.

arena-breakout-ai

Arena Breakout AI is a repository that offers detailed information and resources for downloading, installing, and using the ExCheats tool. Users can access premium plan functionalities and ensure the safety of their Windows system. The tool supports various Windows systems, including Windows 8, 8.1, 10, and 11 (x32/64). To get started, users need to visit the provided website, navigate to the cheats catalog, select CheatSquad Loader 3.5 for any games, download the tool, open the archive, and choose the game to continue.

perplexity-mcp

Perplexity-mcp is a Model Context Protocol (MCP) server that provides web search functionality using Perplexity AI's API. It works with the Anthropic Claude desktop client. The server allows users to search the web with specific queries and filter results by recency. It implements the perplexity_search_web tool, which takes a query as a required argument and can filter results by day, week, month, or year. Users need to set up environment variables, including the PERPLEXITY_API_KEY, to use the server. The tool can be installed via Smithery and requires UV for installation. It offers various models for different contexts and can be added as an MCP server in Cursor or Claude Desktop configurations.

modelence

Modelence is an all-in-one TypeScript framework for startups shipping production apps, aiming to eliminate boilerplate for standard web app features. It provides authentication, database setup, cron jobs, AI observability, and email functionalities. Modelence requires Node.js 20.20 or higher. Developers can create projects, install dependencies, and start the development server quickly. For local development, contributors can clone the repository, install dependencies, build the package, and test changes in a real application. Modelence offers examples for further guidance.

dream-team

Build your dream team with Autogen is a repository that leverages Microsoft Autogen 0.4, Azure OpenAI, and Streamlit to create an end-to-end multi-agent application. It provides an advanced multi-agent framework based on Magentic One, with features such as a friendly UI, single-line deployment, secure code execution, managed identities, and observability & debugging tools. Users can deploy Azure resources and the app with simple commands, work locally with virtual environments, install dependencies, update configurations, and run the application. The repository also offers resources for learning more about building applications with Autogen.

For similar jobs

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

ai-on-gke

This repository contains assets related to AI/ML workloads on Google Kubernetes Engine (GKE). Run optimized AI/ML workloads with GKE platform orchestration capabilities. A robust AI/ML platform considers the following layers:
* Infrastructure orchestration that supports GPUs and TPUs for training and serving workloads at scale
* Flexible integration with distributed computing and data processing frameworks
* Support for multiple teams on the same infrastructure to maximize utilization of resources

tidb

TiDB is an open-source distributed SQL database that supports Hybrid Transactional and Analytical Processing (HTAP) workloads. It is MySQL compatible and features horizontal scalability, strong consistency, and high availability.

nvidia_gpu_exporter

Nvidia GPU exporter for Prometheus that uses the `nvidia-smi` binary to gather metrics.

tracecat

Tracecat is an open-source automation platform for security teams. It's designed to be simple but powerful, with a focus on AI features and a practitioner-obsessed UI/UX. Tracecat can be used to automate a variety of tasks, including phishing email investigation, evidence collection, and remediation plan generation.

openinference

OpenInference is a set of conventions and plugins that complement OpenTelemetry to enable tracing of AI applications. It provides a way to capture and analyze the performance and behavior of AI models, including their interactions with other components of the application. OpenInference is designed to be language-agnostic and can be used with any OpenTelemetry-compatible backend. It includes a set of instrumentations for popular machine learning SDKs and frameworks, making it easy to add tracing to your AI applications.

BricksLLM

BricksLLM is a cloud-native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise-level infrastructure that can power any LLM production use case. Here are some use cases for BricksLLM:
* Set LLM usage limits for users on different pricing tiers
* Track LLM usage on a per-user and per-organization basis
* Block or redact requests containing PII
* Improve LLM reliability with failovers, retries and caching
* Distribute API keys with rate limits and cost limits for internal development/production use cases
* Distribute API keys with rate limits and cost limits for students

kong

Kong, or Kong API Gateway, is a cloud-native, platform-agnostic, scalable API Gateway distinguished for its high performance and extensibility via plugins. It also provides advanced AI capabilities with multi-LLM support. By providing functionality for proxying, routing, load balancing, health checking, authentication (and more), Kong serves as the central layer for orchestrating microservices or conventional API traffic with ease. Kong runs natively on Kubernetes thanks to its official Kubernetes Ingress Controller.