poke-env

A Python interface for training reinforcement learning bots to battle on Pokemon Showdown

Stars: 306

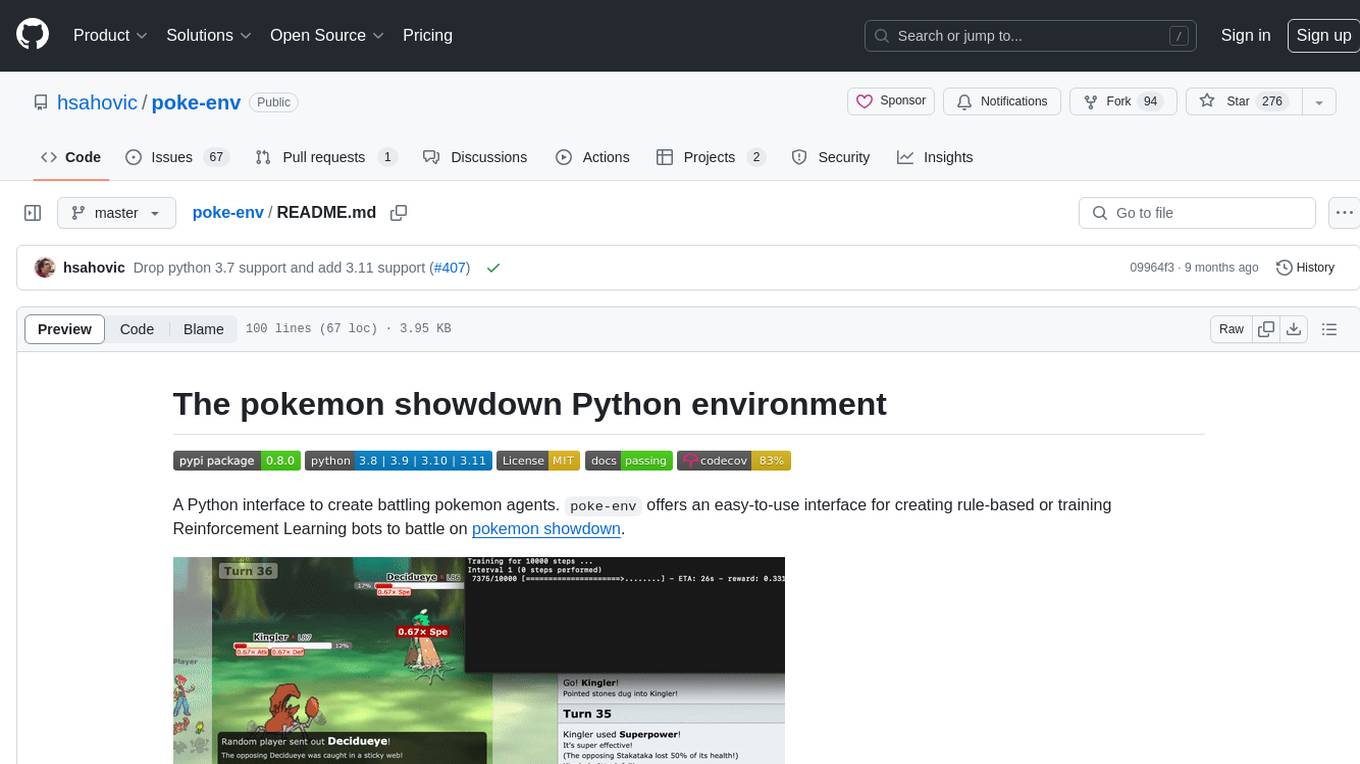

A Python interface for creating battling Pokemon agents, 'poke-env' allows users to develop rule-based or Reinforcement Learning bots to battle on Pokemon Showdown. The tool provides an easy-to-use interface for agent creation and offers documentation, examples, and starting code for beginners. Users can install 'poke-env' via pip and set up a development server for testing. The project is inspired by an artificial intelligence class project and relies on data from Smogon forums' RMT section. It is licensed under MIT and can be cited using a provided BibTeX entry.

README:

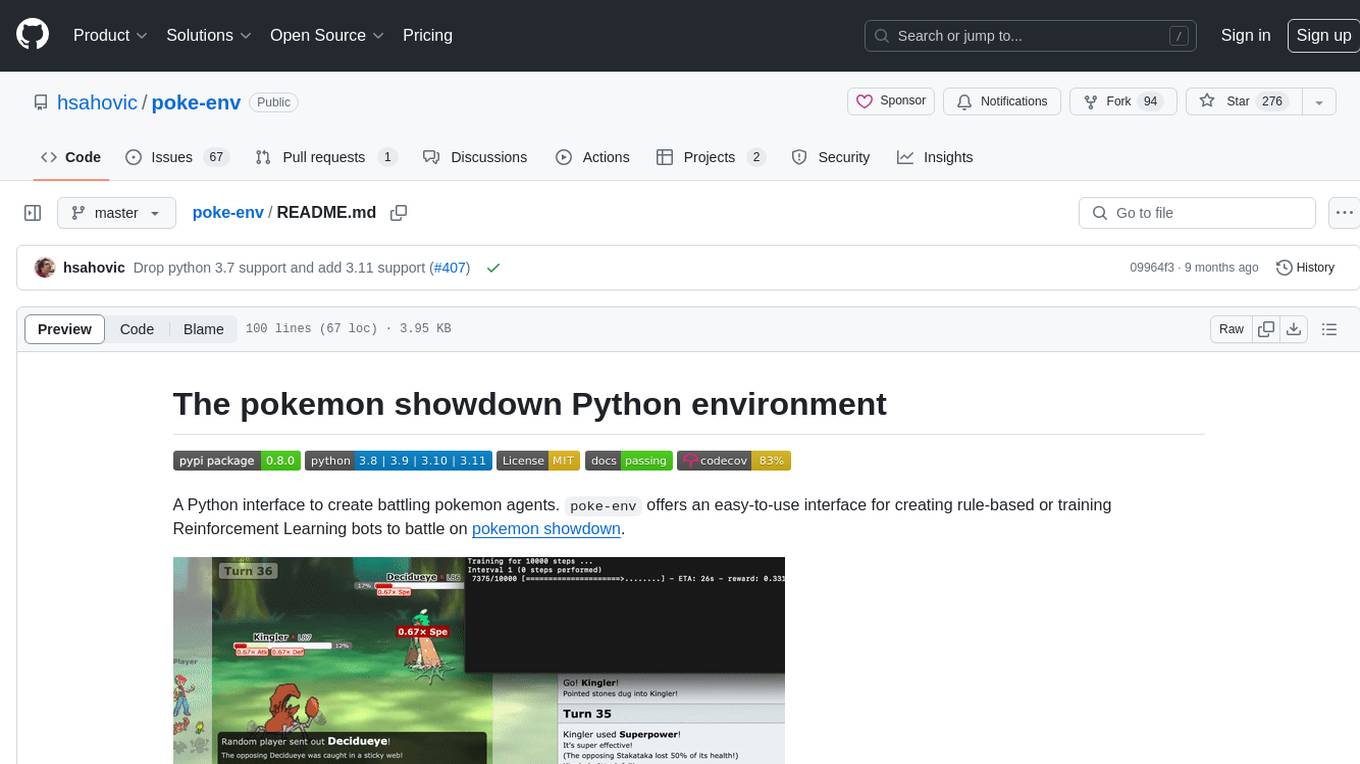

A Python interface to create battling Pokemon agents. poke-env offers an easy-to-use interface for creating rule-based bots or training reinforcement learning bots to battle on Pokemon Showdown.

Agents are instances of Python classes inheriting from Player. Here is what your first agent could look like:

    from poke_env.player import Player


    class YourFirstAgent(Player):
        def choose_move(self, battle):
            for move in battle.available_moves:
                if move.base_power > 90:
                    # A powerful move! Let's use it
                    return self.create_order(move)

            # No available move? Let's switch then!
            for switch in battle.available_switches:
                if switch.current_hp_fraction > battle.active_pokemon.current_hp_fraction:
                    # This other pokemon has more HP left... Let's switch it in?
                    return self.create_order(switch)

            # Not sure what to do?
            return self.choose_random_move(battle)

To get started, take a look at our documentation!

Documentation, detailed examples and starting code can be found on readthedocs.
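
The decision rule of the first agent above can be tried out without a server by running it against stand-in objects. The sketch below is only an illustration: `StubMove`, `StubPokemon`, `StubBattle` and `choose_action` are hypothetical names, not part of poke-env, and they model only the attributes the agent reads (`base_power`, `current_hp_fraction`, `available_moves`, `available_switches`, `active_pokemon`).

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class StubMove:
    """Hypothetical stand-in for a move; only base_power is modelled."""
    name: str
    base_power: int


@dataclass
class StubPokemon:
    """Hypothetical stand-in for a pokemon; only the HP fraction is modelled."""
    name: str
    current_hp_fraction: float


@dataclass
class StubBattle:
    """Hypothetical stand-in exposing the three attributes the agent reads."""
    active_pokemon: StubPokemon
    available_moves: List[StubMove] = field(default_factory=list)
    available_switches: List[StubPokemon] = field(default_factory=list)


def choose_action(battle: StubBattle) -> Tuple[str, str]:
    """Mirror the first agent's rule and return (kind, name)."""
    # 1. Use the first move with base power above 90.
    for move in battle.available_moves:
        if move.base_power > 90:
            return ("move", move.name)
    # 2. Otherwise switch to the first pokemon with more HP left than the active one.
    for switch in battle.available_switches:
        if switch.current_hp_fraction > battle.active_pokemon.current_hp_fraction:
            return ("switch", switch.name)
    # 3. Otherwise fall back (the real agent calls choose_random_move here).
    return ("random", "")


battle = StubBattle(
    active_pokemon=StubPokemon("pikachu", 0.3),
    available_moves=[StubMove("quickattack", 40), StubMove("thunder", 110)],
    available_switches=[StubPokemon("snorlax", 1.0)],
)
print(choose_action(battle))  # ('move', 'thunder')
```

Because the rule checks moves before switches, a strong move is always preferred even when the active pokemon is low on HP; reordering the two loops would give a more defensive agent.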

This project requires Python >= 3.9 and a Pokemon Showdown server.

    pip install poke-env
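
A quick way to confirm the requirements above is a small check script. This is a minimal sketch, assuming the package is importable under the name `poke_env`; `check_environment` is a hypothetical helper, not something poke-env provides.

```python
import importlib.util
import sys

MIN_PYTHON = (3, 9)  # minimum version stated in the requirements above


def check_environment() -> dict:
    """Report whether the interpreter is recent enough and poke_env is importable."""
    return {
        # sys.version_info compares element-wise against a (major, minor) tuple.
        "python_ok": sys.version_info >= MIN_PYTHON,
        # find_spec returns None when a top-level module cannot be found.
        "poke_env_installed": importlib.util.find_spec("poke_env") is not None,
    }


print(check_environment())
```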

You can use Smogon's server to try out your agents against humans, but running your own development server is strongly recommended. In particular, start the local server with the --no-security flag, which turns off most rate limiting and throttling. Please refer to the docs for detailed setup instructions.

    git clone https://github.com/smogon/pokemon-showdown.git
    cd pokemon-showdown
    npm install
    cp config/config-example.js config/config.js
    node pokemon-showdown start --no-security
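
Before launching agents, it can help to confirm that the local server is accepting connections. The sketch below is an assumption-laden illustration: `is_server_up` is a hypothetical helper, and the default of `localhost:8000` assumes the port was left unchanged in `config/config.js`; adjust it if your configuration differs.

```python
import socket


def is_server_up(host: str = "localhost", port: int = 8000, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        # create_connection raises OSError (refused, timed out, unreachable) on failure.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


# Assumed default address of a local development server.
print(is_server_up())
```

This only checks that something is listening on the port; it does not verify that the listener is actually a Pokemon Showdown server.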

You can also clone the latest master version with:

    git clone https://github.com/hsahovic/poke-env.git

Dependencies and development dependencies can then be installed with:

    pip install -r requirements.txt
    pip install -r requirements-dev.txt

This project is a follow-up to a group project from an artificial intelligence class at Ecole Polytechnique.

You can find the original repository here. It is partially inspired by the showdown-battle-bot project. Of course, none of these would have been possible without Pokemon Showdown.

Team data comes from Smogon forums' RMT section.

Data files are adapted versions of the JS data files of Pokemon Showdown.

    @misc{poke_env,
        author = {Haris Sahovic},
        title = {Poke-env: pokemon AI in python},
        url = {https://github.com/hsahovic/poke-env}
    }

Alternative AI tools for poke-env

Similar Open Source Tools

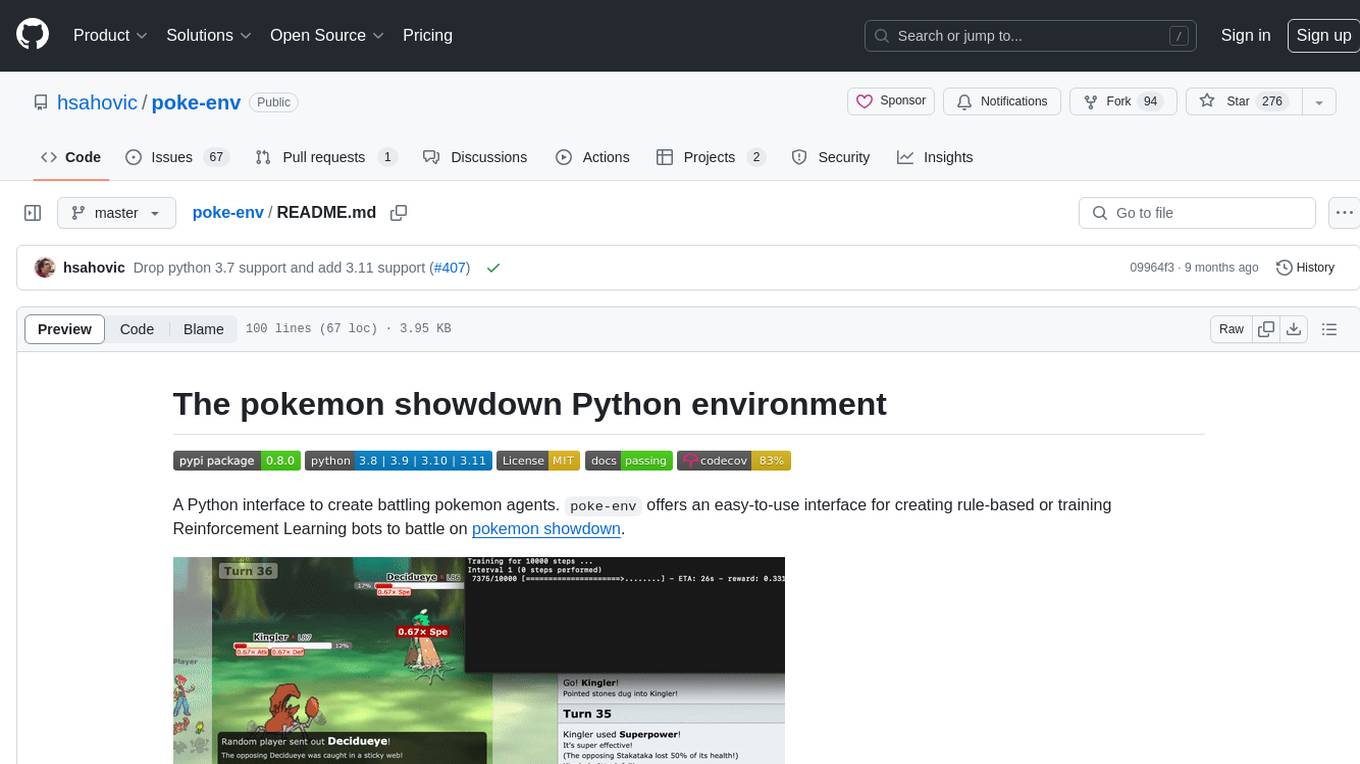

minecraft-mcp-server

Minecraft MCP Server is a bot powered by large language models and Mineflayer API. It uses the Model Context Protocol (MCP) to enable models like Claude to control a Minecraft character. The bot allows users to interact with Minecraft through commands and chat messages, facilitating tasks such as movement, inventory management, block interaction, entity interaction, and more. Users can also upload images of buildings and ask the bot to build them. The tool is designed to work with Claude Desktop and requires specific configurations for Minecraft and MCP clients. Contributions to the project, including refactoring, testing, documentation, and new functionality, are welcome.

vim-ollama

The 'vim-ollama' plugin for Vim adds Copilot-like code completion support using Ollama as a backend, enabling intelligent AI-based code completion and integrated chat support for code reviews. It does not rely on cloud services, preserving user privacy. The plugin communicates with Ollama via Python scripts for code completion and interactive chat, supporting Vim only. Users can configure LLM models for code completion tasks and interactive conversations, with detailed installation and usage instructions provided in the README.

gpt-engineer

GPT-Engineer is a tool that lets you specify software in natural language, sit back and watch as an AI writes and executes the code, and then ask the AI to implement improvements.

torchchat

torchchat is a codebase showcasing the ability to run large language models (LLMs) seamlessly. It allows running LLMs using Python in various environments such as desktop, server, iOS, and Android. The tool supports running models via PyTorch, chatting, generating text, running chat in the browser, and running models on desktop/server without Python. It also provides features like AOT Inductor for faster execution, running in C++ using the runner, and deploying and running on iOS and Android. The tool supports popular hardware and OS including Linux, Mac OS, Android, and iOS, with various data types and execution modes available.

robocorp

Robocorp is a platform that allows users to create, deploy, and operate Python automations and AI actions. It provides an easy way to extend the capabilities of AI agents, assistants, and copilots with custom actions written in Python. Users can create and deploy tools, skills, loaders, and plugins that securely connect any AI Assistant platform to their data and applications. The Robocorp Action Server makes Python scripts compatible with ChatGPT and LangChain by automatically creating and exposing an API based on function declaration, type hints, and docstrings. It simplifies the process of developing and deploying AI actions, enabling users to interact with AI frameworks effortlessly.

actions

Sema4.ai Action Server is a tool that allows users to build semantic actions in Python to connect AI agents with real-world applications. It enables users to create custom actions, skills, loaders, and plugins that securely connect any AI Assistant platform to data and applications. The tool automatically creates and exposes an API based on function declaration, type hints, and docstrings by adding '@action' to Python scripts. It provides an end-to-end stack supporting various connections between AI and user's apps and data, offering ease of use, security, and scalability.

browser

Lightpanda Browser is an open-source headless browser designed for fast web automation, AI agents, LLM training, scraping, and testing. It features ultra-low memory footprint, exceptionally fast execution, and compatibility with Playwright and Puppeteer through CDP. Built for performance, Lightpanda offers Javascript execution, support for Web APIs, and is optimized for minimal memory usage. It is a modern solution for web scraping and automation tasks, providing a lightweight alternative to traditional browsers like Chrome.

bia-bob

BIA `bob` is a Jupyter-based assistant for interacting with data using large language models to generate Python code. It can utilize OpenAI's ChatGPT, Google's Gemini, Helmholtz' blablador, and Ollama. Users need respective accounts to access these services. Bob can assist with code generation, bug fixing, code documentation, and GPU acceleration, and offers a no-code custom Jupyter Kernel. It provides example notebooks for various tasks like bio-image analysis, model selection, and bug fixing. Installation is recommended via a conda/mamba environment. Custom endpoints like blablador and ollama can be used. Google Cloud AI API integration is also supported. The tool is extensible, so Python libraries can enhance Bob's functionality.

1backend

1Backend is a flexible and scalable platform designed for running AI models on private servers and handling high-concurrency workloads. It provides a ChatGPT-like interface for users and a network-accessible API for machines, serving as a general-purpose backend framework. The platform offers on-premise ChatGPT alternatives, a microservices-first web framework, out-of-the-box services like file uploads and user management, infrastructure simplification acting as a container orchestrator, reverse proxy, multi-database support with its own ORM, and AI integration with platforms like LlamaCpp and StableDiffusion.

flake

Nixified.ai aims to simplify and provide access to a vast repository of AI executable code that would otherwise be challenging to run independently due to package management and complexity issues. The tool primarily runs on NixOS and Linux, with compatibility on Windows through NixOS-WSL. It can automatically utilize the GPU of the Windows host by setting LD_LIBRARY_PATH in the wrapper script. Users can explore the tool's offerings through the nix repl, with the main outputs including ComfyUI, a modular node-based Stable Diffusion WebUI, and deprecated packages like InvokeAI and textgen. To enable binary cache and save time building packages, users need to trust nixified-ai's binary cache by adding specific lines to their system configuration files.

superflows

Superflows is an open-source alternative to OpenAI's Assistant API. It allows developers to easily add an AI assistant to their software products, enabling users to ask questions in natural language and receive answers or have tasks completed by making API calls. Superflows can analyze data, create plots, answer questions based on static knowledge, and even write code. It features a developer dashboard for configuration and testing, stateful streaming API, UI components, and support for multiple LLMs. Superflows can be set up in the cloud or self-hosted, and it provides comprehensive documentation and support.

labelbox-python

Labelbox is a data-centric AI platform for enterprises to develop, optimize, and use AI to solve problems and power new products and services. Enterprises use Labelbox to curate data, generate high-quality human feedback data for computer vision and LLMs, evaluate model performance, and automate tasks by combining AI and human-centric workflows. The academic & research community uses Labelbox for cutting-edge AI research.

Sentient

Sentient is a personal, private, and interactive AI companion developed by Existence. The project aims to build a completely private AI companion that is deeply personalized and context-aware of the user. It utilizes automation and privacy to create a true companion for humans. The tool is designed to remember information about the user and use it to respond to queries and perform various actions. Sentient features a local and private environment, MBTI personality test, integrations with LinkedIn, Reddit, and more, self-managed graph memory, web search capabilities, multi-chat functionality, and auto-updates for the app. The project is built using technologies like ElectronJS, Next.js, TailwindCSS, FastAPI, Neo4j, and various APIs.

opencode

Opencode is an AI coding agent designed for the terminal. It is a tool that allows users to interact with AI models for coding tasks in a terminal-based environment. Opencode is open source, provider-agnostic, and focuses on a terminal user interface (TUI) for coding. It offers features such as client/server architecture, support for various AI models, and a strong emphasis on community contributions and feedback.

SWELancer-Benchmark

SWE-Lancer is a benchmark repository containing datasets and code for the paper 'SWE-Lancer: Can Frontier LLMs Earn $1 Million from Real-World Freelance Software Engineering?'. It provides instructions for package management, building Docker images, configuring environment variables, and running evaluations. Users can use this tool to assess the performance of language models in real-world freelance software engineering tasks.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models, from images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features:

* Self-contained, with no need for a DBMS or cloud service.
* OpenAPI interface, easy to integrate with existing infrastructure (e.g. Cloud IDE).
* Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.