ai_wiki

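《AI全栈算法笔记》 (AI Full-Stack Algorithm Notes): documents solution strategies and key points for engineering practice problems, shares practical case studies, and tracks frontier technology developments. It spans full-stack AI knowledge, covering large models, programming, machine learning, deep learning, reinforcement learning, graph neural networks, speech recognition, NLP, and image recognition.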

Stars: 346

This repository provides a comprehensive collection of resources, open-source tools, and knowledge related to quantitative analysis. It serves as a valuable knowledge base and navigation guide for individuals interested in various aspects of quantitative investing, including platforms, programming languages, mathematical foundations, machine learning, deep learning, and practical applications. The repository is well-structured and organized, with clear sections covering different topics. It includes resources on system platforms, programming codes, mathematical foundations, algorithm principles, machine learning, deep learning, reinforcement learning, graph networks, model deployment, and practical applications. Additionally, there are dedicated sections on quantitative trading and investment, as well as large models. The repository is actively maintained and updated, ensuring that users have access to the latest information and resources.

README:

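If you like this project, or want to follow its updates, please give it a star (the small star at the top right of the page). Sharing it with the community is welcome!

Explore AI engineering practice and quickly master full-stack technical knowledge

Welcome to 《AI驯龙笔记》! This project shares problem-solving strategies and key points from engineering practice, tracks frontier technology developments, and covers full-stack AI knowledge, helping developers learn AI technology efficiently and improve their ability to apply it.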

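- Practice-oriented: every topic is set against a real engineering problem and provides a complete solution strategy with key code.

- Comprehensive knowledge system: from basic to advanced, covering core areas such as programming, algorithms, machine learning, deep learning, reinforcement learning, large models, and multimodal models.

- Case-driven: selected real-world cases with in-depth analysis of the difficulties and best practices in AI engineering.

- Technology tracking: keeps up with the frontier and shares the latest tools, frameworks, and application methods.

- Modular structure: clearly separated topics make it easy to look things up and learn quickly.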

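Knowledge Planet (知识星球)

🔥 As low as 0.1 yuan per day | Exclusive crash courses | Painless learning courses | 📺 Video tutorials |

Open-source pitfall guide | 3-minute video paper speed-reads | Library |

One of the lowest-priced quant-focused Knowledge Planet groups on the web | Free refund within 3 days if not satisfied

WeChat official account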

✨AI驯龙笔记:

- Github: https://github.com/charliedream1/ai_wiki

- Gitee (mirror in China): https://gitee.com/charlie1/ai_wiki.git

✨AI股票操盘手:

- Github: https://github.com/charliedream1/ai_quant_trade

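- Gitee (mirror in China): https://gitee.com/charlie1/ai_quant_trade.git

- Overview: a one-stop platform. It includes stock market knowledge, strategy examples, factor mining, traditional strategies, machine learning, deep learning, reinforcement learning, graph networks, high-frequency trading, C++ deployment, and JoinQuant (聚宽) example code, making it convenient for learning, simulation, and live trading.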

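- System platforms and websites: shares experience in setting up development environments and efficient toolchains.

- Program code: selected code snippets and project templates, covering multiple programming languages and frameworks.

- Databases: from SQL optimization to NoSQL databases, and on to the design and use of vector and graph databases, addressing efficient storage and querying.

Highlight: provides complete code solutions that help quickly resolve common problems in development.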

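- Covers classic and modern algorithms, with attention to performance and optimization in practical applications.

- Provides complete tutorials on data structures, efficient algorithms, and distributed computing.

Highlight: analyzes, through case studies, how algorithms are applied in complex scenarios such as recommender systems and search optimization.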

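- Mathematical foundations: practical explanations of core topics such as linear algebra and probability and statistics.

- Machine learning: algorithm implementations from supervised to unsupervised learning.

- Deep learning: principles of neural networks and optimization strategies.

- Reinforcement learning: classic and novel applications, including Q-learning and deep reinforcement learning.

- Graph networks: frontier case studies on graph embeddings and graph convolutional networks.

Highlight: goes beyond theory, supplementing it with tools and code implementations close to real engineering needs.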

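- Image recognition: key techniques for object detection, image segmentation, and image generation.

- NLP text processing: hands-on case studies from pretrained language models to self-supervised learning.

- Audio: speech recognition, audio generation, and enhancement techniques.

- Time series: solutions for stock prediction and time-series analysis.

Highlight: practical case studies span multiple domains, providing cross-domain application references.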

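- LLM (large language models): strategies for training and optimizing large language models.

- Multimodal: integration and application of multimodal models over text, images, and audio.

- Prompt engineering: explores methods for designing effective prompts.

- RAG (retrieval-augmented generation): building intelligent models capable of real-time information lookup.

- Agent: implementing autonomous agents based on large models.

Highlight: shows how to apply large-model capabilities in production systems to improve automation efficiency.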

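- GPU hardware: optimizing GPU usage to improve training efficiency.

- Data interfaces: designing efficient training data pipelines that support large-scale data processing.

Highlight: optimizes compute resources and data-flow management to reduce training costs.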

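- Summarizes the key and difficult points from day-to-day study, distilled into efficient study guides.

- Accompanying mind maps to aid quick memorization and review.

Highlight: helps developers distill the key points from a vast body of knowledge, saving time.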

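- Patents and copyrights: records innovations from the project and granted patents.

- Professional certificates: a summary of technical certifications obtained during career development.

- Career reflections: shares experience in career planning and technical growth.

Highlight: offers a useful reference for career development and advice on building technical depth.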

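- Blog and Knowledge Planet resources: gathers high-quality resources from around the internet.

- Music and life: a bit of relaxation and entertainment amid busy development work.

Highlight: extends knowledge beyond technology, helping developers keep a healthy rhythm of life.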

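- Choose a topic: use the table of contents to quickly locate modules of interest.

- Read the content: each module provides detailed case studies and code for direct reference.

- Apply to projects: apply the knowledge and techniques learned to real engineering work to solve real problems.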

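Your support is what keeps me going. Even 0.1 yuan makes me happy. Thank you for your tips and support (^o^)/

- You are welcome to start a discussion in Github Discussions.

- You are welcome to submit problems in Github Issues.

- Join the Knowledge Planet group for more technical support.

Please see the FAQ in the documentation.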

@misc{ai_quant_trade,

author={Yi Li},

title={ai_quant_trade},

year={2022},

publisher = {GitHub},

journal = {GitHub repository},

howpublished = {\url{https://github.com/charliedream1/ai_quant_trade}},

}

Alternative AI tools for ai_wiki

Similar Open Source Tools

bella-openapi

Bella OpenAPI is an API gateway that provides rich AI capabilities, similar to openrouter. In addition to chat completion, it offers text embedding, ASR, TTS, image-to-image, and text-to-image capabilities, and it integrates billing, rate limiting, resource management, metadata management, and a unified login service. All integrated capabilities have been validated in large-scale production environments for stability and reliability. It offers a Java-friendly technology stack, a convenient cloud-based experience service, and Dockerized deployment.

chatwiki

ChatWiki is an open-source knowledge base AI question-answering system. It is built on large language model (LLM) and retrieval-augmented generation (RAG) technologies, and provides out-of-the-box data processing and model invocation capabilities that help enterprises quickly build their own knowledge base AI question-answering systems. It offers a dedicated AI question-answering system, easy model integration, data preprocessing, a simple user interface, and adaptability to different business scenarios.

ai-money-maker-handbook

The 'ai-money-maker-handbook' repository is a collection of information on using AI to earn extra income through side jobs. It includes strategies, resources, and verified methods for making money with AI technology in various fields. The repository provides insights on leveraging AI tools, platforms, and techniques to generate additional revenue streams in the AI era.

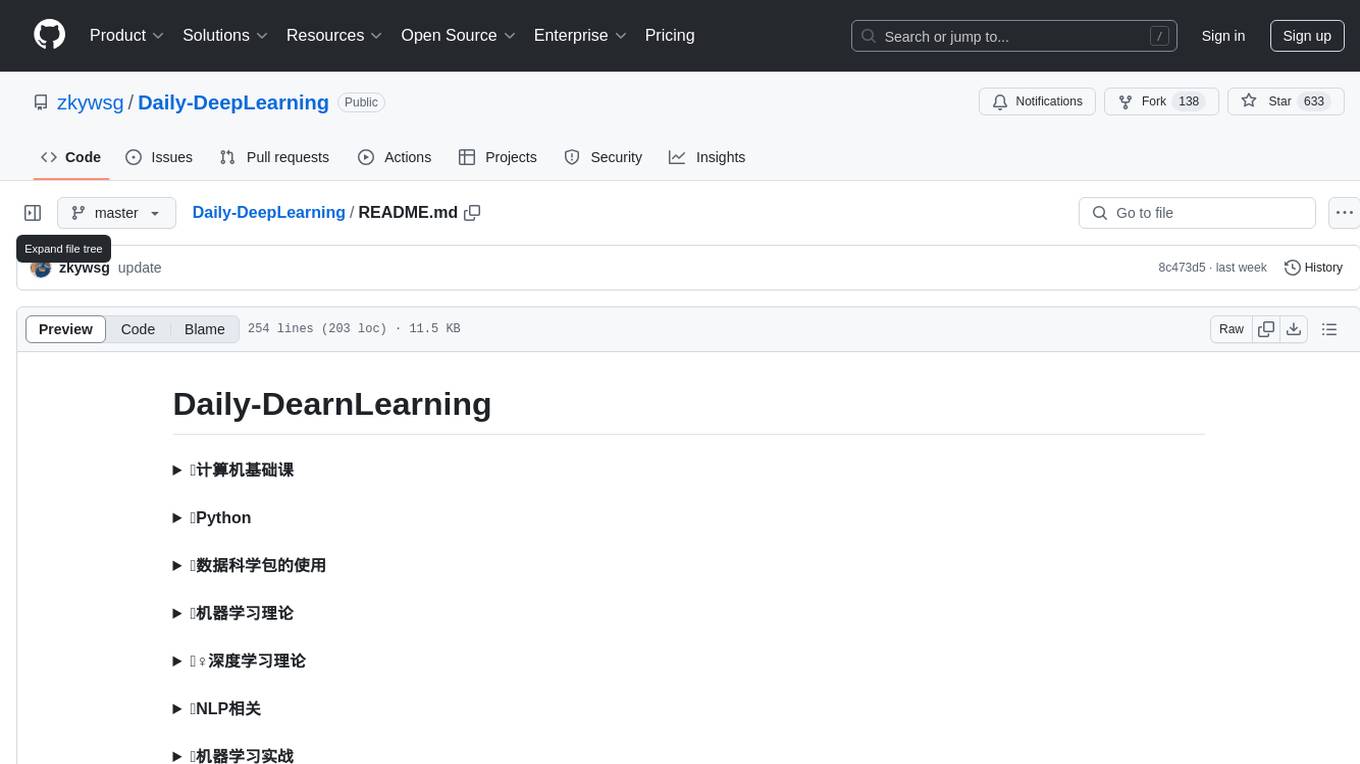

Daily-DeepLearning

Daily-DeepLearning is a repository that covers various computer science topics such as data structures, operating systems, computer networks, Python programming, data science packages like numpy, pandas, matplotlib, machine learning theories, deep learning theories, NLP concepts, machine learning practical applications, deep learning practical applications, and big data technologies like Hadoop and Hive. It also includes coding exercises related to '剑指offer'. The repository provides detailed explanations and examples for each topic, making it a comprehensive resource for learning and practicing different aspects of computer science and data-related fields.

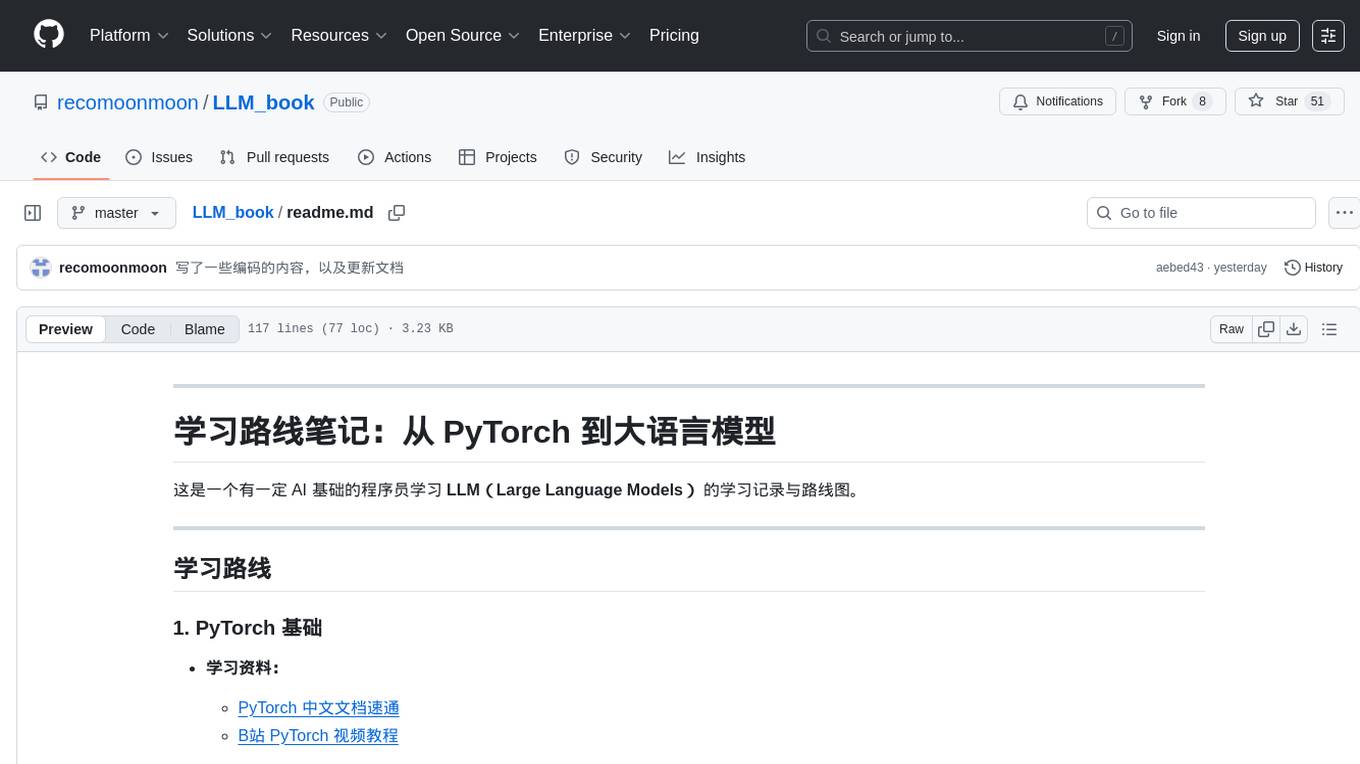

LLM_book

LLM_book is a learning record and roadmap for programmers with a certain AI foundation to learn Large Language Models (LLM). It covers topics such as PyTorch basics, Transformer architecture, langchain basics, foundational concepts of large models, fine-tuning methods, RAG (Retrieval-Augmented Generation), and building intelligent agents using LLM. The repository provides learning materials, code implementations, and documentation to help users progress in understanding and implementing LLM technologies.

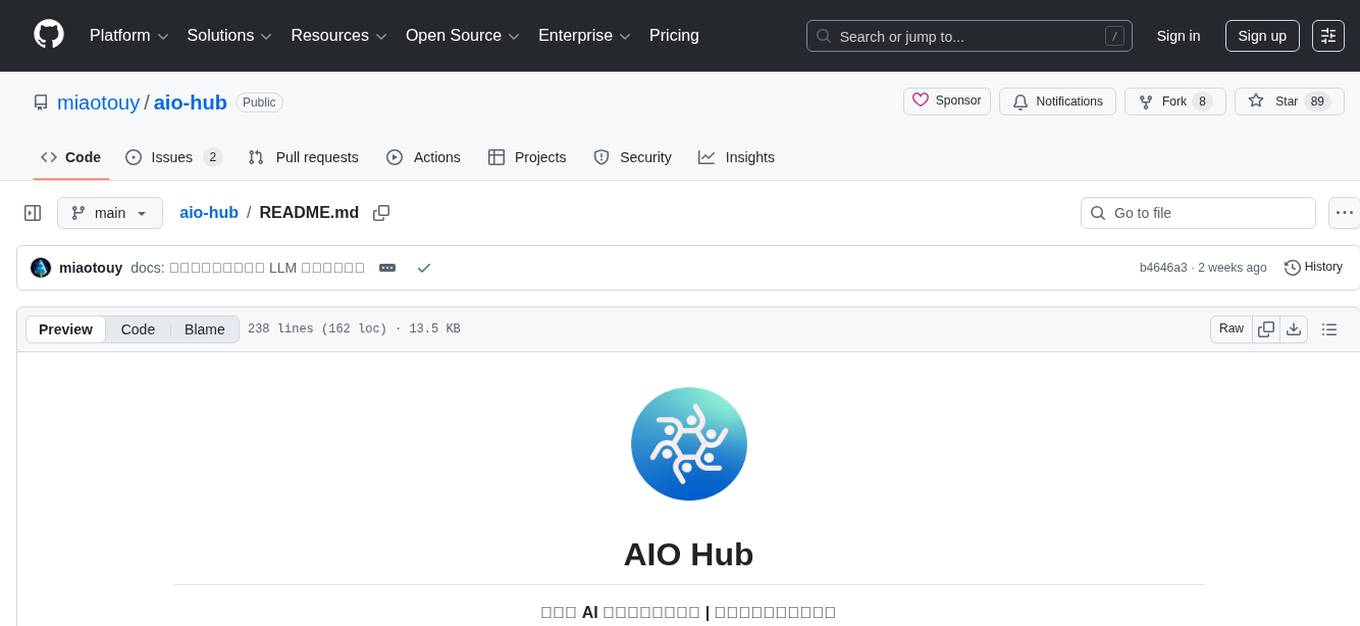

aio-hub

AIO Hub is a cross-platform AI hub built on Tauri + Vue 3 + TypeScript, aiming to provide developers and creators with precise LLM control experience and efficient toolchain. It features a chat function designed for complex tasks and deep exploration, a unified context pipeline for controlling every token sent to the model, interactive AI buttons, dual-view management for non-linear conversation mapping, open ecosystem compatibility with various AI models, and a rich text renderer for LLM output. The tool also includes features for media workstation, developer productivity, system and asset management, regex applier, collaboration enhancement between developers and AI, and more.

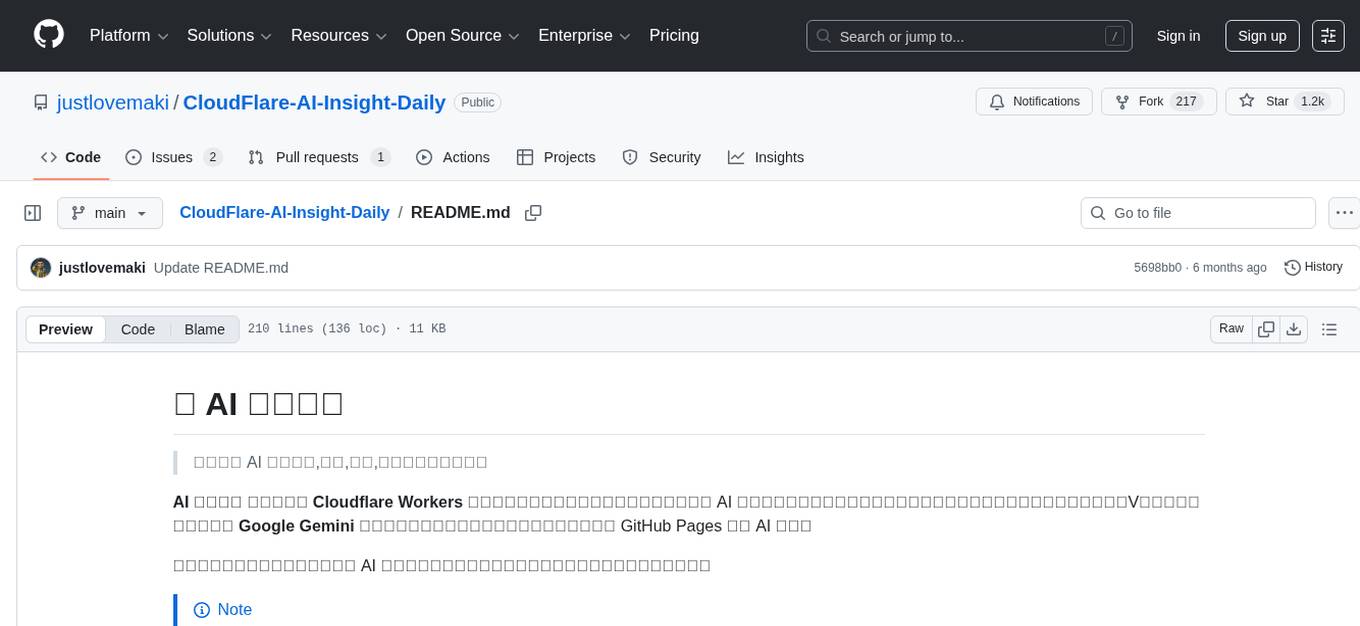

CloudFlare-AI-Insight-Daily

CloudFlare-AI-Insight-Daily is a content aggregation and generation platform powered by Cloudflare Workers. It curates the latest updates in the AI field, including industry news, popular open-source projects, cutting-edge academic papers, and tech influencers' social media comments. The platform utilizes the Google Gemini model for intelligent processing and summary generation, automatically publishing AI daily reports on GitHub Pages. Its goal is to be your efficient assistant in staying ahead in the rapidly changing AI landscape and acquiring the most valuable information.

AI-fundermentals

AI Fundamentals is a comprehensive AI infrastructure learning resource collection, covering a complete technical stack from hardware basics to advanced applications. It includes GPU architecture and programming, CUDA development, large language models, AI system design, performance optimization, enterprise deployment, and more. The repository aims to provide a systematic learning path and practical guidance for AI engineers, architects, GPU programming developers, large model application developers, and technical researchers.

ChatGPT-airport-tizi-fanqiang

This repository provides a curated list of recommended "airport" proxy services for accessing ChatGPT and other AI tools while bypassing internet restrictions. The proxies are tested and verified to ensure reliability and stability. The readme includes detailed instructions on how to set up and use the proxies with various devices and platforms. Additionally, the repository offers advanced tutorials on upgrading to GPT-4/Plus, deploying a 24/7 ChatGPT WeChat bot server, and using Claude-3 securely and for free.

Flux-AI-Pro

Flux AI Pro - NanoBanana Edition is a high-performance, single-file AI image generation solution built on Cloudflare Workers. It integrates AI providers such as Pollinations.ai, Infip/Ghostbot, Aqua Server, Kinai API, and Airforce API to offer a serverless, fast, and feature-rich creative experience. It provides a seamless interface for generating high-quality AI art without complex server setups. The tool supports multiple languages, smart language detection, RTL support, an AI prompt generator, high-definition image generation, and local history storage with export/import functionality.

BigBanana-AI-Director

BigBanana AI Director is an industrial AI motion comic and video workbench platform that provides a one-stop solution for creating short dramas and comics. It utilizes a 'Script-to-Asset-to-Keyframe' workflow with advanced AI models to automate the process from script to final production, ensuring precise control over character consistency, scene continuity, and camera movements. The tool is designed to streamline the production process for creators, enabling efficient production from idea to finished product.

AcademicForge

Academic Forge is a collection of skills integrated for academic writing workflows. It provides a curated set of skills related to academic writing and research, allowing for precise skill calls, avoiding confusion between similar skills, maintaining focus on research workflows, and receiving timely updates from original authors. The forge integrates carefully selected skills covering various areas such as bioinformatics, clinical research, data analysis, scientific writing, laboratory automation, machine learning, databases, AI research, model architectures, fine-tuning, post-training, distributed training, optimization, inference, evaluation, agents, multimodal tasks, and machine learning paper writing. It is designed to streamline the academic writing and AI research processes by providing a cohesive and community-driven collection of skills.

LogChat

LogChat is an open-source and free AI chat client that supports various chat models and technologies such as ChatGPT, 讯飞星火, DeepSeek, LLM, TTS, STT, and Live2D. The tool provides a user-friendly interface designed using Qt Creator and can be used on Windows systems without any additional environment requirements. Users can interact with different AI models, perform voice synthesis and recognition, and customize Live2D character models. LogChat also offers features like language translation, AI platform integration, and menu items like screenshot editing, clock, and application launcher.

TypeTale

TypeTale is an AIGC creation software designed specifically for content creators, primarily used for novel promotion. It offers a wide range of AI capabilities such as image, video, and audio generation, as well as text processing and story extraction. The tool also provides workflow customization, AI assistant support, and a vast library of creative materials. With a user-friendly interface and system requirements compatible with Windows operating systems, TypeTale aims to streamline the content creation process for writers and creators.

For similar tasks

Azure-Analytics-and-AI-Engagement

The Azure-Analytics-and-AI-Engagement repository provides packaged Industry Scenario DREAM Demos with ARM templates (containing a demo web application, Power BI reports, Synapse resources, AML Notebooks, etc.) that can be deployed in a customer's subscription using the CAPE tool within a few hours. Partners can also deploy DREAM Demos in their own subscriptions using DPoC.

sorrentum

Sorrentum is an open-source project that aims to combine open-source development, startups, and brilliant students to build machine learning, AI, and Web3 / DeFi protocols geared towards finance and economics. The project provides opportunities for internships, research assistantships, and development grants, as well as the chance to work on cutting-edge problems, learn about startups, write academic papers, and get internships and full-time positions at companies working on Sorrentum applications.

tidb

TiDB is an open-source distributed SQL database that supports Hybrid Transactional and Analytical Processing (HTAP) workloads. It is MySQL compatible and features horizontal scalability, strong consistency, and high availability.

zep-python

Zep is an open-source platform for building and deploying large language model (LLM) applications. It provides a suite of tools and services that make it easy to integrate LLMs into your applications, including chat history memory, embedding, vector search, and data enrichment. Zep is designed to be scalable, reliable, and easy to use, making it a great choice for developers who want to build LLM-powered applications quickly and easily.

telemetry-airflow

This repository codifies the Airflow cluster that is deployed at workflow.telemetry.mozilla.org (behind SSO) and commonly referred to as "WTMO" or simply "Airflow". Some links relevant to users and developers of WTMO:

- The `dags` directory in this repository contains some custom DAG definitions

- Many of the DAGs registered with WTMO don't live in this repository, but are instead generated from ETL task definitions in bigquery-etl

- The Data SRE team maintains a WTMO Developer Guide (behind SSO)

mojo

Mojo is a new programming language that bridges the gap between research and production by combining Python syntax and ecosystem with systems programming and metaprogramming features. Mojo is still young, but it is designed to become a superset of Python over time.

pandas-ai

PandasAI is a Python library that makes it easy to ask questions to your data in natural language. It helps you to explore, clean, and analyze your data using generative AI.

databend

Databend is an open-source cloud data warehouse that serves as a cost-effective alternative to Snowflake. With its focus on fast query execution and data ingestion, it's designed for complex analysis of the world's largest datasets.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

agentcloud

AgentCloud is an open-source platform that enables companies to build and deploy private LLM chat apps, empowering teams to securely interact with their data. It comprises three main components: Agent Backend, Webapp, and Vector Proxy. To run this project locally, clone the repository, install Docker, and start the services. The project is licensed under the GNU Affero General Public License, version 3 only. Contributions and feedback are welcome from the community.

oss-fuzz-gen

This framework generates fuzz targets for real-world `C`/`C++` projects with various Large Language Models (LLM) and benchmarks them via the `OSS-Fuzz` platform. It manages to successfully leverage LLMs to generate valid fuzz targets (which generate non-zero coverage increase) for 160 C/C++ projects. The maximum line coverage increase is 29% from the existing human-written targets.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

Azure-Analytics-and-AI-Engagement

The Azure-Analytics-and-AI-Engagement repository provides packaged Industry Scenario DREAM Demos with ARM templates (containing a demo web application, Power BI reports, Synapse resources, AML Notebooks, etc.) that can be deployed in a customer's subscription using the CAPE tool within a few hours. Partners can also deploy DREAM Demos in their own subscriptions using DPoC.