Awesome-LLM-Agent-Optimization-Papers

This is the reading list for the survey "A Survey on the Optimization of LLM-based Agents". We will keep adding papers and improving the list. Any suggestions and PRs are welcome!

Stars: 190

This repository is a curated list of papers on optimizing LLM-based agents. The entries span fine-tuning and reinforcement learning methods, prompt-, memory-, and retrieval-based approaches, multi-agent collaboration, benchmarks, and applications in domains such as medicine, science, embodied intelligence, finance, and software engineering.

README:

This is the reading list for the survey "A Survey on the Optimization of Large Language Model-based Agents" (arXiv:2503.12434), which systematically explores optimization techniques for enhancing LLM-based agents. The survey categorizes existing work into parameter-driven optimization, parameter-free optimization, datasets and benchmarks, and real-world applications. We will keep adding papers and improving the list. Any suggestions and PRs are welcome!

- FireAct: Toward Language Agent Fine-Tuning (arXiv 2023) [paper] [code]

- AgentTuning: Enabling Generalized Agent Abilities for LLMs (ACL-findings 2024) [paper] [code]

- SMART: Synergistic Multi-Agent Framework with Trajectory Learning for Knowledge-Intensive Tasks (arXiv 2024) [paper] [code]

- Agent-FLAN: Designing Data and Methods of Effective Agent Tuning for Large Language Models (ACL-findings 2024) [paper] [code]

- Bootstrapping LLM-based Task-Oriented Dialogue Agents via Self-Talk (arXiv 2024) [paper]

- SaySelf: Teaching LLMs to Express Confidence with Self-Reflective Rationales (EMNLP 2024) [paper] [code]

- AgentGym: Evolving Large Language Model-based Agents across Diverse Environments (arXiv 2024) [paper] [code]

- Trial and Error: Exploration-Based Trajectory Optimization for LLM Agents (ACL 2024) [paper] [code]

- Agent LUMOS: Unified and Modular Training for Open-Source Language Agents (ACL 2024) [paper] [code]

- LLMs in the Imaginarium: Tool Learning through Simulated Trial and Error (ACL 2024) [paper] [code]

- NAT: Learning From Failure: Integrating Negative Examples when Fine-tuning LLMs as Agents (arXiv 2024) [paper] [code]

- OPTIMA: Optimizing Effectiveness and Efficiency for LLM-Based Multi-Agent System (arXiv 2024) [paper] [code]

- Enhancing the General Agent Capabilities of Low-Parameter LLMs through Tuning and Multi-Branch Reasoning (NAACL 2024) [paper] [code]

- AgentOhana: Design Unified Data and Training Pipeline for Effective Agent Learning (arXiv 2024) [paper] [code]

- ToRA: A Tool-Integrated Reasoning Agent for Mathematical Problem Solving (ICLR 2024) [paper] [code]

- ReST meets ReAct: Self-Improvement for Multi-Step Reasoning LLM Agent (arXiv 2023) [paper]

- AGENTBANK: Towards Generalized LLM Agents via Fine-Tuning on 50000+ Interaction Trajectories (ACL-findings 2024) [paper] [code]

- ADASWITCH: Adaptive Switching between Small and Large Agents for Effective Cloud-Local Collaborative Learning (EMNLP 2024) [paper]

- Watch Every Step! LLM Agent Learning via Iterative Step-Level Process Refinement (EMNLP 2024) [paper] [code]

- Re-ReST: Reflection-Reinforced Self-Training for Language Agents (EMNLP 2024) [paper] [code]

- Retrospex: Language Agent Meets Offline Reinforcement Learning Critic (EMNLP 2024) [paper] [code]

- ATM: Adversarial Tuning Multi-agent System Makes a Robust Retrieval-Augmented Generator (EMNLP 2024) [paper] [code]

- SWIFTSAGE: A Generative Agent with Fast and Slow Thinking for Complex Interactive Tasks (NeurIPS 2023) [paper] [code]

- NLRL: Natural Language Reinforcement Learning (arXiv 2024) [paper] [code]

- AGILE: A Novel Reinforcement Learning Framework of LLM Agents (NeurIPS 2024) [paper] [code]

- COEVOL: Constructing Better Responses for Instruction Finetuning through Multi-Agent Cooperation (arXiv 2024) [paper] [code]

- E2CL: Exploration-based Error Correction Learning for Embodied Agents (EMNLP-findings 2024) [paper] [code]

- STeCa: Step-level Trajectory Calibration for LLM Agent Learning (arXiv 2025) [paper] [code]

- Star-Agents: Automatic Data Optimization with LLM Agents for Instruction Tuning (NeurIPS 2024) [paper] [code]

- ATLaS: Agent Tuning via Learning Critical Steps (ACL 2025) [paper]

- Multi-Agent Design: Optimizing Agents with Better Prompts and Topologies (arXiv 2025) [paper]

- Agent Planning with World Knowledge Model (NeurIPS 2024) [paper] [code]

- Multiagent Finetuning: Self Improvement with Diverse Reasoning Chains (ICLR 2025) [paper] [code]

- Disentangling Reasoning Tokens and Boilerplate Tokens For Language Model Fine-tuning (ACL 2025) [paper]

- Rearchitecting LLMs (Manning Publications 2025) [book]

- CMAT: A Multi-Agent Collaboration Tuning Framework for Enhancing Small Language Models (arXiv 2024) [paper] [code]

- From Novice to Expert: LLM Agent Policy Optimization via Step-wise Reinforcement Learning (arXiv 2024) [paper]

- WebRL: Training LLM Web Agents via Self-Evolving Online Curriculum Reinforcement Learning (arXiv 2024) [paper] [code]

- SaySelf: Teaching LLMs to Express Confidence with Self-Reflective Rationales (EMNLP 2024) [paper] [code]

- AgentGym: Evolving Large Language Model-based Agents across Diverse Environments (arXiv 2024) [paper] [code]

- Coevolving with the Other You: Fine-Tuning LLM with Sequential Cooperative Multi-Agent Reinforcement Learning (arXiv 2024) [paper]

- GELI: Global Reward to Local Rewards: Multimodal-Guided Decomposition for Improving Dialogue Agents (EMNLP 2024) [paper]

- AGILE: A Novel Reinforcement Learning Framework of LLM Agents (NeurIPS 2024) [paper] [code]

- Agent Q: Advanced Reasoning and Learning for Autonomous AI Agents (arXiv) [paper]

- DMPO: Direct Multi-Turn Preference Optimization for Language Agents (EMNLP 2024) [paper] [code]

- Re-ReST: Reflection-Reinforced Self-Training for Language Agents (EMNLP 2024) [paper] [code]

- ATM: Adversarial Tuning Multi-agent System Makes a Robust Retrieval-Augmented Generator (EMNLP 2024) [paper] [code]

- OPTIMA: Optimizing Effectiveness and Efficiency for LLM-Based Multi-Agent System (arXiv 2024) [paper] [code]

- EPO: Hierarchical LLM Agents with Environment Preference Optimization (EMNLP 2024) [paper] [code]

- Watch Every Step! LLM Agent Learning via Iterative Step-Level Process Refinement (EMNLP 2024) [paper] [code]

- AMOR: A Recipe for Building Adaptable Modular Knowledge Agents Through Process Feedback (NeurIPS 2024) [paper] [code]

- SWEET-RL: Training Multi-Turn LLM Agents on Collaborative Reasoning Tasks (arXiv 2025) [paper] [code]

- Reinforcing Language Agents via Policy Optimization with Action Decomposition (NeurIPS 2024) [paper] [code]

- STeCa: Step-level Trajectory Calibration for LLM Agent Learning (arXiv 2025) [paper] [code]

- DITS: Efficient Multi-Agent System Training with Data Influence-Oriented Tree Search (arXiv 2025) [paper]

- EPO: Explicit Policy Optimization for Strategic Reasoning in LLMs via Reinforcement Learning (ACL 2025) [paper] [code]

- DAPO: Decoupled Clip and Dynamic Sampling Policy Optimization (arXiv 2025) [paper]

- MARFT: Multi-Agent Reinforcement Fine-Tuning (arXiv 2025) [paper] [code]

- ReFT: Reasoning with Reinforced Fine-Tuning (ACL 2024) [paper] [code]

- AgentGym: Evolving Large Language Model-based Agents across Diverse Environments (arXiv 2024) [paper] [code]

- AGILE: A Novel Reinforcement Learning Framework of LLM Agents (NeurIPS 2024) [paper] [code]

- Re-ReST: Reflection-Reinforced Self-Training for Language Agents (EMNLP 2024) [paper] [code]

- AMOR: A Recipe for Building Adaptable Modular Knowledge Agents Through Process Feedback (NeurIPS 2024) [paper] [code]

- Trial and Error: Exploration-Based Trajectory Optimization for LLM Agents (ACL 2024) [paper] [code]

- OPTIMA: Optimizing Effectiveness and Efficiency for LLM-Based Multi-Agent System (arXiv 2024) [paper] [code]

- Watch Every Step! LLM Agent Learning via Iterative Step-Level Process Refinement (EMNLP 2024) [paper] [code]

- Retrospex: Language Agent Meets Offline Reinforcement Learning Critic (EMNLP 2024) [paper] [code]

- ENVISION: Interactive Evolution: A Neural-Symbolic Self-Training Framework For Large Language Models (arXiv 2024) [paper] [code]

- DITS: Efficient Multi-Agent System Training with Data Influence-Oriented Tree Search (arXiv 2025) [paper]

- Optimus-1: Hybrid Multimodal Memory Empowered Agents Excel in Long-Horizon Tasks (NeurIPS 2024) [paper] [code]

- Agent Hospital: A Simulacrum of Hospital with Evolvable Medical Agents (arXiv 2024) [paper]

- ExpeL: LLM Agents Are Experiential Learners (AAAI 2024) [paper] [code]

- AutoManual: Constructing Instruction Manuals by LLM Agents via Interactive Environmental Learning (NeurIPS 2024) [paper] [code]

- AutoGuide: Automated Generation and Selection of Context-Aware Guidelines for Large Language Model Agents (NeurIPS 2024) [paper]

- Experiential Co-Learning of Software-Developing Agents (ACL 2024) [paper] [code]

- Reflexion: Language Agents with Verbal Reinforcement Learning (NeurIPS 2023) [paper] [code]

- QueryAgent: A Reliable and Efficient Reasoning Framework with Environmental Feedback based Self-Correction (ACL 2024) [paper] [code]

- Agent-Pro: Learning to Evolve via Policy-Level Reflection and Optimization (ACL 2024) [paper] [code]

- SAGE: Self-Evolving Agents with Reflective and Memory-Augmented Abilities (arXiv 2024) [paper]

- ReCon: Boosting LLM Agents with Recursive Contemplation for Effective Deception Handling (ACL-findings 2024) [paper]

- Symbolic Learning Enables Self-Evolving Agents (arXiv 2024) [paper] [code]

- COPPR: Reflective Multi-Agent Collaboration based on Large Language Models (NeurIPS 2024) [paper]

- METAREFLECTION: Learning Instructions for Language Agents using Past Reflections (EMNLP 2024) [paper] [code]

- InteRecAgent: Recommender AI Agent: Integrating Large Language Models for Interactive Recommendations (arXiv 2023) [paper] [code]

- NLRL: Natural Language Reinforcement Learning (arXiv 2024) [paper] [code]

- Chain-of-Experts: When LLMs Meet Complex Operation Research Problems (ICLR 2024) [paper] [code]

- Retroformer: Retrospective Large Language Agents with Policy Gradient Optimization (arXiv 2024) [paper]

- SELF-TUNING: Instructing LLMs to Effectively Acquire New Knowledge through Self-Teaching (arXiv 2024) [paper]

- OPRO: Large Language Models as Optimizers (ICLR 2024) [paper] [code]

- MPO: Boosting LLM Agents with Meta Plan Optimization (arXiv 2025) [paper]

- Middleware for LLMs: Tools Are Instrumental for Language Agents in Complex Environments (EMNLP 2024) [paper] [code]

- AVATAR: Optimizing LLM Agents for Tool-Assisted Knowledge Retrieval (NeurIPS 2024) [paper] [code]

- AUTOACT: Automatic Agent Learning from Scratch for QA via Self-Planning (ACL 2024) [paper] [code]

- TPTU: Large Language Model-based AI Agents for Task Planning and Tool Usage (arXiv 2023) [paper]

- Lyra: Orchestrating Dual Correction in Automated Theorem Proving (TMLR 2024) [paper] [code]

- Offline Training of Language Model Agents with Functions as Learnable Weights (arXiv 2024) [paper]

- VideoAgent: A Memory-Augmented Multimodal Agent for Video Understanding (ECCV 2024) [paper] [code]

- ALITA: Generalist Agent Enabling Scalable Agentic Reasoning with Minimal Predefinition and Maximal Self-Evolution (arXiv 2025) [paper] [code]

- Search-o1: Agentic Search-Enhanced Large Reasoning Models (arXiv 2025) [paper] [code]

- Crafting Personalized Agents through Retrieval-Augmented Generation on Editable Memory Graphs (EMNLP 2024) [paper]

- RaDA: Retrieval-augmented Web Agent Planning with LLMs (ACL-findings 2024) [paper] [code]

- AutoRAG: Automated Framework for Optimization of Retrieval Augmented Generation Pipeline (arXiv 2024) [paper] [code]

- RAP: Retrieval-Augmented Planning with Contextual Memory for Multimodal LLM Agents (arXiv 2024) [paper] [code]

- MALADE: Orchestration of LLM-powered Agents with Retrieval Augmented Generation for Pharmacovigilance (arXiv 2024) [paper] [code]

- PaperQA: Retrieval-Augmented Generative Agent for Scientific Research (arXiv 2023) [paper] [code]

- Search-o1: Agentic Search-Enhanced Large Reasoning Models (arXiv 2025) [paper] [code]

- CAPO: Cooperative Plan Optimization for Efficient Embodied Multi-Agent Cooperation (arXiv 2024) [paper]

- A Multi-AI Agent System for Autonomous Optimization of Agentic AI Solutions via Iterative Refinement and LLM-Driven Feedback Loops (arXiv 2024) [paper] [code]

- Training Agents with Weakly Supervised Feedback from Large Language Models (arXiv 2024) [paper]

- Chatdev: Communicative Agents for Software Development (arXiv 2023) [paper] [code]

- MetaGPT: Meta Programming for A Multi-Agent Collaborative Framework (arXiv 2023) [paper] [code]

- MapCoder: Multi-Agent Code Generation for Competitive Problem Solving (arXiv 2024) [paper] [code]

- A Dynamic LLM-Powered Agent Network for Task-Oriented Agent Collaboration (COLM 2024) [paper] [code]

- Scaling Large-Language-Model-based Multi-Agent Collaboration (arXiv 2024) [paper] [code]

- AgentVerse: Facilitating Multi-Agent Collaboration and Exploring Emergent Behaviors (arXiv 2023) [paper] [code]

- SMoA: Improving Multi-Agent Large Language Models with Sparse Mixture-of-Agents (arXiv 2024) [paper] [code]

- Encouraging Divergent Thinking in Large Language Models through Multi-Agent Debate (EMNLP 2024) [paper] [code]

- AutoGen: Enabling Next-Gen LLM Applications via Multi-Agent Conversation (arXiv 2023) [paper] [code]

- WebShop: Towards Scalable Real-World Web Interaction with Grounded Language Agents (NeurIPS 2022) [paper] [code]

- WebArena: A Realistic Web Environment for Building Autonomous Agents (arXiv 2024) [paper] [code]

- Mind2Web: Towards a Generalist Agent for the Web (NeurIPS 2023 Spotlight) [paper] [code]

- Reinforcement Learning on Web Interfaces using Workflow-Guided Exploration (ICLR 2018) [paper] [code]

- ScienceWorld: Is your Agent Smarter than a 5th Grader? (EMNLP 2022) [paper] [code]

- ALFWorld: Aligning Text and Embodied Environments for Interactive Learning (ICLR 2021) [paper] [code]

- Building Cooperative Embodied Agents Modularly with Large Language Models (ICLR 2024) [paper] [code]

- ALFRED: A Benchmark for Interpreting Grounded Instructions for Everyday Tasks (CVPR 2020) [paper] [code]

- RLCard: A Toolkit for Reinforcement Learning in Card Games (AAAI-Workshop 2020) [paper] [code]

- OpenSpiel: A Framework for Reinforcement Learning in Games (arXiv 2019) [paper] [code]

- HotpotQA: A Dataset for Diverse, Explainable Multi-hop Question Answering (EMNLP 2018) [paper] [code]

- StrategyQA: Did Aristotle Use a Laptop? A Question Answering Benchmark with Implicit Reasoning Strategies (TACL 2021) [paper] [code]

- MMLU: Measuring Massive Multitask Language Understanding (ICLR 2021) [paper] [code]

- TruthfulQA: Measuring How Models Mimic Human Falsehoods (ACL 2022) [paper] [code]

- TriviaQA: A Large Scale Distantly Supervised Challenge Dataset for Reading Comprehension (ACL 2017) [paper]

- PubMedQA: A Dataset for Biomedical Research Question Answering (EMNLP 2019) [paper] [code]

- MuSiQue: Multihop Questions via Single-hop Question Composition (TACL 2022) [paper] [code]

- Constructing A Multi-hop QA Dataset for Comprehensive Evaluation of Reasoning Steps (COLING 2020) [paper] [code]

- A Dataset of Information-Seeking Questions and Answers Anchored in Research Papers (NAACL 2021) [paper] [code]

- Think you have Solved Question Answering? Try ARC, the AI2 Reasoning Challenge (arXiv 2018) [paper] [code]

- Training Verifiers to Solve Math Word Problems (arXiv 2021) [paper] [code]

- A Diverse Corpus for Evaluating and Developing English Math Word Problem Solvers (ACL 2020) [paper] [code]

- mwp: Are NLP Models Really Able to Solve Simple Math Word Problems? (NAACL 2021) [paper] [code]

- Measuring Mathematical Problem Solving with the MATH Dataset (NeurIPS 2021) [paper] [code]

- T-Eval: Evaluating the Tool Utilization Capability of Large Language Models Step by Step (ACL 2024) [paper] [code]

- ToolLLM: Facilitating Large Language Models to Master 16000+ Real-world APIs (ICLR 2024) [paper] [code]

- MINT: Evaluating LLMs in Multi-turn Interaction with Tools and Language Feedback (ICLR 2024) [paper] [code]

- API-Bank: A Comprehensive Benchmark for Tool-Augmented LLMs (EMNLP 2023) [paper] [code]

- A-OKVQA: A Benchmark for Visual Question Answering using World Knowledge (ECCV 2022) [paper] [code]

- Learn to Explain: Multimodal Reasoning via Thought Chains for Science Question Answering (NeurIPS 2022) [paper] [code]

- VQA: Visual Question Answering (ICCV 2015) [paper] [code]

- EgoSchema: A Diagnostic Benchmark for Very Long-form Video Language Understanding (NeurIPS 2023) [paper] [code]

- NExT-QA: Next Phase of Question-Answering to Explaining Temporal Actions (CVPR 2021) [paper] [code]

- SWE-bench: Can Language Models Resolve Real-World GitHub Issues? (ICLR 2024) [paper] [code]

- Evaluating Large Language Models Trained on Code (arXiv 2021) [paper] [code]

- LiveCodeBench: Holistic and Contamination Free Evaluation of Large Language Models for Code (arXiv 2024) [paper] [code]

- Can LLM Already Serve as A Database Interface? A BIg Bench for Large-Scale Database Grounded Text-to-SQLs (NeurIPS 2023) [paper] [code]

- InterCode: Standardizing and Benchmarking Interactive Coding with Execution Feedback (NeurIPS 2023) [paper] [code]

- AgentBench: Evaluating LLMs as Agents (ICLR 2024) [paper] [code]

- AgentGym: Evolving Large Language Model-based Agents across Diverse Environments (arXiv 2024) [paper] [code]

- Just-Eval: The Unlocking Spell on Base LLMs: Rethinking Alignment via In-Context Learning (ICLR 2024) [paper] [code]

- StreamBench: Towards Benchmarking Continuous Improvement of Language Agents (NeurIPS 2024) [paper] [code]

- AgentBoard: An Analytical Evaluation Board of Multi-turn LLM Agent (NeurIPS 2024) [paper] [code]

- GAIA: A Benchmark for General AI Assistants (arXiv 2023) [paper] [code]

- Humanity’s Last Exam (arXiv 2025) [paper] [code]

- NESTFUL: A Benchmark for Evaluating LLMs on Nested Sequences of API Calls (arXiv 2024) [paper] [code]

- MCP_RADAR: Multi-Dimensional Benchmark for Evaluating Tool Use Capabilities in Large Language Models (arXiv 2025) [paper] [code]

- Med-PaLM: Large language models encode clinical knowledge (Nature 2023) [paper]

- DoctorGLM: Fine-tuning your Chinese Doctor is not a Herculean Task (arXiv 2023) [paper] [code]

- BianQue: Balancing the Questioning and Suggestion Ability of Health LLMs with Multi-turn Health Conversations Polished by ChatGPT (arXiv 2023) [paper] [code]

- DISC-MedLLM: Bridging General Large Language Models and Real-World Medical Consultation (arXiv 2023) [paper] [code]

- ClinicalAgent: Clinical Trial Multi-Agent System with Large Language Model-based Reasoning (BCB 2024) [paper] [code]

- MedAgents: Large Language Models as Collaborators for Zero-shot Medical Reasoning (ACL-findings 2024) [paper] [code]

- MDAgents: An Adaptive Collaboration of LLMs for Medical Decision-Making (NeurIPS 2024) [paper] [code]

- Agent Hospital: A Simulacrum of Hospital with Evolvable Medical Agents (arXiv 2024) [paper]

- AI Hospital: Benchmarking Large Language Models in a Multi-agent Medical Interaction Simulator (COLING 2025) [paper] [code]

- KG4Diagnosis: A Hierarchical Multi-Agent LLM Framework with Knowledge Graph Enhancement for Medical Diagnosis (AAAI-25 Bridge Program) [paper]

- AgentMD: Empowering Language Agents for Risk Prediction with Large-Scale Clinical Tool Learning (arXiv 2024) [paper] [code]

- MMedAgent: Learning to Use Medical Tools with Multi-modal Agent (EMNLP-findings 2024) [paper] [code]

- HuatuoGPT-o1: Towards Medical Complex Reasoning with LLMs (arXiv 2024) [paper] [code]

- IIMedGPT: Promoting Large Language Model Capabilities of Medical Tasks by Efficient Human Preference Alignment (arXiv 2025) [paper]

- CellAgent: An LLM-driven Multi-Agent Framework for Automated Single-cell Data Analysis (arXiv 2024) [paper] [code]

- BioDiscoveryAgent: An AI Agent for Designing Genetic Perturbation Experiments (arXiv 2024) [paper] [code]

- ProtAgents: Protein discovery via large language model multi-agent collaborations combining physics and machine learning (Digital Discovery 2024) [paper] [code]

- CRISPR-GPT: An LLM Agent for Automated Design of Gene-Editing Experiments (arXiv 2024) [paper] [code]

- ChemCrow: Augmenting Large-Language Models with Chemistry Tools (Nature Machine Intelligence 2024) [paper] [code]

- DrugAssist: A Large Language Model for Molecule Optimization (Briefings in Bioinformatics 2024) [paper] [code]

- Agent-based Learning of Materials Datasets from Scientific Literature (Digital Discovery 2024) [paper] [code]

- DrugAgent: Automating AI-aided Drug Discovery Programming through LLM Multi-Agent Collaboration (arXiv 2024) [paper] [code]

- MProt-DPO: Breaking the ExaFLOPS Barrier for Multimodal Protein Design Workflows with Direct Preference Optimization (SC 2024) [paper]

- Many Heads Are Better Than One: Improved Scientific Idea Generation by A LLM-Based Multi-Agent System (arXiv 2024) [paper] [code]

- SciAgent: Tool-augmented Language Models for Scientific Reasoning (arXiv 2024) [paper]

- Building Cooperative Embodied Agents Modularly with Large Language Models (ICLR 2024) [paper] [code]

- Do As I Can, Not As I Say: Grounding Language in Robotic Affordances (PMLR 2023) [paper] [code]

- RoCo: Dialectic Multi-Robot Collaboration with Large Language Models (ICRA 2024) [paper] [code]

- Voyager: An Open-Ended Embodied Agent with Large Language Models (arXiv 2023) [paper] [code]

- MultiPLY: A Multisensory Object-Centric Embodied Large Language Model in 3D World (CVPR 2024) [paper] [code]

- Retrospex: Language Agent Meets Offline Reinforcement Learning Critic (EMNLP 2024) [paper] [code]

- EPO: Hierarchical LLM Agents with Environment Preference Optimization (EMNLP 2024) [paper] [code]

- AutoManual: Constructing Instruction Manuals by LLM Agents via Interactive Environmental Learning (NeurIPS 2024) [paper] [code]

- MSI-Agent: Incorporating Multi-Scale Insight into Embodied Agents for Superior Planning and Decision-Making (EMNLP 2024) [paper]

- iVideoGPT: Interactive VideoGPTs are Scalable World Models (NeurIPS 2024) [paper] [code]

- AutoRT: Embodied Foundation Models for Large Scale Orchestration of Robotic Agents (arXiv 2024) [paper]

- Embodied Multi-Modal Agent trained by an LLM from a Parallel TextWorld (CVPR 2024) [paper] [code]

- Large Language Model Agent in Financial Trading: A Survey (arXiv 2024) [paper]

- TradingGPT: Multi-Agent System with Layered Memory and Distinct Characters for Enhanced Financial Trading Performance (arXiv 2023) [paper]

- FinMem: A Performance-Enhanced LLM Trading Agent with Layered Memory and Character Design (AAAI-SS) [paper] [code]

- A Multimodal Foundation Agent for Financial Trading: Tool-Augmented, Diversified, and Generalist (SIGKDD 2024) [paper]

- Learning to Generate Explainable Stock Predictions using Self-Reflective Large Language Models (WWW 2024) [paper] [code]

- FinCon: A Synthesized LLM Multi-Agent System with Conceptual Verbal Reinforcement for Enhanced Financial Decision Making (NeurIPS 2024) [paper]

- TradingAgents: Multi-Agents LLM Financial Trading Framework (arXiv 2024) [paper] [code]

- FinVision: A Multi-Agent Framework for Stock Market Prediction (ICAIF 2024) [paper]

- Simulating Financial Market via Large Language Model-based Agents (arXiv 2024) [paper]

- FinVerse: An Autonomous Agent System for Versatile Financial Analysis (arXiv 2024) [paper]

- FinRobot: An Open-Source AI Agent Platform for Financial Applications using Large Language Models (arXiv 2024) [paper] [code]

- Agents in Software Engineering: Survey, Landscape, and Vision (arXiv 2024) [paper] [code]

- Large Language Model-Based Agents for Software Engineering: A Survey (arXiv 2024) [paper] [code]

- Chatdev: Communicative Agents for Software Development (ACL 2024) [paper] [code]

- MetaGPT: Meta Programming for A Multi-Agent Collaborative Framework (ICLR 2024) [paper] [code]

- MapCoder: Multi-Agent Code Generation for Competitive Problem Solving (ACL 2024) [paper] [code]

- Self-Organized Agents: A LLM Multi-Agent Framework toward Ultra Large-Scale Code Generation and Optimization (arXiv 2024) [paper] [code]

- Multi-Agent Software Development through Cross-Team Collaboration (arXiv 2024) [paper] [code]

- SWE-agent: Agent-Computer Interfaces Enable Automated Software Engineering (NeurIPS 2024) [paper] [code]

- CodeAgent: Enhancing Code Generation with Tool-Integrated Agent Systems for Real-World Repo-level Coding Challenges (ACL 2024) [paper]

- AgentCoder: Multi-Agent-based Code Generation with Iterative Testing and Optimisation (arXiv 2023) [paper] [code]

- RLEF: Grounding Code LLMs in Execution Feedback with Reinforcement Learning (arXiv 2024) [paper]

- Lemur: Harmonizing Natural Language and Code for Language Agents (ICLR 2024) [paper] [code]

- AgileCoder: Dynamic Collaborative Agents for Software Development based on Agile Methodology (FORGE 2025) [paper] [code]

If you find this project or the related paper helpful, please consider citing our work:

A Survey on the Optimization of Large Language Model-based Agents 📚 arXiv:2503.12434

@article{du2025survey,
  title={A Survey on the Optimization of Large Language Model-based Agents},
  author={Du, Shangheng and Zhao, Jiabao and Shi, Jinxin and Xie, Zhentao and Jiang, Xin and Bai, Yanhong and He, Liang},
  journal={arXiv preprint arXiv:2503.12434},
  year={2025}
}

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for Awesome-LLM-Agent-Optimization-Papers

Similar Open Source Tools

Awesome-LLM-Agent-Optimization-Papers

This repository contains a curated list of papers related to agent optimization in reinforcement learning. It includes research papers, articles, and resources that focus on improving the performance of agents in various environments through optimization techniques. The collection covers a wide range of topics such as policy optimization, reward shaping, exploration strategies, and more. Whether you are a researcher, student, or practitioner in the field of reinforcement learning, this repository serves as a valuable resource to stay updated on the latest advancements and best practices in agent optimization.

GenAiGuidebook

GenAiGuidebook is a comprehensive resource for individuals looking to begin their journey in GenAI. It serves as a detailed guide providing insights, tips, and information on various aspects of GenAI technology. The guidebook covers a wide range of topics, including introductory concepts, practical applications, and best practices in the field of GenAI. Whether you are a beginner or an experienced professional, this resource aims to enhance your understanding and proficiency in GenAI.

awesome-ai-agent-papers

This repository contains a curated list of papers related to artificial intelligence agents. It includes research papers, articles, and resources covering various aspects of AI agents, such as reinforcement learning, multi-agent systems, natural language processing, and more. Whether you are a researcher, student, or practitioner in the field of AI, this collection of papers can serve as a valuable reference to stay updated with the latest advancements and trends in AI agent technologies.

learn-claude-code

Learn Claude Code is an educational project by shareAI Lab that aims to help users understand how modern AI agents work by building one from scratch. The repository provides original educational material on various topics such as the agent loop, tool design, explicit planning, context management, knowledge injection, task systems, parallel execution, team messaging, and autonomous teams. Users can follow a learning path through different versions of the project, each introducing new concepts and mechanisms. The repository also includes technical tutorials, articles, and example skills for users to explore and learn from. The project emphasizes the philosophy that the model is crucial in agent development, with code playing a supporting role.

God-Level-AI

A drill of scientific methods, processes, algorithms, and systems to build stories & models. An in-depth learning resource for humans. This repository is designed for individuals aiming to excel in the field of Data and AI, providing video sessions and text content for learning. It caters to those in leadership positions, professionals, and students, emphasizing the need for dedicated effort to achieve excellence in the tech field. The content covers various topics with a focus on practical application.

LLMs-in-Finance

This repository focuses on the application of Large Language Models (LLMs) in the field of finance. It provides insights and knowledge about how LLMs can be utilized in various scenarios within the finance industry, particularly in generating AI agents. The repository aims to explore the potential of LLMs to enhance financial processes and decision-making through the use of advanced natural language processing techniques.

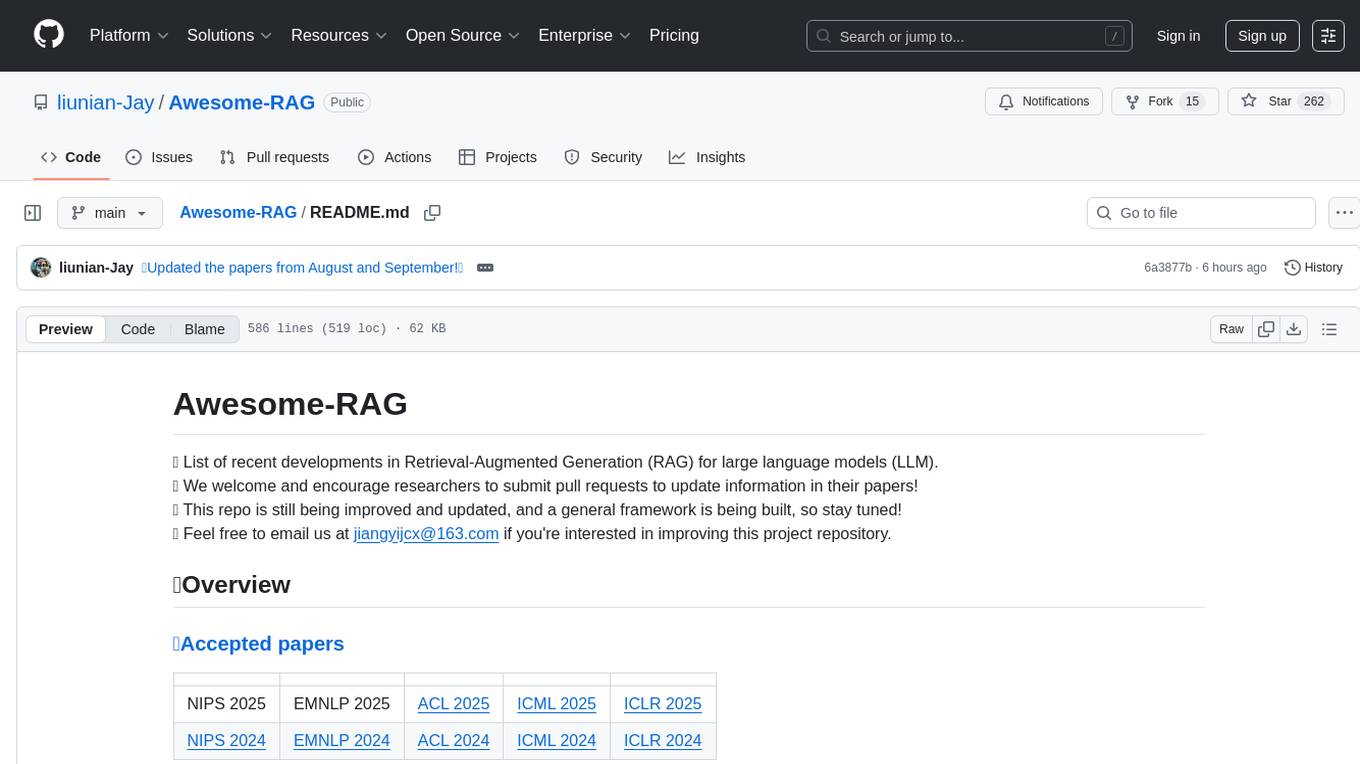

Awesome-RAG

Awesome-RAG is a repository that lists recent developments in Retrieval-Augmented Generation (RAG) for large language models (LLM). It includes accepted papers, evaluation datasets, latest news, and papers from various conferences like NIPS, EMNLP, ACL, ICML, and ICLR. The repository is continuously updated and aims to build a general framework for RAG. Researchers are encouraged to submit pull requests to update information in their papers. The repository covers a wide range of topics related to RAG, including knowledge-enhanced generation, contrastive reasoning, self-alignment, mobile agents, and more.

trae-agent

Trae-agent is a Python library for building and training reinforcement learning agents. It provides a simple and flexible framework for implementing various reinforcement learning algorithms and experimenting with different environments. With Trae-agent, users can easily create custom agents, define reward functions, and train them on a variety of tasks. The library also includes utilities for visualizing agent performance and analyzing training results, making it a valuable tool for both beginners and experienced researchers in the field of reinforcement learning.

openssa

OpenSSA is an open-source framework for creating efficient, domain-specific AI agents. It enables the development of Small Specialist Agents (SSAs) that solve complex problems in specific domains. SSAs tackle multi-step problems that require planning and reasoning beyond traditional language models. They apply OODA for deliberative reasoning (OODAR) and iterative, hierarchical task planning (HTP). This "System-2 Intelligence" breaks down complex tasks into manageable steps. SSAs make informed decisions based on domain-specific knowledge. With OpenSSA, users can create agents that process, generate, and reason about information, making them more effective and efficient in solving real-world challenges.

LLMs-playground

LLMs-playground is a repository containing code examples and tutorials for learning and experimenting with Large Language Models (LLMs). It provides a hands-on approach to understanding how LLMs work and how to fine-tune them for specific tasks. The repository covers various LLM architectures, pre-training techniques, and fine-tuning strategies, making it a valuable resource for researchers, students, and practitioners interested in natural language processing and machine learning. By exploring the code and following the tutorials, users can gain practical insights into working with LLMs and apply their knowledge to real-world projects.

AgentsMeetRL

AgentsMeetRL is an awesome list that summarizes open-source repositories for training LLM agents using reinforcement learning. A project counts as an agent project if it involves multi-turn interactions or tool use. The list is based on code analysis of open-source repositories using GitHub Copilot Agent. It records the reinforcement learning frameworks, RL algorithms, rewards, and environments that each project depends on, as a reference for technical choices.

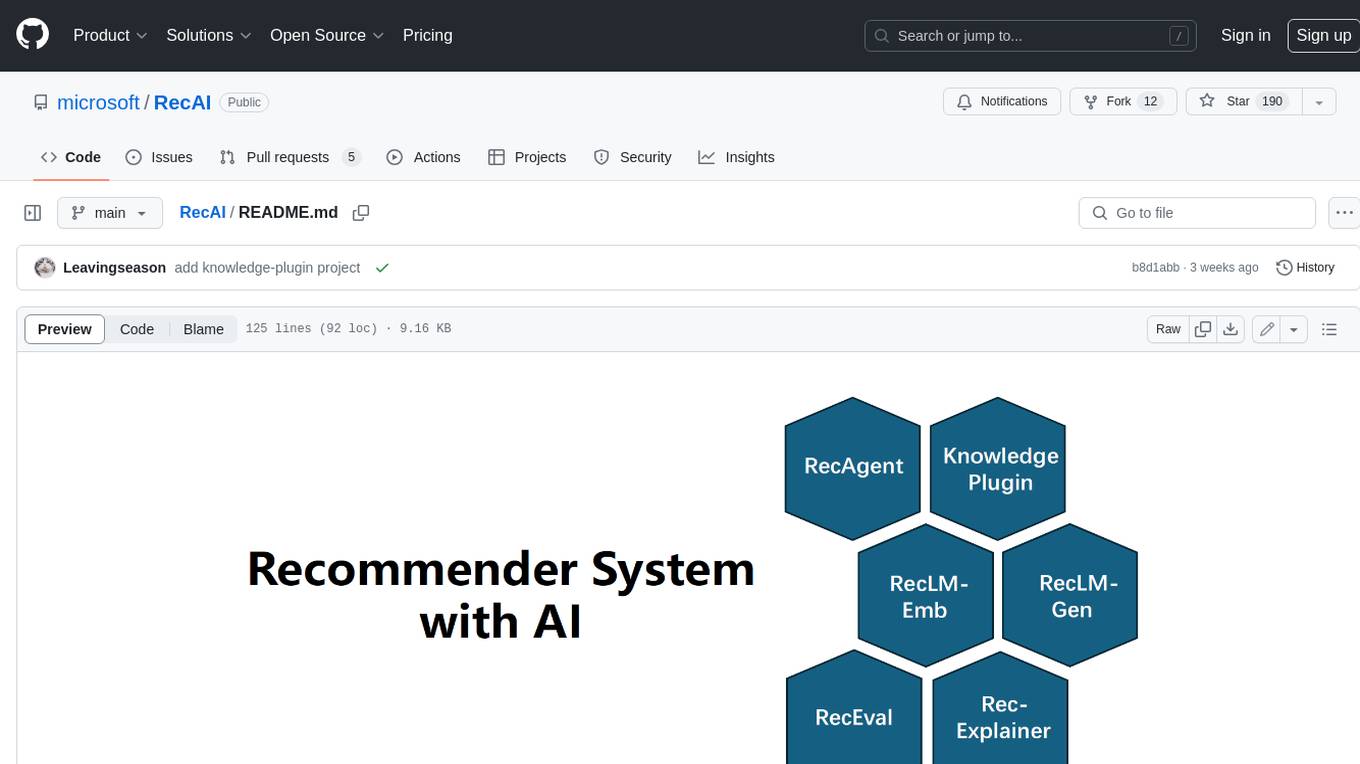

RecAI

RecAI is a project that explores the integration of Large Language Models (LLMs) into recommender systems, addressing the challenges of interactivity, explainability, and controllability. It aims to bridge the gap between general-purpose LLMs and domain-specific recommender systems, providing a holistic perspective on the practical requirements of LLM4Rec. The project investigates various techniques, including Recommender AI agents, selective knowledge injection, fine-tuning language models, evaluation, and LLMs as model explainers, to create more sophisticated, interactive, and user-centric recommender systems.

gpt-researcher

GPT Researcher is an autonomous agent designed for comprehensive online research on a variety of tasks. It can produce detailed, factual, and unbiased research reports with customization options. The tool addresses issues of speed, determinism, and reliability by leveraging parallelized agent work. The main idea involves running 'planner' and 'execution' agents to generate research questions, seek related information, and create research reports. GPT Researcher optimizes costs and completes tasks in around 3 minutes. Features include generating long research reports, aggregating web sources, an easy-to-use web interface, scraping web sources, and exporting reports to various formats.

Mastering-NLP-from-Foundations-to-LLMs

This code repository is for the book 'Mastering NLP from Foundations to LLMs', which provides an in-depth introduction to Natural Language Processing (NLP) techniques. It covers mathematical foundations of machine learning, advanced NLP applications such as large language models (LLMs) and AI applications, as well as practical skills for working on real-world NLP business problems. The book includes Python code samples and expert insights into current and future trends in NLP.

ai-workshop-code

The ai-workshop-code repository contains code examples and tutorials for various artificial intelligence concepts and algorithms. It serves as a practical resource for individuals looking to learn and implement AI techniques in their projects. The repository covers a wide range of topics, including machine learning, deep learning, natural language processing, computer vision, and reinforcement learning. By exploring the code and following the tutorials, users can gain hands-on experience with AI technologies and enhance their understanding of how these algorithms work in practice.

rag-in-action

rag-in-action is a GitHub repository that provides a practical course structure for developing a RAG system based on DeepSeek. It is aimed at users who want hands-on experience building and implementing a RAG system.

For similar tasks

Awesome-LLM-Agent-Optimization-Papers

This repository is the reading list for a survey on the optimization of LLM-based agents, which systematically explores optimization techniques for enhancing such agents. It categorizes existing work into parameter-driven optimization, parameter-free optimization, datasets and benchmarks, and real-world applications. The list is updated continually, and suggestions and pull requests are welcome.

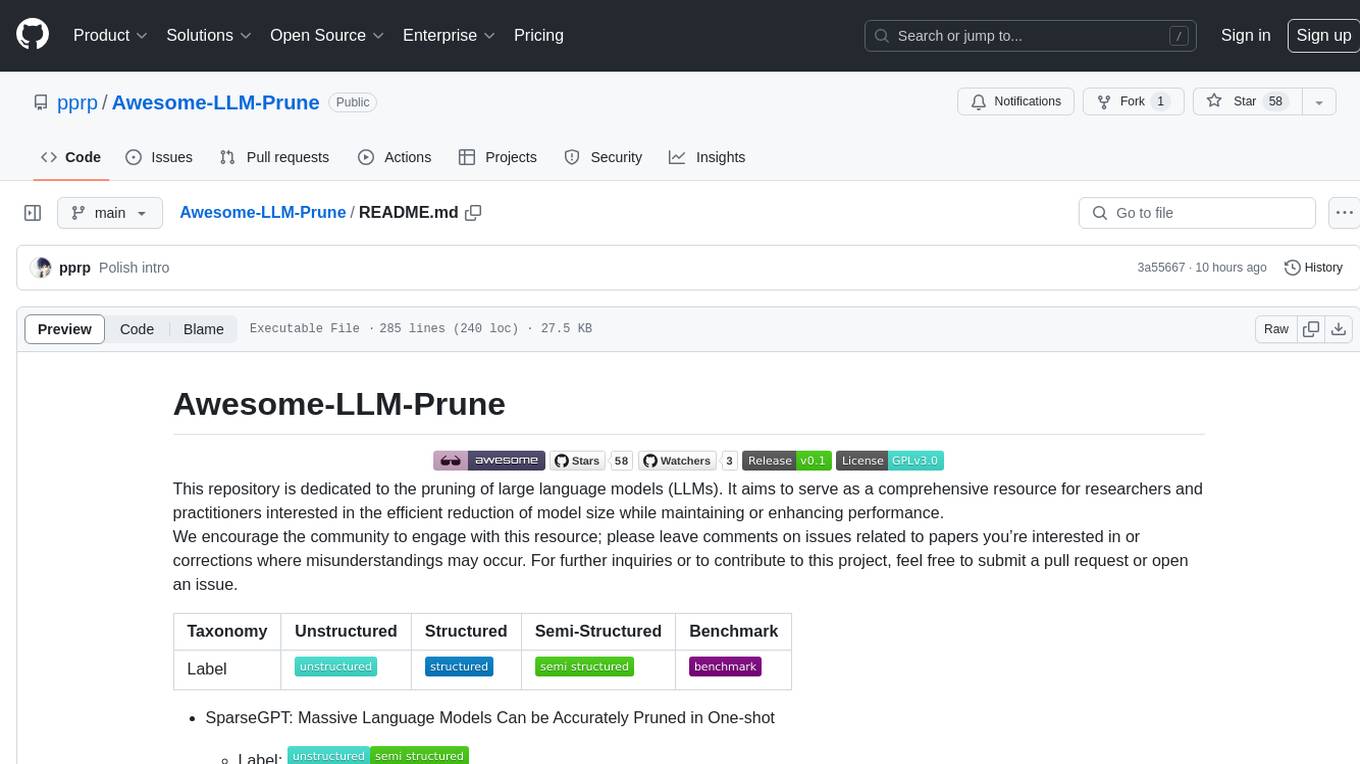

Awesome-LLM-Prune

This repository is dedicated to the pruning of large language models (LLMs). It aims to serve as a comprehensive resource for researchers and practitioners interested in the efficient reduction of model size while maintaining or enhancing performance. The repository contains various papers, summaries, and links related to different pruning approaches for LLMs, along with author information and publication details. It covers a wide range of topics such as structured pruning, unstructured pruning, semi-structured pruning, and benchmarking methods. Researchers and practitioners can explore different pruning techniques, understand their implications, and access relevant resources for further study and implementation.

OpenManus-RL

OpenManus-RL is an open-source initiative focused on enhancing reasoning and decision-making capabilities of large language models (LLMs) through advanced reinforcement learning (RL)-based agent tuning. The project explores novel algorithmic structures, diverse reasoning paradigms, sophisticated reward strategies, and extensive benchmark environments. It aims to push the boundaries of agent reasoning and tool integration by integrating insights from leading RL tuning frameworks and continuously updating progress in a dynamic, live-streaming fashion.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models, from images to sound.

kaito

Kaito is an operator that automates AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, provides preset configurations so that deployment parameters need not be tuned to fit GPU hardware, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Kaito largely simplifies the workflow of onboarding large AI inference models in Kubernetes.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI red teaming tasks so that operators can focus on more complicated and time-consuming work. It can also identify security harms such as misuse (e.g., malware generation, jailbreaking) and privacy harms (e.g., identity theft). The goal is to give researchers a baseline of how well their model and entire inference pipeline are doing against different harm categories, and to let them compare that baseline to future iterations of their model. This gives them empirical data on how well their model is doing today and lets them detect any degradation of performance in later versions.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It has several key features: it is self-contained, with no need for a DBMS or cloud service; it exposes an OpenAPI interface that is easy to integrate with existing infrastructure (e.g., a cloud IDE); and it supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.