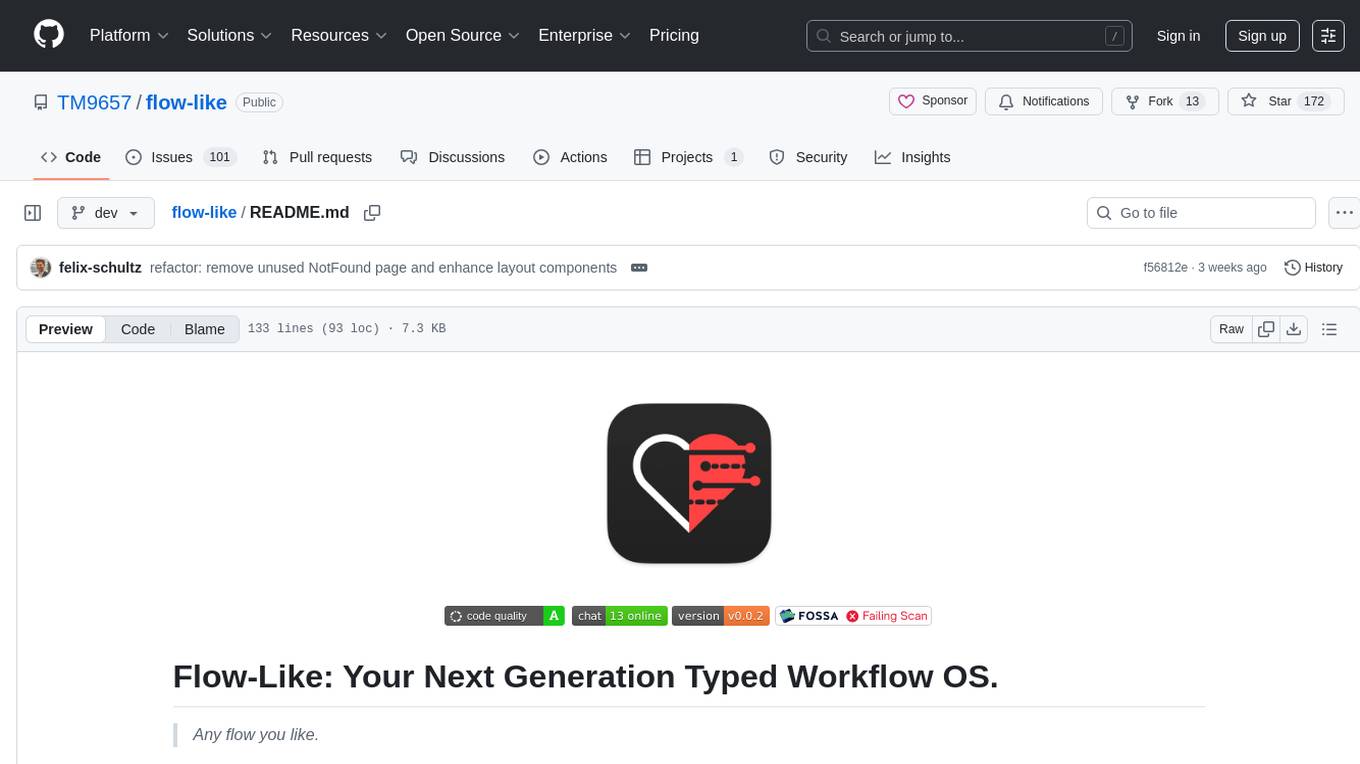

flow-like

Flow-Like: Strongly Typed, Enterprise-Scale Workflows. Built for scalability, speed, seamless AI integration, and rich customization.

Stars: 591

Flow-Like is an enterprise-grade workflow operating system built in Rust for uncompromising performance, efficiency, and code safety. It offers a modular frontend for apps, a rich set of events, a node catalog, a powerful no-code workflow IDE, and tools to manage teams, templates, and projects within organizations. With typed workflows, users can build complex, large-scale workflows with clear data origins, transformations, and contracts. Flow-Like is designed to automate any process by combining LLM-based, ML-based, and deterministic decision-making.

README:

If you can't see it, you can't trust it.

A Rust-powered workflow engine that runs on your device — laptop, server, or phone.

Fully typed. Fully traceable. Fully yours.

⭐ Star on GitHub · 📖 Docs · 💬 Discord · 📥 Download

Flow-Like is a visual workflow automation platform that runs entirely on your hardware. Build workflows with drag-and-drop blocks, run them on your laptop, phone, or server, and get a clear record of where data came from, what changed, and what came out — no cloud dependency, no black boxes, no guesswork.

Most workflow tools force you into their cloud. Your data leaves your machine, passes through third-party servers, and you're locked into their infrastructure. Offline? Stuck. Want to self-host? Pay enterprise prices.

Flow-Like runs wherever you choose — your laptop, your phone, your private server, or cloud infrastructure you control. Your data stays where you put it. No forced cloud dependency, no vendor lock-in, no "upgrade to enterprise for basic autonomy."

Local-first by default. Cloud-ready when you need it.

Automate on your couch without Wi-Fi. Deploy to your own AWS/GCP/Azure. Run in air-gapped factory networks. Process sensitive data in hospital environments. Your workflows, your infrastructure, your rules.

The reason this works is raw performance. Flow-Like's engine is built in Rust: compiled to native code, with no garbage collector and minimal runtime overhead. The same workflow that takes 500ms in a Node.js engine takes 0.6ms in Flow-Like.

| Metric | Flow-Like | Typical workflow engines |
|---|---|---|
| Execution speed (throughput) | ~244,000 workflows/sec | ~200 workflows/sec |
| Latency per workflow | ~0.6 ms | ~50-500 ms |
| Engine | Rust (compiled to native code) | Python / Node.js (interpreted or JIT-compiled) |

That 1000x performance gap means real workflows can run on resource-constrained devices — phones, edge hardware, Raspberry Pis — not just beefy cloud servers. And on powerful machines, it means processing millions of executions without breaking a sweat.
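The table mixes two different measurements: throughput (workflows completed per second) and latency (time for one workflow). The sketch below, assuming both published figures come from the same workload, checks the ratios and applies Little's law (in-flight work = throughput × latency). At ~0.6 ms per workflow a single sequential worker tops out near 1,667 workflows/sec, so ~244,000/sec implies roughly 146 executions running concurrently. Only the numbers quoted above are used; the constant names are illustrative.

```python
# Figures quoted in the table and text above (assumed to describe the same workload).
FLOW_LIKE_THROUGHPUT = 244_000   # workflows per second
FLOW_LIKE_LATENCY_S = 0.6e-3     # seconds per workflow
TYPICAL_THROUGHPUT = 200         # workflows per second
NODE_LATENCY_S = 500e-3          # the 500 ms Node.js example from the text


def throughput_ratio() -> float:
    """How many times more workflows per second Flow-Like completes."""
    return FLOW_LIKE_THROUGHPUT / TYPICAL_THROUGHPUT


def latency_ratio() -> float:
    """How many times sooner a single workflow finishes (500 ms vs 0.6 ms)."""
    return NODE_LATENCY_S / FLOW_LIKE_LATENCY_S


def implied_concurrency() -> float:
    """Little's law, L = lambda * W: average number of workflows in flight."""
    return FLOW_LIKE_THROUGHPUT * FLOW_LIKE_LATENCY_S


def sequential_ceiling() -> float:
    """Maximum workflows/sec for one worker if executions never overlapped."""
    return 1 / FLOW_LIKE_LATENCY_S


print(f"throughput ratio:    {throughput_ratio():,.0f}x")
print(f"latency ratio:       {latency_ratio():,.0f}x")
print(f"implied concurrency: {implied_concurrency():.0f} workflows in flight")
print(f"sequential ceiling:  {sequential_ceiling():,.0f} workflows/sec")
```

Both ratios fall in the same order of magnitude as the "1000x" figure: about 1,220x for throughput and about 833x for the 500 ms vs 0.6 ms example. The concurrency estimate is an inference from the published numbers, not a documented benchmark setup.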

Most workflow tools show a green checkmark and move on. You're left guessing where data came from and why the result looks the way it does.

Flow-Like workflows are fully typed — they track what data flows where and why. Every input, transformation, and output is recorded with complete lineage and audit trails.

- Data Origins — See exactly where each value came from: the API response, the file, the user input.

- Transformations — Every validation, enrichment, and reformatting step is visible and traceable.

- Clear Contracts — Type-safe input/output definitions catch errors before deployment, not in production.

- Three Perspectives — Process view for business, Data view for analysts, Execution view for engineers. Same workflow, different lenses.

- AI-Native — Run LLMs locally or in the cloud with guardrails, approval gates, and full execution tracing on every call.

- White-Label Ready — Embed the editor in your product. Your logo, your colors, your brand. SSO, usage metering, and per-tenant scoping included.

- Source Available — BSL license, free for the vast majority of users (<2,000 employees and <$300M ARR).
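To make "typed contracts" and "lineage" concrete, here is a minimal, hypothetical model in plain Python. It is not Flow-Like's API (custom Flow-Like nodes are written in Rust); the names `Node`, `Traced`, `validate_chain`, and `run_chain` are invented for illustration. Each node declares an input and an output type, the whole chain is checked before anything runs, and every value carries the list of steps that produced it.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Traced:
    """A value together with the ordered steps that produced it (its lineage)."""
    value: Any
    lineage: tuple[str, ...]


@dataclass(frozen=True)
class Node:
    """A hypothetical node with a declared input/output contract."""
    name: str
    input_type: type
    output_type: type
    fn: Callable[[Any], Any]


def validate_chain(nodes: list[Node]) -> list[str]:
    """Check every connection before execution; an empty list means the wiring is valid."""
    errors = []
    for upstream, downstream in zip(nodes, nodes[1:]):
        if not issubclass(upstream.output_type, downstream.input_type):
            errors.append(
                f"{upstream.name} -> {downstream.name}: "
                f"{upstream.output_type.__name__} does not satisfy "
                f"{downstream.input_type.__name__}"
            )
    return errors


def run_chain(nodes: list[Node], origin: str, value: Any) -> Traced:
    """Reject mis-wired chains up front, then run each node and record its name."""
    errors = validate_chain(nodes)
    if errors:
        raise TypeError("; ".join(errors))
    traced = Traced(value, (origin,))
    for node in nodes:
        result = node.fn(traced.value)
        # Runtime guard: the node must honour the output type it declared.
        if not isinstance(result, node.output_type):
            raise TypeError(f"{node.name} returned {type(result).__name__}")
        traced = Traced(result, traced.lineage + (node.name,))
    return traced


parse = Node("parse_amount", str, float, float)
add_vat = Node("add_vat", float, float, lambda x: round(x * 1.19, 2))
render = Node("render", float, str, lambda x: f"{x:.2f} EUR")

result = run_chain([parse, add_vat, render], "api_response", "100")
print(result.value)    # the transformed value
print(result.lineage)  # where it came from and every step it passed through
```

Reordering the chain to `[parse, render, add_vat]` makes `validate_chain` report `render -> add_vat: str does not satisfy float` before any data moves, which is the "catch errors before deployment" idea in miniature.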

| Feature | Flow-Like | n8n | Zapier / Make | Temporal |
|---|---|---|---|---|
| Runs on your device | ✅ Desktop, phone, edge, server | ❌ Cloud only | | |
| Works 100% offline | ✅ Full capability | ❌ Requires internet | ✅ Self-hosted | |
| Type safety | ✅ Fully typed | ❌ Runtime only | ❌ None | |
| Data lineage / audit trail | ✅ Complete | ❌ Limited | ❌ None | |
| Performance | ✅ ~244K/sec (Rust) | | | |
| Visual builder | ✅ Full IDE | ✅ Good | ✅ Simple | ❌ Code only |
| UI builder | ✅ Built-in | ❌ None | ❌ None | ❌ None |
| LLM orchestration | ✅ Built-in + guardrails | ❌ Manual | | |
| White-label / embed | ✅ Full customization | ❌ Branded | ❌ Branded | ❌ No UI |
| Business process views | ✅ Process / Data / Execution | ❌ Single view | ❌ Single view | ❌ Code only |
| License | Source Available (BSL) | Sustainable Use | Proprietary | MIT |

| 💻 Desktop App | ☁️ Web App | 📱 Mobile App | ⚙️ From Source |
|---|---|---|---|
| Download Now | Try Online | Coming Soon | Build Yourself |
| macOS · Windows · Linux | Available now | iOS · Android | Latest features |

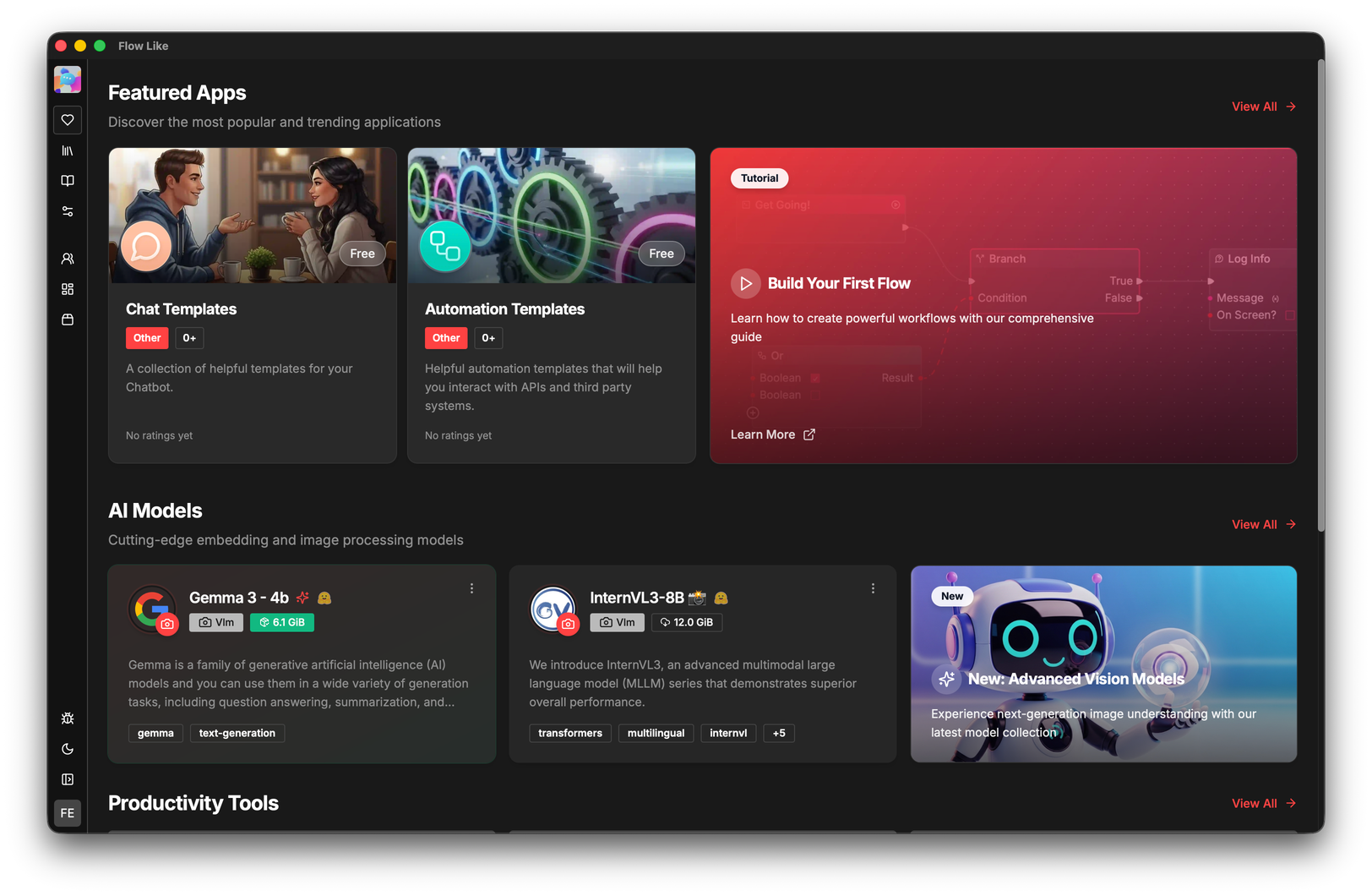

A no-code IDE for building workflows. Smart wiring with type-aware pins, inline execution feedback, live validation, and snapshot-based debugging.
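The README does not describe how snapshot-based debugging works internally. One plausible reading, sketched below under that assumption, is that the runner stores a copy of each step's input and output, so a failed run can be inspected and re-executed from the failing step without repeating earlier (possibly expensive) steps. `Snapshot`, `run_with_snapshots`, and `resume_from` are hypothetical names.

```python
import copy
from dataclasses import dataclass
from typing import Any, Callable, Optional

# A step is a (name, function) pair; this shape is hypothetical.
Step = tuple[str, Callable[[Any], Any]]


@dataclass
class Snapshot:
    """What one step received and produced during a run."""
    step: str
    input_value: Any
    output_value: Any = None
    error: Optional[str] = None


def run_with_snapshots(steps: list[Step], value: Any) -> list[Snapshot]:
    """Run steps in order, keeping a deep copy of every input and output."""
    snapshots = []
    for name, fn in steps:
        snap = Snapshot(name, copy.deepcopy(value))
        snapshots.append(snap)
        try:
            value = fn(value)
        except Exception as exc:  # stop at the first failure but keep its snapshot
            snap.error = f"{type(exc).__name__}: {exc}"
            break
        snap.output_value = copy.deepcopy(value)
    return snapshots


def resume_from(steps: list[Step], snapshots: list[Snapshot], step_name: str) -> Any:
    """Re-run from `step_name` with its recorded input, skipping every earlier step."""
    index = next(i for i, s in enumerate(snapshots) if s.step == step_name)
    value = copy.deepcopy(snapshots[index].input_value)
    for _, fn in steps[index:]:
        value = fn(value)
    return value


calls = {"load": 0}


def load(_):
    """Pretend this is a slow or rate-limited API call."""
    calls["load"] += 1
    return {"rows": [4, 8, 12]}


def average(data):
    return sum(data["rows"]) / data["count"]  # bug: "count" is never set


steps = [("load", load), ("average", average)]
snaps = run_with_snapshots(steps, None)
print(snaps[-1].step, snaps[-1].error)  # which step failed, and why

# Fix the faulty step, then replay only that step from its snapshot.
steps[1] = ("average", lambda data: sum(data["rows"]) / len(data["rows"]))
print(resume_from(steps, snaps, "average"))
```

The replay never calls `load` again, which is the practical payoff of keeping snapshots: a fix can be checked against the exact data that caused the failure.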

APIs & webhooks, databases, file processing (Excel, CSV, PDF), AI models & computer vision, messaging (Slack, Discord, email), IoT, logic & control flow, security & auth — and growing.

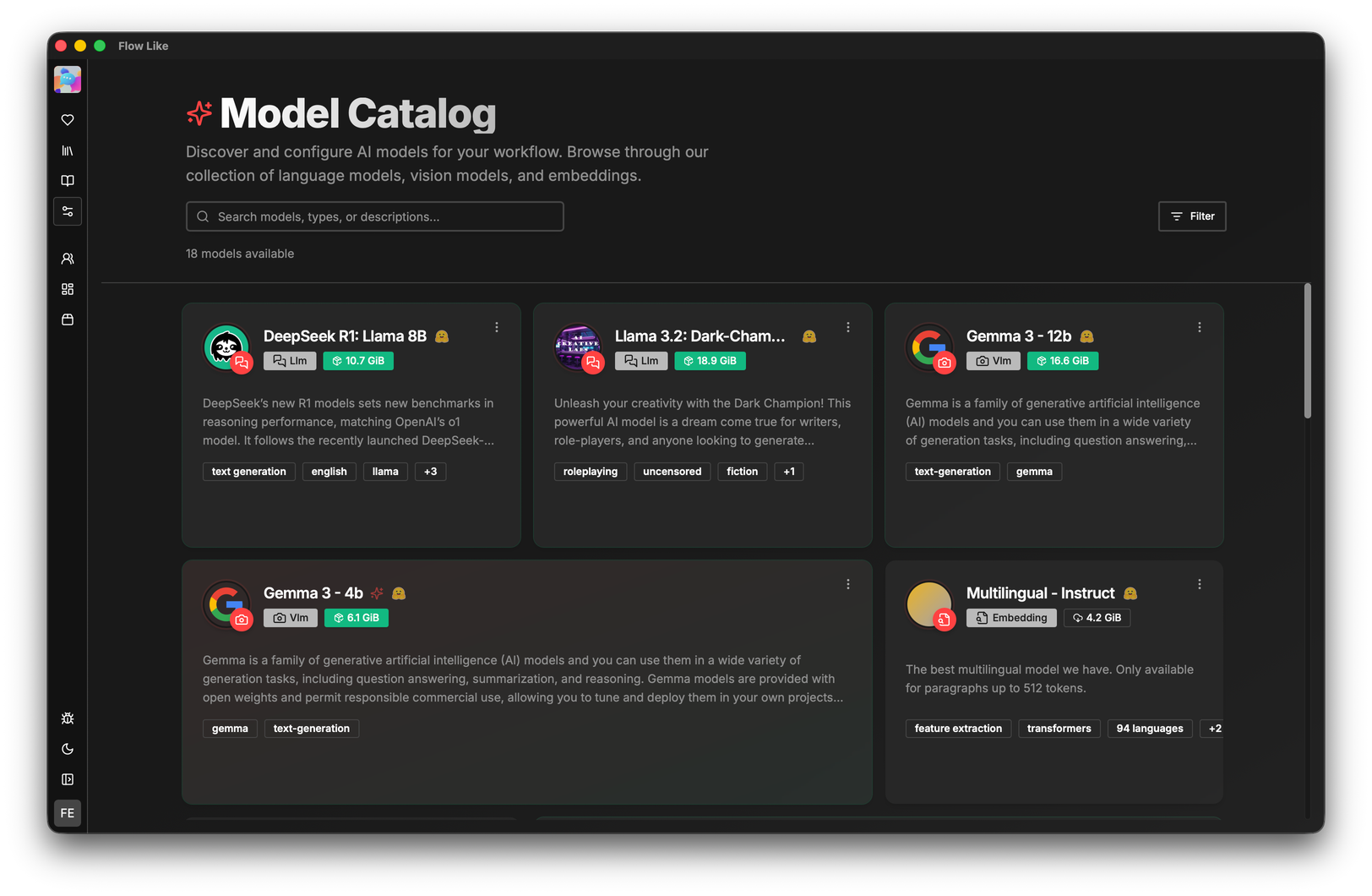

Download and run LLMs, vision models, and embeddings locally or in the cloud. Every AI decision is logged with full context — inputs, outputs, model version, and reasoning trace.
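A hypothetical shape for such a log entry, combined with the approval gates mentioned earlier, is sketched below: each model call produces one JSON line containing the prompt, output, model version, reasoning trace, and whether the gate approved it. The field names, the `fake_model` stub, and the refund-limit rule are assumptions for illustration, not Flow-Like's schema.

```python
import json
import time
from dataclasses import asdict, dataclass
from typing import Callable, Optional


@dataclass
class AIDecisionRecord:
    """One audit entry per model call (field names are illustrative)."""
    model: str
    model_version: str
    prompt: str
    output: str
    reasoning_trace: list[str]
    approved: bool
    timestamp: float


def guarded_call(
    call_model: Callable[[str], tuple[str, list[str]]],
    approve: Callable[[str], bool],
    prompt: str,
    model: str,
    model_version: str,
    log: list[str],
) -> Optional[str]:
    """Call the model, pass its output through an approval gate, and log the decision either way."""
    output, trace = call_model(prompt)
    approved = approve(output)
    record = AIDecisionRecord(
        model, model_version, prompt, output, trace, approved, time.time()
    )
    log.append(json.dumps(asdict(record)))  # append-only, one JSON object per line
    return output if approved else None


def fake_model(prompt: str) -> tuple[str, list[str]]:
    """Stand-in for a local or hosted LLM: returns (answer, reasoning steps)."""
    return "REFUND 40 EUR", ["order found", "within 30-day return window"]


def refund_limit(output: str, limit: int = 50) -> bool:
    """Approval gate: auto-approve refunds up to `limit`; larger ones need a human."""
    return int(output.split()[1]) <= limit


audit_log: list[str] = []
prompt = "Customer requests a refund for order 17"
answer = guarded_call(fake_model, refund_limit, prompt, "example-model", "v1", audit_log)
blocked = guarded_call(
    fake_model, lambda out: refund_limit(out, limit=10), prompt, "example-model", "v1", audit_log
)
print(answer)         # passed the gate
print(blocked)        # None: held back for human review
print(audit_log[-1])  # both calls are logged, approved or not
```

Logging before the gate's verdict is acted on means rejected outputs leave the same trail as accepted ones, which is what makes the record useful for audits.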

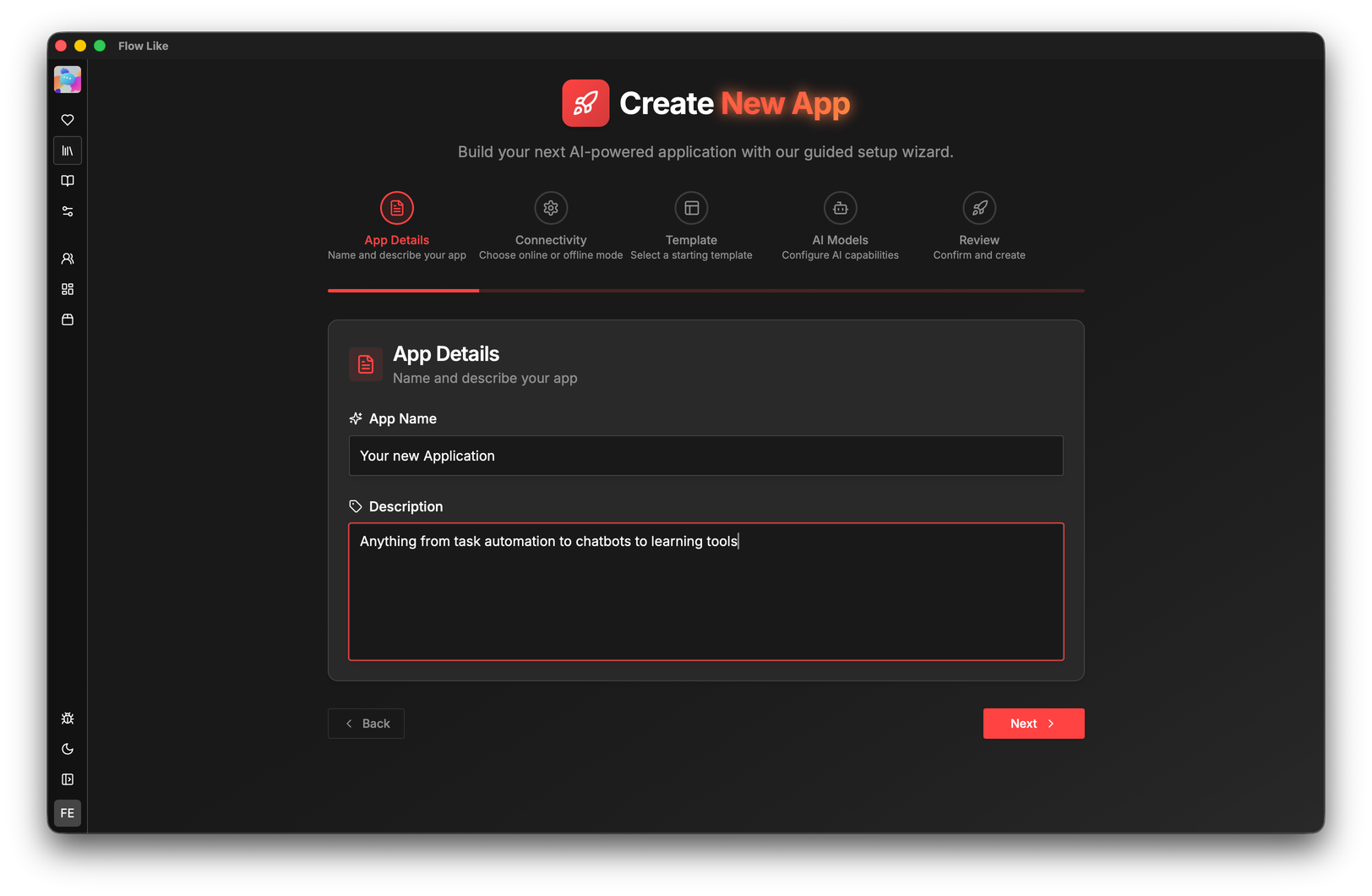

Package workflows as shareable applications with built-in storage. Run them offline or in the cloud. Browse the template store or share your own.


```bash
# Prerequisites: mise, Rust, Bun, Tauri prerequisites, Protobuf compiler
# Full guide: https://docs.flow-like.com/contributing/getting-started/
git clone https://github.com/TM9657/flow-like.git
cd flow-like
mise trust && mise install   # install toolchains (Rust, Bun, Node, Python, uv)
bun install                  # install Node packages
mise run build:desktop       # production build
```

All dev / build / deploy tasks are defined in the root `mise.toml`.

Run `mise tasks` to see every available task, or `mise run <task>` to execute one:

```bash
mise run dev:desktop:mac:arm   # dev mode – macOS Apple Silicon
mise run dev:web               # dev mode – Next.js web app
mise run build:desktop         # production desktop build
mise run fix                   # auto-fix lint (clippy + fmt + biome)
mise run check                 # run all checks without fixing
```

💡 Platform-specific hints for macOS, Windows, and Linux are in the docs.

Embed the visual editor in your application, or run the engine headlessly behind the scenes.

- Themes — Catppuccin, Cosmic Night, Neo-Brutalism, Soft Pop, Doom, or create your own

- Design Tokens — Map your brand palette with dark/light mode support

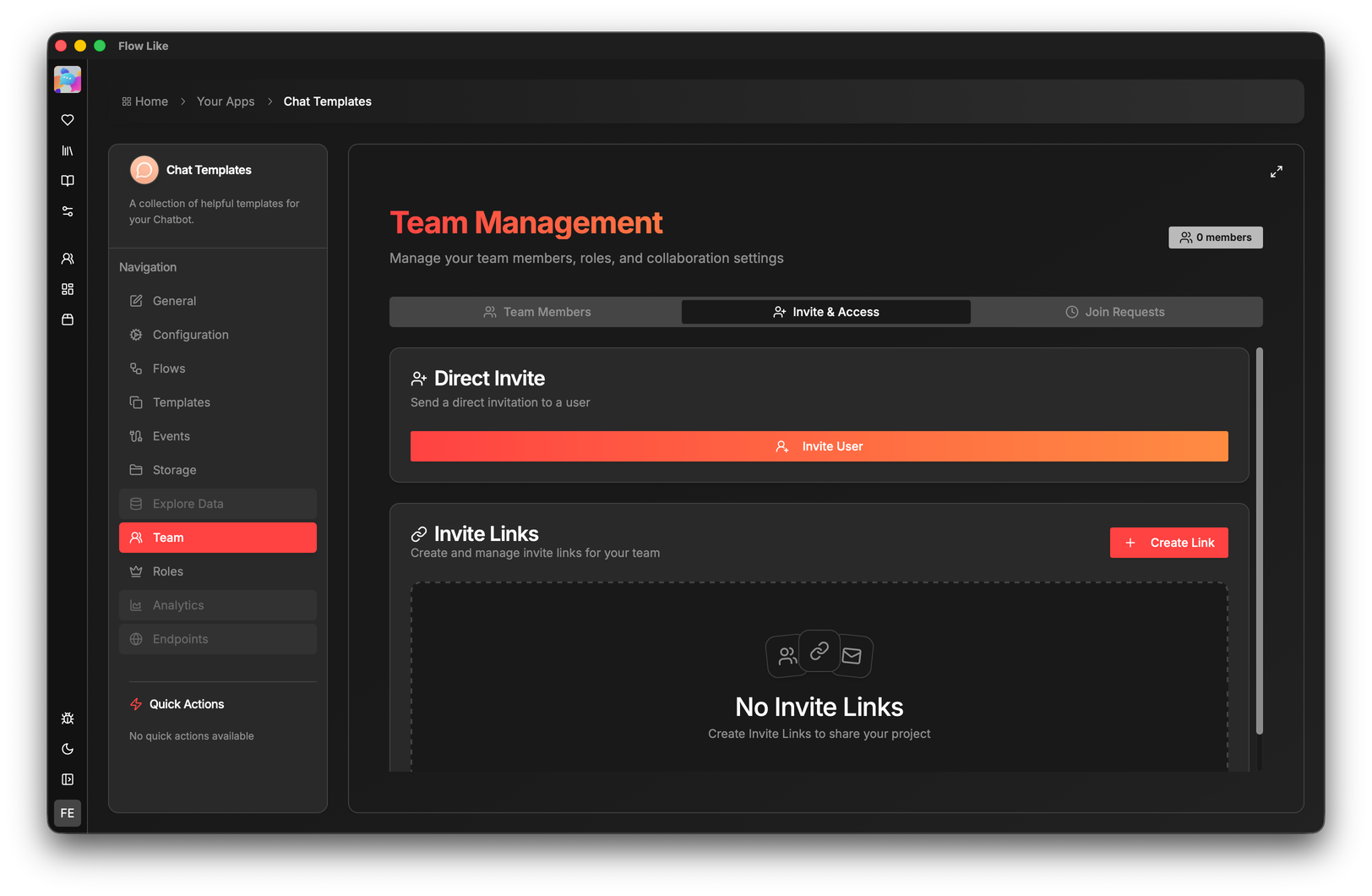

- SSO — OIDC/JWT with scoped secrets per tenant

- Usage Metering — Per-tenant quotas, event tracking, audit trails

- SDKs & APIs — Control workflows programmatically
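Per-tenant quotas and event tracking can be pictured as a counter per tenant that is checked before each execution, plus an append-only event log. The sketch below assumes a simple fixed execution quota per billing period; the `UsageMeter` class, the quota model, and the event fields are hypothetical and do not reflect Flow-Like's implementation.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class UsageMeter:
    """Hypothetical per-tenant meter: a quota check plus an append-only event log."""
    quotas: dict[str, int]  # tenant -> executions allowed in the current period
    used: defaultdict = field(default_factory=lambda: defaultdict(int))
    events: list[dict] = field(default_factory=list)

    def try_consume(self, tenant: str, workflow: str) -> bool:
        """Count one execution if the tenant is under quota; log the attempt either way."""
        allowed = self.used[tenant] < self.quotas.get(tenant, 0)
        if allowed:
            self.used[tenant] += 1
        self.events.append({"tenant": tenant, "workflow": workflow, "allowed": allowed})
        return allowed

    def reset_period(self) -> None:
        """Start a new billing period; the event log is kept as an audit trail."""
        self.used.clear()


meter = UsageMeter(quotas={"acme": 2, "globex": 1})
results = [meter.try_consume("acme", "invoice-sync") for _ in range(3)]
unknown = meter.try_consume("initech", "report")  # tenant without a configured quota
print(results, unknown)
print(meter.events[-1])
```

Tenants without a configured quota are denied by default here; that is a design choice for the sketch, and a real deployment might prefer a default plan instead.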

Integrate Flow-Like into your applications with official SDKs:

| Language | Package | Install |
|---|---|---|
| Node.js / TypeScript | @flow-like/sdk | npm install @flow-like/sdk |
| Python | flow-like | uv add flow-like |

Both SDKs support workflows, file management, LanceDB, chat completions, embeddings, and optional LangChain integration. → SDK Docs

Perfect for SaaS platforms, internal tools, client portals, and embedded automation.

We welcome contributions of all kinds — new nodes, bug fixes, docs, themes, and ideas.

→ Read CONTRIBUTING.md for setup instructions and guidelines.

→ Browse good first issue to find a place to start.

→ Join Discord for questions and discussion.

📸 Screenshots & Gallery

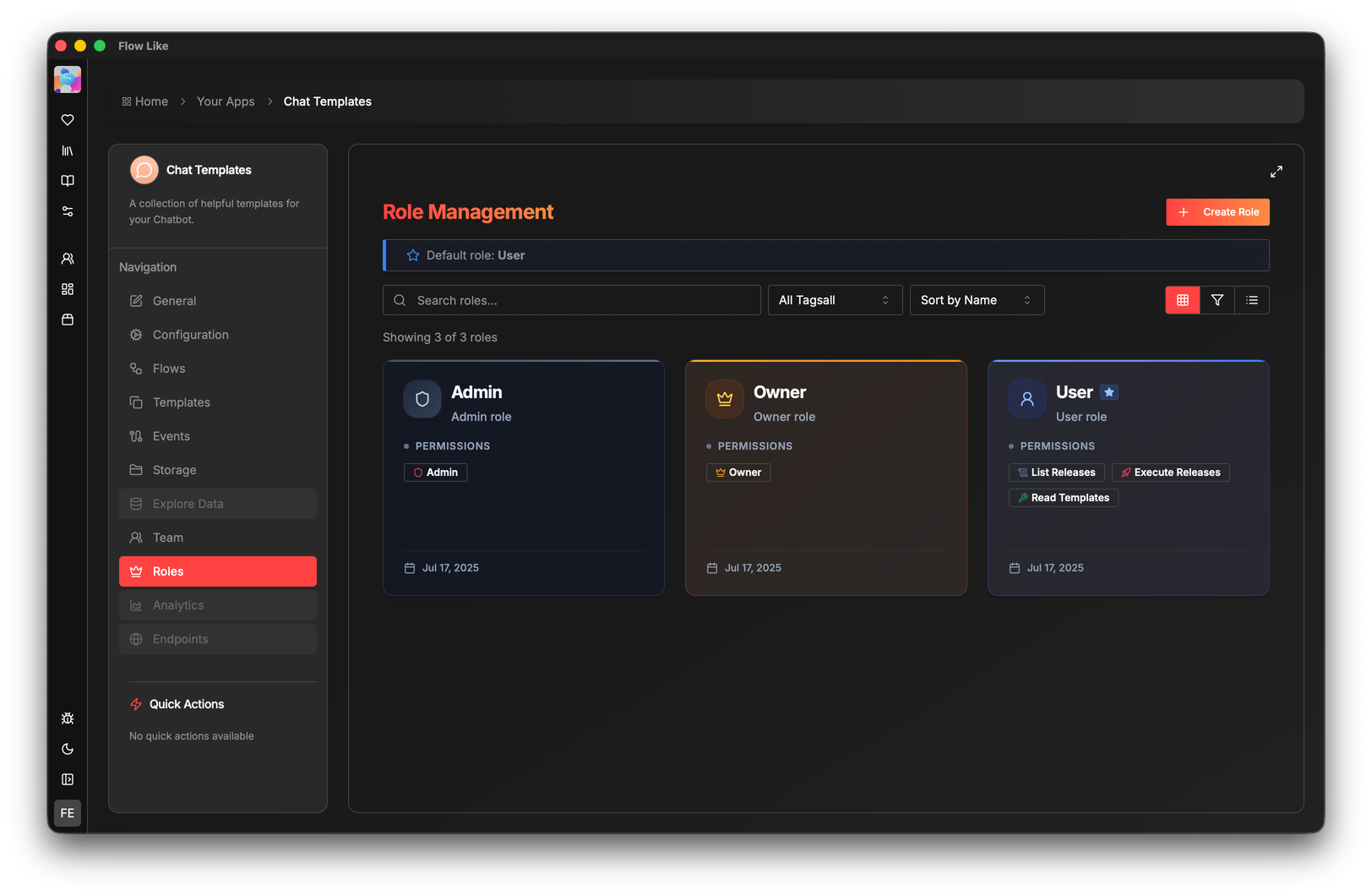

Team & Access Management

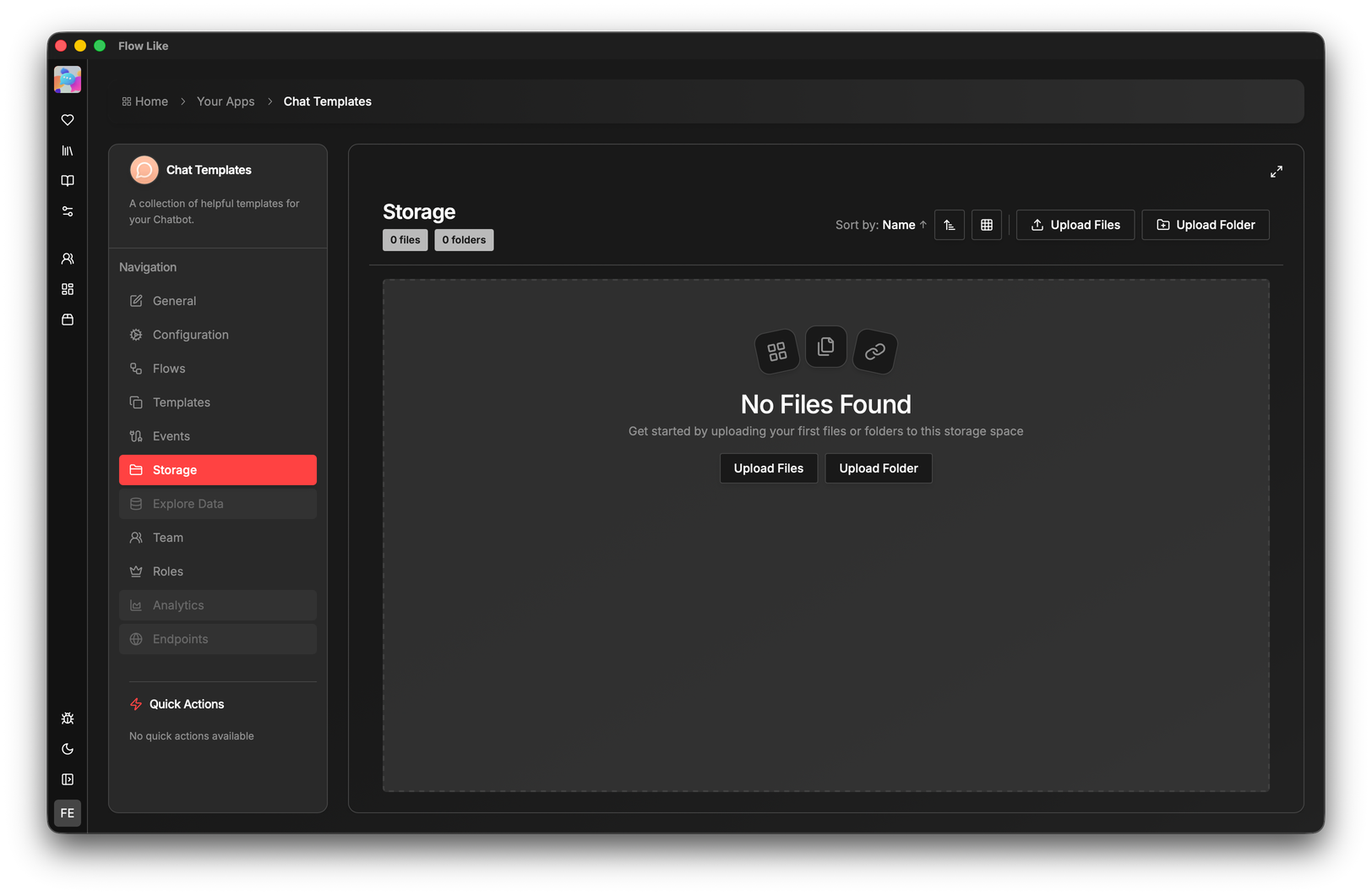

Built-in Storage & Search

Files, tables, and hybrid keyword+vector search — right on the canvas. No extra services needed.
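The README does not say how keyword and vector results are merged. A widely used technique for hybrid search is reciprocal rank fusion (RRF), which scores each document by summing 1 / (k + rank) over every ranked list it appears in, with k = 60 as a common default. The sketch below applies RRF to two already-ranked lists of hypothetical file names; it should not be read as Flow-Like's actual ranking.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[tuple[str, float]]:
    """Merge ranked lists of document IDs; documents ranked high in several lists win."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID so the output is deterministic.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


keyword_hits = ["invoice_2023.pdf", "contract.docx", "notes.txt"]  # e.g. from full-text search
vector_hits = ["contract.docx", "summary.md", "invoice_2023.pdf"]  # e.g. from embedding similarity

fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
for doc_id, score in fused:
    print(f"{score:.4f}  {doc_id}")
```

`contract.docx` comes out on top even though it is only second in the keyword list, because it ranks well in both lists. RRF only needs ranks, not comparable scores, which is why it is a popular way to combine keyword and vector retrievers.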

❓ FAQ

Is Flow-Like free to use? Most likely, yes. Flow-Like uses the Business Source License (BSL), which is free if your organization has fewer than 2,000 employees and less than $300M in annual recurring revenue. This covers startups, SMBs, and most enterprises. Read the full license.

Can I run it completely offline? Yes, 100%. Flow-Like works fully offline on your local machine — ideal for air-gapped networks and secure environments. Switch to online mode anytime to collaborate.

Can I embed it in my product? Yes. Flow-Like is white-label ready — embed the visual editor, customize the theme to your brand, integrate SSO, or run just the engine headlessly.

What languages can I use? The visual builder is no-code. For custom nodes, you write Rust. SDKs and REST APIs are available for programmatic control.

Is it production-ready? Flow-Like is actively developed and used in production. We recommend thorough testing for mission-critical workflows. See the releases page for version stability.

How do I get support? Discord for quick help, Docs for guides, or GitHub Issues for bugs and features.

🏗️ Built With

Flow-Like stands on the shoulders of incredible open-source projects:

Frontend: React Flow · Radix UI · shadcn/ui · Next.js · Tailwind CSS · Framer Motion

Desktop & Runtime: Tauri · Rust · Tokio · Axum

AI & ML: llama.cpp · Candle · ONNX Runtime

Data: Zustand · TanStack Query · Dexie.js · SeaORM · Zod

Tooling: mise · Bun · Vite · Biome

Thank you to all maintainers and contributors of these projects! 🙏

Website · Docs · Download · Blog

Made with ❤️ in Munich, Germany

License · Code of Conduct · Security


Alternative AI tools for flow-like

Similar Open Source Tools


netdata

Netdata is an open-source, real-time infrastructure monitoring platform that provides instant insights, zero configuration deployment, ML-powered anomaly detection, efficient monitoring with minimal resource usage, and secure & distributed data storage. It offers real-time, per-second updates and clear insights at a glance. Netdata's origin story involves addressing the limitations of existing monitoring tools and led to a fundamental shift in infrastructure monitoring. It is recognized as the most energy-efficient tool for monitoring Docker-based systems according to a study by the University of Amsterdam.

yournextstore

Your Next Store is an open-source Next.js e-commerce platform designed for AI development. It offers a Stripe-native integration, ultra-fast page loads, and typed APIs. The codebase follows consistent patterns, provides blazing fast performance with Next.js 16 and edge caching, and allows direct API integration without the need for plugins. With a focus on AI coding tools, Your Next Store offers familiar patterns, typed APIs for Commerce Kit SDK methods, and a well-defined domain for commerce data models, making it easier for AI models to generate accurate suggestions and write correct code.

lmms-lab-writer

LMMs-Lab Writer is an AI-native LaTeX editor designed for researchers who prioritize ideas over syntax. It offers a local-first approach with AI agents for editing assistance, one-click LaTeX setup with automatic package installation, support for multiple languages, AI-powered workflows with OpenCode integration, Git integration for modern collaboration, fully open-source with MIT license, cross-platform compatibility, and a comparison with Overleaf highlighting its advantages. The tool aims to streamline the writing and publishing process for researchers while ensuring data security and control.

lancedb

LanceDB is an open-source database for vector-search built with persistent storage, which greatly simplifies retrieval, filtering, and management of embeddings. The key features of LanceDB include: Production-scale vector search with no servers to manage. Store, query, and filter vectors, metadata, and multi-modal data (text, images, videos, point clouds, and more). Support for vector similarity search, full-text search, and SQL. Native Python and Javascript/Typescript support. Zero-copy, automatic versioning, manage versions of your data without needing extra infrastructure. GPU support in building vector index(*). Ecosystem integrations with LangChain 🦜️🔗, LlamaIndex 🦙, Apache-Arrow, Pandas, Polars, DuckDB, and more on the way. LanceDB's core is written in Rust 🦀 and is built using Lance, an open-source columnar format designed for performant ML workloads.

graphbit

GraphBit is an industry-grade agentic AI framework built for developers and AI teams that demand stability, scalability, and low resource usage. It is written in Rust for maximum performance and safety, delivering significantly lower CPU usage and memory footprint compared to leading alternatives. The framework is designed to run multi-agent workflows in parallel, persist memory across steps, recover from failures, and ensure 100% task success under load. With lightweight architecture, observability, and concurrency support, GraphBit is suitable for deployment in high-scale enterprise environments and low-resource edge scenarios.

aegra

Aegra is a self-hosted AI agent backend platform that provides LangGraph power without vendor lock-in. Built with FastAPI + PostgreSQL, it offers complete control over agent orchestration for teams looking to escape vendor lock-in, meet data sovereignty requirements, enable custom deployments, and optimize costs. Aegra is Agent Protocol compliant and perfect for teams seeking a free, self-hosted alternative to LangGraph Platform with zero lock-in, full control, and compatibility with existing LangGraph Client SDK.

agentsys

AgentSys is a modular runtime and orchestration system for AI agents, with 14 plugins, 43 agents, and 30 skills that compose into structured pipelines for software development. Each agent has a single responsibility, a specific model assignment, and defined inputs/outputs. The system runs on Claude Code, OpenCode, and Codex CLI, and plugins are fetched automatically from their repos. AgentSys orchestrates agents to handle tasks like task selection, branch management, code review, artifact cleanup, CI, PR comments, and deployment.

BharatMLStack

BharatMLStack is a comprehensive, production-ready machine learning infrastructure platform designed to democratize ML capabilities across India and beyond. It provides a robust, scalable, and accessible ML stack empowering organizations to build, deploy, and manage machine learning solutions at massive scale. It includes core components like Horizon, Trufflebox UI, Online Feature Store, Go SDK, Python SDK, and Numerix, offering features such as control plane, ML management console, real-time features, mathematical compute engine, and more. The platform is production-ready, cloud agnostic, and offers observability through built-in monitoring and logging.

MemMachine

MemMachine is an open-source long-term memory layer designed for AI agents and LLM-powered applications. It enables AI to learn, store, and recall information from past sessions, transforming stateless chatbots into personalized, context-aware assistants. With capabilities like episodic memory, profile memory, working memory, and agent memory persistence, MemMachine offers a developer-friendly API, flexible storage options, and seamless integration with various AI frameworks. It is suitable for developers, researchers, and teams needing persistent, cross-session memory for their LLM applications.

ToolJet

ToolJet is an open-source platform for building and deploying internal tools, workflows, and AI agents. It offers a visual builder with drag-and-drop UI, integrations with databases, APIs, SaaS apps, and object storage. The community edition includes features like a visual app builder, ToolJet database, multi-page apps, collaboration tools, extensibility with plugins, code execution, and security measures. ToolJet AI, the enterprise version, adds AI capabilities for app generation, query building, debugging, agent creation, security compliance, user management, environment management, GitSync, branding, access control, embedded apps, and enterprise support.

screenpipe

Screenpipe is an open source application that turns your computer into a personal AI, capturing screen and audio to create a searchable memory of your activities. It allows you to remember everything, search with AI, and keep your data 100% local. The tool is designed for knowledge workers, developers, researchers, people with ADHD, remote workers, and anyone looking for a private, local-first alternative to cloud-based AI memory tools.

proxypal

ProxyPal is a desktop app that allows users to utilize multiple AI subscriptions (such as Claude, ChatGPT, Gemini, GitHub Copilot) with any coding tool. It acts as a bridge between various AI providers and coding tools, offering features like connecting to different AI services, GitHub Copilot integration, Antigravity support, usage analytics, request monitoring, and auto-configuration. The app supports multiple platforms and clients, providing a seamless experience for developers to leverage AI capabilities in their coding workflow. ProxyPal simplifies the process of using AI models in coding environments, enhancing productivity and efficiency.

openclaw-nerve

Nerve is a self-hosted web UI for OpenClaw AI agents, offering voice conversations, live workspace editing, inline charts, cron scheduling, and full token-level visibility. It provides a dashboard for interacting with OpenClaw agents beyond messaging channels, allowing users to have a comprehensive view of their agents' activities and data. Nerve stands out with features like multilingual voice interaction, workspace visibility, responsive design, live charts integration, cron scheduling, and various other tools for managing and monitoring AI agents.

awesome-slash

Automate the entire development workflow beyond coding. awesome-slash provides production-ready skills, agents, and commands for managing tasks, branches, reviews, CI, and deployments. It automates the entire workflow, including task exploration, planning, implementation, review, and shipping. The tool includes 11 plugins, 40 agents, 26 skills, and 26k lines of lib code, with 3,357 tests and support for 3 platforms. It works with Claude Code, OpenCode, and Codex CLI, offering specialized capabilities through skills and agents.

learn-low-code-agentic-ai

This repository is dedicated to learning about Low-Code Full-Stack Agentic AI Development. It provides material for building modern AI-powered applications using a low-code full-stack approach. The main tools covered are UXPilot for UI/UX mockups, Lovable.dev for frontend applications, n8n for AI agents and workflows, Supabase for backend data storage, authentication, and vector search, and Model Context Protocol (MCP) for integration. The focus is on prompt and context engineering as the foundation for working with AI systems, enabling users to design, develop, and deploy AI-driven full-stack applications faster, smarter, and more reliably.

For similar tasks

unstract

Unstract is a no-code platform that enables users to launch APIs and ETL pipelines to structure unstructured documents. With Unstract, users can go beyond co-pilots by enabling machine-to-machine automation. Unstract's Prompt Studio provides a simple, no-code approach to creating prompts for LLMs, vector databases, embedding models, and text extractors. Users can then configure Prompt Studio projects as API deployments or ETL pipelines to automate critical business processes that involve complex documents. Unstract supports a wide range of LLM providers, vector databases, embeddings, text extractors, ETL sources, and ETL destinations, providing users with the flexibility to choose the best tools for their needs.

mslearn-knowledge-mining

The mslearn-knowledge-mining repository contains lab files for Azure AI Knowledge Mining modules. It provides resources for learning and implementing knowledge mining techniques using Azure AI services. The repository is designed to help users explore and understand how to leverage AI for knowledge mining purposes within the Azure ecosystem.

nous

Nous is an open-source TypeScript platform for autonomous AI agents and LLM based workflows. It aims to automate processes, support requests, review code, assist with refactorings, and more. The platform supports various integrations, multiple LLMs/services, CLI and web interface, human-in-the-loop interactions, flexible deployment options, observability with OpenTelemetry tracing, and specific agents for code editing, software engineering, and code review. It offers advanced features like reasoning/planning, memory and function call history, hierarchical task decomposition, and control-loop function calling options. Nous is designed to be a flexible platform for the TypeScript community to expand and support different use cases and integrations.

LLMs-in-Finance

This repository focuses on the application of Large Language Models (LLMs) in the field of finance. It provides insights and knowledge about how LLMs can be utilized in various scenarios within the finance industry, particularly in generating AI agents. The repository aims to explore the potential of LLMs to enhance financial processes and decision-making through the use of advanced natural language processing techniques.

docq

Docq is a private and secure GenAI tool designed to extract knowledge from business documents, enabling users to find answers independently. It allows data to stay within organizational boundaries, supports self-hosting with various cloud vendors, and offers multi-model and multi-modal capabilities. Docq is extensible, open-source (AGPLv3), and provides commercial licensing options. The tool aims to be a turnkey solution for organizations to adopt AI innovation safely, with plans for future features like more data ingestion options and model fine-tuning.

sophia

Sophia is an open-source TypeScript platform designed for autonomous AI agents and LLM based workflows. It aims to automate processes, review code, assist with refactorings, and support various integrations. The platform offers features like advanced autonomous agents, reasoning/planning inspired by Google's Self-Discover paper, memory and function call history, adaptive iterative planning, and more. Sophia supports multiple LLMs/services, CLI and web interface, human-in-the-loop interactions, flexible deployment options, observability with OpenTelemetry tracing, and specific agents for code editing, software engineering, and code review. It provides a flexible platform for the TypeScript community to expand and support various use cases and integrations.

Upsonic

Upsonic offers a cutting-edge enterprise-ready framework for orchestrating LLM calls, agents, and computer use to complete tasks cost-effectively. It provides reliable systems, scalability, and a task-oriented structure for real-world cases. Key features include production-ready scalability, task-centric design, MCP server support, tool-calling server, computer use integration, and easy addition of custom tools. The framework supports client-server architecture and allows seamless deployment on AWS, GCP, or locally using Docker.

clearml

ClearML is an auto-magical suite of tools designed to streamline AI workflows. It includes modules for experiment management, MLOps/LLMOps, data management, model serving, and more. ClearML offers features like experiment tracking, model serving, orchestration, and automation. It supports various ML/DL frameworks and integrates with Jupyter Notebook and PyCharm for remote debugging. ClearML aims to simplify collaboration, automate processes, and enhance visibility in AI projects.

For similar jobs

aiscript

AiScript is a lightweight scripting language that runs on JavaScript. It supports arrays, objects, and functions as first-class citizens, and is easy to write without the need for semicolons or commas. AiScript runs in a secure sandbox environment, preventing infinite loops from freezing the host. It also allows for easy provision of variables and functions from the host.

askui

AskUI is a reliable end-to-end automation tool that depends only on what is shown on your screen, not on the technology or platform you are running on.

bots

The 'bots' repository is a collection of guides, tools, and example bots for programming bots to play video games. It provides resources on running bots live, installing the BotLab client, debugging bots, testing bots in simulated environments, and more. The repository also includes example bots for games like EVE Online, Tribal Wars 2, and Elvenar. Users can learn about developing bots for specific games, syntax of the Elm programming language, and tools for memory reading development. Additionally, there are guides on bot programming, contributing to BotLab, and exploring Elm syntax and core library.

ain

Ain is a terminal HTTP API client designed for scripting input and processing output via pipes. It allows flexible organization of APIs using files and folders, supports shell-scripts and executables for common tasks, handles url-encoding, and enables sharing the resulting curl, wget, or httpie command-line. Users can put things that change in environment variables or .env-files, and pipe the API output for further processing. Ain targets users who work with many APIs using a simple file format and uses curl, wget, or httpie to make the actual calls.

LaVague

LaVague is an open-source Large Action Model framework that uses advanced AI techniques to compile natural language instructions into browser automation code. It leverages Selenium or Playwright for browser actions. Users can interact with LaVague through an interactive Gradio interface to automate web interactions. The tool requires an OpenAI API key for default examples and offers a Playwright integration guide. Contributors can help by working on outlined tasks, submitting PRs, and engaging with the community on Discord. The project roadmap is available to track progress, but users should exercise caution when executing LLM-generated code using 'exec'.

robocorp

Robocorp is a platform that allows users to create, deploy, and operate Python automations and AI actions. It provides an easy way to extend the capabilities of AI agents, assistants, and copilots with custom actions written in Python. Users can create and deploy tools, skills, loaders, and plugins that securely connect any AI Assistant platform to their data and applications. The Robocorp Action Server makes Python scripts compatible with ChatGPT and LangChain by automatically creating and exposing an API based on function declaration, type hints, and docstrings. It simplifies the process of developing and deploying AI actions, enabling users to interact with AI frameworks effortlessly.

Open-Interface

Open Interface is self-driving software that automates computer tasks by sending user requests to a language model backend (e.g., GPT-4V) and simulating keyboard and mouse inputs to execute the steps. It course-corrects by sending current screenshots to the language models. The tool supports macOS, Linux, and Windows, and requires an OpenAI API key for access to GPT-4V. It can automate tasks like creating meal plans, setting up custom language model backends, and more. Open Interface currently struggles with accurate spatial reasoning, keeping track of itself in tabular contexts, and navigating complex GUI-rich applications. Future improvements aim to enhance the tool's capabilities with better models trained on video walkthroughs. The tool is cost-effective, with user requests costing between $0.05 and $0.20, and offers features like interrupting the app and primary display visibility in multi-monitor setups.

AI-Case-Sorter-CS7.1

AI-Case-Sorter-CS7.1 is a project focused on building a case sorter using machine vision and machine learning AI to sort cases by headstamp. The repository includes Arduino code and 3D models necessary for the project.