MarkLLM

MarkLLM: An Open-Source Toolkit for LLM Watermarking (EMNLP 2024 System Demonstration)

MarkLLM is an open-source toolkit designed for watermarking technologies within large language models (LLMs). It simplifies access, understanding, and assessment of watermarking technologies, supporting various algorithms, visualization tools, and evaluation modules. The toolkit aids researchers and the community in ensuring the authenticity and origin of machine-generated text.

README:

🎉 We welcome PRs! If you have implemented an LLM watermarking algorithm or are interested in contributing one, we'd love to include it in MarkLLM. Join our community and help make text watermarking more accessible to everyone!

🔥 If you are interested in watermarking for diffusion models (image/video watermark), please refer to the MarkDiffusion toolkit from our group.

- (ICLR 2024) A Semantic Invariant Robust Watermark for Large Language Models

  Aiwei Liu, Leyi Pan, Xuming Hu, Shiao Meng, Lijie Wen

- (ICLR 2024) An Unforgeable Publicly Verifiable Watermark for Large Language Models

  Aiwei Liu, Leyi Pan, Xuming Hu, Shu'ang Li, Lijie Wen, Irwin King, Philip S. Yu

- (ACM Computing Surveys) A Survey of Text Watermarking in the Era of Large Language Models

  Aiwei Liu*, Leyi Pan*, Yijian Lu, Jingjing Li, Xuming Hu, Xi Zhang, Lijie Wen, Irwin King, Hui Xiong, Philip S. Yu

- (ICLR 2025 Spotlight) Can Watermarked LLMs be Identified by Users via Crafted Prompts?

  Aiwei Liu, Sheng Guan, Yiming Liu, Leyi Pan, Yifei Zhang, Liancheng Fang, Lijie Wen, Philip S. Yu, Xuming Hu

- (ACL 2025 Main) Can LLM Watermarks Robustly Prevent Unauthorized Knowledge Distillation?

  Leyi Pan, Aiwei Liu, Shiyu Huang, Yijian Lu, Xuming Hu, Lijie Wen, Irwin King, Philip S. Yu

- Leyi Pan, Aiwei Liu, Yijian Lu, Zitian Gao, Yichen Di, Lijie Wen, Irwin King, Philip S. Yu

- (ACL 2024 Main) An Entropy-based Text Watermarking Detection Method

  Yijian Lu, Aiwei Liu, Dianzhi Yu, Jingjing Li, Irwin King

- Zhiwei He, Binglin Zhou, Hongkun Hao, Aiwei Liu, Xing Wang, Zhaopeng Tu, Zhuosheng Zhang, Rui Wang

As the MarkLLM repository has grown in content and size, we have created a model storage repository on Hugging Face called Generative-Watermark-Toolkits to make the toolkit easier to use. It hosts the default models for watermarking algorithms that rely on self-trained models. The model weights have been removed from the corresponding model/ folders of these algorithms in the main repository. Before running the code, download the required models from the Hugging Face repository according to the config paths and save them to the model/ directory.
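Before running an algorithm that depends on a self-trained model, it can help to confirm that the downloaded files sit where the config expects them. The following is a minimal sketch and not part of MarkLLM: the helper name `find_missing_model_paths` is hypothetical, and it assumes (without confirmation from the repository) that model locations appear in an algorithm's JSON config as string values containing `model/`.

```python
import json
import os
import tempfile


def find_missing_model_paths(config_path, project_root="."):
    """Return config string values that mention 'model/' but do not exist on disk.

    Hypothetical helper: the 'model/' substring heuristic is an assumption,
    not a documented MarkLLM convention.
    """
    with open(config_path, "r", encoding="utf-8") as f:
        config = json.load(f)

    missing = []

    def walk(value):
        # Recurse through nested dicts and lists, checking every string value.
        if isinstance(value, dict):
            for v in value.values():
                walk(v)
        elif isinstance(value, list):
            for v in value:
                walk(v)
        elif isinstance(value, str) and "model/" in value:
            if not os.path.exists(os.path.join(project_root, value)):
                missing.append(value)

    walk(config)
    return missing


def demo():
    """Run the check against a made-up config in a temporary directory."""
    with tempfile.TemporaryDirectory() as root:
        os.makedirs(os.path.join(root, "model", "present"))
        config_path = os.path.join(root, "demo.json")
        with open(config_path, "w", encoding="utf-8") as f:
            json.dump({"a": "model/present", "b": {"c": "model/absent.pt"}, "d": 3}, f)
        return find_missing_model_paths(config_path, project_root=root)


if __name__ == "__main__":
    print(demo())
```

Any path the helper reports would then need to be downloaded from the Hugging Face repository and placed under model/ as described above.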

- 🎉 (2025.09.22) Add SemStamp watermark method. Thanks Huan Wang for her PR!

- 🎉 (2025.09.17) Add IE watermark method. Thanks Tianle Gu for her PR!

- 🎉 (2025.09.14) Add Watermark Stealing attack method. Thanks Shuhao Zhang for his PR!

- 🎉 (2025.07.17) Add k-SemStamp watermarking method. Thanks Huan Wang for her PR!

- 🎉 (2025.07.17) Add Adaptive Watermark watermarking method. Thanks Yepeng Liu for his PR!

- 🎉 (2025.05.24) Add MorphMark watermarking method. Thanks Zongqi Wang for his PR!

- 🎉 (2025.03.12) Add Permute-and-Flip (PF) watermarking method. Thanks Zian Wang for his PR!

- 🎉 (2025.02.27) Add δ-reweight and LLR score detection for Unbiased watermarking method.

- 🎉 (2025.01.08) Add AutoConfiguration for watermarking methods.

- 🎉 (2024.12.21) Provide example code for integrating vLLM with MarkLLM in MarkvLLM_demo.py. Thanks to @zhangjf-nlp for his PR!

- 🎉 (2024.11.21) Support distortionary version of SynthID-Text method (Nature).

- 🎉 (2024.11.03) Add SynthID-Text method (Nature) and support detection methods including mean, weighted mean, and Bayesian.

- 🎉 (2024.11.01) Add TS-Watermark method (ICML 2024). Thanks to Kyle Zheng and Minjia Huo for their PR!

- 🎉 (2024.10.07) Provide an alternative, equivalent implementation of the EXP watermarking algorithm (EXPGumbel) utilizing Gumbel noise. With this implementation, users should be able to modify the watermark strength by adjusting the sampling temperature in the configuration file.

- 🎉 (2024.10.07) Add Unbiased watermarking method.

- 🎉 (2024.10.06) We are excited to announce that our paper "MarkLLM: An Open-Source Toolkit for LLM Watermarking" has been accepted by EMNLP 2024 Demo!

- 🎉 (2024.08.08) Add DiPmark watermarking method. Thanks to Sheng Guan for his PR!

- 🎉 (2024.08.01) Released as a Python package! Try pip install markllm. We provide a user example at the end of this file.

- 🎉 (2024.07.13) Add ITSEdit watermarking method. Thanks to Yiming Liu for his PR!

- 🎉 (2024.07.09) Add more hashing schemes for KGW (skip, min, additive, selfhash). Thanks to Yichen Di for his PR!

- 🎉 (2024.07.08) Add top-k filter for watermarking methods in Christ family. Thanks to Kai Shi for his PR!

- 🎉 (2024.07.03) Updated Back-Translation Attack. Thanks to Zihan Tang for his PR!

- 🎉 (2024.06.19) Updated Random Walk Attack, from the ICML 2024 paper on impossibility results for strong watermarking (Blog). Thanks to Hanlin Zhang for his PR!

- 🎉 (2024.05.23) We're thrilled to announce the release of our website demo!
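The 2024.10.07 entry above describes EXPGumbel as an equivalent implementation of EXP that uses Gumbel noise. The sketch below illustrates why the two selection rules pick the same token at temperature 1: maximizing r_i^(1/p_i) is the same as maximizing log p_i + g_i with g_i = -log(-log r_i). This is an illustration of the general Gumbel-max identity, not MarkLLM's code. The function names are hypothetical, and a plain random generator stands in for the keyed pseudo-random values a watermark would use.

```python
import math
import random


def exp_select(probs, rs):
    """EXP-style rule: choose the index i that maximizes r_i ** (1 / p_i)."""
    # log(r_i) / p_i has the same argmax and avoids numerical underflow.
    return max(range(len(probs)), key=lambda i: math.log(rs[i]) / probs[i])


def gumbel_select(probs, rs, temperature=1.0):
    """Gumbel-max rule: add noise g_i = -log(-log(r_i)) to scaled log-probabilities."""
    def score(i):
        gumbel_noise = -math.log(-math.log(rs[i]))
        return math.log(probs[i]) / temperature + gumbel_noise
    return max(range(len(probs)), key=score)


def count_agreements(trials=200, vocab_size=8, seed=0):
    """Count trials in which both rules select the same token at temperature 1."""
    rng = random.Random(seed)
    agreements = 0
    for _ in range(trials):
        weights = [rng.random() + 0.01 for _ in range(vocab_size)]
        total = sum(weights)
        probs = [w / total for w in weights]
        # Clamp to the open interval (0, 1) so both logarithms are defined.
        rs = [min(max(rng.random(), 1e-12), 1 - 1e-12) for _ in range(vocab_size)]
        agreements += exp_select(probs, rs) == gumbel_select(probs, rs)
    return agreements


if __name__ == "__main__":
    print(count_agreements(), "of 200 trials agree")
```

In the Gumbel form, dividing the log-probabilities by a temperature other than 1 changes how strongly the noise dominates the choice, which is presumably the knob that entry refers to.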

MarkLLM is an open-source toolkit developed to facilitate the research and application of watermarking technologies within large language models (LLMs). As the use of LLMs expands, ensuring the authenticity and origin of machine-generated text becomes critical. MarkLLM simplifies the access, understanding, and assessment of watermarking technologies, making them accessible to both researchers and the broader community.

- Implementation Framework: MarkLLM provides a unified and extensible platform for the implementation of various LLM watermarking algorithms. It currently supports nine specific algorithms from two prominent families, facilitating the integration and expansion of watermarking techniques.

Framework Design:

Currently Supported Algorithms:

- Visualization Solutions: The toolkit includes custom visualization tools that enable clear and insightful views into how different watermarking algorithms operate under various scenarios. These visualizations help demystify the algorithms' mechanisms, making them more understandable for users.

- Evaluation Module: With 12 evaluation tools that cover detectability, robustness, and impact on text quality, MarkLLM stands out in its comprehensive approach to assessing watermarking technologies. It also features customizable automated evaluation pipelines that cater to diverse needs and scenarios, enhancing the toolkit's practical utility.

Tools:

- Success Rate Calculator of Watermark Detection: FundamentalSuccessRateCalculator, DynamicThresholdSuccessRateCalculator

- Text Editor: WordDeletion, SynonymSubstitution, ContextAwareSynonymSubstitution, GPTParaphraser, DipperParaphraser, RandomWalkAttack

- Text Quality Analyzer: PPLCalculator, LogDiversityAnalyzer, BLEUCalculator, PassOrNotJudger, GPTDiscriminator

Pipelines:

- Watermark Detection Pipeline: WatermarkedTextDetectionPipeline, UnWatermarkedTextDetectionPipeline

- Text Quality Pipeline: DirectTextQualityAnalysisPipeline, ReferencedTextQualityAnalysisPipeline, ExternalDiscriminatorTextQualityAnalysisPipeline
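A success rate calculator turns two lists of detection scores, one from watermarked text and one from unwatermarked text, into metrics such as TPR and F1. As a rough illustration of what choosing a threshold by best F1 could look like, here is a standalone sketch. `best_f1_threshold` is a hypothetical function written for this example. It is not the implementation of DynamicThresholdSuccessRateCalculator.

```python
def best_f1_threshold(watermarked_scores, unwatermarked_scores):
    """Scan candidate thresholds and return the metrics at the highest F1.

    A text is predicted as watermarked when its score is >= threshold.
    Ties are resolved in favor of the lowest threshold.
    """
    candidates = sorted(set(watermarked_scores) | set(unwatermarked_scores))
    best = {"threshold": None, "TPR": 0.0, "FPR": 0.0, "F1": -1.0}
    for t in candidates:
        tp = sum(s >= t for s in watermarked_scores)
        fn = len(watermarked_scores) - tp
        fp = sum(s >= t for s in unwatermarked_scores)
        tn = len(unwatermarked_scores) - fp
        precision = tp / (tp + fp) if tp + fp else 0.0
        tpr = tp / (tp + fn) if tp + fn else 0.0
        fpr = fp / (fp + tn) if fp + tn else 0.0
        f1 = 2 * precision * tpr / (precision + tpr) if precision + tpr else 0.0
        if f1 > best["F1"]:
            best = {"threshold": t, "TPR": tpr, "FPR": fpr, "F1": f1}
    return best


if __name__ == "__main__":
    # Made-up scores for illustration only.
    print(best_f1_threshold([4.2, 5.1, 6.3], [0.1, -0.5, 1.2]))
```

Selecting the threshold on the same data used for reporting gives an optimistic estimate, so a fixed threshold or a target false positive rate is often reported alongside it.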

- Python 3.10

- PyTorch

- pip install -r requirements.txt

Tips: If you wish to use the EXPEdit or ITSEdit algorithm, you will need to build the corresponding .pyx (Cython) file first. Taking EXPEdit as an example:

- run python watermark/exp_edit/cython_files/setup.py build_ext --inplace

- move the generated .so file into watermark/exp_edit/cython_files/

import torch

from watermark.auto_watermark import AutoWatermark

from utils.transformers_config import TransformersConfig

from transformers import AutoModelForCausalLM, AutoTokenizer

# Device

device = "cuda" if torch.cuda.is_available() else "cpu"

# Transformers config

transformers_config = TransformersConfig(model=AutoModelForCausalLM.from_pretrained('facebook/opt-1.3b').to(device),

tokenizer=AutoTokenizer.from_pretrained('facebook/opt-1.3b'),

vocab_size=50272,

device=device,

max_new_tokens=200,

min_length=230,

do_sample=True,

no_repeat_ngram_size=4)

# Load watermark algorithm

myWatermark = AutoWatermark.load('KGW',

algorithm_config='config/KGW.json',

transformers_config=transformers_config)

# Prompt

prompt = 'Good Morning.'

# Generate and detect

watermarked_text = myWatermark.generate_watermarked_text(prompt)

detect_result = myWatermark.detect_watermark(watermarked_text)

unwatermarked_text = myWatermark.generate_unwatermarked_text(prompt)

detect_result = myWatermark.detect_watermark(unwatermarked_text)

Assuming you already have a pair of watermarked_text and unwatermarked_text and wish to visualize their differences, specifically highlighting the watermark within the watermarked text using a watermarking algorithm, you can use the visualization tools available in the visualize/ directory.
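For a KGW-style watermark, what such a visualization highlights is, roughly, which tokens fall into a pseudo-random "green list" seeded by the preceding token. The toy sketch below illustrates that idea and the associated z-score on integer token ids. It is not MarkLLM's implementation: the hashing scheme, the key, the `gamma` value, and all function names are assumptions made for illustration, and the generator applies a "hard" watermark rather than a logit bias.

```python
import hashlib
import math


def is_green(prev_token, token, gamma=0.5, key=b"demo-key"):
    """Toy green-list test: hash (key, prev_token, token) into [0, 1) and compare with gamma."""
    digest = hashlib.sha256(key + f"{prev_token}:{token}".encode()).digest()
    value = int.from_bytes(digest[:8], "big") / 2**64
    return value < gamma


def green_flags(token_ids, gamma=0.5):
    """Per-token flags (from the second token on), the kind of data a discrete view would color."""
    return [is_green(p, t, gamma) for p, t in zip(token_ids, token_ids[1:])]


def z_score(token_ids, gamma=0.5):
    """z = (green_count - gamma * T) / sqrt(T * gamma * (1 - gamma)) over T scored tokens."""
    flags = green_flags(token_ids, gamma)
    n = len(flags)
    if n == 0:
        raise ValueError("need at least two tokens to score")
    return (sum(flags) - gamma * n) / math.sqrt(n * gamma * (1 - gamma))


def generate_hard_watermarked(length, vocab_size=50, gamma=0.5, start=0):
    """Toy generator that always emits the first green token for the current context."""
    ids = [start]
    for _ in range(length):
        nxt = next((t for t in range(vocab_size) if is_green(ids[-1], t, gamma)), 0)
        ids.append(nxt)
    return ids


if __name__ == "__main__":
    ids = generate_hard_watermarked(40)
    print(green_flags(ids)[:10], round(z_score(ids), 2))
```

Unwatermarked token sequences should land in the green list about a `gamma` fraction of the time, so their z-scores stay near zero, while watermarked sequences score well above it.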

KGW Family

import torch

from visualize.font_settings import FontSettings

from watermark.auto_watermark import AutoWatermark

from utils.transformers_config import TransformersConfig

from transformers import AutoModelForCausalLM, AutoTokenizer

from visualize.visualizer import DiscreteVisualizer

from visualize.legend_settings import DiscreteLegendSettings

from visualize.page_layout_settings import PageLayoutSettings

from visualize.color_scheme import ColorSchemeForDiscreteVisualization

# Load watermark algorithm

device = "cuda" if torch.cuda.is_available() else "cpu"

transformers_config = TransformersConfig(

model=AutoModelForCausalLM.from_pretrained('facebook/opt-1.3b').to(device),

tokenizer=AutoTokenizer.from_pretrained('facebook/opt-1.3b'),

vocab_size=50272,

device=device,

max_new_tokens=200,

min_length=230,

do_sample=True,

no_repeat_ngram_size=4)

myWatermark = AutoWatermark.load('KGW',

algorithm_config='config/KGW.json',

transformers_config=transformers_config)

# Get data for visualization

watermarked_data = myWatermark.get_data_for_visualization(watermarked_text)

unwatermarked_data = myWatermark.get_data_for_visualization(unwatermarked_text)

# Init visualizer

visualizer = DiscreteVisualizer(color_scheme=ColorSchemeForDiscreteVisualization(),

font_settings=FontSettings(),

page_layout_settings=PageLayoutSettings(),

legend_settings=DiscreteLegendSettings())

# Visualize

watermarked_img = visualizer.visualize(data=watermarked_data,

show_text=True,

visualize_weight=True,

display_legend=True)

unwatermarked_img = visualizer.visualize(data=unwatermarked_data,

show_text=True,

visualize_weight=True,

display_legend=True)

# Save

watermarked_img.save("KGW_watermarked.png")

unwatermarked_img.save("KGW_unwatermarked.png")

Christ Family

import torch

from visualize.font_settings import FontSettings

from watermark.auto_watermark import AutoWatermark

from utils.transformers_config import TransformersConfig

from transformers import AutoModelForCausalLM, AutoTokenizer

from visualize.visualizer import ContinuousVisualizer

from visualize.legend_settings import ContinuousLegendSettings

from visualize.page_layout_settings import PageLayoutSettings

from visualize.color_scheme import ColorSchemeForContinuousVisualization

# Load watermark algorithm

device = "cuda" if torch.cuda.is_available() else "cpu"

transformers_config = TransformersConfig(

model=AutoModelForCausalLM.from_pretrained('facebook/opt-1.3b').to(device),

tokenizer=AutoTokenizer.from_pretrained('facebook/opt-1.3b'),

vocab_size=50272,

device=device,

max_new_tokens=200,

min_length=230,

do_sample=True,

no_repeat_ngram_size=4)

myWatermark = AutoWatermark.load('EXP',

algorithm_config='config/EXP.json',

transformers_config=transformers_config)

# Get data for visualization

watermarked_data = myWatermark.get_data_for_visualization(watermarked_text)

unwatermarked_data = myWatermark.get_data_for_visualization(unwatermarked_text)

# Init visualizer

visualizer = ContinuousVisualizer(color_scheme=ColorSchemeForContinuousVisualization(),

font_settings=FontSettings(),

page_layout_settings=PageLayoutSettings(),

legend_settings=ContinuousLegendSettings())

# Visualize

watermarked_img = visualizer.visualize(data=watermarked_data,

show_text=True,

visualize_weight=True,

display_legend=True)

unwatermarked_img = visualizer.visualize(data=unwatermarked_data,

show_text=True,

visualize_weight=True,

display_legend=True)

# Save

watermarked_img.save("EXP_watermarked.png")

unwatermarked_img.save("EXP_unwatermarked.png")

For more examples on how to use the visualization tools, please refer to the test/test_visualize.py script in the project directory.

Using Watermark Detection Pipelines

import torch

from evaluation.dataset import C4Dataset

from watermark.auto_watermark import AutoWatermark

from utils.transformers_config import TransformersConfig

from transformers import AutoModelForCausalLM, AutoTokenizer

from evaluation.tools.text_editor import TruncatePromptTextEditor, WordDeletion

from evaluation.tools.success_rate_calculator import DynamicThresholdSuccessRateCalculator

from evaluation.pipelines.detection import WatermarkedTextDetectionPipeline, UnWatermarkedTextDetectionPipeline, DetectionPipelineReturnType

# Load dataset

my_dataset = C4Dataset('dataset/c4/processed_c4.json')

# Device

device = 'cuda' if torch.cuda.is_available() else 'cpu'

# Transformers config

transformers_config = TransformersConfig(

model=AutoModelForCausalLM.from_pretrained('facebook/opt-1.3b').to(device),

tokenizer=AutoTokenizer.from_pretrained('facebook/opt-1.3b'),

vocab_size=50272,

device=device,

max_new_tokens=200,

do_sample=True,

min_length=230,

no_repeat_ngram_size=4)

# Load watermark algorithm

my_watermark = AutoWatermark.load('KGW',

algorithm_config='config/KGW.json',

transformers_config=transformers_config)

# Init pipelines

pipeline1 = WatermarkedTextDetectionPipeline(

dataset=my_dataset,

text_editor_list=[TruncatePromptTextEditor(), WordDeletion(ratio=0.3)],

show_progress=True,

return_type=DetectionPipelineReturnType.SCORES)

pipeline2 = UnWatermarkedTextDetectionPipeline(dataset=my_dataset,

text_editor_list=[],

show_progress=True,

return_type=DetectionPipelineReturnType.SCORES)

# Evaluate

calculator = DynamicThresholdSuccessRateCalculator(labels=['TPR', 'F1'], rule='best')

print(calculator.calculate(pipeline1.evaluate(my_watermark), pipeline2.evaluate(my_watermark)))

Using Text Quality Analysis Pipeline

import torch

from evaluation.dataset import C4Dataset

from watermark.auto_watermark import AutoWatermark

from utils.transformers_config import TransformersConfig

from transformers import AutoModelForCausalLM, AutoTokenizer, LlamaTokenizer

from evaluation.tools.text_editor import TruncatePromptTextEditor

from evaluation.tools.text_quality_analyzer import PPLCalculator

from evaluation.pipelines.quality_analysis import DirectTextQualityAnalysisPipeline, QualityPipelineReturnType

# Load dataset

my_dataset = C4Dataset('dataset/c4/processed_c4.json')

# Device

device = 'cuda' if torch.cuda.is_available() else 'cpu'

# Transformer config

transformers_config = TransformersConfig(

model=AutoModelForCausalLM.from_pretrained('facebook/opt-1.3b').to(device), tokenizer=AutoTokenizer.from_pretrained('facebook/opt-1.3b'),

vocab_size=50272,

device=device,

max_new_tokens=200,

min_length=230,

do_sample=True,

no_repeat_ngram_size=4)

# Load watermark algorithm

my_watermark = AutoWatermark.load('KGW',

algorithm_config='config/KGW.json',

transformers_config=transformers_config)

# Init pipeline

quality_pipeline = DirectTextQualityAnalysisPipeline(

dataset=my_dataset,

watermarked_text_editor_list=[TruncatePromptTextEditor()],

unwatermarked_text_editor_list=[],

analyzer=PPLCalculator(

model=AutoModelForCausalLM.from_pretrained('..model/llama-7b/', device_map='auto'), tokenizer=LlamaTokenizer.from_pretrained('..model/llama-7b/'),

device=device),

unwatermarked_text_source='natural',

show_progress=True,

return_type=QualityPipelineReturnType.MEAN_SCORES)

# Evaluate

print(quality_pipeline.evaluate(my_watermark))

For more examples on how to use the pipelines, please refer to the test/test_pipeline.py script in the project directory.
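The text quality example above uses PPLCalculator as its analyzer. As a reminder of what perplexity measures, the sketch below computes it from per-token log-probabilities. The `perplexity` function is a hypothetical standalone helper and does not reproduce PPLCalculator's implementation. In practice the log-probabilities would come from the scoring language model.

```python
import math


def perplexity(token_log_probs):
    """Perplexity = exp(-mean natural-log probability) over the scored tokens."""
    if not token_log_probs:
        raise ValueError("need at least one token log-probability")
    return math.exp(-sum(token_log_probs) / len(token_log_probs))


if __name__ == "__main__":
    # A model that assigns probability 0.25 to every token has perplexity 4.
    print(perplexity([math.log(0.25)] * 4))
```

Lower perplexity means the scoring model finds the text more predictable, which is why it is commonly compared between watermarked and unwatermarked text.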

Leveraging example scripts for evaluation

In the evaluation/examples/ directory of our repository, you will find a collection of Python scripts specifically designed for systematic and automated evaluation of various algorithms. By using these examples, you can quickly and effectively gauge the detectability, robustness, and impact on text quality of each algorithm implemented within our toolkit.

Note: To execute the scripts in evaluation/examples/, first run the following command to set the environment variables.

export PYTHONPATH="path_to_the_MarkLLM_project:$PYTHONPATH"

Additional user examples are available in test/. To execute the scripts contained within, first run the following command to set the environment variables.

export PYTHONPATH="path_to_the_MarkLLM_project:$PYTHONPATH"

In addition to the Colab Jupyter notebook we provide (some models cannot be downloaded due to storage limits), you can also easily deploy using MarkLLM_demo.ipynb on your local machine.

@inproceedings{pan-etal-2024-markllm,

title = "{M}ark{LLM}: An Open-Source Toolkit for {LLM} Watermarking",

author = "Pan, Leyi and

Liu, Aiwei and

He, Zhiwei and

Gao, Zitian and

Zhao, Xuandong and

Lu, Yijian and

Zhou, Binglin and

Liu, Shuliang and

Hu, Xuming and

Wen, Lijie and

King, Irwin and

Yu, Philip S.",

editor = "Hernandez Farias, Delia Irazu and

Hope, Tom and

Li, Manling",

booktitle = "Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing: System Demonstrations",

month = nov,

year = "2024",

address = "Miami, Florida, USA",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2024.emnlp-demo.7",

pages = "61--71",

abstract = "Watermarking for Large Language Models (LLMs), which embeds imperceptible yet algorithmically detectable signals in model outputs to identify LLM-generated text, has become crucial in mitigating the potential misuse of LLMs. However, the abundance of LLM watermarking algorithms, their intricate mechanisms, and the complex evaluation procedures and perspectives pose challenges for researchers and the community to easily understand, implement and evaluate the latest advancements. To address these issues, we introduce MarkLLM, an open-source toolkit for LLM watermarking. MarkLLM offers a unified and extensible framework for implementing LLM watermarking algorithms, while providing user-friendly interfaces to ensure ease of access. Furthermore, it enhances understanding by supporting automatic visualization of the underlying mechanisms of these algorithms. For evaluation, MarkLLM offers a comprehensive suite of 12 tools spanning three perspectives, along with two types of automated evaluation pipelines. Through MarkLLM, we aim to support researchers while improving the comprehension and involvement of the general public in LLM watermarking technology, fostering consensus and driving further advancements in research and application. Our code is available at https://github.com/THU-BPM/MarkLLM.",

}

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for MarkLLM

Similar Open Source Tools

MarkLLM

MarkLLM is an open-source toolkit designed for watermarking technologies within large language models (LLMs). It simplifies access, understanding, and assessment of watermarking technologies, supporting various algorithms, visualization tools, and evaluation modules. The toolkit aids researchers and the community in ensuring the authenticity and origin of machine-generated text.

superlinked

Superlinked is a compute framework for information retrieval and feature engineering systems, focusing on converting complex data into vector embeddings for RAG, Search, RecSys, and Analytics stack integration. It enables custom model performance in machine learning with pre-trained model convenience. The tool allows users to build multimodal vectors, define weights at query time, and avoid postprocessing & rerank requirements. Users can explore the computational model through simple scripts and python notebooks, with a future release planned for production usage with built-in data infra and vector database integrations.

superagentx

SuperAgentX is a lightweight open-source AI framework designed for multi-agent applications with Artificial General Intelligence (AGI) capabilities. It offers goal-oriented multi-agents with retry mechanisms, easy deployment through WebSocket, RESTful API, and IO console interfaces, streamlined architecture with no major dependencies, contextual memory using SQL + Vector databases, flexible LLM configuration supporting various Gen AI models, and extendable handlers for integration with diverse APIs and data sources. It aims to accelerate the development of AGI by providing a powerful platform for building autonomous AI agents capable of executing complex tasks with minimal human intervention.

GPTSwarm

GPTSwarm is a graph-based framework for LLM-based agents that enables the creation of LLM-based agents from graphs and facilitates the customized and automatic self-organization of agent swarms with self-improvement capabilities. The library includes components for domain-specific operations, graph-related functions, LLM backend selection, memory management, and optimization algorithms to enhance agent performance and swarm efficiency. Users can quickly run predefined swarms or utilize tools like the file analyzer. GPTSwarm supports local LM inference via LM Studio, allowing users to run with a local LLM model. The framework has been accepted by ICML2024 and offers advanced features for experimentation and customization.

crawl4ai

Crawl4AI is a powerful and free web crawling service that extracts valuable data from websites and provides LLM-friendly output formats. It supports crawling multiple URLs simultaneously, replaces media tags with ALT, and is completely free to use and open-source. Users can integrate Crawl4AI into Python projects as a library or run it as a standalone local server. The tool allows users to crawl and extract data from specified URLs using different providers and models, with options to include raw HTML content, force fresh crawls, and extract meaningful text blocks. Configuration settings can be adjusted in the `crawler/config.py` file to customize providers, API keys, chunk processing, and word thresholds. Contributions to Crawl4AI are welcome from the open-source community to enhance its value for AI enthusiasts and developers.

LLM4Decompile

LLM4Decompile is an open-source large language model dedicated to decompilation of Linux x86_64 binaries, supporting GCC's O0 to O3 optimization levels. It focuses on assessing re-executability of decompiled code through HumanEval-Decompile benchmark. The tool includes models with sizes ranging from 1.3 billion to 33 billion parameters, available on Hugging Face. Users can preprocess C code into binary and assembly instructions, then decompile assembly instructions into C using LLM4Decompile. Ongoing efforts aim to expand capabilities to support more architectures and configurations, integrate with decompilation tools like Ghidra and Rizin, and enhance performance with larger training datasets.

executorch

ExecuTorch is an end-to-end solution for enabling on-device inference capabilities across mobile and edge devices including wearables, embedded devices and microcontrollers. It is part of the PyTorch Edge ecosystem and enables efficient deployment of PyTorch models to edge devices. Key value propositions of ExecuTorch are: * **Portability:** Compatibility with a wide variety of computing platforms, from high-end mobile phones to highly constrained embedded systems and microcontrollers. * **Productivity:** Enabling developers to use the same toolchains and SDK from PyTorch model authoring and conversion, to debugging and deployment to a wide variety of platforms. * **Performance:** Providing end users with a seamless and high-performance experience due to a lightweight runtime and utilizing full hardware capabilities such as CPUs, NPUs, and DSPs.

indexify

Indexify is an open-source engine for building fast data pipelines for unstructured data (video, audio, images, and documents) using reusable extractors for embedding, transformation, and feature extraction. LLM Applications can query transformed content friendly to LLMs by semantic search and SQL queries. Indexify keeps vector databases and structured databases (PostgreSQL) updated by automatically invoking the pipelines as new data is ingested into the system from external data sources. **Why use Indexify** * Makes Unstructured Data **Queryable** with **SQL** and **Semantic Search** * **Real-Time** Extraction Engine to keep indexes **automatically** updated as new data is ingested. * Create **Extraction Graph** to describe **data transformation** and extraction of **embedding** and **structured extraction**. * **Incremental Extraction** and **Selective Deletion** when content is deleted or updated. * **Extractor SDK** allows adding new extraction capabilities, and many readily available extractors for **PDF**, **Image**, and **Video** indexing and extraction. * Works with **any LLM Framework** including **Langchain**, **DSPy**, etc. * Runs on your laptop during **prototyping** and also scales to **1000s of machines** on the cloud. * Works with many **Blob Stores**, **Vector Stores**, and **Structured Databases** * We have even **Open Sourced Automation** to deploy to Kubernetes in production.

mem0

Mem0 is a tool that provides a smart, self-improving memory layer for Large Language Models, enabling personalized AI experiences across applications. It offers persistent memory for users, sessions, and agents, self-improving personalization, a simple API for easy integration, and cross-platform consistency. Users can store memories, retrieve memories, search for related memories, update memories, get the history of a memory, and delete memories using Mem0. It is designed to enhance AI experiences by enabling long-term memory storage and retrieval.

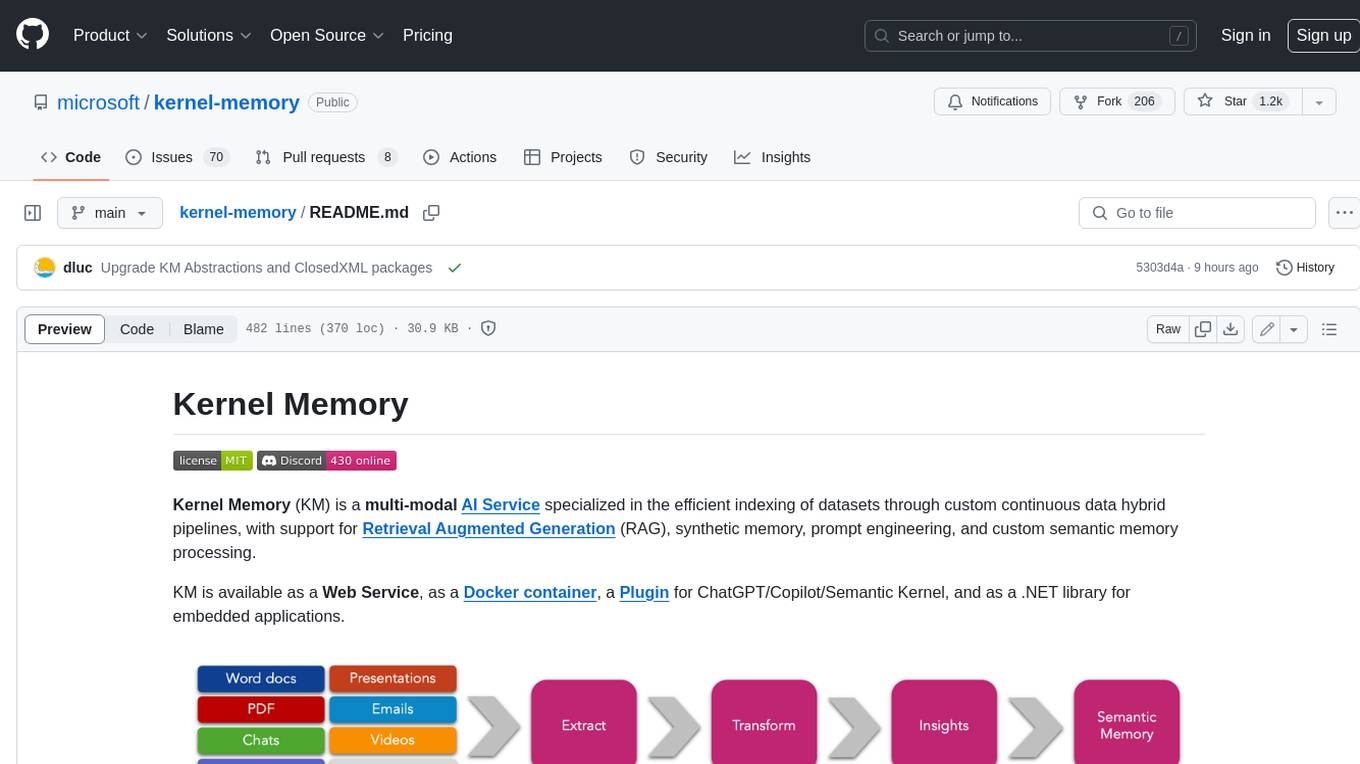

kernel-memory

Kernel Memory (KM) is a multi-modal AI Service specialized in the efficient indexing of datasets through custom continuous data hybrid pipelines, with support for Retrieval Augmented Generation (RAG), synthetic memory, prompt engineering, and custom semantic memory processing. KM is available as a Web Service, as a Docker container, a Plugin for ChatGPT/Copilot/Semantic Kernel, and as a .NET library for embedded applications. Utilizing advanced embeddings and LLMs, the system enables Natural Language querying for obtaining answers from the indexed data, complete with citations and links to the original sources. Designed for seamless integration as a Plugin with Semantic Kernel, Microsoft Copilot and ChatGPT, Kernel Memory enhances data-driven features in applications built for most popular AI platforms.

AI-Infra-Guard

A.I.G (AI-Infra-Guard) is an AI red teaming platform by Tencent Zhuque Lab that integrates capabilities such as AI infra vulnerability scan, MCP Server risk scan, and Jailbreak Evaluation. It aims to provide users with a comprehensive, intelligent, and user-friendly solution for AI security risk self-examination. The platform offers features like AI Infra Scan, AI Tool Protocol Scan, and Jailbreak Evaluation, along with a modern web interface, complete API, multi-language support, cross-platform deployment, and being free and open-source under the MIT license.

trpc-agent-go

A powerful Go framework for building intelligent agent systems with large language models (LLMs), hierarchical planners, memory, telemetry, and a rich tool ecosystem. tRPC-Agent-Go enables the creation of autonomous or semi-autonomous agents that reason, call tools, collaborate with sub-agents, and maintain long-term state. The framework provides detailed documentation, examples, and tools for accelerating the development of AI applications.

agentscope

AgentScope is an agent-oriented programming tool for building LLM (Large Language Model) applications. It provides transparent development, realtime steering, agentic tools management, model agnostic programming, LEGO-style agent building, multi-agent support, and high customizability. The tool supports async invocation, reasoning models, streaming returns, async/sync tool functions, user interruption, group-wise tools management, streamable transport, stateful/stateless mode MCP client, distributed and parallel evaluation, multi-agent conversation management, and fine-grained MCP control. AgentScope Studio enables tracing and visualization of agent applications. The tool is highly customizable and encourages customization at various levels.

DeepMesh

DeepMesh is an auto-regressive artist-mesh creation tool that utilizes reinforcement learning to generate high-quality meshes conditioned on a given point cloud. It offers pretrained weights and allows users to generate obj/ply files based on specific input parameters. The tool has been tested on Ubuntu 22 with CUDA 11.8 and supports A100, A800, and A6000 GPUs. Users can clone the repository, create a conda environment, install pretrained model weights, and use command line inference to generate meshes.

lancedb

LanceDB is an open-source database for vector-search built with persistent storage, which greatly simplifies retrieval, filtering, and management of embeddings. The key features of LanceDB include: Production-scale vector search with no servers to manage. Store, query, and filter vectors, metadata, and multi-modal data (text, images, videos, point clouds, and more). Support for vector similarity search, full-text search, and SQL. Native Python and Javascript/Typescript support. Zero-copy, automatic versioning, manage versions of your data without needing extra infrastructure. GPU support in building vector index(*). Ecosystem integrations with LangChain 🦜️🔗, LlamaIndex 🦙, Apache-Arrow, Pandas, Polars, DuckDB, and more on the way. LanceDB's core is written in Rust 🦀 and is built using Lance, an open-source columnar format designed for performant ML workloads.

CrackSQL

CrackSQL is a powerful SQL dialect translation tool that integrates rule-based strategies with large language models (LLMs) for high accuracy. It enables seamless conversion between dialects (e.g., PostgreSQL → MySQL) with flexible access through Python API, command line, and web interface. The tool supports extensive dialect compatibility, precision & advanced processing, and versatile access & integration. It offers three modes for dialect translation and demonstrates high translation accuracy over collected benchmarks. Users can deploy CrackSQL using PyPI package installation or source code installation methods. The tool can be extended to support additional syntax, new dialects, and improve translation efficiency. The project is actively maintained and welcomes contributions from the community.

For similar tasks

MarkLLM

MarkLLM is an open-source toolkit designed for watermarking technologies within large language models (LLMs). It simplifies access, understanding, and assessment of watermarking technologies, supporting various algorithms, visualization tools, and evaluation modules. The toolkit aids researchers and the community in ensuring the authenticity and origin of machine-generated text.

langkit

LangKit is an open-source text metrics toolkit for monitoring language models. It offers methods for extracting signals from input/output text, compatible with whylogs. Features include text quality, relevance, security, sentiment, toxicity analysis. Installation via PyPI. Modules contain UDFs for whylogs. Benchmarks show throughput on AWS instances. FAQs available.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features: * Self-contained, with no need for a DBMS or cloud service. * OpenAPI interface, easy to integrate with existing infrastructure (e.g Cloud IDE). * Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.