Best AI tools for Slow Aging

9 - AI tool Sites

bigmp4

bigmp4 is an AI-powered video enhancement tool that uses cutting-edge AI models to enhance the quality of videos. It offers features such as lossless video enlargement, video enhancement, AI interpolation to make videos more vivid, black and white video colorization, and smooth slow motion. The tool supports various file formats, including mp4, mov, mkv, m4v, mpg, mpeg, avi, jpg, png, bmp, webp, and gif. It also supports batch mode processing, allowing users to upload multiple files for simultaneous processing.

Cimphony

Cimphony is an AI legal tool designed for startups and small businesses. It offers legal counsel, employment/HR support, and drafting and review of business contracts, with fundraise management listed as an upcoming feature. The platform uses AI to handle corporate needs, equity financing, contracts, and blockchain-specific issues with transparent, fair pricing. Cimphony aims to modernize corporate legal services by providing a transparent view into legal work, faster delivery of legal advice, and flat-rate pricing for better planning.

Contentful

Contentful is a next-gen digital experience platform that offers a modular and composable architecture to manage and deliver personalized content efficiently. It provides AI capabilities for content creation, localization, and personalization, enabling users to drive higher omnichannel engagement and optimize performance at a granular level. With features like centralized content management, faster workflows, and no-code personalization tools, Contentful empowers growth teams to create consistent, on-brand experiences across multiple channels and regions. The platform is user-friendly, allowing marketers to tweak, test, and tailor experiences in real time without developer assistance.

Evinced

Evinced is an AI-powered accessibility tool that helps developers identify and address accessibility issues in websites and mobile apps. By utilizing AI and machine learning, Evinced can automatically find, cluster, and track accessibility problems that would typically require manual audits. The tool provides a comprehensive solution for developers to ensure their digital products are accessible to all users. Evinced offers features such as advanced bug detection, organization of issues, lifecycle tracking, easy integration with existing testing systems, and more.

Basejump AI

Basejump AI is an AI-powered data access tool that lets users query their database in natural language. It gives teams quick access to data and instant insights without navigating complex dashboards. With Basejump AI, users can explore data, save relevant information, create custom collections, and refine datapoints to meet their specific requirements. Users can check data accuracy by comparing datapoints side by side. Basejump AI serves industries such as healthcare, HR, and software, offering real-time insights and analytics to support decision-making and workflow efficiency.

SinCode AI

SinCode AI is an AI-powered copywriting tool that helps businesses create engaging content quickly. It can generate website copy, blog posts, social media posts, and more in a few clicks. According to SinCode AI, its engine is trained on a massive dataset of high-performing copy, with the aim of producing effective, persuasive content.

TensorPix

TensorPix is an online AI-powered video enhancer and upscaler that can improve and upscale videos in less than 3 minutes. It is a cloud-based service, so it can be used from any device, including smartphones and tablets. TensorPix uses AI to improve resolution, framerate, and color, and it can also remove flickering, film dirt, and interlacing artifacts from old videos. It is used by thousands of users, including filmmakers, studios, and businesses, to improve the quality of videos and images.

Birdseye

Birdseye describes itself as the world's first autonomous email marketing platform for brands and retailers. It sends hyper-personalized emails on autopilot, analyzing customers' buying and browsing habits to tailor each message and boost sales. Its AI contacts customers when they are most receptive, so that each offer reaches the people most likely to want it. The platform also helps clear slow-moving stock and keeps learning about customers to deliver increasingly personalized offers. Birdseye reports that it is trusted by leading ecommerce brands for its engagement and conversion rates.

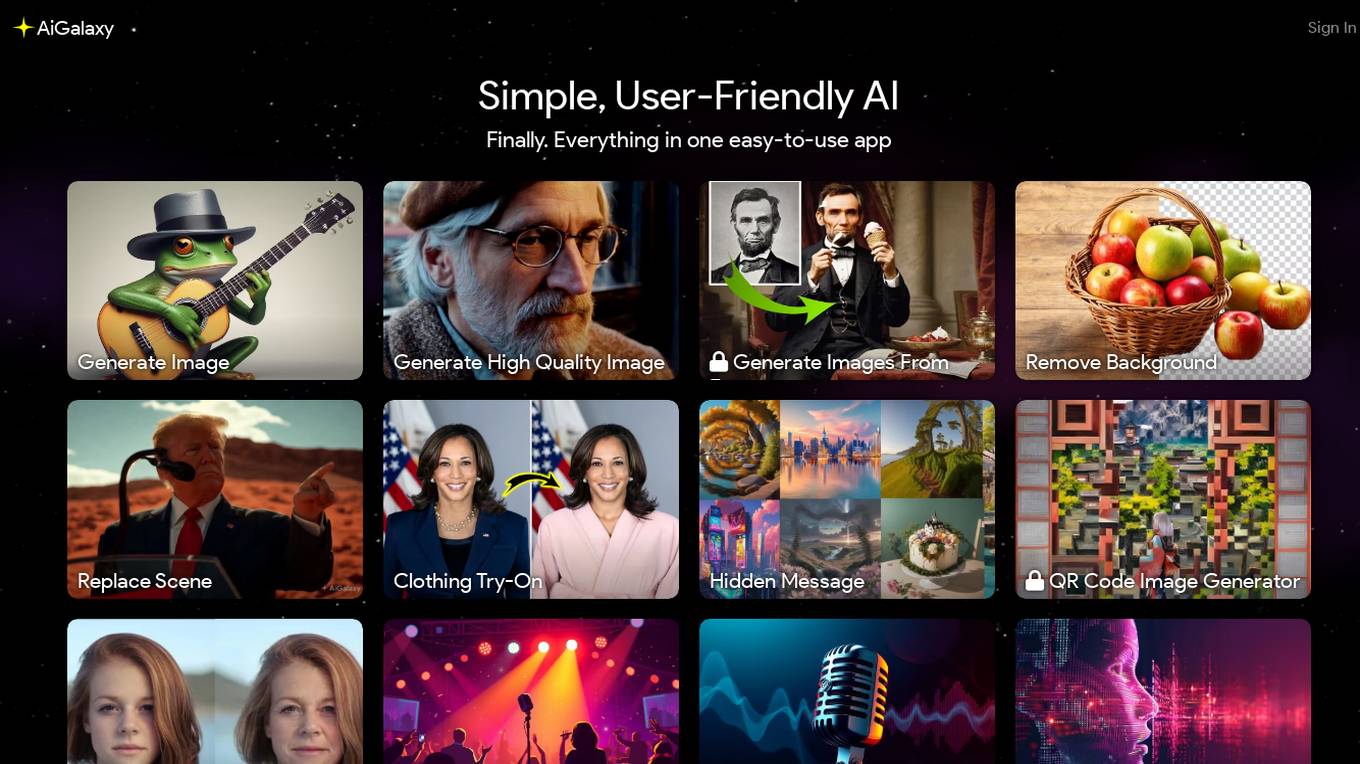

AiGalaxy

AiGalaxy is an all-in-one AI solution that offers a wide range of user-friendly AI tools in a single app. Users can easily generate images, remove backgrounds, change clothing, create hidden messages, generate QR codes, change ages, extract music and vocals, create voice models, convert images to videos, transcribe speech to text, convert text to speech, turn tunes into music tracks, change voices, unblur images, add sound to videos, create slow-motion videos, restore old images, and more. The app is designed to be easy enough for beginners while also offering powerful features for professionals. AiGalaxy constantly adds new AI tools to its platform, making it a versatile and evolving tool for various tasks.

0 - Open Source AI Tools

3 - OpenAI GPTs

Metabolic & Longevity Benefits of Supplement+Food

Analyzes supplements and food for their metabolic benefits and their effects on the hallmarks of aging.