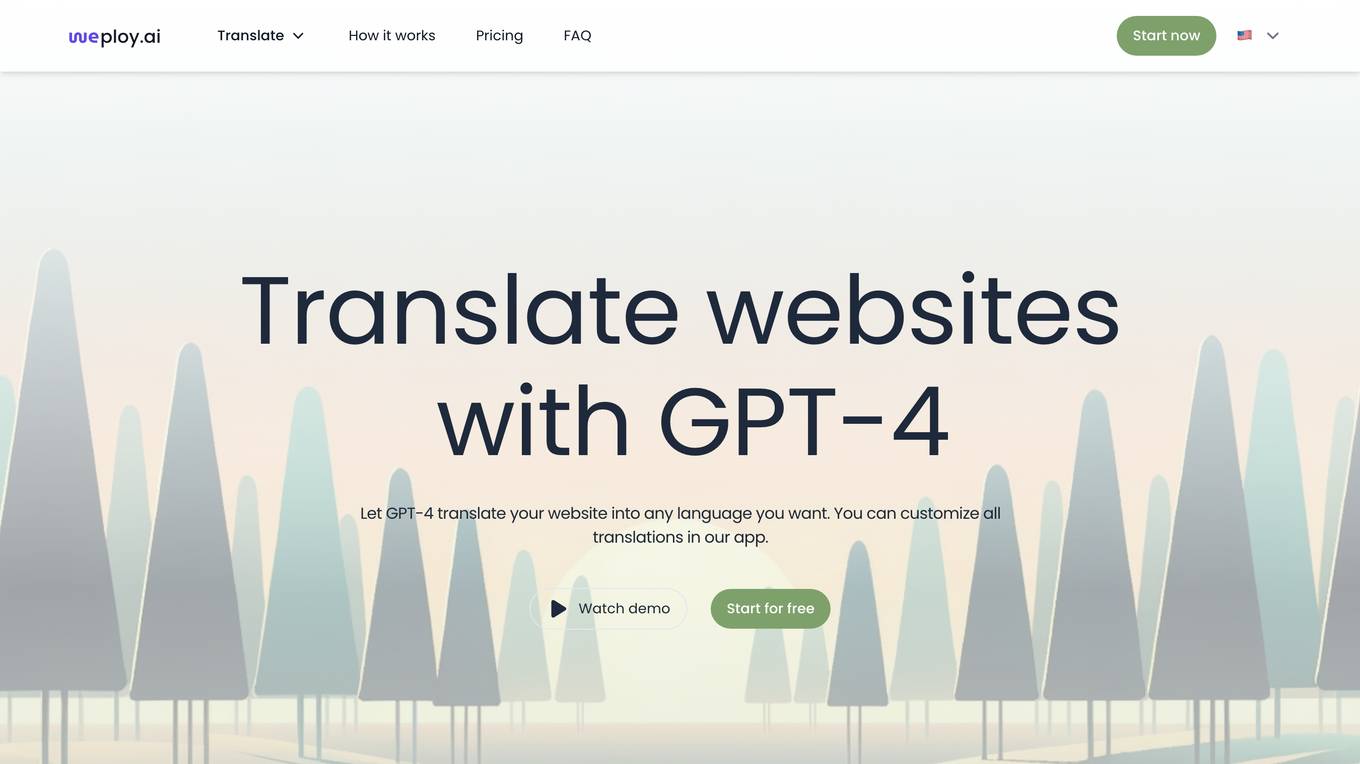

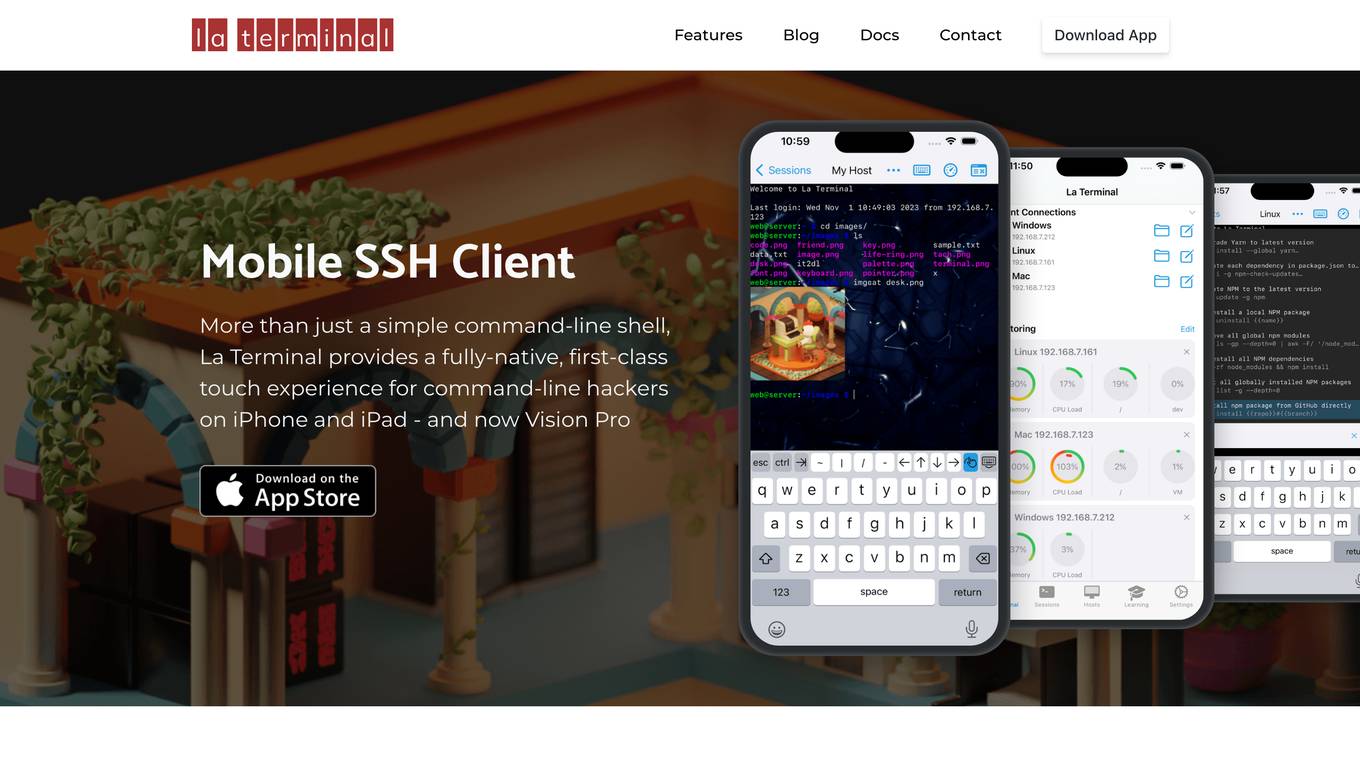

Best AI tools for: Manage Servers

20 - AI tool Sites

OpenResty

The website is currently displaying a '403 Forbidden' error, which means that access to the requested resource is forbidden. This error is typically caused by insufficient permissions or misconfiguration on the server side. The message 'openresty' suggests that the server is using the OpenResty web platform. OpenResty is a dynamic web platform based on NGINX and Lua that is commonly used for building high-performance web applications. It provides a powerful and flexible environment for developing and deploying web services.

Magimaker

Magimaker.com currently returns a Cloudflare 'connection timed out' error (Error code 522). The site seems to be a platform that may offer services related to web hosting or server management. Visitors who see this error are advised to wait a few minutes and try again; website owners are advised to contact their hosting provider for assistance. The error indicates a timeout between Cloudflare's network and the origin web server, which prevents the web page from being displayed.

OpenResty

The website is currently displaying a '403 Forbidden' error message, which indicates that the server understood the request but refuses to authorize it. This error is typically caused by insufficient permissions or misconfiguration on the server side. The 'openresty' mentioned in the message refers to a web platform based on NGINX and LuaJIT, commonly used for building high-performance web applications. The website may be experiencing technical issues that need to be resolved by the website administrator.

OpenResty

The website is currently displaying a '403 Forbidden' error, which means that access to the requested resource is denied. This error is typically caused by insufficient permissions or misconfiguration on the server side. The 'openresty' message indicates that the server is using the OpenResty web platform. OpenResty is a web platform based on NGINX and LuaJIT, commonly used for building dynamic web applications. It provides a powerful and flexible environment for web development.

OpenResty

The website is currently displaying a '403 Forbidden' error message, which indicates that the server understood the request but refuses to authorize it. This error is typically caused by insufficient permissions or misconfiguration on the server side. The 'openresty' mentioned in the message refers to a web platform based on NGINX and LuaJIT, often used for building high-performance web applications. It seems that the website is currently inaccessible due to server-side issues.

OpenResty Web Platform

The website is currently displaying a '403 Forbidden' error, which means that access to the requested resource is denied. This error is typically caused by insufficient permissions or server misconfiguration. The message 'openresty' suggests that the server is using the OpenResty web platform. OpenResty is a web platform based on NGINX and Lua that provides a powerful and flexible way to build web applications. It is often used for high-performance web applications and APIs.

OpenResty

The website is currently displaying a '403 Forbidden' error message, which indicates that the server understood the request but refuses to authorize it. This error is typically caused by insufficient permissions or misconfiguration on the server side. The 'openresty' mentioned in the message is a web platform based on NGINX and LuaJIT, often used for building high-performance web applications. The server-side issue must be diagnosed and resolved by the site administrator before access to the website can be restored.

OpenResty

The website is currently displaying a '403 Forbidden' error, which indicates that the server understood the request, but is refusing to fulfill it. This error message is often encountered when trying to access a webpage or resource that is restricted or unavailable to the user. The 'openresty' mentioned in the text refers to a web platform based on NGINX and LuaJIT, commonly used for building high-performance web applications. It is designed to handle a large number of concurrent connections and requests efficiently.

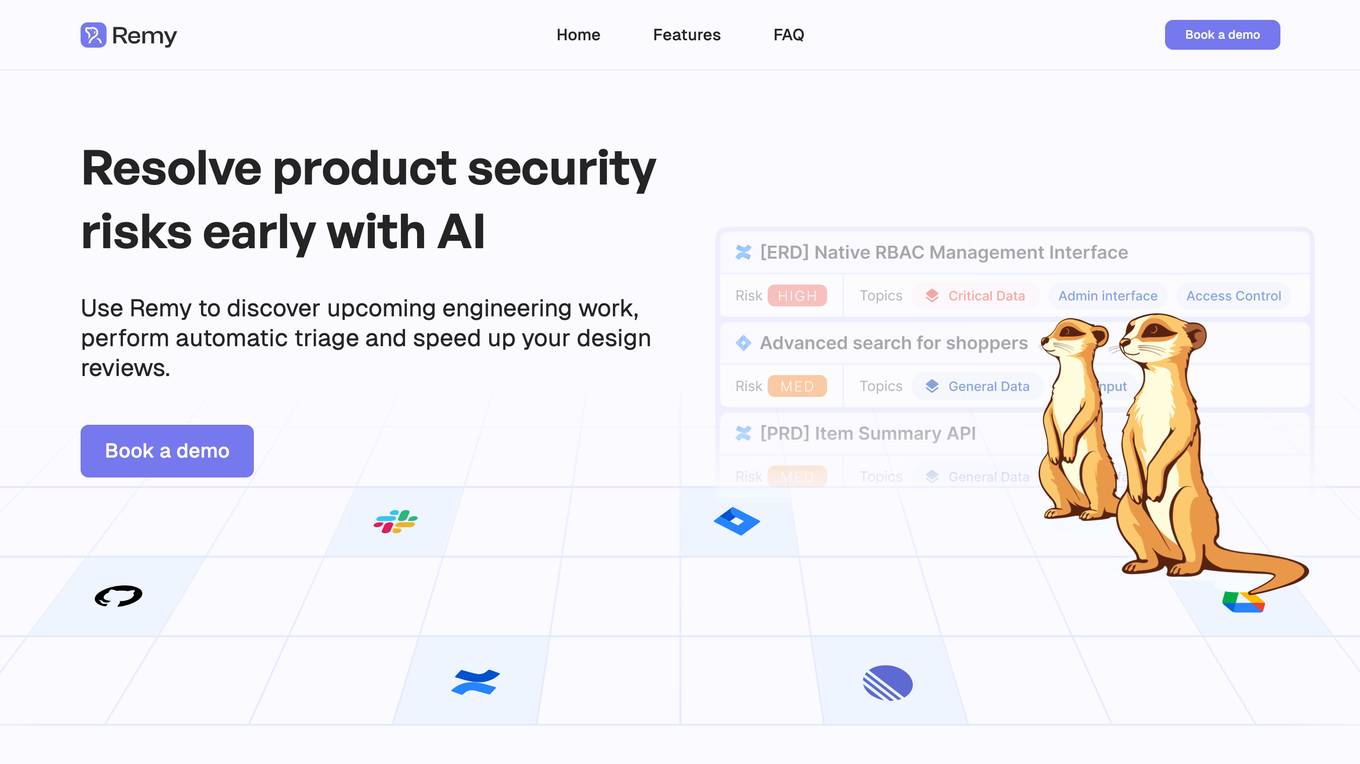

La Terminal

La Terminal is a fully-native, first-class touch experience for command-line hackers on iPhone and iPad. It provides a secure, open-source, and feature-rich environment for managing remote servers, automating tasks, and exploring the command line. With AI assistance from El Copiloto, La Terminal makes it easy to find and execute commands, even for beginners.

OpenResty

The website is currently displaying a '403 Forbidden' error, which means that access to the requested resource is denied. This error is typically caused by insufficient permissions or server misconfiguration. The 'openresty' message indicates that the server is using the OpenResty web platform. OpenResty is a scalable web platform that integrates the Nginx web server with various Lua-based modules, providing powerful features for web development and server-side scripting.

OpenResty

The website is currently displaying a '403 Forbidden' error, which indicates that the server understood the request but refuses to authorize it. This error is often caused by insufficient permissions or misconfiguration on the server side. The 'openresty' mentioned in the error message is a web platform based on NGINX and LuaJIT, commonly used for building high-performance web applications. It is designed to handle a large number of concurrent connections and provide advanced features for web development.

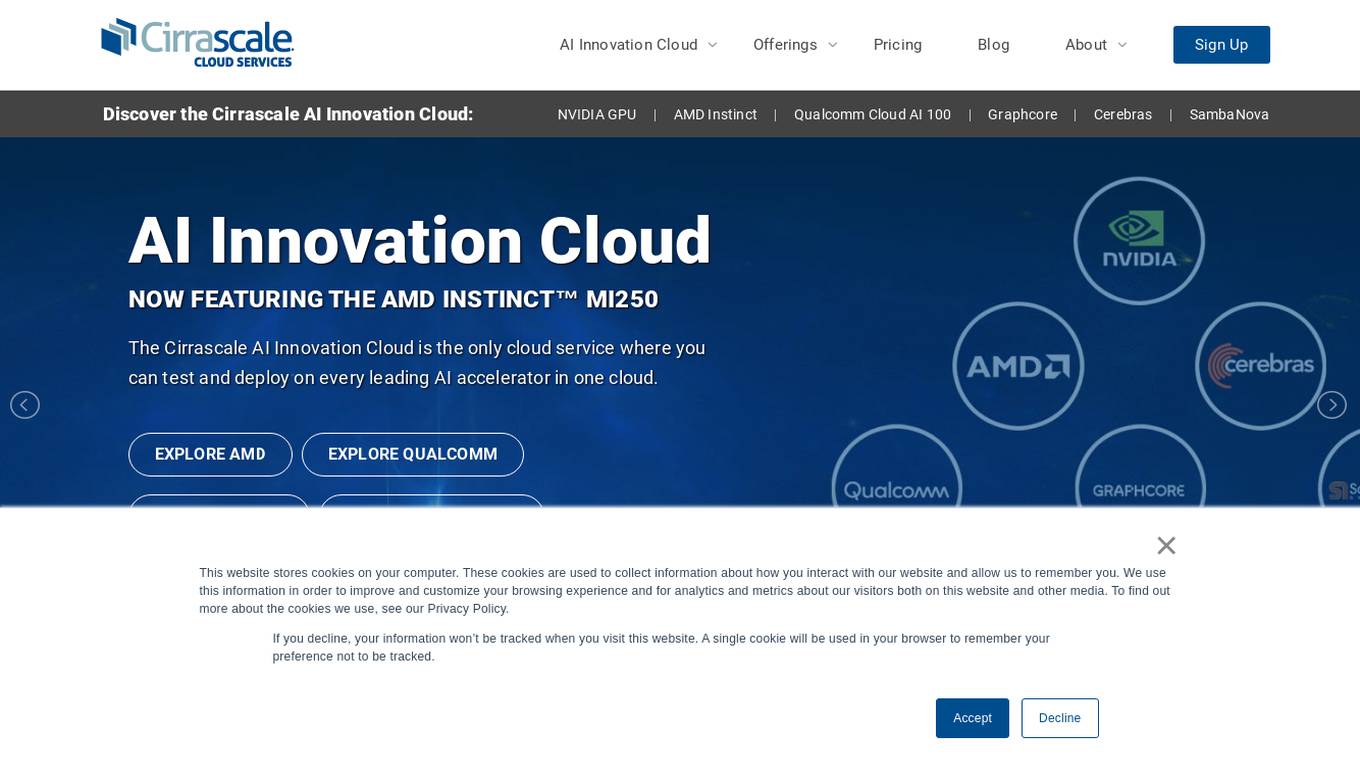

Cirrascale Cloud Services

Cirrascale Cloud Services is an AI tool that offers cloud solutions for Artificial Intelligence applications. The platform provides a range of cloud services and products tailored for AI innovation, including NVIDIA GPU Cloud, AMD Instinct Series Cloud, Qualcomm Cloud, Graphcore, Cerebras, and SambaNova. Cirrascale's AI Innovation Cloud enables users to test and deploy on leading AI accelerators in one cloud, democratizing AI by delivering high-performance AI compute and scalable deep learning solutions. The platform also offers professional and managed services, tailored multi-GPU server options, and high-throughput storage and networking solutions to accelerate development, training, and inference workloads.

WebServerPro

The website is a platform that provides web server hosting services. It helps users set up and manage their web servers efficiently. Users can easily deploy their websites and applications on the server, ensuring a seamless online presence. The platform offers a user-friendly interface and reliable hosting solutions to meet various needs.

OpenResty

The website is currently displaying a '403 Forbidden' error, which indicates that the server understood the request but refuses to authorize it. This error is often encountered when trying to access a webpage without the necessary permissions. The 'openresty' mentioned in the text is likely the software running on the server. It is a web platform based on NGINX and LuaJIT, known for its high performance and scalability in handling web traffic. The website may be using OpenResty to manage its server configurations and handle incoming requests.

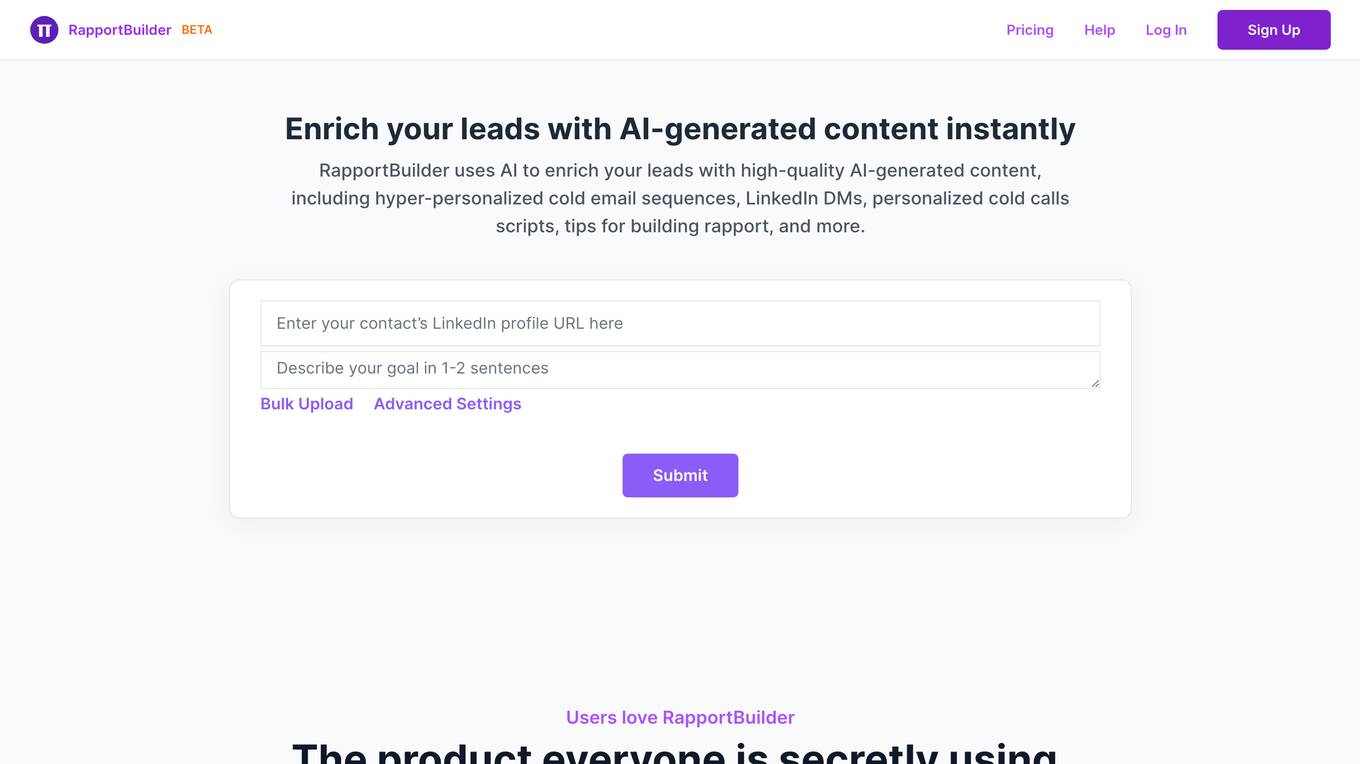

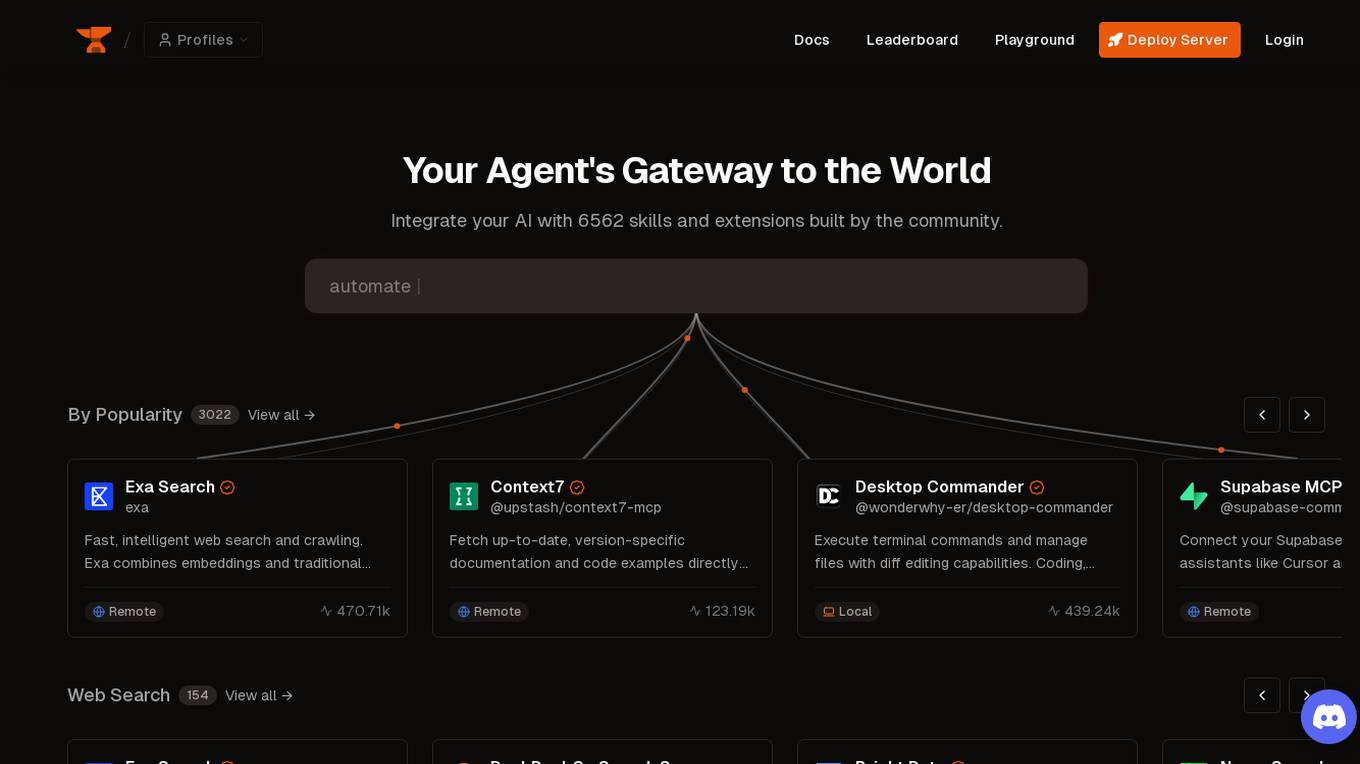

Smithery

Smithery is an AI tool that serves as an agent's gateway to the world, allowing users to extend their agent's capabilities by integrating with a wide range of skills and extensions developed by the community. With a focus on accelerating the agent economy, Smithery provides resources, documentation, and system status updates to support users in leveraging AI technology effectively. The platform offers various functionalities such as web search, browser automation, memory management, weather data & forecasts, AI image generation, web data extraction, and development boilerplates.

Lokal.so

Lokal.so is an AI-powered tool designed to supercharge your localhost development experience. It offers features like sharing your localhost with the public, debugging incoming requests, and developing with the assistance of an AI assistant. With Lokal.so, you can leverage Cloudflare's network for faster site delivery, use a built-in S3 server for easy file debugging, and automatically convert JSON payloads into different programming language models. The tool aims to simplify local development by providing a self-hosted tunnel server, unlimited .local domain access, and endpoint management with memorable names.

OpenResty

The website is currently displaying a '403 Forbidden' error message, which indicates that the server understood the request but refuses to authorize it. This error is often caused by insufficient permissions or misconfiguration on the server side. The 'openresty' mentioned in the message is a web platform based on NGINX and LuaJIT, commonly used for building high-performance web applications. It is designed to handle a large number of concurrent connections and provide a scalable and efficient web server solution.

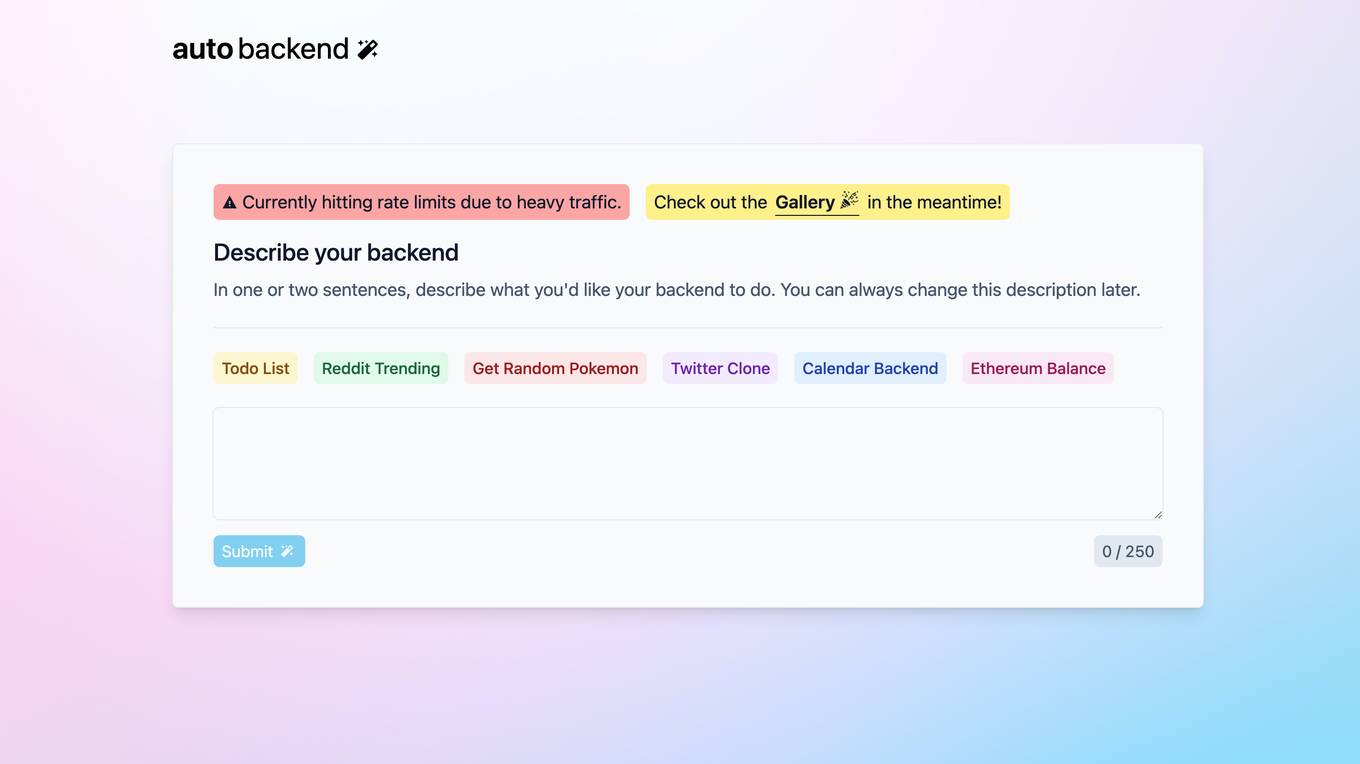

Autobackend.dev

Autobackend.dev is a web development platform that provides tools and resources for building and managing backend systems. It offers a range of features such as database management, API integration, and server configuration. With Autobackend.dev, users can streamline the process of backend development and focus on creating innovative web applications.

satprep.me

The website satprep.me is currently unavailable and shows a notice prompting the site administrator to renew the hosting service. The notice page lists services such as domain registration, VPS/VDS hosting, server rental, virtual hosting, and SSL certificates. The site is managed by RU-CENTER, with a copyright year of 2024.

nginx

The website shows the default welcome page of the nginx web server. The page indicates that the server is successfully installed and working, and that further configuration is required. Users can access online documentation and support at nginx.org, and commercial support is available at nginx.com. The page closes with a thank-you message for using nginx.

4 - Open Source AI Tools

1Panel

1Panel is an open-source, modern web-based control panel for Linux server management. It provides efficient management through a user-friendly web graphical interface, enabling users to effortlessly manage their Linux servers. Key features include host monitoring, file management, database administration, container management, rapid website deployment with WordPress integration, an application store for easy installation and updates, security and reliability through containerization and secure application deployment practices, integrated firewall management, log auditing capabilities, and one-click backup & restore functionality supporting various cloud storage solutions.

mcphub.nvim

MCPHub.nvim is a powerful Neovim plugin that integrates MCP (Model Context Protocol) servers into your workflow. It offers a centralized config file for managing servers and tools, with an intuitive UI for testing resources. Ideal for LLM integration, it provides programmatic API access and interactive testing through the `:MCPHub` command.

opcode

opcode is a powerful desktop application built with Tauri 2 that serves as a command center for interacting with Claude Code. It offers a visual GUI for managing Claude Code sessions, creating custom agents, tracking usage, and more. Users can navigate projects, create specialized AI agents, monitor usage analytics, manage MCP servers, create session checkpoints, edit CLAUDE.md files, and more. The tool bridges the gap between command-line tools and visual experiences, making AI-assisted development more intuitive and productive.

mcp-router

MCP Router is a desktop application that simplifies the management of Model Context Protocol (MCP) servers. It is a universal tool that allows users to connect to any MCP server, supports remote or local servers, and provides data portability by enabling easy export and import of MCP configurations. The application prioritizes privacy and security by storing all data locally, ensuring secure credentials, and offering complete control over server connections and data. Transparency is maintained through auditable source code, verifiable privacy practices, and community-driven security improvements and audits.

20 - OpenAI GPTs

Software expert

Server admin expert in cPanel, Softaculous, WHM, WordPress, and Elementor Pro.

BashEmulator GPT

BashEmulator GPT: a virtualized Bash environment for Linux command-line interaction. It virtualizes all network interfaces and the local network.

SQL Server assistant

Expert in SQL Server for database management, optimization, and troubleshooting.

Baci's AI Server

An AI waiter for Baci Bistro & Bar, knowledgeable about the menu and ready to assist.

アダチさん13号(SQLServer篇) (Adachi-san No. 13, SQL Server edition)

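Ask questions about the SQL Server knowledge and know-how (SQL Server 2000/2005/2008/2012) that Koichi Adachi accumulated during his years as a systems engineer. It can also draft general-purpose example questions for ChatGPT (GPT-4) based on the conversation.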