Best AI tools for < Deploy On AWS >

20 - AI tool Sites

Code Companion AI

Code Companion AI is a desktop application powered by OpenAI's ChatGPT, designed to help developers with a wide range of coding tasks. Its chatbot interface can execute shell commands, generate code, handle database queries, and review your existing code. Tasks are as simple as sending a message: you could ask it to create a .gitignore file or deploy an app on AWS, and CodeCompanion.AI does it for you. Download CodeCompanion.AI from the website to use these features across various programming languages and platforms.

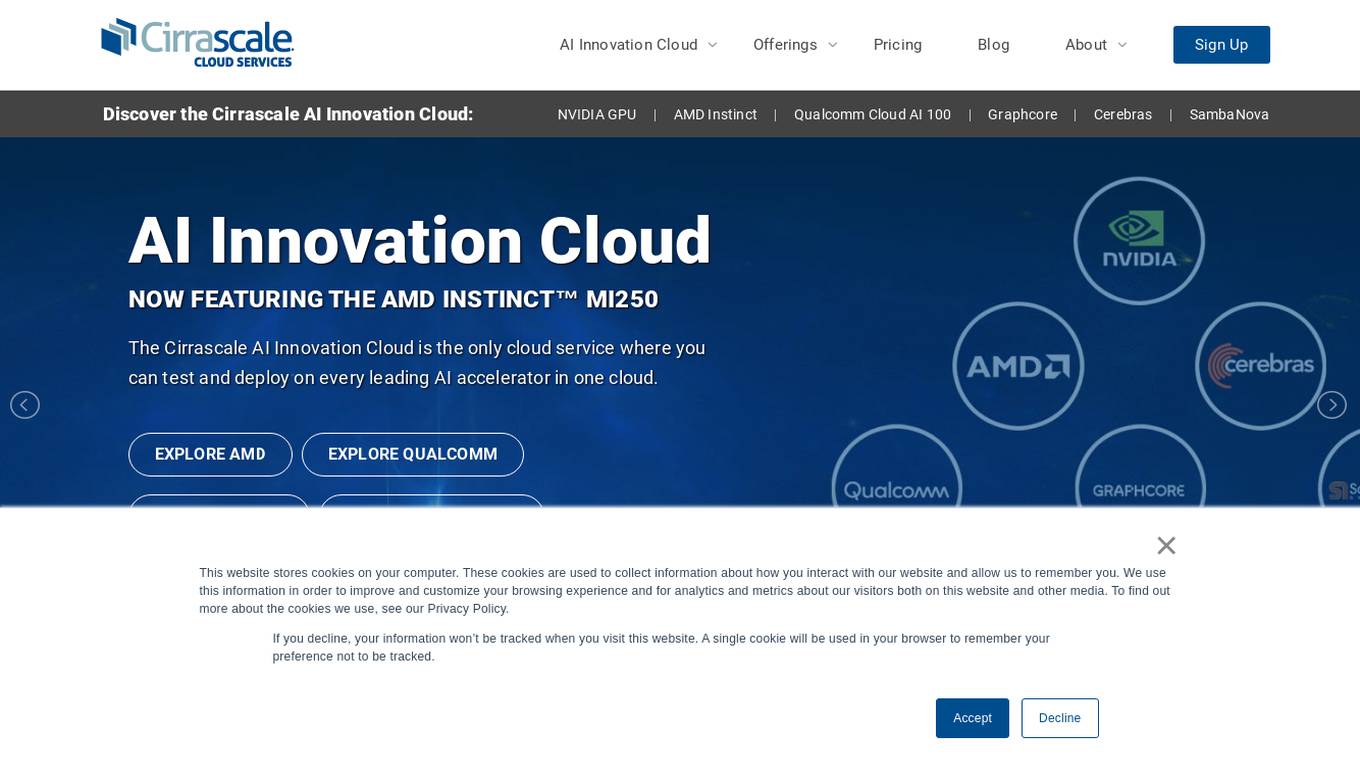

Cirrascale Cloud Services

Cirrascale Cloud Services is an AI tool that offers cloud solutions for Artificial Intelligence applications. The platform provides a range of cloud services and products tailored for AI innovation, including NVIDIA GPU Cloud, AMD Instinct Series Cloud, Qualcomm Cloud, Graphcore, Cerebras, and SambaNova. Cirrascale's AI Innovation Cloud enables users to test and deploy on leading AI accelerators in one cloud, democratizing AI by delivering high-performance AI compute and scalable deep learning solutions. The platform also offers professional and managed services, tailored multi-GPU server options, and high-throughput storage and networking solutions to accelerate development, training, and inference workloads.

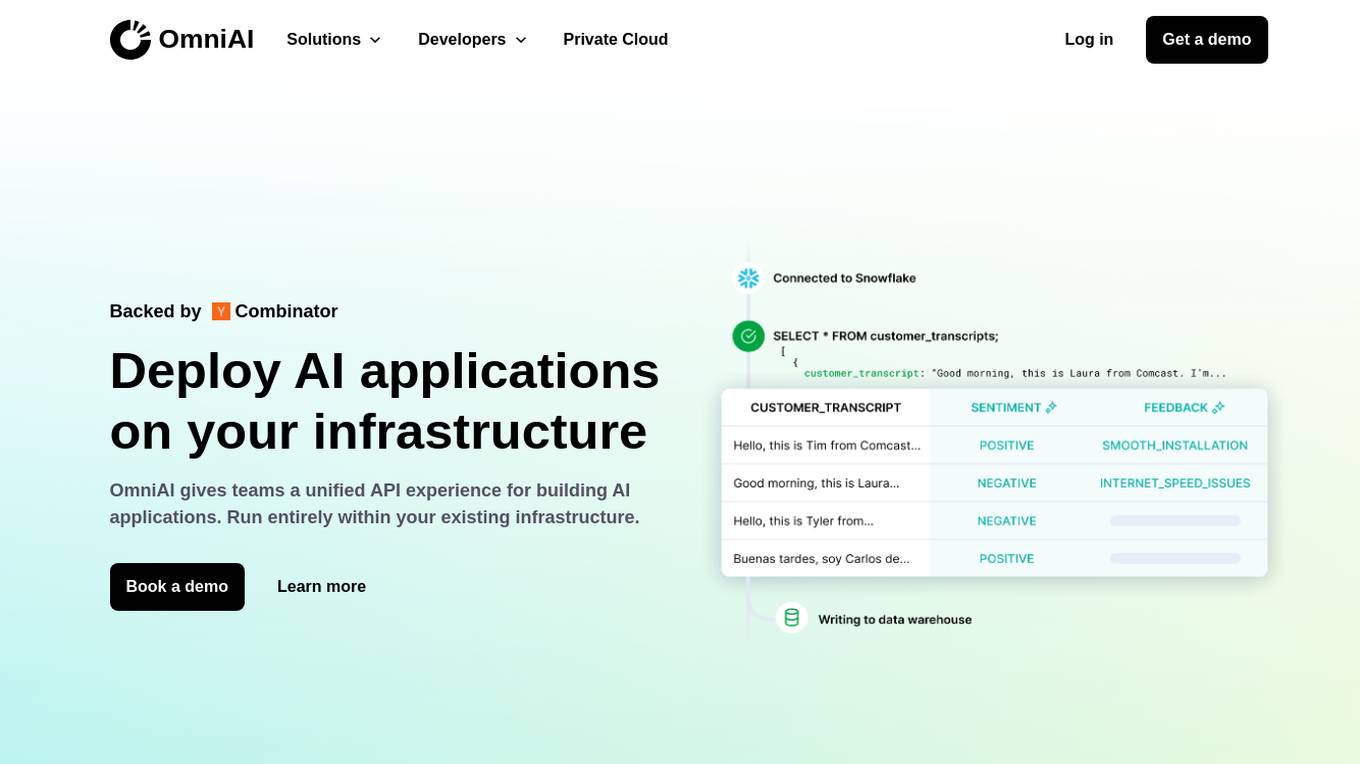

OmniAI

OmniAI is an AI tool that allows teams to deploy AI applications on their existing infrastructure. It provides a unified API for building AI applications and offers a wide selection of industry-leading models, including Llama 3, Claude 3, Mistral Large, and Amazon Titan. These models cover tasks such as natural language understanding and generation, with attention to safety, ethical behavior, and context retention. OmniAI also enables users to deploy and query the latest AI models quickly within their virtual private cloud environment.

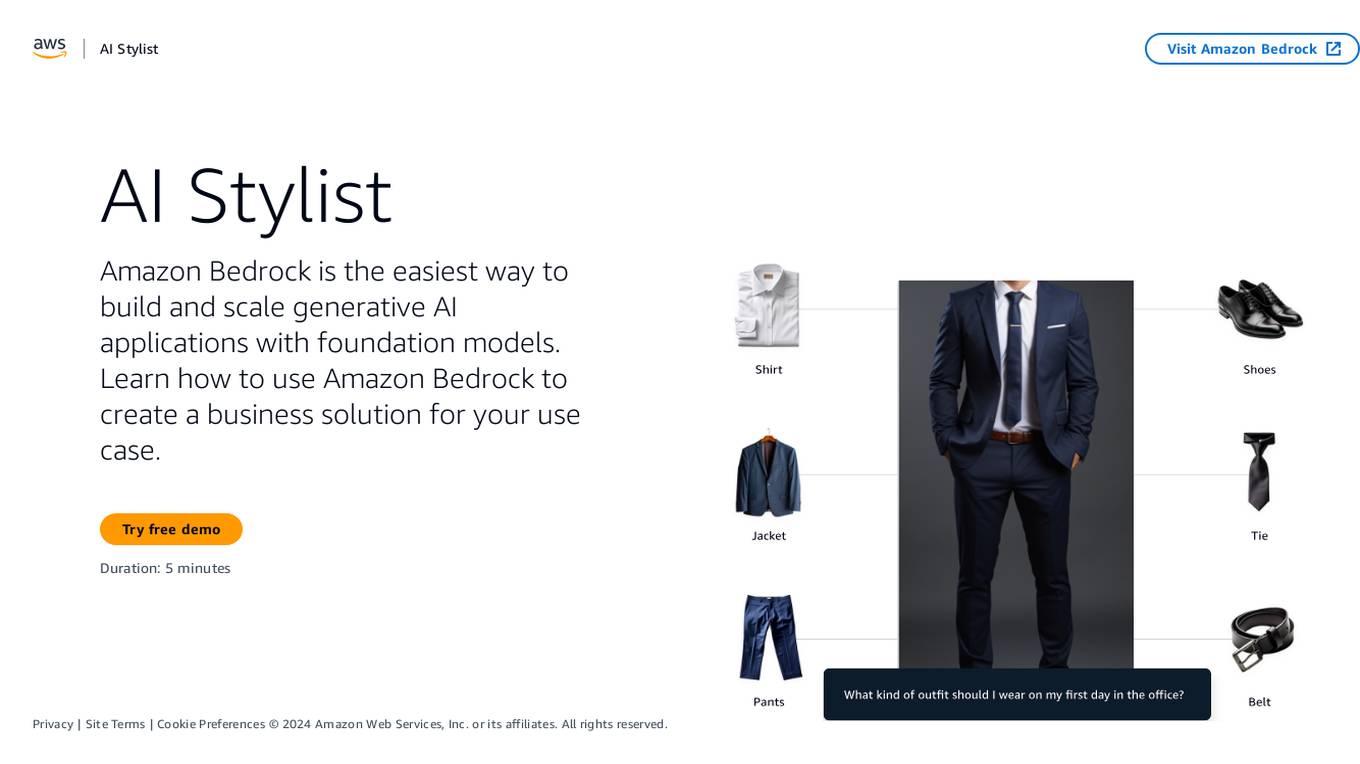

Amazon Bedrock

Amazon Bedrock is a fully managed AWS service for building generative AI applications. It makes foundation models from Amazon and third-party providers available through a single API, and because the service is serverless, developers can focus on their applications rather than on infrastructure and operations. Bedrock also supports customizing models with your own data and building agents on top of them.

Mystic.ai

Mystic.ai is an AI tool designed to deploy and scale Machine Learning models with ease. It offers a fully managed Kubernetes platform that runs in your own cloud, allowing users to deploy ML models in their own Azure/AWS/GCP account or in a shared GPU cluster. Mystic.ai provides cost optimizations, fast inference, simpler developer experience, and performance optimizations to ensure high-performance AI model serving. With features like pay-as-you-go API, cloud integration with AWS/Azure/GCP, and a beautiful dashboard, Mystic.ai simplifies the deployment and management of ML models for data scientists and AI engineers.

Cerebium

Cerebium is a serverless AI infrastructure platform that allows teams to build, test, and deploy AI applications quickly and efficiently. With a focus on speed, performance, and cost optimization, Cerebium offers a range of features and tools to simplify the development and deployment of AI projects. The platform ensures high reliability, security, and compliance while providing real-time logging, cost tracking, and observability tools. Cerebium also offers GPU variety and effortless autoscaling to meet the diverse needs of developers and businesses.

ibl.ai

ibl.ai is a generative AI platform that focuses on education, providing cutting-edge solutions for institutions to create AI mentors, tutoring apps, and content creation tools. The platform empowers educators by giving them full control over their code, data, and models. With advanced features and support for both web and native mobile platforms, ibl.ai seamlessly integrates with existing infrastructure, making it easy to deploy across organizations. The platform is designed to enhance learning experiences, foster critical thinking, and engage students deeply in educational content.

Qubinets

Qubinets is a cloud data environment platform that provides building blocks for big data, AI, web, and mobile environments. It is an open-source, no-lock-in, secure, and private platform that can be used on any cloud, including AWS, DigitalOcean, Google Cloud, and Microsoft Azure. Qubinets makes it easy to plan, build, and run data environments, saving time and money by reducing the grunt work of setup and provisioning.

Teraflow.ai

Teraflow.ai is an AI-enablement company that specializes in helping businesses adopt and scale their artificial intelligence models. They offer services in data engineering, ML engineering, AI/UX, and cloud architecture. Teraflow.ai assists clients in fixing data issues, boosting ML model performance, and integrating AI into legacy customer journeys. Their team of experts deploys solutions quickly and efficiently, using modern practices and hyperscaler technology. The company focuses on making AI work by providing fixed-price solutions, building team capabilities, and using agile scrum structures for innovation. Teraflow.ai also offers certifications in GCP and AWS, and partners with leading tech companies like HashiCorp, AWS, and Microsoft Azure.

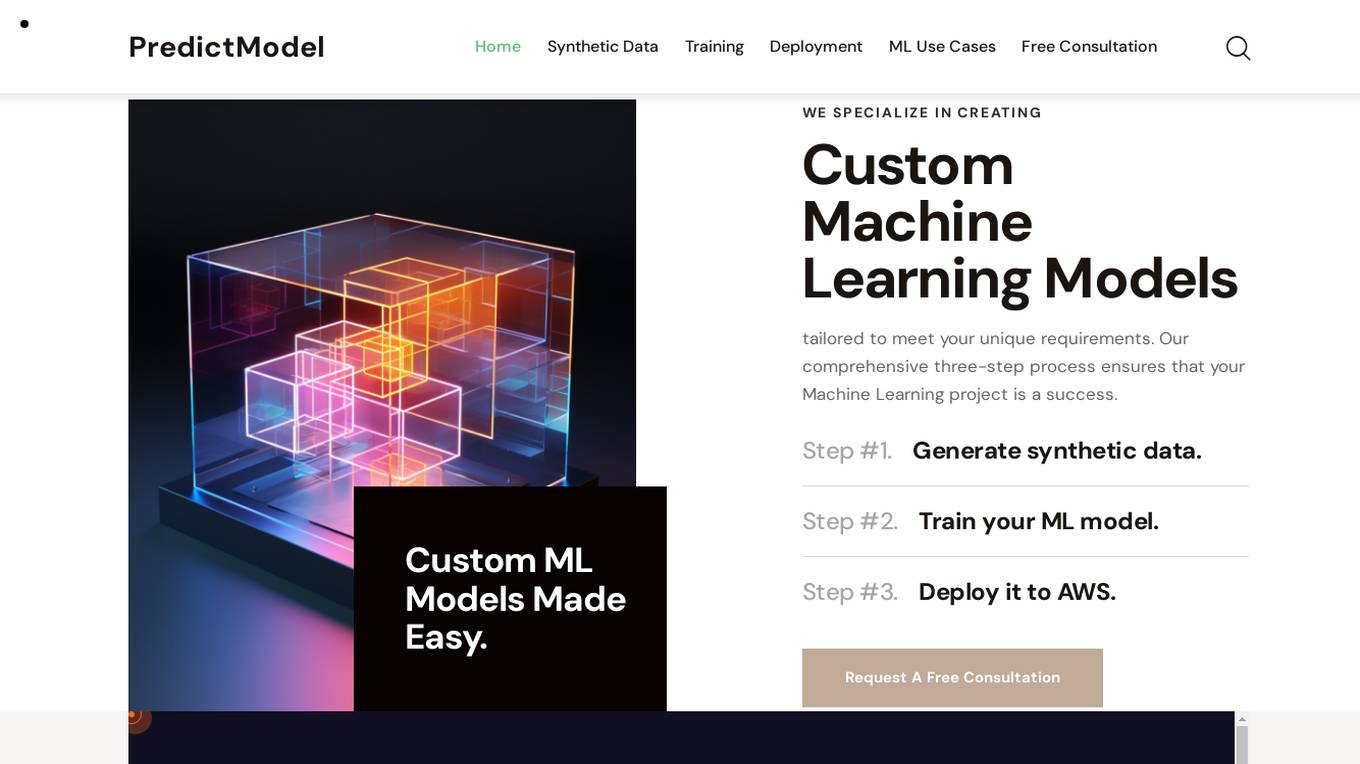

PredictModel

PredictModel is an AI tool that specializes in creating custom Machine Learning models tailored to meet unique requirements. The platform offers a comprehensive three-step process, including generating synthetic data, training ML models, and deploying them to AWS. PredictModel helps businesses streamline processes, improve customer segmentation, enhance client interaction, and boost overall business performance. The tool maximizes accuracy through customized synthetic data generation and saves time and money by providing expert ML engineers. With a focus on automated lead prioritization, fraud detection, cost optimization, and planning, PredictModel aims to stay ahead of the curve in the ML industry.

Databutton

Databutton is an AI developer tool designed for non-techies to build software applications with the help of reasoning AI. It allows users to share their app vision, specs, and design inspiration to get started, create high-level development plans, execute tasks, and take technical ownership. The tool offers deployment to AWS and Google Cloud, scalable infrastructure, and snappy load times. Databutton provides different pricing plans for users based on their needs, including options for teaming up with AI, human advisors, or human developers.

Amazon SageMaker Python SDK

Amazon SageMaker Python SDK is an open source library for training and deploying machine-learned models on Amazon SageMaker. With the SDK, you can train and deploy models using popular deep learning frameworks, algorithms provided by Amazon, or your own algorithms built into SageMaker-compatible Docker images.
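The train-then-deploy flow described above can be sketched roughly as follows. This is a minimal illustration, not the SDK's canonical example: it assumes the v2-style `Estimator` interface of the `sagemaker` package, and the helper names (`build_estimator_kwargs`, `train_and_deploy`), instance types, image URI, role ARN, and S3 paths are all hypothetical placeholders. Newer SDK releases may expose different interfaces.

```python
from typing import Any, Dict


def build_estimator_kwargs(image_uri: str, role_arn: str, output_s3: str) -> Dict[str, Any]:
    """Collect the arguments passed to sagemaker.estimator.Estimator."""
    return {
        "image_uri": image_uri,            # SageMaker-compatible Docker image
        "role": role_arn,                  # IAM role that SageMaker assumes
        "instance_count": 1,               # single training instance
        "instance_type": "ml.m5.xlarge",   # assumed training instance type
        "output_path": output_s3,          # S3 prefix for model artifacts
    }


def train_and_deploy(estimator_kwargs: Dict[str, Any], train_s3: str):
    """Train on data in S3, then host the model on a real-time endpoint.

    Needs the `sagemaker` package and valid AWS credentials, and creates
    billable resources, so it is not called in the demo below.
    """
    from sagemaker.estimator import Estimator  # lazy import: optional dependency

    estimator = Estimator(**estimator_kwargs)
    estimator.fit({"train": train_s3})         # runs a training job
    return estimator.deploy(                   # creates an endpoint, returns a Predictor
        initial_instance_count=1,
        instance_type="ml.m5.large",           # assumed hosting instance type
    )


if __name__ == "__main__":
    # Placeholder values only; substitute your own image, role, and bucket.
    kwargs = build_estimator_kwargs(
        image_uri="<account>.dkr.ecr.<region>.amazonaws.com/<image>:latest",
        role_arn="arn:aws:iam::<account>:role/<sagemaker-role>",
        output_s3="s3://<bucket>/output",
    )
    print(kwargs)
```

Keeping the configuration in a plain dictionary makes it easy to inspect or log before any AWS resources are created. Remember to delete the endpoint once it is no longer needed.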

Qualcomm AI Hub

Qualcomm AI Hub is a platform that allows users to run AI models on Snapdragon® 8 Elite devices. It provides a collaborative ecosystem for model makers, cloud providers, runtime, and SDK partners to deploy on-device AI solutions quickly and efficiently. Users can bring their own models, optimize for deployment, and access a variety of AI services and resources. The platform caters to various industries such as mobile, automotive, and IoT, offering a range of models and services for edge computing.

Leapwork

Leapwork is an AI-powered test automation platform that enables users to build, manage, maintain, and analyze complex data-driven testing across various applications, including AI apps. It offers a democratized testing approach with an intuitive visual interface, composable architecture, and generative AI capabilities. Leapwork supports testing of diverse application types, including web, mobile, and desktop applications as well as APIs. It allows for scalable testing with reusable test flows that adapt to changes in the application under test. Leapwork can be deployed in the cloud or on-premises, giving users full control.

Caffe

Caffe is a deep learning framework developed by Berkeley AI Research (BAIR) and community contributors. It is designed for speed, modularity, and expressiveness, allowing users to define models and optimization through configuration without hard-coding. Caffe supports both CPU and GPU training, making it suitable for research experiments and industry deployment. The framework is extensible, actively developed, and tracks the state-of-the-art in code and models. Caffe is widely used in academic research, startup prototypes, and large-scale industrial applications in vision, speech, and multimedia.

fsck.ai

fsck.ai is an AI-powered software creation kit designed to help developers ship high-quality software faster. It offers AI tools that accelerate code reviews and identify potential problems in code. Similar to Copilot but fully open-source, fsck.ai can run locally or on a remote machine. Users can sign up for early access to use AI in their development workflow.

Hopsworks

Hopsworks is an AI platform that offers a comprehensive solution for building, deploying, and monitoring machine learning systems. It provides features such as a Feature Store, real-time ML capabilities, and generative AI solutions. Hopsworks enables users to develop and deploy reliable AI systems, orchestrate and monitor models, and personalize machine learning models with private data. The platform supports batch and real-time ML tasks, with the flexibility to deploy on-premises or in the cloud.

Narrow AI

Narrow AI is an AI application that autonomously writes, monitors, and optimizes prompts for any model, enabling users to ship AI features 10x faster at a fraction of the cost. It streamlines the workflow by allowing users to test new models in minutes, compare prompt performance, and deploy on the optimal model for their use case. Narrow AI helps users maximize efficiency by generating expert-level prompts, adapting prompts to new models, and optimizing prompts for quality, cost, and speed.

Hanabi.rest

Hanabi.rest is an AI-based API building platform that allows users to create REST APIs from natural language and screenshots using AI technology. Users can deploy the APIs on Cloudflare Workers and roll them out globally. The platform offers a live editor for testing database access and API endpoints, generates code compatible with various runtimes, and provides features like sharing APIs via URL, npm package integration, and CLI dump functionality. Hanabi.rest simplifies API design and deployment by leveraging natural language processing, image recognition, and v0.dev components.

Quidget

Quidget is a no-code AI Agent platform designed to build, train, and deploy AI assistants for websites, apps, or as standalone chatbots. It offers features such as answering customer support questions, engaging leads for sales, automating bookings, orders, and inquiries, as well as assisting with HR, finance, and operations. Quidget AI Agents are trained virtual assistants that go beyond basic chatbots by understanding, learning, and intelligently assisting customers. The platform allows customization of AI behavior, deployment on multiple channels, and integration with various tools. Quidget supports 45+ languages and ensures data security with end-to-end encryption and GDPR compliance.

2 - Open Source AI Tools

aws-genai-llm-chatbot

This repository provides code to deploy a chatbot powered by Multi-Model and Multi-RAG using AWS CDK on AWS. Users can experiment with various Large Language Models and Multimodal Language Models from different providers. The solution supports Amazon Bedrock, Amazon SageMaker self-hosted models, and third-party providers via API. It also offers additional resources like AWS Generative AI CDK Constructs and Project Lakechain for building generative AI solutions and document processing. The roadmap and authors are listed, along with contributors. The library is licensed under the MIT-0 License with information on changelog, code of conduct, and contributing guidelines. A legal disclaimer advises users to conduct their own assessment before using the content for production purposes.

ai-paint-today-BE

AI Paint Today is an API server repository for an app in which users record their emotions and daily experiences and AI generates a picture diary of their day from those entries. The project includes features such as generating picture diaries from written entries, using the DALL-E 2 model for image generation, and deploying on AWS and Cloudflare. The project also follows specific conventions and collaboration strategies for development.

20 - OpenAI GPTs

Rust on ESP32 Expert

Expert in Rust coding for ESP32, offering detailed programming and deployment guidance.

React on Rails Pro

Expert in Rails & React, focusing on high-standard software development.

Azure Arc Expert

Azure Arc expert providing guidance on architecture, deployment, and management.

XRPL GPT

Build on the XRP Ledger with assistance from this GPT trained on extensive documentation and code samples.

Javascript Cloud services coding assistant

Expert on Google Cloud services with JavaScript.

Apple CoreML Complete Code Expert

A detailed expert trained on all 3,018 pages of Apple CoreML, offering complete coding solutions. Saving time? https://www.buymeacoffee.com/parkerrex ☕️❤️

Auto Custom Actions GPT

This GPT helps you with a single task: generating valid OpenAPI schemas for Custom Actions in GPTs.
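Custom Actions in GPTs are described by an OpenAPI document, so a rough idea of what such a schema contains may be useful. The sketch below builds a minimal one as a Python dictionary and prints it as JSON. The weather endpoint, the `getWeather` operation, the `build_action_schema` helper, and the `https://api.example.com` server URL are all hypothetical, chosen only for illustration.

```python
import json
from typing import Any, Dict


def build_action_schema(server_url: str) -> Dict[str, Any]:
    """Return a minimal OpenAPI 3.1 document with a single GET operation."""
    return {
        "openapi": "3.1.0",
        "info": {"title": "Weather lookup", "version": "1.0.0"},
        "servers": [{"url": server_url}],   # base URL the action will call
        "paths": {
            "/weather": {
                "get": {
                    "operationId": "getWeather",   # name used to refer to the operation
                    "summary": "Get the current weather for a city",
                    "parameters": [
                        {
                            "name": "city",
                            "in": "query",
                            "required": True,
                            "schema": {"type": "string"},
                        }
                    ],
                    "responses": {"200": {"description": "Current weather"}},
                }
            }
        },
    }


if __name__ == "__main__":
    # Hypothetical server URL; replace with the API you want the GPT to call.
    print(json.dumps(build_action_schema("https://api.example.com"), indent=2))
```

Building the schema as data and serializing it with `json.dumps` guarantees syntactically valid JSON, though it does not by itself validate the document against the OpenAPI specification.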