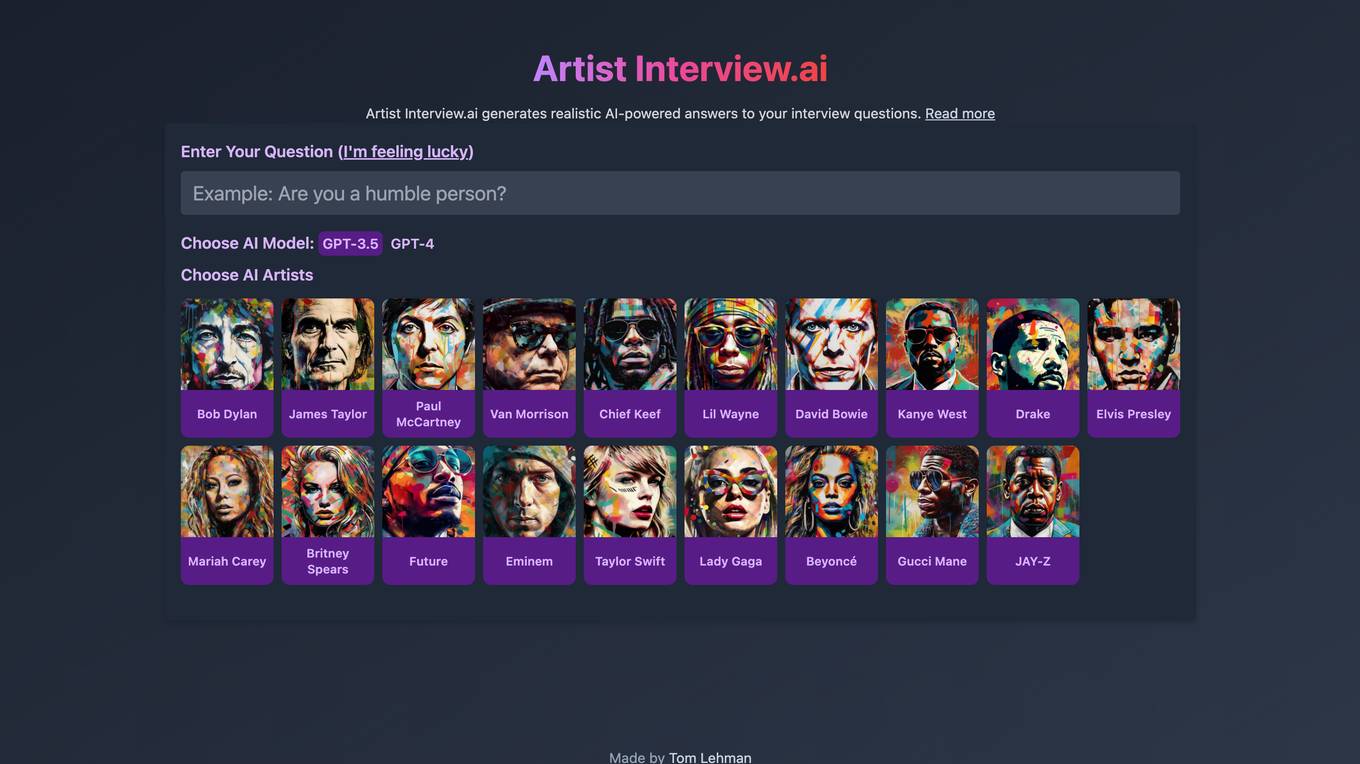

Best AI tools for Debug Systems

20 - AI tool Sites

Rerun

Rerun is an SDK, time-series database, and visualizer for temporal and multimodal data. It is used in fields like robotics, spatial computing, 2D/3D simulation, and finance to verify, debug, and explain data. Rerun allows users to log data like tensors, point clouds, and text to create streams, visualize and interact with live and recorded streams, build layouts, customize visualizations, and extend data and UI functionalities. The application provides a composable data model, dynamic schemas, and custom views for enhanced data visualization and analysis.

Interview Solver

Interview Solver is a desktop application that acts as your copilot during coding interviews, providing instant solutions to LeetCode problems and system design questions. It features screengrabbing capabilities, one-shot solutions, query selected text functionality, global hotkeys, and syntax highlighting for all major languages. Interview Solver is designed to give you an AI advantage during live interviews, helping you land your dream job.

Cognition

Cognition is an applied AI lab focused on reasoning. Its first product, Devin, is billed as the first AI software engineer. Cognition is a small team based in New York and the San Francisco Bay Area.

Elixir

Elixir is an AI tool designed for observability and testing of AI voice agents. It offers features such as automated testing, call review, monitoring, analytics, tracing, scoring, and reviewing. Elixir helps in simulating realistic test calls, analyzing conversations, identifying mistakes, and debugging issues with audio snippets and call transcripts. It provides detailed traces for complex abstractions, streamlines manual review processes, and allows for simulating thousands of calls for full test coverage. The tool is suitable for monitoring agent performance, detecting anomalies in real-time, and improving conversational systems through human-in-the-loop feedback.

Refraction

Refraction is an AI-powered code generation tool designed to help developers learn, improve, and generate code effortlessly. It offers a wide range of features such as bug detection, code conversion, function creation, CSP generation, CSS style conversion, debug statement addition, diagram generation, documentation creation, code explanation, code improvement, concept learning, CI/CD pipeline creation, SQL query generation, code refactoring, regex generation, style checking, type addition, and unit test generation. With support for 56 programming languages, Refraction is a versatile tool trusted by innovative companies worldwide to streamline software development processes using the magic of AI.

Microsoft Responsible AI Toolbox

Microsoft Responsible AI Toolbox is a suite of tools designed to assess, develop, and deploy AI systems in a safe, trustworthy, and ethical manner. It offers integrated tools and functionalities to help operationalize Responsible AI in practice, enabling users to make user-facing decisions faster and easier. The Responsible AI Dashboard provides a customizable experience for model debugging, decision-making, and business actions. With a focus on responsible assessment, the toolbox aims to promote ethical AI practices and transparency in AI development.

Helicone

Helicone is an open-source platform designed for developers, offering observability solutions for logging, monitoring, and debugging. It advertises sub-millisecond latency impact, 100% log coverage, and industry-leading query times, and is ready for production-level workloads. Trusted by thousands of companies and developers, Helicone runs on Cloudflare Workers for low latency and high reliability, and offers prompt management, 99.99% uptime, and scalability. It supports risk-free experimentation and prompt security, and provides various tools for monitoring, analyzing, and managing requests.

Portkey

Portkey is a control panel for production AI applications that offers an AI Gateway, Prompts, Guardrails, and Observability Suite. It enables teams to ship reliable, cost-efficient, and fast apps by providing tools for prompt engineering, enforcing reliable LLM behavior, integrating with major agent frameworks, and building AI agents with access to real-world tools. Portkey also offers seamless AI integrations for smarter decisions, with features like managed hosting, smart caching, and edge compute layers to optimize app performance.

Lokal.so

Lokal.so is an AI-powered tool designed to supercharge your localhost development experience. It offers features like sharing your localhost with the public, debugging incoming requests, and developing with the assistance of an AI assistant. With Lokal.so, you can leverage Cloudflare's network for faster site delivery, use a built-in S3 server for easy file debugging, and automatically convert JSON payloads into different programming language models. The tool aims to simplify local development by providing a self-hosted tunnel server, unlimited .local domain access, and endpoint management with memorable names.

Application Error

The website is experiencing an application error, which indicates a technical issue preventing the proper functioning of the application. An application error can occur due to various reasons such as bugs in the code, server issues, or incorrect user input. It is essential to troubleshoot and resolve application errors promptly to ensure the smooth operation of the website.

Warp

Warp is a terminal reimagined with AI and collaborative tools for better productivity. It is built with Rust for speed and has an intuitive interface. Warp includes features such as modern editing, command generation, reusable workflows, and Warp Drive. Warp AI allows users to ask questions about programming and get answers, recall commands, and debug errors. Warp Drive helps users organize hard-to-remember commands and share them with their team. Warp is a private and secure application that is trusted by hundreds of thousands of professional developers.

Cirroe AI

Cirroe AI is an intelligent chatbot designed to help users deploy and troubleshoot their AWS cloud infrastructure quickly and efficiently. With Cirroe AI, users can experience seamless automation, reduced downtime, and increased productivity by simplifying their AWS cloud operations. The chatbot allows for fast deployments, intuitive debugging, and cost-effective solutions, ultimately saving time and boosting efficiency in managing cloud infrastructure.

Highcountry Toyota Internet Connection Troubleshooter

Highcountrytoyota.stage.autogo.ai is an AI tool designed to provide assistance and support for troubleshooting internet connection issues. The website offers guidance on resolving connection problems, including checking network settings, firewall configurations, and proxy server issues. Users can find step-by-step instructions and tips to troubleshoot and fix connection errors. The platform aims to help users quickly identify and resolve connectivity issues to ensure seamless internet access.

TimeComplexity.ai

TimeComplexity.ai is an AI tool that helps users analyze the runtime complexity of their code. It works seamlessly across different programming languages without the need for headers, imports, or a main statement. Users can input their code and receive insights on its runtime efficiency. However, it's important to note that the results may not always be accurate, so caution is advised when using the tool.
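Since the tool's estimates may be inaccurate, one way to sanity-check a reported complexity is an empirical doubling experiment. The sketch below is not part of TimeComplexity.ai; the function names (`count_steps_linear`, `count_steps_quadratic`, `estimate_exponent`) are hypothetical. It counts basic steps rather than wall-clock time so the result is deterministic, and it assumes the cost grows roughly like n^k.

```python
import math


def count_steps_linear(n):
    """Hypothetical workload: a single pass over n items."""
    steps = 0
    for _ in range(n):
        steps += 1
    return steps


def count_steps_quadratic(n):
    """Hypothetical workload: a nested pass over n x n pairs."""
    steps = 0
    for _ in range(n):
        for _ in range(n):
            steps += 1
    return steps


def estimate_exponent(step_counter, n=64):
    """Estimate k in steps ~ n**k by comparing step counts at n and 2n.

    If steps(n) = c * n**k, then steps(2n) / steps(n) = 2**k,
    so k = log2(steps(2n) / steps(n)).
    """
    small = step_counter(n)
    large = step_counter(2 * n)
    return math.log2(large / small)


if __name__ == "__main__":
    print("linear exponent:", estimate_exponent(count_steps_linear))
    print("quadratic exponent:", estimate_exponent(count_steps_quadratic))
```

An estimated exponent near 1 suggests linear growth and near 2 suggests quadratic growth. Logarithmic factors show up as a small fractional excess, so this check is only a rough cross-reference, not a proof.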

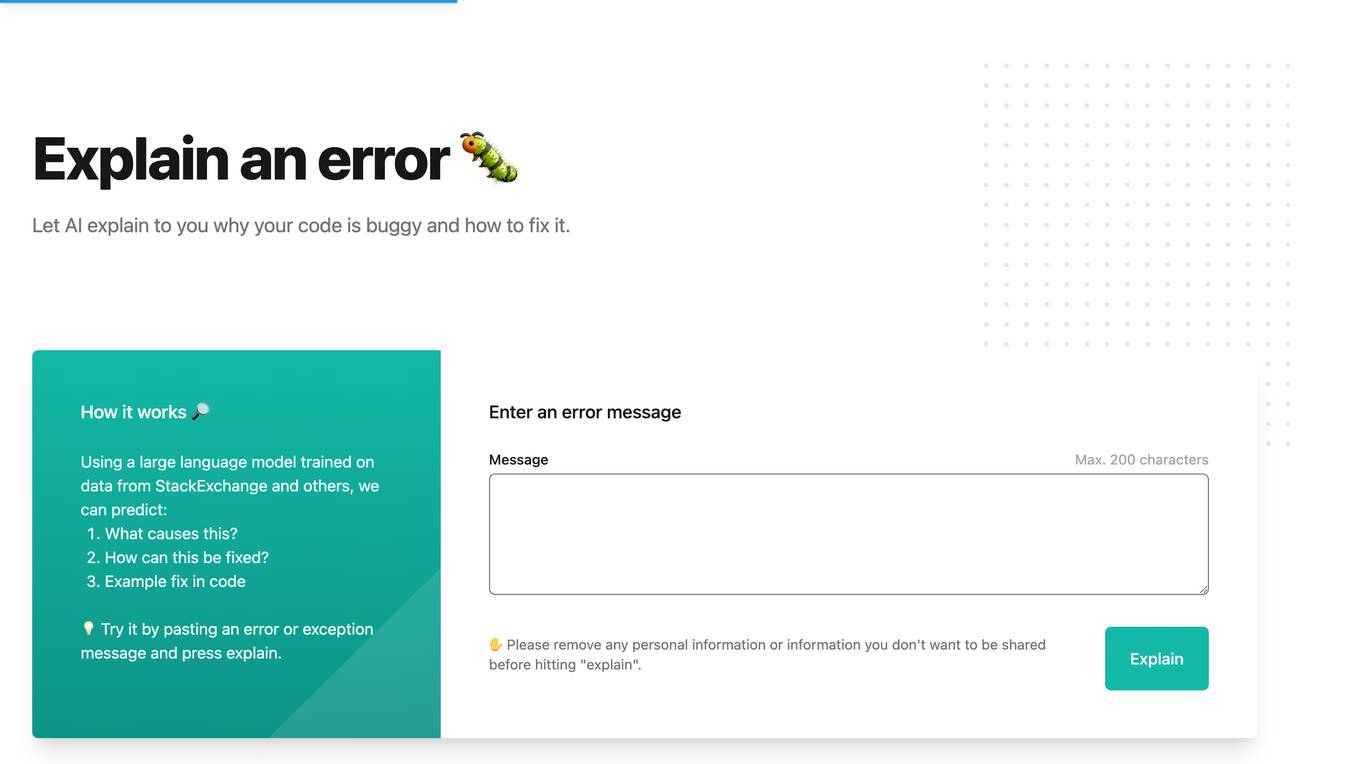

Whybug

Whybug is an AI tool designed to help developers debug their code by providing explanations for errors. By utilizing a large language model trained on data from StackExchange and other sources, Whybug can predict the causes of errors and suggest fixes. Users can simply paste an error message and receive detailed explanations on how to resolve the issue. The tool aims to streamline the debugging process and improve code quality.

New Relic

New Relic is an AI monitoring platform that offers an all-in-one observability solution for monitoring, debugging, and improving the entire technology stack. With over 30 capabilities and 750+ integrations, New Relic provides the power of AI to help users gain insights and optimize performance across various aspects of their infrastructure, applications, and digital experiences.

Langtrace AI

Langtrace AI is an open-source observability tool powered by Scale3 Labs that helps monitor, evaluate, and improve LLM (Large Language Model) applications. It collects and analyzes traces and metrics to provide insights into the ML pipeline, ensuring security through SOC 2 Type II certification. Langtrace supports popular LLMs, frameworks, and vector databases, offering end-to-end observability and the ability to build and deploy AI applications with confidence.

Snaplet

Snaplet is a data management tool for developers that provides AI-generated dummy data for local development, end-to-end testing, and debugging. It uses a real programming language (TypeScript) to define and edit data, ensuring type safety and auto-completion. Snaplet understands database structures and relationships, automatically transforming personally identifiable information and seeding data accordingly. It integrates seamlessly into development workflows, providing data where it's needed most: on local machines, for CI/CD testing, and preview environments.
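To illustrate what transforming personally identifiable information can look like in practice, here is a minimal sketch of deterministic pseudonymization. It is not Snaplet's API (Snaplet itself is configured in TypeScript); the helpers `mask_email` and `mask_rows`, the salt value, and the sample rows are all assumptions made for the example.

```python
import hashlib


def mask_email(email, salt="demo-salt"):
    """Replace an email with a stable fake address.

    The same input always yields the same output, so rows that shared an
    email before masking still share one afterwards.
    """
    digest = hashlib.sha256((salt + email.lower()).encode("utf-8")).hexdigest()
    return f"user_{digest[:10]}@example.com"


def mask_rows(rows, pii_columns):
    """Return copies of the rows with the listed PII columns masked."""
    masked = []
    for row in rows:
        clean = dict(row)  # copy so the original row is left untouched
        for column in pii_columns:
            if clean.get(column) is not None:
                clean[column] = mask_email(clean[column])
        masked.append(clean)
    return masked


if __name__ == "__main__":
    # Hypothetical sample data, not taken from any real database.
    users = [
        {"id": 1, "email": "alice@corp.test", "plan": "pro"},
        {"id": 2, "email": "bob@corp.test", "plan": "free"},
    ]
    for row in mask_rows(users, ["email"]):
        print(row)
```

Hashing with a salt keeps the mapping repeatable across runs, which matters when the same value appears in several related tables. A real tool would also need to handle names, phone numbers, and free-text fields.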

Langfuse

Langfuse is an AI tool that offers the Langfuse TypeScript SDK v4 for building and debugging LLM (large language model) applications. It provides features such as tracing, prompt management, evaluation, and metrics to improve the performance of LLM applications. Langfuse is backed by a team of experts and offers integrations with various platforms and SDKs. The tool aims to simplify the development of complex LLM applications and improve overall efficiency.
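The idea behind tracing can be sketched with a small decorator that records one span per call. This is a generic illustration and not the Langfuse SDK; `traced`, the in-memory `TRACE` list, and `fake_llm_call` are hypothetical names, and a real observability tool would send spans to a backend instead of keeping them in a list.

```python
import functools
import time

# Hypothetical in-memory sink; a real tool would export spans to a server.
TRACE = []


def traced(name):
    """Record the name, duration, and any error of each call as a span."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            error = None
            try:
                return func(*args, **kwargs)
            except Exception as exc:
                error = repr(exc)
                raise  # re-raise so callers still see the failure
            finally:
                TRACE.append({
                    "name": name,
                    "duration_s": time.perf_counter() - start,
                    "error": error,
                })
        return wrapper
    return decorator


@traced("fake_llm_call")
def fake_llm_call(prompt):
    """Stand-in for a model call; it simply upper-cases the prompt."""
    return prompt.upper()


if __name__ == "__main__":
    fake_llm_call("hello")
    print(TRACE)
```

Recording the span in a `finally` block means failed calls are traced as well, which is usually where debugging starts.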

2 - Open Source AI Tools

prompting

This repository contains the official codebase for Bittensor Subnet 1 (SN1) v1.0.0+, released on 22nd January 2024. It defines an incentive mechanism to create a distributed conversational AI for Subnet 1. Validators and miners are based on large language models (LLMs) using internet-scale datasets and goal-driven behavior to drive human-like conversations. The repository requires Python 3.9 or higher and lists the compute requirements for running validators and miners. Users can run miners or validators using specific commands and are encouraged to run on the testnet before deploying on the main network. The repository also highlights limitations and provides resources for understanding the architecture and methodology of SN1.

dagger

Dagger is an open-source runtime for composable workflows, ideal for systems requiring repeatability, modularity, observability, and cross-platform support. It features a reproducible execution engine, a universal type system, a powerful data layer, native SDKs for multiple languages, an open ecosystem, an interactive command-line environment, batteries-included observability, and seamless integration with various platforms and frameworks. It also offers LLM augmentation for connecting to LLM endpoints. Dagger is suitable for AI agents and CI/CD workflows.

20 - OpenAI GPTs

Linux Kernel Expert

Formal and professional Linux Kernel Expert, adept in technical jargon.

42 C C++

A coding assistant specializing in C and C++98, offering guidance and explanations.

God's Code

A god of coding, programming, IDE, libraries, software kits for modern development.

Xilinx FPGA Assistant

Expert in Xilinx FPGA development, catering to all experience levels.

Shell Mentor

An AI GPT model designed to assist with Shell/Bash programming, providing real-time code suggestions, debugging tips, and script optimization for efficient command-line operations.

Code Like a GOAT 🐐🧙🏻‍♂️

Unleash Your Inner GOAT in Coding! Be the ultimate full-stack developer with unrivaled skills in all coding languages and platforms. Write elegant, secure code, and more. Excel in cybersecurity and innovate with your comprehensive expertise. Ready to code like never before?