Winter

UCI Chess Engine

Stars: 80

Winter is a UCI chess engine that has competed at top invite-only computer chess events. It is the top-rated chess engine from Switzerland, and its level of play is superhuman but below the state of the art reached by large, distributed, and resource-intensive open-source projects like Stockfish and Leela Chess Zero. Winter has relied on many machine learning algorithms and techniques over the course of its development, including certain clustering methods not used in any other chess programs, such as Gaussian Mixture Models and Soft K-Means. As of Winter 0.6.2, the evaluation function relies on a small neural network for more precise evaluations.

README:

Winter is a UCI chess engine.

It is the top-rated chess engine from Switzerland and has competed at top invite-only computer chess events such as the Top Chess Engine Championship and the Chess.com Computer Chess Championship. Its level of play is superhuman, but below the state of the art reached by the large, distributed and resource-intensive open-source projects Stockfish and Leela Chess Zero.

Winter has relied on many machine learning algorithms and techniques over the course of its development. This includes certain clustering methods not used in any other chess programs, such as Gaussian Mixture Models and Soft K-Means.

As of Winter 0.6.2, the evaluation function relies on a small neural network for more precise evaluations.

To run Winter on Linux, compile it with "make" in the root directory and then run the resulting binary from the root directory.

The makefile assumes you are making a native build. If you are building for a different system, it should be reasonably straightforward to modify the makefile yourself.

Winter does not rely on any external libraries aside from the Standard Template Library. All algorithms have been implemented from scratch. As of Winter 0.6.2 I have started to build an external codebase for neural network training.

Compiling for Android is only slightly more complicated than compiling for Linux. Follow these instructions.

- Download and extract Android's NDK.

- Export your NDK path. For example

$ export PATH=$PATH:$HOME/android-ndk-r21d/toolchains/llvm/prebuilt/linux-x86_64/bin

- Switch to the Winter root directory

$ cd Winter

- Get the sse2neon header file.

$ wget -O src/learning/sse2neon.h https://raw.githubusercontent.com/DLTcollab/sse2neon/master/sse2neon.h

- Build!

$ make ARCH=aarch64

(64-bit) or

$ make ARCH=armv7a

(32-bit)

$ aarch64-linux-android-strip Winter

Winter versions 0.7 and later have support for contempt settings. In most engines contempt is used to reduce the number of draws and thus increase performance against weaker engines, often at the cost of performance in self play or against stronger opposition.

Winter uses a novel contempt implementation that utilizes the fact that Winter calculates win, draw and loss probabilities. Increasing contempt in Winter reduces how much it values draws for itself and increases how much it believes the opponent values draws.
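The idea of valuing draws asymmetrically can be sketched in a few lines of Python. This is only an illustration, not Winter's actual implementation: the function names, the contempt range of [-1, 1], and the linear mapping from contempt to draw value are all assumptions made for this example.

```python
def draw_values(contempt):
    """Map a contempt setting in [-1, 1] to (own, opponent) draw values.

    Hypothetical mapping: contempt 0 gives the usual 0.5 for both sides,
    positive contempt lowers our own draw value and raises the value we
    assume the opponent assigns to a draw.
    """
    if not -1.0 <= contempt <= 1.0:
        raise ValueError("contempt must lie in [-1, 1]")
    own = 0.5 * (1.0 - contempt)
    return own, 1.0 - own


def expected_score(p_win, p_draw, p_loss, contempt=0.0):
    """Expected score for the side to move, given WDL probabilities."""
    if abs(p_win + p_draw + p_loss - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    own_draw, _ = draw_values(contempt)
    return p_win + own_draw * p_draw


# A drawish position: 20% win, 60% draw, 20% loss.
neutral = expected_score(0.2, 0.6, 0.2)                     # about 0.5
contemptuous = expected_score(0.2, 0.6, 0.2, contempt=0.5)  # about 0.35
```

Under this assumed mapping, the same position looks worse for the side to move once contempt is positive, because the draw mass is worth less to it.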

Disabled as of version v0.8.1. If I don't remember to add this again before v0.9, please pester me with an issue on GitHub!

Internally Winter actually tends to have a negative score for positive contempt and vice versa. This is natural as positive contempt is reducing the value of a draw for the side to move, so the score will be negatively biased.

In order to have more realistic score outputs, Winter does a bias adjustment for non-mate scores. The formula assumes a maximum probability for a draw and readjusts the score based on that. This results in an overcorrection: positive contempt values will result in a reported upper bound score and negative contempt values will result in a reported lower bound score.

For high contempt values it is recommended to adjust adjudication settings.

An increasingly popular format in human chess is Armageddon. To the author's knowledge, Winter is the first engine to natively support Armageddon play as a UCI option. Internally this works by setting contempt to a high positive value when playing white and a high negative value when playing black.
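In Armageddon a drawn game counts as a win for Black, so a draw is worth almost nothing to White and almost a full point to Black. The sign convention described above can be sketched as follows. The function names, the magnitude of 0.75, and the linear contempt-to-draw-value mapping are hypothetical choices for this example and are not taken from Winter's source.

```python
def armageddon_contempt(playing_white, magnitude=0.75):
    """Return a signed contempt value for Armageddon play.

    magnitude is a hypothetical setting in (0, 1]. It is kept below 1
    here to mirror the idea of not using the maximum contempt.
    """
    if not 0.0 < magnitude <= 1.0:
        raise ValueError("magnitude must lie in (0, 1]")
    return magnitude if playing_white else -magnitude


def draw_value(contempt):
    """Assumed linear mapping from contempt to the value of a draw."""
    return 0.5 * (1.0 - contempt)


white_draw = draw_value(armageddon_contempt(True))    # 0.125: a draw is nearly a loss
black_draw = draw_value(armageddon_contempt(False))   # 0.875: a draw is nearly a win
```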

At the moment contempt is not set to the maximum in Armageddon mode. In the limited testing done this proved to perform more consistently. This may change in the future.

In Armageddon mode score recalibration is not performed. The score recalibration formula for regular contempt assumes the contempt pushes the score away from the true symmetrical evaluation. In Armageddon the true eval is not symmetric.

Disabled as of version v0.8.1. If I don't remember to add this again before v0.9, please pester me with an issue on GitHub!

At the moment, training a neural network for use in Winter is only supported in a very limited way. I intend to shortly release the script that was used to train the initial 0.6.2 net.

In the following I describe the steps to get from a pgn game database to a network for Winter.

1. Get and compile the latest pgn-extract by David J. Barnes.

2. Use pgn-extract on your .pgn file with the arguments -Wuci and --notags. This will create a file readable by Winter.

3. Run Winter from the command line. Call gen_eval_csv filename out_filename where filename is the name of the file generated in 2. and out_filename is what Winter should call the generated file. This will create a .csv dataset file (described below) based on pseudo-quiescent positions from the input games.

4. Train a neural network on the dataset. It is recommended to try to train something simple for now. Keep in mind I would like to refrain from making Winter rely on any external libraries.

5. Integrate the network into Winter. In the future I will probably support loading external weight files, but for now you need to replace the appropriate entries in src/net_weights.h.

6. Run make clean and then make.

The structure of the .csv dataset generated in 3. is as follows. The first column is a boolean value indicating whether the player to move won. The second column is a boolean value indicating whether the player to move scored at least a draw. The remaining columns are features which are somewhat sparse. An overview of these features can be found in src/net_evaluation.h.
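As a rough sketch of how such a dataset might be read before training, the two boolean columns can be combined into a single win/draw/loss label. The loader below is not part of Winter. It assumes there is no header row, that booleans are written as 0/1, and that all feature columns are numeric; none of this is confirmed above, so check it against an actual generated file. The name load_eval_csv and the demo rows are made up for illustration.

```python
import csv
import io


def load_eval_csv(handle):
    """Parse dataset rows into (label, features) pairs.

    Column 0: side to move won. Column 1: side to move scored at least
    a draw. Remaining columns: features. Assumes 0/1 booleans, numeric
    features and no header row.
    """
    samples = []
    for row in csv.reader(handle):
        if not row:
            continue  # skip blank lines
        won = float(row[0]) > 0.5
        at_least_draw = float(row[1]) > 0.5
        if won:
            label = "win"
        elif at_least_draw:
            label = "draw"
        else:
            label = "loss"
        features = [float(value) for value in row[2:]]
        samples.append((label, features))
    return samples


# Tiny made-up dataset standing in for a real generated file.
demo = io.StringIO("1,1,0,0,1\n0,1,1,0,0\n0,0,0,2,0\n")
samples = load_eval_csv(demo)
labels = [label for label, _ in samples]  # ['win', 'draw', 'loss']
```

With a real file, the same function could be called as load_eval_csv(open(out_filename, newline="")).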

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for Winter

Similar Open Source Tools

Winter

Winter is a UCI chess engine that has competed at top invite-only computer chess events. It is the top-rated chess engine from Switzerland and has a level of play that is super human but below the state of the art reached by large, distributed, and resource-intensive open-source projects like Stockfish and Leela Chess Zero. Winter has relied on many machine learning algorithms and techniques over the course of its development, including certain clustering methods not used in any other chess programs, such as Gaussian Mixture Models and Soft K-Means. As of Winter 0.6.2, the evaluation function relies on a small neural network for more precise evaluations.

Bagatur

Bagatur chess engine is a powerful Java chess engine that can run on Android devices and desktop computers. It supports the UCI protocol and can be easily integrated into chess programs with user interfaces. The engine is available for download on various platforms and has advanced features like SMP (multicore) support and NNUE evaluation function. Bagatur also includes syzygy endgame tablebases and offers various UCI options for customization. The project started as a personal challenge to create a chess program that could defeat a friend, leading to years of development and improvements.

blackmarlin

Black Marlin is a UCI compliant chess engine fully written in Rust by Doruk Sekercioglu. It supports Chess960 and features a variety of search algorithms, pruning techniques, and evaluation methods. Black Marlin is designed to be efficient and accurate, and it has been shown to perform well against other top chess engines.

deep-seek

DeepSeek is a new experimental architecture for a large language model (LLM) powered internet-scale retrieval engine. Unlike current research agents designed as answer engines, DeepSeek aims to process a vast amount of sources to collect a comprehensive list of entities and enrich them with additional relevant data. The end result is a table with retrieved entities and enriched columns, providing a comprehensive overview of the topic. DeepSeek utilizes both standard keyword search and neural search to find relevant content, and employs an LLM to extract specific entities and their associated contents. It also includes a smaller answer agent to enrich the retrieved data, ensuring thoroughness. DeepSeek has the potential to revolutionize research and information gathering by providing a comprehensive and structured way to access information from the vastness of the internet.

modelbench

ModelBench is a tool for running safety benchmarks against AI models and generating detailed reports. It is part of the MLCommons project and is designed as a proof of concept to aggregate measures, relate them to specific harms, create benchmarks, and produce reports. The tool requires LlamaGuard for evaluating responses and a TogetherAI account for running benchmarks. Users can install ModelBench from GitHub or PyPI, run tests using Poetry, and create benchmarks by providing necessary API keys. The tool generates static HTML pages displaying benchmark scores and allows users to dump raw scores and manage cache for faster runs. ModelBench is aimed at enabling users to test their own models and create tests and benchmarks.

skyeye

SkyEye is an AI-powered Ground Controlled Intercept (GCI) bot designed for the flight simulator Digital Combat Simulator (DCS). It serves as an advanced replacement for the in-game E-2, E-3, and A-50 AI aircraft, offering modern voice recognition, natural-sounding voices, real-world brevity and procedures, a wide range of commands, and intelligent battlespace monitoring. The tool uses Speech-To-Text and Text-To-Speech technology, can run locally or on a cloud server, and is production-ready software used by various DCS communities.

aiohomekit

aiohomekit is a Python library that implements the HomeKit protocol for controlling HomeKit accessories using asyncio. It is primarily used with Home Assistant, targeting the same versions of Python and following their code standards. The library is still under development and does not offer API guarantees yet. It aims to match the behavior of real HAP controllers, even when not strictly specified, and works around issues like JSON formatting, boolean encoding, header sensitivity, and TCP packet splitting. aiohomekit is primarily tested with Phillips Hue and Eve Extend bridges via Home Assistant, but is known to work with many more devices. It does not support BLE accessories and is intended for client-side use only.

qlora-pipe

qlora-pipe is a pipeline parallel training script designed for efficiently training large language models that cannot fit on one GPU. It supports QLoRA, LoRA, and full fine-tuning, with efficient model loading and the ability to load any dataset that Axolotl can handle. The script allows for raw text training, resuming training from a checkpoint, logging metrics to Tensorboard, specifying a separate evaluation dataset, training on multiple datasets simultaneously, and supports various models like Llama, Mistral, Mixtral, Qwen-1.5, and Cohere (Command R). It handles pipeline- and data-parallelism using Deepspeed, enabling users to set the number of GPUs, pipeline stages, and gradient accumulation steps for optimal utilization.

textcoder

Textcoder is a proof-of-concept tool for steganographically encoding secret messages into ordinary text using arithmetic coding based on a statistical model derived from an LLM. It encrypts the secret message to produce a pseudorandom bit stream, which is then decompressed to generate text that appears randomly sampled from the LLM while encoding the secret message in specific token choices.

TFTMuZeroAgent

TFTMuZeroAgent is an implementation of a purely artificial intelligence algorithm to play Teamfight Tactics, an auto chess game made by Riot. It uses a simulation of TFT Set 4 and the MuZero reinforcement learning algorithm. The project provides a multi-agent petting zoo environment where players, pool, and game round classes are designed for AI project. The implementation excludes graphics and sounds but covers all aspects of the game from set 4. The codebase is open for contributions and improvements, allowing for additional models to be added to the environment.

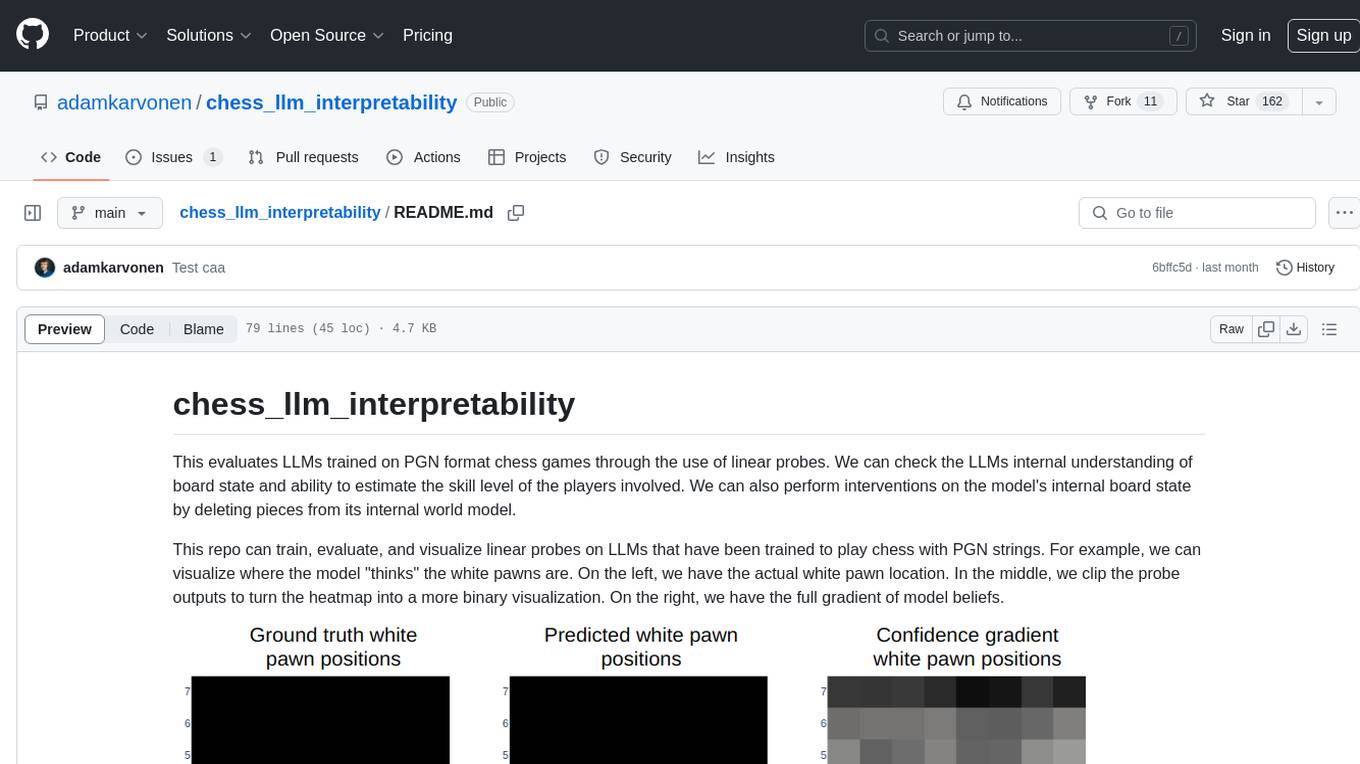

chess_llm_interpretability

This repository evaluates Large Language Models (LLMs) trained on PGN format chess games using linear probes. It assesses the LLMs' internal understanding of board state and their ability to estimate player skill levels. The repo provides tools to train, evaluate, and visualize linear probes on LLMs trained to play chess with PGN strings. Users can visualize the model's predictions, perform interventions on the model's internal board state, and analyze board state and player skill level accuracy across different LLMs. The experiments in the repo can be conducted with less than 1 GB of VRAM, and training probes on the 8 layer model takes about 10 minutes on an RTX 3050. The repo also includes scripts for performing board state interventions and skill interventions, along with useful links to open-source code, models, datasets, and pretrained models.

dota2ai

The Dota2 AI Framework project aims to provide a framework for creating AI bots for Dota2, focusing on coordination and teamwork. It offers a LUA sandbox for scripting, allowing developers to code bots that can compete in standard matches. The project acts as a proxy between the game and a web service through JSON objects, enabling bots to perform actions like moving, attacking, casting spells, and buying items. It encourages contributions and aims to enhance the AI capabilities in Dota2 modding.

llama3_interpretability_sae

This project focuses on implementing Sparse Autoencoders (SAEs) for mechanistic interpretability in Large Language Models (LLMs) like Llama 3.2-3B. The SAEs aim to untangle superimposed representations in LLMs into separate, interpretable features for each neuron activation. The project provides an end-to-end pipeline for capturing training data, training the SAEs, analyzing learned features, and verifying results experimentally. It includes comprehensive logging, visualization, and checkpointing of SAE training, interpretability analysis tools, and a pure PyTorch implementation of Llama 3.1/3.2 chat and text completion. The project is designed for scalability, efficiency, and maintainability.

gpdb

Greenplum Database (GPDB) is an advanced, fully featured, open source data warehouse, based on PostgreSQL. It provides powerful and rapid analytics on petabyte scale data volumes. Uniquely geared toward big data analytics, Greenplum Database is powered by the world’s most advanced cost-based query optimizer delivering high analytical query performance on large data volumes.

reverse-engineering-assistant

ReVA (Reverse Engineering Assistant) is a project aimed at building a disassembler agnostic AI assistant for reverse engineering tasks. It utilizes a tool-driven approach, providing small tools to the user to empower them in completing complex tasks. The assistant is designed to accept various inputs, guide the user in correcting mistakes, and provide additional context to encourage exploration. Users can ask questions, perform tasks like decompilation, class diagram generation, variable renaming, and more. ReVA supports different language models for online and local inference, with easy configuration options. The workflow involves opening the RE tool and program, then starting a chat session to interact with the assistant. Installation includes setting up the Python component, running the chat tool, and configuring the Ghidra extension for seamless integration. ReVA aims to enhance the reverse engineering process by breaking down actions into small parts, including the user's thoughts in the output, and providing support for monitoring and adjusting prompts.

llm_steer

LLM Steer is a Python module designed to steer Large Language Models (LLMs) towards specific topics or subjects by adding steer vectors to different layers of the model. It enhances the model's capabilities, such as providing correct responses to logical puzzles. The tool should be used in conjunction with the transformers library. Users can add steering vectors to specific layers of the model with coefficients and text, retrieve applied steering vectors, and reset all steering vectors to the initial model. Advanced usage involves changing default parameters, but it may lead to the model outputting gibberish in most cases. The tool is meant for experimentation and can be used to enhance role-play characteristics in LLMs.

For similar tasks

Winter

Winter is a UCI chess engine that has competed at top invite-only computer chess events. It is the top-rated chess engine from Switzerland and has a level of play that is super human but below the state of the art reached by large, distributed, and resource-intensive open-source projects like Stockfish and Leela Chess Zero. Winter has relied on many machine learning algorithms and techniques over the course of its development, including certain clustering methods not used in any other chess programs, such as Gaussian Mixture Models and Soft K-Means. As of Winter 0.6.2, the evaluation function relies on a small neural network for more precise evaluations.

blackmarlin

Black Marlin is a UCI compliant chess engine fully written in Rust by Doruk Sekercioglu. It supports Chess960 and features a variety of search algorithms, pruning techniques, and evaluation methods. Black Marlin is designed to be efficient and accurate, and it has been shown to perform well against other top chess engines.

CameraChessWeb

Camera Chess Web is a tool that allows you to use your phone camera to replace chess eBoards. With Camera Chess Web, you can broadcast your game to Lichess, play a game on Lichess, or digitize a chess game from a video or live stream. Camera Chess Web is free to download on Google Play.

For similar jobs

Winter

Winter is a UCI chess engine that has competed at top invite-only computer chess events. It is the top-rated chess engine from Switzerland and has a level of play that is super human but below the state of the art reached by large, distributed, and resource-intensive open-source projects like Stockfish and Leela Chess Zero. Winter has relied on many machine learning algorithms and techniques over the course of its development, including certain clustering methods not used in any other chess programs, such as Gaussian Mixture Models and Soft K-Means. As of Winter 0.6.2, the evaluation function relies on a small neural network for more precise evaluations.

blackmarlin

Black Marlin is a UCI compliant chess engine fully written in Rust by Doruk Sekercioglu. It supports Chess960 and features a variety of search algorithms, pruning techniques, and evaluation methods. Black Marlin is designed to be efficient and accurate, and it has been shown to perform well against other top chess engines.