Best AI tools for Safety

20 - AI tool Sites

European Agency for Safety and Health at Work

The European Agency for Safety and Health at Work (EU-OSHA) is an EU agency that provides information, statistics, legislation, and risk assessment tools on occupational safety and health (OSH). The agency's mission is to make Europe's workplaces safer, healthier, and more productive.

Voxel's Safety Intelligence Platform

Voxel's Safety Intelligence Platform is an AI-driven site intelligence platform that helps safety and operations leaders make strategic decisions. It provides real-time visibility into critical safety practices, custom insights through on-demand dashboards, and collaborative tools for risk management, and it aims to foster a sustainable safety culture. The platform is designed to help enterprises reduce risk, increase efficiency, and improve workforce safety.

Center for AI Safety (CAIS)

The Center for AI Safety (CAIS) is a research and field-building nonprofit based in San Francisco. Its mission is to reduce societal-scale risks associated with artificial intelligence (AI) by conducting research, building the field of AI safety researchers, and advocating for safety standards. It offers resources such as a compute cluster for AI/ML safety projects, a blog with in-depth examinations of AI safety topics, and a newsletter with updates on AI safety developments. CAIS focuses on technical and conceptual research to address the risks posed by advanced AI systems.

Center for AI Safety (CAIS)

The Center for AI Safety (CAIS) is a research and field-building nonprofit organization based in San Francisco. It conducts research and advocacy projects and provides resources to reduce societal-scale risks associated with artificial intelligence (AI). CAIS focuses on technical AI safety research and field-building projects, and it offers a compute cluster for AI/ML safety projects. It aims to ensure that AI is developed and used safely for the benefit of society, addressing its inherent risks and advocating for safety standards.

AI Safety Initiative

The AI Safety Initiative is a coalition of experts that aims to develop and deliver AI guidance and tools that help organizations deploy safe, responsible, and compliant AI solutions. Drawing on vendor-neutral research, training programs, and global industry experts, the initiative publishes AI best practices and tools, and it offers certifications, training, and resources to help organizations navigate AI governance, compliance, and security. Its focus areas include AI technology, risk, governance, compliance, controls, and organizational responsibilities.

SC Training

SC Training, formerly known as EdApp, is a mobile learning management system with features for both administrators and learners. The platform provides tools for creating, managing, and tracking training courses, with a strong focus on microlearning and gamification, so that bite-sized, engaging content can be accessed anytime, anywhere, on any device. It also uses AI to streamline course creation and improve the learning experience. With a diverse course library, practical assessments, and group training capabilities, SC Training is designed to help organizations deliver effective and efficient training programs.

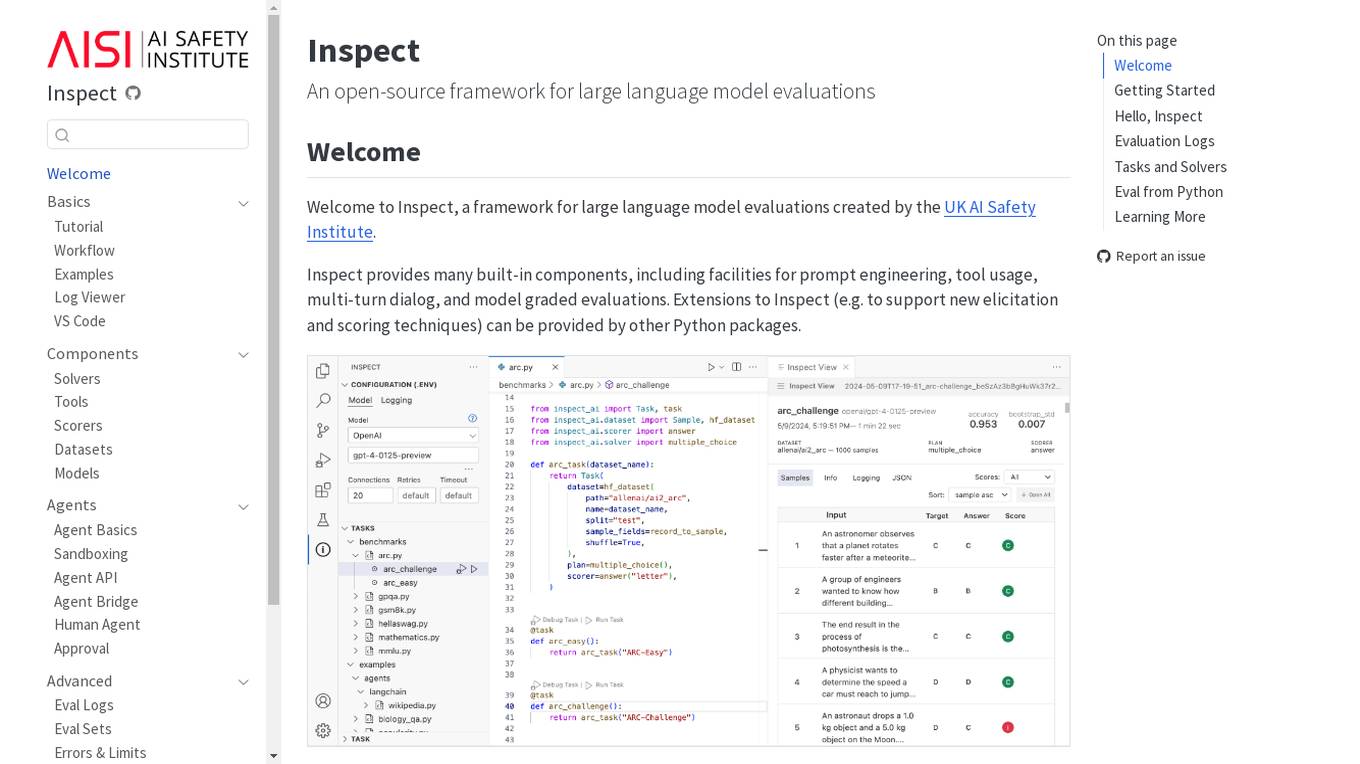

Inspect

Inspect is an open-source framework for large language model (LLM) evaluations created by the UK AI Safety Institute. It provides built-in components for prompt engineering, tool usage, multi-turn dialog, and model-graded evaluations. Users can combine its solvers, tools, scorers, datasets, and models to build advanced evaluations, and Inspect can be extended with new elicitation and scoring techniques through Python packages.

Visionify.ai

Visionify.ai is a Vision AI application designed to improve workplace safety and compliance through AI-driven video monitoring. The platform offers more than 60 Vision AI scenarios covering hazard warnings, worker health, compliance policies, environment monitoring, vehicle monitoring, and suspicious activity detection. It supports EHS (environment, health, and safety) professionals with continuous monitoring, real-time alerts, proactive hazard identification, and privacy-focused data security measures. The application turns ordinary cameras into safety monitors that deliver instant alerts and video analytics tailored to safety needs.

SWMS AI

SWMS AI is an AI-powered safety risk assessment tool that helps businesses streamline compliance and improve safety. It draws on a large knowledge base of occupational safety resources, codes of practice, risk assessments, and safety documents to generate risk assessments tailored to a specific project, trade, and industry. SWMS AI can be customized to a company's policies so that the documents it generates align with the company's own safety standards and requirements.

Kami Home

Kami Home is an AI-powered home security application. It offers smart alerts, secure cloud video storage, and a Pro Security Alarm system with 24/7 emergency response. The application uses AI vision to detect people, vehicles, and animals, so users receive custom alerts for relevant activity. With features such as Fall Detect for seniors living at home, Kami Home aims to protect families and provide peace of mind.

Turing AI

Turing AI is a cloud-based video security system powered by artificial intelligence. It offers a range of AI-powered video surveillance products and solutions to improve safety, security, and operations. The platform provides smart video search, real-time alerts, instant video sharing, and hardware compatible with a variety of cameras. With flexible licensing options and integration with third-party devices, Turing AI serves customers across many industries.

Frontier Model Forum

The Frontier Model Forum (FMF) is a collaborative effort among leading AI companies to advance AI safety and responsibility. It brings together technical and operational expertise to advance AI safety research, identify best practices, collaborate across sectors, and support the development of AI applications that help meet society's most pressing challenges.

SEA.AI

SEA.AI provides machine vision for safety at sea. It combines the latest camera technology with artificial intelligence to detect and classify objects on the water's surface, including unsignalled craft, floating obstacles, buoys, kayaks, and persons overboard. The company offers solutions for sailing, commercial, and motor vessels as well as for maritime surveillance, search and rescue, and government use. SEA.AI aims to make navigation safer and more convenient by detecting potential hazards at sea early.

Recognito

Recognito is a facial recognition technology provider that promotes its face recognition algorithm as ranked first ("Top 1") in the NIST Face Recognition Vendor Test (FRVT). Its biometric technology is used by police forces and security services to enhance public safety and monitor the movements of individuals, and by businesses to improve audience analytics. According to the vendor, the software goes beyond object detection to provide detailed user role descriptions and develop user flows. The application enables rapid recognition of face and body attributes, video analytics, and AI-based analysis. Focusing on security, daily life, and business improvements, Recognito aims to help create safer and more prosperous cities.

DisplayGateGuard

DisplayGateGuard is an AI-powered brand safety and suitability provider that helps advertisers choose the right placements, isolate fraudulent websites, and enhance brand safety. By leveraging artificial intelligence, the platform offers curated inclusion and exclusion lists to provide deeper insights into the environments and contexts where ads are shown, ensuring campaigns reach the right audience effectively.

EdgeDX

EdgeDX is a provider of Edge AI video analysis solutions, specializing in security and surveillance, construction and logistics safety, efficient store management, public safety management, and intelligent transportation systems. The application offers more than 50 AI apps capable of advanced human behavior analysis, supports various protocols and VMS (video management systems), and provides features such as a P2P-based mobile alarm viewer, LTE and GPS support, and internal recording to an M.2 NVMe SSD. EdgeDX aims to protect customer assets, ensure safety, and enable straightforward integration through AI Bridge.

Anthropic

Anthropic is an AI safety and research company based in San Francisco. Its interdisciplinary team has experience across ML, physics, policy, and product, and together it generates research and builds reliable, beneficial AI systems.

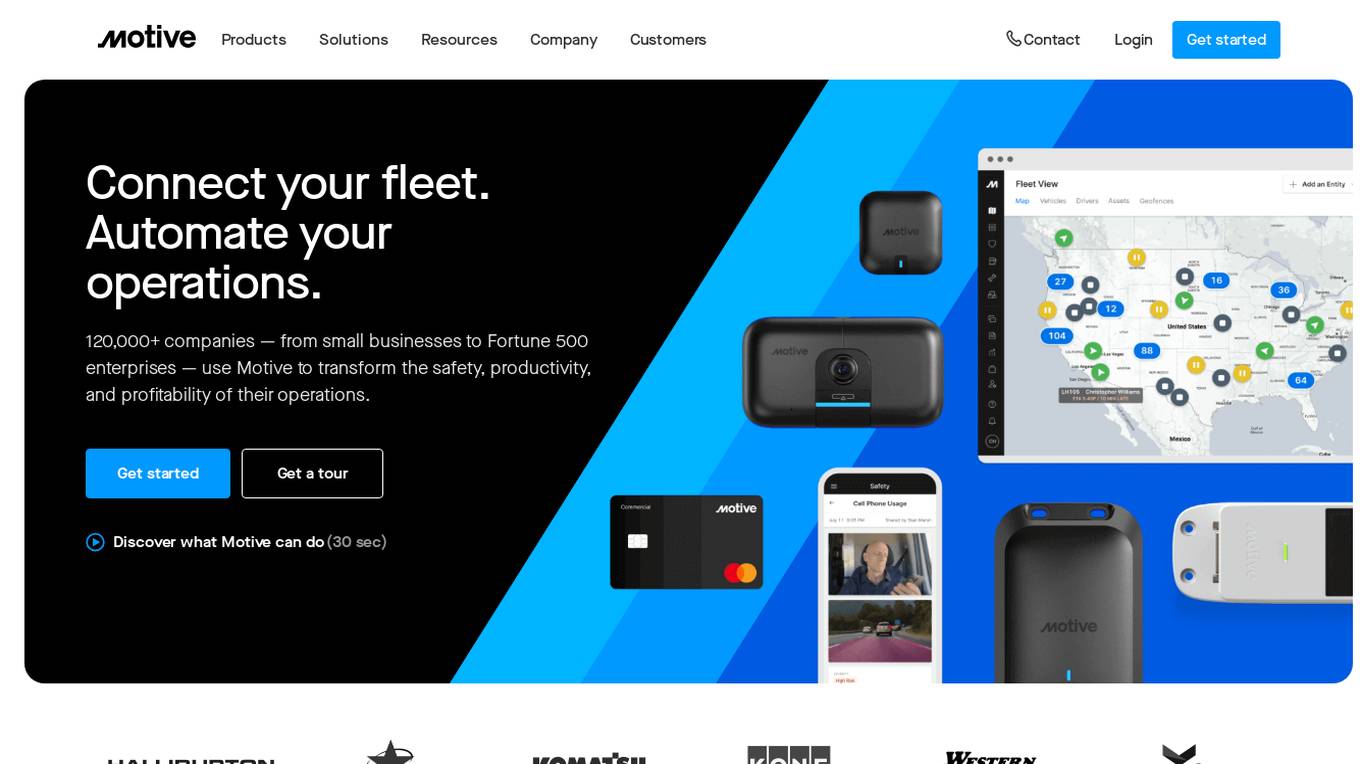

Motive

Motive is an all-in-one fleet management platform that provides businesses with a variety of tools to help them improve safety, efficiency, and profitability. Motive's platform includes features such as AI-powered dashcams, ELD compliance, GPS fleet tracking, equipment monitoring, and fleet card management. Motive's platform is used by over 120,000 companies, including small businesses and Fortune 500 enterprises.

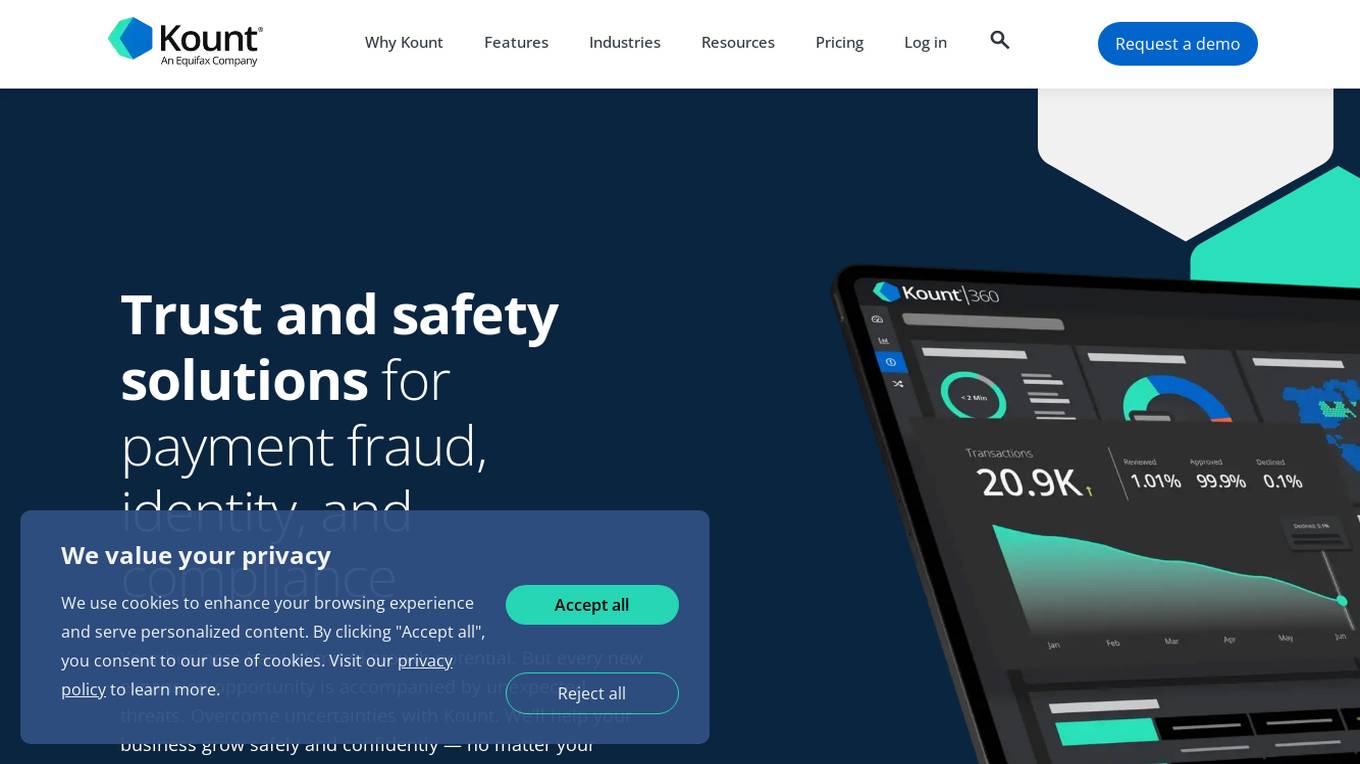

Kount

Kount is a comprehensive trust and safety platform that offers solutions for fraud detection, chargeback management, identity verification, and compliance. With advanced artificial intelligence and machine learning capabilities, Kount provides businesses with robust data and customizable policies to protect against various threats. The platform is suitable for industries such as ecommerce, health care, online learning, gaming, and more, offering personalized solutions to meet individual business needs.

Storytell.ai

Storytell.ai is an enterprise-grade AI platform that aims to boost productivity for employees and teams by bringing business-grade intelligence to their data. It provides a secure environment with features such as project spaces, multi-LLM chat, task automation, chat with company data, and an enterprise AI security suite. Storytell.ai protects data through end-to-end encryption, encryption at rest, provenance chain tracking, and an AI firewall. It states that it does not train LLMs on user data, and it provides audit logs for accountability. The platform continuously monitors and updates its security protocols to stay ahead of potential threats.

0 - Open Source AI Tools

20 - OpenAI GPTs

Canadian Film Industry Safety Expert

A film studio safety expert offering guidance on regulations and practices.

The Building Safety Act Bot (Beta)

Simplifying the BSA for your project. Created by www.arka.works

Brand Safety Audit

Get a detailed risk analysis for public relations, marketing, and internal communications, identifying challenges and negative impacts to refine your messaging strategy.

GPT Safety Liaison

A liaison GPT for AI safety emergencies, connecting users to OpenAI experts.

Travel Safety Advisor

An up-to-date travel safety advisor that uses web data and avoids subjective advice.

香港地盤安全佬 HK Construction Site Safety Advisor

Upload a site photo to assess potential hazards and get advice from an experienced AI safety officer.

Emergency Training

Provides emergency training assistance with a focus on safety and clear guidelines.

Dog Safe: Can My Dog Eat This?

Your expert guide to dog safety: find out which foods are safe for dogs to eat. You may be surprised!