vector-search-class-notes

Class notes for the course "Long Term Memory in AI - Vector Search and Databases" (COS 597A, Princeton, Fall 2023).

The 'vector-search-class-notes' repository contains class materials for a course on Long Term Memory in AI, focusing on vector search and databases. The course covers theoretical foundations and practical implementation of vector search applications, algorithms, and systems. It explores the intersection of Artificial Intelligence and Database Management Systems, with topics including text embeddings, image embeddings, low dimensional vector search, dimensionality reduction, approximate nearest neighbor search, clustering, quantization, and graph-based indexes. The repository also includes information on the course syllabus, project details, selected literature, and contributions from industry experts in the field.

README:

NOTE: COS 597A class times have changed for the Fall 2023 semester. Classes will be held from 9am to 12 noon.

-

Edo Liberty, Founder and CEO of Pinecone, the world's leading Vector Database. Publications.

-

Matthijs Douze, Research Scientist at Meta. Architect and main developer of FAISS, the most popular and advanced open source library for vector search. Publications.

-

Teaching assistant Nataly Brukhim, PhD student working with Prof. Elad Hazan and researcher at Google AI Princeton. Email: [email protected]. Publications.

-

Guest lecturer Harsha Vardhan Simhadri, Senior Principal Researcher at Microsoft Research. Creator of DiskANN. Publications.

Long Term Memory is a foundational capability in the modern AI stack. At their core, these systems use vector search. Vector search is also a basic tool for systems that manipulate large collections of media, such as search engines, knowledge bases, content moderation tools, and recommendation systems. As such, the discipline lies at the intersection of Artificial Intelligence and Database Management Systems. This course will cover the theoretical foundations and practical implementation of vector search applications, algorithms, and systems. Students will be evaluated on a final project and an in-class presentation.

All class materials are intended to be used freely by academics anywhere, students and professors alike. Please contribute in the form of pull requests or by opening issues.

https://github.com/edoliberty/vector-search-class-notes

On Unix-like systems (e.g., macOS) with bibtex and pdflatex available, you should be able to build the notes by running:

git clone git@github.com:edoliberty/vector-search-class-notes.git

cd vector-search-class-notes

./build

-

9/8 - Class 1 - Introduction to Vector Search [Matthijs + Edo + Nataly]

- Intro to the course: Topic, Schedule, Project, Grading, ...

- Embeddings as an information bottleneck. Instead of learning end-to-end, use embeddings as an intermediate representation

- Advantages: scalability, instant updates, and explainability

- Typical volumes of data and scalability. Embeddings are the only way to manage / access large databases

- The embedding contract: the embedding extractor and embedding indexer agree on the meaning of the distance. Separation of concerns.

- The vector space model in information retrieval

- Vector embeddings in machine learning

- Define vector, vector search, ranking, retrieval, recall
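The last item above is easier to pin down with a concrete example. The NumPy sketch below uses squared Euclidean distance as the similarity measure, which is an assumption. Exact (brute-force) k-nearest-neighbor search supplies the ground truth, and recall@k measures what fraction of those true neighbors an approximate method returns. The helper names `exact_knn` and `recall_at_k` are illustrative and do not come from the course materials.

```python
import numpy as np

def exact_knn(xb: np.ndarray, xq: np.ndarray, k: int) -> np.ndarray:
    """Brute-force k-NN under squared L2 distance. Returns (nq, k) ids, closest first."""
    # ||q - x||^2 = ||q||^2 - 2 q.x + ||x||^2, evaluated for all query/database pairs
    d2 = (xq ** 2).sum(1)[:, None] - 2 * xq @ xb.T + (xb ** 2).sum(1)[None, :]
    idx = np.argpartition(d2, k - 1, axis=1)[:, :k]            # k smallest, unordered
    order = np.take_along_axis(d2, idx, axis=1).argsort(axis=1)
    return np.take_along_axis(idx, order, axis=1)               # sort those k by distance

def recall_at_k(approx: np.ndarray, exact: np.ndarray) -> float:
    """Fraction of the true k nearest neighbors that appear in the approximate result."""
    nq, k = exact.shape
    hits = sum(len(set(a.tolist()) & set(e.tolist())) for a, e in zip(approx, exact))
    return hits / (nq * k)

rng = np.random.default_rng(0)
xb = rng.standard_normal((1000, 32))   # database vectors
xq = rng.standard_normal((5, 32))      # query vectors
ground_truth = exact_knn(xb, xq, k=10)
# A deliberately crude "approximate" method: exact search over only the first half of the database
approx = exact_knn(xb[:500], xq, k=10)
print(recall_at_k(approx, ground_truth))
```

The crude method only sees half of the vectors, so its expected recall is about 0.5. Real ANN indexes make a similar trade: they give up some recall in exchange for scanning less data.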

-

9/15 - Class 2 - Text embeddings [Matthijs]

- 2-layer word embeddings. Word2vec and fastText, obtained via a factorization of a co-occurrence matrix. Embedding arithmetic: king + woman - man ≈ queen (already based on similarity search)

- Sentence embeddings: How to train, masked LM. Properties of sentence embeddings.

- Large Language Models: reasoning as an emergent property of an LM. What happens when the training set is the whole web
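Embedding arithmetic can be sketched as a nearest-neighbor query. The 4-dimensional vectors below are hand-made toy values whose axes are interpretable (royalty, male, female, fruit). They exist only for illustration. Trained word2vec or fastText vectors are learned, not interpretable, and much higher-dimensional. The query vector is formed from the raw vectors, and candidates are then ranked by cosine similarity. Following a common convention, the three query words are excluded from the results. The `analogy` helper is hypothetical.

```python
import numpy as np

# Hypothetical toy "embeddings" with hand-picked axes: (royalty, male, female, fruit)
vocab = {
    "king":     [1.0, 1.0, 0.0, 0.0],
    "queen":    [1.0, 0.0, 1.0, 0.0],
    "man":      [0.0, 1.0, 0.0, 0.0],
    "woman":    [0.0, 0.0, 1.0, 0.0],
    "prince":   [0.8, 1.0, 0.0, 0.0],
    "princess": [0.8, 0.0, 1.0, 0.0],
    "apple":    [0.0, 0.0, 0.0, 1.0],
}
words = list(vocab)
E = np.array([vocab[w] for w in words])
En = E / np.linalg.norm(E, axis=1, keepdims=True)  # unit rows: dot product = cosine

def analogy(a: str, b: str, c: str) -> str:
    """'a is to b as c is to ?': nearest word (cosine) to b - a + c, excluding a, b, c."""
    q = E[words.index(b)] - E[words.index(a)] + E[words.index(c)]
    scores = En @ (q / np.linalg.norm(q))
    for w in (a, b, c):
        scores[words.index(w)] = -np.inf
    return words[int(np.argmax(scores))]

print(analogy("man", "king", "woman"))  # king - man + woman -> queen
```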

-

9/22 - Class 3 - Image embeddings [Matthijs]

- Pixel structures of images. Early works on direct pixel indexing

- Traditional CV models. Global descriptors (GIST). Local descriptors (SIFT and friends). Direct indexing of local descriptors for image matching, local descriptor pooling (Fisher, VLAD)

- Convolutional Neural Nets. Off-the-shelf models. Trained specifically (contrastive learning, self-supervised learning)

- Modern Computer Vision models
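Local descriptor pooling turns a variable number of local descriptors into a single fixed-size global vector. That vector can then be indexed like any other embedding. Below is a minimal sketch of VLAD. It assumes the K centroids were already learned, for example by k-means on training descriptors; the random centroids in the usage lines are placeholders. The signed square root is a commonly used optional normalization and is not part of every VLAD variant. The function name `vlad` is illustrative.

```python
import numpy as np

def vlad(descriptors: np.ndarray, centroids: np.ndarray, power_norm: bool = True) -> np.ndarray:
    """Aggregate n local descriptors (n, d) into one K*d VLAD vector.

    centroids (K, d) are assumed to come from k-means on training descriptors.
    """
    K, d = centroids.shape
    # assign every descriptor to its nearest centroid (squared L2)
    d2 = ((descriptors[:, None, :] - centroids[None, :, :]) ** 2).sum(-1)
    assign = d2.argmin(axis=1)
    v = np.zeros((K, d))
    for k in range(K):
        members = descriptors[assign == k]
        if len(members):
            v[k] = (members - centroids[k]).sum(axis=0)  # sum of residuals to centroid k
    v = v.ravel()
    if power_norm:
        v = np.sign(v) * np.sqrt(np.abs(v))  # optional signed square root
    n = np.linalg.norm(v)
    return v / n if n > 0 else v             # final L2 normalization

rng = np.random.default_rng(0)
centroids = rng.standard_normal((16, 128))          # placeholder for a k-means codebook
image_descriptors = rng.standard_normal((300, 128))  # e.g. 300 SIFT-like 128-d descriptors
g = vlad(image_descriptors, centroids)
print(g.shape)  # (2048,)
```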

-

9/29 - Class 4 - Low Dimensional Vector Search [Edo]

- Vector search problem definition

- k-d tree, space partitioning data structures

- Worst case proof for kd-trees

- Probabilistic inequalities. Recap of basic inequalities: Markov, Chernoff, Hoeffding

- Concentration of measure phenomena. Orthogonality of random vectors in high dimensions

- Curse of dimensionality and the failure of space partitioning
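Below is a minimal sketch of a k-d tree with exact 1-nearest-neighbor search. It uses median splits on axes cycled by depth, which is one common construction. The names `build` and `nearest` are illustrative. The pruning test near the end of `nearest` is what makes k-d trees fast in low dimensions. As the dimension grows, the query ball tends to cross more splitting planes, and the search degrades toward a full scan. This is the failure of space partitioning listed above.

```python
import numpy as np

class KDNode:
    __slots__ = ("idx", "axis", "left", "right")

    def __init__(self, idx, axis, left, right):
        self.idx, self.axis, self.left, self.right = idx, axis, left, right

def build(points, indices=None, depth=0):
    """Recursively split at the median along the axis depth % dim."""
    if indices is None:
        indices = np.arange(len(points))
    if len(indices) == 0:
        return None
    axis = depth % points.shape[1]
    order = indices[np.argsort(points[indices, axis])]
    mid = len(order) // 2
    return KDNode(order[mid], axis,
                  build(points, order[:mid], depth + 1),      # coords <= median
                  build(points, order[mid + 1:], depth + 1))  # coords >= median

def nearest(node, points, q, best=(None, np.inf)):
    """Exact 1-NN: descend toward q first, then visit the far side only if the
    splitting plane is closer than the best squared distance found so far."""
    if node is None:
        return best
    p = points[node.idx]
    d2 = float(((p - q) ** 2).sum())
    if d2 < best[1]:
        best = (node.idx, d2)
    diff = q[node.axis] - p[node.axis]
    near, far = (node.left, node.right) if diff < 0 else (node.right, node.left)
    best = nearest(near, points, q, best)
    if diff ** 2 < best[1]:  # the ball around q crosses the splitting plane
        best = nearest(far, points, q, best)
    return best

rng = np.random.default_rng(0)
pts = rng.random((2000, 3))
tree = build(pts)
q = rng.random(3)
i, d2 = nearest(tree, pts, q)
print(i, ((pts - q) ** 2).sum(1).argmin())  # both ids should agree
```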

-

10/6 - Class 5 - Dimensionality Reduction [Edo]

- Singular Value Decomposition (SVD)

- Applications of the SVD

- Rank-k approximation in the spectral norm

- Rank-k approximation in the Frobenius norm

- Linear regression in the least-squares loss

- PCA: optimal squared-loss dimension reduction

- Closest orthogonal matrix

- Computing the SVD: The power method

- Random projection

- Matrices with normally distributed independent entries

- Fast Random Projections
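Below is a minimal sketch of a dense Gaussian random projection with i.i.d. N(0, 1/k) entries. This is one standard choice; sparse and fast transforms are alternatives. By the Johnson-Lindenstrauss lemma, k = O(log(n)/ε²) dimensions suffice to preserve all pairwise distances among n points up to a factor of 1 ± ε with high probability. The usage lines simply measure the resulting distortion empirically. The function names are illustrative.

```python
import numpy as np

def gaussian_projection(X: np.ndarray, k: int, seed: int = 0) -> np.ndarray:
    """Map rows of X (n, d) to k dimensions with a random matrix of N(0, 1/k) entries.

    The 1/sqrt(k) scaling makes E[||x R||^2] = ||x||^2 for every fixed x.
    """
    rng = np.random.default_rng(seed)
    R = rng.standard_normal((X.shape[1], k)) / np.sqrt(k)
    return X @ R

def pairwise_distances(X: np.ndarray) -> np.ndarray:
    sq = (X ** 2).sum(1)
    return np.sqrt(np.maximum(sq[:, None] - 2 * X @ X.T + sq[None, :], 0.0))

rng = np.random.default_rng(1)
X = rng.standard_normal((40, 1000))   # 40 points in 1000 dimensions
Y = gaussian_projection(X, k=400)
iu = np.triu_indices(len(X), k=1)     # each unordered pair once
ratios = pairwise_distances(Y)[iu] / pairwise_distances(X)[iu]
print(ratios.min(), ratios.max())     # distance distortion after projection
```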

-

10/13 - No Class - Midterm Examination Week

-

10/20 - No Class - Fall Recess

-

10/27 - Class 6 - Approximate Nearest Neighbor Search [Edo]

- Definition of Approximate Nearest Neighbor Search (ANNS)

- Criteria: Speed / accuracy / memory usage / updateability / index construction time

- Definition of Locality Sensitive Hashing and examples

- The LSH Algorithm

- LSH Analysis, proof of correctness, and asymptotics
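As one example of a locality sensitive family, below is a minimal sketch of random-hyperplane LSH for cosine similarity (Charikar, 2002). Each bit is the sign of a random projection, and two vectors at angle θ agree on a given bit with probability 1 - θ/π. Concatenating bits into keys and querying several tables is the usual LSH amplification. Candidates are then re-ranked exactly. The class name and the values of `n_bits` and `n_tables` are illustrative choices, not tuned settings.

```python
from collections import defaultdict

import numpy as np

class HyperplaneLSH:
    """Random-hyperplane LSH: n_tables hash tables, each keyed by n_bits sign bits."""

    def __init__(self, dim, n_bits=8, n_tables=10, seed=0):
        rng = np.random.default_rng(seed)
        self.planes = rng.standard_normal((n_tables, n_bits, dim))
        self.tables = [defaultdict(list) for _ in range(n_tables)]
        self.data = None

    def _keys(self, x):
        bits = (self.planes @ x) > 0             # (n_tables, n_bits) sign bits
        return [row.tobytes() for row in bits]  # one hashable key per table

    def fit(self, X):
        self.data = X
        for i, x in enumerate(X):
            for table, key in zip(self.tables, self._keys(x)):
                table[key].append(i)
        return self

    def query(self, q, k=1):
        cand = set()
        for table, key in zip(self.tables, self._keys(q)):
            cand.update(table.get(key, []))     # union of colliding buckets
        if not cand:
            return []
        cand = np.fromiter(cand, dtype=int)
        vecs = self.data[cand]
        sims = vecs @ q / (np.linalg.norm(vecs, axis=1) * np.linalg.norm(q))
        return cand[np.argsort(-sims)[:k]].tolist()  # exact re-ranking by cosine

rng = np.random.default_rng(0)
X = rng.standard_normal((2000, 64))
index = HyperplaneLSH(dim=64).fit(X)
q = X[17] + 0.01 * rng.standard_normal(64)  # a near-duplicate of vector 17
print(index.query(q, k=1))
```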

-

11/3 - Class 7 - Clustering [Edo]

- K-means clustering - mean squared error criterion.

- Lloyd’s Algorithm

- k-means and PCA

- ε-net argument for fixed dimensions

- Sampling based seeding for k-means

- k-means++

- The Inverted File Model (IVF)
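The minimal NumPy sketch below ties the items above together, using squared Euclidean distance. It covers k-means++ seeding, Lloyd iterations on the mean squared error, and an inverted file that scans only the clusters closest to the query. The function names and the fixed iteration count are illustrative. Libraries such as FAISS provide optimized implementations.

```python
import numpy as np

def kmeans_pp_init(X, k, rng):
    """k-means++: sample each new center with probability proportional to the
    squared distance to the nearest center chosen so far."""
    centers = [X[rng.integers(len(X))]]
    d2 = ((X - centers[0]) ** 2).sum(1)
    for _ in range(1, k):
        i = rng.choice(len(X), p=d2 / d2.sum())
        centers.append(X[i])
        d2 = np.minimum(d2, ((X - X[i]) ** 2).sum(1))
    return np.array(centers)

def lloyd(X, k, n_iter=20, seed=0):
    """Lloyd's algorithm: alternate nearest-center assignment and mean update."""
    rng = np.random.default_rng(seed)
    C = kmeans_pp_init(X, k, rng)
    for _ in range(n_iter):
        assign = ((X[:, None, :] - C[None, :, :]) ** 2).sum(-1).argmin(1)
        for j in range(k):
            if np.any(assign == j):  # keep the old center if a cluster empties
                C[j] = X[assign == j].mean(0)
    d2 = ((X[:, None, :] - C[None, :, :]) ** 2).sum(-1)
    assign = d2.argmin(1)
    mse = d2[np.arange(len(X)), assign].mean()  # the k-means objective
    return C, assign, mse

def ivf_search(X, C, assign, q, nprobe=2, k=5):
    """Inverted-file search: scan only vectors in the nprobe clusters closest to q."""
    probe = ((C - q) ** 2).sum(1).argsort()[:nprobe]
    ids = np.flatnonzero(np.isin(assign, probe))
    d2 = ((X[ids] - q) ** 2).sum(1)
    return ids[d2.argsort()[:k]]

rng = np.random.default_rng(0)
X = rng.standard_normal((2000, 16))
C, assign, mse = lloyd(X, k=32)
print("MSE:", mse)
print(ivf_search(X, C, assign, X[0], nprobe=4, k=5))
```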

-

11/10 - Class 8 - Quantization for lossy vector compression [Matthijs]. This class will take place remotely via Zoom; see the edstem message for the link.

- Python notebook corresponding to the class: Class_08_runbook_for_students.ipynb

- Vector quantization is a topline (directly optimizes the objective)

- Binary quantization and Hamming comparison

- Product quantization. Chunked vector quantization. Optimized vector quantization

- Additive quantization. Extension of product quantization. Difficulty in training approximations (Residual quantization, CQ, TQ, LSQ, etc.)

- Cost of coarse quantization vs. inverted list scanning
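Below is a minimal sketch of product quantization with asymmetric distance computation (ADC). It assumes d is divisible by the number of sub-vectors m and that each sub-quantizer has at most 256 centroids, so each sub-code fits in one byte. Each sub-quantizer is trained with plain Lloyd iterations from a random initialization. The helper names are illustrative. FAISS provides production implementations, for example `faiss.IndexPQ`.

```python
import numpy as np

def train_pq(X, m, ksub=16, n_iter=10, seed=0):
    """Train one k-means codebook per sub-vector. X is (n, d) with d divisible by m."""
    rng = np.random.default_rng(seed)
    n, d = X.shape
    ds = d // m
    codebooks = np.empty((m, ksub, ds))
    for j in range(m):
        sub = X[:, j * ds:(j + 1) * ds]
        C = sub[rng.choice(n, ksub, replace=False)].copy()  # random initialization
        for _ in range(n_iter):                              # plain Lloyd iterations
            a = ((sub[:, None] - C[None]) ** 2).sum(-1).argmin(1)
            for c in range(ksub):
                if np.any(a == c):
                    C[c] = sub[a == c].mean(0)
        codebooks[j] = C
    return codebooks

def encode(X, codebooks):
    """Replace each sub-vector by the id of its nearest sub-centroid (one byte each)."""
    m, ksub, ds = codebooks.shape
    codes = np.empty((len(X), m), dtype=np.uint8)
    for j in range(m):
        sub = X[:, j * ds:(j + 1) * ds]
        codes[:, j] = ((sub[:, None] - codebooks[j][None]) ** 2).sum(-1).argmin(1)
    return codes

def decode(codes, codebooks):
    """Reconstruct vectors by concatenating the selected sub-centroids."""
    return np.hstack([codebooks[j][codes[:, j]] for j in range(codebooks.shape[0])])

def adc_distances(q, codes, codebooks):
    """Squared distances from an uncompressed query to every encoded vector."""
    m, ksub, ds = codebooks.shape
    # (m, ksub) table: distance from each query sub-vector to each sub-centroid
    tables = np.stack([((codebooks[j] - q[j * ds:(j + 1) * ds]) ** 2).sum(1) for j in range(m)])
    return tables[np.arange(m), codes].sum(1)  # m lookups per database vector

rng = np.random.default_rng(0)
X = rng.standard_normal((2000, 32))
codebooks = train_pq(X, m=8, ksub=16)
codes = encode(X, codebooks)
q = rng.standard_normal(32)
approx = adc_distances(q, codes, codebooks)
print(approx.argsort()[:5])  # 5 nearest ids according to the compressed codes
```

With d = 32, m = 8, and 16 centroids per sub-space, each vector is stored in 8 bytes instead of 128 bytes of float32. Only 4 of the 8 bits in each byte are used here. ADC builds one m × ksub table per query, after which each database distance costs m table lookups.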

-

11/17 - Class 9 - Graph-based indexes [Guest lecturer: Harsha Vardhan Simhadri]

- Early works: hierarchical k-means

- Neighborhood graphs. How to construct them. Nearest Neighbor Descent

- Greedy search in neighborhood graphs. That does not work: long jumps are needed

- HNSW. A practical hierarchical graph-based index

- NSG. Evolving a k-NN graph
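Below is a minimal sketch of the greedy (best-first, beam) search that graph-based indexes build on. It runs on an exact k-NN graph computed by brute force and then symmetrized. This is not HNSW or NSG: it has no hierarchy, no long-range edges, and no edge pruning. On harder data, a plain k-NN graph like this one can trap the search in local minima. The function names and the beam width `ef`, a term borrowed from HNSW, are illustrative.

```python
import heapq

import numpy as np

def knn_graph(X, k):
    """Exact k-NN graph by brute force, symmetrized so every edge goes both ways."""
    sq = (X ** 2).sum(1)
    d2 = sq[:, None] - 2 * X @ X.T + sq[None, :]
    np.fill_diagonal(d2, np.inf)                 # no self-loops
    nbrs = np.argsort(d2, axis=1)[:, :k].tolist()
    adj = [set(row) for row in nbrs]
    for i, row in enumerate(nbrs):
        for j in row:
            adj[j].add(i)
    return [sorted(s) for s in adj]

def beam_search(X, graph, q, entry, ef=32, k=10):
    """Repeatedly expand the closest unexpanded node; stop once it is farther
    than the worst of the ef best nodes found so far."""
    def dist(i):
        return float(((X[i] - q) ** 2).sum())

    visited = {entry}
    d0 = dist(entry)
    candidates = [(d0, entry)]  # min-heap: nodes waiting to be expanded
    best = [(-d0, entry)]       # max-heap (negated): the ef closest nodes so far
    while candidates:
        d, i = heapq.heappop(candidates)
        if len(best) >= ef and d > -best[0][0]:
            break
        for j in graph[i]:
            if j in visited:
                continue
            visited.add(j)
            dj = dist(j)
            if len(best) < ef or dj < -best[0][0]:
                heapq.heappush(candidates, (dj, j))
                heapq.heappush(best, (-dj, j))
                if len(best) > ef:
                    heapq.heappop(best)      # drop the current worst
    return [i for _, i in sorted((-nd, i) for nd, i in best)][:k]

rng = np.random.default_rng(0)
X = rng.standard_normal((1000, 8))
graph = knn_graph(X, k=16)
q = rng.standard_normal(8)
found = beam_search(X, graph, q, entry=0, ef=64, k=10)
truth = np.argsort(((X - q) ** 2).sum(1))[:10].tolist()
print(len(set(found) & set(truth)) / 10)  # recall@10 for this query
```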

-

11/24 - No Class - Thanksgiving Recess

-

12/1 - Class 10 - Student project and paper presentations [Edo + Nataly]

Class work includes a final project. It will be graded as follows:

- 50% - Project submission

- 50% - In-class presentation

Projects can come in three different flavors:

- Theory/Research: propose a new algorithm for a problem we explored in class (or modify an existing one), explain what it achieves, and give experimental evidence or a proof for its behavior. If you choose this kind of project, you are expected to submit a writeup.

- Data Science/AI: create an interesting use case for vector search using Pinecone, explain what data you used, what value your application brings, and what insights you gained. If you choose this kind of project you are expected to submit code (e.g. Jupyter Notebooks) and a writeup of your results and insights.

- Engineering/HPC: adapt or add to FAISS, explain your improvements, show experimental results. If you choose this kind of project you are expected to submit a branch of FAISS for review along with a short writeup of your suggested improvement and experiments.

Project schedule

- 11/24 - One-page project proposal approved by the instructors

- 12/1 - Final project submission

- 12/1 - In-class presentation

Some more details

- Project Instructor: Nataly [email protected]

- Projects can be done individually or in teams of two or three students.

- Expect to spend a few hours over the semester on the project proposal. Try to get it approved well ahead of the deadline.

- Expect to spend 3-5 full days on the project itself (on par with preparing for a final exam).

- In-class project presentations are 5 minutes per student (teams of two present for 10 minutes, teams of three for 15 minutes).

Selected literature

- A fast random sampling algorithm for sparsifying matrices - Sanjeev Arora, Elad Hazan, and Satyen Kale - 2006

- A Randomized Algorithm for Principal Component Analysis - Vladimir Rokhlin, Arthur Szlam, and Mark Tygert - 2009

- A search structure based on kd trees for efficient ray tracing - K. R. Subramanian and D. S. Fussell - 1990

- A Short Proof for Gap Independence of Simultaneous Iteration - Edo Liberty - 2016

- Accelerating Large-Scale Inference with Anisotropic Vector Quantization - Ruiqi Guo, Philip Sun, Erik Lindgren, Quan Geng, David Simcha, Felix Chern, and Sanjiv Kumar - 2020

- Advances in Neural Information Processing Systems 28: Annual Conference on Neural Information Processing Systems 2015, December 7-12, 2015, Montreal, Quebec, Canada - 2015

- An Algorithm for Online K-Means Clustering - Edo Liberty, Ram Sriharsha, and Maxim Sviridenko

- An Almost Optimal Unrestricted Fast Johnson-Lindenstrauss Transform - Nir Ailon and Edo Liberty - 2011

- An elementary proof of the Johnson-Lindenstrauss lemma - S. DasGupta and A. Gupta - 1999

- Approximate nearest neighbors and the fast Johnson-Lindenstrauss transform - Nir Ailon and Bernard Chazelle - 2006

- Billion-scale similarity search with GPUs - Jeff Johnson, Matthijs Douze, and Hervé Jégou - 2017

- Clustering Data Streams: Theory and Practice - Sudipto Guha, Adam Meyerson, Nina Mishra, Rajeev Motwani, and Liadan O'Callaghan - 2003

- DiskANN: Fast Accurate Billion-point Nearest Neighbor Search on a Single Node - Suhas Jayaram Subramanya, Fnu Devvrit, Harsha Vardhan Simhadri, Ravishankar Krishnaswamy, and Rohan Kadekodi - 2019

- Efficient and robust approximate nearest neighbor search using Hierarchical Navigable Small World graphs - Yu. A. Malkov and D. A. Yashunin - 2018

- Efficient K-Nearest Neighbor Graph Construction for Generic Similarity Measures - Wei Dong, Moses Charikar, and Kai Li - 2011

- Even Simpler Deterministic Matrix Sketching - Edo Liberty - 2022

- Extensions of Lipschitz mappings into a Hilbert space - W. B. Johnson and J. Lindenstrauss - 1984

- Fast Approximate Nearest Neighbor Search With The Navigating Spreading-out Graph - Cong Fu, Chao Xiang, Changxu Wang, and Deng Cai - 2018

- Finding Structure with Randomness: Probabilistic Algorithms for Constructing Approximate Matrix Decompositions - N. Halko, P. G. Martinsson, and J. A. Tropp - 2011

- Invertibility of random matrices: norm of the inverse - Mark Rudelson - 2008

- K-means clustering via principal component analysis - Chris H. Q. Ding and Xiaofeng He - 2004

- k-means++: the advantages of careful seeding - David Arthur and Sergei Vassilvitskii - 2007

- Least squares quantization in PCM - Stuart P. Lloyd - 1982

- LSQ++: Lower Running Time and Higher Recall in Multi-Codebook Quantization - Julieta Martinez, Shobhit Zakhmi, Holger H. Hoos, and James J. Little - 2018

- Multidimensional binary search trees used for associative searching - Jon Louis Bentley - 1975

- Near-Optimal Entrywise Sampling for Data Matrices - Dimitris Achlioptas, Zohar S. Karnin, and Edo Liberty - 2013

- Pass Efficient Algorithms for Approximating Large Matrices - Petros Drineas and Ravi Kannan - 2003

- Product Quantization for Nearest Neighbor Search - Hervé Jégou, Matthijs Douze, and Cordelia Schmid - 2011

- QuickCSG: Arbitrary and faster boolean combinations of n solids - Matthijs Douze, Jean-Sébastien Franco, and Bruno Raffin - 2015

- Quicker ADC: Unlocking the Hidden Potential of Product Quantization With SIMD - Fabien Andre, Anne-Marie Kermarrec, and Nicolas Le Scouarnec - 2021

- Random Projection Trees and Low Dimensional Manifolds - Sanjoy Dasgupta and Yoav Freund - 2008

- Randomized Algorithms for Low-Rank Matrix Factorizations: Sharp Performance Bounds - Rafi Witten and Emmanuel Candès - 2015

- Randomized Block Krylov Methods for Stronger and Faster Approximate Singular Value Decomposition - Cameron Musco and Christopher Musco - 2015

- Revisiting Additive Quantization - Julieta Martinez, Joris Clement, Holger H. Hoos, and J. Little - 2016

- Sampling from large matrices: An approach through geometric functional analysis - Mark Rudelson and Roman Vershynin - 2007

- Similarity estimation techniques from rounding algorithms - Moses Charikar - 2002

- Similarity Search in High Dimensions via Hashing - Aristides Gionis, Piotr Indyk, and Rajeev Motwani - 1999

- Simple and Deterministic Matrix Sketching - Edo Liberty - 2012

- Smaller Coresets for k-Median and k-Means Clustering - S. Har-Peled and A. Kushal - 2005

- Sparser Johnson-Lindenstrauss transforms - Daniel M. Kane and Jelani Nelson - 2012

- Sparsity Lower Bounds for Dimensionality Reducing Maps - Jelani Nelson and Huy L. Nguyen - 2012

- Spectral Relaxation for K-means Clustering - Hongyuan Zha, Xiaofeng He, Chris H. Q. Ding, Ming Gu, and Horst D. Simon - 2001

- Streaming k-means approximation - Nir Ailon, Ragesh Jaiswal, and Claire Monteleoni - 2009

- Strong converse for identification via quantum channels - Rudolf Ahlswede and Andreas Winter - 2002

- Transformer Memory as a Differentiable Search Index - Yi Tay, Vinh Q. Tran, Mostafa Dehghani, Jianmo Ni, Dara Bahri, Harsh Mehta, Zhen Qin, Kai Hui, Zhe Zhao, Jai Gupta, Tal Schuster, William W. Cohen, and Donald Metzler - 2022

- Unsupervised Neural Quantization for Compressed-Domain Similarity Search - S. Morozov and A. Babenko - 2019

- Worst-Case Analysis for Region and Partial Region Searches in Multidimensional Binary Search Trees and Balanced Quad Trees - D. T. Lee and C. K. Wong - 1977

- A Comprehensive Survey and Experimental Comparison of Graph-Based Approximate Nearest Neighbor Search - Mengzhao Wang, Xiaoliang Xu, Qiang Yue, and Yuxiang Wang - 2021

- Approximate Nearest Neighbor Search on High Dimensional Data - Experiments, Analyses, and Improvement - Wen Li, Ying Zhang, Yifang Sun, Wei Wang, Mingjie Li, Wenjie Zhang, and Xuemin Lin - 2020

- Survey of vector database management systems - James Jie Pan, Jianguo Wang, and Guoliang Li - 2024

Alternative AI tools for vector-search-class-notes

Similar Open Source Tools

Slow_Thinking_with_LLMs

STILL is an open-source project exploring slow-thinking reasoning systems, focusing on o1-like reasoning systems. The project has released technical reports on enhancing LLM reasoning with reward-guided tree search algorithms and implementing slow-thinking reasoning systems using an imitate, explore, and self-improve framework. The project aims to replicate the capabilities of industry-level reasoning systems by fine-tuning reasoning models with long-form thought data and iteratively refining training datasets.

llm-course

The LLM course is divided into three parts:

1. 🧩 LLM Fundamentals covers essential knowledge about mathematics, Python, and neural networks.

2. 🧑‍🔬 The LLM Scientist focuses on building the best possible LLMs using the latest techniques.

3. 👷 The LLM Engineer focuses on creating LLM-based applications and deploying them.

For an interactive version of this course, I created two LLM assistants that will answer questions and test your knowledge in a personalized way:

- 🤗 HuggingChat Assistant: free version using Mixtral-8x7B.

- 🤖 ChatGPT Assistant: requires a premium account.

📝 Notebooks: a list of notebooks and articles related to large language models. Tools:

- 🧐 LLM AutoEval: automatically evaluate your LLMs using RunPod.

- 🥱 LazyMergekit: easily merge models using MergeKit in one click.

- 🦎 LazyAxolotl: fine-tune models in the cloud using Axolotl in one click.

- ⚡ AutoQuant: quantize LLMs in GGUF, GPTQ, EXL2, AWQ, and HQQ formats in one click.

- 🌳 Model Family Tree: visualize the family tree of merged models.

- 🚀 ZeroSpace: automatically create a Gradio chat interface using a free ZeroGPU.

Medical_Image_Analysis

The Medical_Image_Analysis repository focuses on X-ray image-based medical report generation using large language models. It provides pre-trained models and benchmarks for CheXpert Plus dataset, context sample retrieval for X-ray report generation, and pre-training on high-definition X-ray images. The goal is to enhance diagnostic accuracy and reduce patient wait times by improving X-ray report generation through advanced AI techniques.

interpret

InterpretML is an open-source package that incorporates state-of-the-art machine learning interpretability techniques under one roof. With this package, you can train interpretable glassbox models and explain blackbox systems. InterpretML helps you understand your model's global behavior, or understand the reasons behind individual predictions. Interpretability is essential for: - Model debugging - Why did my model make this mistake? - Feature Engineering - How can I improve my model? - Detecting fairness issues - Does my model discriminate? - Human-AI cooperation - How can I understand and trust the model's decisions? - Regulatory compliance - Does my model satisfy legal requirements? - High-risk applications - Healthcare, finance, judicial, ...

merlin

Merlin is a groundbreaking model capable of generating natural language responses intricately linked with object trajectories of multiple images. It excels in predicting and reasoning about future events based on initial observations, showcasing unprecedented capability in future prediction and reasoning. Merlin achieves state-of-the-art performance on the Future Reasoning Benchmark and multiple existing multimodal language models benchmarks, demonstrating powerful multi-modal general ability and foresight minds.

only_train_once

Only Train Once (OTO) is an automatic, architecture-agnostic DNN training and compression framework that allows users to train a general DNN from scratch or a pretrained checkpoint to achieve high performance and slimmer architecture simultaneously in a one-shot manner without fine-tuning. The framework includes features for automatic structured pruning and erasing operators, as well as hybrid structured sparse optimizers for efficient model compression. OTO provides tools for pruning zero-invariant group partitioning, constructing pruned models, and visualizing pruning and erasing dependency graphs. It supports the HESSO optimizer and offers a sanity check for compliance testing on various DNNs. The repository also includes publications, installation instructions, quick start guides, and a roadmap for future enhancements and collaborations.

oat

Oat is a simple and efficient framework for running online LLM alignment algorithms. It implements a distributed Actor-Learner-Oracle architecture, with components optimized using state-of-the-art tools. Oat simplifies the experimental pipeline of LLM alignment by serving an Oracle online for preference data labeling and model evaluation. It provides a variety of oracles for simulating feedback and supports verifiable rewards. Oat's modular structure allows for easy inheritance and modification of classes, enabling rapid prototyping and experimentation with new algorithms. The framework implements cutting-edge online algorithms like PPO for math reasoning and various online exploration algorithms.

gepa

GEPA (Genetic-Pareto) is a framework for optimizing arbitrary systems composed of text components like AI prompts, code snippets, or textual specs against any evaluation metric. It employs LLMs to reflect on system behavior, using feedback from execution and evaluation traces to drive targeted improvements. Through iterative mutation, reflection, and Pareto-aware candidate selection, GEPA evolves robust, high-performing variants with minimal evaluations, co-evolving multiple components in modular systems for domain-specific gains. The repository provides the official implementation of the GEPA algorithm as proposed in the paper titled 'GEPA: Reflective Prompt Evolution Can Outperform Reinforcement Learning'.

ReaLHF

ReaLHF is a distributed system designed for efficient RLHF training with Large Language Models (LLMs). It introduces a novel approach called parameter reallocation to dynamically redistribute LLM parameters across the cluster, optimizing allocations and parallelism for each computation workload. ReaL minimizes redundant communication while maximizing GPU utilization, achieving significantly higher Proximal Policy Optimization (PPO) training throughput compared to other systems. It supports large-scale training with various parallelism strategies and enables memory-efficient training with parameter and optimizer offloading. The system seamlessly integrates with HuggingFace checkpoints and inference frameworks, allowing for easy launching of local or distributed experiments. ReaLHF offers flexibility through versatile configuration customization and supports various RLHF algorithms, including DPO, PPO, RAFT, and more, while allowing the addition of custom algorithms for high efficiency.

MMMU

MMMU is a benchmark designed to evaluate multimodal models on college-level subject knowledge tasks, covering 30 subjects and 183 subfields with 11.5K questions. It focuses on advanced perception and reasoning with domain-specific knowledge, challenging models to perform tasks akin to those faced by experts. The evaluation of various models highlights substantial challenges, with room for improvement to stimulate the community towards expert artificial general intelligence (AGI).

foundations-of-gen-ai

This repository contains code for the O'Reilly Live Online Training for 'Transformer Architectures for Generative AI'. The course provides a deep understanding of transformer architectures and their impact on natural language processing (NLP) and vision tasks. Participants learn to harness transformers to tackle problems in text, image, and multimodal AI through theory and practical exercises.

AI2BMD

AI2BMD is a program for efficiently simulating protein molecular dynamics with ab initio accuracy. The repository contains datasets, simulation programs, and public materials related to AI2BMD. It provides a Docker image for easy deployment and a standalone launcher program. Users can run simulations by downloading the launcher script and specifying simulation parameters. The repository also includes ready-to-use protein structures for testing. AI2BMD is designed for x86-64 GNU/Linux systems with recommended hardware specifications. The related research includes model architectures like ViSNet, Geoformer, and fine-grained force metrics for MLFF. Citation information and contact details for the AI2BMD Team are provided.

nlp-llms-resources

The 'nlp-llms-resources' repository is a comprehensive resource list for Natural Language Processing (NLP) and Large Language Models (LLMs). It covers a wide range of topics including traditional NLP datasets, data acquisition, libraries for NLP, neural networks, sentiment analysis, optical character recognition, information extraction, semantics, topic modeling, multilingual NLP, domain-specific LLMs, vector databases, ethics, costing, books, courses, surveys, aggregators, newsletters, papers, conferences, and societies. The repository provides valuable information and resources for individuals interested in NLP and LLMs.

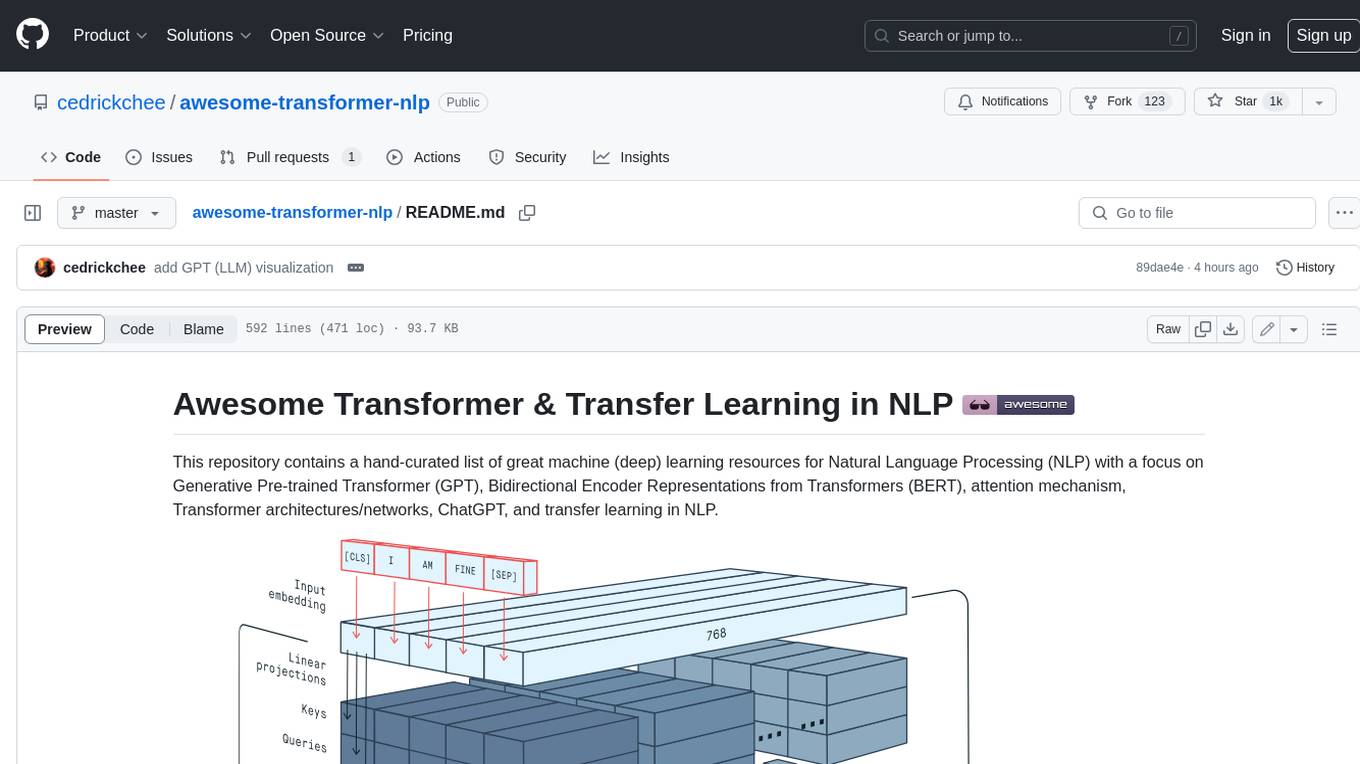

awesome-transformer-nlp

This repository contains a hand-curated list of great machine (deep) learning resources for Natural Language Processing (NLP) with a focus on Generative Pre-trained Transformer (GPT), Bidirectional Encoder Representations from Transformers (BERT), attention mechanism, Transformer architectures/networks, Chatbot, and transfer learning in NLP.

For similar tasks

OpenAI

OpenAI is a community-maintained Swift implementation of the OpenAI public API. The API is provided by OpenAI, an artificial intelligence research organization founded in San Francisco, California, in 2015 as a non-profit, whose mission is to ensure safe and responsible use of AI for civic good, economic growth, and other public benefits. The repository provides functionality for text completions, chats, image generation, audio processing, edits, embeddings, models, moderations, utilities, and Combine extensions.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models, from images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. Its key features: it is self-contained, with no need for a DBMS or cloud service; it offers an OpenAPI interface that is easy to integrate with existing infrastructure (e.g., a Cloud IDE); and it supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.