Awesome-LLM-for-RecSys

Survey: A collection of AWESOME papers and resources on large language model (LLM) related recommender system topics.

Stars: 1242

README:

🎉 Our survey paper has been accepted by ACM Transactions on Information Systems (TOIS): How Can Recommender Systems Benefit from Large Language Models: A Survey

🔔 Since our survey paper is archived, we will add the latest research works to 1.7 Newest Research Work List.

😁 I am also writing weekly paper notes about the latest LLM-enhanced RS on WeChat. You are welcome to follow by scanning the QR code.

🚀 2024.07.09 - Paper v6 released: Our archived camera-ready version for TOIS.

Survey Paper Update Logs

- 2024.07.09 - Paper v6 released: Our camera-ready version for TOIS, which will be archived.

- 2024.02.05 - Paper v5 released: New release with 27-page main content & more thorough taxonomies.

- 2023.06.29 - Paper v4 released: 7 papers have been newly added.

- 2023.06.28 - Paper v3 released: Fix typos.

- 2023.06.12 - Paper v2 released: Add summarization table in the appendix.

- 2023.06.09 - Paper v1 released: Initial version.

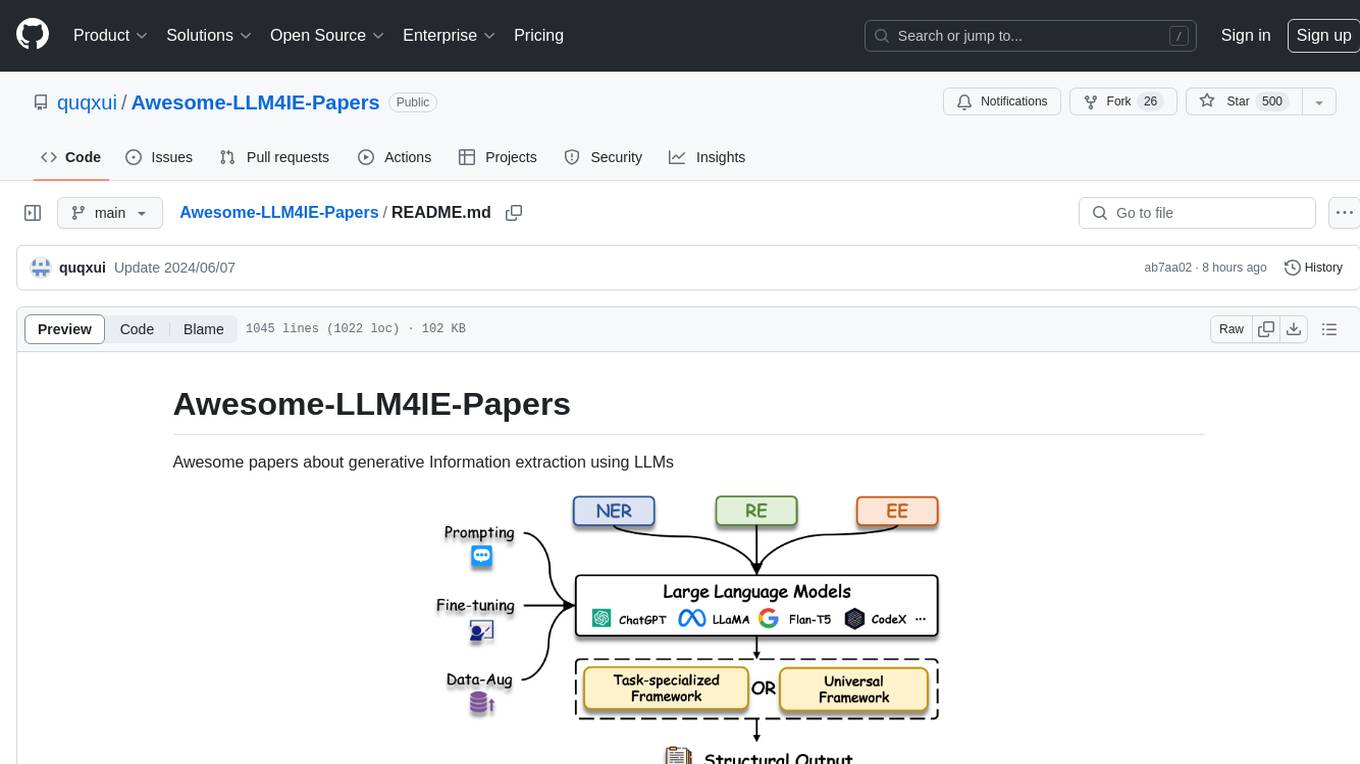

We classify papers according to where LLM will be adapted in the pipeline of RS, which is summarized in the figure below.
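For readers who want to work with the classification programmatically, it can be written down as a small lookup structure. The following is only a minimal sketch: the section titles are copied from the headings of this list, while the names `TAXONOMY` and `stage_of` are hypothetical helpers introduced here for illustration and are not part of the survey or this repository.

```python
from typing import Dict, List, Optional

# Pipeline stages and their sub-categories, copied from the section
# headings of this list. The dict itself is an illustrative assumption.
TAXONOMY: Dict[str, List[str]] = {
    "1.1 LLM for Feature Engineering": [
        "1.1.1 User- and Item-level Feature Augmentation",
        "1.1.2 Instance-level Sample Generation",
    ],
    "1.2 LLM as Feature Encoder": [
        "1.2.1 Representation Enhancement",
        "1.2.2 Unified Cross-domain Recommendation",
    ],
    "1.3 LLM as Scoring/Ranking Function": [
        "1.3.1 Item Scoring Task",
        "1.3.2 Item Generation Task",
        "1.3.3 Hybrid Task",
    ],
    "1.4 LLM for User Interaction": [
        "1.4.1 Task-oriented User Interaction",
        "1.4.2 Open-ended User Interaction",
    ],
    # This stage has no sub-categories in the list.
    "1.5 LLM for RS Pipeline Controller": [],
}


def stage_of(subsection: str) -> Optional[str]:
    """Return the pipeline stage that contains the given sub-category."""
    for stage, subsections in TAXONOMY.items():
        if subsection in subsections:
            return stage
    return None


if __name__ == "__main__":
    print(stage_of("1.3.2 Item Generation Task"))
```

A paper entry could then be tagged with one of these sub-category strings and grouped by stage via `stage_of`.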

1.1 LLM for Feature Engineering

1.1.1 User- and Item-level Feature Augmentation

| Name | Paper | LLM Backbone (Largest) | LLM Tuning Strategy | Publication | Link |

|---|---|---|---|---|---|

| LLM4KGC | Knowledge Graph Completion Models are Few-shot Learners: An Empirical Study of Relation Labeling in E-commerce with LLMs | PaLM (540B)/ ChatGPT | Frozen | Arxiv 2023 | [Link] |

| TagGPT | TagGPT: Large Language Models are Zero-shot Multimodal Taggers | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| ICPC | Large Language Models for User Interest Journeys | LaMDA (137B) | Full Finetuning/ Prompt Tuning | Arxiv 2023 | [Link] |

| KAR | Towards Open-World Recommendation with Knowledge Augmentation from Large Language Models | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| PIE | Product Information Extraction using ChatGPT | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| LGIR | Enhancing Job Recommendation through LLM-based Generative Adversarial Networks | ChatGLM (6B) | Frozen | AAAI 2024 | [Link] |

| GIRL | Generative Job Recommendations with Large Language Model | BELLE (7B) | Full Finetuning | Arxiv 2023 | [Link] |

| LLM-Rec | LLM-Rec: Personalized Recommendation via Prompting Large Language Models | text-davinci-003 | Frozen | Arxiv 2023 | [Link] |

| HKFR | Heterogeneous Knowledge Fusion: A Novel Approach for Personalized Recommendation via LLM | ChatGPT | Frozen | RecSys 2023 | [Link] |

| LLaMA-E | LLaMA-E: Empowering E-commerce Authoring with Multi-Aspect Instruction Following | LLaMA (30B) | LoRA | Arxiv 2023 | [Link] |

| EcomGPT | EcomGPT: Instruction-tuning Large Language Models with Chain-of-Task Tasks for E-commerce | BLOOMZ (7.1B) | Full Finetuning | Arxiv 2023 | [Link] |

| TF-DCon | Leveraging Large Language Models (LLMs) to Empower Training-Free Dataset Condensation for Content-Based Recommendation | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| RLMRec | Representation Learning with Large Language Models for Recommendation | ChatGPT | Frozen | WWW 2024 | [Link] |

| LLMRec | LLMRec: Large Language Models with Graph Augmentation for Recommendation | ChatGPT | Frozen | WSDM 2024 | [Link] |

| LLMRG | Enhancing Recommender Systems with Large Language Model Reasoning Graphs | GPT4 | Frozen | Arxiv 2023 | [Link] |

| CUP | Recommendations by Concise User Profiles from Review Text | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| SINGLE | Modeling User Viewing Flow using Large Language Models for Article Recommendation | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| SAGCN | Understanding Before Recommendation: Semantic Aspect-Aware Review Exploitation via Large Language Models | Vicuna (13B) | Frozen | Arxiv 2023 | [Link] |

| UEM | User Embedding Model for Personalized Language Prompting | FLAN-T5-base (250M) | Full Finetuning | Arxiv 2024 | [Link] |

| LLMHG | LLM-Guided Multi-View Hypergraph Learning for Human-Centric Explainable Recommendation | GPT4 | Frozen | Arxiv 2024 | [Link] |

| Llama4Rec | Integrating Large Language Models into Recommendation via Mutual Augmentation and Adaptive Aggregation | LLaMA2 (7B) | Full Finetuning | Arxiv 2024 | [Link] |

| LLM4Vis | LLM4Vis: Explainable Visualization Recommendation using ChatGPT | ChatGPT | Frozen | EMNLP 2023 | [Link] |

| LoRec | LoRec: Large Language Model for Robust Sequential Recommendation against Poisoning Attacks | LLaMA2 | Frozen | SIGIR 2024 | [Link] |

1.1.2 Instance-level Sample Generation

| Name | Paper | LLM Backbone (Largest) | LLM Tuning Strategy | Publication | Link |

|---|---|---|---|---|---|

| GReaT | Language Models are Realistic Tabular Data Generators | GPT2-medium (355M) | Full Finetuning | ICLR 2023 | [Link] |

| ONCE | ONCE: Boosting Content-based Recommendation with Both Open- and Closed-source Large Language Models | ChatGPT | Frozen | WSDM 2024 | [Link] |

| AnyPredict | AnyPredict: Foundation Model for Tabular Prediction | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| DPLLM | Privacy-Preserving Recommender Systems with Synthetic Query Generation using Differentially Private Large Language Models | T5-XL (3B) | Full Finetuning | Arxiv 2023 | [Link] |

| MINT | Large Language Model Augmented Narrative Driven Recommendations | text-davinci-003 | Frozen | RecSys 2023 | [Link] |

| Agent4Rec | On Generative Agents in Recommendation | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| RecPrompt | RecPrompt: A Prompt Tuning Framework for News Recommendation Using Large Language Models | GPT4 | Frozen | Arxiv 2023 | [Link] |

| PO4ISR | Large Language Models for Intent-Driven Session Recommendations | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| BEQUE | Large Language Model based Long-tail Query Rewriting in Taobao Search | ChatGLM (6B) | Full Finetuning | Arxiv 2023 | [Link] |

| Agent4Ranking | Agent4Ranking: Semantic Robust Ranking via Personalized Query Rewriting Using Multi-agent LLM | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| PopNudge | Improving Conversational Recommendation Systems via Bias Analysis and Language-Model-Enhanced Data Augmentation | ChatGPT | Frozen | Arxiv 2023 | [Link] |

1.2 LLM as Feature Encoder

1.2.1 Representation Enhancement

| Name | Paper | LLM Backbone (Largest) | LLM Tuning Strategy | Publication | Link |

|---|---|---|---|---|---|

| U-BERT | U-BERT: Pre-training User Representations for Improved Recommendation | BERT-base (110M) | Full Finetuning | AAAI 2021 | [Link] |

| UNBERT | UNBERT: User-News Matching BERT for News Recommendation | BERT-base (110M) | Full Finetuning | IJCAI 2021 | [Link] |

| PLM-NR | Empowering News Recommendation with Pre-trained Language Models | RoBERTa-base (125M) | Full Finetuning | SIGIR 2021 | [Link] |

| Pyramid-ERNIE | Pre-trained Language Model based Ranking in Baidu Search | ERNIE (110M) | Full Finetuning | KDD 2021 | [Link] |

| ERNIE-RS | Pre-trained Language Model for Web-scale Retrieval in Baidu Search | ERNIE (110M) | Full Finetuning | KDD 2021 | [Link] |

| CTR-BERT | CTR-BERT: Cost-effective knowledge distillation for billion-parameter teacher models | Customized BERT (1.5B) | Full Finetuning | ENLSP 2021 | [Link] |

| SuKD | Learning Supplementary NLP Features for CTR Prediction in Sponsored Search | RoBERTa-large (355M) | Full Finetuning | KDD 2022 | [Link] |

| PREC | Boosting Deep CTR Prediction with a Plug-and-Play Pre-trainer for News Recommendation | BERT-base (110M) | Full Finetuning | COLING 2022 | [Link] |

| MM-Rec | MM-Rec: Visiolinguistic Model Empowered Multimodal News Recommendation | BERT-base (110M) | Full Finetuning | SIGIR 2022 | [Link] |

| Tiny-NewsRec | Tiny-NewsRec: Effective and Efficient PLM-based News Recommendation | UniLMv2-base (110M) | Full Finetuning | EMNLP 2022 | [Link] |

| PTM4Tag | PTM4Tag: Sharpening Tag Recommendation of Stack Overflow Posts with Pre-trained Models | CodeBERT (125M) | Full Finetuning | ICPC 2022 | [Link] |

| TwHIN-BERT | TwHIN-BERT: A Socially-Enriched Pre-trained Language Model for Multilingual Tweet Representations | BERT-base (110M) | Full Finetuning | Arxiv 2022 | [Link] |

| LSH | Improving Code Example Recommendations on Informal Documentation Using BERT and Query-Aware LSH: A Comparative Study | BERT-base (110M) | Full Finetuning | Arxiv 2023 | [Link] |

| LLM2BERT4Rec | Leveraging Large Language Models for Sequential Recommendation | text-embedding-ada-002 | Frozen | RecSys 2023 | [Link] |

| LLM4ARec | Prompt Tuning Large Language Models on Personalized Aspect Extraction for Recommendations | GPT2 (110M) | Prompt Tuning | Arxiv 2023 | [Link] |

| TIGER | Recommender Systems with Generative Retrieval | Sentence-T5-base (223M) | Frozen | NeurIPS 2023 | [Link] |

| TBIN | TBIN: Modeling Long Textual Behavior Data for CTR Prediction | BERT-base (110M) | Frozen | DLP-RecSys 2023 | [Link] |

| LKPNR | LKPNR: LLM and KG for Personalized News Recommendation Framework | LLaMA2 (7B) | Frozen | Arxiv 2023 | [Link] |

| SSNA | Towards Efficient and Effective Adaptation of Large Language Models for Sequential Recommendation | DistilRoBERTa-base (83M) | Layerwise Adapter Tuning | Arxiv 2023 | [Link] |

| CollabContext | Collaborative Contextualization: Bridging the Gap between Collaborative Filtering and Pre-trained Language Model | Instructor-XL (1.5B) | Frozen | Arxiv 2023 | [Link] |

| LMIndexer | Language Models As Semantic Indexers | T5-base (223M) | Full Finetuning | Arxiv 2023 | [Link] |

| Stack | A BERT based Ensemble Approach for Sentiment Classification of Customer Reviews and its Application to Nudge Marketing in e-Commerce | BERT-base (110M) | Frozen | Arxiv 2023 | [Link] |

| N/A | Utilizing Language Models for Tour Itinerary Recommendation | BERT-base (110M) | Full Finetuning | PMAI@IJCAI 2023 | [Link] |

| UEM | User Embedding Model for Personalized Language Prompting | Sentence-T5-base (223M) | Frozen | Arxiv 2024 | [Link] |

| Social-LLM | Social-LLM: Modeling User Behavior at Scale using Language Models and Social Network Data | SBERT-MPNet-base (110M) | Frozen | Arxiv 2024 | [Link] |

| LLMRS | LLMRS: Unlocking Potentials of LLM-Based Recommender Systems for Software Purchase | MPNet (110M) | Frozen | Arxiv 2024 | [Link] |

| KERL | Knowledge Graphs and Pre-trained Language Models enhanced Representation Learning for Conversational Recommender Systems | BERT-mini | Frozen | TNNLS | [Link] |

| N/A | Empowering Few-Shot Recommender Systems with Large Language Models -- Enhanced Representations | ChatGPT | Frozen | IEEE Access | [Link] |

| N/A | Better Generalization with Semantic IDs: A Case Study in Ranking for Recommendations | Unknown | Frozen | Arxiv 2023 | [Link] |

1.2.2 Unified Cross-domain Recommendation

| Name | Paper | LLM Backbone (Largest) | LLM Tuning Strategy | Publication | Link |

|---|---|---|---|---|---|

| ZESRec | Zero-Shot Recommender Systems | BERT-base (110M) | Frozen | Arxiv 2021 | [Link] |

| UniSRec | Towards Universal Sequence Representation Learning for Recommender Systems | BERT-base (110M) | Frozen | KDD 2022 | [Link] |

| TransRec | TransRec: Learning Transferable Recommendation from Mixture-of-Modality Feedback | BERT-base (110M) | Full Finetuning | Arxiv 2022 | [Link] |

| VQ-Rec | Learning Vector-Quantized Item Representation for Transferable Sequential Recommenders | BERT-base (110M) | Frozen | WWW 2023 | [Link] |

| IDRec vs MoRec | Where to Go Next for Recommender Systems? ID- vs. Modality-based Recommender Models Revisited | BERT-base (110M) | Full Finetuning | SIGIR 2023 | [Link] |

| TransRec | Exploring Adapter-based Transfer Learning for Recommender Systems: Empirical Studies and Practical Insights | RoBERTa-base (125M) | Layerwise Adapter Tuning | Arxiv 2023 | [Link] |

| TCF | Exploring the Upper Limits of Text-Based Collaborative Filtering Using Large Language Models: Discoveries and Insights | OPT-175B (175B) | Frozen/ Full Finetuning | Arxiv 2023 | [Link] |

| S&R Foundation | An Unified Search and Recommendation Foundation Model for Cold-Start Scenario | ChatGLM (6B) | Frozen | CIKM 2023 | [Link] |

| MISSRec | MISSRec: Pre-training and Transferring Multi-modal Interest-aware Sequence Representation for Recommendation | CLIP-B/32 (400M) | Full Finetuning | MM 2023 | [Link] |

| UFIN | UFIN: Universal Feature Interaction Network for Multi-Domain Click-Through Rate Prediction | FLAN-T5-base (250M) | Frozen | Arxiv 2023 | [Link] |

| PMMRec | Multi-Modality is All You Need for Transferable Recommender Systems | RoBERTa-large (355M) | Top-2-layer Finetuning | ICDE 2024 | [Link] |

| Uni-CTR | A Unified Framework for Multi-Domain CTR Prediction via Large Language Models | Sheared-LLaMA (1.3B) | LoRA | Arxiv 2023 | [Link] |

| PCDR | Prompt-enhanced Federated Content Representation Learning for Cross-domain Recommendation | BERT-base (110M) | Frozen | WWW 2024 | [Link] |

1.3 LLM as Scoring/Ranking Function

1.3.1 Item Scoring Task

| Name | Paper | LLM Backbone (Largest) | LLM Tuning Strategy | Publication | Link |

|---|---|---|---|---|---|

| LMRecSys | Language Models as Recommender Systems: Evaluations and Limitations | GPT2-XL (1.5B) | Full Finetuning | ICBINB 2021 | [Link] |

| PTab | PTab: Using the Pre-trained Language Model for Modeling Tabular Data | BERT-base (110M) | Full Finetuning | Arxiv 2022 | [Link] |

| UniTRec | UniTRec: A Unified Text-to-Text Transformer and Joint Contrastive Learning Framework for Text-based Recommendation | BART (406M) | Full Finetuning | ACL 2023 | [Link] |

| Prompt4NR | Prompt Learning for News Recommendation | BERT-base (110M) | Full Finetuning | SIGIR 2023 | [Link] |

| RecFormer | Text Is All You Need: Learning Language Representations for Sequential Recommendation | LongFormer (149M) | Full Finetuning | KDD 2023 | [Link] |

| TabLLM | TabLLM: Few-shot Classification of Tabular Data with Large Language Models | T0 (11B) | Few-shot Parameter-efficient Finetuning | AISTATS 2023 | [Link] |

| Zero-shot GPT | Zero-Shot Recommendation as Language Modeling | GPT2-medium (355M) | Frozen | Arxiv 2023 | [Link] |

| FLAN-T5 | Do LLMs Understand User Preferences? Evaluating LLMs On User Rating Prediction | FLAN-T5-XXL (11B) | Full Finetuning | Arxiv 2023 | [Link] |

| BookGPT | BookGPT: A General Framework for Book Recommendation Empowered by Large Language Model | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| TALLRec | TALLRec: An Effective and Efficient Tuning Framework to Align Large Language Model with Recommendation | LLaMA (7B) | LoRA | RecSys 2023 | [Link] |

| PBNR | PBNR: Prompt-based News Recommender System | T5-small (60M) | Full Finetuning | Arxiv 2023 | [Link] |

| CR-SoRec | CR-SoRec: BERT driven Consistency Regularization for Social Recommendation | BERT-base (110M) | Full Finetuning | RecSys 2023 | [Link] |

| PromptRec | Towards Personalized Cold-Start Recommendation with Prompts | LLaMA (7B) | Frozen | Arxiv 2023 | [Link] |

| GLRec | Exploring Large Language Model for Graph Data Understanding in Online Job Recommendations | BELLE-LLaMA (7B) | LoRA | Arxiv 2023 | [Link] |

| BERT4CTR | BERT4CTR: An Efficient Framework to Combine Pre-trained Language Model with Non-textual Features for CTR Prediction | RoBERTa-large (355M) | Full Finetuning | KDD 2023 | [Link] |

| ReLLa | ReLLa: Retrieval-enhanced Large Language Models for Lifelong Sequential Behavior Comprehension in Recommendation | Vicuna (13B) | LoRA | WWW 2024 | [Link] |

| TASTE | Text Matching Improves Sequential Recommendation by Reducing Popularity Biases | T5-base (223M) | Full Finetuning | CIKM 2023 | [Link] |

| N/A | Unveiling Challenging Cases in Text-based Recommender Systems | BERT-base (110M) | Full Finetuning | RecSys Workshop 2023 | [Link] |

| ClickPrompt | ClickPrompt: CTR Models are Strong Prompt Generators for Adapting Language Models to CTR Prediction | RoBERTa-large (355M) | Full Finetuning | WWW 2024 | [Link] |

| SetwiseRank | A Setwise Approach for Effective and Highly Efficient Zero-shot Ranking with Large Language Models | FLAN-T5-XXL (11B) | Frozen | Arxiv 2023 | [Link] |

| UPSR | Thoroughly Modeling Multi-domain Pre-trained Recommendation as Language | T5-base (223M) | Full Finetuning | Arxiv 2023 | [Link] |

| LLM-Rec | One Model for All: Large Language Models are Domain-Agnostic Recommendation Systems | OPT (6.7B) | LoRA | Arxiv 2023 | [Link] |

| LLMRanker | Beyond Yes and No: Improving Zero-Shot LLM Rankers via Scoring Fine-Grained Relevance Labels | FLAN PaLM2 S | Frozen | Arxiv 2023 | [Link] |

| CoLLM | CoLLM: Integrating Collaborative Embeddings into Large Language Models for Recommendation | Vicuna (7B) | LoRA | Arxiv 2023 | [Link] |

| FLIP | FLIP: Towards Fine-grained Alignment between ID-based Models and Pretrained Language Models for CTR Prediction | RoBERTa-large (355M) | Full Finetuning | Arxiv 2023 | [Link] |

| BTRec | BTRec: BERT-Based Trajectory Recommendation for Personalized Tours | BERT-base (110M) | Full Finetuning | Arxiv 2023 | [Link] |

| CLLM4Rec | Collaborative Large Language Model for Recommender Systems | GPT2 (110M) | Full Finetuning | Arxiv 2023 | [Link] |

| CUP | Recommendations by Concise User Profiles from Review Text | BERT-base (110M) | Last-layer Finetuning | Arxiv 2023 | [Link] |

| N/A | Instruction Distillation Makes Large Language Models Efficient Zero-shot Rankers | FLAN-T5-XL (3B) | Full Finetuning | Arxiv 2023 | [Link] |

| CoWPiRec | Collaborative Word-based Pre-trained Item Representation for Transferable Recommendation | BERT-base (110M) | Full Finetuning | ICDM 2023 | [Link] |

| RecExplainer | RecExplainer: Aligning Large Language Models for Recommendation Model Interpretability | Vicuna-v1.3 (7B) | LoRA | Arxiv 2023 | [Link] |

| E4SRec | E4SRec: An Elegant Effective Efficient Extensible Solution of Large Language Models for Sequential Recommendation | LLaMA2 (13B) | LoRA | Arxiv 2023 | [Link] |

| CER | The Problem of Coherence in Natural Language Explanations of Recommendations | GPT2 (110M) | Full Finetuning | ECAI 2023 | [Link] |

| LSAT | Preliminary Study on Incremental Learning for Large Language Model-based Recommender Systems | LLaMA (7B) | LoRA | Arxiv 2023 | [Link] |

| Llama4Rec | Integrating Large Language Models into Recommendation via Mutual Augmentation and Adaptive Aggregation | LLaMA2 (7B) | Full Finetuning | Arxiv 2024 | [Link] |

1.3.2 Item Generation Task

| Name | Paper | LLM Backbone (Largest) | LLM Tuning Strategy | Publication | Link |

|---|---|---|---|---|---|

| GPT4Rec | GPT4Rec: A Generative Framework for Personalized Recommendation and User Interests Interpretation | GPT2 (110M) | Full Finetuning | Arxiv 2023 | [Link] |

| VIP5 | VIP5: Towards Multimodal Foundation Models for Recommendation | T5-base (223M) | Layerwise Adapter Tuning | EMNLP 2023 | [Link] |

| P5-ID | How to Index Item IDs for Recommendation Foundation Models | T5-small (60M) | Full Finetuning | Arxiv 2023 | [Link] |

| FaiRLLM | Is ChatGPT Fair for Recommendation? Evaluating Fairness in Large Language Model Recommendation | ChatGPT | Frozen | RecSys 2023 | [Link] |

| PALR | PALR: Personalization Aware LLMs for Recommendation | LLaMA (7B) | Full Finetuning | Arxiv 2023 | [Link] |

| ChatGPT | Large Language Models are Zero-Shot Rankers for Recommender Systems | ChatGPT | Frozen | ECIR 2024 | [Link] |

| AGR | Sparks of Artificial General Recommender (AGR): Early Experiments with ChatGPT | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| NIR | Zero-Shot Next-Item Recommendation using Large Pretrained Language Models | GPT3 (175B) | Frozen | Arxiv 2023 | [Link] |

| GPTRec | Generative Sequential Recommendation with GPTRec | GPT2-medium (355M) | Full Finetuning | Gen-IR@SIGIR 2023 | [Link] |

| ChatNews | A Preliminary Study of ChatGPT on News Recommendation: Personalization, Provider Fairness, Fake News | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| N/A | Large Language Models are Competitive Near Cold-start Recommenders for Language- and Item-based Preferences | PaLM (62B) | Frozen | RecSys 2023 | [Link] |

| LLMSeqPrompt | Leveraging Large Language Models for Sequential Recommendation | OpenAI ada model | Finetune | RecSys 2023 | [Link] |

| GenRec | GenRec: Large Language Model for Generative Recommendation | LLaMA (7B) | LoRA | Arxiv 2023 | [Link] |

| UP5 | UP5: Unbiased Foundation Model for Fairness-aware Recommendation | T5-base (223M) | Prefix Tuning | Arxiv 2023 | [Link] |

| HKFR | Heterogeneous Knowledge Fusion: A Novel Approach for Personalized Recommendation via LLM | ChatGLM (6B) | LoRA | RecSys 2023 | [Link] |

| N/A | The Unequal Opportunities of Large Language Models: Revealing Demographic Bias through Job Recommendations | ChatGPT | Frozen | EAAMO 2023 | [Link] |

| BIGRec | A Bi-Step Grounding Paradigm for Large Language Models in Recommendation Systems | LLaMA (7B) | LoRA | Arxiv 2023 | [Link] |

| KP4SR | Knowledge Prompt-tuning for Sequential Recommendation | T5-small (60M) | Full Finetuning | Arxiv 2023 | [Link] |

| RecSysLLM | Leveraging Large Language Models for Pre-trained Recommender Systems | GLM (10B) | LoRA | Arxiv 2023 | [Link] |

| POD | Prompt Distillation for Efficient LLM-based Recommendation | T5-small (60M) | Full Finetuning | CIKM 2023 | [Link] |

| N/A | Evaluating ChatGPT as a Recommender System: A Rigorous Approach | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| RaRS | Retrieval-augmented Recommender System: Enhancing Recommender Systems with Large Language Models | ChatGPT | Frozen | RecSys Doctoral Symposium 2023 | [Link] |

| JobRecoGPT | JobRecoGPT -- Explainable job recommendations using LLMs | GPT4 | Frozen | Arxiv 2023 | [Link] |

| LANCER | Reformulating Sequential Recommendation: Learning Dynamic User Interest with Content-enriched Language Modeling | GPT2 (110M) | Prefix Tuning | Arxiv 2023 | [Link] |

| TransRec | A Multi-facet Paradigm to Bridge Large Language Model and Recommendation | LLaMA (7B) | LoRA | Arxiv 2023 | [Link] |

| AgentCF | AgentCF: Collaborative Learning with Autonomous Language Agents for Recommender Systems | text-davinci-003 & gpt-3.5-turbo | Frozen | WWW 2024 | [Link] |

| P4LM | Factual and Personalized Recommendations using Language Models and Reinforcement Learning | PaLM2-XS | Full Finetuning | Arxiv 2023 | [Link] |

| InstructMK | Multiple Key-value Strategy in Recommendation Systems Incorporating Large Language Model | LLaMA (7B) | Full Finetuning | CIKM GenRec 2023 | [Link] |

| LightLM | LightLM: A Lightweight Deep and Narrow Language Model for Generative Recommendation | T5-small (60M) | Full Finetuning | Arxiv 2023 | [Link] |

| LlamaRec | LlamaRec: Two-Stage Recommendation using Large Language Models for Ranking | LLaMA2 (7B) | QLoRA | PGAI@CIKM 2023 | [Link] |

| N/A | Exploring Recommendation Capabilities of GPT-4V(ision): A Preliminary Case Study | GPT-4V | Frozen | Arxiv 2023 | [Link] |

| N/A | Exploring Fine-tuning ChatGPT for News Recommendation | ChatGPT | gpt-3.5-turbo finetuning API | Arxiv 2023 | [Link] |

| N/A | Do LLMs Implicitly Exhibit User Discrimination in Recommendation? An Empirical Study | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| LC-Rec | Adapting Large Language Models by Integrating Collaborative Semantics for Recommendation | LLaMA (7B) | LoRA | Arxiv 2023 | [Link] |

| DOKE | Knowledge Plugins: Enhancing Large Language Models for Domain-Specific Recommendations | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| ControlRec | ControlRec: Bridging the Semantic Gap between Language Model and Personalized Recommendation | T5-base (223M) | Full Finetuning | Arxiv 2023 | [Link] |

| LLaRA | LLaRA: Large Language-Recommendation Assistant | LLaMA2 (7B) | LoRA | SIGIR 2024 | [Link] |

| PO4ISR | Large Language Models for Intent-Driven Session Recommendations | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| DRDT | DRDT: Dynamic Reflection with Divergent Thinking for LLM-based Sequential Recommendation | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| RecPrompt | RecPrompt: A Prompt Tuning Framework for News Recommendation Using Large Language Models | GPT4 | Frozen | Arxiv 2023 | [Link] |

| LiT5 | Scaling Down, LiTting Up: Efficient Zero-Shot Listwise Reranking with Seq2seq Encoder-Decoder Models | T5-XL (3B) | Full Finetuning | Arxiv 2023 | [Link] |

| STELLA | Large Language Models are Not Stable Recommender Systems | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| Llama4Rec | Integrating Large Language Models into Recommendation via Mutual Augmentation and Adaptive Aggregation | LLaMA2 (7B) | Full Finetuning | Arxiv 2024 | [Link] |

| RECLLM | Understanding Biases in ChatGPT-based Recommender Systems: Provider Fairness, Temporal Stability, and Recency | ChatGPT | Frozen | Arxiv 2024 | [Link] |

| DEALRec | Data-efficient Fine-tuning for LLM-based Recommendation | LLaMA (7B) | LoRA | Arxiv 2024 | [Link] |

1.3.3 Hybrid Task

| Name | Paper | LLM Backbone (Largest) | LLM Tuning Strategy | Publication | Link |

|---|---|---|---|---|---|

| P5 | Recommendation as Language Processing (RLP): A Unified Pretrain, Personalized Prompt & Predict Paradigm (P5) | T5-base (223M) | Full Finetuning | RecSys 2022 | [Link] |

| M6-Rec | M6-Rec: Generative Pretrained Language Models are Open-Ended Recommender Systems | M6-base (300M) | Option Tuning | Arxiv 2022 | [Link] |

| InstructRec | Recommendation as Instruction Following: A Large Language Model Empowered Recommendation Approach | FLAN-T5-XL (3B) | Full Finetuning | Arxiv 2023 | [Link] |

| ChatGPT | Is ChatGPT a Good Recommender? A Preliminary Study | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| ChatGPT | Is ChatGPT Good at Search? Investigating Large Language Models as Re-Ranking Agent | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| ChatGPT | Uncovering ChatGPT's Capabilities in Recommender Systems | ChatGPT | Frozen | RecSys 2023 | [Link] |

| BDLM | Bridging the Information Gap Between Domain-Specific Model and General LLM for Personalized Recommendation | Vicuna (7B) | Full Finetuning | Arxiv 2023 | [Link] |

| RecRanker | RecRanker: Instruction Tuning Large Language Model as Ranker for Top-k Recommendation | LLaMA2 (13B) | Full Finetuning | Arxiv 2023 | [Link] |

1.4 LLM for User Interaction

1.4.1 Task-oriented User Interaction

| Name | Paper | LLM Backbone (Largest) | LLM Tuning Strategy | Publication | Link |

|---|---|---|---|---|---|

| TG-ReDial | Towards Topic-Guided Conversational Recommender System | BERT-base (110M) & GPT2 (110M) | Unknown | COLING 2020 | [Link] |

| TCP | Follow Me: Conversation Planning for Target-driven Recommendation Dialogue Systems | BERT-base (110M) | Full Finetuning | Arxiv 2022 | [Link] |

| MESE | Improving Conversational Recommendation Systems' Quality with Context-Aware Item Meta-Information | DistilBERT (67M) & GPT2 (110M) | Full Finetuning | ACL 2022 | [Link] |

| UniMIND | A Unified Multi-task Learning Framework for Multi-goal Conversational Recommender Systems | BART-base (139M) | Full Finetuning | ACM TOIS 2023 | [Link] |

| VRICR | Variational Reasoning over Incomplete Knowledge Graphs for Conversational Recommendation | BERT-base (110M) | Full Finetuning | WSDM 2023 | [Link] |

| KECR | Explicit Knowledge Graph Reasoning for Conversational Recommendation | BERT-base (110M) & GPT2 (110M) | Frozen | ACM TIST 2023 | [Link] |

| N/A | Large Language Models as Zero-Shot Conversational Recommenders | GPT4 | Frozen | CIKM 2023 | [Link] |

| MuseChat | MuseChat: A Conversational Music Recommendation System for Videos | Vicuna (7B) | LoRA | Arxiv 2023 | [Link] |

| N/A | Conversational Recommender System and Large Language Model Are Made for Each Other in E-commerce Pre-sales Dialogue | Chinese-Alpaca (7B) | LoRA | EMNLP 2023 Findings | [Link] |

| N/A | ChatGPT for Conversational Recommendation: Refining Recommendations by Reprompting with Feedback | ChatGPT | Frozen | Arxiv 2024 | [Link] |

1.4.2 Open-ended User Interaction

| Name | Paper | LLM Backbone (Largest) | LLM Tuning Strategy | Publication | Link |

|---|---|---|---|---|---|

| BARCOR | BARCOR: Towards A Unified Framework for Conversational Recommendation Systems | BART-base (139M) | Selective-layer Finetuning | Arxiv 2022 | [Link] |

| RecInDial | RecInDial: A Unified Framework for Conversational Recommendation with Pretrained Language Models | DialoGPT (110M) | Full Finetuning | AACL 2022 | [Link] |

| UniCRS | Towards Unified Conversational Recommender Systems via Knowledge-Enhanced Prompt Learning | DialoGPT-small (176M) | Frozen | KDD 2022 | [Link] |

| T5-CR | Multi-Task End-to-End Training Improves Conversational Recommendation | T5-base (223M) | Full Finetuning | Arxiv 2023 | [Link] |

| TtW | Talk the Walk: Synthetic Data Generation for Conversational Music Recommendation | T5-base (223M) & T5-XXL (11B) | Full Finetuning & Frozen | Arxiv 2023 | [Link] |

| N/A | Rethinking the Evaluation for Conversational Recommendation in the Era of Large Language Models | ChatGPT | Frozen | EMNLP 2023 | [Link] |

| PECRS | Parameter-Efficient Conversational Recommender System as a Language Processing Task | GPT2-medium (355M) | LoRA | EACL 2024 | [Link] |

1.5 LLM for RS Pipeline Controller

| Name | Paper | LLM Backbone (Largest) | LLM Tuning Strategy | Publication | Link |

|---|---|---|---|---|---|

| Chat-REC | Chat-REC: Towards Interactive and Explainable LLMs-Augmented Recommender System | ChatGPT | Frozen | Arxiv 2023 | [Link] |

| RecLLM | Leveraging Large Language Models in Conversational Recommender Systems | LLaMA (7B) | Full Finetuning | Arxiv 2023 | [Link] |

| RAH | RAH! RecSys-Assistant-Human: A Human-Central Recommendation Framework with Large Language Models | GPT4 | Frozen | Arxiv 2023 | [Link] |

| RecMind | RecMind: Large Language Model Powered Agent For Recommendation | ChatGPT | Frozen | NAACL 2024 | [Link] |

| InteRecAgent | Recommender AI Agent: Integrating Large Language Models for Interactive Recommendations | GPT4 | Frozen | Arxiv 2023 | [Link] |

| CORE | Lending Interaction Wings to Recommender Systems with Conversational Agents | N/A | N/A | NeurIPS 2023 | [Link] |

| LLMCRS | A Large Language Model Enhanced Conversational Recommender System | LLaMA (7B) | Full Finetuning | Arxiv 2023 | [Link] |

1.6 Related Survey Papers

| Paper | Publication | Link |

|---|---|---|

| A Survey of Large Language Model Empowered Agents for Recommendation and Search: Towards Next-Generation Information Retrieval | Arxiv 2025 | [Link] |

| Agent-centric Information Access | Arxiv 2025 | [Link] |

| A Survey on LLM-based News Recommender Systems | Arxiv 2025 | [Link] |

| A Survey on LLM-powered Agents for Recommender Systems | Arxiv 2025 | [Link] |

| Cold-Start Recommendation towards the Era of Large Language Models (LLMs): A Comprehensive Survey and Roadmap | Arxiv 2025 | [Link] |

| Large Language Model Enhanced Recommender Systems: Taxonomy, Trend, Application and Future | Arxiv 2024 | [Link] |

| Recommender Systems in the Era of Large Language Model Agents: A Survey | Preprint | [Link] |

| A Survey on Efficient Solutions of Large Language Models for Recommendation | Arxiv 2024 | [Link] |

| Towards Next-Generation LLM-based Recommender Systems: A Survey and Beyond | Arxiv 2024 | [Link] |

| Bias and Unfairness in Information Retrieval Systems: New Challenges in the LLM Era | KDD 2024 | [Link] |

| All Roads Lead to Rome: Unveiling the Trajectory of Recommender Systems Across the LLM Era | Arxiv 2024 | [Link] |

| Survey for Landing Generative AI in Social and E-commerce Recsys - the Industry Perspectives | Arxiv 2024 | [Link] |

| A Survey of Generative Search and Recommendation in the Era of Large Language Models | Arxiv 2024 | [Link] |

| When Search Engine Services meet Large Language Models: Visions and Challenges | Arxiv 2024 | [Link] |

| A Review of Modern Recommender Systems Using Generative Models (Gen-RecSys) | Arxiv 2024 | [Link] |

| Exploring the Impact of Large Language Models on Recommender Systems: An Extensive Review | Arxiv 2024 | [Link] |

| Foundation Models for Recommender Systems: A Survey and New Perspectives | Arxiv 2024 | [Link] |

| Prompting Large Language Models for Recommender Systems: A Comprehensive Framework and Empirical Analysis | Arxiv 2024 | [Link] |

| User Modeling in the Era of Large Language Models: Current Research and Future Directions | IEEE Data Engineering Bulletin 2023 | [Link] |

| A Survey on Large Language Models for Personalized and Explainable Recommendations | Arxiv 2023 | [Link] |

| Large Language Models for Generative Recommendation: A Survey and Visionary Discussions | Arxiv 2023 | [Link] |

| Large Language Models for Information Retrieval: A Survey | Arxiv 2023 | [Link] |

| When Large Language Models Meet Personalization: Perspectives of Challenges and Opportunities | Arxiv 2023 | [Link] |

| Recommender Systems in the Era of Large Language Models (LLMs) | Arxiv 2023 | [Link] |

| A Survey on Large Language Models for Recommendation | Arxiv 2023 | [Link] |

| Pre-train, Prompt and Recommendation: A Comprehensive Survey of Language Modelling Paradigm Adaptations in Recommender Systems | TACL 2023 | [Link] |

| Self-Supervised Learning for Recommender Systems: A Survey | TKDE 2022 | [Link] |

1.7 Newest Research Work List

| Paper | Publication | Link |

|---|---|---|

| Large Language Model Can Interpret Latent Space of Sequential Recommender | Arxiv 2023 | [Link] |

| Zero-Shot Recommendations with Pre-Trained Large Language Models for Multimodal Nudging | Arxiv 2023 | [Link] |

| INTERS: Unlocking the Power of Large Language Models in Search with Instruction Tuning | Arxiv 2024 | [Link] |

| Evaluation of Synthetic Datasets for Conversational Recommender Systems | Arxiv 2023 | [Link] |

| Generative Recommendation: Towards Next-generation Recommender Paradigm | Arxiv 2023 | [Link] |

| Towards Personalized Prompt-Model Retrieval for Generative Recommendation | Arxiv 2023 | [Link] |

| Generative Next-Basket Recommendation | RecSys 2023 | [Link] |

| Unlocking the Potential of Large Language Models for Explainable Recommendations | Arxiv 2023 | [Link] |

| Logic-Scaffolding: Personalized Aspect-Instructed Recommendation Explanation Generation using LLMs | Falcon (40B) | Frozen |

| Improving Sequential Recommendations with LLMs | Arxiv 2024 | [Link] |

| A Multi-Agent Conversational Recommender System | Arxiv 2024 | [Link] |

| TransFR: Transferable Federated Recommendation with Pre-trained Language Models | Arxiv 2024 | [Link] |

| Large Language Model Distilling Medication Recommendation Model | Arxiv 2024 | [Link] |

| Uncertainty-Aware Explainable Recommendation with Large Language Models | Arxiv 2024 | [Link] |

| Natural Language User Profiles for Transparent and Scrutable Recommendations | Arxiv 2024 | [Link] |

| Leveraging LLMs for Unsupervised Dense Retriever Ranking | Arxiv 2024 | [Link] |

| RA-Rec: An Efficient ID Representation Alignment Framework for LLM-based Recommendation | Arxiv 2024 | [Link] |

| Fairly Evaluating Large Language Model-based Recommendation Needs Revisit the Cross-Entropy Loss | Arxiv 2024 | [Link] |

| SearchAgent: A Lightweight Collaborative Search Agent with Large Language Models | Arxiv 2024 | [Link] |

| Large Language Model Interaction Simulator for Cold-Start Item Recommendation | Arxiv 2024 | [Link] |

| Enhancing ID and Text Fusion via Alternative Training in Session-based Recommendation | Arxiv 2024 | [Link] |

| eCeLLM: Generalizing Large Language Models for E-commerce from Large-scale, High-quality Instruction Data | Arxiv 2024 | [Link] |

| LLM-Enhanced User-Item Interactions: Leveraging Edge Information for Optimized Recommendations | Arxiv 2024 | [Link] |

| LLM-based Federated Recommendation | Arxiv 2024 | [Link] |

| Rethinking Large Language Model Architectures for Sequential Recommendations | Arxiv 2024 | [Link] |

| Large Language Model with Graph Convolution for Recommendation | Arxiv 2024 | [Link] |

| Rec-GPT4V: Multimodal Recommendation with Large Vision-Language Models | Arxiv 2024 | [Link] |

| Enhancing Recommendation Diversity by Re-ranking with Large Language Models | Arxiv 2024 | [Link] |

| Are ID Embeddings Necessary? Whitening Pre-trained Text Embeddings for Effective Sequential Recommendation | Arxiv 2024 | [Link] |

| SPAR: Personalized Content-Based Recommendation via Long Engagement Attention | Arxiv 2024 | [Link] |

| Cognitive Personalized Search Integrating Large Language Models with an Efficient Memory Mechanism | WWW 2024 | [Link] |

| Large Language Models as Data Augmenters for Cold-Start Item Recommendation | Arxiv 2024 | [Link] |

| Explain then Rank: Scale Calibration of Neural Rankers Using Natural Language Explanations from Large Language Models | Arxiv 2024 | [Link] |

| LLM4SBR: A Lightweight and Effective Framework for Integrating Large Language Models in Session-based Recommendation | Arxiv 2024 | [Link] |

| Breaking the Barrier: Utilizing Large Language Models for Industrial Recommendation Systems through an Inferential Knowledge Graph | Arxiv 2024 | [Link] |

| User-LLM: Efficient LLM Contextualization with User Embeddings | Arxiv 2024 | [Link] |

| Stealthy Attack on Large Language Model based Recommendation | Arxiv 2024 | [Link] |

| Multi-Agent Collaboration Framework for Recommender Systems | Arxiv 2024 | [Link] |

| Item-side Fairness of Large Language Model-based Recommendation System | WWW 2024 | [Link] |

| Integrating Large Language Models with Graphical Session-Based Recommendation | Arxiv 2024 | [Link] |

| Language-Based User Profiles for Recommendation | LLM-IGS@WSDM2024 | [Link] |

| BASES: Large-scale Web Search User Simulation with Large Language Model based Agents | Arxiv 2024 | [Link] |

| Prospect Personalized Recommendation on Large Language Model-based Agent Platform | Arxiv 2024 | [Link] |

| Sequence-level Semantic Representation Fusion for Recommender Systems | Arxiv 2024 | [Link] |

| NoteLLM: A Retrievable Large Language Model for Note Recommendation | WWW 2024 | [Link] |

| An Interpretable Ensemble of Graph and Language Models for Improving Search Relevance in E-Commerce | WWW 2024 | [Link] |

| InteraRec: Interactive Recommendations Using Multimodal Large Language Models | Arxiv 2024 | [Link] |

| ChatDiet: Empowering Personalized Nutrition-Oriented Food Recommender Chatbots through an LLM-Augmented Framework | CHASE 2024 | [Link] |

| Generative News Recommendation | WWW 2024 | [Link] |

| Bridging Language and Items for Retrieval and Recommendation | Arxiv 2024 | [Link] |

| Can Small Language Models be Good Reasoners for Sequential Recommendation? | WWW 2024 | [Link] |

| Aligning Large Language Models for Controllable Recommendations | Arxiv 2024 | [Link] |

| Personalized Audiobook Recommendations at Spotify Through Graph Neural Networks | WWW 2024 | [Link] |

| Towards Graph Foundation Models for Personalization | Arxiv 2024 | [Link] |

| CFaiRLLM: Consumer Fairness Evaluation in Large-Language Model Recommender System | Arxiv 2024 | [Link] |

| CoRAL: Collaborative Retrieval-Augmented Large Language Models Improve Long-tail Recommendation | Arxiv 2024 | [Link] |

| RecAI: Leveraging Large Language Models for Next-Generation Recommender Systems | WWW 2024 Demo | [Link] |

| KELLMRec: Knowledge-Enhanced Large Language Models for Recommendation | Arxiv 2024 | [Link] |

| USimAgent: Large Language Models for Simulating Search Users | Arxiv 2024 | [Link] |

| CALRec: Contrastive Alignment of Generative LLMs For Sequential Recommendation | Arxiv 2024 | [Link] |

| Corpus-Steered Query Expansion with Large Language Models | EACL 2024 | [Link] |

| LLM-Ensemble: Optimal Large Language Model Ensemble Method for E-commerce Product Attribute Value Extraction | SIGIR 2024 | [Link] |

| Enhancing Long-Term Recommendation with Bi-level Learnable Large Language Model Planning | SIGIR 2024 | [Link] |

| Towards Efficient and Effective Unlearning of Large Language Models for Recommendation | FCS | [Link] |

| The Whole is Better than the Sum: Using Aggregated Demonstrations in In-Context Learning for Sequential Recommendation | NAACL 2024 | [Link] |

| PPM : A Pre-trained Plug-in Model for Click-through Rate Prediction | WWW 2024 | [Link] |

| Evaluating Large Language Models as Generative User Simulators for Conversational Recommendation | Arxiv 2024 | [Link] |

| Towards Unified Multi-Modal Personalization: Large Vision-Language Models for Generative Recommendation and Beyond | ICLR 2024 | [Link] |

| Harnessing Large Language Models for Text-Rich Sequential Recommendation | Arxiv 2024 | [Link] |

| A Large Language Model Enhanced Sequential Recommender for Joint Video and Comment Recommendation | Arxiv 2024 | [Link] |

| Could Small Language Models Serve as Recommenders? Towards Data-centric Cold-start Recommendations | Arxiv 2024 | [Link] |

| Play to Your Strengths: Collaborative Intelligence of Conventional Recommender Models and Large Language Models | Arxiv 2024 | [Link] |

| Reinforcement Learning-based Recommender Systems with Large Language Models for State Reward and Action Modeling | Arxiv 2024 | [Link] |

| Large Language Models Enhanced Collaborative Filtering | Arxiv 2024 | [Link] |

| Improving Content Recommendation: Knowledge Graph-Based Semantic Contrastive Learning for Diversity and Cold-Start Users | LREC-COLING 2024 | [Link] |

| Sequential Recommendation with Latent Relations based on Large Language Model | Arxiv 2024 | [Link] |

| Enhanced Generative Recommendation via Content and Collaboration Integration | Arxiv 2024 | [Link] |

| To Recommend or Not: Recommendability Identification in Conversations with Pre-trained Language Models | Arxiv 2024 | [Link] |

| IDGenRec: LLM-RecSys Alignment with Textual ID Learning | SIGIR 2024 | [Link] |

| Breaking the Length Barrier: LLM-Enhanced CTR Prediction in Long Textual User Behaviors | SIGIR 2024 | [Link] |

| Make Large Language Model a Better Ranker | Arxiv 2024 | [Link] |

| Do Large Language Models Rank Fairly? An Empirical Study on the Fairness of LLMs as Rankers | NAACL 2024 | [Link] |

| IISAN: Efficiently Adapting Multimodal Representation for Sequential Recommendation with Decoupled PEFT | SIGIR 2024 | [Link] |

| Where to Move Next: Zero-shot Generalization of LLMs for Next POI Recommendation | Arxiv 2024 | [Link] |

| Tired of Plugins? Large Language Models Can Be End-To-End Recommender | Arxiv 2024 | [Link] |

| Aligning Large Language Models with Recommendation Knowledge | NAACL 2024 | [Link] |

| Enhancing Content-based Recommendation via Large Language Model | Arxiv 2024 | [Link] |

| DRE: Generating Recommendation Explanations by Aligning Large Language Models at Data-level | Arxiv 2024 | [Link] |

| Optimization Methods for Personalizing Large Language Models through Retrieval Augmentation | Arxiv 2024 | [Link] |

| Q-PEFT: Query-dependent Parameter Efficient Fine-tuning for Text Reranking with Large Language Models | Arxiv 2024 | [Link] |

| JobFormer: Skill-Aware Job Recommendation with Semantic-Enhanced Transformer | Arxiv 2024 | [Link] |

| PMG : Personalized Multimodal Generation with Large Language Models | Arxiv 2024 | [Link] |

| The Elephant in the Room: Rethinking the Usage of Pre-trained Language Model in Sequential Recommendation | Arxiv 2024 | [Link] |

| Exact and Efficient Unlearning for Large Language Model-based Recommendation | Arxiv 2024 | [Link] |

| Large Language Models meet Collaborative Filtering: An Efficient All-round LLM-based Recommender System | Arxiv 2024 | [Link] |

| Behavior Alignment: A New Perspective of Evaluating LLM-based Conversational Recommendation Systems | SIGIR 2024 | [Link] |

| Generating Diverse Criteria On-the-Fly to Improve Point-wise LLM Rankers | Arxiv 2024 | [Link] |

| RecGPT: Generative Personalized Prompts for Sequential Recommendation via ChatGPT Training Paradigm | Arxiv 2024 | [Link] |

| MMGRec: Multimodal Generative Recommendation with Transformer Model | Arxiv 2024 | [Link] |

| Hi-Gen: Generative Retrieval For Large-Scale Personalized E-commerce Search | Arxiv 2024 | [Link] |

| Contrastive Quantization based Semantic Code for Generative Recommendation | Arxiv 2024 | [Link] |

| ImplicitAVE: An Open-Source Dataset and Multimodal LLMs Benchmark for Implicit Attribute Value Extraction | Arxiv 2024 | [Link] |

| Large Language Models for Next Point-of-Interest Recommendation | SIGIR 2024 | [Link] |

| Ranked List Truncation for Large Language Model-based Re-Ranking | SIGIR 2024 | [Link] |

| Large Language Models as Conversational Movie Recommenders: A User Study | Arxiv 2024 | [Link] |

| Distillation Matters: Empowering Sequential Recommenders to Match the Performance of Large Language Model | Arxiv 2024 | [Link] |

| Efficient and Responsible Adaptation of Large Language Models for Robust Top-k Recommendations | Arxiv 2024 | [Link] |

| FairEvalLLM. A Comprehensive Framework for Benchmarking Fairness in Large Language Model Recommender Systems | Arxiv 2024 | [Link] |

| Improve Temporal Awareness of LLMs for Sequential Recommendation | Arxiv 2024 | [Link] |

| Knowledge Adaptation from Large Language Model to Recommendation for Practical Industrial Application | Arxiv 2024 | [Link] |

| DynLLM: When Large Language Models Meet Dynamic Graph Recommendation | Arxiv 2024 | [Link] |

| Learnable Tokenizer for LLM-based Generative Recommendation | Arxiv 2024 | [Link] |

| CELA: Cost-Efficient Language Model Alignment for CTR Prediction | Arxiv 2024 | [Link] |

| RDRec: Rationale Distillation for LLM-based Recommendation | ACL 2024 | [Link] |

| EmbSum: Leveraging the Summarization Capabilities of Large Language Models for Content-Based Recommendations | Arxiv 2024 | [Link] |

| Reindex-Then-Adapt: Improving Large Language Models for Conversational Recommendation | Arxiv 2024 | [Link] |

| RecGPT: Generative Pre-training for Text-based Recommendation | ACL 2024 | [Link] |

| Let Me Do It For You: Towards LLM Empowered Recommendation via Tool Learning | SIGIR 2024 | [Link] |

| Finetuning Large Language Model for Personalized Ranking | Arxiv 2024 | [Link] |

| LLMs for User Interest Exploration: A Hybrid Approach | Arxiv 2024 | [Link] |

| NoteLLM-2: Multimodal Large Representation Models for Recommendation | Arxiv 2024 | [Link] |

| Multimodality Invariant Learning for Multimedia-Based New Item Recommendation | Arxiv 2024 | [Link] |

| SLMRec: Empowering Small Language Models for Sequential Recommendation | Arxiv 2024 | [Link] |

| Keyword-driven Retrieval-Augmented Large Language Models for Cold-start User Recommendations | Arxiv 2024 | [Link] |

| Generating Query Recommendations via LLMs | Arxiv 2024 | [Link] |

| Large Language Models Enhanced Sequential Recommendation for Long-tail User and Item | Arxiv 2024 | [Link] |

| DisCo: Towards Harmonious Disentanglement and Collaboration between Tabular and Semantic Space for Recommendation | KDD 2024 | [Link] |

| LLM-RankFusion: Mitigating Intrinsic Inconsistency in LLM-based Ranking | Arxiv 2024 | [Link] |

| A Practice-Friendly Two-Stage LLM-Enhanced Paradigm in Sequential Recommendation | Arxiv 2024 | [Link] |

| Large Language Models as Recommender Systems: A Study of Popularity Bias | Gen-IR@SIGIR24 | [Link] |

| Privacy in LLM-based Recommendation: Recent Advances and Future Directions | Arxiv 2024 | [Link] |

| An LLM-based Recommender System Environment | Arxiv 2024 | [Link] |

| Robust Interaction-based Relevance Modeling for Online E-Commerce and LLM-based Retrieval | ECML-PKDD 2024 | [Link] |

| Large Language Models Make Sample-Efficient Recommender Systems | FCS | [Link] |

| XRec: Large Language Models for Explainable Recommendation | Arxiv 2024 | [Link] |

| Exploring User Retrieval Integration towards Large Language Models for Cross-Domain Sequential Recommendation | Arxiv 2024 | [Link] |

| Large Language Models as Evaluators for Recommendation Explanations | Arxiv 2024 | [Link] |

| Text-like Encoding of Collaborative Information in Large Language Models for Recommendation | ACL 2024 | [Link] |

| Item-Language Model for Conversational Recommendation | Arxiv 2024 | [Link] |

| Improving LLMs for Recommendation with Out-Of-Vocabulary Tokens | Arxiv 2024 | [Link] |

| On Softmax Direct Preference Optimization for Recommendation | Arxiv 2024 | [Link] |

| TokenRec: Learning to Tokenize ID for LLM-based Generative Recommendation | Arxiv 2024 | [Link] |

| DELRec: Distilling Sequential Pattern to Enhance LLM-based Recommendation | Arxiv 2024 | [Link] |

| TourRank: Utilizing Large Language Models for Documents Ranking with a Tournament-Inspired Strategy | Arxiv 2024 | [Link] |

| Multi-Layer Ranking with Large Language Models for News Source Recommendation | SIGIR 2024 | [Link] |

| Intermediate Distillation: Data-Efficient Distillation from Black-Box LLMs for Information Retrieval | Arxiv 2024 | [Link] |

| LLM-enhanced Reranking in Recommender Systems | Arxiv 2024 | [Link] |

| LLM4MSR: An LLM-Enhanced Paradigm for Multi-Scenario Recommendation | Arxiv 2024 | [Link] |

| Taxonomy-Guided Zero-Shot Recommendations with LLMs | Arxiv 2024 | [Link] |

| EAGER: Two-Stream Generative Recommender with Behavior-Semantic Collaboration | KDD 2024 | [Link] |

| An Investigation of Prompt Variations for Zero-shot LLM-based Rankers | Arxiv 2024 | [Link] |

| Optimizing Novelty of Top-k Recommendations using Large Language Models and Reinforcement Learning | KDD 2024 | [Link] |

| Enhancing Collaborative Semantics of Language Model-Driven Recommendations via Graph-Aware Learning | Arxiv 2024 | [Link] |

| Decoding Matters: Addressing Amplification Bias and Homogeneity Issue for LLM-based Recommendation | Arxiv 2024 | [Link] |

| FIRST: Faster Improved Listwise Reranking with Single Token Decoding | Arxiv 2024 | [Link] |

| LLM-Powered Explanations: Unraveling Recommendations Through Subgraph Reasoning | Arxiv 2024 | [Link] |

| DemoRank: Selecting Effective Demonstrations for Large Language Models in Ranking Task | Arxiv 2024 | [Link] |

| ELCoRec: Enhance Language Understanding with Co-Propagation of Numerical and Categorical Features for Recommendation | Arxiv 2024 | [Link] |

| Generative Explore-Exploit: Training-free Optimization of Generative Recommender Systems using LLM Optimizers | ACL 2024 | [Link] |

| ProductAgent: Benchmarking Conversational Product Search Agent with Asking Clarification Questions | Arxiv 2024 | [Link] |

| MemoCRS: Memory-enhanced Sequential Conversational Recommender Systems with Large Language Models | Arxiv 2024 | [Link] |

| Preference Distillation for Personalized Generative Recommendation | Arxiv 2024 | [Link] |

| Towards Bridging the Cross-modal Semantic Gap for Multi-modal Recommendation | Arxiv 2024 | [Link] |

| Language Models Encode Collaborative Signals in Recommendation | Arxiv 2024 | [Link] |

| A Neural Matrix Decomposition Recommender System Model based on the Multimodal Large Language Model | Arxiv 2024 | [Link] |

| LLMGR: Large Language Model-based Generative Retrieval in Alipay Search | SIGIR 2024 | [Link] |

| Enhancing Sequential Recommenders with Augmented Knowledge from Aligned Large Language Models | SIGIR 2024 | [Link] |

| Reinforced Prompt Personalization for Recommendation with Large Language Models | Arxiv 2024 | [Link] |

| Improving Retrieval in Sponsored Search by Leveraging Query Context Signals | Arxiv 2024 | [Link] |

| Generative Retrieval with Preference Optimization for E-commerce Search | Arxiv 2024 | [Link] |

| GenRec: Generative Personalized Sequential Recommendation | Arxiv 2024 | [Link] |

| Breaking the Hourglass Phenomenon of Residual Quantization: Enhancing the Upper Bound of Generative Retrieval | Arxiv 2024 | [Link] |

| Enhancing Taobao Display Advertising with Multimodal Representations: Challenges, Approaches and Insights | CIKM 2024 | [Link] |

| Leveraging LLM Reasoning Enhances Personalized Recommender Systems | ACL 2024 | [Link] |

| Multi-Aspect Reviewed-Item Retrieval via LLM Query Decomposition and Aspect Fusion | Arxiv 2024 | [Link] |

| Lifelong Personalized Low-Rank Adaptation of Large Language Models for Recommendation | Arxiv 2024 | [Link] |

| Exploring Query Understanding for Amazon Product Search | Arxiv 2024 | [Link] |

| A Decoding Acceleration Framework for Industrial Deployable LLM-based Recommender Systems | Arxiv 2024 | [Link] |

| Prompt Tuning as User Inherent Profile Inference Machine | Arxiv 2024 | [Link] |

| Beyond Inter-Item Relations: Dynamic Adaptive Mixture-of-Experts for LLM-Based Sequential Recommendation | Arxiv 2024 | [Link] |

| Review-driven Personalized Preference Reasoning with Large Language Models for Recommendation | Arxiv 2024 | [Link] |

| DaRec: A Disentangled Alignment Framework for Large Language Model and Recommender System | Arxiv 2024 | [Link] |

| LLM4DSR: Leveraing Large Language Model for Denoising Sequential Recommendation | Arxiv 2024 | [Link] |

| EasyRec: Simple yet Effective Language Models for Recommendation | Arxiv 2024 | [Link] |

| Collaborative Cross-modal Fusion with Large Language Model for Recommendation | CIKM 2024 | [Link] |

| Customizing Language Models with Instance-wise LoRA for Sequential Recommendation | Arxiv 2024 | [Link] |

| Efficient and Deployable Knowledge Infusion for Open-World Recommendations via Large Language Models | Arxiv 2024 | [Link] |

| CoRA: Collaborative Information Perception by Large Language Model's Weights for Recommendation | Arxiv 2024 | [Link] |

| GANPrompt: Enhancing Robustness in LLM-Based Recommendations with GAN-Enhanced Diversity Prompts | Arxiv 2024 | [Link] |

| Harnessing Multimodal Large Language Models for Multimodal Sequential Recommendation | Arxiv 2024 | [Link] |

| DLCRec: A Novel Approach for Managing Diversity in LLM-Based Recommender Systems | Arxiv | [Link] |

| LARR: Large Language Model Aided Real-time Scene Recommendation with Semantic Understanding | RecSys 2024 | [Link] |

| SC-Rec: Enhancing Generative Retrieval with Self-Consistent Reranking for Sequential Recommendation | Arxiv 2024 | [Link] |

| Are LLM-based Recommenders Already the Best? Simple Scaled Cross-entropy Unleashes the Potential of Traditional Sequential Recommenders | Arxiv 2024 | [Link] |

| HRGraph: Leveraging LLMs for HR Data Knowledge Graphs with Information Propagation-based Job Recommendation | KaLLM 2024 | [Link] |

| An Extremely Data-efficient and Generative LLM-based Reinforcement Learning Agent for Recommenders | Arxiv 2024 | [Link] |

| CheatAgent: Attacking LLM-Empowered Recommender Systems via LLM Agent | KDD 2024 | [Link] |

| Laser: Parameter-Efficient LLM Bi-Tuning for Sequential Recommendation with Collaborative Information | Arxiv 2024 | [Link] |

| MARS: Matching Attribute-aware Representations for Text-based Sequential Recommendation | CIKM 2024 | [Link] |

| End-to-End Learnable Item Tokenization for Generative Recommendation | Arxiv 2024 | [Link] |

| Incorporate LLMs with Influential Recommender System | Arxiv 2024 | [Link] |

| Enhancing Sequential Recommendations through Multi-Perspective Reflections and Iteration | Arxiv 2024 | [Link] |

| STORE: Streamlining Semantic Tokenization and Generative Recommendation with A Single LLM | Arxiv 2024 | [Link] |

| Multilingual Prompts in LLM-Based Recommenders: Performance Across Languages | Arxiv 2024 | [Link] |

| Unleash LLMs Potential for Recommendation by Coordinating Twin-Tower Dynamic Semantic Token Generator | Arxiv 2024 | [Link] |

| Large Language Model Enhanced Hard Sample Identification for Denoising Recommendation | Arxiv 2024 | [Link] |

| Chain-of-thought prompting empowered generative user modeling for personalized recommendation | Neural Computing and Applications | [Link] |

| Challenging Fairness: A Comprehensive Exploration of Bias in LLM-Based Recommendations | Arxiv 2024 | [Link] |

| Decoding Style: Efficient Fine-Tuning of LLMs for Image-Guided Outfit Recommendation with Preference | CIKM 2024 | [Link] |

| LLM-Powered Text Simulation Attack Against ID-Free Recommender Systems | Arxiv 2024 | [Link] |

| FLARE: Fusing Language Models and Collaborative Architectures for Recommender Enhancement | Arxiv 2024 | [Link] |

| Retrieve, Annotate, Evaluate, Repeat: Leveraging Multimodal LLMs for Large-Scale Product Retrieval Evaluation | Arxiv 2024 | [Link] |

| HLLM: Enhancing Sequential Recommendations via Hierarchical Large Language Models for Item and User Modeling | Arxiv 2024 | [Link] |

| Large Language Model Ranker with Graph Reasoning for Zero-Shot Recommendation | ICANN 2024 | [Link] |

| User Knowledge Prompt for Sequential Recommendation | RecSys 2024 | [Link] |

| RLRF4Rec: Reinforcement Learning from Recsys Feedback for Enhanced Recommendation Reranking | Arxiv 2024 | [Link] |

| FELLAS: Enhancing Federated Sequential Recommendation with LLM as External Services | Arxiv 2024 | [Link] |

| TLRec: A Transfer Learning Framework to Enhance Large Language Models for Sequential Recommendation Tasks | RecSys 2024 | [Link] |

| SeCor: Aligning Semantic and Collaborative Representations by Large Language Models for Next-Point-of-Interest Recommendations | RecSys 2024 | [Link] |

| Efficient Inference for Large Language Model-based Generative Recommendation | Arxiv 2024 | [Link] |

| Instructing and Prompting Large Language Models for Explainable Cross-domain Recommendations | RecSys 2024 | [Link] |

| ReLand: Integrating Large Language Models' Insights into Industrial Recommenders via a Controllable Reasoning Pool | RecSys 2024 | [Link] |

| Inductive Generative Recommendation via Retrieval-based Speculation | Arxiv 2024 | [Link] |

| Constructing and Masking Preference Profile with LLMs for Filtering Discomforting Recommendation | Arxiv 2024 | [Link] |

| Towards Scalable Semantic Representation for Recommendation | Arxiv 2024 | [Link] |

| Large Language Models as Narrative-Driven Recommenders | Arxiv 2024 | [Link] |

| The Moral Case for Using Language Model Agents for Recommendation | Arxiv 2024 | [Link] |

| RosePO: Aligning LLM-based Recommenders with Human Values | Arxiv 2024 | [Link] |

| Comprehending Knowledge Graphs with Large Language Models for Recommender Systems | Arxiv 2024 | [Link] |

| Triple Modality Fusion: Aligning Visual, Textual, and Graph Data with Large Language Models for Multi-Behavior Recommendations | Arxiv 2024 | [Link] |

| Improving Pinterest Search Relevance Using Large Language Models | CIKM 2024 Workshop | [Link] |

| STAR: A Simple Training-free Approach for Recommendations using Large Language Models | Arxiv 2024 | [Link] |

| End-to-end Training for Recommendation with Language-based User Profiles | Arxiv 2024 | [Link] |

| Knowledge Graph Enhanced Language Agents for Recommendation | Arxiv 2024 | [Link] |

| Collaborative Knowledge Fusion: A Novel Approach for Multi-task Recommender Systems via LLMs | Arxiv 2024 | [Link] |

| Real-Time Personalization for LLM-based Recommendation with Customized In-Context Learning | Arxiv 2024 | [Link] |

| ReasoningRec: Bridging Personalized Recommendations and Human-Interpretable Explanations through LLM Reasoning | Arxiv 2024 | [Link] |

| Beyond Utility: Evaluating LLM as Recommender | Arxiv 2024 | [Link] |

| Enhancing ID-based Recommendation with Large Language Models | Arxiv 2024 | [Link] |

| LLM4PR: Improving Post-Ranking in Search Engine with Large Language Models | Arxiv 2024 | [Link] |

| Proactive Detection and Calibration of Seasonal Advertisements with Multimodal Large Language Models | Arxiv 2024 | [Link] |

| Transferable Sequential Recommendation via Vector Quantized Meta Learning | Arxiv 2024 | [Link] |

| Self-Calibrated Listwise Reranking with Large Language Models | Arxiv 2024 | [Link] |

| Enhancing Large Language Model Based Sequential Recommender Systems with Pseudo Labels Reconstruction | ACL Findings 2024 | [Link] |

| Unleashing the Power of Large Language Models for Group POI Recommendations | Arxiv 2024 | [Link] |

| Scaling Laws for Online Advertisement Retrieval | Arxiv 2024 | [Link] |

| Explainable LLM-driven Multi-dimensional Distillation for E-Commerce Relevance Learning | Arxiv 2024 | [Link] |

| GOT4Rec: Graph of Thoughts for Sequential Recommendation | Arxiv 2024 | [Link] |

| HARec: Hyperbolic Graph-LLM Alignment for Exploration and Exploitation in Recommender Systems | Arxiv 2024 | [Link] |

| Cross-Domain Recommendation Meets Large Language Models | Arxiv 2024 | [Link] |

| Explainable CTR Prediction via LLM Reasoning | WSDM 2025 | [Link] |

| Enabling Explainable Recommendation in E-commerce with LLM-powered Product Knowledge Graph | IJCAI Workshop 2025 | [Link] |

| Break the ID-Language Barrier: An Adaption Framework for Sequential Recommendation | Arxiv 2024 | [Link] |

| LEADRE: Multi-Faceted Knowledge Enhanced LLM Empowered Display Advertisement Recommender System | Arxiv 2024 | [Link] |

| Pre-train, Align, and Disentangle: Empowering Sequential Recommendation with Large Language Models | Arxiv 2024 | [Link] |

| ULMRec: User-centric Large Language Model for Sequential Recommendation | Arxiv 2024 | [Link] |

| AltFS: Agency-light Feature Selection with Large Language Models in Deep Recommender Systems | Arxiv 2024 | [Link] |

| MRP-LLM: Multitask Reflective Large Language Models for Privacy-Preserving Next POI Recommendation | Arxiv 2024 | [Link] |

| MOPI-HFRS: A Multi-objective Personalized Health-aware Food Recommendation System with LLM-enhanced Interpretation | Arxiv 2024 | [Link] |

| SPRec: Leveraging Self-Play to Debias Preference Alignment for Large Language Model-based Recommendations | Arxiv 2024 | [Link] |

| RecSys Arena: Pair-wise Recommender System Evaluation with Large Language Models | Arxiv 2024 | [Link] |

| CRS Arena: Crowdsourced Benchmarking of Conversational Recommender Systems | Arxiv 2024 | [Link] |

| Boosting LLM-based Relevance Modeling with Distribution-Aware Robust Learning | Arxiv 2024 | [Link] |

| LLM is Knowledge Graph Reasoner: LLM's Intuition-aware Knowledge Graph Reasoning for Cold-start Sequential Recommendation | Arxiv 2024 | [Link] |

| Bridging the User-side Knowledge Gap in Knowledge-aware Recommendations with Large Language Models | Arxiv 2024 | [Link] |

| Sliding Windows Are Not the End: Exploring Full Ranking with Long-Context Large Language Models | Arxiv 2024 | [Link] |

| ChainRank-DPO: Chain Rank Direct Preference Optimization for LLM Rankers | Arxiv 2024 | [Link] |

| Are Longer Prompts Always Better? Prompt Selection in Large Language Models for Recommendation Systems | Arxiv 2024 | [Link] |

| Towards a Unified Paradigm: Integrating Recommendation Systems as a New Language in Large Models | Arxiv 2024 | [Link] |

| LLM-Powered User Simulator for Recommender System | Arxiv 2024 | [Link] |

| Enhancing Item Tokenization for Generative Recommendation through Self-Improvement | Arxiv 2024 | [Link] |

| Molar: Multimodal LLMs with Collaborative Filtering Alignment for Enhanced Sequential Recommendation | Arxiv 2024 | [Link] |

| An Automatic Graph Construction Framework based on Large Language Models for Recommendation | Arxiv 2024 | [Link] |

| RecLM: Recommendation Instruction Tuning | Arxiv 2024 | [Link] |

| The Efficiency vs. Accuracy Trade-off: Optimizing RAG-Enhanced LLM Recommender Systems Using Multi-Head Early Exit | Arxiv 2025 | [Link] |

| Knowledge Graph Retrieval-Augmented Generation for LLM-based Recommendation | Arxiv 2025 | [Link] |

| Efficient and Responsible Adaptation of Large Language Models for Robust and Equitable Top-k Recommendations | Arxiv 2025 | [Link] |

| Collaboration of Large Language Models and Small Recommendation Models for Device-Cloud Recommendation | KDD 2025 | [Link] |

| Guiding Retrieval using LLM-based Listwise Rankers | Arxiv 2025 | [Link] |

| Generative Retrieval for Book search | KDD 2025 | [Link] |

| Full-Stack Optimized Large Language Models for Lifelong Sequential Behavior Comprehension in Recommendation | Arxiv 2025 | [Link] |

| Large Language Model driven Policy Exploration for Recommender Systems | WSDM 2025 | [Link] |

| SampleLLM: Optimizing Tabular Data Synthesis in Recommendations | Arxiv 2025 | [Link] |

| PatchRec: Multi-Grained Patching for Efficient LLM-based Sequential Recommendation | Arxiv 2025 | [Link] |

| Uncertainty Quantification and Decomposition for LLM-based Recommendation | WWW 2025 | [Link] |

| A Zero-Shot Generalization Framework for LLM-Driven Cross-Domain Sequential Recommendation | Arxiv 2025 | [Link] |

| RankFlow: A Multi-Role Collaborative Reranking Workflow Utilizing Large Language Models | Arxiv 2025 | [Link] |

| FACTER: Fairness-Aware Conformal Thresholding and Prompt Engineering for Enabling Fair LLM-Based Recommender Systems | Arxiv 2025 | [Link] |

| Large Language Models Are Universal Recommendation Learners | Arxiv 2025 | [Link] |

| Intent Representation Learning with Large Language Model for Recommendation | Arxiv 2025 | [Link] |

| Boosting Knowledge Graph-based Recommendations through Confidence-Aware Augmentation with Large Language Models | Arxiv 2025 | [Link] |

| RALLRec: Improving Retrieval Augmented Large Language Model Recommendation with Representation Learning | WWW 2025 | [Link] |

| Solving the Content Gap in Roblox Game Recommendations: LLM-Based Profile Generation and Reranking | Arxiv 2025 | [Link] |

| MoLoRec: A Generalizable and Efficient Framework for LLM-Based Recommendation | Arxiv 2025 | [Link] |

| Unleashing the Power of Large Language Model for Denoising Recommendation | WWW 2025 | [Link] |

| Semantic Ads Retrieval at Walmart eCommerce with Language Models Progressively Trained on Multiple Knowledge Domains | Arxiv 2025 | [Link] |

| Order-agnostic Identifier for Large Language Model-based Generative Recommendation | Arxiv 2025 | [Link] |

| G-Refer: Graph Retrieval-Augmented Large Language Model for Explainable Recommendation | WWW 2025 | [Link] |

| LLM4Tag: Automatic Tagging System for Information Retrieval via Large Language Models | Arxiv 2025 | [Link] |

| Bursting Filter Bubble: Enhancing Serendipity Recommendations with Aligned Large Language Models | Arxiv 2025 | [Link] |

| ActionPiece: Contextually Tokenizing Action Sequences for Generative Recommendation | Arxiv 2025 | [Link] |

| TALKPLAY: Multimodal Music Recommendation with Large Language Models | Arxiv 2025 | [Link] |

| Enhancing Cross-Domain Recommendations with Memory-Optimized LLM-Based User Agents | Arxiv 2025 | [Link] |

| Enhancing LLM-Based Recommendations Through Personalized Reasoning | Arxiv 2025 | [Link] |

| Lost in Sequence: Do Large Language Models Understand Sequential Recommendation? | Arxiv 2025 | [Link] |

| InstructAgent: Building User Controllable Recommender via LLM Agent | Arxiv 2025 | [Link] |

| EAGER-LLM: Enhancing Large Language Models as Recommenders through Exogenous Behavior-Semantic Integration | Arxiv 2025 | [Link] |

| Efficient AI in Practice: Training and Deployment of Efficient LLMs for Industry Applications | Arxiv 2025 | [Link] |

| Collaborative Retrieval for Large Language Model-based Conversational Recommender Systems | Arxiv 2025 | [Link] |

| Active Large Language Model-based Knowledge Distillation for Session-based Recommendation | Arxiv 2025 | [Link] |

| Training Large Recommendation Models via Graph-Language Token Alignment | WWW 2025 | [Link] |

| PCL: Prompt-based Continual Learning for User Modeling in Recommender Systems | WWW 2025 | |

| FilterLLM: Text-To-Distribution LLM for Billion-Scale Cold-Start Recommendation | Arxiv 2025 | [Link] |

| Towards An Efficient LLM Training Paradigm for CTR Prediction | Arxiv 2025 | [Link] |

| LLMInit: A Free Lunch from Large Language Models for Selective Initialization of Recommendation | Arxiv 2025 | [Link] |

| PersonaX: A Recommendation Agent Oriented User Modeling Framework for Long Behavior Sequence | Arxiv 2025 | [Link] |

| Towards Next-Generation Recommender Systems: A Benchmark for Personalized Recommendation Assistant with LLMs | Arxiv 2025 | [Link] |

| Uncovering Cross-Domain Recommendation Ability of Large Language Models | Arxiv 2025 | [Link] |

| LLM-Driven Usefulness Labeling for IR Evaluation | Arxiv 2025 | [Link] |

| LREF: A Novel LLM-based Relevance Framework for E-commerce | WWW 2025 | [Link] |

| Process-Supervised LLM Recommenders via Flow-guided Tuning | Arxiv 2025 | [Link] |

| Image is All You Need: Towards Efficient and Effective Large Language Model-Based Recommender Systems | Arxiv 2025 | [Link] |

| Rank-R1: Enhancing Reasoning in LLM-based Document Rerankers via Reinforcement Learning | Arxiv 2025 | [Link] |

| Federated Cross-Domain Click-Through Rate Prediction With Large Language Model Augmentation | Arxiv 2025 | [Link] |

| BeLightRec: A lightweight recommender system enhanced with BERT | Arxiv 2025 | [Link] |

| RALLRec+: Retrieval Augmented Large Language Model Recommendation with Reasoning | Arxiv 2025 | [Link] |

| Alleviating LLM-based Generative Retrieval Hallucination in Alipay Search | Arxiv 2025 | [Link] |

| RuleAgent: Discovering Rules for Recommendation Denoising with Autonomous Language Agents | Arxiv 2025 | [Link] |

| CoRanking: Collaborative Ranking with Small and Large Ranking Agents | Arxiv 2025 | [Link] |

| Get the Agents Drunk: Memory Perturbations in Autonomous Agent-based Recommender Systems | Arxiv 2025 | [Link] |

| Rec-R1: Bridging Generative Large Language Models and User-Centric Recommendation Systems via Reinforcement Learning | Arxiv 2025 | [Link] |

| LLM-Augmented Graph Neural Recommenders: Integrating User Reviews | Arxiv 2025 | [Link] |

| Enhancing Embedding Representation Stability in Recommendation Systems with Semantic ID | Arxiv 2025 | [Link] |

| Retrieval-Augmented Purifier for Robust LLM-Empowered Recommendation | Arxiv 2025 | [Link] |

The datasets & benchmarks for LLM-related RS topics should maintain the original semantic/textual features, instead of anonymous feature IDs.

| Dataset | RS Scenario | Link |

|---|---|---|

| RecSysLLMsP | Social Networks | [Link] |

| AmazonQAC | Query Autocomplete | [Link] |

| NineRec | 9 Domains | [Link] |

| MicroLens | Video Streaming | [Link] |

| Amazon-Review 2023 | E-commerce | [Link] |

| Reddit-Movie | Conversational & Movie | [Link] |

| Amazon-M2 | E-commerce | [Link] |

| MovieLens | Movie | [Link] |

| Amazon | E-commerce | [Link] |

| BookCrossing | Book | [Link] |

| GoodReads | Book | [Link] |

| Anime | Anime | [Link] |

| PixelRec | Short Video | [Link] |

| Netflix | Movie | [Link] |
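
As a rough illustration of why textual features matter for LLM-related RS, the sketch below builds a natural-language prompt from a user's history. The `Item` fields (`title`, `genre`), the helper names, and the sample records are all hypothetical and not taken from any dataset listed above; the point is only that an ID-only dataset leaves the LLM nothing semantic to read.

```python
from dataclasses import dataclass


@dataclass
class Item:
    item_id: int      # anonymous feature ID, all that ID-only datasets keep
    title: str = ""   # original textual feature (hypothetical field name)
    genre: str = ""   # original textual feature (hypothetical field name)


def describe(item: Item) -> str:
    """Render an item as text, falling back to the bare ID if no text survives."""
    parts = [p for p in (item.title, item.genre) if p]
    return " | ".join(parts) if parts else f"item_{item.item_id}"


def has_text(items: list[Item]) -> bool:
    """True only if every item keeps at least one textual feature."""
    return all(it.title or it.genre for it in items)


def build_prompt(history: list[Item], candidate: Item) -> str:
    """Assemble a plain-text prompt asking whether the user would like the candidate."""
    lines = [f"- {describe(it)}" for it in history]
    return (
        "The user has interacted with:\n"
        + "\n".join(lines)
        + f"\nWould the user enjoy: {describe(candidate)}? Answer Yes or No."
    )


if __name__ == "__main__":
    # Made-up records for illustration only.
    with_text = [Item(1, "Toy Story", "Animation"), Item(2, "Heat", "Crime")]
    ids_only = [Item(1), Item(2)]
    print(has_text(with_text), has_text(ids_only))
    print(build_prompt(with_text, Item(3, "Up", "Animation")))
    print(build_prompt(ids_only, Item(3)))
```

With the ID-only records the prompt degenerates to opaque tokens such as `item_1`, which is why a check like `has_text` can be a useful first filter when picking a dataset for LLM-based experiments.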

| Benchmarks | Website Link | Paper |

|---|---|---|

| RecBench | | [Paper] |

| RecBench+ | | [Paper] |

| Shopping MMLU | | [Paper] |

| Amazon-M2 (KDD Cup 2023) | [Link] | [Paper] |

| LLMRec | [Link] | [Paper] |

| OpenP5 | [Link] | [Paper] |

| TABLET | [Link] | [Paper] |

| Repo Name | Maintainer |

|---|---|

| rs-llm-paper-list | wwliu555 |

| awesome-recommend-system-pretraining-papers | archersama |

| LLM4Rec | WLiK |

| Awesome-LLM4RS-Papers | nancheng58 |

| LLM4IR-Survey | RUC-NLPIR |

👍 Welcome to contribute to this repository.

If you have come across relevant resources or found errors in this repository, feel free to open an issue or submit a pull request.

Contact: chiangel [DOT] ljh [AT] gmail [DOT] com

@article{10.1145/3678004,

author = {Lin, Jianghao and Dai, Xinyi and Xi, Yunjia and Liu, Weiwen and Chen, Bo and Zhang, Hao and Liu, Yong and Wu, Chuhan and Li, Xiangyang and Zhu, Chenxu and Guo, Huifeng and Yu, Yong and Tang, Ruiming and Zhang, Weinan},

title = {How Can Recommender Systems Benefit from Large Language Models: A Survey},

year = {2024},

publisher = {Association for Computing Machinery},

address = {New York, NY, USA},

issn = {1046-8188},

url = {https://doi.org/10.1145/3678004},

doi = {10.1145/3678004},

journal = {ACM Trans. Inf. Syst.},

month = {jul}

}
