Best AI tools for< Detect Harmful Language >

20 - AI tool Sites

Alphy

Alphy is a modern AI tool for communication compliance that helps companies detect and prevent harmful and unlawful language in their communication. The AI classifier has a 94% accuracy rate and can identify over 40 high-risk categories of harmful language. By using Reflect AI, companies can shield themselves from reputational, ethical, and legal risks, ensuring compliance and preventing costly litigation.

Redflag AI

Redflag AI is a leading provider of content and brand protection solutions. Our mission is to help businesses protect their brands and reputations from online threats. We offer a range of services to help businesses identify, remove, and prevent harmful content from appearing online.

Amanda Moderation Platform

Amanda is an AI-driven content moderation platform designed to help online communities detect harmful behavior and manage risk across user interactions. It reduces fragmentation and manual work by bringing moderation workflows together as teams expand their setup. Amanda is modular, flexible, and can be adopted step by step, alongside existing systems. It offers a complete moderation toolbox, clear workflows, and features that can be tuned to fit different platforms.

Checkstep

Checkstep is an AI-powered content moderation platform that helps businesses detect and remove harmful content from their platforms. It offers a range of features, including image, text, audio, and video moderation, as well as compliance reporting and moderation tools. Checkstep's platform is designed to be easy to use and integrate, and it can be customized to meet the specific needs of each business.

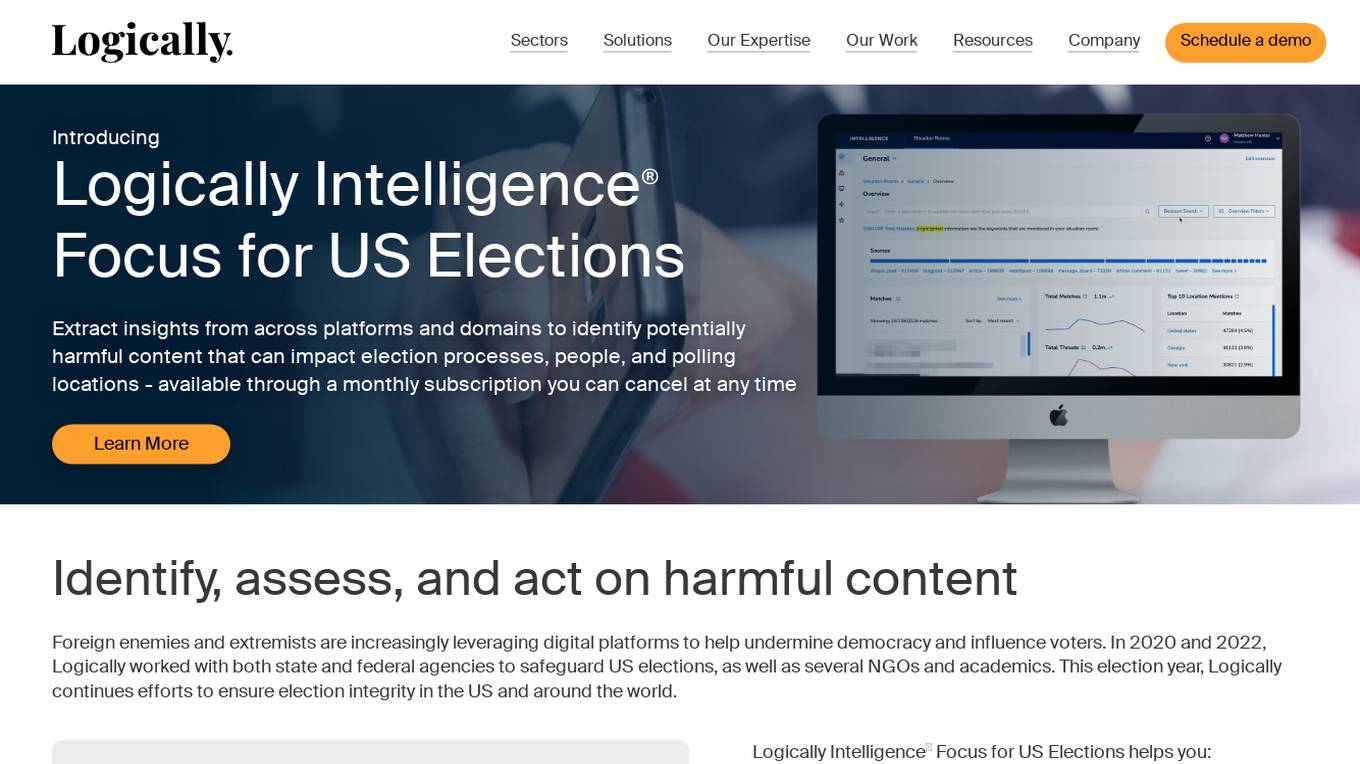

Logically

Logically is an AI-powered platform that helps governments, NGOs, and enterprise organizations detect and address harmful and deliberately inaccurate information online. The platform combines artificial intelligence with human expertise to deliver actionable insights and reduce the harms associated with misleading or deceptive information. Logically offers services such as Analyst Services, Logically Intelligence, Point Solutions, and Trust and Safety, focusing on threat detection, online narrative detection, intelligence reports, and harm reduction. The platform is known for its expertise in analysis, data science, and government affairs, providing solutions for various sectors including Corporate, Defense, Digital Platforms, Elections, National Security, and NGO Solutions.

Modulate

Modulate is a voice intelligence tool that provides proactive voice chat moderation solutions for various platforms, including gaming, delivery services, and social platforms. It uses advanced AI technology to detect and prevent harmful behaviors, ensuring a safer and more positive user experience. Modulate helps organizations comply with regulations, enhance user safety, and improve community interactions through its customizable and intelligent moderation tools.

Lakera

Lakera is the world's most advanced AI security platform that offers cutting-edge solutions to safeguard GenAI applications against various security threats. Lakera provides real-time security controls, stress-testing for AI systems, and protection against prompt attacks, data loss, and insecure content. The platform is powered by a proprietary AI threat database and aligns with global AI security frameworks to ensure top-notch security standards. Lakera is suitable for security teams, product teams, and LLM builders looking to secure their AI applications effectively and efficiently.

Link Shield

Link Shield is an AI-powered malicious URL detection API platform that helps protect online security. It utilizes advanced machine learning algorithms to analyze URLs and identify suspicious activity, safeguarding users from phishing scams, malware, and other harmful threats. The API is designed for ease of integration, affordability, and flexibility, making it accessible to developers of all levels. Link Shield empowers businesses to ensure the safety and security of their applications and online communities.

Secur3D

Secur3D is an AI tool designed for automated 3D asset analysis and moderation. It focuses on protecting user-generated content (UGC) on creator marketplaces by utilizing advanced algorithms to detect and prevent unauthorized or harmful content. With Secur3D, creators can ensure the safety and integrity of their content, providing a secure environment for both creators and users.

Deepfake Detection Challenge Dataset

The Deepfake Detection Challenge Dataset is a project initiated by Facebook AI to accelerate the development of new ways to detect deepfake videos. The dataset consists of over 100,000 videos and was created in collaboration with industry leaders and academic experts. It includes two versions: a preview dataset with 5k videos and a full dataset with 124k videos, each featuring facial modification algorithms. The dataset was used in a Kaggle competition to create better models for detecting manipulated media. The top-performing models achieved high accuracy on the public dataset but faced challenges when tested against the black box dataset, highlighting the importance of generalization in deepfake detection. The project aims to encourage the research community to continue advancing in detecting harmful manipulated media.

Face Shape Detect

Face Shape Detect is an AI-powered tool that allows users to analyze their unique facial structure and determine their face shape for personalized recommendations. Users can upload a photo to receive accurate face shape analysis and styling tips. The tool prioritizes privacy by securely processing images without storing them. It helps users understand their face shape for better fashion and beauty choices.

ZeroGPT

ZeroGPT is a trusted AI detector tool that specializes in detecting AI-generated content like ChatGPT, GPT4, and Gemini. It offers advanced features such as AI summarization, paraphrasing, grammar and spell checking, translation, word counting, and citation generation. The tool is designed to provide highly accurate results and supports multiple languages. ZeroGPT stands out for its highlighted sentences feature, batch file upload capability, high accuracy model, and automatically generated reports. It utilizes DeepAnalyse™ Technology, a multi-stage methodology that optimizes accuracy while minimizing false positives and negatives. Users can unlock premium features and API access to enhance their writing skills and integrate the tool on a large scale.

AI or Not

AI or Not is an AI-powered tool that helps businesses and individuals detect AI-generated images and audio. It uses advanced machine learning algorithms to analyze content and determine the likelihood of AI manipulation. With AI or Not, users can protect themselves from fraud, misinformation, and other malicious activities involving AI-generated content.

AIDP

AIDP is a comprehensive platform that helps you find and remove the fingerprints of AI in documents. It includes automatic and manual tools for revising content that was written by ChatGPT and other AI models. With AIDP, you can: * Detect and wipe the traces of AI instantly. * See what triggers AI detection. * Get suggestions for wording changes and rewrites. * Make AI sound human. * Get a tone analysis to determine how your document sounds. * Find and wipe AI from any document.

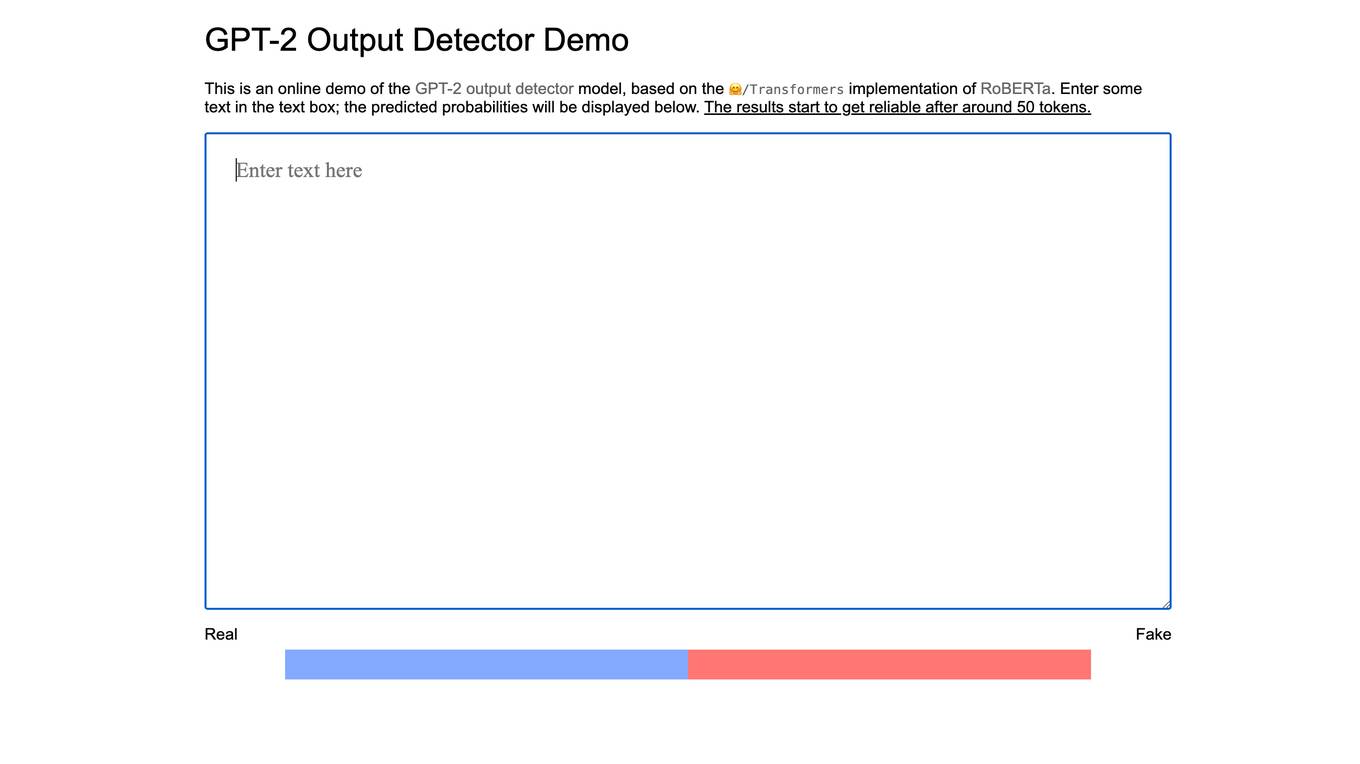

GPT-2 Output Detector

The GPT-2 Output Detector is an online tool that helps users identify whether a given text was generated by the GPT-2 language model. The tool is based on the RoBERTa implementation of Transformers, a popular natural language processing library. Users can enter text into the text box, and the tool will predict the probability that the text was generated by GPT-2. The results start to get reliable after around 50 tokens.

GRAIL

GRAIL is a healthcare company innovating to solve medicine’s most important challenges. Our team of leading scientists, engineers and clinicians are on an urgent mission to detect cancer early, when it is more treatable and potentially curable. GRAIL's Galleri® test is a first-of-its-kind multi-cancer early detection (MCED) test that can detect a signal shared by more than 50 cancer types and predict the tissue type or organ associated with the signal to help healthcare providers determine next steps.

AIDetect

AIDetect is a powerful AI content detector tool that allows users to identify AI-generated writing within any text. It offers cutting-edge features and high accuracy, comparable to Turnitin, to help users verify the authenticity of content. With advanced technology, AIDetect ensures that users can distinguish between human and AI-generated content effortlessly.

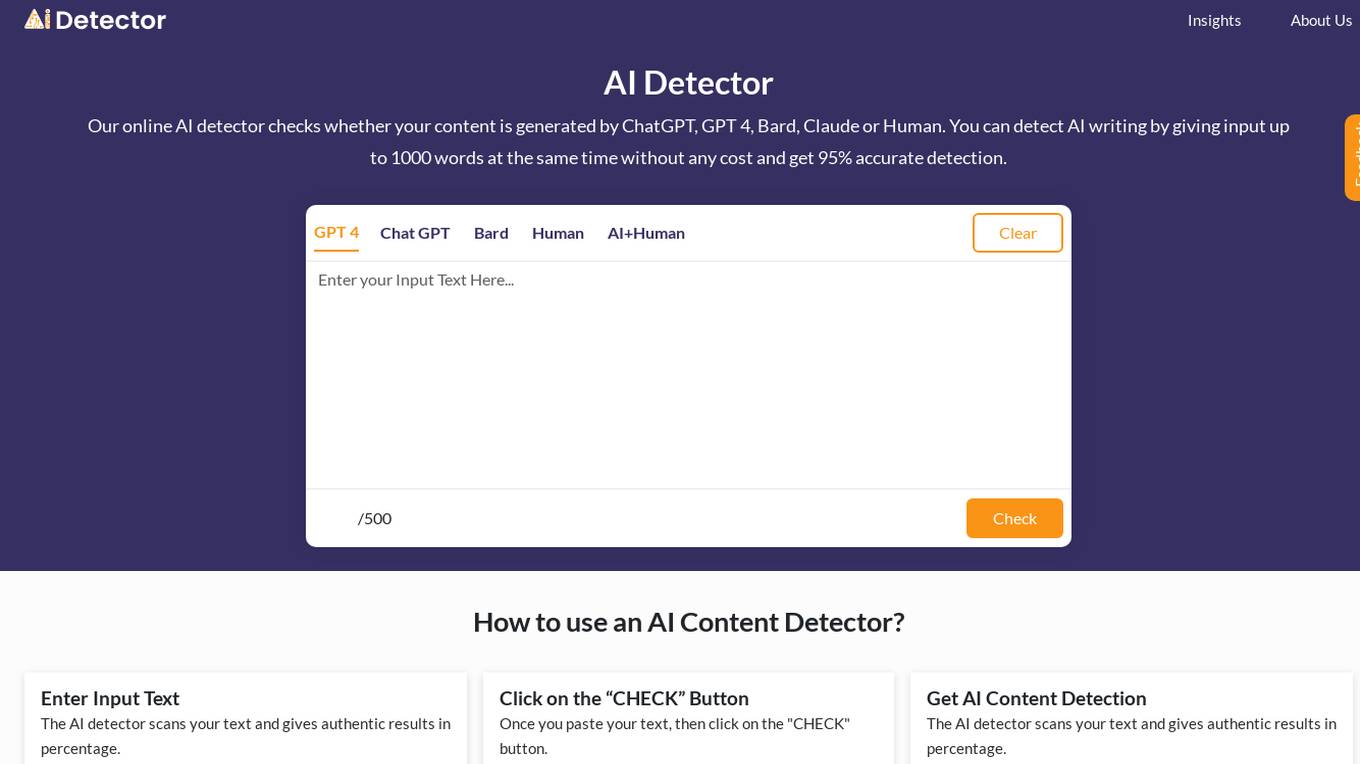

AI Detector

AI Detector is an online tool that uses advanced algorithms and machine learning to check if your written text is generated by AI or a human writer. It analyzes the writing style, sentence structure, and other linguistic patterns to determine the likelihood of AI authorship. The tool provides a percentage score indicating the probability of AI-generated content, helping users identify potential plagiarism or AI-assisted writing.

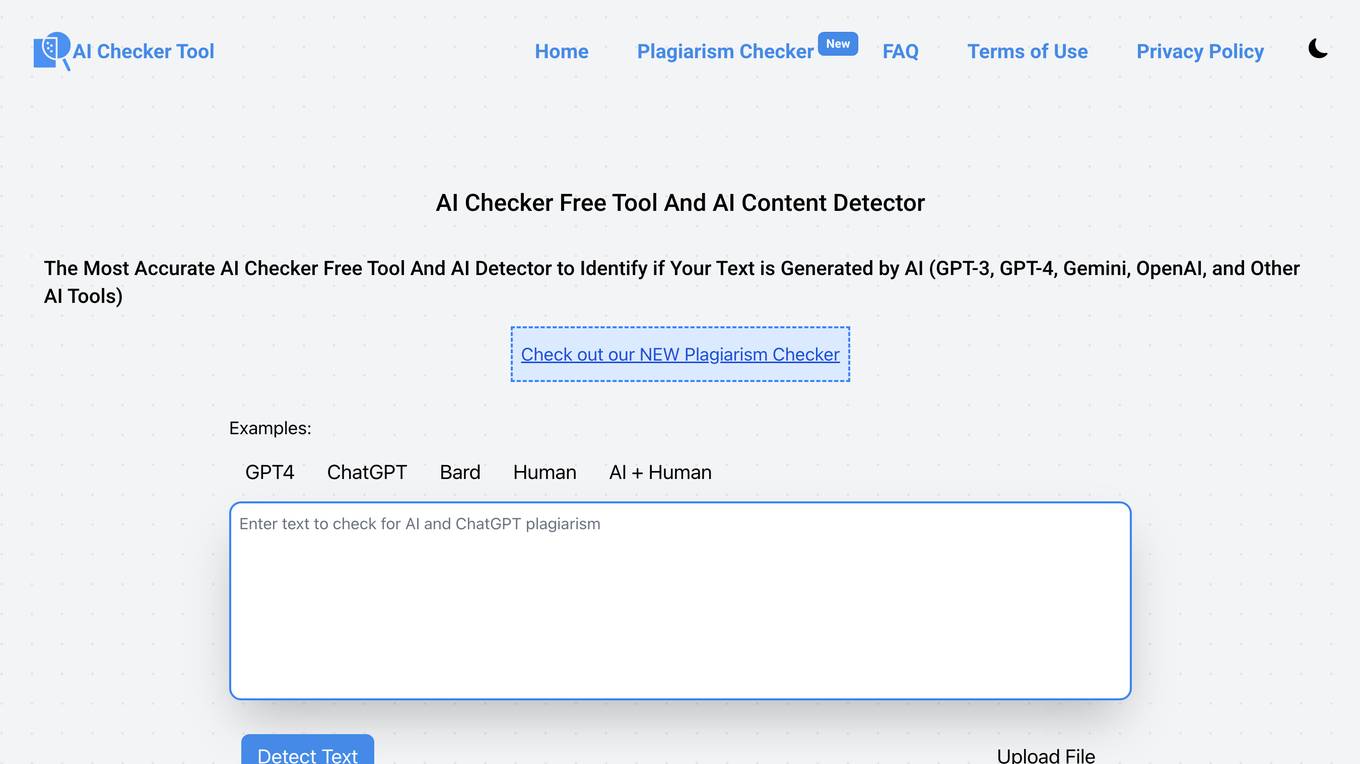

AI Checker

AI Checker is a free tool and plagiarism detector that accurately identifies if a text is generated by AI tools like GPT-3, GPT-4, Gemini, OpenAI, and others. It helps users protect their content by detecting AI-generated text and human-written content. The tool uses advanced algorithms to provide accurate results and percentage analysis of AI-generated content within a text. AI Checker is beneficial for writers, students, educators, content marketers, freelancers, editors, publishers, researchers, and content consumers across different languages and contexts.

HEALWELL AI

HEALWELL AI is a healthcare technology company focusing on preventative care through AI and data science. Their mission is to improve healthcare and save lives by early disease detection. HEALWELL provides AI tools for healthcare providers to screen and detect rare, complex, and chronic diseases. They have developed AI clinical co-pilot technologies to assist physicians in early disease detection, ultimately accelerating time to diagnosis and saving lives.

1 - Open Source AI Tools

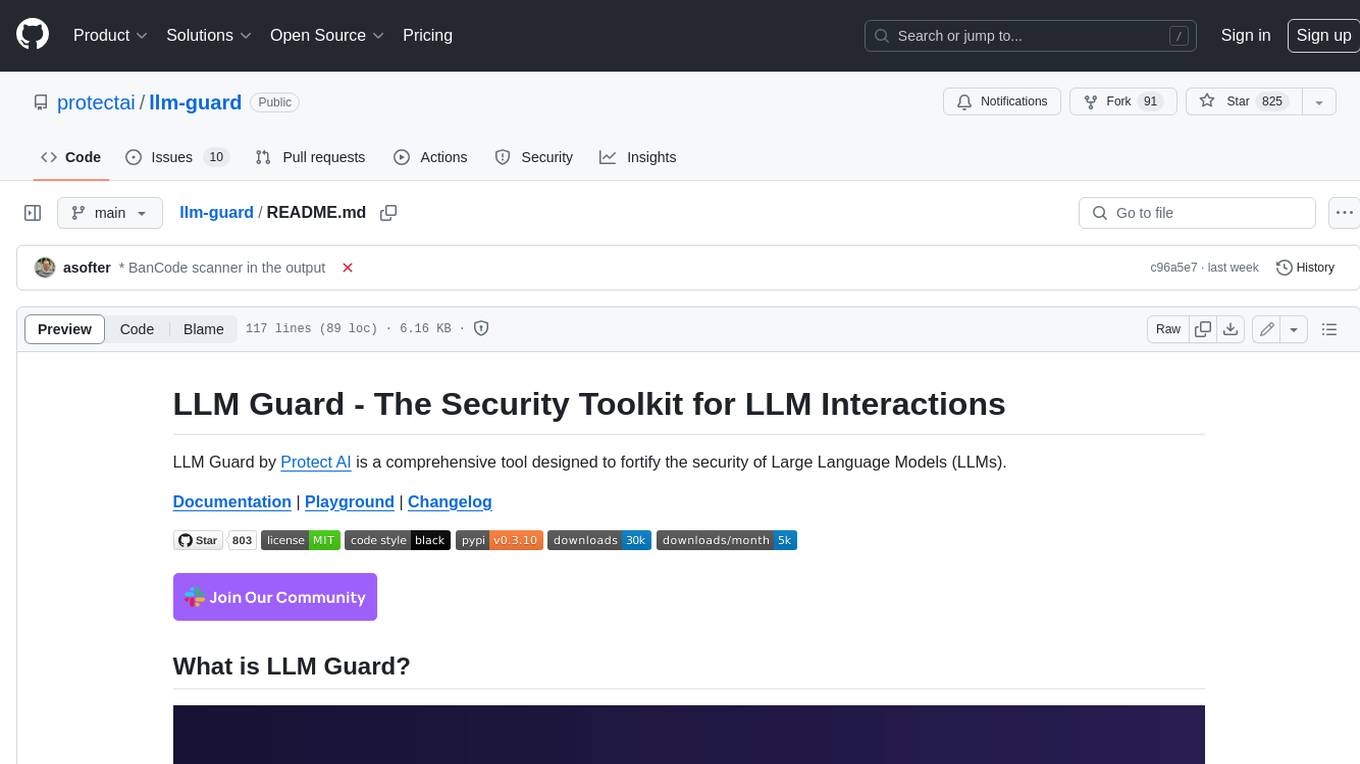

llm-guard

LLM Guard is a comprehensive tool designed to fortify the security of Large Language Models (LLMs). It offers sanitization, detection of harmful language, prevention of data leakage, and resistance against prompt injection attacks, ensuring that your interactions with LLMs remain safe and secure.

20 - OpenAI Gpts

AI fact-checking paper

A helpful guide for understanding the paper "Artificial intelligence is ineffective and potentially harmful for fact checking"

FallacyGPT

Detect logical fallacies and lapses in critical thinking to help avoid misinformation in the style of Socrates

AI Detector

AI Detector GPT is powered by Winston AI and created to help identify AI generated content. It is designed to help you detect use of AI Writing Chatbots such as ChatGPT, Claude and Bard and maintain integrity in academia and publishing. Winston AI is the most trusted AI content detector.

Plagiarism Checker

Plagiarism Checker GPT is powered by Winston AI and created to help identify plagiarized content. It is designed to help you detect instances of plagiarism and maintain integrity in academia and publishing. Winston AI is the most trusted AI and Plagiarism Checker.

BS Meter Realtime

Detects and measures information credibility. Provides a "BS Score" (0-100) based on content analysis for misinformation signs, including factual inaccuracies and sensationalist language. Real-time feedback.

Wowza Bias Detective

I analyze cognitive biases in scenarios and thoughts, providing neutral, educational insights.

Defender for Endpoint Guardian

To assist individuals seeking to learn about or work with Microsoft's Defender for Endpoint. I provide detailed explanations, step-by-step guides, troubleshooting advice, cybersecurity best practices, and demonstrations, all specifically tailored to Microsoft Defender for Endpoint.

Prompt Injection Detector

GPT used to classify prompts as valid inputs or injection attempts. Json output.

Blue Team Guide

it is a meticulously crafted arsenal of knowledge, insights, and guidelines that is shaped to empower organizations in crafting, enhancing, and refining their cybersecurity defenses

PBN Detector

A tool to help you decide if a website is part of a PBN or link network, created solely for link building. >> Get in touch with Gareth if you need a Freelance SEO for link building <<

ethicallyHackingspace (eHs)® METEOR™ STORM™

Multiple Environment Threat Evaluation of Resources (METEOR)™ Space Threats and Operational Risks to Mission (STORM)™ non-profit product AI co-pilot