Best AI tools for Debug Machine Learning Applications

20 - AI tool Sites

Langfuse

Langfuse is an AI tool for building and debugging LLM (large language model) applications, with SDKs that include the Langfuse TypeScript SDK v4. It provides tracing, prompt management, evaluation, and metrics to improve the performance of LLM applications. Langfuse is backed by a team of experts and integrates with various platforms and SDKs. The tool aims to simplify the development of complex LLM applications and improve overall efficiency.

Langtrace AI

Langtrace AI is an open-source observability tool powered by Scale3 Labs that helps monitor, evaluate, and improve LLM (Large Language Model) applications. It collects and analyzes traces and metrics to provide insights into the ML pipeline, ensuring security through SOC 2 Type II certification. Langtrace supports popular LLMs, frameworks, and vector databases, offering end-to-end observability and the ability to build and deploy AI applications with confidence.

Haystack

Haystack is a production-ready open-source AI framework designed to facilitate building AI applications. It offers a flexible components and pipelines architecture, allowing users to customize and build applications according to their specific requirements. With partnerships with leading LLM providers and AI tools, Haystack provides freedom of choice for users. The framework is built for production, with fully serializable pipelines, logging, monitoring integrations, and deployment guides for full-scale deployments on various platforms. Users can build Haystack apps faster using deepset Studio, a platform for drag-and-drop construction of pipelines, testing, debugging, and sharing prototypes.

LangChain

LangChain is a framework for developing applications powered by large language models (LLMs). It simplifies every stage of the LLM application lifecycle, including development, productionization, and deployment. LangChain consists of open-source libraries such as langchain-core, langchain-community, and partner packages. It also includes LangGraph for building stateful agents and LangSmith for debugging and monitoring LLM applications.

Amazon Bedrock

Amazon Bedrock is a fully managed AWS service for building generative AI applications. It provides access to foundation models from Amazon and third-party providers through a single API, and because the service is serverless, developers do not manage the underlying infrastructure and can focus on their applications. Bedrock also offers capabilities for customizing models with your own data, building agents, and applying guardrails.

Galileo AI

Galileo AI is a platform that offers automated evaluations for AI applications so that teams can ship reliably and with confidence. It claims to eliminate 80% of evaluation time by replacing manual reviews with high-accuracy metrics, enabling rapid iteration, real-time protection, and end-to-end visibility into agent completions. Galileo also allows developers to take control of AI complexity, de-risk AI in production, and deploy AI applications flexibly across different environments. The platform is trusted by enterprises and loved by developers for its accuracy, low latency, and ability to run on L4 GPUs.

Langtail

Langtail is a platform that helps developers build, test, and deploy AI-powered applications. It provides a suite of tools to help developers debug prompts, run tests, and monitor the performance of their AI models. Langtail also offers a community forum where developers can share tips and tricks, and get help from other users.

Portkey

Portkey is a control panel for production AI applications that offers an AI Gateway, Prompts, Guardrails, and Observability Suite. It enables teams to ship reliable, cost-efficient, and fast apps by providing tools for prompt engineering, enforcing reliable LLM behavior, integrating with major agent frameworks, and building AI agents with access to real-world tools. Portkey also offers seamless AI integrations for smarter decisions, with features like managed hosting, smart caching, and edge compute layers to optimize app performance.

Aim

Aim is an open-source, self-hosted AI metadata tracking tool designed to handle hundreds of thousands of tracked metadata sequences. The two best-known applications of AI metadata tracking are experiment tracking and prompt engineering. Aim provides a performant and beautiful UI for exploring and comparing training runs and prompt sessions.

Agenta.ai

Agenta.ai is a platform that provides prompt management, evaluation, and observability for LLM (large language model) applications. It aims to address the challenges AI development teams face in managing prompts, collaborating effectively, and ensuring reliable product outcomes. By centralizing prompts, evaluations, and traces, Agenta.ai helps teams streamline their workflows and follow best practices in LLMOps. The platform offers a unified playground for prompt comparison, automated evaluation, human evaluation integration, observability tools for debugging AI systems, and collaborative workflows for PMs, experts, and developers.

Testsigma

Testsigma is a cloud-based test automation platform that enables teams to create, execute, and maintain automated tests for web, mobile, and API applications. It offers a range of features including natural language processing (NLP)-based scripting, record-and-playback capabilities, data-driven testing, and AI-driven test maintenance. Testsigma integrates with popular CI/CD tools and provides a marketplace for add-ons and extensions. It is designed to simplify and accelerate the test automation process, making it accessible to testers of all skill levels.

Plumb

Plumb is a no-code, node-based builder that empowers product, design, and engineering teams to create AI features together. It enables users to build, test, and deploy AI features with confidence, fostering collaboration across different disciplines. With Plumb, teams can ship prototypes directly to production, ensuring that the best prompts from the playground are the exact versions that go to production. It goes beyond automation, allowing users to build complex multi-tenant pipelines, transform data, and leverage validated JSON schema to create reliable, high-quality AI features that deliver real value to users. Plumb also makes it easy to compare prompt and model performance, enabling users to spot degradations, debug them, and ship fixes quickly. It is designed for SaaS teams, helping ambitious product teams collaborate to deliver state-of-the-art AI-powered experiences to their users at scale.

Code Snippets AI

Code Snippets AI is an AI-powered code snippets library for teams. It helps developers master their codebase with contextually-rich AI chats, integrated with a secure code snippets library. Developers can build new features, fix bugs, add comments, and understand their codebase with the help of Code Snippets AI. The tool is trusted by the best development teams and helps developers code smarter than ever. With Code Snippets AI, developers can leverage the power of a codebase aware assistant, helping them write clean, performance optimized code. They can also create documentation, refactor, debug and generate code with full codebase context. This helps developers spend more time creating code and less time debugging errors.

Code Companion AI

Code Companion AI is a desktop application powered by OpenAI's ChatGPT, designed to help with a wide range of coding tasks. Its chatbot interface can execute shell commands, generate code, handle database queries, and review your existing code. Tasks are as simple as sending a message: you could ask it to create a .gitignore file or deploy an app on AWS, and CodeCompanion.AI does it for you. Download CodeCompanion.AI from the website to use all features across various programming languages and platforms.

Microsoft Copilot

Microsoft Copilot is an AI-powered coding assistant that helps developers write better code, faster. It provides real-time suggestions and code completions, and can even generate entire functions and classes. Copilot is available as a Visual Studio Code extension and as a standalone application.

Interview Solver

Interview Solver is a desktop application that acts as your copilot during coding interviews, providing instant solutions to LeetCode problems and system design questions. It features screengrabbing capabilities, one-shot solutions, query selected text functionality, global hotkeys, and syntax highlighting for all major languages. Interview Solver is designed to give you an AI advantage during live interviews, helping you land your dream job.

Warp

Warp is a terminal reimagined with AI and collaborative tools for better productivity. It is built with Rust for speed and has an intuitive interface. Warp includes features such as modern editing, command generation, reusable workflows, and Warp Drive. Warp AI allows users to ask questions about programming and get answers, recall commands, and debug errors. Warp Drive helps users organize hard-to-remember commands and share them with their team. Warp is a private and secure application that is trusted by hundreds of thousands of professional developers.

GPTAnywhere

GPTAnywhere is a powerful AI-powered tool that allows you to access the latest GPT models and use them to generate text, translate languages, write different kinds of creative content, debug code, and more. It is available as a desktop application for both macOS and Windows.

Coddy

Coddy is an AI-powered coding assistant that helps developers write better code faster. It provides real-time feedback, code completion, and error detection, making it the perfect tool for both beginners and experienced developers. Coddy also integrates with popular development tools like Visual Studio Code and GitHub, making it easy to use in your existing workflow.

SourceAI

SourceAI is an AI-powered code generator that allows users to generate code in any programming language. It is easy to use, even for non-developers, and has a clear and intuitive interface. SourceAI is powered by GPT-3 and Codex, the most advanced AI technology available. It can be used to generate code for a variety of tasks, including calculating the factorial of a number, finding the roots of a polynomial, and translating text from one language to another.

2 - Open Source AI Tools

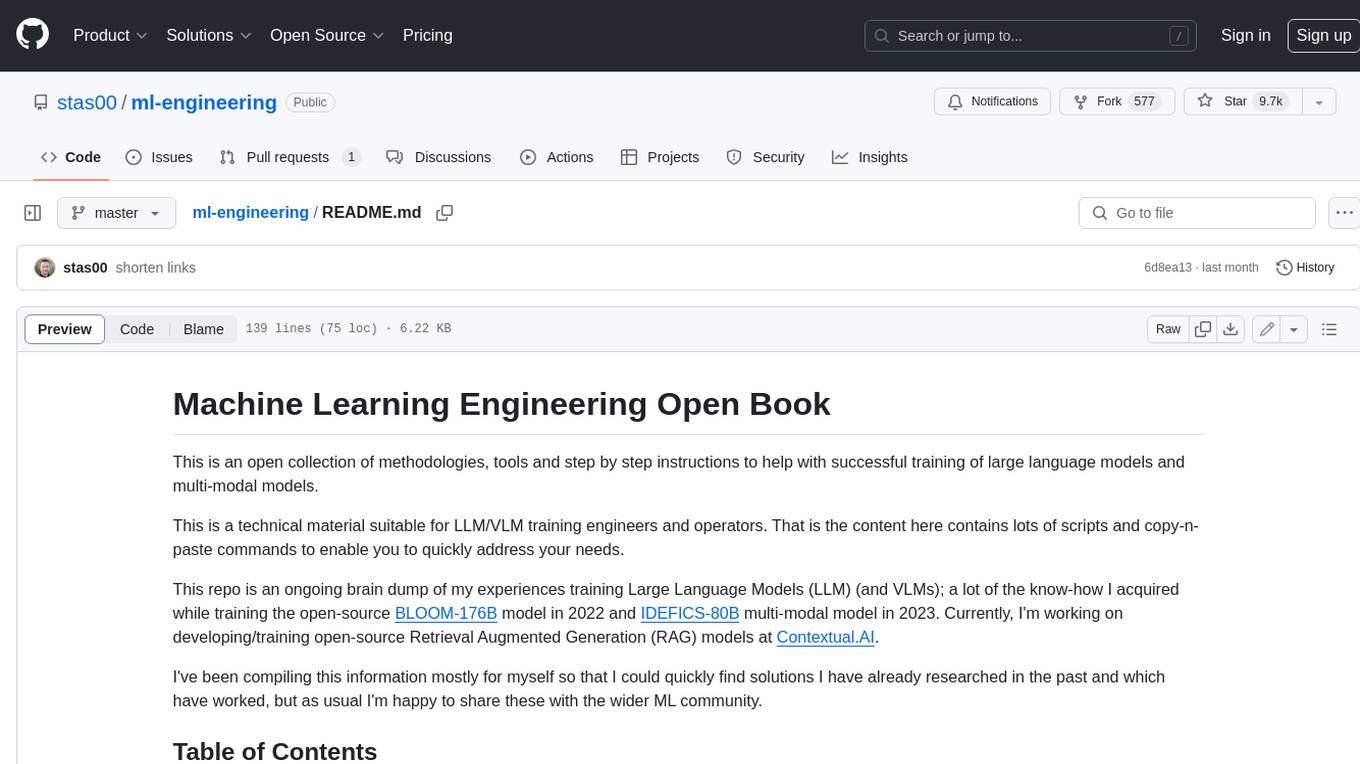

ml-engineering

This repository provides a comprehensive collection of methodologies, tools, and step-by-step instructions for successful training of large language models (LLMs) and multi-modal models. It is a technical resource suitable for LLM/VLM training engineers and operators, containing numerous scripts and copy-n-paste commands to facilitate quick problem-solving. The repository is an ongoing compilation of the author's experiences training BLOOM-176B and IDEFICS-80B models, and currently focuses on the development and training of Retrieval Augmented Generation (RAG) models at Contextual.AI. The content is organized into six parts: Insights, Hardware, Orchestration, Training, Development, and Miscellaneous. It includes key comparison tables for high-end accelerators and networks, as well as shortcuts to frequently needed tools and guides. The repository is open to contributions and discussions, and is licensed under Attribution-ShareAlike 4.0 International.

wandb

Weights & Biases (W&B) is a platform that helps users build better machine learning models faster by tracking and visualizing all components of the machine learning pipeline, from datasets to production models. It offers tools for tracking, debugging, evaluating, and monitoring machine learning applications. W&B provides integrations with popular frameworks like PyTorch, TensorFlow/Keras, Hugging Face Transformers, PyTorch Lightning, XGBoost, and scikit-learn. Users can log metrics, visualize performance, and compare experiments using W&B. The platform can be hosted in the cloud or on private infrastructure, which suits a range of deployment needs.

20 - OpenAI GPTs

Software development front-end GPT - Senior AI

Solve problems in front-end application development - AI 100% PRO - 500+ Guides trainer

Gary Marcus AI Critic Simulator

Humorous AI critic known for skepticism, contradictory arguments, and combining animal-related and machine-learning-related terms.

PyRefactor

Refactor Python code. Python expert with proficiency in data science, machine learning (including LLM apps), and both OOP and functional programming.

GoGPT

Custom GPT to help learning, debugging, and development in Go. Follows good practices, provides examples, pros/cons, and also pitfalls.

Code Tutor

A programming coach and mentor that adapts to your learning style and progress.

Matlab Tutor

Best MATLAB assistant. MATLAB TUTOR is designed to enhance your MATLAB learning experience by offering expert guidance on code, best practices, and programming insights tailored to your skill level.

Python Mentor

AI guide for Python certification PCEP and PCAP with project-based, exam-focused learning.

Instructor GCP ML

Trainer for the GCP ML Engineer certification, with detailed answers and explanations.