Best AI tools for Computational Biologists


20 - AI Tool Sites

CCDS

CCDS (Center for Computational & Data Sciences) is a research center at Independent University, Bangladesh, dedicated to artificial intelligence, data science, and computational science. The center has wings focusing on AI, computational biology, physics, data science, human-computer interaction, and industry partnerships. CCDS uses computation to understand nature and society, uncover hidden stories in data, and tackle complex challenges. The center collaborates with institutions such as CERN and the Dunlap Institute for Astronomy and Astrophysics.

Owkin

Owkin is a full-stack AI biotech company that combines human expertise with artificial intelligence to deliver better drugs and diagnostics at scale. Using AI to understand complex biology, Owkin identifies new treatments, de-risks and accelerates clinical trials, and builds diagnostic tools that shorten the time it takes for advances to reach patients.

Cradle

Cradle is a protein engineering platform that uses machine learning to design improved protein sequences. It allows users to import assay data, generate new sequences, test them in the lab, and import the results to improve the model. Cradle can be used to optimize multiple properties of a protein simultaneously, and it has been used by leading biotech teams to accelerate new and ongoing projects.

Cerebras

Cerebras is an AI computing company that offers AI supercomputers, processors, and cloud services, along with applications for a range of industries. It provides high-performance computing solutions, including large language models, for sectors such as health, energy, government, scientific computing, and financial services. Cerebras also offers AI model services, providing state-of-the-art models and training services for tasks such as multilingual chatbots and DNA sequence prediction. In addition, the Cerebras Model Zoo is an open-source repository of AI models for developers and researchers.

Genesis Therapeutics

Genesis Therapeutics is an AI drug discovery platform that combines machine learning models with computational simulations and experimental data to identify novel drug candidates and optimize their properties. It is designed to help researchers and pharmaceutical companies predict drug-target interactions, optimize molecular structures, and prioritize lead compounds for further investigation, with the aim of streamlining the drug development pipeline and bringing new therapies to market faster.

VantAI

VantAI is a company focused on generative-AI-enabled drug discovery. Its mission is to open a new chapter in medicine by making protein interactions programmable. Its integrated discovery platform is designed to realize the full potential of proximity modulators as a therapeutic modality, and the company collaborates with industry leaders on the future of therapeutic design. VantAI has launched Neo-1, which it describes as the first AI model to rewire molecular interactions by unifying structure prediction and generation.

Allchemy

Allchemy is a resource-aware AI platform for drug discovery. It combines state-of-the-art computational synthesis with AI algorithms that predict molecular properties. Within minutes, Allchemy can generate thousands of synthesizable lead candidates that meet user-defined profiles of drug-likeness, affinity toward specific proteins, toxicity, and a range of other physicochemical measures. Allchemy covers the entire resource-to-drug design process and has been used in academic, corporate, and classified environments worldwide to:

- Design synthesizable leads targeting specific proteins
- Evolve scaffolds similar to desired drugs
- Design “circular” drug syntheses from renewable materials
- Interface with and instruct automated synthesis platforms, and optimize pilot-scale processes
- Operate “iterative synthesis” schemes
- Predict side reactions and create forensic “synthetic signatures” of hazardous or toxic molecules
- Design synthetic degradation and recovery cycles for various types of feedstocks and functional target molecules

Institute for Protein Design

The Institute for Protein Design is a research institute at the University of Washington that uses computational design to create new proteins that solve modern challenges in medicine, technology, and sustainability. Its research focuses on developing new protein therapeutics, vaccines, drug delivery systems, biological devices, self-assembling nanomaterials, and bioactive peptides. The institute is also strongly committed to responsible AI development and has developed a set of principles to guide its use of AI in research.

Iambic Therapeutics

Iambic Therapeutics is an AI-driven drug discovery company that tackles some of the most challenging design problems in drug discovery to address unmet patient needs. Its physics-based AI algorithms drive a high-throughput experimental platform that turns new molecular designs into new biological insights each week. Iambic's platform optimizes target product profiles, exploring multiple profiles in parallel so that molecules are designed to solve the right problems in disease biology. It also optimizes drug candidates, exploring chemical space deeply to reveal novel mechanisms of action and deliver diverse, high-quality leads.

Green Cubes

Green Cubes is an AI application that provides precise measures of the volume, complexity, and biodiversity of terrestrial areas at scale, for digital reality and sponsorship use cases. It builds a digital twin of nature and supports Measurement, Reporting, and Verification (MRV) through data collection, AI computation, and 3D visualization, offering transparency and trust. Green Cubes enables corporations to sponsor nature-impact projects with confidence and transparency, contributing to the preservation of biodiversity.

Artificial Intelligence: Foundations of Computational Agents

Artificial Intelligence: Foundations of Computational Agents, 3rd edition, by David L. Poole and Alan K. Mackworth (Cambridge University Press, 2023), is a book about the science of artificial intelligence (AI). It presents AI as the study of the design of intelligent computational agents. Structured as a textbook aimed at undergraduate and graduate students, it is also accessible to a wide audience of professionals and researchers. Reflecting the emergence of AI as a serious science and engineering discipline over recent decades, the book offers a coherent synthesis of the foundations of the field as it is today, presented as an integrated science in terms of a multidimensional design space that has been partially explored. As with any science worth its salt, AI has a coherent, formal theory and a rambunctious experimental wing; the book balances theory and experiment, shows how to link them closely, and develops the science of AI together with its engineering applications.

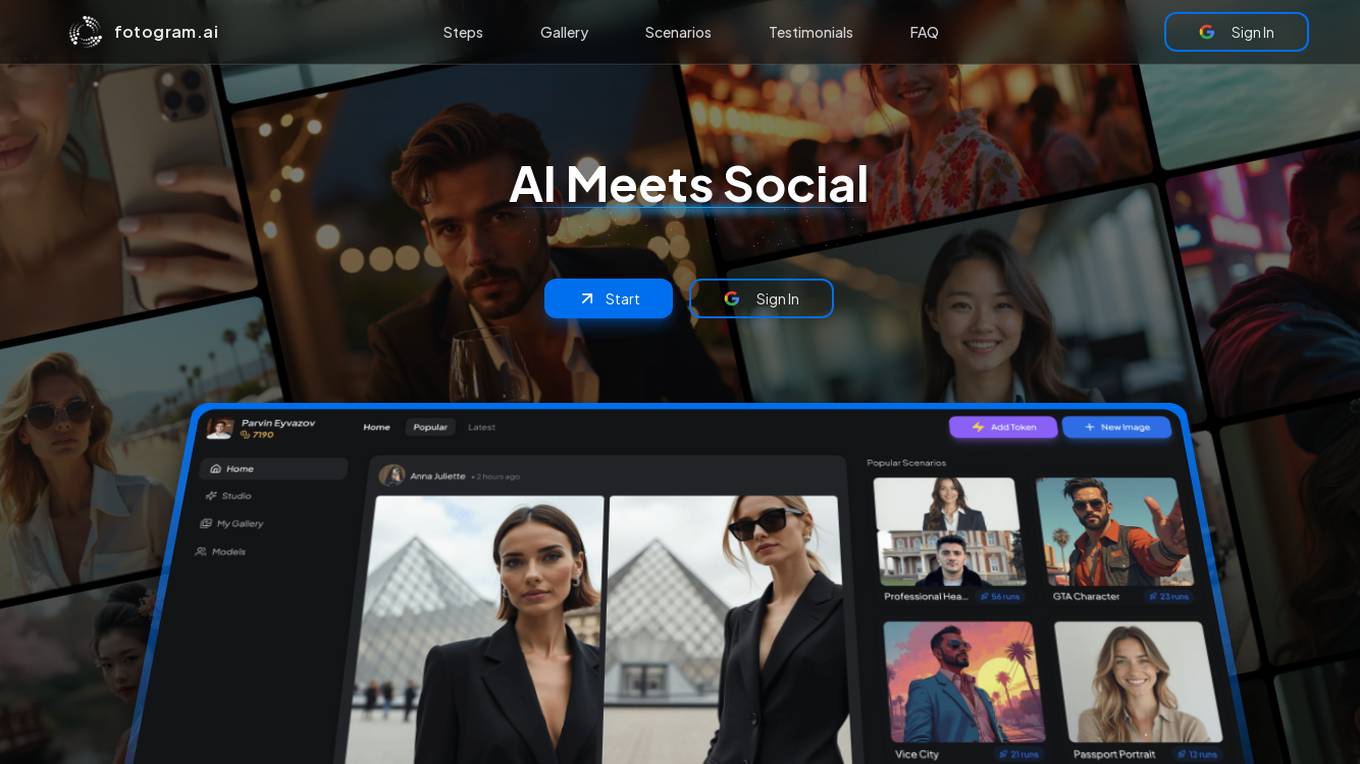

Fotogram.ai

Fotogram.ai is an AI-powered image editing tool for enhancing and transforming photos. Users can apply filters, adjust colors, remove backgrounds, add effects, and retouch images in a few clicks, and the tool's AI algorithms aim to bring professional-level editing to users of all skill levels. It targets both photographers looking to streamline their workflow and social media users who want to create striking visuals.

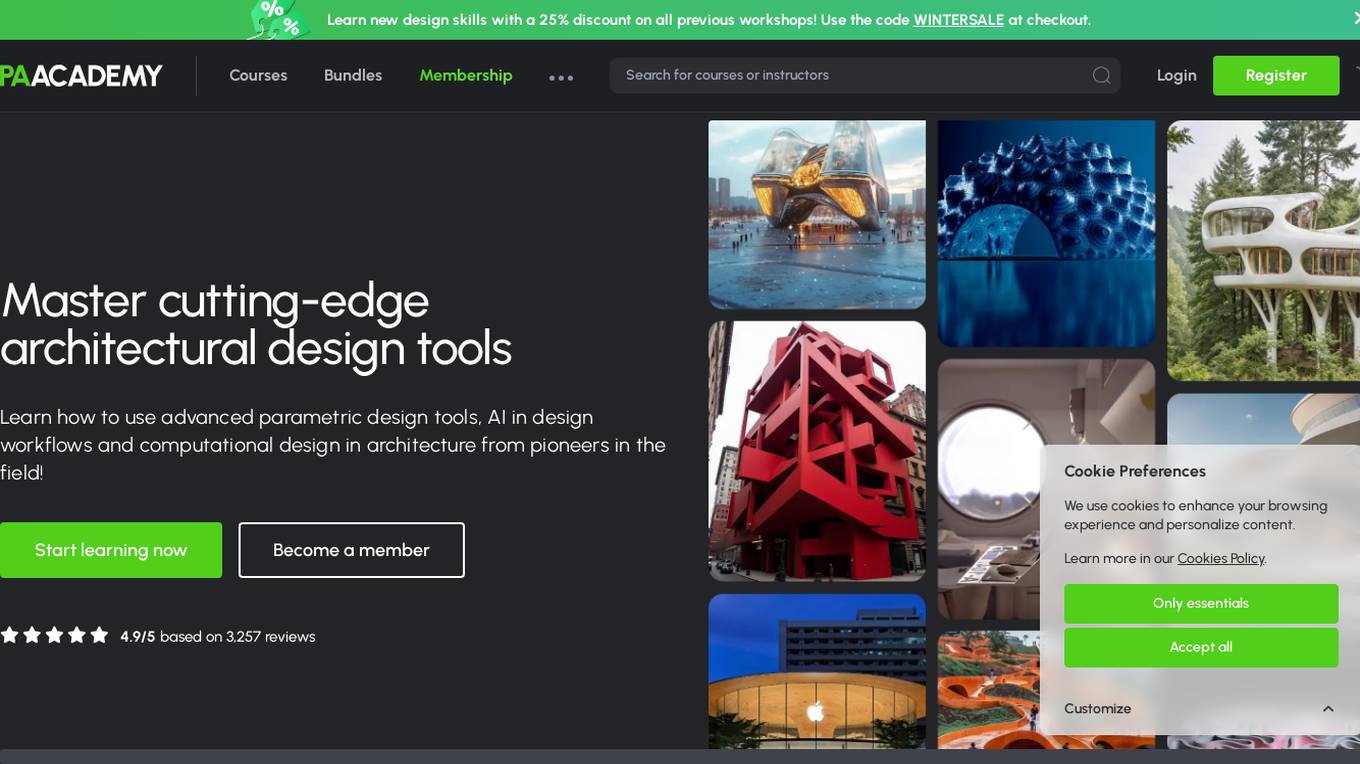

PAACADEMY

PAACADEMY is an educational platform focused on architecture and design, offering workshops and courses on advanced design tools, computational design, and AI integration in architecture. The platform provides insights, tutorials, and live events to inspire and educate aspiring designers. PAACADEMY features renowned instructors and covers topics such as parametric design, 3D printing, and AI-driven architectural practices.

EvolveLab

EvolveLab is a digital solutions provider specializing in BIM management and app development for the AEC (Architecture, Engineering, and Construction) industry. Its apps and services help architects, engineers, and contractors streamline their workflows, and its focus on data-driven design and AI technology aims to boost productivity and help users turn concepts into reality.

Proscia

Proscia is a leading provider of digital pathology solutions for the modern laboratory. Its flagship product, Concentriq, is an enterprise pathology platform that enables anatomic pathology laboratories to achieve 100% digitization and deliver faster, more precise results. Proscia also offers a range of AI applications that can be used to automate tasks, improve diagnostic accuracy, and accelerate research. The company's mission is to perfect cancer diagnosis with intelligent software that changes the way the world practices pathology.

AIOZ Network

AIOZ Network is a decentralized platform focused on Web3, AI, storage, and streaming services. It offers decentralized AI computation, fast and reliable storage, and video streaming for dApps within the network. AIOZ aims to enable a fast, secure, and decentralized future by providing one-click integration of dApps on the AIOZ blockchain, supporting popular smart contract languages, and drawing on spare computing resources from a global community of nodes.

Live Portrait Ai Generator

Live Portrait Ai Generator is an AI application that transforms static portrait images into lifelike videos. Users can animate portraits, fine-tune the animations, apply artistic styles, and add text, music, and other elements. The tool uses stitching and retargeting techniques to refine its results, and it improves generation quality and generalization through a mixed image-video training strategy and upgrades to its network architecture.

Altera

Altera is an applied research company focused on building digital humans: machines with fundamental human qualities. Led by Dr. Robert Yang, the team includes computational neuroscientists and computer science and physics experts from prestigious institutions. Its mission is to create digital human beings that can live, care, and grow with us. The company's early research prototypes were built in games, offering a glimpse of what these digital humans could become.

XtalPi

XtalPi is a technology company that uses artificial intelligence (AI) and robotics to drive innovation in life sciences and new materials. Founded in 2015 at the Massachusetts Institute of Technology (MIT), the company builds on technologies and capabilities such as quantum physics, AI, cloud computing, and large-scale robotic experimentation clusters to provide technologies, services, and products for industries including biomedicine, chemicals, new energy, and new materials.

Variational AI

Variational AI is a company that uses generative AI to discover novel drug-like small molecules with optimized properties for defined targets. Its platform, Enki™, which the company describes as the first commercially accessible foundation model for small molecules, is designed to make generating novel molecular structures easy without requiring users to supply their own data. Users define their target product profile (TPP), and Enki generates candidate molecules to match it. Enki is an ensemble of generative algorithms trained on decades' worth of experimental data. The company was founded in September 2019 and is based in Vancouver, BC, Canada.

8 - Open Source Tools

ersilia

The Ersilia Model Hub is a unified platform of pre-trained AI/ML models dedicated to infectious and neglected disease research. It offers an open-source, low-code solution that provides seamless access to AI/ML models for drug discovery. Models housed in the hub come from two sources: published models from literature (with due third-party acknowledgment) and custom models developed by the Ersilia team or contributors.

sciml.ai

SciML.ai is an open-source software organization dedicated to unifying packages for scientific machine learning. It develops modular scientific simulation software, including differential equation solvers, inverse problem methods, and automated model discovery. The organization aims to provide a diverse set of tools with a common interface, creating a modular, easily extensible, and high-performance ecosystem for scientific simulation. The website showcases the SciML organization's packages and shares news from the ecosystem. Contributions via pull requests are encouraged.

aitom

AITom is an open-source platform for AI-driven cellular electron cryo-tomography (cryo-ET) analysis. It is designed to process large amounts of cryo-ET data and to reconstruct, detect, classify, recover, and spatially model different cellular components using state-of-the-art machine learning approaches. The platform aims to automate the discovery of cellular structures and provide new insights for molecular biology and medical applications.

PINNACLE

PINNACLE is a flexible geometric deep learning approach that trains on contextualized protein interaction networks to generate context-aware protein representations. It provides protein representations split across various cell-type contexts from different tissues and organs. The tool can be fine-tuned to study the genomic effects of drugs and nominate promising protein targets and cell-type contexts for further investigation. PINNACLE exemplifies the paradigm of incorporating context-specific effects for studying biological systems, especially the impact of disease and therapeutics.

ProLLM

ProLLM is a framework that leverages Large Language Models to interpret and analyze protein sequences and interactions through natural language processing. It introduces the Protein Chain of Thought (ProCoT) method to transform complex protein interaction data into intuitive prompts, enhancing predictive accuracy by incorporating protein-specific embeddings and fine-tuning on domain-specific datasets.

bionemo-framework

NVIDIA BioNeMo Framework is a collection of programming tools, libraries, and models for computational drug discovery. It accelerates building and adapting biomolecular AI models by providing domain-specific, optimized models and tooling for GPU-based computational resources. The framework offers comprehensive documentation and support for both community and enterprise users.

New-AI-Drug-Discovery

New AI Drug Discovery is a repository focused on applications of large language models (LLMs) in drug discovery. It provides resources, tools, and examples for applying LLMs in the pharmaceutical industry, with the aim of showing how AI-driven approaches can accelerate drug discovery, improve target identification, and optimize molecular design. It also offers insights into recent advances in computational biology and cheminformatics.

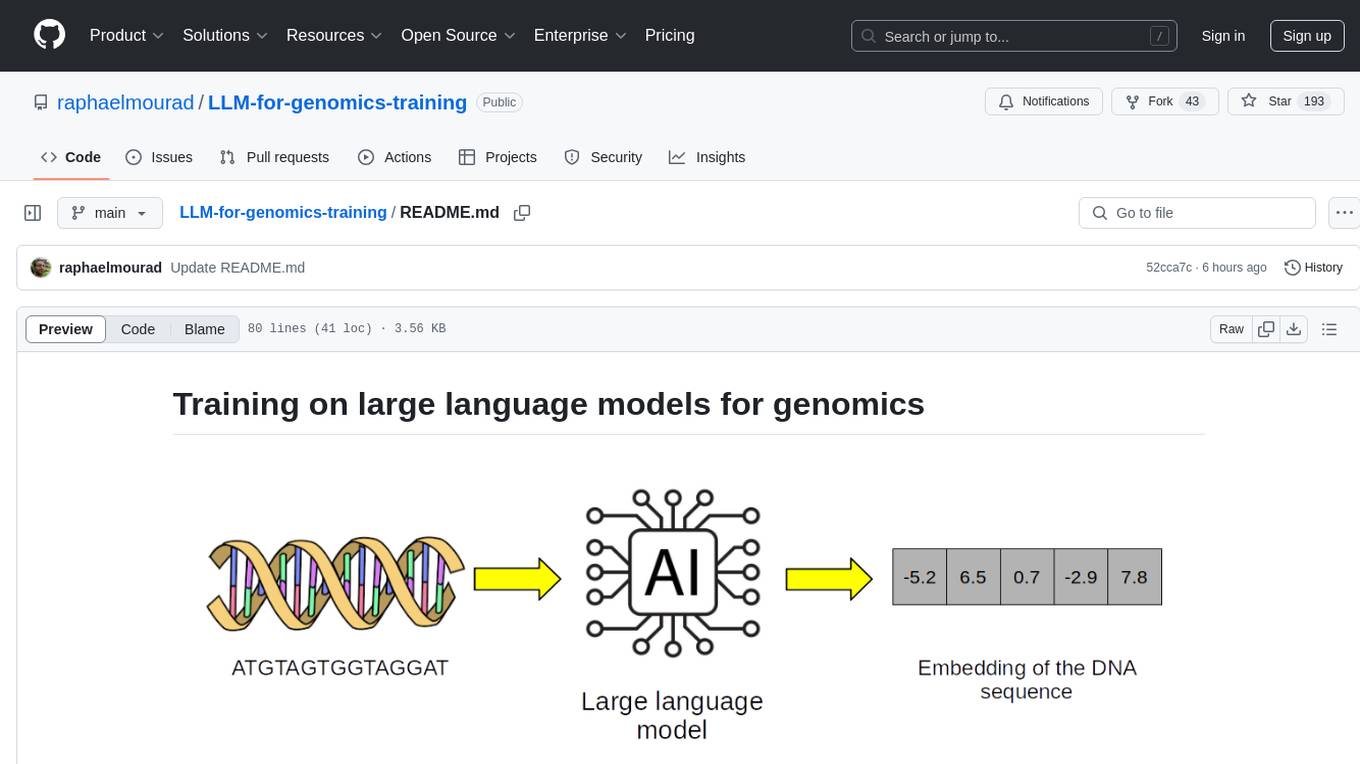

LLM-for-genomics-training

This repository provides training materials on large language models (LLMs) for genomics, including lecture notes and lab classes covering pretraining, fine-tuning, zero-shot prediction of mutation effects, synthetic DNA sequence generation, and DNA sequence optimization.

16 - OpenAI GPTs

StephenBot

A digital homage to honor Stephen Wolfram's impact on computational science and technology and to celebrate his dedication to public education, powered by Stephen Wolfram's wealth of public presentations, writings, and live streams.

Formula Generator

Expert in generating and explaining mathematical, chemical, and computational formulas.

ChatPNP

Blends academic insights and accessible explanations of P vs NP, drawing on Lance Fortnow's work.