AI tools for Jetstream

Related Tools:

JetStream

JetStream is a throughput and memory optimized engine for LLM inference on XLA devices, starting with TPUs (and GPUs in future -- PRs welcome). It is designed to provide high performance and scalability for large language models, enabling efficient inference on cloud-based TPUs. JetStream leverages XLA to optimize the execution of LLM models, resulting in faster and more efficient inference. Additionally, JetStream supports quantization techniques to further enhance performance and reduce memory consumption. By utilizing JetStream, developers can deploy and run LLM models on TPUs with ease, achieving optimal performance and cost-effectiveness.

JetStream

JetStream is a throughput and memory optimized engine for Large Language Model (LLM) inference on XLA devices, specifically TPUs. It provides reference engine implementations for Jax and Pytorch models, along with documentation for online inference, serving Gemma using TPUs on GKE, benchmarking, observability, profiling, and standalone local setup. Users can easily set up a local server, run tests, and test core modules. JetStream aims to enhance the performance of LLM inference on XLA devices.

opening-up-chatgpt.github.io

This repository provides a curated list of open-source projects that implement instruction-tuned large language models (LLMs) with reinforcement learning from human feedback (RLHF). The projects are evaluated in terms of their openness across a predefined set of criteria in the areas of Availability, Documentation, and Access. The goal of this repository is to promote transparency and accountability in the development and deployment of LLMs.

aimeos-laravel

Aimeos Laravel is a professional, full-featured, and ultra-fast Laravel ecommerce package that can be easily integrated into existing Laravel applications. It offers a wide range of features including multi-vendor, multi-channel, and multi-warehouse support, fast performance, support for various product types, subscriptions with recurring payments, multiple payment gateways, full RTL support, flexible pricing options, admin backend, REST and GraphQL APIs, modular structure, SEO optimization, multi-language support, AI-based text translation, mobile optimization, and high-quality source code. The package is highly configurable and extensible, making it suitable for e-commerce SaaS solutions, marketplaces, and online shops with millions of vendors.

ai-chat-android

AI Chat Android demonstrates Google's Generative AI on Android with Firebase Realtime Database. It showcases Gemini API integration, Jetpack Compose UI elements, Android architecture components with Hilt, Kotlin Coroutines for background tasks, and Firebase Realtime Database integration for real-time events. The project follows Google's official architecture guidance with a modularized structure for reusability, parallel building, and decentralized focusing.

gemini-android

Gemini-Android is a mobile application that allows users to track their expenses and manage their finances on the go. The app provides a user-friendly interface for adding and categorizing expenses, setting budgets, and generating reports to help users make informed financial decisions. With Gemini-Android, users can easily monitor their spending habits, identify areas for saving, and stay on top of their financial goals.

gemini-android

Gemini Android is a repository showcasing Google's Generative AI on Android using Stream Chat SDK for Compose. It demonstrates the Gemini API for Android, implements UI elements with Jetpack Compose, utilizes Android architecture components like Hilt and AppStartup, performs background tasks with Kotlin Coroutines, and integrates chat systems with Stream Chat Compose SDK for real-time event handling. The project also provides technical content, instructions on building the project, tech stack details, architecture overview, modularization strategies, and a contribution guideline. It follows Google's official architecture guidance and offers a real-world example of app architecture implementation.

ai-chat-protocol

The Microsoft AI Chat Protocol SDK is a library for easily building AI Chat interfaces from services that follow the AI Chat Protocol API Specification. By agreeing on a standard API contract, AI backend consumption and evaluation can be performed easily and consistently across different services. It allows developers to develop AI chat interfaces, consume and evaluate AI inference backends, and incorporate HTTP middleware for logging and authentication.

free-for-life

A massive list including a huge amount of products and services that are completely free! ⭐ Star on GitHub • 🤝 Contribute # Table of Contents * APIs, Data & ML * Artificial Intelligence * BaaS * Code Editors * Code Generation * DNS * Databases * Design & UI * Domains * Email * Font * For Students * Forms * Linux Distributions * Messaging & Streaming * PaaS * Payments & Billing * SSL

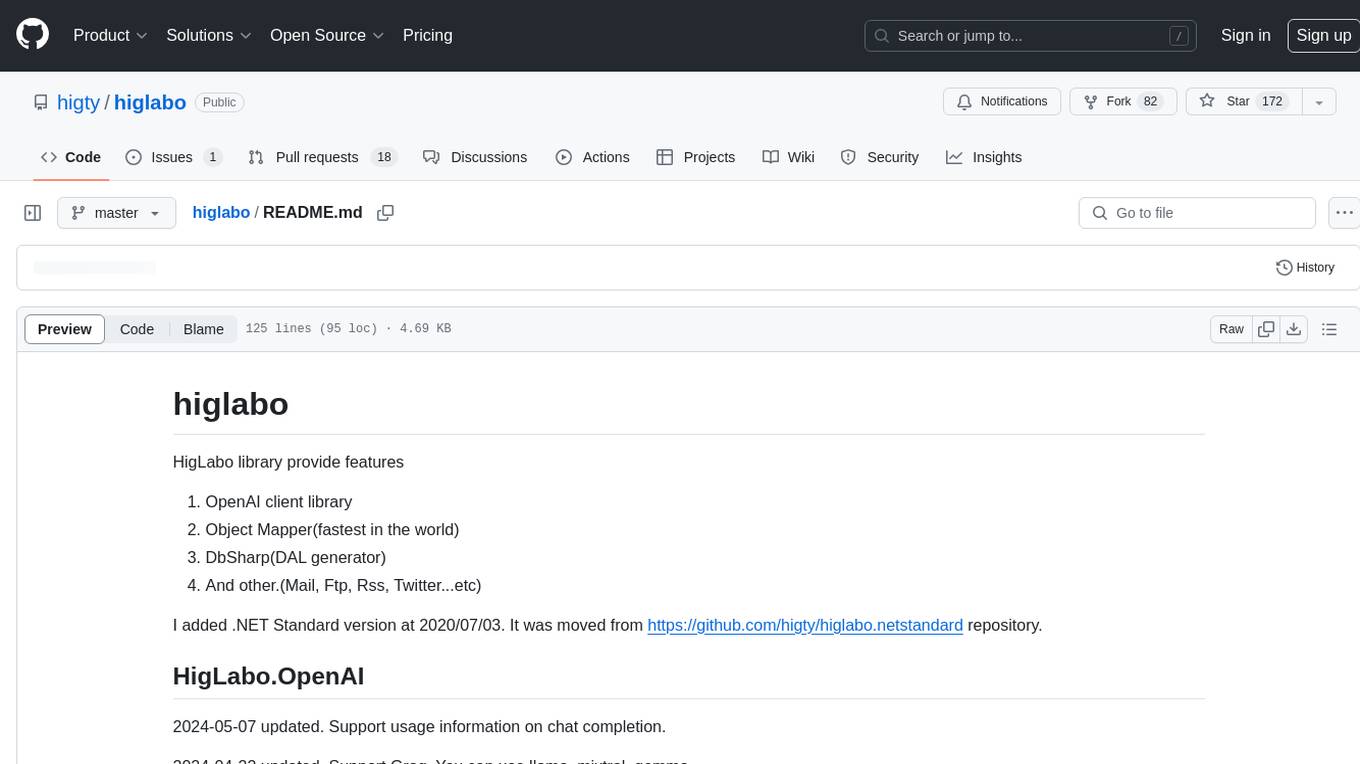

higlabo

HigLabo is a versatile C# library that provides various features such as an OpenAI client library, the fastest object mapper, a DAL generator, and support for functionalities like Mail, FTP, RSS, and Twitter. The library includes modules like HigLabo.OpenAI for chat completion and Groq support, HigLabo.Anthropic for Anthropic Claude AI, HigLabo.Mapper for object mapping, DbSharp for stored procedure calls, HigLabo.Mime for MIME parsing, HigLabo.Mail for SMTP, POP3, and IMAP functionalities, and other utility modules like HigLabo.Data, HigLabo.Converter, and HigLabo.Net.Slack. HigLabo is designed to be easy to use and highly customizable, offering performance optimizations for tasks like object mapping and database access.