Best AI tools for< Web Scraper >

Infographic

20 - AI tool Sites

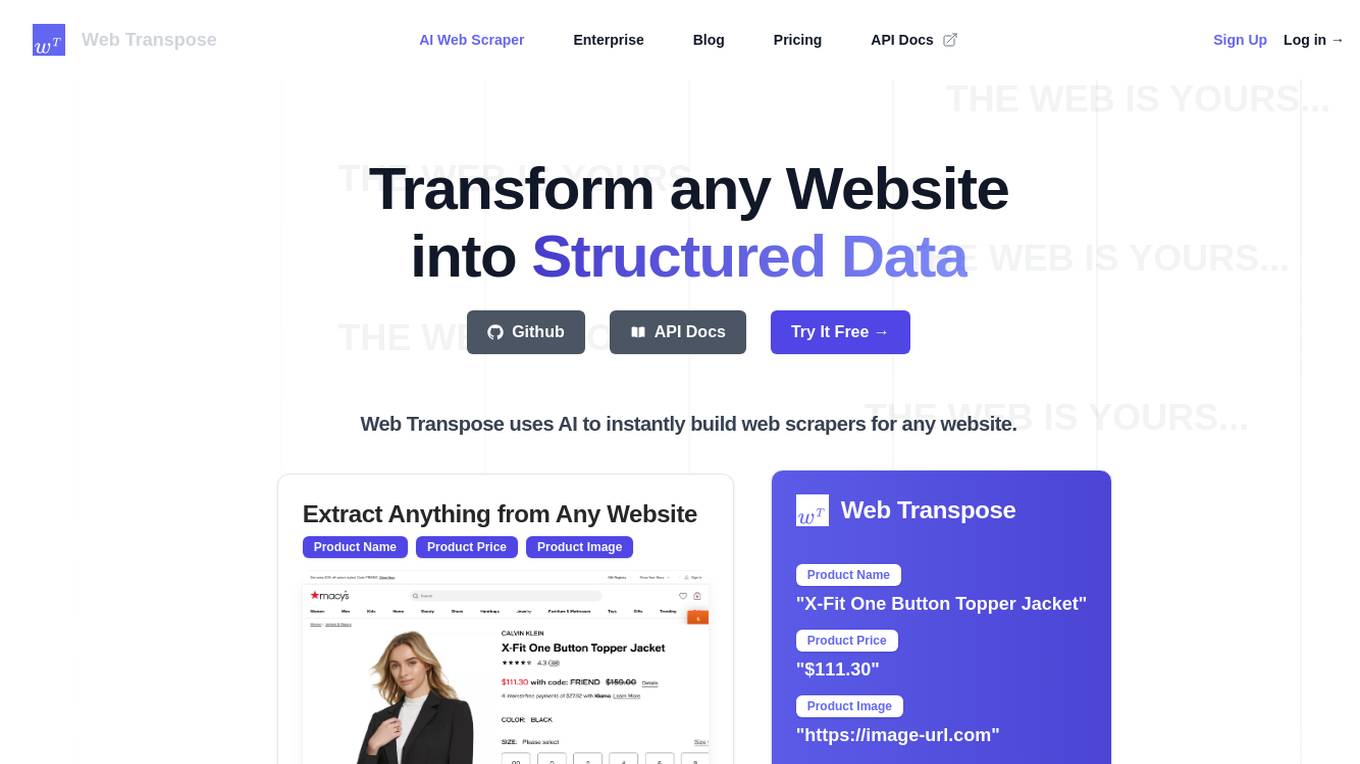

Web Transpose

Web Transpose is an AI-powered web scraping and web crawling API that allows users to transform any website into structured data. By utilizing artificial intelligence, Web Transpose can instantly build web scrapers for any website, enabling users to extract valuable information efficiently and accurately. The tool is designed for production use, offering low latency and effective proxy handling. Web Transpose learns the structure of the target website, reducing latency and preventing hallucinations commonly associated with traditional web scraping methods. Users can query any website like an API and build products quickly using the scraped data.

Apify

Apify is a full-stack web scraping and data extraction platform that offers a wide range of tools and services for developers to build, deploy, and publish web scrapers, AI agents, and automation tools. The platform provides pre-built web scraping tools, serverless programs, integrations with various apps and services, and AI agents equipped with Actors. Apify also offers solutions for building and monetizing MCP servers, as well as professional services for custom web scraping solutions. With a marketplace of over 6,000 Actors, Apify caters to a diverse range of use cases and industries, including generative AI, lead generation, market research, and sentiment analysis.

Octoparse

Octoparse is an AI web scraping tool that offers a no-coding solution for turning web pages into structured data with just a few clicks. It provides users with the ability to build reliable web scrapers without any coding knowledge, thanks to its intuitive workflow designer. With features like AI assistance, automation, and template libraries, Octoparse is a powerful tool for data extraction and analysis across various industries.

Simplescraper

Simplescraper is a web scraping tool that allows users to extract data from any website in seconds. It offers the ability to download data instantly, scrape at scale in the cloud, or create APIs without the need for coding. The tool is designed for developers and no-coders, making web scraping simple and efficient. Simplescraper AI Enhance provides a new way to pull insights from web data, allowing users to summarize, analyze, format, and understand extracted data using AI technology.

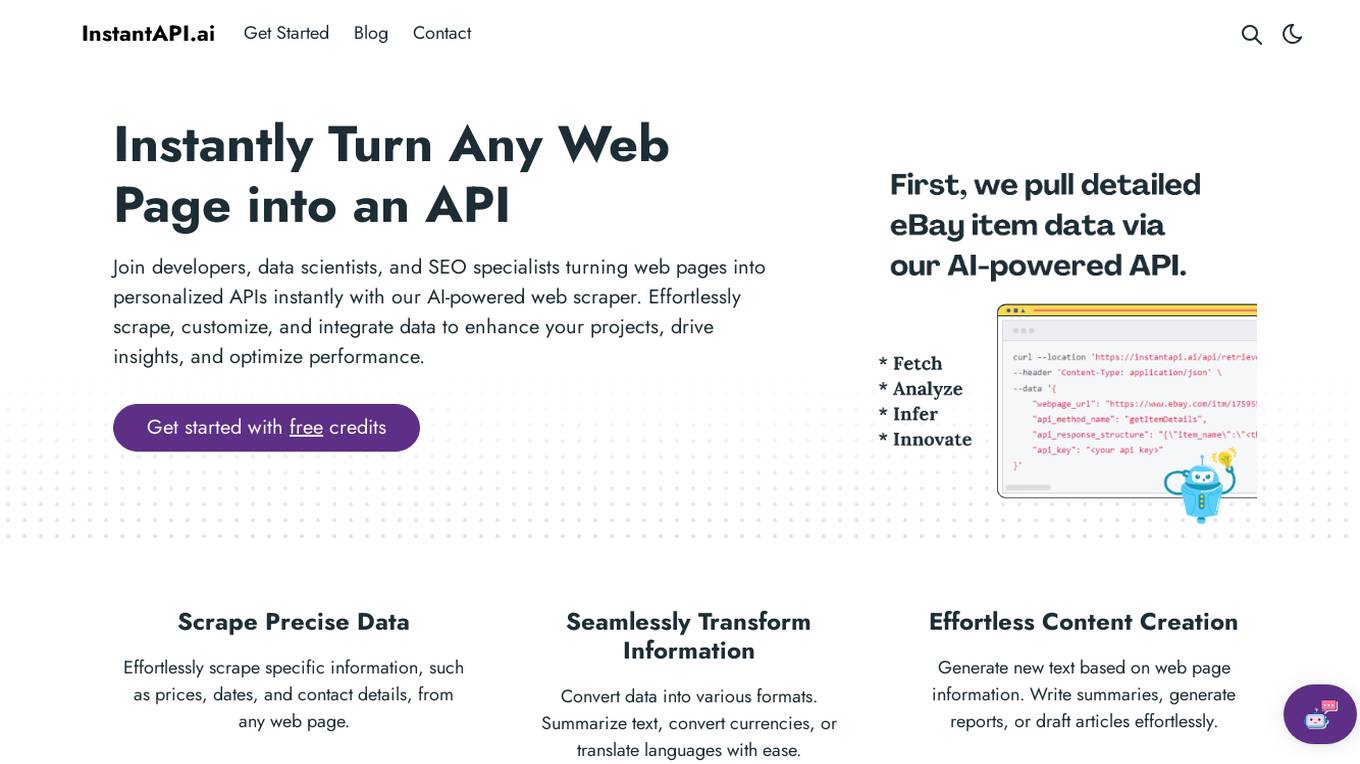

InstantAPI.ai

InstantAPI.ai is an AI-powered web scraping tool that allows developers, data scientists, and SEO specialists to instantly turn any web page into a personalized API. With the ability to effortlessly scrape, customize, and integrate data, users can enhance their projects, drive insights, and optimize performance. The tool offers features such as scraping precise data, transforming information into various formats, generating new content, providing advanced analysis, and extracting valuable insights from data. Users can tailor the output to meet specific needs and unleash creativity by using AI for unique purposes. InstantAPI.ai simplifies the process of web scraping and data manipulation, offering a seamless experience for users seeking to leverage AI technology for their projects.

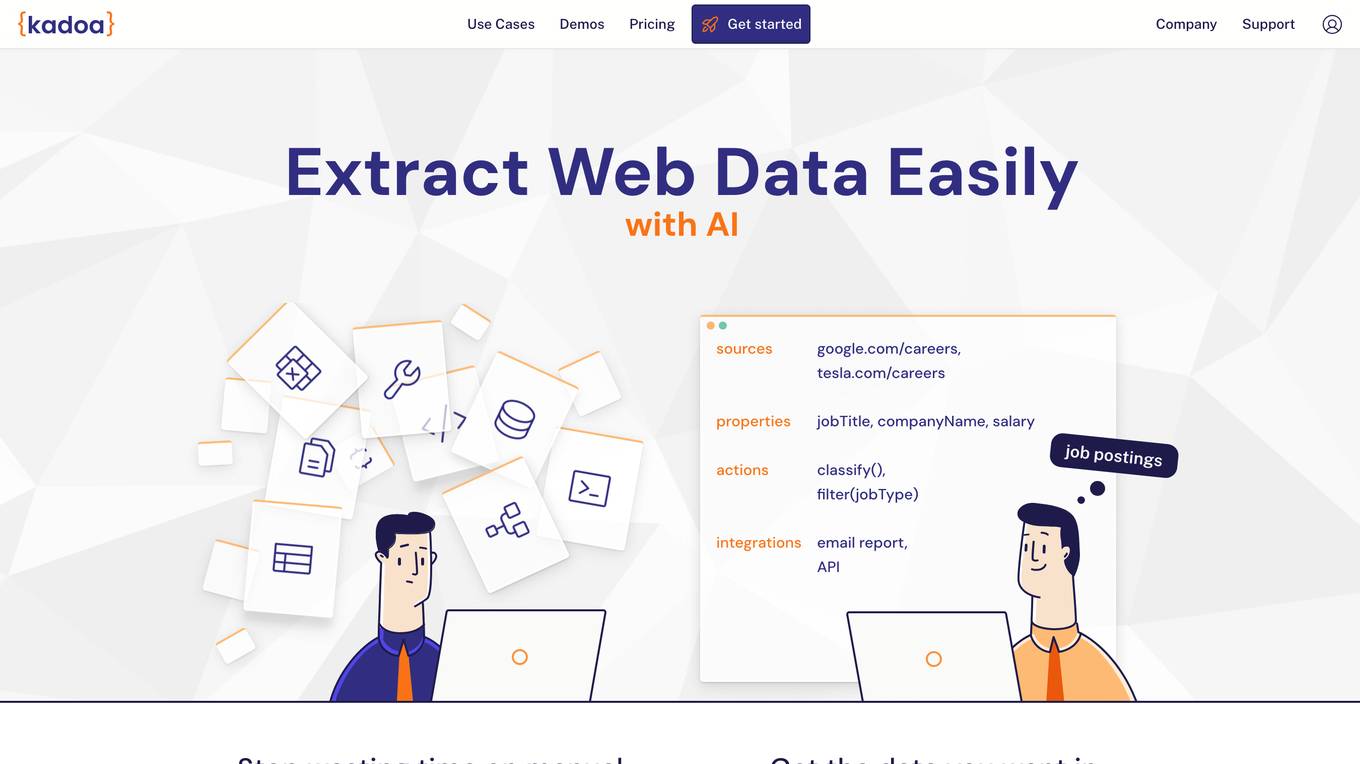

Kadoa

Kadoa is an AI web scraper tool that extracts unstructured web data at scale automatically, without the need for coding. It offers a fast and easy way to integrate web data into applications, providing high accuracy, scalability, and automation in data extraction and transformation. Kadoa is trusted by various industries for real-time monitoring, lead generation, media monitoring, and more, offering zero setup or maintenance effort and smart navigation capabilities.

Extracto.bot

Extracto.bot is an AI web scraping tool that automates the process of extracting data from websites. It is a no-configuration, intelligent web scraper that allows users to collect data from any site using Google Sheets and AI technology. The tool is designed to be simple, instant, and intelligent, enabling users to save time and effort in collecting and organizing data for various purposes.

Autotab

Autotab is an AI-powered digital robot that can automate repetitive tasks on any website or web application. It is designed to help businesses save time and money by automating tasks such as data entry, web scraping, and social media management. Autotab is easy to use and can be set up in minutes. It is also very affordable, with plans starting at just $1 per hour.

Lazy AI

Lazy AI is an AI tool that enables users to quickly build and modify web apps with prompts and deploy them to the cloud with just one click. Users can create various applications such as customer portals, API endpoints for AI text summarization, metrics dashboards, web scrapers, chatbots, and discord bots. The platform offers a wide range of template categories and tools for automation, data mining, AI agents, dashboards, reporting, and more. Users can also access reusable templates from the Lazy AI community to streamline their development process.

UseScraper

UseScraper is a web crawler and scraper API that allows users to extract data from websites for research, analysis, and AI applications. It offers features such as full browser rendering, markdown conversion, and automatic proxies to prevent rate limiting. UseScraper is designed to be fast, easy to use, and cost-effective, with plans starting at $0 per month.

Lazy AI

Lazy AI is a platform that enables users to build full stack web applications 10 times faster by utilizing AI technology. Users can create and modify web apps with prompts and deploy them to the cloud with just one click. The platform offers a variety of features including AI Component Builder, eCommerce store creation, Crypto Arbitrage Scraper, Text to Speech Converter, Lazy Image to Video generation, PDF Chatbot, and more. Lazy AI aims to streamline the app development process and empower users to leverage AI for various tasks.

FetchFox

FetchFox is an AI-powered web scraping tool that allows users to extract data from any website by providing a prompt in plain English. It runs as a Chrome Extension and can bypass anti-scraping measures on sites like LinkedIn and Facebook. FetchFox is designed to quickly gather data for tasks such as lead generation, research data assembly, and market segment analysis.

Jsonify

Jsonify is an AI tool that automates the process of exploring and understanding websites to find, filter, and extract structured data at scale. It uses AI-powered agents to navigate web content, replacing traditional data scrapers and providing data insights with speed and precision. Jsonify integrates with leading data analysis and business intelligence suites, allowing users to visualize and gain insights into their data easily. The tool offers a no-code dashboard for creating workflows and easily iterating on data tasks. Jsonify is trusted by companies worldwide for its ability to adapt to page changes, learn as it runs, and provide technical and non-technical integrations.

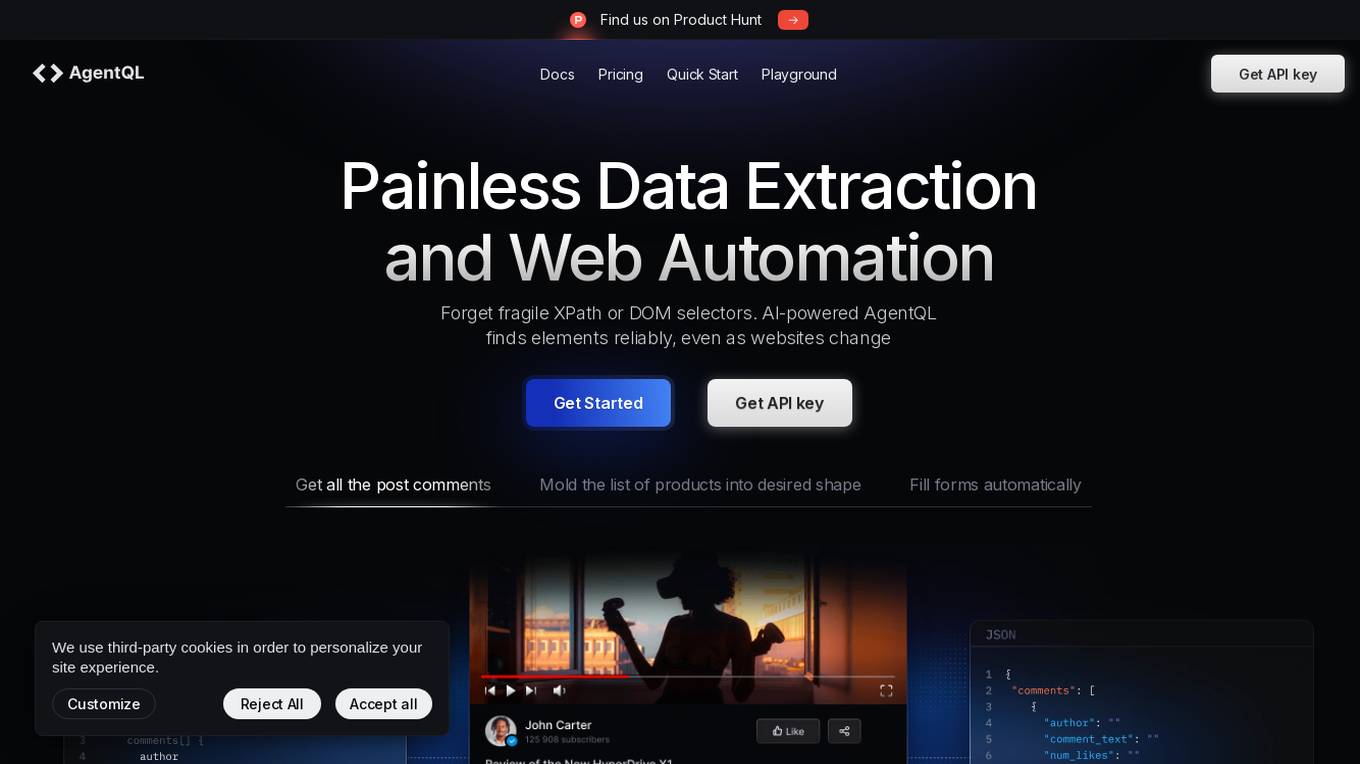

AgentQL

AgentQL is an AI-powered tool for painless data extraction and web automation. It eliminates the need for fragile XPath or DOM selectors by using semantic selectors and natural language descriptions to find web elements reliably. With controlled output and deterministic behavior, AgentQL allows users to shape data exactly as needed. The tool offers features such as extracting data, filling forms automatically, and streamlining testing processes. It is designed to be user-friendly and efficient for developers and data engineers.

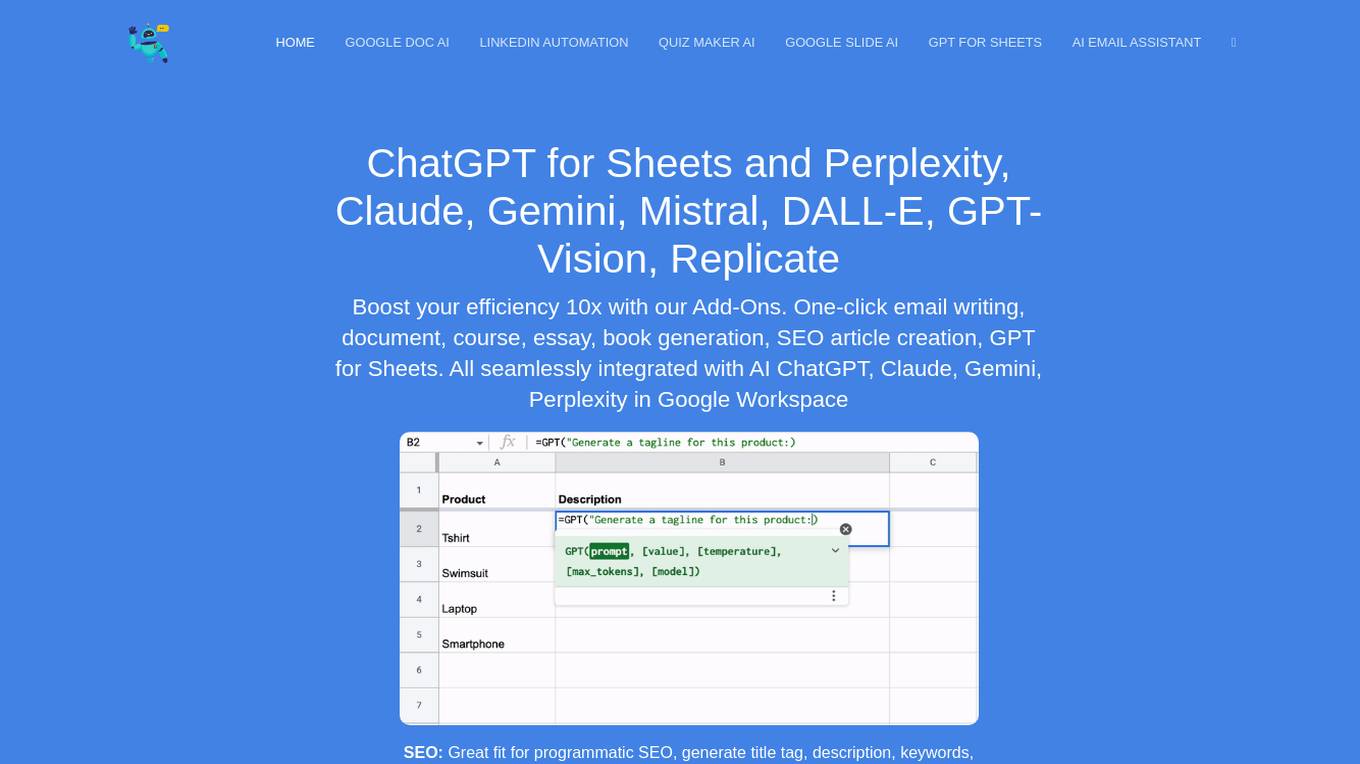

DocGPT.ai

DocGPT.ai is an AI-powered tool designed to enhance productivity and efficiency in various tasks such as email writing, document generation, content creation, SEO optimization, data enrichment, and more. It seamlessly integrates with Google Workspace applications to provide users with advanced AI capabilities for content generation and management. With support for multiple AI models and a wide range of features, DocGPT.ai is a comprehensive solution for individuals and businesses looking to streamline their workflows and improve their content creation processes.

OdiaGenAI

OdiaGenAI is a collaborative initiative focused on conducting research on Generative AI and Large Language Models (LLM) for the Odia Language. The project aims to leverage AI technology to develop Generative AI and LLM-based solutions for the overall development of Odisha and the Odia language through collaboration among Odia technologists. The initiative offers pre-trained models, codes, and datasets for non-commercial and research purposes, with a focus on building language models for Indic languages like Odia and Bengali.

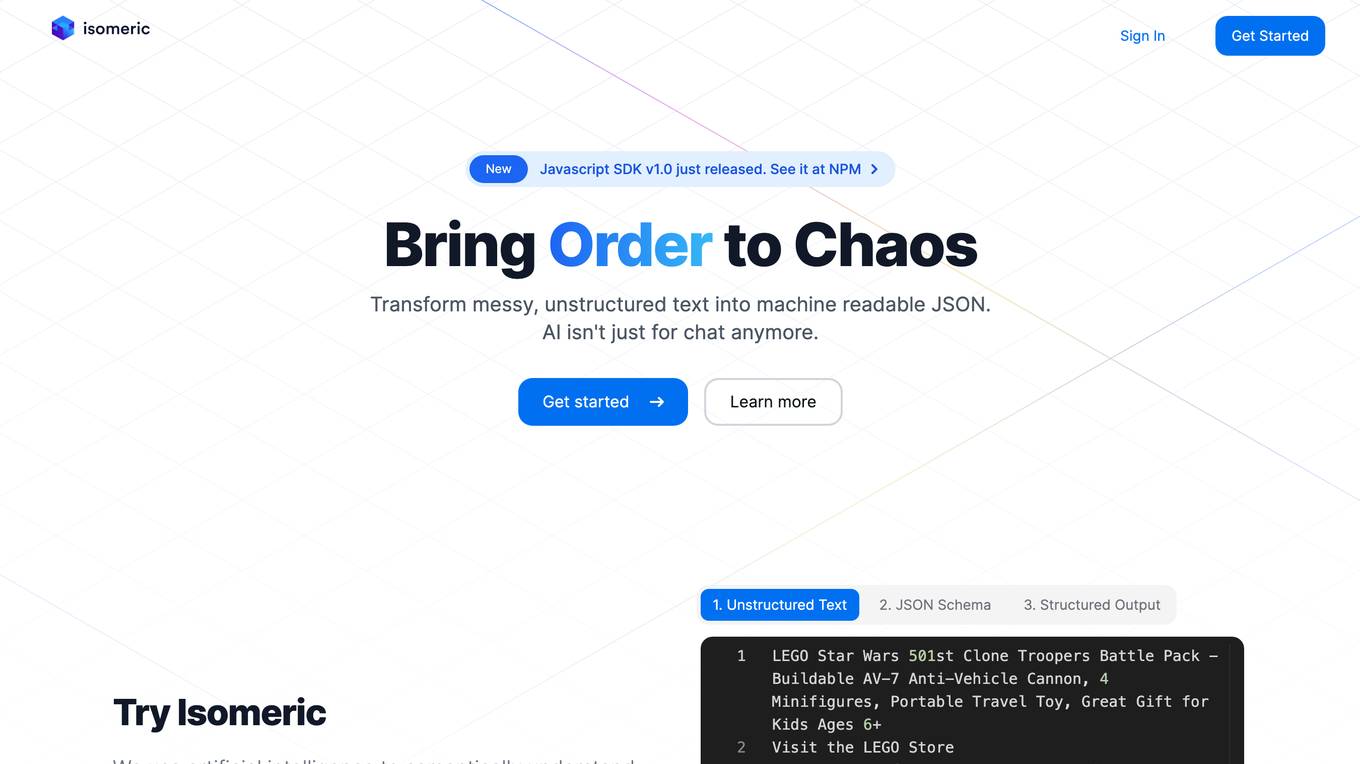

Isomeric

Isomeric is an AI tool that uses artificial intelligence to semantically understand unstructured text and extract specific data. It helps transform messy text into machine-readable JSON, enabling tasks such as web scraping, browser extensions, and general information extraction. With Isomeric, users can scale their data gathering pipeline in seconds, making it a valuable tool for various industries like customer support, data platforms, and legal services.

todai

todai is a personal branding assistant enhanced by AI that turbo-charges content creation and posting across social media channels. It helps users create a month's worth of content in less than 30 minutes, offering features like creating viral video clips, carousels, book reviews, and personalized views on any topic. With AI integration, todai provides unique content based on the user's professional profile, tone of voice, and writing style. It consolidates various content creation tools into one platform, enabling users to create visual and interactive personal branded content effortlessly.

Cohesive

Cohesive is an AI tool designed to provide outsourced analysts and assistants for businesses. It enables users to prospect at scale, perform outbound activities with AI enrichment and web scraping directly within Google Sheets. The tool is Google Sheets native, allowing users to enrich and scrape the web without the need to import data into a separate platform. Cohesive also leverages AI for bulk data analysis, personalization generation, web scraping, and email finding/validation. It offers free usage with the option to join the Cohesive Slack community for additional support.

ScrapeComfort

ScrapeComfort is an AI-driven web scraping tool that offers an effortless and intuitive data mining solution. It leverages AI technology to extract data from websites without the need for complex coding or technical expertise. Users can easily input URLs, download data, set up extractors, and save extracted data for immediate use. The tool is designed to cater to various needs such as data analytics, market investigation, and lead acquisition, making it a versatile solution for businesses and individuals looking to streamline their data collection process.

7 - Open Source Tools

Scrapegraph-ai

ScrapeGraphAI is a Python library that uses Large Language Models (LLMs) and direct graph logic to create web scraping pipelines for websites, documents, and XML files. It allows users to extract specific information from web pages by providing a prompt describing the desired data. ScrapeGraphAI supports various LLMs, including Ollama, OpenAI, Gemini, and Docker, enabling users to choose the most suitable model for their needs. The library provides a user-friendly interface through its `SmartScraper` class, which simplifies the process of building and executing scraping pipelines. ScrapeGraphAI is open-source and available on GitHub, with extensive documentation and examples to guide users. It is particularly useful for researchers and data scientists who need to extract structured data from web pages for analysis and exploration.

scylla

Scylla is an intelligent proxy pool tool designed for humanities, enabling users to extract content from the internet and build their own Large Language Models in the AI era. It features automatic proxy IP crawling and validation, an easy-to-use JSON API, a simple web-based user interface, HTTP forward proxy server, Scrapy and requests integration, and headless browser crawling. Users can start using Scylla with just one command, making it a versatile tool for various web scraping and content extraction tasks.

firecrawl-mcp-server

Firecrawl MCP Server is a Model Context Protocol (MCP) server implementation that integrates with Firecrawl for web scraping capabilities. It supports features like scrape, crawl, search, extract, and batch scrape. It provides web scraping with JS rendering, URL discovery, web search with content extraction, automatic retries with exponential backoff, credit usage monitoring, comprehensive logging system, support for cloud and self-hosted FireCrawl instances, mobile/desktop viewport support, and smart content filtering with tag inclusion/exclusion. The server includes configurable parameters for retry behavior and credit usage monitoring, rate limiting and batch processing capabilities, and tools for scraping, batch scraping, checking batch status, searching, crawling, and extracting structured information from web pages.

PulsarRPA

PulsarRPA is a high-performance, distributed, open-source Robotic Process Automation (RPA) framework designed to handle large-scale RPA tasks with ease. It provides a comprehensive solution for browser automation, web content understanding, and data extraction. PulsarRPA addresses challenges of browser automation and accurate web data extraction from complex and evolving websites. It incorporates innovative technologies like browser rendering, RPA, intelligent scraping, advanced DOM parsing, and distributed architecture to ensure efficient, accurate, and scalable web data extraction. The tool is open-source, customizable, and supports cutting-edge information extraction technology, making it a preferred solution for large-scale web data extraction.

firecrawl

Firecrawl is an API service that empowers AI applications with clean data from any website. It features advanced scraping, crawling, and data extraction capabilities. The repository is still in development, integrating custom modules into the mono repo. Users can run it locally but it's not fully ready for self-hosted deployment yet. Firecrawl offers powerful capabilities like scraping, crawling, mapping, searching, and extracting structured data from single pages, multiple pages, or entire websites with AI. It supports various formats, actions, and batch scraping. The tool is designed to handle proxies, anti-bot mechanisms, dynamic content, media parsing, change tracking, and more. Firecrawl is available as an open-source project under the AGPL-3.0 license, with additional features offered in the cloud version.

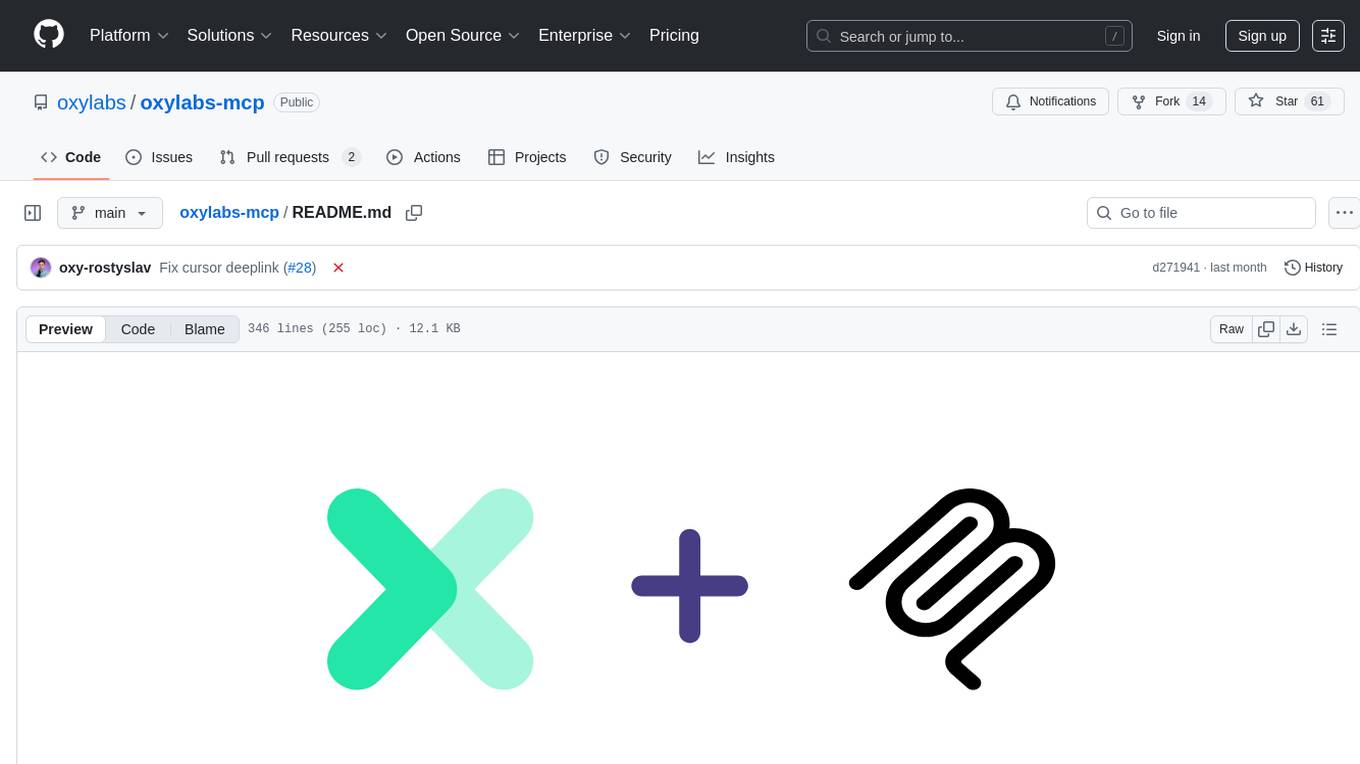

oxylabs-mcp

The Oxylabs MCP Server acts as a bridge between AI models and the web, providing clean, structured data from any site. It enables scraping of URLs, rendering JavaScript-heavy pages, content extraction for AI use, bypassing anti-scraping measures, and accessing geo-restricted web data from 195+ countries. The implementation utilizes the Model Context Protocol (MCP) to facilitate secure interactions between AI assistants and web content. Key features include scraping content from any site, automatic data cleaning and conversion, bypassing blocks and geo-restrictions, flexible setup with cross-platform support, and built-in error handling and request management.

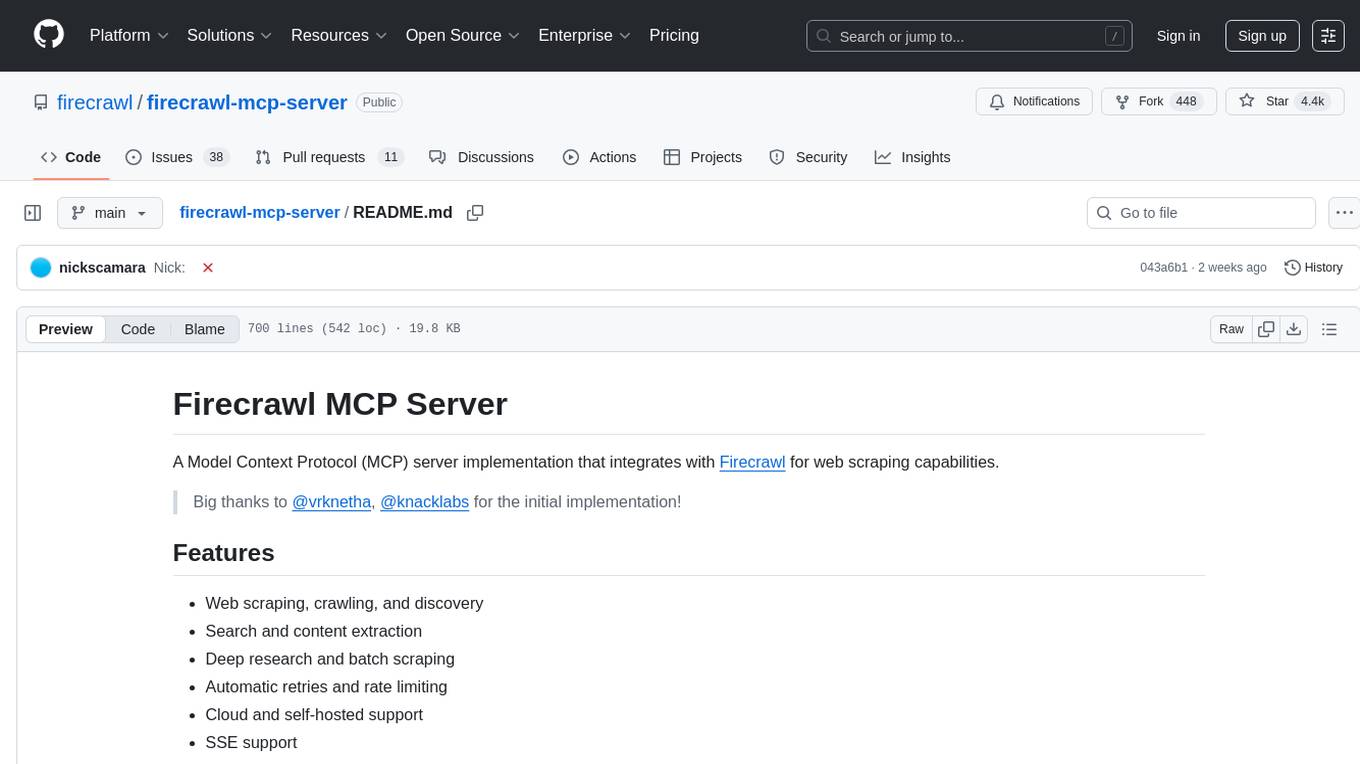

firecrawl-mcp-server

Firecrawl MCP Server is a Model Context Protocol (MCP) server implementation that integrates with Firecrawl for web scraping capabilities. It offers features such as web scraping, crawling, and discovery, search and content extraction, deep research and batch scraping, automatic retries and rate limiting, cloud and self-hosted support, and SSE support. The server can be configured to run with various tools like Cursor, Windsurf, SSE Local Mode, Smithery, and VS Code. It supports environment variables for cloud API and optional configurations for retry settings and credit usage monitoring. The server includes tools for scraping, batch scraping, mapping, searching, crawling, and extracting structured data from web pages. It provides detailed logging and error handling functionalities for robust performance.

20 - OpenAI Gpts

Advanced Web Scraper with Code Generator

Generates web scraping code with accurate selectors.

Scraping GPT Proxy and Web Scraping Tips

Scraping ChatGPT helps you with web scraping and proxy management. It provides advanced tips and strategies for efficiently handling CAPTCHAs, and managing IP rotations. Its expertise extends to ethical scraping practices, and optimizing proxy usage for seamless data retrieval

CodeGPT

This GPT can generate code for you. For now it creates full-stack apps using Typescript. Just describe the feature you want and you will get a link to the Github code pull request and the live app deployed.

Web and Social Media Guide for Artists

Guiding artists in crafting engaging websites and social media content.