Best AI tools for <Train Reward Models>

20 - AI tool Sites

Teachr

Teachr is an online course creation platform that uses artificial intelligence to help users create and sell stunning courses. With Teachr, users can create interactive courses with 3D visuals, 360° perspectives, and augmented reality. They can also use speech recognition and AI voice-over technology to create engaging learning experiences. Teachr also offers a range of features to help users manage their courses, including a payment system, reward system, and fitness challenges. With Teachr, users can turn their expertise into a product that they can sell infinitely and create the perfect learning experience for their customers.

Reword

Reword is an AI-powered writing assistant that helps you write better articles, faster. With Reword, you can train your own AI assistant to write in your unique voice and style. Reword also provides you with a library of pre-trained AI assistants that you can use to get started quickly. Reword is the perfect tool for anyone who wants to write better articles, faster.

Catizen

Catizen is an AI-powered application that combines gaming, blockchain technology, and artificial intelligence to create a unique virtual world for users and their digital companions, AI kitties. With features like mini-games, user acquisition tasks, and blockchain-powered gaming, Catizen offers a fun and interactive experience for players. The application is designed to provide entertainment, user engagement, and rewards through a seamless integration of AI technology and gaming elements.

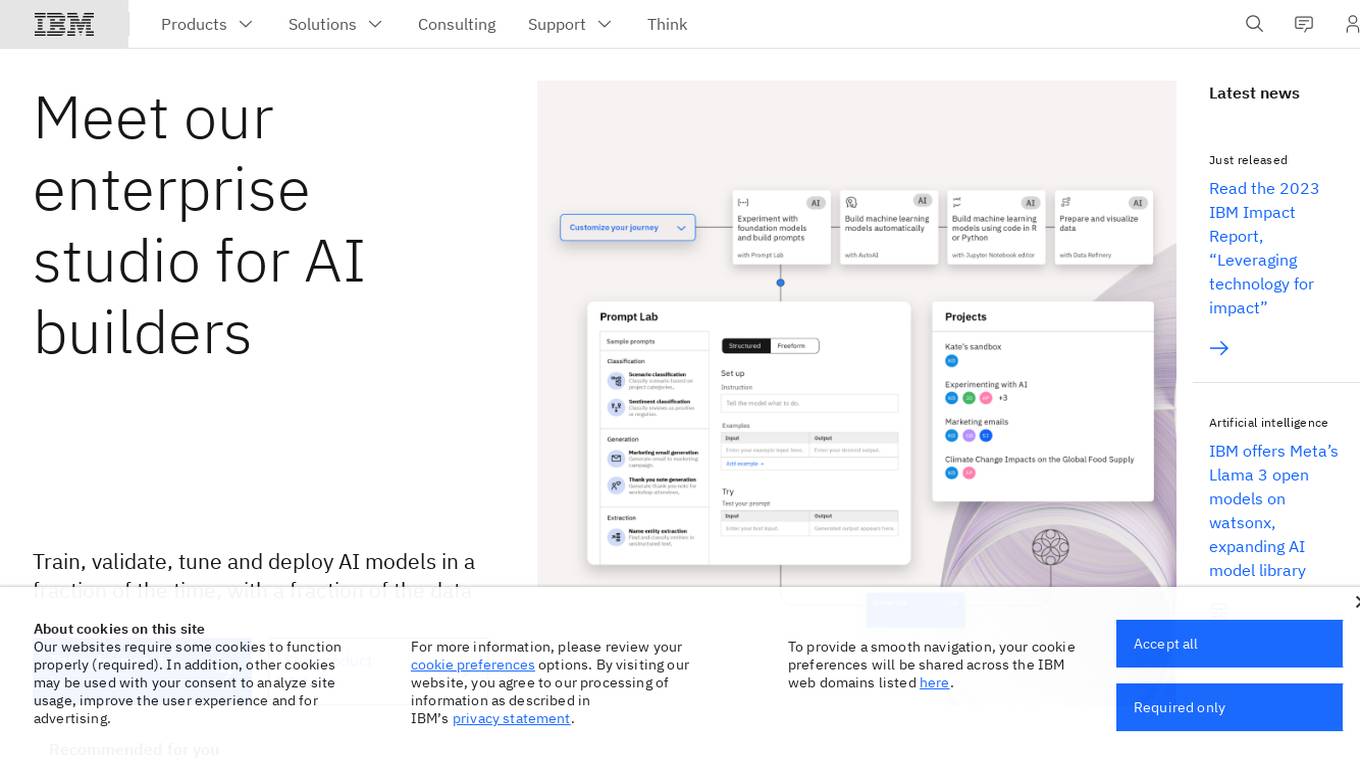

IBM Watsonx

IBM Watsonx is an enterprise studio for AI builders. It provides a platform to train, validate, tune, and deploy AI models quickly and efficiently. With Watsonx, users can access a library of pre-trained AI models, build their own models, and deploy them to the cloud or on-premises. Watsonx also offers a range of tools and services to help users manage and monitor their AI models.

Outlier AI

Outlier AI is a platform that connects subject matter experts to help build the world's most advanced Generative AI. It allows experts to work on various projects from generating training data to evaluating model performance. The platform offers flexibility, allowing contributors to work from home on their own schedule. Outlier AI aims to redefine how AI learns by leveraging the expertise of domain specialists across different fields.

Athletica AI

Athletica AI is an AI-powered athletic training and personalized fitness application that offers tailored coaching and training plans for various sports like cycling, running, duathlon, triathlon, and rowing. It adapts to individual fitness levels, abilities, and availability, providing daily step-by-step training plans and comprehensive session analyses. Athletica AI integrates seamlessly with workout data from platforms like Garmin, Strava, and Concept 2 to craft personalized training plans and workouts. The application aims to help athletes train smarter, not harder, by leveraging the power of AI to optimize performance and achieve fitness goals.

Backend.AI

Backend.AI is an enterprise-scale cluster backend for AI frameworks that offers scalability, GPU virtualization, HPC optimization, and DGX-Ready software products. It provides a fast and efficient way to build, train, and serve AI models of any type and size, with flexible infrastructure options. Backend.AI aims to optimize backend resources, reduce costs, and simplify deployment for AI developers and researchers. The platform integrates with existing tools and offers fractional GPU usage and a pay-as-you-play model to maximize resource utilization.

Kaiden AI

Kaiden AI is an AI-powered training platform that offers personalized, immersive simulations to enhance skills and performance across various industries and roles. It provides feedback-rich scenarios, voice-enabled interactions, and detailed performance insights. Users can create custom training scenarios, engage with AI personas, and receive real-time feedback to improve communication skills. Kaiden AI aims to revolutionize training solutions by combining AI technology with real-world practice.

Endurance

Endurance is a platform designed for runners, swimmers, and cyclists to engage in group training activities with friends or local communities. Users can create or join teams, share structured workouts, and benefit from collective motivation and accountability. The platform aims to make training fun and effective by leveraging the power of group workouts and social connections.

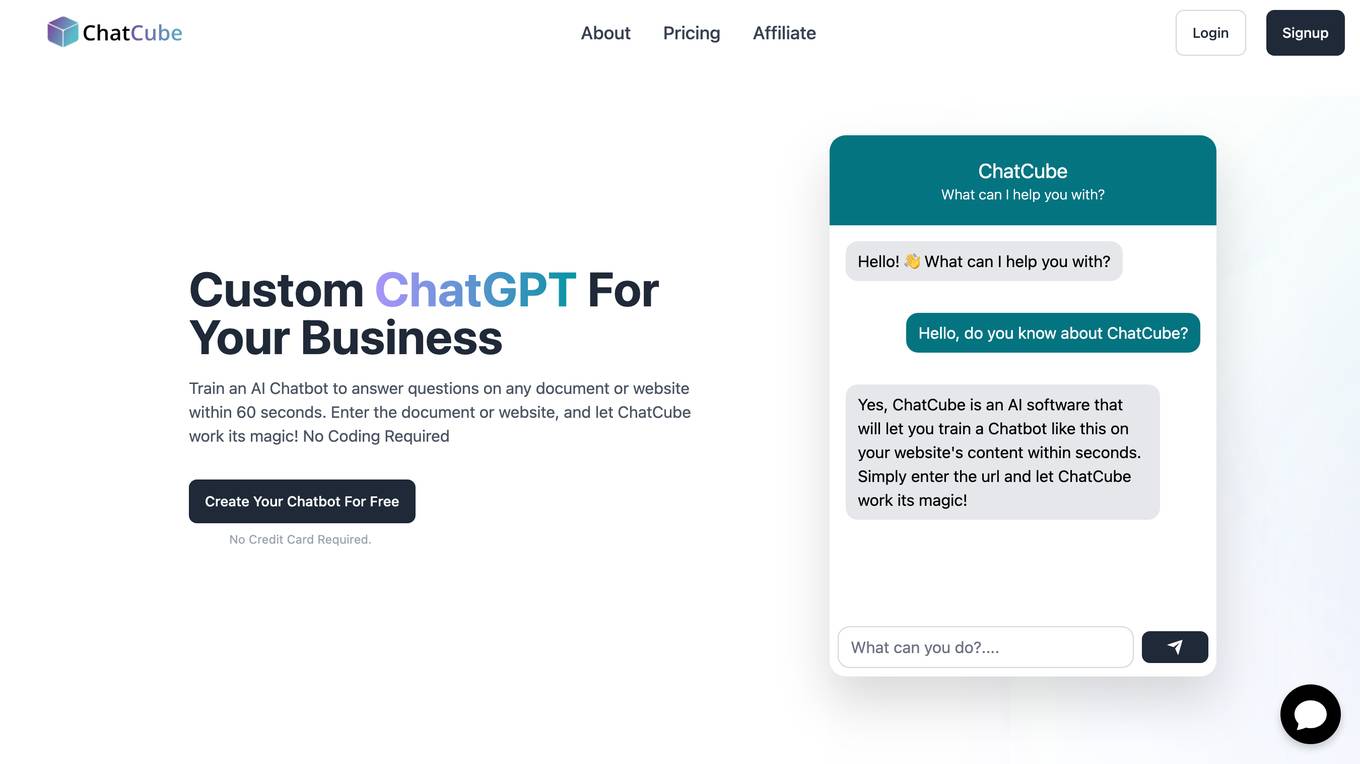

ChatCube

ChatCube is an AI-powered chatbot maker that allows users to create chatbots for their websites without coding. It uses advanced AI technology to train chatbots on any document or website within 60 seconds. ChatCube offers a range of features, including a user-friendly visual editor, lightning-fast integration, fine-tuning on specific data sources, data encryption and security, and customizable chatbots. By leveraging the power of AI, ChatCube helps businesses improve customer support efficiency and reduce support tickets by up to 28%.

Workout Tools

Workout Tools is an AI-powered personal trainer that helps you train smarter and reach your fitness goals faster. It takes into account parameters such as your physique, the type of workout you're interested in, and your available equipment, then suggests a workout. Don't like the workout? Just generate another one. It's that simple.

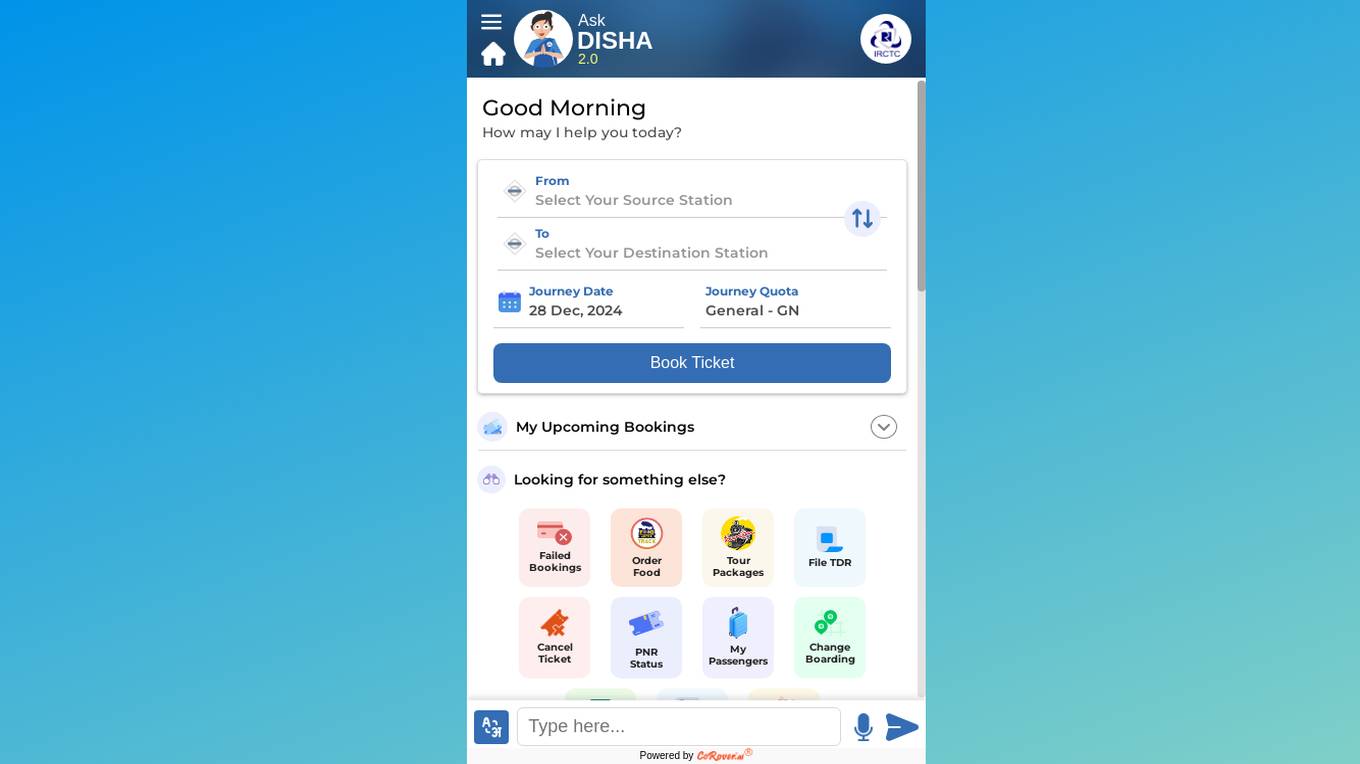

CoRover.ai

CoRover.ai is an AI-powered chatbot designed to help users book train tickets seamlessly through conversation. The chatbot, named AskDISHA, is integrated with the IRCTC platform, allowing users to inquire about train schedules, ticket availability, and make bookings effortlessly. CoRover.ai leverages artificial intelligence to provide personalized assistance and streamline the ticket booking process for users, enhancing their overall experience.

IllumiDesk

IllumiDesk is a generative AI platform for instructors and content developers that helps teams create and monetize tailored content 10X faster. With IllumiDesk, you can automate grading tasks, collaborate with your learners, create content at the speed of AI, and integrate with the services you know and love. IllumiDesk's AI will help you create, maintain, and structure your content into interactive lessons. You can also use IllumiDesk's flexible integration options, the RESTful API and/or LTI v1.3, to reuse existing content and flows. IllumiDesk is trusted by training agencies and universities around the world.

Tovuti LMS

Tovuti LMS is an adaptive, people-first learning platform that helps organizations create engaging courses, train teams, and track progress. With its easy-to-use interface and powerful features, Tovuti LMS makes learning fun and easy. Tovuti LMS is trusted by leading organizations around the world to provide their employees with the training they need to succeed.

Kaba

Kaba is an open-source digital laboratory that empowers users to build their own AI models easily. It emphasizes privacy, security, and user control over data. The platform enables users to observe their attention and create brain-like mechanisms for intelligent systems based on their observable context. Kaba offers multi-modal, multi-input, and multi-context capabilities to enhance user experiences. The team behind Kaba comprises experts in design, technical advancements, innovation, security, and privacy, aiming to revolutionize technology interactions.

Chatbond

Chatbond is an AI chatbot builder that enables users to create customized chatbots for websites and messaging platforms without the need for coding skills. With Chatbond, users can design conversational interfaces, integrate AI capabilities, and deploy chatbots to enhance customer engagement and streamline communication processes. The platform offers a user-friendly interface with drag-and-drop functionality, pre-built templates, and analytics tools to monitor chatbot performance and optimize interactions. Chatbond empowers businesses to automate customer support, lead generation, and sales processes, improving efficiency and scalability.

micro1

micro1 is an AI platform that leverages human intelligence to power cutting-edge AI solutions for various sectors such as AI labs, government, enterprises, and robotics. It acts as a data engine, collecting expert-level human data to train and improve frontier foundation models. The platform offers services like agentic AI design, robotics data transformation, and an AI recruiter agent named Zara to source and vet top talent at scale. micro1 aims to accelerate model capability, advance agentic reasoning, and drive the next generation of AI through its human intelligence infrastructure.

Teachable Machine

Teachable Machine is a web-based tool that makes it easy to create custom machine learning models, even if you don't have any coding experience. With Teachable Machine, you can train models to recognize images, sounds, and poses. Once you've trained a model, you can export it to use in your own projects.

Sherpa.ai

Sherpa.ai is a SaaS platform that enables data collaborations without sharing data. It allows businesses to build and train models with sensitive data from different parties, without compromising privacy or regulatory compliance. Sherpa.ai's Federated Learning platform is used in various industries, including healthcare, financial services, and manufacturing, to improve AI models, accelerate research, and optimize operations.

Surge AI

Surge AI is a data labeling platform that provides human-generated data for training and evaluating large language models (LLMs). It offers a global workforce of annotators who can label data in over 40 languages. Surge AI's platform is designed to be easy to use and integrates with popular machine learning tools and frameworks. The company's customers include leading AI companies, research labs, and startups.

3 - Open Source AI Tools

RLHF-Reward-Modeling

This repository contains code for training reward models for deep reinforcement learning-based Reinforcement Learning from Human Feedback (DRL-based RLHF), iterative supervised fine-tuning (rejection sampling fine-tuning), and iterative Direct Preference Optimization (DPO). The reward models are Bradley-Terry models trained on top of the Gemma and Mistral language models. The resulting reward models achieve state-of-the-art performance on the RewardBench leaderboard for reward models with base models of up to 13B parameters.
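As a rough illustration of the Bradley-Terry objective mentioned above, the sketch below computes the pairwise loss from scalar reward scores. It is a minimal, dependency-free example and not the repository's code: the function name `bradley_terry_loss` and the toy scores are assumptions made for illustration, and a real trainer would compute the same quantity on model outputs with an autodiff framework.

```python
import math


def bradley_terry_loss(chosen_rewards, rejected_rewards):
    """Mean negative log-likelihood that each chosen response beats its rejected pair.

    Under a Bradley-Terry model, P(chosen beats rejected) = sigmoid(r_chosen - r_rejected),
    so the per-pair loss is -log(sigmoid(r_chosen - r_rejected)).
    """
    if not chosen_rewards or len(chosen_rewards) != len(rejected_rewards):
        raise ValueError("need equally sized, non-empty lists of scores")
    total = 0.0
    for r_chosen, r_rejected in zip(chosen_rewards, rejected_rewards):
        margin = r_chosen - r_rejected
        # -log(sigmoid(margin)) == log(1 + exp(-margin)), written in a
        # numerically stable form so large margins do not overflow.
        total += max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))
    return total / len(chosen_rewards)


# Hypothetical scores: the loss shrinks as chosen responses outscore rejected ones.
well_ranked = bradley_terry_loss([2.0, 1.5], [0.0, -0.5])
badly_ranked = bradley_terry_loss([0.0, -0.5], [2.0, 1.5])
```

When the two scores are equal the model is indifferent, and the loss equals log 2; minimizing it pushes the reward of the preferred response above that of the rejected one.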

RLHF-Reward-Modeling

This repository, RLHF-Reward-Modeling, is dedicated to training reward models for DRL-based RLHF (PPO), Iterative SFT, and iterative DPO. It provides state-of-the-art performance in reward models with a base model size of up to 13B. The installation instructions involve setting up the environment and installing the alignment-handbook. Dataset preparation requires preprocessing conversations into a standard format. The code can be run with Gemma-2b-it, and evaluation results can be obtained using provided datasets. The to-do list includes various reward models like Bradley-Terry, preference model, regression-based reward model, and multi-objective reward model. The repository is part of iterative rejection sampling fine-tuning and iterative DPO.

AceCoder

AceCoder is a tool that introduces a fully automated pipeline for synthesizing large-scale reliable tests used for reward model training and reinforcement learning in the coding scenario. It curates datasets, trains reward models, and performs RL training to improve coding abilities of language models. The tool aims to unlock the potential of RL training for code generation models and push the boundaries of LLM's coding abilities.
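One way tests can feed reward model training is by scoring candidate programs on their pass rate and turning score gaps into preference pairs. The sketch below illustrates that idea under stated assumptions and is not AceCoder's implementation: candidates are plain Python callables, tests are `(args, expected)` tuples, and the names `pass_rate`, `preference_pairs`, and `min_gap` are hypothetical.

```python
from itertools import combinations


def pass_rate(candidate, tests):
    """Fraction of (args, expected) test cases the candidate function passes."""
    passed = 0
    for args, expected in tests:
        try:
            if candidate(*args) == expected:
                passed += 1
        except Exception:
            # A crashing candidate simply fails that test case.
            pass
    return passed / len(tests)


def preference_pairs(candidates, tests, min_gap=0.0):
    """Build (chosen, rejected) name pairs from candidates whose pass rates differ by more than min_gap."""
    scored = [(name, pass_rate(fn, tests)) for name, fn in candidates.items()]
    pairs = []
    for (name_a, rate_a), (name_b, rate_b) in combinations(scored, 2):
        if abs(rate_a - rate_b) > min_gap:
            pairs.append((name_a, name_b) if rate_a > rate_b else (name_b, name_a))
    return pairs


# Toy task (assumed for illustration): add two integers.
tests = [((1, 2), 3), ((0, 0), 0), ((-1, 1), 0)]
candidates = {
    "good": lambda a, b: a + b,  # passes every test
    "bad": lambda a, b: a - b,   # passes only the (0, 0) case
}
pairs = preference_pairs(candidates, tests)
```

Pairs produced this way could then serve as chosen/rejected examples for a pairwise reward model; raising `min_gap` keeps only pairs where the tests separate the two candidates clearly.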

20 - OpenAI Gpts

How to Train a Chessie

Comprehensive training and wellness guide for Chesapeake Bay Retrievers.

The Train Traveler

Friendly train travel guide focusing on the best routes, essential travel information, and personalized travel insights, for both experienced and novice travelers.

How to Train Your Dog (or Cat, or Dragon, or...)

Expert in pet training advice, friendly and engaging.

TrainTalk

Your personal advisor for eco-friendly train travel. Let's plan your next journey together!

Monster Battle - RPG Game

Train monsters, travel the world, earn Arena Tokens and become the ultimate monster battling champion of earth!

Hero Master AI: Superhero Training

Train to become a superhero or a supervillain. Master your powers, make pivotal choices. Each decision you make in this action-packed game not only shapes your abilities but also your moral alignment in the battle between good and evil. Another GPT Simulator by Dave Lalande

Pytorch Trainer GPT

Your purpose is to create PyTorch code to train language models.

Design Recruiter

Job interview coach for product designers. Practice interviews and say "stop" when you need feedback. You got this!!

Pocket Training Activity Expert

Expert in engaging, interactive training methods and activities.

RailwayGPT

Technical expert on locomotives, trains, signalling, and railway technology. Can answer questions and draw designs specific to the transportation domain.

Railroad Conductors and Yardmasters Roadmap

Don’t know where to even begin? Let me help create a roadmap towards the career of your dreams! Type "help" for More Information

Instructor GCP ML

Trainer for the GCP ML Engineer certification, with detailed answers and explanations.