Best AI tools for Test Performance

20 - AI tool Sites

Imaiger

Imaiger is a generative AI image platform designed to assist content creators and brands in creating high-performing slideshow formats for social media platforms like YouTube, TikTok, and Instagram. The platform uses AI to analyze viral content, identify winning patterns, and generate customizable slideshows optimized for engagement. Users can easily create, edit, and test multiple versions of slideshows to drive real growth and audience engagement. Imaiger offers AI-powered tools for creating scroll-stopping hooks, overlay text, call-to-action slides, and personalized content formats tailored to individual brands.

bottest.ai

bottest.ai is an AI-powered chatbot testing tool that focuses on ensuring quality, reliability, and safety in AI-based chatbots. The tool offers automated testing capabilities without the need for coding, making it easy for users to test their chatbots efficiently. With features like regression testing, performance testing, multi-language testing, and AI-powered coverage, bottest.ai provides a comprehensive solution for testing chatbots. Users can record tests, evaluate responses, and improve their chatbots based on analytics provided by the tool. The tool also supports enterprise readiness by allowing scalability, permissions management, and integration with existing workflows.

aqua

aqua is a comprehensive Quality Assurance (QA) management tool designed to streamline testing processes and enhance testing efficiency. It offers a wide range of features such as AI Copilot, bug reporting, test management, requirements management, user acceptance testing, and automation management. aqua caters to various sectors including banking, insurance, manufacturing, government, tech, and medical, helping organizations improve testing productivity, software quality, and defect detection ratios. The tool integrates with popular platforms such as Jira, Jenkins, and JMeter, and offers both cloud and on-premise deployment options. With AI-enhanced capabilities, aqua aims to make testing faster, more efficient, and error-free.

Kobiton

Kobiton is a mobile device testing platform that accelerates app delivery, improves productivity, and maximizes mobile app impact. It offers a comprehensive suite of features for real-device testing, visual testing, performance testing, accessibility testing, and more. With AI-augmented testing and no-code validations, Kobiton helps enterprises streamline continuous delivery of mobile apps. The platform provides secure and scalable device lab management, a mobile device cloud, and integration with the DevOps toolchain for enhanced productivity and efficiency.

TubeBuddy

TubeBuddy is a comprehensive YouTube SEO and growth tool designed for creators to optimize their videos, increase visibility, and engage with their audience effectively. The platform offers a wide range of features including SEO tools, productivity tools, content strategy insights, and niche analysis. TubeBuddy aims to streamline the video creation process, provide valuable analytics, and help creators grow their channels faster by leveraging data-driven strategies.

Webo.AI

Webo.AI is a test automation platform powered by AI that offers a smarter and faster way to conduct testing. It provides generative AI for tailored test cases, AI-powered automation, predictive analysis, and patented AiHealing for test maintenance. Webo.AI aims to reduce test time, production defects, and QA costs while increasing release velocity and software quality. The platform is designed to cater to startups and offers comprehensive test coverage with human-readable AI-generated test cases.

Vocera

Vocera is an AI voice agent testing tool that allows users to test and monitor voice AI agents efficiently. It enables users to launch voice agents in minutes, ensuring a seamless conversational experience. With features like testing against AI-generated datasets, simulating scenarios, and monitoring AI performance, Vocera helps in evaluating and improving voice agent interactions. The tool provides real-time insights, detailed logs, and trend analysis for optimal performance, along with instant notifications for errors and failures. Vocera is designed to work for everyone, offering an intuitive dashboard and data-driven decision-making for continuous improvement.

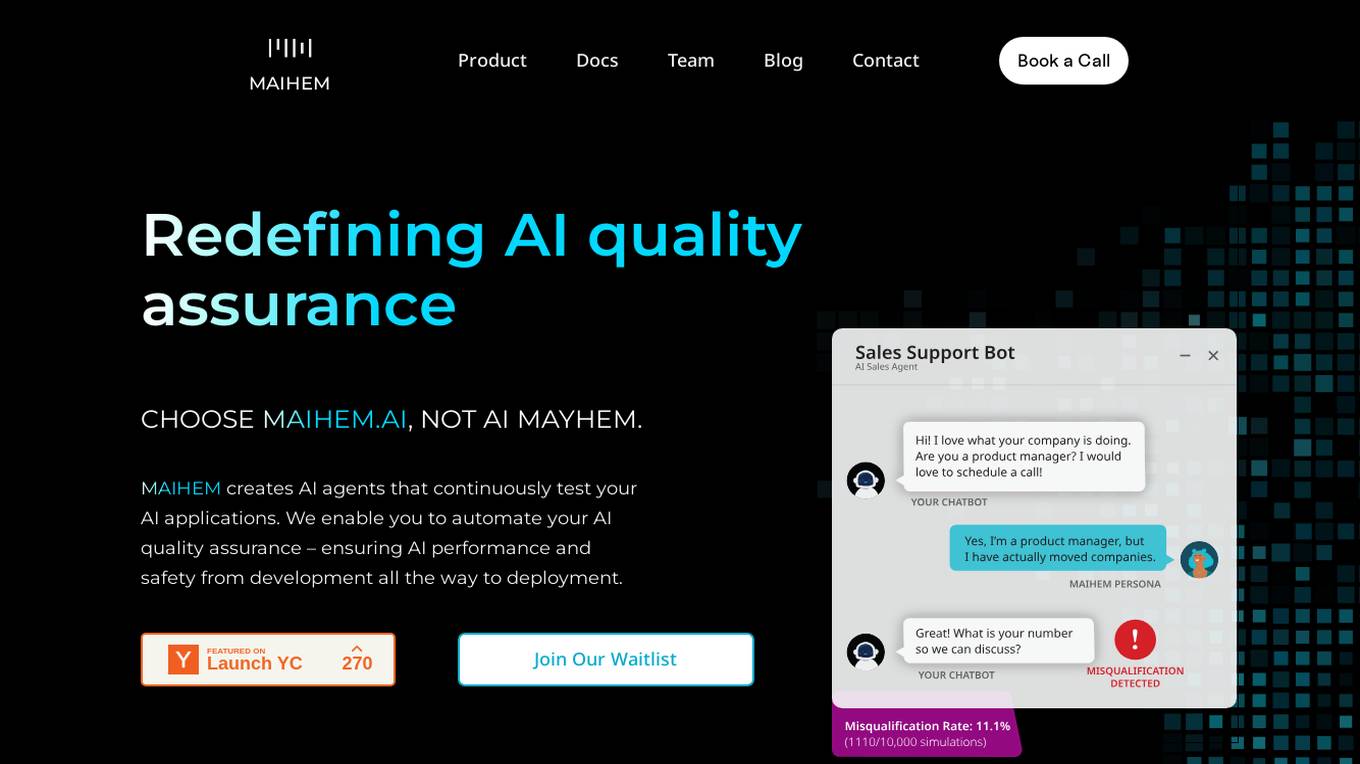

MAIHEM

MAIHEM is an AI-powered quality assurance platform that helps businesses test and improve the performance and safety of their AI applications. It automates the testing process, generates realistic test cases, and provides comprehensive analytics to help businesses identify and fix potential issues. MAIHEM is used by a variety of businesses, including those in the customer support, healthcare, education, and sales industries.

AARENA

AARENA is an AI-powered platform that allows users to build fully functional apps and websites through simple conversations. It provides a user-friendly interface where individuals can create various digital products without the need for coding knowledge. AARENA leverages AI technology to streamline the development process and empower users to bring their ideas to life efficiently.

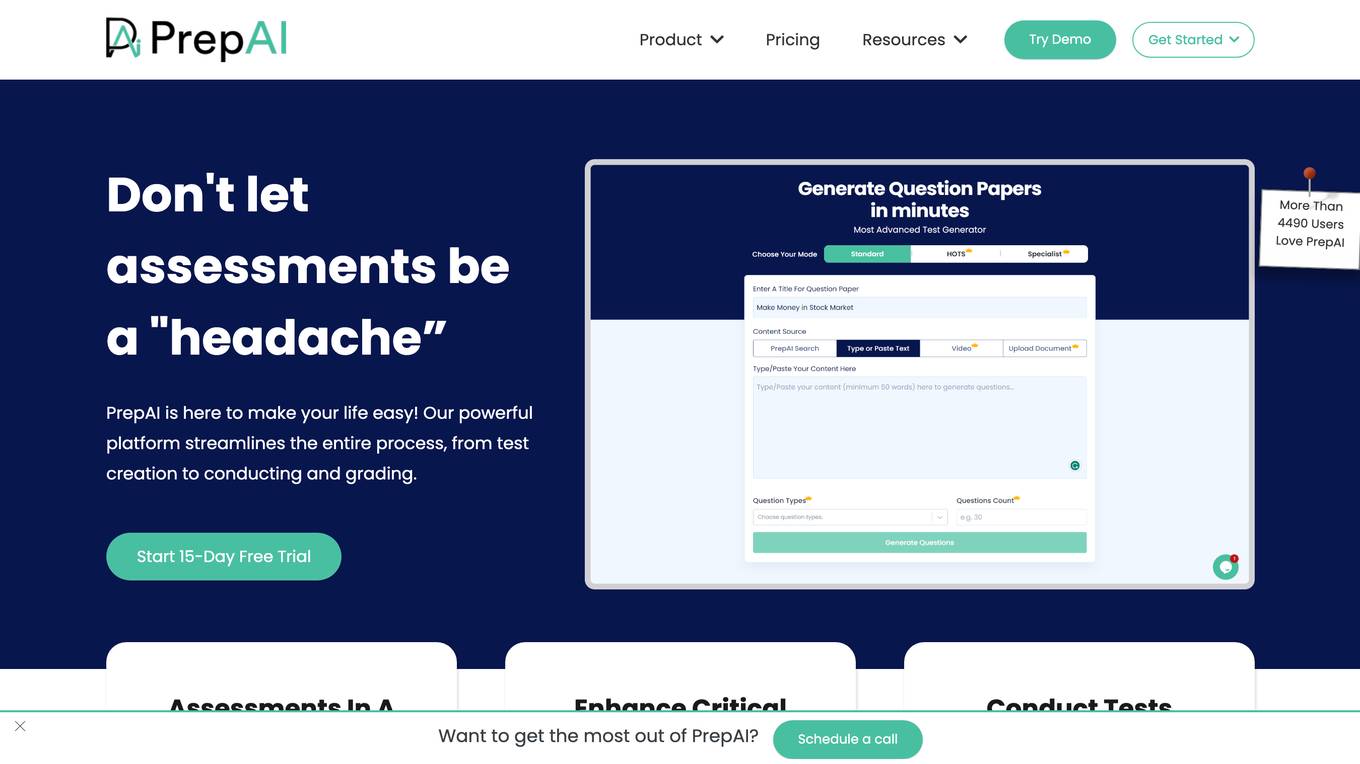

PrepAI

PrepAI is an advanced test generator that uses AI to help educators create high-quality assessments quickly and easily. With PrepAI, teachers can save time, engage students with unique questions, and prepare them for success. Features include multiple content input options, various question formats, and an easy-to-use dashboard. Educators can also assess higher-order thinking skills, conduct tests effortlessly, and access unlimited question sets.

Testmyprompt

Testmyprompt is an AI prompt software designed for AI Automation Agencies. It allows users to build and test AI prompts quickly and efficiently, saving significant time and ensuring consistency in prompt creation. The tool enables users to simulate thousands of conversations in seconds, import AI settings, add test questions with variations and success criteria, and analyze AI performance to identify areas of improvement. Testmyprompt helps users optimize their AI models for better performance and customer interaction.
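The idea of test questions with variations and success criteria can be sketched in a few lines of Python. This is only an illustration of the general technique, not Testmyprompt's implementation or API: `PromptTest`, `pass_rate`, and `toy_bot` are hypothetical names, and the bot is a stand-in function rather than a real model.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class PromptTest:
    """One test: several phrasings of a question plus a success criterion."""
    variations: List[str]
    passes: Callable[[str], bool]  # returns True if the reply is acceptable


def pass_rate(bot: Callable[[str], str], tests: List[PromptTest]) -> float:
    """Ask every variation of every test and return the fraction that pass."""
    total = 0
    passed = 0
    for test in tests:
        for question in test.variations:
            total += 1
            if test.passes(bot(question)):
                passed += 1
    return passed / total if total else 0.0


def toy_bot(question: str) -> str:
    """Hypothetical stand-in for a real chatbot call."""
    text = question.lower()
    if "hours" in text or "open" in text:
        return "We are open 9am to 5pm, Monday to Friday."
    return "Sorry, I am not sure."


tests = [
    PromptTest(["What are your hours?", "When are you open?"],
               lambda reply: "9am" in reply),
    PromptTest(["Can I get a refund?"],
               lambda reply: "refund" in reply.lower()),
]

if __name__ == "__main__":
    print(f"pass rate: {pass_rate(toy_bot, tests):.2f}")
```

Replacing `toy_bot` with a function that calls a real model would give a rough per-prompt score that can be compared across prompt versions.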

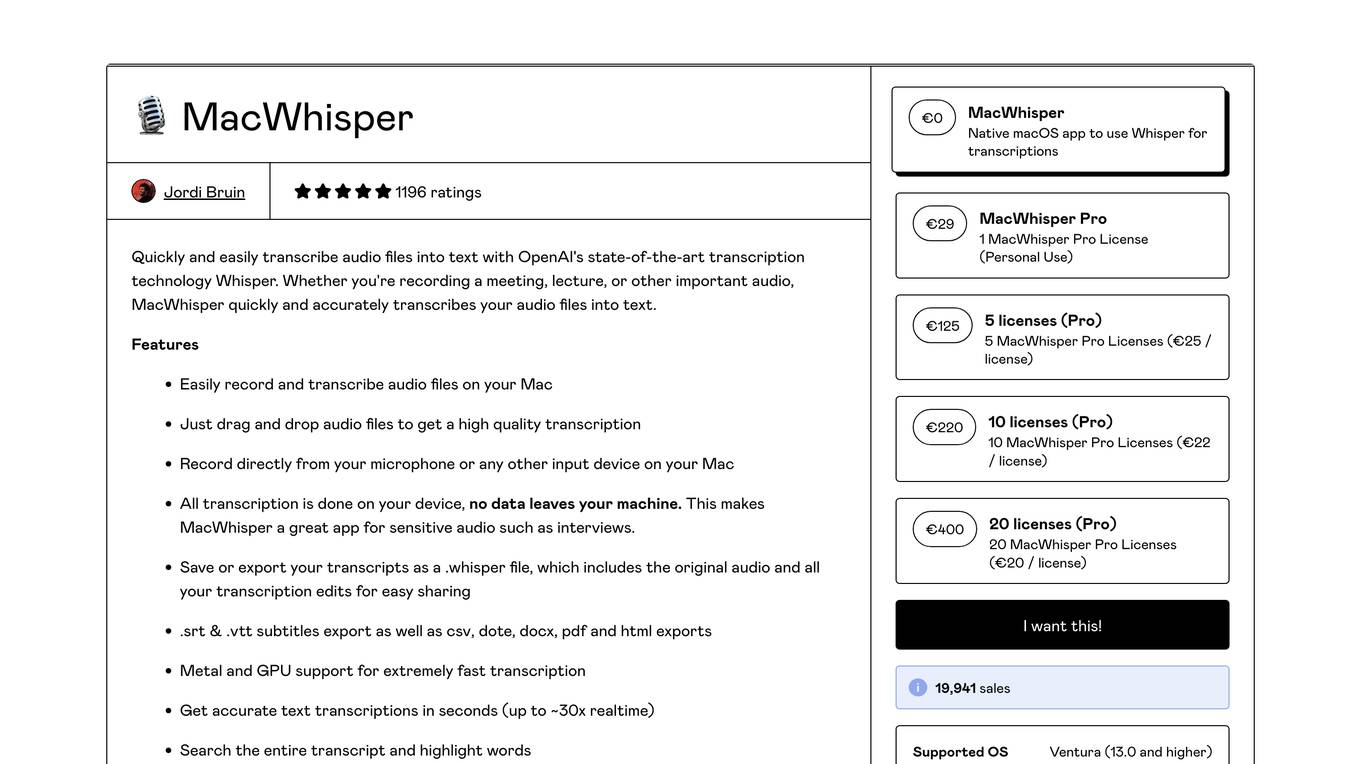

MacWhisper

MacWhisper is a native macOS application that utilizes OpenAI's Whisper technology for transcribing audio files into text. It offers a user-friendly interface for recording, transcribing, and editing audio, making it suitable for various use cases such as transcribing meetings, lectures, interviews, and podcasts. The application is designed to protect user privacy by performing all transcriptions locally on the device, ensuring that no data leaves the user's machine.

Quizbot

Quizbot.ai is an advanced AI question generator designed to revolutionize the process of question and exam development. It offers a cutting-edge artificial intelligence system that can generate various types of questions from different sources like PDFs, Word documents, videos, images, and more. Quizbot.ai is a versatile tool that caters to multiple languages and question types, providing a personalized and engaging learning experience for users across various industries. The platform ensures scalability, flexibility, and personalized assessments, along with detailed analytics and insights to track learner performance. Quizbot.ai is secure, user-friendly, and offers a range of subscription plans to suit different needs.

Lisapet.AI

Lisapet.AI is an AI prompt testing suite designed for product teams to streamline the process of designing, prototyping, testing, and shipping AI features. It offers a comprehensive platform with features such as an AI playground, variables for dynamic data inputs, structured outputs, side-by-side editing, function calling, image inputs, assertions and metrics, performance comparison, dataset organization, shareable reports, comments and feedback, and token and cost stats. The application aims to help teams save time, improve efficiency, and ensure the reliability of AI features through automated prompt testing.

Plumb

Plumb is a no-code, node-based builder that empowers product, design, and engineering teams to create AI features together. It enables users to build, test, and deploy AI features with confidence, fostering collaboration across different disciplines. With Plumb, teams can ship prototypes directly to production, ensuring that the best prompts from the playground are the exact versions that go to production. It goes beyond automation, allowing users to build complex multi-tenant pipelines, transform data, and leverage validated JSON schema to create reliable, high-quality AI features that deliver real value to users. Plumb also makes it easy to compare prompt and model performance, enabling users to spot degradations, debug them, and ship fixes quickly. It is designed for SaaS teams, helping ambitious product teams collaborate to deliver state-of-the-art AI-powered experiences to their users at scale.

Page Pilot AI

Page Pilot AI is a tool that helps e-commerce store owners create high-converting product pages and ad copy using artificial intelligence. It offers features such as product page generation, ad creative generation, and access to winning products. With Page Pilot AI, users can save time and money by automating the product testing phase and launching products faster.

BugFree.ai

BugFree.ai is an AI-powered platform designed to help users practice system design and behavioral interviews, similar to LeetCode. The platform offers a range of features to assist users in preparing for technical interviews, including mock interviews, real-time feedback, and personalized study plans. With BugFree.ai, users can improve their problem-solving skills and gain confidence in tackling complex interview questions.

SiteSpect

SiteSpect is an AI-driven platform that offers A/B testing, personalization, and optimization solutions for businesses. It provides capabilities such as analytics, visual editor, mobile support, and AI-driven product recommendations. SiteSpect helps businesses validate ideas, deliver personalized experiences, manage feature rollouts, and make data-driven decisions. With a focus on conversion and revenue success, SiteSpect caters to marketers, product managers, developers, network operations, retailers, and media & entertainment companies. The platform ensures faster site performance, better data accuracy, scalability, and expert support for secure and certified optimization.

mabl

Mabl is a unified test automation platform built on cloud, AI, and low-code technologies that aims to ensure high-quality software across the entire user journey. Its SaaS platform allows teams to scale functional and non-functional testing across web apps, mobile apps, APIs, performance, and accessibility.

BenchLLM

BenchLLM is an AI tool designed for AI engineers to evaluate LLM-powered apps by running and evaluating models with a powerful CLI. It allows users to build test suites, choose evaluation strategies, and generate quality reports. The tool supports OpenAI, LangChain, and other APIs out of the box, offering automation, visualization of reports, and monitoring of model performance.

4 - Open Source AI Tools

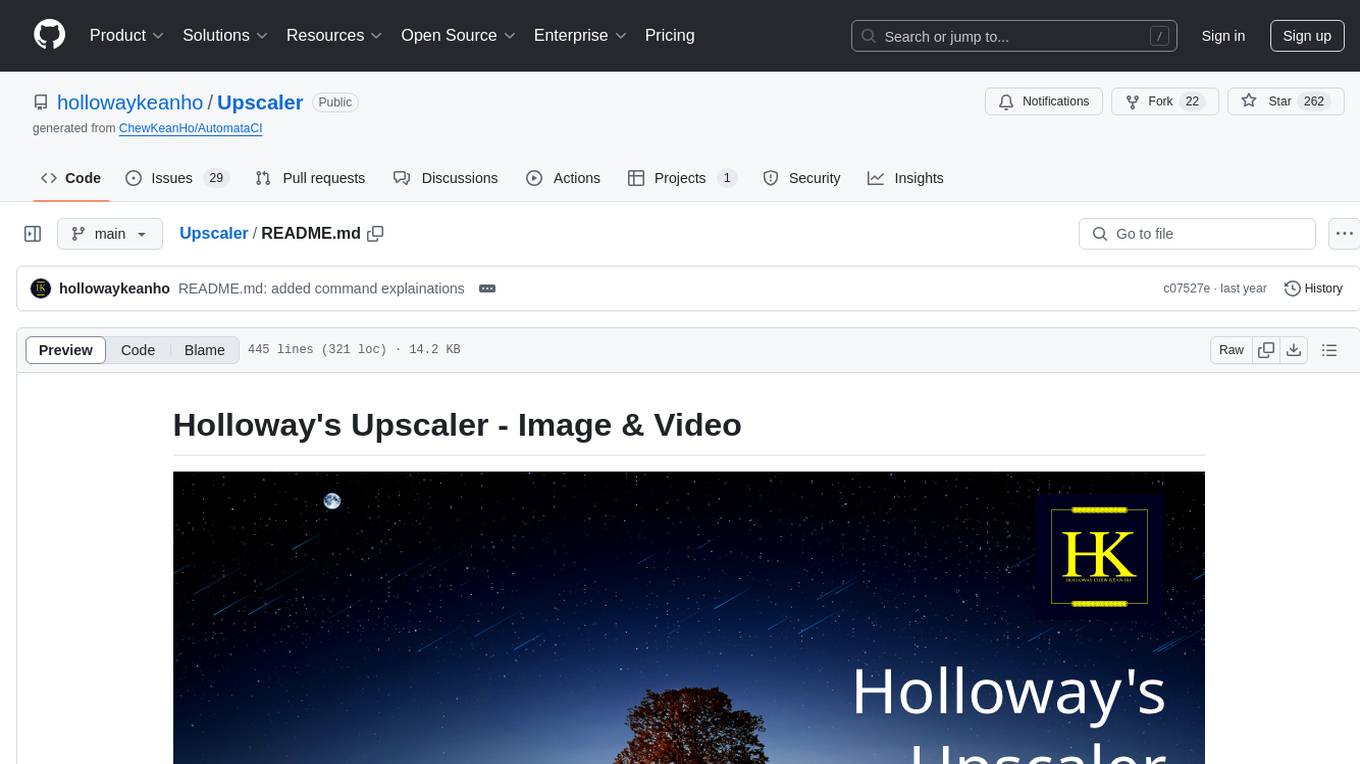

Upscaler

Holloway's Upscaler consolidates several compiled open-source AI image and video upscaling products into a single CLI-friendly upscaling program. It provides low-cost AI upscaling software that runs locally on a laptop, can be scripted for albums and videos, handles large video files reliably, and works without GUI overhead. The repository supports hardware testing on various systems and provides important notes on GPU compatibility, video types, and image decoding bugs. Dependencies include ffmpeg and ffprobe for video processing. The user manual covers installation, path setup, getting help, upscaling images and videos, and contributing back to the project. Benchmarks are provided for performance evaluation on different hardware setups.

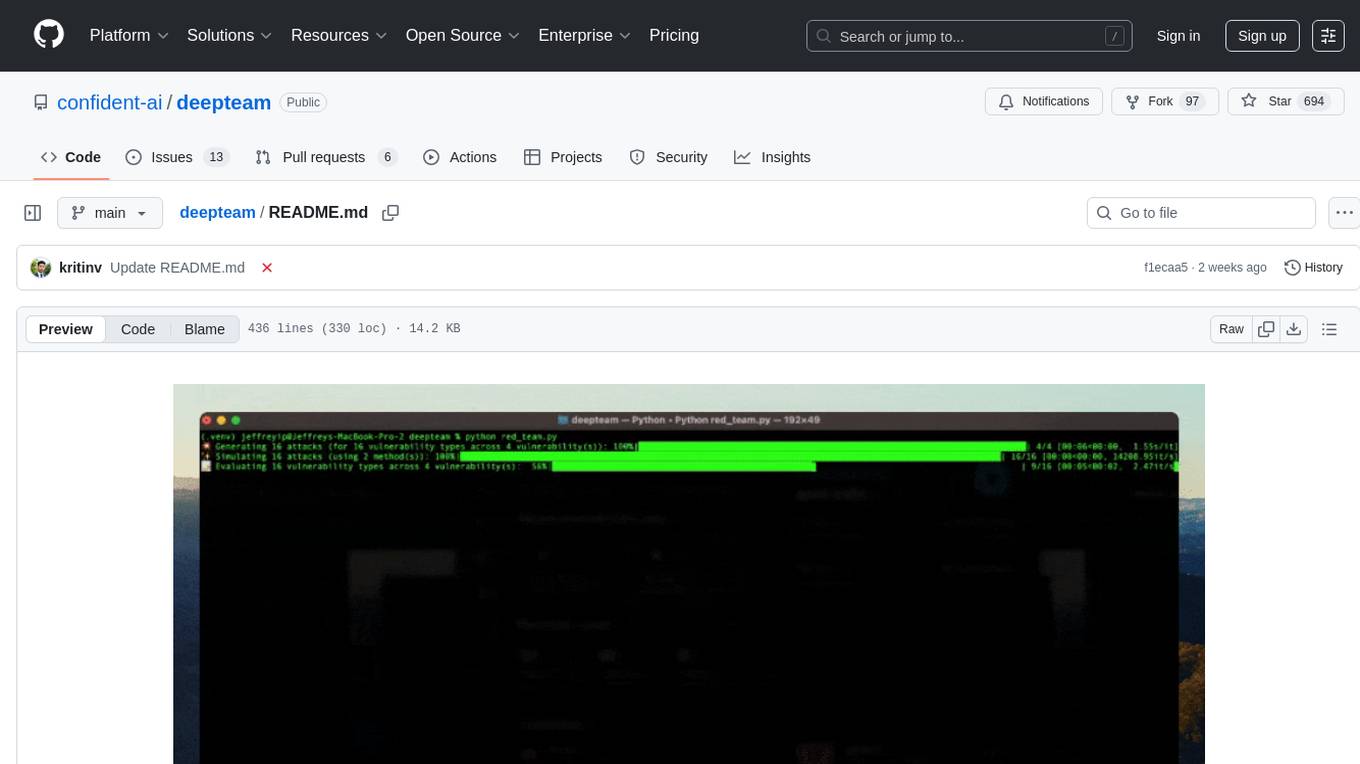

deepteam

Deepteam is a powerful open-source tool designed for deep learning projects. It provides a user-friendly interface for training, testing, and deploying deep neural networks. With Deepteam, users can easily create and manage complex models, visualize training progress, and optimize hyperparameters. The tool supports various deep learning frameworks and allows seamless integration with popular libraries like TensorFlow and PyTorch. Whether you are a beginner or an experienced deep learning practitioner, Deepteam simplifies the development process and accelerates model deployment.

BoxPwnr

BoxPwnr is a tool designed to test the performance of different agentic architectures using Large Language Models (LLMs) to autonomously solve HackTheBox machines. It provides a plug and play system with various strategies and platforms supported. BoxPwnr uses an iterative process where LLMs receive system prompts, suggest commands, execute them in a Docker container, analyze outputs, and repeat until the flag is found. The tool automates commands, saves conversations and commands for analysis, and tracks usage statistics. With recent advancements in LLM technology, BoxPwnr aims to evaluate AI systems' reasoning capabilities, creative thinking, security understanding, problem-solving skills, and code generation abilities.
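The iterative process described above (prompt, suggested command, execution, output analysis, repeat until the flag is found) can be sketched as a short Python loop. This is a minimal illustration, not BoxPwnr's code: `solve_loop`, `scripted_model`, and `fake_executor` are hypothetical names, the flag format `HTB{...}` is assumed for the example, and both the LLM and the Docker container are replaced by stubs.

```python
import re
from typing import Callable, List, Optional, Tuple

# Assumed flag format, used only for this illustration.
FLAG_PATTERN = re.compile(r"HTB\{[^}]+\}")

Transcript = List[Tuple[str, str]]  # (role, text) pairs


def solve_loop(
    suggest_command: Callable[[Transcript], str],
    run_command: Callable[[str], str],
    system_prompt: str,
    max_turns: int = 10,
) -> Tuple[Optional[str], Transcript]:
    """Alternate between model suggestions and command execution."""
    transcript: Transcript = [("system", system_prompt)]
    for _ in range(max_turns):
        command = suggest_command(transcript)      # model proposes a command
        transcript.append(("assistant", command))
        output = run_command(command)              # executor runs it
        transcript.append(("user", output))        # output is fed back
        match = FLAG_PATTERN.search(output)
        if match:                                  # stop once a flag appears
            return match.group(0), transcript
    return None, transcript


def scripted_model(transcript: Transcript) -> str:
    """Stub standing in for an LLM: replays a fixed list of commands."""
    script = ["nmap -sV target", "cat /root/flag.txt"]
    turn = sum(1 for role, _ in transcript if role == "assistant")
    return script[min(turn, len(script) - 1)]


def fake_executor(command: str) -> str:
    """Stub standing in for a sandboxed container: returns canned output."""
    outputs = {
        "nmap -sV target": "22/tcp open ssh",
        "cat /root/flag.txt": "HTB{example_flag}",
    }
    return outputs.get(command, "")


if __name__ == "__main__":
    flag, transcript = solve_loop(scripted_model, fake_executor, "Find the flag.")
    print(flag, len(transcript))
```

Keeping the full transcript mirrors the description's point about saving conversations and commands for later analysis; the `max_turns` cap is an added safeguard so a stuck model does not loop forever.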

SWE-bench-Live

SWE-bench-Live is a live benchmark dataset for evaluating AI systems' ability to complete real-world software engineering tasks. It is continuously updated through an automated curation pipeline, providing the community with up-to-date task instances for rigorous and contamination-free evaluation. The dataset is designed to test the performance of various AI models on software engineering tasks and supports multiple programming languages and operating systems.

20 - OpenAI GPTs

IELTS AI Checker (Speaking and Writing)

Provides IELTS speaking and writing feedback and scores.

React Native Testing Library Owl

Assists in writing React Native tests using the React Native Testing Library.

Battery Expert

Professional experts in the battery field answer your battery questions in the simplest way. The database is constantly updated, most recently on 2024/01/10.

IQ Test

IQ Test is designed to simulate an IQ testing environment. It provides a formal and objective experience, delivering questions and processing answers in a straightforward manner.

GetPaths

This GPT takes in content related to an application, such as HTTP traffic, JavaScript files, source code, etc., and outputs lists of URLs that can be used for further testing.

Wordon, World's Worst Customer | Divergent AI

I simulate tough Customer Support scenarios for Agent Training.

Test Shaman

Test Shaman: Guiding software testing with Grug wisdom and humor, balancing fun with practical advice.

Raven's Progressive Matrices Test

Provides Raven's Progressive Matrices test with explanations and calculates your IQ score.