Best AI tools for: Optimize Training Throughput

20 - AI tool Sites

Cirrascale Cloud Services

Cirrascale Cloud Services is an AI tool that offers cloud solutions for Artificial Intelligence applications. The platform provides a range of cloud services and products tailored for AI innovation, including NVIDIA GPU Cloud, AMD Instinct Series Cloud, Qualcomm Cloud, Graphcore, Cerebras, and SambaNova. Cirrascale's AI Innovation Cloud enables users to test and deploy on leading AI accelerators in one cloud, democratizing AI by delivering high-performance AI compute and scalable deep learning solutions. The platform also offers professional and managed services, tailored multi-GPU server options, and high-throughput storage and networking solutions to accelerate development, training, and inference workloads.

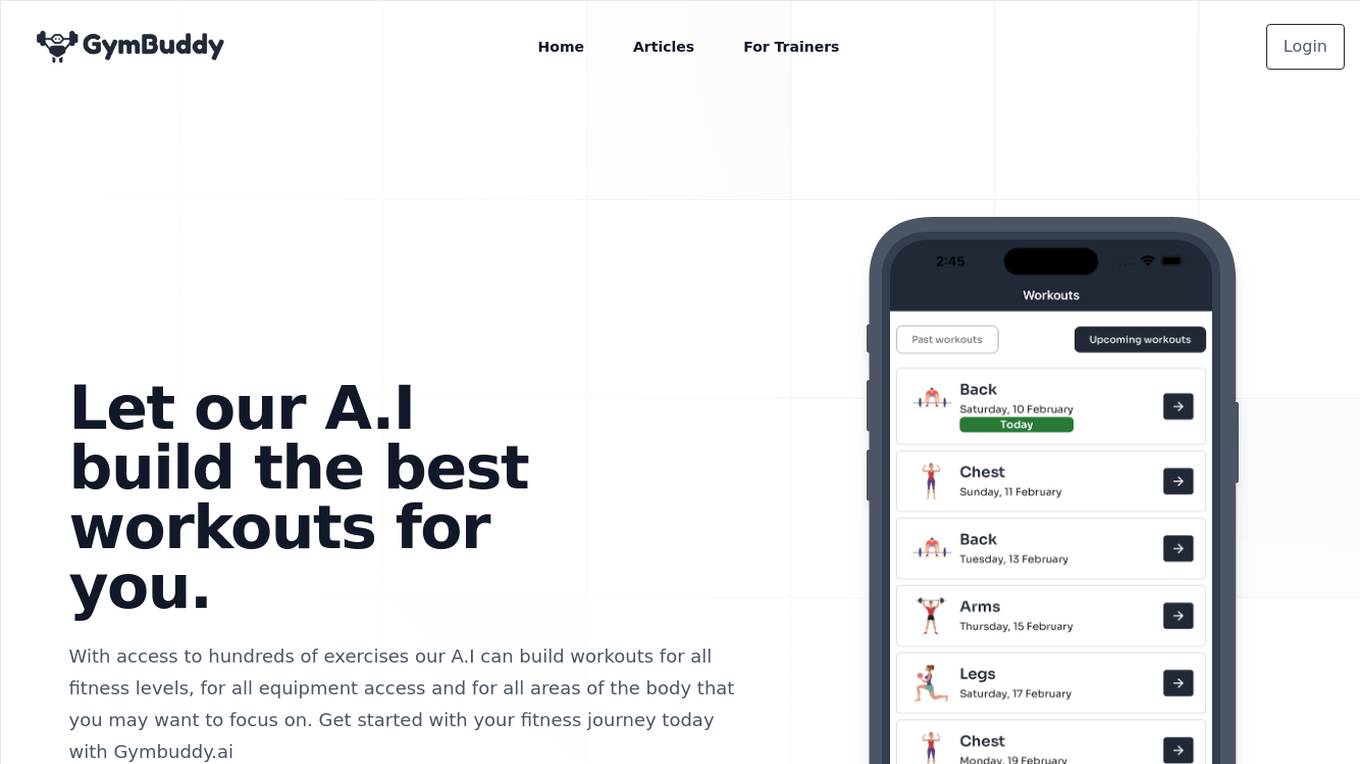

GymBuddy.ai

GymBuddy.ai is an AI workout planner that leverages artificial intelligence to create personalized workout plans tailored to individual fitness levels, equipment access, and target areas of the body. The platform offers a wide range of exercises and features to help users achieve their fitness goals effectively. With advanced analytics and full workout customization, GymBuddy.ai aims to revolutionize the way people approach their fitness journey.

Answer.AI

Answer.AI is an AI tool developed by Benjamin Clavié, focusing on NLP and IR with a special interest in developing smaller models to power LLM-based applications. The tool includes models like answerai-colbert-small-v1 and JaColBERTv2.5🇯🇵, offering efficient pooling tricks and optimized retrieval training for lower-resource languages. Benjamin Clavié shares insights and thoughts on the ML/NLP/IR ecosystem through blog posts on the website.

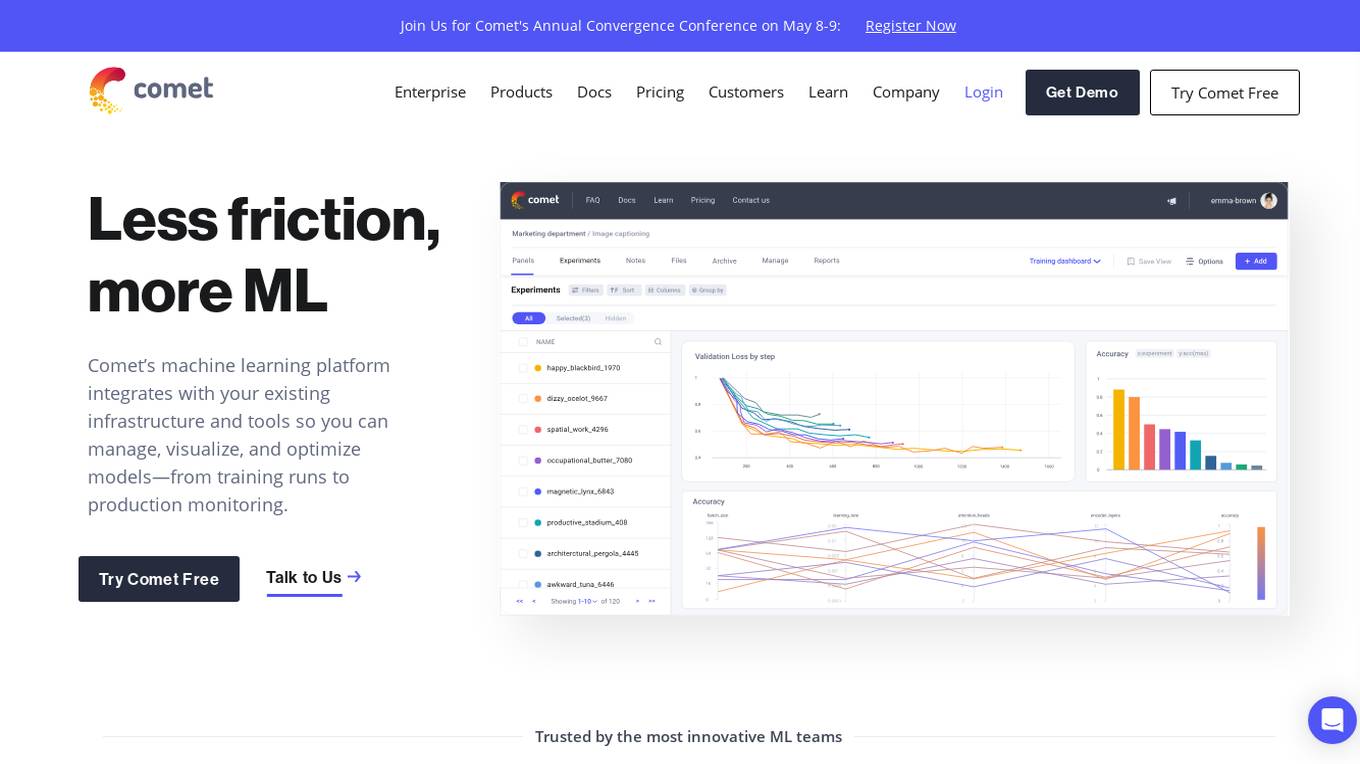

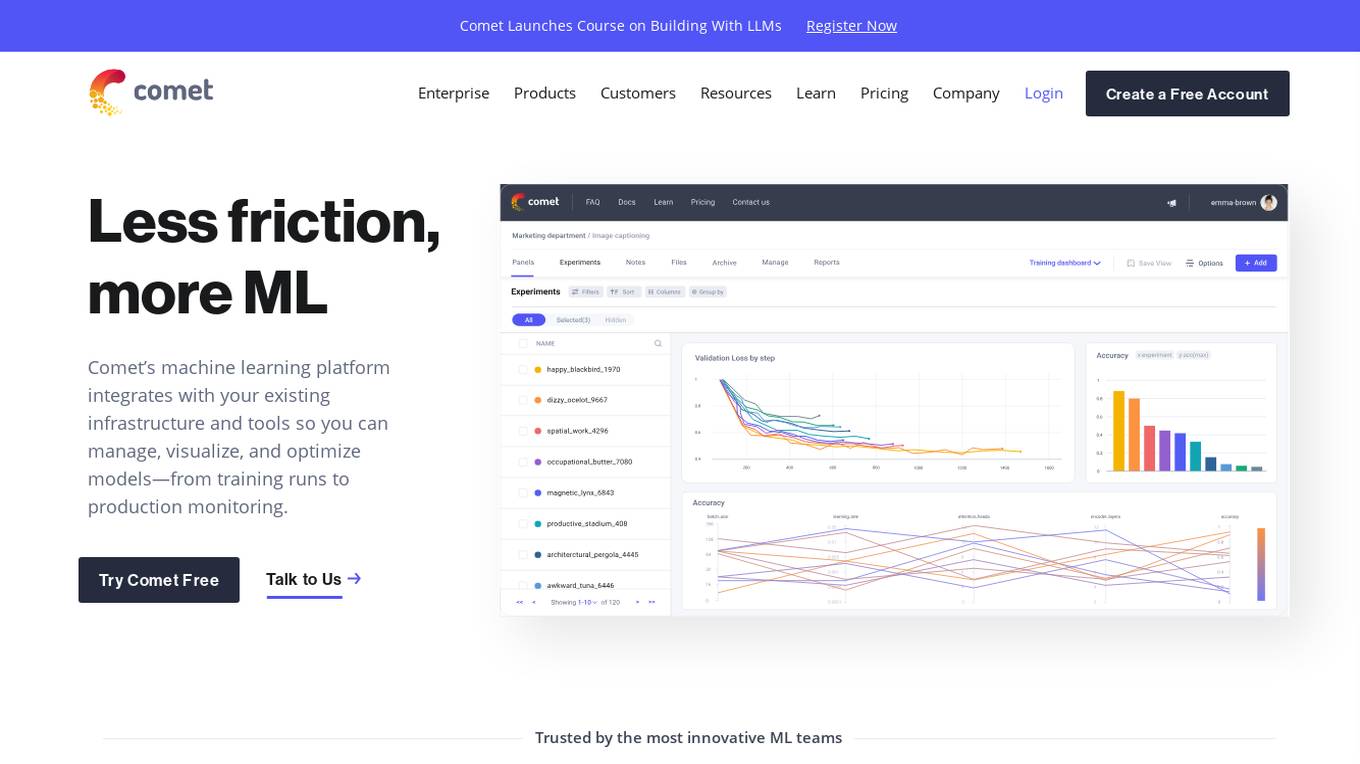

Comet ML

Comet ML is an extensible, fully customizable machine learning platform that aims to move ML forward by supporting productivity, reproducibility, and collaboration. It integrates with existing infrastructure and tools to manage, visualize, and optimize models from training runs to production monitoring. Users can track and compare training runs, create a model registry, and monitor models in production all in one platform. Comet's platform can be run on any infrastructure, enabling users to reshape their ML workflow and bring their existing software and data stack.

Macgence AI Training Data Services

Macgence is an AI training data services platform that offers high-quality off-the-shelf structured training data for organizations to build effective AI systems at scale. They provide services such as custom data sourcing, data annotation, data validation, content moderation, and localization. Macgence combines global linguistic, cultural, and technological expertise to create high-quality datasets for AI models, enabling faster time-to-market across the entire model value chain. With more than 5 years of experience, they support and scale AI initiatives of leading global innovators by designing custom data collection programs. Macgence specializes in handling AI training data for text, speech, image, and video data, offering cognitive annotation services to unlock the potential of unstructured textual data.

Bibit AI

Bibit AI is a real estate marketing AI designed to enhance the efficiency and effectiveness of real estate marketing and sales. It helps create listings, descriptions, and other property content, alongside a host of other features. Billing itself as the world's first AI for real estate, Bibit AI aims to transform the industry by boosting efficiency and simplifying tasks like listing creation and content generation.

Wedo AI

Wedo AI is an all-in-one AI-powered platform designed to help businesses attract customers, convert leads, and manage various aspects of online marketing, sales, and delivery. It offers a range of tools such as AI ads, chat bots, social media planner, websites, ecommerce store, memberships, CRM, email marketing, analytics, and more. Wedo AI aims to streamline processes, increase efficiency, and drive revenue growth for entrepreneurs, startups, influencers, non-profits, coaches, contractors, freelancers, and consultants. The platform provides features for managing finances, automating billing, creating funnels, building websites, selling products, engaging with customers, and analyzing data to make informed decisions.

Aiden Solutions

Aiden Solutions is an AI application that provides advanced tech support and training for modern businesses. It leverages AI technology to optimize technical support and training, offering tailored AI tools for businesses to enhance support, streamline training, and drive productivity. The platform features customizable chatbots, multi-channel integration, analytics & reporting, file management, security & compliance, and message automation. Aiden Solutions is known for its efficient, intuitive, and always available support, offering 24/7 intelligent assistance to improve customer interactions and operational efficiency. The application is designed with a user-friendly interface and powerful analytics for data-driven decisions.

BuildAi

BuildAi is an AI tool that aims to provide the lowest-cost GPU cloud for AI training on the market. The platform is powered by renewable energy, enabling companies to train AI models at a significantly reduced cost. BuildAi offers interruptible, short-term reserved capacity, and high-uptime pricing options. The application focuses on optimizing infrastructure for training and fine-tuning machine learning models, not inference, and aims to decrease the impact of computing on the planet. With features like data transfer support, SSH access, and monitoring tools, BuildAi offers a comprehensive solution for ML teams.

SymTrain

SymTrain is an AI-powered platform that automates training and coaching for contact center agents. By utilizing simulations and AI technology, SymTrain offers a cost-effective solution that enhances agent performance, reduces training time, and improves overall customer satisfaction. The platform provides automated role-play scenarios, consistent feedback, and data-driven coaching to help organizations streamline their training processes and achieve better results. SymTrain revolutionizes how companies train and coach their agents, leading to increased efficiency, revenue, and customer satisfaction.

FluidStack

FluidStack is a leading GPU cloud platform designed for AI and LLM (Large Language Model) training. It offers unlimited scale for AI training and inference, allowing users to access thousands of fully-interconnected GPUs on demand. Trusted by top AI startups, FluidStack aggregates GPU capacity from data centers worldwide, providing access to over 50,000 GPUs for accelerating training and inference. With 1000+ data centers across 50+ countries, FluidStack ensures reliable and efficient GPU cloud services at competitive prices.

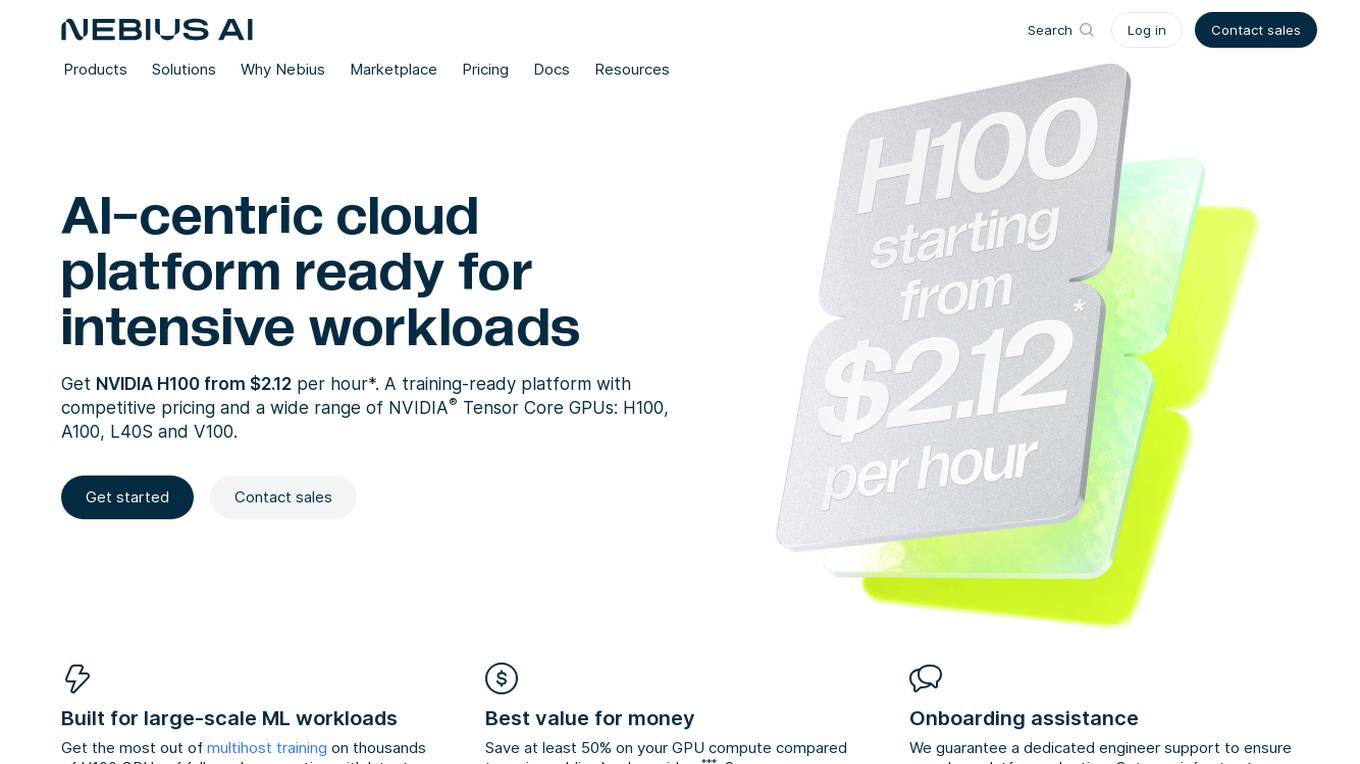

Nebius AI

Nebius AI is an AI-centric cloud platform designed to handle intensive workloads efficiently. It offers a range of advanced features to support various AI applications and projects. The platform ensures high performance and security for users, enabling them to leverage AI technology effectively in their work. With Nebius AI, users can access cutting-edge AI tools and resources to enhance their projects and streamline their workflows.

Qualint

Qualint is an AI-driven platform revolutionizing call center quality assurance. It empowers call centers with superior analytics, comprehensive call analysis, and streamlined operations. The platform provides real-time insights, tailored quality standards, and enhances collaboration to ensure every customer interaction is understood. Qualint offers core features such as real-time call uploads & analysis, efficiency improvement, information accessibility, and team communication. With different pricing plans, Qualint caters to call centers of all sizes, from small to large, helping them optimize agent performance, navigate regulatory challenges, and elevate customer service.

FineTuneAIs.com

FineTuneAIs.com is a platform that specializes in custom AI model fine-tuning. Users can fine-tune their AI models to achieve better performance and accuracy.

ONNX Runtime

ONNX Runtime is a production-grade AI engine designed to accelerate machine learning training and inferencing in various technology stacks. It supports multiple languages and platforms, optimizing performance for CPU, GPU, and NPU hardware. ONNX Runtime powers AI in Microsoft products and is widely used in cloud, edge, web, and mobile applications. It also enables large model training and on-device training, offering state-of-the-art models for tasks like image synthesis and text generation.

Jyotax.ai

Jyotax.ai is an AI-powered tax solution that revolutionizes tax compliance by simplifying the tax process with advanced AI solutions. It offers comprehensive bookkeeping, payroll processing, worldwide tax returns and filing automation, profit recovery, contract compliance, and financial modeling and budgeting services. The platform ensures accurate reporting, real-time compliance monitoring, global tax solutions, customizable tax tools, and seamless data integration. Jyotax.ai optimizes tax workflows, ensures compliance with precise AI tax calculations, and simplifies global tax operations through innovative AI solutions.

Athletica AI

Athletica AI is an AI-powered athletic training and personalized fitness application that offers tailored coaching and training plans for various sports like cycling, running, duathlon, triathlon, and rowing. It adapts to individual fitness levels, abilities, and availability, providing daily step-by-step training plans and comprehensive session analyses. Athletica AI integrates seamlessly with workout data from platforms like Garmin, Strava, and Concept 2 to craft personalized training plans and workouts. The application aims to help athletes train smarter, not harder, by leveraging the power of AI to optimize performance and achieve fitness goals.

The OR Society

The OR Society is a professional membership body that supports the development of people working in operational research, data science, and analytics. The society provides a range of services to its members, including access to world-class journals, events and conferences, training courses, and pro bono opportunities. The OR Society also works to promote the use of operational research in all areas of industry, business, government, the community, and the third sector.

2 - Open Source AI Tools

Liger-Kernel

Liger Kernel is a collection of Triton kernels designed for LLM training, increasing training throughput by 20% and reducing memory usage by 60%. It includes Hugging Face-compatible modules such as RMSNorm, RoPE, SwiGLU, CrossEntropy, and FusedLinearCrossEntropy. The tool works with Flash Attention, PyTorch FSDP, and Microsoft DeepSpeed, aiming to enhance model efficiency and performance for researchers, ML practitioners, and curious novices.
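
To put a throughput figure like the 20% above in context, the sketch below shows one way tokens-per-second throughput and the relative gain between two runs might be measured. It is a generic illustration, not part of Liger Kernel: `measure_throughput`, `relative_gain`, and the `step_fn` callback are hypothetical names, and the numbers in the usage lines are made up for the arithmetic.

```python
import time
from typing import Callable


def measure_throughput(step_fn: Callable[[], int],
                       num_steps: int = 10,
                       warmup: int = 2) -> float:
    """Return tokens per second over `num_steps` timed calls of `step_fn`.

    `step_fn` is a hypothetical callback that runs one training step and
    returns the number of tokens it processed. On a GPU the callback would
    also need to wait for device work to finish before returning, otherwise
    the timing only covers kernel launches.
    """
    # Warm-up steps are excluded so one-time setup costs do not skew the result.
    for _ in range(warmup):
        step_fn()

    total_tokens = 0
    start = time.perf_counter()
    for _ in range(num_steps):
        total_tokens += step_fn()
    elapsed = time.perf_counter() - start
    return total_tokens / elapsed


def relative_gain(baseline: float, optimized: float) -> float:
    """Fractional throughput improvement of `optimized` over `baseline`."""
    return (optimized - baseline) / baseline


# Illustrative numbers only: 1,000 tokens/s before, 1,200 tokens/s after.
gain = relative_gain(1000.0, 1200.0)
print(f"throughput gain: {gain:.0%}")
```

Comparing the two runs on the same hardware, batch size, and sequence length keeps the gain attributable to the kernel change rather than to the setup.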

verl

veRL is a flexible and efficient reinforcement learning training framework designed for large language models (LLMs). It allows easy extension of diverse RL algorithms, seamless integration with existing LLM infrastructures, and flexible device mapping. The framework achieves state-of-the-art throughput and efficient actor model resharding with 3D-HybridEngine. It supports popular HuggingFace models and is suitable for users working with PyTorch FSDP, Megatron-LM, and vLLM backends.

20 - OpenAI Gpts

Training Innovator

Helps develop training modules in Business, Management, Leadership, and HRM.

Singularity Chisel Code Architect

Your digital design & verification guide. The conversation data will not be used for training.

CV & Resume ATS Optimize + 🔴Match-JOB🔴

Professional Resume & CV Assistant 📝 Optimize for ATS 🤖 Tailor to Job Descriptions 🎯 Compelling Content ✨ Interview Tips 💡

Website Conversion by B12

I'll help you optimize your website for more conversions, and compare your site's CRO potential to competitors’.

Thermodynamics Advisor

Advises on thermodynamic processes to optimize system efficiency.

Cloud Architecture Advisor

Guides cloud strategy and architecture to optimize business operations.

International Tax Advisor

Advises on international tax matters to optimize a company's global tax position.

Investment Management Advisor

Provides strategic financial guidance on investment behavior to optimize an organization's wealth.

ESG Strategy Navigator 🌱🧭

Optimize your business with sustainable practices! ESG Strategy Navigator helps integrate Environmental, Social, Governance (ESG) factors into corporate strategy, ensuring compliance, ethical impact, and value creation. 🌟