Best AI Tools to Optimize AI Performance

20 - AI Tool Sites

Arthur

Arthur is an MLOps platform that simplifies the deployment, monitoring, and management of traditional and generative AI models, with a focus on scalability, security, compliance, and efficient enterprise use. Arthur's turnkey solutions enable companies to integrate the latest generative AI technologies into their operations and make informed, data-driven decisions. The platform offers open-source evaluation products, model-agnostic monitoring, deployment with leading data science tools, and model risk management capabilities. It emphasizes collaboration, security, and compliance with industry standards.

deepsense.ai

deepsense.ai is an AI development company that offers AI guidance and implementation services across industries such as retail, manufacturing, financial services, IT operations, TMT, and medical and beauty. The company provides Generative AI Solution Center resources to help plan and implement AI solutions. With a focus on AI vision, solutions, and products, deepsense.ai draws on its decade of AI experience to accelerate AI implementation for businesses.

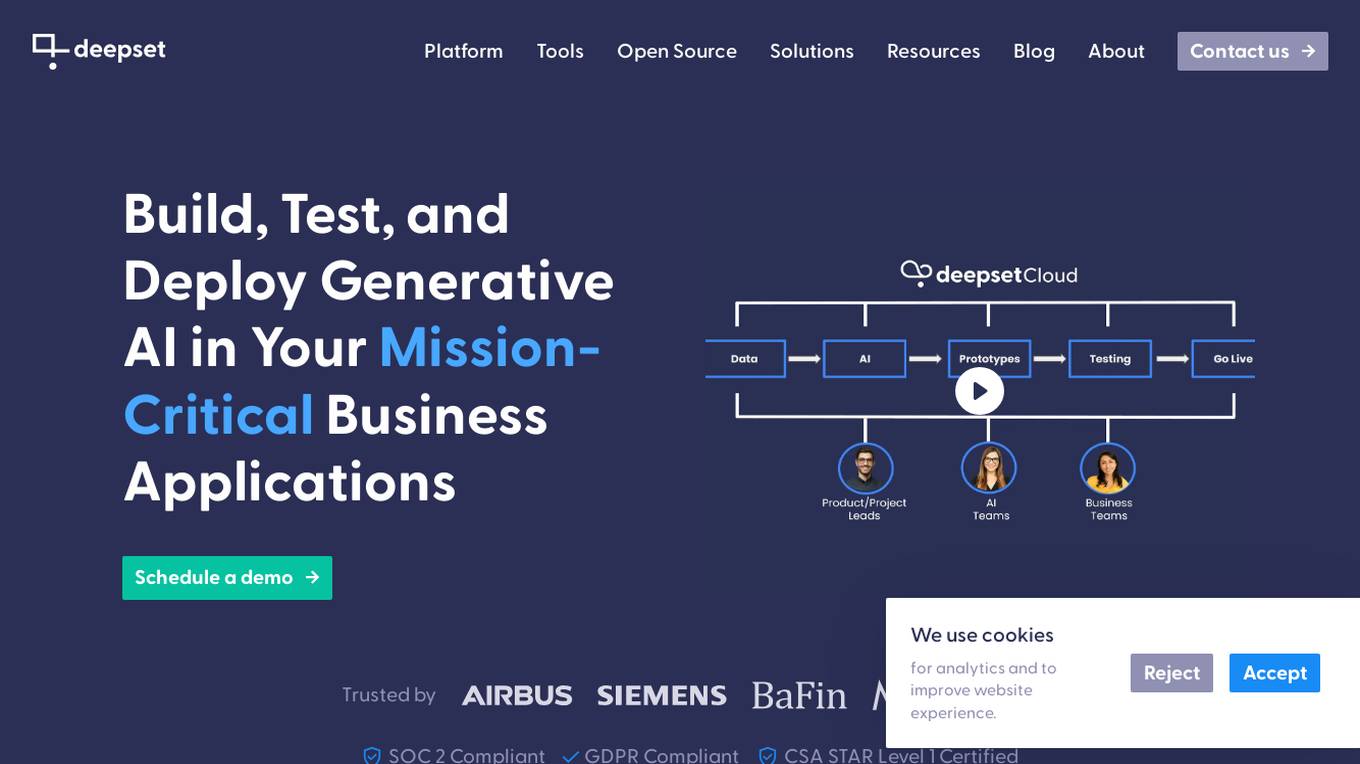

deepset

deepset is an AI platform that offers enterprise-level products and solutions for AI teams. It provides deepset Cloud, a platform built with Haystack, enabling fast and accurate prototyping, building, and launching of advanced AI applications. The platform streamlines the AI application development lifecycle, offering processes, tools, and expertise to move from prototype to production efficiently. With deepset Cloud, users can optimize solution accuracy, performance, and cost, and deploy AI applications at any scale with one click. The platform also allows users to explore new models and configurations without limits, extending their team with access to world-class AI engineers for guidance and support.

Teammately

Teammately is an agentic AI tool for the AI development process. It is designed to let human AI engineers focus on the more creative and productive parts of AI development. Teammately follows LLM DevOps best practices and offers features like Development Prompt Engineering, Knowledge Tuning, Evaluation, and Optimization to assist in the AI development process. The tool aims to let AI agents (which it calls "AI AI-Engineers") handle technical tasks, while human AI engineers focus on planning and on aligning AI with human preferences and requirements.

NeuReality

NeuReality is an AI-centric solution designed to democratize AI adoption by providing purpose-built tools for deploying and scaling inference workflows. Its AI-centric architecture combines hardware and software components to optimize performance and scalability. The platform offers a one-stop shop for AI inference, addressing barriers to AI adoption and streamlining computational processes. NeuReality's tools aim to make AI easier to deploy, more affordable, and simpler to use and manage across a wide range of applications.

Cambricon

Cambricon is an AI technology company that specializes in developing intelligent acceleration cards and systems. It offers a range of products including cloud AI acceleration cards, edge AI chips, and intelligent processing units. Cambricon's chiplet technology and MLUarch03 architecture provide high-performance AI solutions for training and inference tasks. The company is dedicated to advancing the AI industry through its hardware and software platforms.

Ardor

Ardor is an AI tool that offers an all-in agentic software development lifecycle automation platform. It helps users build, deploy, and scale AI agents on the cloud efficiently and cost-effectively. With Ardor, users can start with a prompt, design AI agents visually, see their product get built, refine and iterate, and launch in minutes. The platform provides real-time collaboration features, simple pricing plans, and various tools like Ardor Copilot, AI Agent-Builder Canvas, Instant Build Messages, AI Debugger, Proactive Monitoring, Role-Based Access Control, and Single Sign-On.

Freeplay

Freeplay is a tool that helps product teams experiment, test, monitor, and optimize AI features for customers. It provides a single pane of glass for the entire team, lightweight developer SDKs for Python, Node, and Java, and deployment options to meet compliance needs. Freeplay also offers best practices for the entire AI development lifecycle.

Lunary

Lunary is an AI developer platform designed to bring AI applications to production. It offers a comprehensive set of tools to manage, improve, and protect LLM apps. With features like Logs, Metrics, Prompts, Evaluations, and Threads, Lunary empowers users to monitor and optimize their AI agents effectively. The platform supports tasks such as tracing errors, labeling data for fine-tuning, optimizing costs, running benchmarks, and testing open-source models. Lunary also facilitates collaboration with non-technical teammates through features like A/B testing, versioning, and clean source-code management.

Testmyprompt

Testmyprompt is an AI prompt software designed for AI Automation Agencies. It allows users to build and test AI prompts quickly and efficiently, saving significant time and ensuring consistency in prompt creation. The tool enables users to simulate thousands of conversations in seconds, import AI settings, add test questions with variations and success criteria, and analyze AI performance to identify areas of improvement. Testmyprompt helps users optimize their AI models for better performance and customer interaction.
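The idea of test questions with variations and success criteria can be illustrated with a minimal sketch. This is not Testmyprompt's API: the `PromptTest` class, the `run_tests` function, the substring-based success criterion, and the `fake_model` stand-in are all hypothetical names and assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class PromptTest:
    question: str          # base test question
    variations: List[str]  # alternative phrasings of the same question
    must_contain: str      # assumed success criterion: substring expected in the answer


def run_tests(model: Callable[[str], str], tests: List[PromptTest]) -> float:
    """Return the fraction of questions and variations whose answer meets the criterion."""
    passed = 0
    total = 0
    for test in tests:
        for text in [test.question, *test.variations]:
            total += 1
            answer = model(text)
            if test.must_contain.lower() in answer.lower():
                passed += 1
    return passed / total if total else 0.0


def fake_model(prompt: str) -> str:
    # Hypothetical stand-in for a real model call; replace with an actual client.
    if "hours" in prompt.lower():
        return "We are open 9am to 5pm."
    return "Sorry, I do not know."


tests = [
    PromptTest(
        question="What are your opening hours?",
        variations=["Which hours are you open?", "When do you open?"],
        must_contain="9am",
    )
]
pass_rate = run_tests(fake_model, tests)
print(f"pass rate: {pass_rate:.2f}")
```

Here the third phrasing fails because the stand-in model only recognizes the word "hours", which is the kind of gap that testing with variations is meant to surface.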

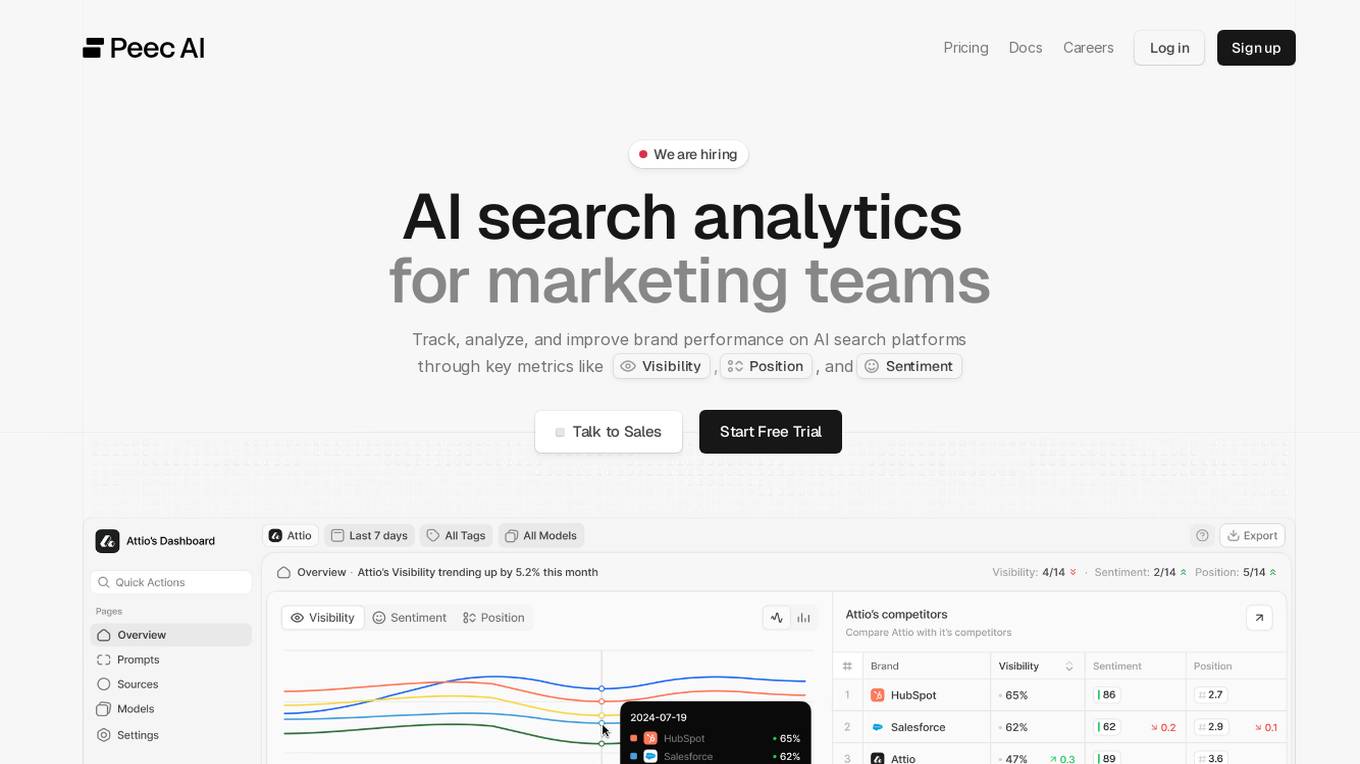

Peec AI

Peec AI is an AI search analytics tool designed for marketing teams to track, analyze, and improve brand performance on AI search platforms. It provides key metrics such as Visibility, Position, and Sentiment to help businesses understand how AI perceives their brand. The platform offers insights on AI visibility, prompts analysis, and competitor tracking to enhance marketing strategies in the era of AI and generative search.

Vocera

Vocera is an AI voice agent testing tool that allows users to test and monitor voice AI agents efficiently. It enables users to launch voice agents in minutes, ensuring a seamless conversational experience. With features like testing against AI-generated datasets, simulating scenarios, and monitoring AI performance, Vocera helps in evaluating and improving voice agent interactions. The tool provides real-time insights, detailed logs, and trend analysis for optimal performance, along with instant notifications for errors and failures. Vocera is designed to work for everyone, offering an intuitive dashboard and data-driven decision-making for continuous improvement.

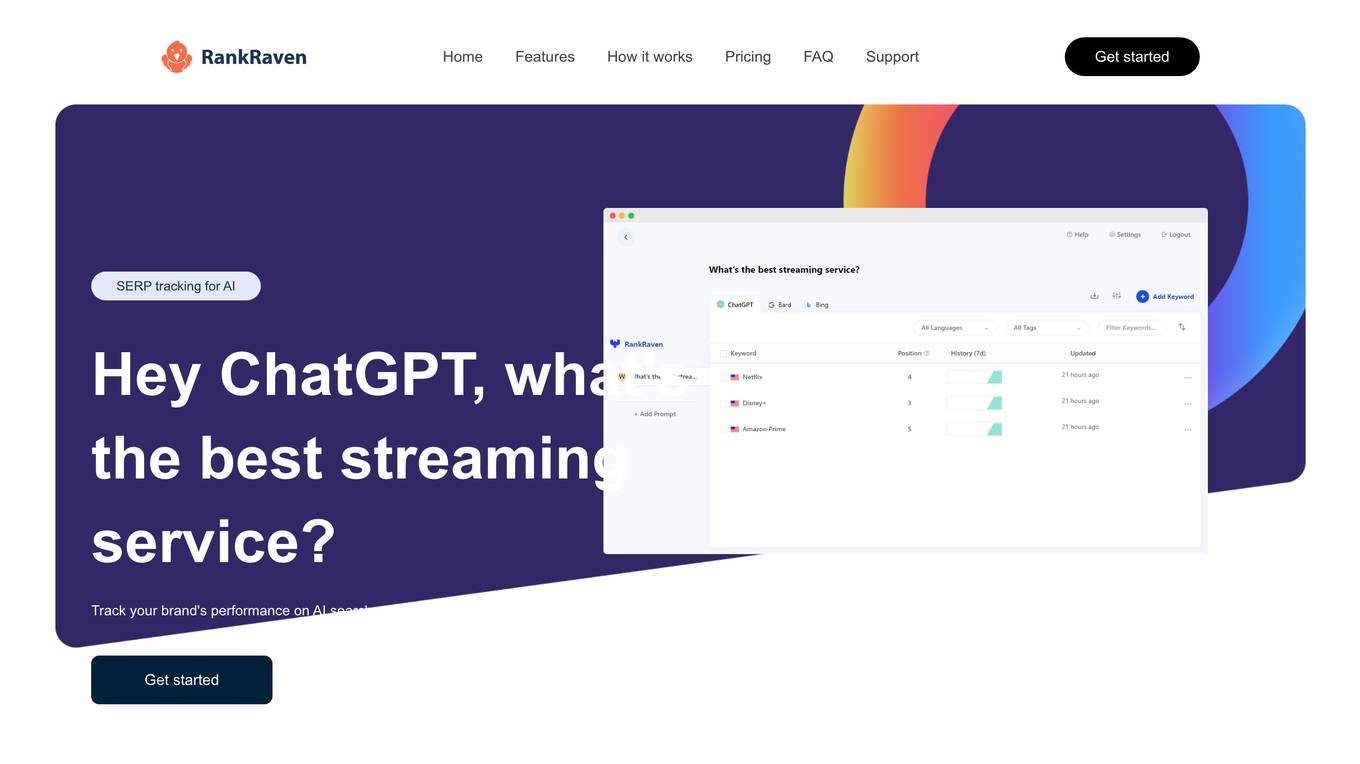

RankRaven

RankRaven is an advanced AI rank tracking tool that allows users to monitor and analyze their brand's performance on AI search engines. The tool leverages multiple AI models such as OpenAI ChatGPT, Google Bard, and Microsoft Bing to provide fast and accurate SEO tracking. Users can track their brand's rank across different AI search models, receive daily rank updates, compare performance across languages and countries, and analyze trends over time. RankRaven automates the process of running prompts and checking keyword appearances in model answers, making it a valuable tool for individuals, businesses, and agencies looking to optimize their AI SEO strategies.
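Checking keyword appearances in a model answer can be sketched as follows. This is an illustrative assumption about how such a check might work, not RankRaven's actual method: the `first_mention_rank` function, the "order of first mention" ranking rule, and the sample answer and brand names (other than RankRaven) are hypothetical.

```python
import re
from typing import Dict, List, Optional


def first_mention_rank(answer: str, brands: List[str]) -> Dict[str, Optional[int]]:
    """Rank brands by where they first appear in the answer (1 = earliest).

    Brands that never appear get None.
    """
    positions: Dict[str, Optional[int]] = {}
    for brand in brands:
        # Case-insensitive whole-word match on the brand name.
        match = re.search(r"\b" + re.escape(brand) + r"\b", answer, flags=re.IGNORECASE)
        positions[brand] = match.start() if match else None

    found = sorted(
        (b for b in brands if positions[b] is not None),
        key=lambda b: positions[b],
    )
    ranks: Dict[str, Optional[int]] = {b: None for b in brands}
    for i, brand in enumerate(found, start=1):
        ranks[brand] = i
    return ranks


# Hypothetical model answer; in practice this would come from an AI search model.
answer = "Popular options include Acme Tracker, then RankRaven, and a few smaller tools."
ranks = first_mention_rank(answer, ["RankRaven", "Acme Tracker", "OtherTool"])
print(ranks)
```

Running the same prompt daily and storing the resulting ranks would give the kind of trend data the description mentions.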

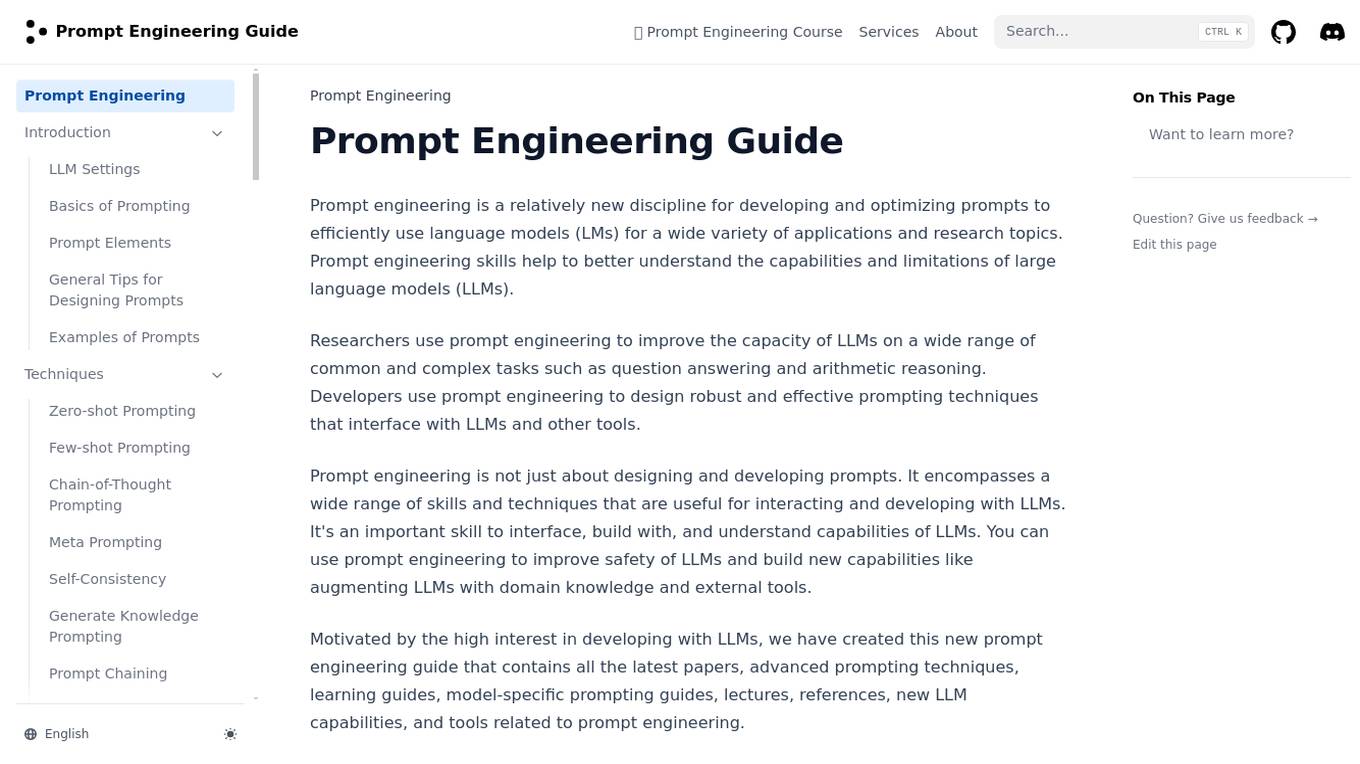

Prompt Engineering

Prompt Engineering is a discipline focused on developing and optimizing prompts to efficiently utilize language models (LMs) for various applications and research topics. It involves skills to understand the capabilities and limitations of large language models, improving their performance on tasks like question answering and arithmetic reasoning. Prompt engineering is essential for designing robust prompting techniques that interact with LLMs and other tools, enhancing safety and building new capabilities by augmenting LLMs with domain knowledge and external tools.

BrightBid

BrightBid is an AI-powered performance marketing engine that offers ad optimization solutions for businesses. It provides tools for keyword management, campaign optimization, insights, and protection. The platform enables users to automate bids, optimize keywords, monitor competitors, and gain powerful insights to make data-driven decisions. BrightBid aims to help businesses boost their advertising performance, save time, and improve ROI through AI-driven strategies and automation.

DevSecCops

DevSecCops is an AI-driven automation platform designed to revolutionize DevSecOps processes. The platform offers solutions for cloud optimization, machine learning operations, data engineering, application modernization, infrastructure monitoring, security, compliance, and more. With features like one-click infrastructure security scan, AI engine security fixes, compliance readiness using AI engine, and observability, DevSecCops aims to enhance developer productivity, reduce cloud costs, and ensure secure and compliant infrastructure management. The platform leverages AI technology to identify and resolve security issues swiftly, optimize AI workflows, and provide cost-saving techniques for cloud architecture.

Prompt Dev Tool

Prompt Dev Tool is an AI application designed to boost prompt engineering efficiency by helping users create, test, and optimize AI prompts for better results. It offers an intuitive interface, real-time feedback, model comparison, variable testing, prompt iteration, and advanced analytics. The tool is suitable for both beginners and experts, providing detailed insights to enhance AI interactions and improve outcomes.

RagaAI Catalyst

RagaAI Catalyst is a sophisticated AI observability, monitoring, and evaluation platform designed to help users observe, evaluate, and debug AI agents at all stages of Agentic AI workflows. It offers features like visualizing trace data, instrumenting and monitoring tools and agents, enhancing AI performance, agentic testing, comprehensive trace logging, evaluation for each step of the agent, enterprise-grade experiment management, secure and reliable LLM outputs, finetuning with human feedback integration, defining custom evaluation logic, generating synthetic data, and optimizing LLM testing with speed and precision. The platform is trusted by AI leaders globally and provides a comprehensive suite of tools for AI developers and enterprises.

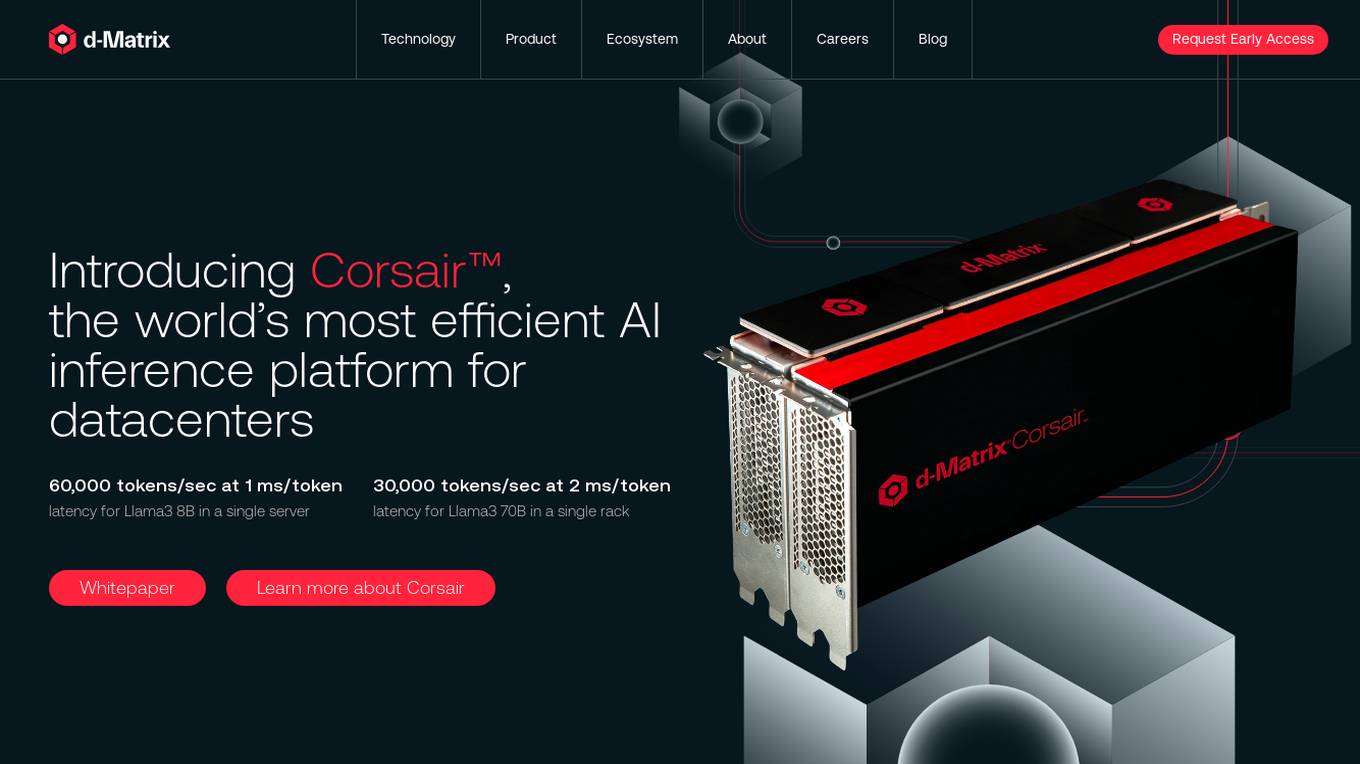

d-Matrix

d-Matrix is an AI tool that offers ultra-low latency batched inference for generative AI technology. It introduces Corsair™, the world's most efficient AI inference platform for datacenters, providing high performance, efficiency, and scalability for large-scale inference tasks. The tool aims to transform the economics of AI inference by delivering fast, sustainable, and scalable AI solutions without compromising on speed or usability.

Peak

Peak is a game-changing AI platform designed to optimize product inventory and pricing for businesses of all sizes. It offers unique AI solutions tailored to each business, transforming decision-making processes and driving competitive advantage. With a focus on inventory intelligence, pricing intelligence, and AI performance guarantee, Peak aims to accelerate growth, widen margins, and increase profits for its users.

3 - Open Source AI Tools

Mortal

Mortal (凡夫) is a free and open source AI for Japanese mahjong, powered by deep reinforcement learning. It provides a comprehensive solution for playing Japanese mahjong with AI assistance. The project focuses on utilizing deep reinforcement learning techniques to enhance gameplay and decision-making in Japanese mahjong. Mortal offers a user-friendly interface and detailed documentation to assist users in understanding and utilizing the AI effectively. The project is actively maintained and welcomes contributions from the community to further improve the AI's capabilities and performance.

AIInfra

AIInfra is an open-source project focused on AI infrastructure, specifically targeting large models in distributed clusters, distributed architecture, distributed training, and algorithms related to large models. The project aims to explore and study system design in artificial intelligence and deep learning, with a focus on the hardware and software stack for building AI large model systems. It provides a comprehensive curriculum covering key topics such as system overview, AI computing clusters, communication and storage, cluster containers and cloud-native technologies, distributed training, distributed inference, large model algorithms and data, and applications of large models.

cactus

Cactus is an energy-efficient and fast AI inference framework designed for phones, wearables, and resource-constrained ARM-based devices. It takes a bottom-up approach with no dependencies and is optimized for budget and mid-range phones. The framework includes Cactus FFI for integration, Cactus Engine for high-level transformer inference, Cactus Graph for a unified computation graph, and Cactus Kernels for low-level ARM-specific operations. It is suitable for implementing custom models and scientific computing on mobile devices.

20 - OpenAI GPTs

Market My Site

AI-powered website and SEO analysis 💻 with detailed marketing strategy, content, images and insights guided by experts. Performs 8+ actions to optimize your business website marketing. 📊

Ticket Sales AI_MensBasketball

Data Analyst for USC Athletics Dept, specializes in SQL and ticket sales insights.

360GPT ~ All Things AI & Machine Learning

AI 360 Solutions. Designed to provide all-encompassing solutions in the field of artificial intelligence.

Calendario Editoriale GrowGenius AI

Creator of editorial calendars for social media, with trend analysis.

EngageSmart Analyst

Expert AI companion for optimizing engagement, analyzing metrics, and mastering content strategy.

NetMaster Pro 🌐🛠️

Your AI network guru for setup and fixing connectivity woes! 🌐 Assists with network configurations, troubleshooting, and optimizes your internet experience. 💻✨

Back Propagation

I'm Back Propagation, here to help you understand and apply back propagation techniques to your AI models.
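For readers unfamiliar with the technique this GPT covers, a minimal sketch of back propagation follows. It is not taken from the GPT itself: the tiny two-weight network (`h = tanh(w1 * x)`, `y = w2 * h`), the squared-error loss, and all function names are assumptions chosen for illustration. The analytic gradients are compared against finite differences as a sanity check.

```python
import math


def forward(w1: float, w2: float, x: float, t: float):
    """Forward pass: hidden activation, output, and squared-error loss."""
    h = math.tanh(w1 * x)
    y = w2 * h
    loss = 0.5 * (y - t) ** 2
    return h, y, loss


def backward(w1: float, w2: float, x: float, t: float):
    """Back propagation: apply the chain rule from the loss back to each weight."""
    h, y, _ = forward(w1, w2, x, t)
    dy = y - t                     # dLoss/dy
    dw2 = dy * h                   # dLoss/dw2, since y = w2 * h
    dh = dy * w2                   # dLoss/dh
    dw1 = dh * (1.0 - h * h) * x   # tanh'(z) = 1 - tanh(z)^2
    return dw1, dw2


def numeric_grad(w1: float, w2: float, x: float, t: float, eps: float = 1e-6):
    """Central finite differences, used only to check the analytic gradients."""
    g1 = (forward(w1 + eps, w2, x, t)[2] - forward(w1 - eps, w2, x, t)[2]) / (2 * eps)
    g2 = (forward(w1, w2 + eps, x, t)[2] - forward(w1, w2 - eps, x, t)[2]) / (2 * eps)
    return g1, g2


w1, w2, x, t = 0.5, -1.2, 0.8, 0.3
analytic = backward(w1, w2, x, t)
numeric = numeric_grad(w1, w2, x, t)
print("analytic:", analytic)
print("numeric: ", numeric)
```

The two gradient pairs should agree closely; a large mismatch usually points to a chain-rule mistake in the backward pass.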

Ads Expert

Your go-to expert for Facebook Ads. This AI has been fed loads of Facebook ad courses.

Marketplace Mind for POD (Print On Demand) | YAYAI

Innovates digital, AI-driven product ideas in marketplace style.