Best AI tools for < Monitor Applications >

20 - AI Tool Sites

Langtrace AI

Langtrace AI is an open-source observability tool powered by Scale3 Labs that helps monitor, evaluate, and improve LLM (Large Language Model) applications. It collects and analyzes traces and metrics to provide insight into the ML pipeline, and it is SOC 2 Type II certified. Langtrace supports popular LLMs, frameworks, and vector databases, offering end-to-end observability so teams can build and deploy AI applications with confidence.

Amazon Bedrock

Amazon Bedrock is a fully managed AWS service for building generative AI applications. It provides access to foundation models from Amazon and third-party providers through a single API, and it is serverless, so developers do not manage the underlying infrastructure and can focus on their applications. Bedrock also offers capabilities for customizing models with your own data, connecting them to knowledge bases, building agents, and applying guardrails.

Scale AI

Scale AI is an AI tool that accelerates the development of AI applications for various sectors including enterprise, government, and automotive industries. It offers solutions for training models, fine-tuning, generative AI, and model evaluations. Scale Data Engine and GenAI Platform enable users to leverage enterprise data effectively. The platform collaborates with leading AI models and provides high-quality data for public and private sector applications.

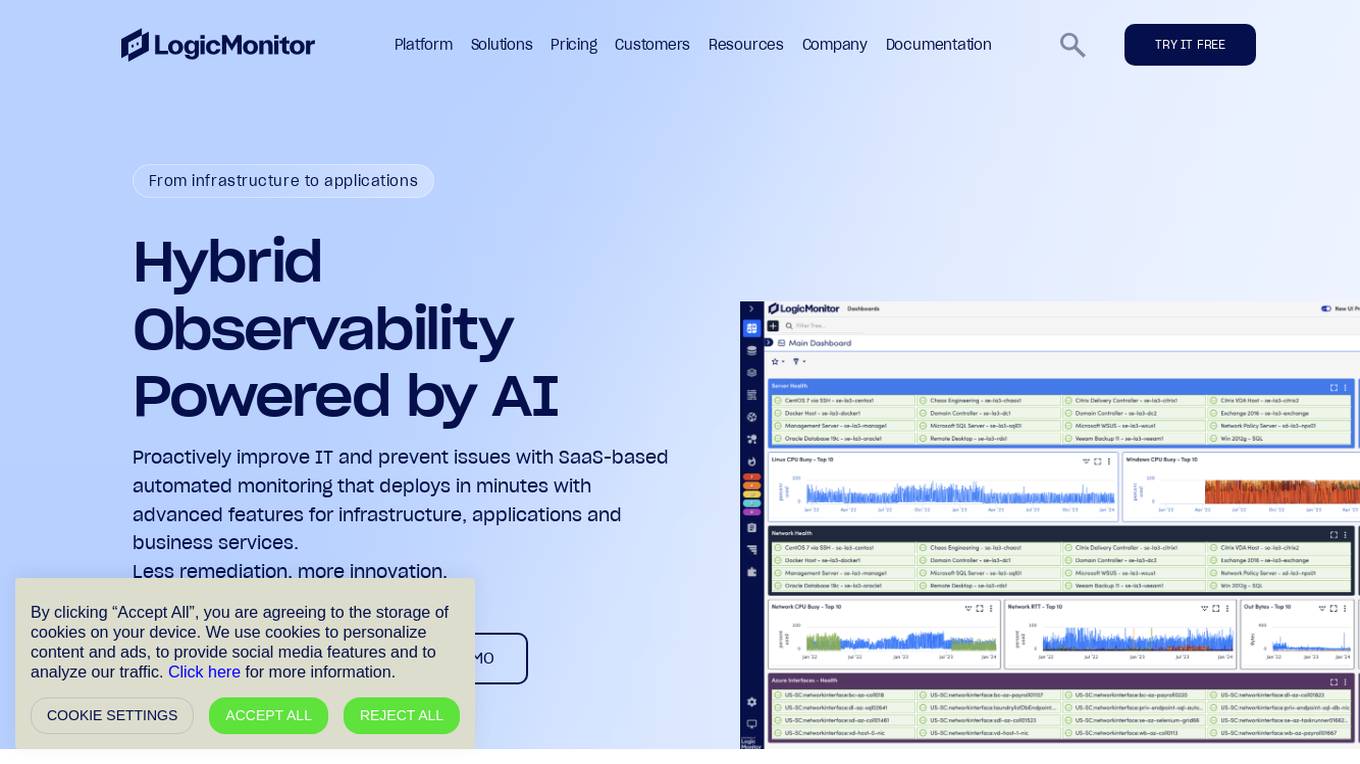

LogicMonitor

LogicMonitor is a cloud-based infrastructure monitoring platform with an agentless architecture that provides real-time insights and automation. It offers a unified platform for monitoring infrastructure, applications, and business services, with advanced features for hybrid observability. LogicMonitor's AI-driven capabilities are intended to simplify complex IT ecosystems and accelerate incident response.

Wing Security

Wing Security is a SaaS Security Posture Management (SSPM) solution that helps businesses protect their data by providing full visibility and control over applications, users, and data. The platform offers features such as automated remediation, AI discovery, real-time SaaS visibility, vendor risk management, insider risk management, and more. Wing Security enables organizations to eliminate risky applications, manage user behavior, and protect sensitive data from unauthorized access. With a focus on security first, Wing Security helps businesses leverage the benefits of SaaS while staying protected.

ZeroThreat

ZeroThreat is a web app and API security scanner that helps businesses identify and fix vulnerabilities in their web applications and APIs. It uses a combination of static and dynamic analysis techniques to scan for a wide range of vulnerabilities, including OWASP Top 10, CWE Top 25, and SANS Top 25. ZeroThreat also provides continuous monitoring and alerting, so businesses can stay on top of new vulnerabilities as they emerge.

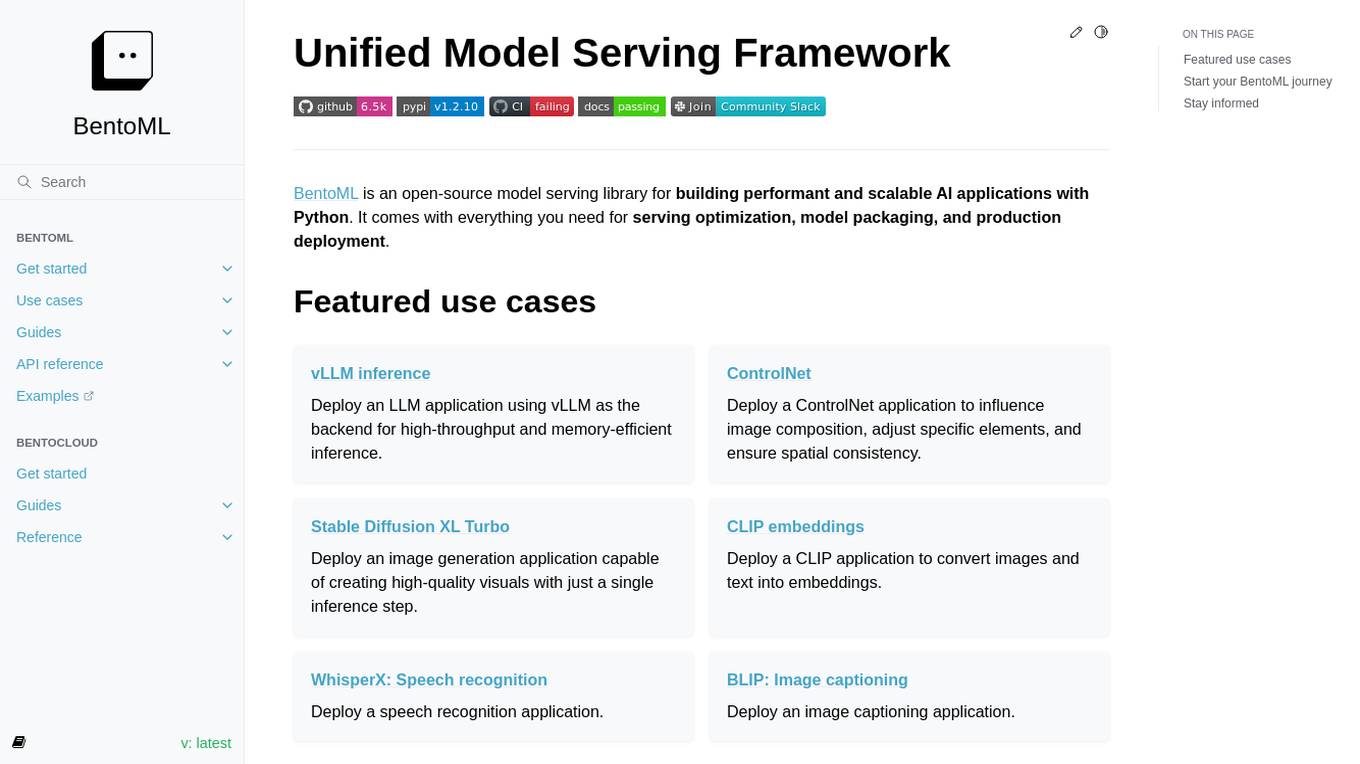

BentoML

BentoML is a framework for building reliable, scalable, and cost-efficient AI applications. It provides everything needed for model serving, application packaging, and production deployment.

Future AGI

Future AGI is an AI data management platform that aims to achieve 99% accuracy in AI applications across software and hardware. It provides a comprehensive evaluation and optimization platform for enterprises to enhance the performance of their AI models. Its features include generating and managing diverse synthetic datasets, testing and analyzing agentic workflow configurations, assessing agent performance, enhancing LLM application performance, monitoring and protecting applications in production, and evaluating AI across different modalities. The platform is positioned as a way to build trustworthy, accurate, and responsible AI, and it claims 10x faster processing.

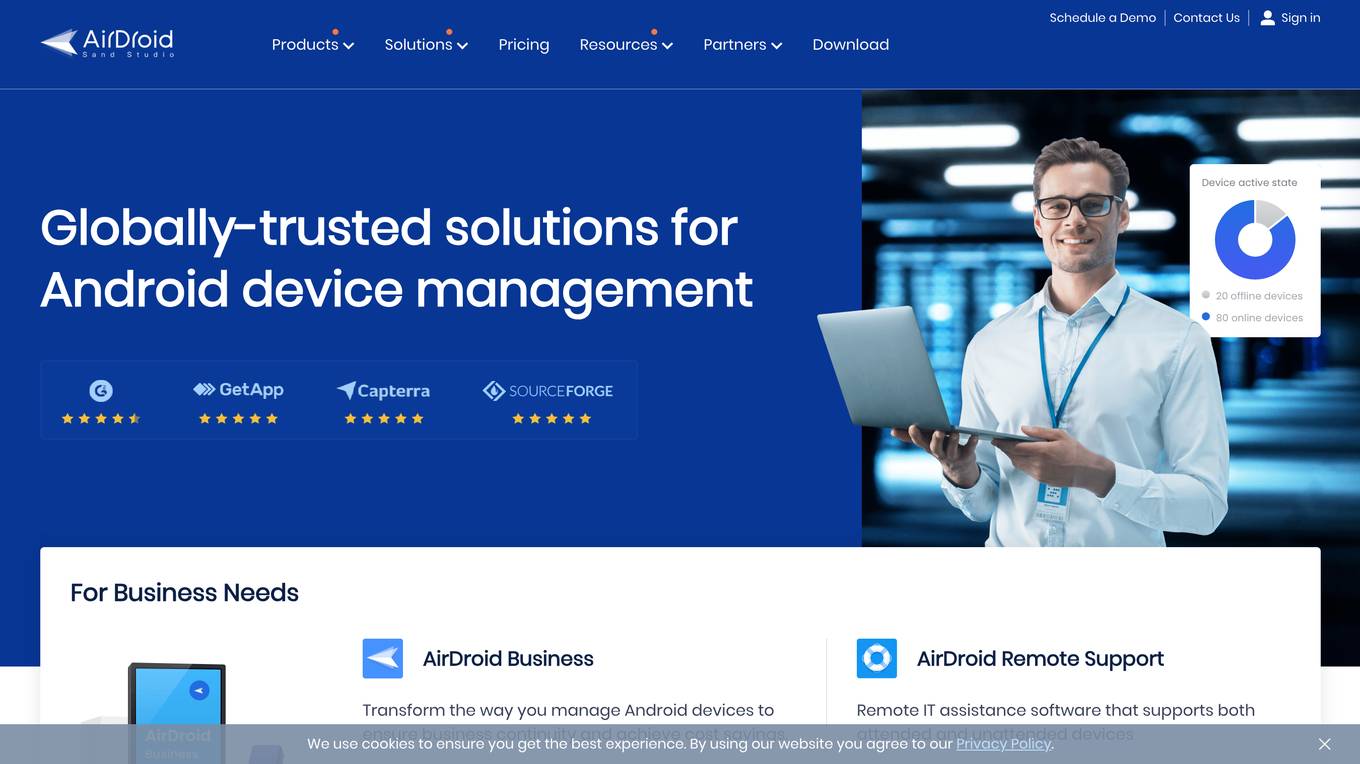

AirDroid

AirDroid is an AI-powered device management solution that offers both business and personal services. It provides features such as remote support, file transfer, application management, and AI-powered insights. The application aims to streamline IT resources, reduce costs, and increase efficiency for businesses, while also offering personal management solutions for private mobile devices. AirDroid is designed to empower businesses with intelligent AI assistance and enhance user experience through seamless multi-screen interactions.

Unified DevOps platform to build AI applications

This is a unified DevOps platform to build AI applications. It provides a comprehensive set of tools and services to help developers build, deploy, and manage AI applications. The platform includes a variety of features such as a code editor, a debugger, a profiler, and a deployment manager. It also provides access to a variety of AI services, such as natural language processing, machine learning, and computer vision.

OpenLIT

OpenLIT is an observability tool for GenAI and LLM applications. It empowers model understanding and data visualization through an interactive Learning Interpretability Tool. With OpenTelemetry-native support, it integrates into existing projects and offers features such as performance tuning, real-time data streaming, low-latency processing, and visualization of data insights. The tool simplifies monitoring with easy installation and light/dark mode options, and it connects to popular observability platforms for data export. OpenLIT follows OpenTelemetry community standards and provides insights to improve application performance and reliability.

New Relic

New Relic is an AI monitoring platform that offers an all-in-one observability solution for monitoring, debugging, and improving the entire technology stack. With over 30 capabilities and 750+ integrations, New Relic provides the power of AI to help users gain insights and optimize performance across various aspects of their infrastructure, applications, and digital experiences.

Dynamiq

Dynamiq is an operating platform for GenAI applications that enables users to build compliant GenAI applications in their own infrastructure. It offers a comprehensive suite of features including rapid prototyping, testing, deployment, observability, and model fine-tuning. The platform helps streamline the development cycle of AI applications and provides tools for workflow automations, knowledge base management, and collaboration. Dynamiq is designed to optimize productivity, reduce AI adoption costs, and empower organizations to establish AI ahead of schedule.

Fiddler AI

Fiddler AI is an AI observability platform that provides tools for monitoring, explaining, and improving the performance of AI models. It offers a range of capabilities, including explainable AI, NLP and CV model monitoring, LLMOps, and security features. Fiddler AI helps businesses build and deploy high-performing AI solutions at scale.

Haystack

Haystack is a production-ready open-source AI framework for building AI applications. It offers a flexible architecture of components and pipelines, allowing users to customize and build applications according to their specific requirements. Through partnerships with leading LLM providers and AI tools, Haystack gives users freedom of choice. The framework is built for production, with fully serializable pipelines, logging, monitoring integrations, and deployment guides for full-scale deployments on various platforms. Users can build Haystack apps faster using deepset Studio, a platform for drag-and-drop construction of pipelines, testing, debugging, and sharing prototypes.

JFrog ML

JFrog ML is an AI platform designed to streamline AI development from prototype to production. It offers a unified MLOps platform to build, train, deploy, and manage AI workflows at scale. With features like Feature Store, LLMOps, and model monitoring, JFrog ML empowers AI teams to collaborate efficiently and optimize AI & ML models in production.

Galileo AI

Galileo AI is a platform that brings automation and insight to AI evaluations so teams can ship reliably and with confidence. It claims to eliminate 80% of evaluation time by replacing manual reviews with high-accuracy metrics, and it supports rapid iteration, real-time protection, and end-to-end visibility into agent completions. Galileo also allows developers to take control of AI complexity, de-risk AI in production, and deploy AI applications flexibly across different environments. The platform is trusted by enterprises and loved by developers for its accuracy, low latency, and ability to run on L4 GPUs.

UpTrain

UpTrain is a full-stack LLMOps platform designed to help users confidently scale AI by covering production needs from evaluation to experimentation to improvement. It offers diverse evaluations, automated regression testing, enriched datasets, and innovative techniques to generate high-quality scores. UpTrain is built for developers, compliant with data governance needs, cost-efficient, reliable, and open-source. It provides precision metrics, task understanding, and safeguard systems, and it covers a wide range of language features and quality aspects. The platform is suitable for developers, product managers, and business leaders looking to enhance their LLM applications.

Hexowatch

Hexowatch is an AI-powered website monitoring and archiving tool that helps businesses track changes to any website, including visual, content, source code, technology, availability, or price changes. It provides detailed change reports, archives snapshots of pages, and offers side-by-side comparisons and diff reports to highlight changes. Hexowatch also allows users to access monitored data fields as a downloadable CSV file, Google Sheet, RSS feed, or sync any update via Zapier to over 2000 different applications.

Continual

Continual is an AI copilot platform that helps you build, operate, monitor, and continually improve a production-ready AI copilot for your application, remarkably fast. Continual connects to your application data and APIs and gives your users an AI copilot that never stops improving. With Continual, you can give your users a tireless AI assistant that understands your application, provide users a copilot that can answer any question instantly, automate user workflows with a copilot that can reason and act, and build unique AI product features powered by a unified copilot engine.

3 - Open Source AI Tools

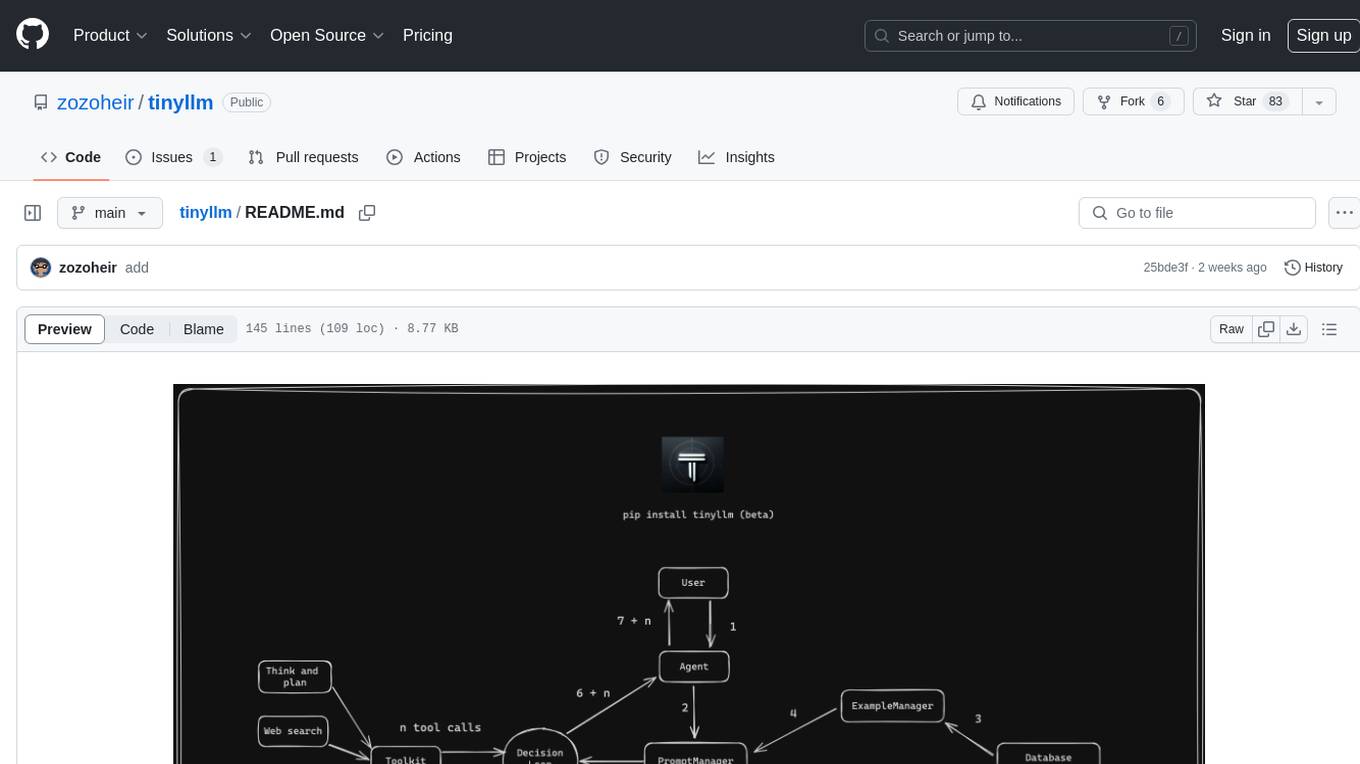

tinyllm

tinyllm is a lightweight framework for developing, debugging, and monitoring LLM- and agent-powered applications at scale. It aims to simplify code while enabling users to create complex agents or LLM workflows in production. The core classes, Function and FunctionStream, standardize and control LLM, ToolStore, and related calls for scalable production use. It offers structured handling of function execution, including input/output validation, error handling, evaluation, and more, all while maintaining code readability. Users can create chains with prompts, LLMs, and evaluators in a single file without the need for extensive class definitions or spaghetti code. Additionally, tinyllm integrates with libraries such as Langfuse and provides tools for prompt engineering, observability, logging, and finite state machine design.

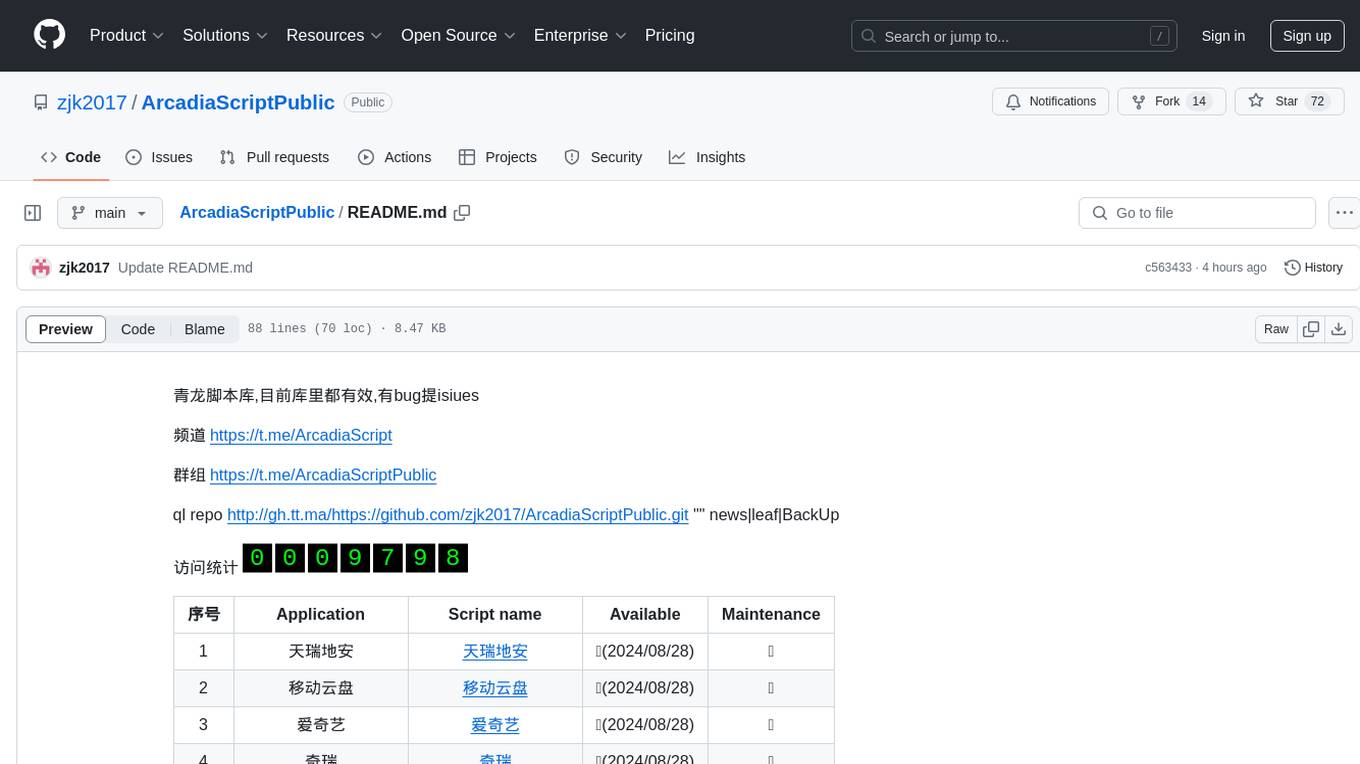

ArcadiaScriptPublic

ArcadiaScriptPublic is a repository containing various scripts for learning and practicing JavaScript, Python, and Shell scripting. It is intended for testing and educational purposes only, and not for commercial use. The repository does not guarantee the legality, accuracy, completeness, or effectiveness of the scripts, and users are advised to use them at their own discretion. No resources from the repository are allowed to be republished or redistributed by any public account or self-media. The repository owner disclaims any responsibility for script-related issues, including losses or damages resulting from script errors. Users indirectly utilizing the scripts, such as setting up VPS or engaging in activities that violate national/regional laws or regulations, are solely responsible for any privacy leaks or consequences. If any entity or individual believes that the scripts in the project may infringe upon their rights, they should promptly notify and provide proof of identity and ownership, upon which the relevant scripts will be removed after verification. Anyone viewing or using the scripts in this project should carefully read and accept the disclaimer provided by zjk2017/ArcadiaScriptPublic, as the repository reserves the right to change or supplement the disclaimer at any time. Users must completely delete the downloaded content from their computers or phones within 24 hours of downloading, and any form of profit chain generation is strictly prohibited.

awesome-generative-ai-data-scientist

A curated list of 50+ resources to help you become a Generative AI Data Scientist. This repository includes resources on building GenAI applications with Large Language Models (LLMs), and deploying LLMs and GenAI with Cloud-based solutions.

20 - OpenAI GPTs

Azure Mentor

Expert in Azure's latest services, including Application Insights, API Management, and more.

The Dock - Your Docker Assistant

Technical assistant specializing in Docker and Docker Compose. Let's debug!

Quake and Volcano Watch Iceland

Seismic and volcanic monitor with in-depth data and visuals.

Qtech | FPS

Frost Protection System is an AI bot optimizing open field farming of fruits, vegetables, and flowers, combining real-time data and AI to boost yield, cut costs, and foster sustainable practices in a user-friendly interface.

DataKitchen DataOps and Data Observability GPT

A specialist in DataOps and Data Observability, aiding in data management and monitoring.