Best AI tools for Hybrid Parallelism

20 - AI tool Sites

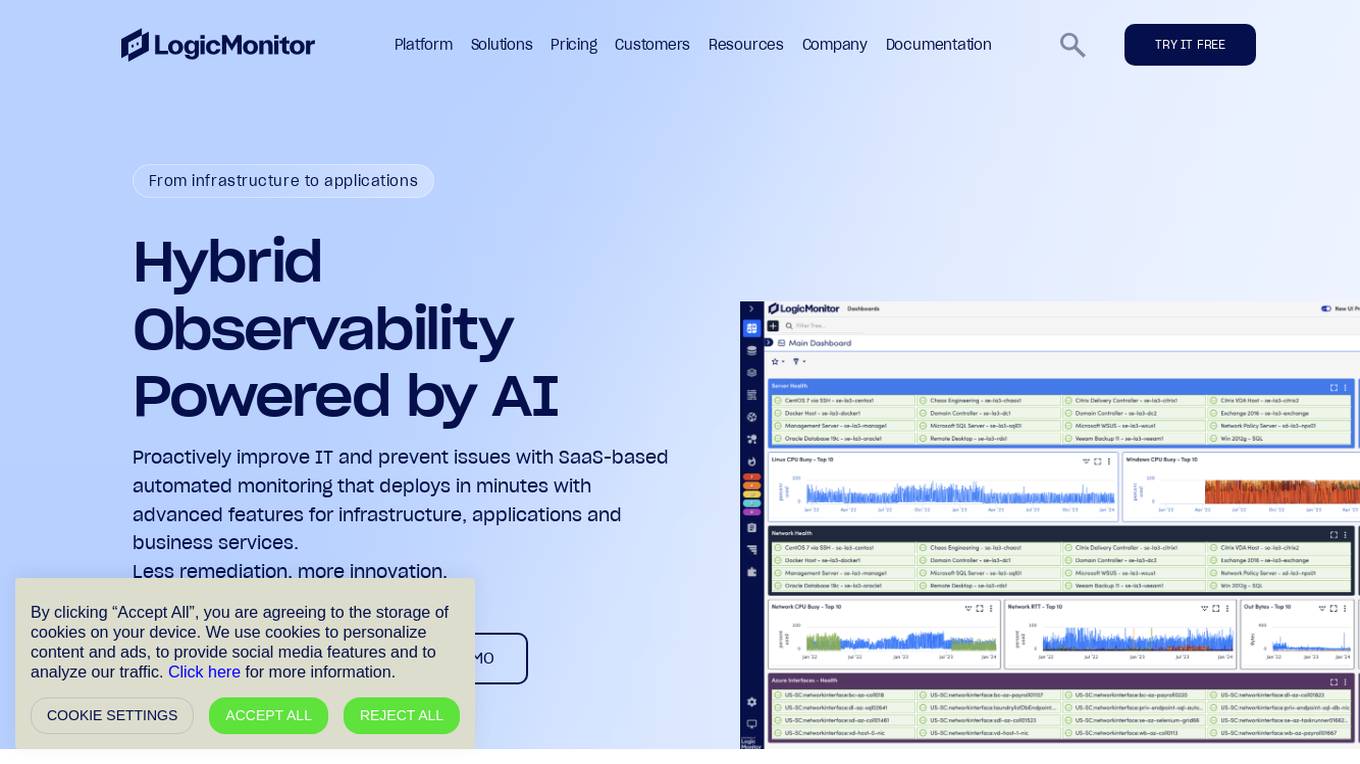

LogicMonitor

LogicMonitor is a cloud-based infrastructure monitoring platform that provides real-time insights and automation for comprehensive, seamless monitoring with agentless architecture. It offers a unified platform for monitoring infrastructure, applications, and business services, with advanced features for hybrid observability. LogicMonitor's AI-driven capabilities simplify complex IT ecosystems, accelerate incident response, and empower organizations to thrive in the digital landscape.

LogicMonitor

LogicMonitor is a cloud-based infrastructure monitoring platform that provides real-time insights and automation for comprehensive, seamless monitoring with an agentless architecture. It offers a wide range of features, including infrastructure, network, server, remote, virtual machine, SD-WAN, database, storage, configuration, cloud, and container monitoring; AWS, GCP, and Azure monitoring; digital experience SaaS monitoring; website monitoring; APM; AIOps; Dexda integrations; security dashboards; and platform demo logs. LogicMonitor's AI-driven hybrid observability helps organizations simplify complex IT ecosystems, accelerate incident response, and thrive in the digital landscape.

MemFree

MemFree is a hybrid AI search tool that allows users to search for information instantly and receive accurate answers from the internet, bookmarks, notes, and documents. With MemFree, users can easily index their bookmarks and web pages with just one click. The tool leverages GPT-4o mini for enhanced search capabilities, making it a powerful and efficient AI application for information retrieval.

Reworked

Reworked is a leading online community for professionals in the fields of employee experience, digital workplace, and talent management. It provides news, research, and events on the latest trends and best practices in these areas. Reworked also offers a variety of resources for members, including a podcast, awards program, and research library.

ZEBCOIN

ZEBCOIN is an AI-powered platform that focuses on decentralized identity verification, intellectual property rights, AI-driven decentralized finance (DeFi) and micro-lending, environmental sustainability, and transparency in supply chains. It aims to leverage AI and blockchain technology to offer innovative financial solutions, promote clean energy initiatives, ensure transparency in global supply chains, and provide decentralized identity verification services. The platform also plans to launch its own blockchain (ZEB Chain) with features like an explorer, decentralized exchanges (DEX) transparency, NFT marketplace, and lending and DeFi services. ZEBCOIN aims to revolutionize the blockchain space by integrating AI technology into various aspects of its ecosystem.

Derwen

Derwen is an open-source integration platform for production machine learning in the enterprise, specializing in natural language processing, graph technologies, and decision support. It offers expertise in developing knowledge graph applications and domain-specific authoring. Derwen collaborates closely with Hugging Face and provides strong data privacy guarantees, a low carbon footprint, and no cloud vendor involvement. Since 2017, the platform has aimed to give AI engineers and domain experts quality, fast time-to-value, and ownership of their work.

BalancedWork

BalancedWork is an AI-powered platform that helps teams make data-driven decisions about when to work in person. By combining AI and social science, the application addresses challenges such as frustrating virtual meetings, declining employee satisfaction caused by mandates, weakening team bonds, and underutilized office space. BalancedWork ingests data from enterprise APIs, evaluates work patterns, generates team schedules, and provides ongoing recommendations that adapt to changing needs. The platform aims to boost productivity, engagement, and collaboration in organizations by optimizing work interactions and relationships.

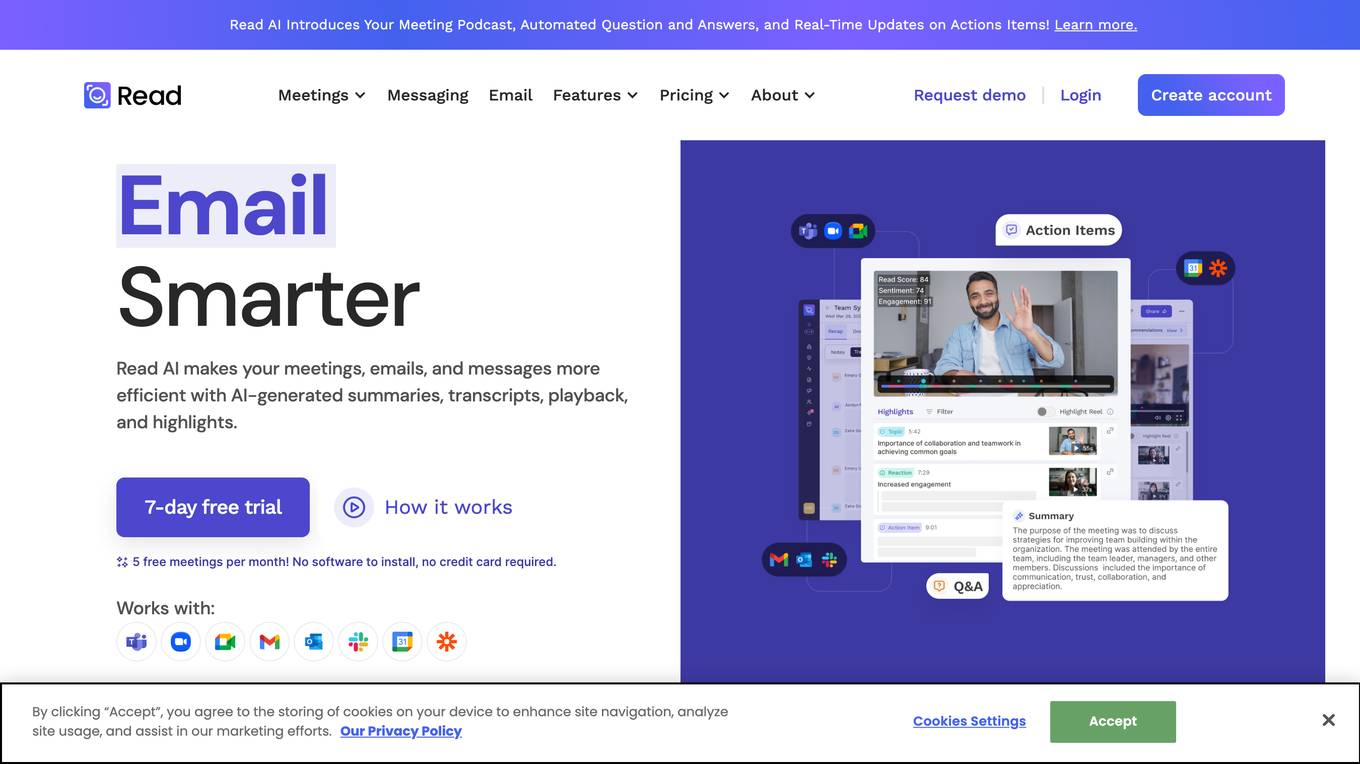

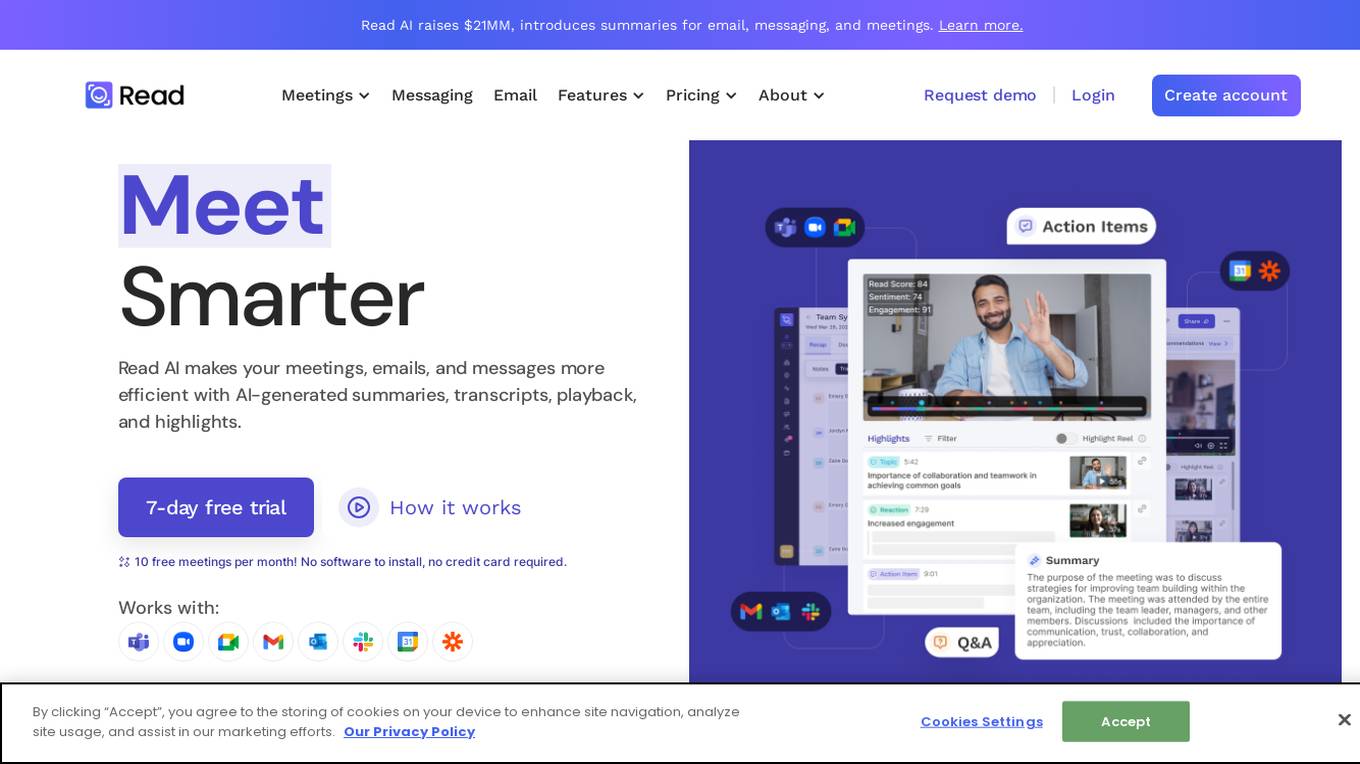

Read AI

Read AI is an AI-powered application that enhances productivity by generating summaries, transcripts, and highlights for meetings, emails, and messages. It offers features like real-time meeting summaries, smart scheduler, speaker coach insights, and multi-language support. Read AI helps users save time, improve communication, and stay organized across various platforms. With a focus on security and actionable accountability, it aims to streamline workflows and maximize productivity for knowledge workers.

Read AI

Read AI is an AI-powered application that enhances productivity by generating summaries, transcripts, and highlights for meetings, emails, and messages. It offers features like playback, coaching, smart scheduling, and integrations with various platforms. With multi-language support and secure handling of data, Read AI aims to streamline communication and collaboration for users across different languages and industries.

RingCentral Events

RingCentral Events is a comprehensive platform for organizing hybrid and virtual events. It allows users to host engaging events with AI-powered features, personalized branding, and immersive experiences. The platform offers tools for event management, engagement tracking, and content creation, making it a valuable solution for businesses of all sizes.

Remesh

Remesh is an AI-powered insights platform designed for market research and employee experience teams. It allows users to conduct conversational surveys, qualitative and quantitative research at scale, in-depth interviews, and on-demand recruitment with built-in AI analysis. The platform helps users to turn thousands of responses into actionable insights, enabling faster and better decision-making. With features like live digital focus groups, real-time participant voting, automated verbatim analysis, and multilingual support, Remesh streamlines the research process and provides high-quality insights for smarter decision-making.

Snyk

Snyk is a developer security platform powered by DeepCode AI, offering solutions for application security, software supply chain security, and secure AI-generated code. It provides comprehensive vulnerability data, license compliance management, and self-service security education. Snyk integrates AI models trained on security-specific data to secure applications and manage tech debt effectively. The platform ensures developer-first security with one-click security fixes and AI-powered recommendations, enhancing productivity while maintaining security standards.

GAPVelocity AI Modernization Studio

GAPVelocity AI Modernization Studio is an enterprise-grade modernization tool that efficiently migrates legacy applications to cutting-edge cloud-native technologies. The tool offers a hybrid AI approach, combining deterministic AI for precise code transformation with intelligent Generative AI tools for analysis, testing, and optimization. It provides deep scanning for dependencies, technical debt identification, and upgrade opportunities, along with automated assessments and migration plans. The tool supports the transformation of various legacy systems into modern applications with minimal disruption, offering accelerated delivery and production-ready output. GAPVelocity AI leverages cutting-edge technologies like cloud infrastructure, AI & machine learning, DevOps, backend and frontend technologies, and database systems to ensure successful modernization. The tool has been successfully used in various industries, including agriculture, healthcare, and manufacturing, with proven results and satisfied customers.

Leapmax

Leapmax is workforce analytics software designed to enhance operational efficiency by improving employee productivity, ensuring data security, facilitating communication and collaboration, and managing compliance. The application offers features such as productivity management, data security, remote team collaboration, reporting management, and network health monitoring. Its advantages include AI-based user detection, real-time activity tracking, remote co-browsing, a collaboration suite, and actionable analytics. Its disadvantages include the reliance on employee monitoring, potential privacy concerns, and dependency on internet connectivity. The application is commonly used by contact centers, outsourcers, enterprises, and back offices for tasks such as productivity monitoring, app usage tracking, communication and collaboration, compliance management, and remote workforce monitoring.

LifeShack

LifeShack is an AI-powered job search tool that revolutionizes the job application process. It automates job searching, evaluation, and application submission, saving users time and increasing their chances of landing top-notch opportunities. With features like automatic job matching, AI-optimized cover letters, and tailored resumes, LifeShack streamlines the job search experience and provides peace of mind to job seekers.

Google Cloud

Google Cloud is a suite of cloud computing services that runs on the same infrastructure Google uses for its own products. Its services include computing, storage, networking, databases, machine learning, and more. Google Cloud is designed to make it easy for businesses to develop and deploy applications in the cloud, with tools and services that cover everything from building and deploying applications to managing infrastructure. Google Cloud is also committed to sustainability and has a number of programs in place to reduce its environmental impact.

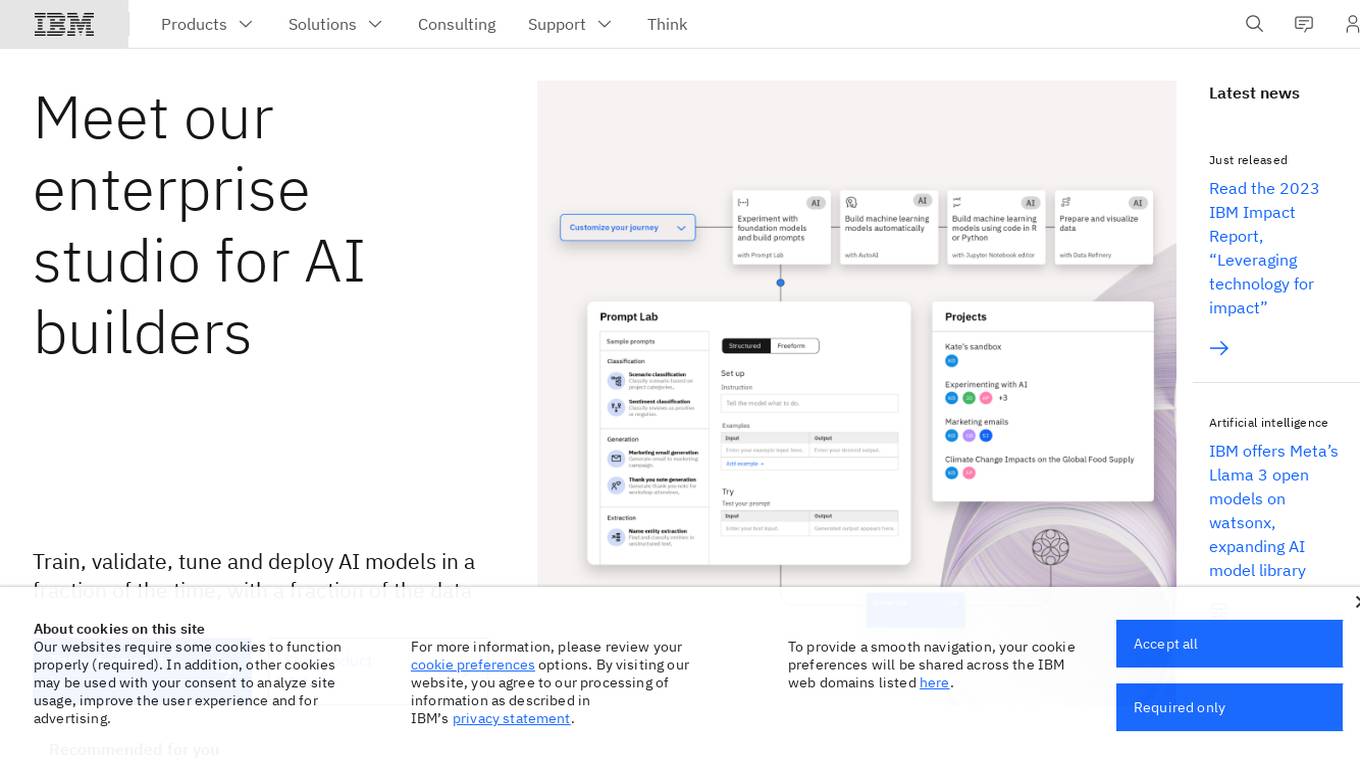

IBM Watsonx

IBM Watsonx is an enterprise studio for AI builders. It provides a platform to train, validate, tune, and deploy AI models quickly and efficiently. With Watsonx, users can access a library of pre-trained AI models, build their own models, and deploy them to the cloud or on-premises. Watsonx also offers a range of tools and services to help users manage and monitor their AI models.

IBM

IBM is a leading technology company that offers a wide range of AI and machine learning solutions to help businesses innovate and grow. From AI models to cloud services, IBM provides cutting-edge technology to address various business challenges. The company also focuses on AI ethics and offers training programs to enhance skills in cybersecurity and data analytics. With a strong emphasis on research and development, IBM continues to push the boundaries of technology to solve real-world problems and drive digital transformation across industries.

Palo Alto Networks

Palo Alto Networks is a cybersecurity company offering advanced security solutions powered by Precision AI to protect modern enterprises from cyber threats. The company provides network security, cloud security, and AI-driven security operations to defend against AI-generated threats in real time. Palo Alto Networks aims to simplify security and achieve better security outcomes through platformization, intelligence-driven expertise, and proactive monitoring of sophisticated threats.

1 - Open Source AI Tools

chitu

Chitu is a high-performance inference framework for large language models, focusing on efficiency, flexibility, and availability. It supports various mainstream large language models, including DeepSeek, the LLaMA series, Mixtral, and more. Chitu integrates the latest optimizations for large language models, provides efficient operators with online FP8-to-BF16 conversion, and has been deployed in real-world production. The framework is versatile, supporting various hardware environments beyond NVIDIA GPUs. Chitu aims to increase output speed per unit of computing power, especially in decoding, which depends on memory bandwidth.

9 - OpenAI GPTs

Hybrid Workplace Navigator

Advises organizations on optimizing hybrid work models, blending remote and in-office strategies.

Leadership for Remote Teams

An advanced remote leadership coach with dynamic updates and expanded scenarios.

Chimerai

HybridCreator GPT crafts unique animal hybrids with emphasis weighting for traits. Input animals and desired trait balance for imaginative, detailed creations.

Pet Breed Mixer

Allows users to upload pictures of their pets and witness fascinating visualizations of potential crossbreeds with other species or different breeds.

Dogify Me | Put my face on a dog 🐶

I create fun, semi-realistic dog-human hybrids with humor.