Best AI tools for Deploying Self-hosted Models

20 - AI tool Sites

SiteRetriever

SiteRetriever is a self-hosted AI chatbot platform that lets users build and deploy chatbots on their websites without monthly fees. Because the solution is fully self-contained and self-hosted, users keep full control over their data and costs. Features include easy embedding, full control over customization, fast responses, and answers powered by advanced language models. Users can upload content, configure bot settings, and embed the chatbot on their site within minutes. SiteRetriever is available through a waitlist.

Activepieces

Activepieces is an open-source no-code business automation tool that allows users to securely deploy automation for various departments such as marketing, sales, operations, HR, finance, and IT teams. It offers a customizable and self-hosted solution, enabling users to put their work on autopilot. Activepieces stands out for its user experience, ease of integration, and control over hosting. The tool leverages AI automation to streamline tasks like content strategy, security compliance, sales outreach, and customer support. With a community-driven approach, Activepieces aims to make the automation world more open and accessible.

Hatchet

Hatchet is an AI companion designed to assist on-call engineers in incident response by providing intelligent insights and suggestions based on logs, communication channels, and code analysis. It helps save time and money by automating the triaging and investigation process during critical incidents. The tool is built by engineers with a focus on data security, offering self-hosted deployments, permissions, audit trails, SSO, and version control. Hatchet aims to streamline incident resolution for tier-1 services, enabling faster response and, where possible, faster resolution of the underlying problem.

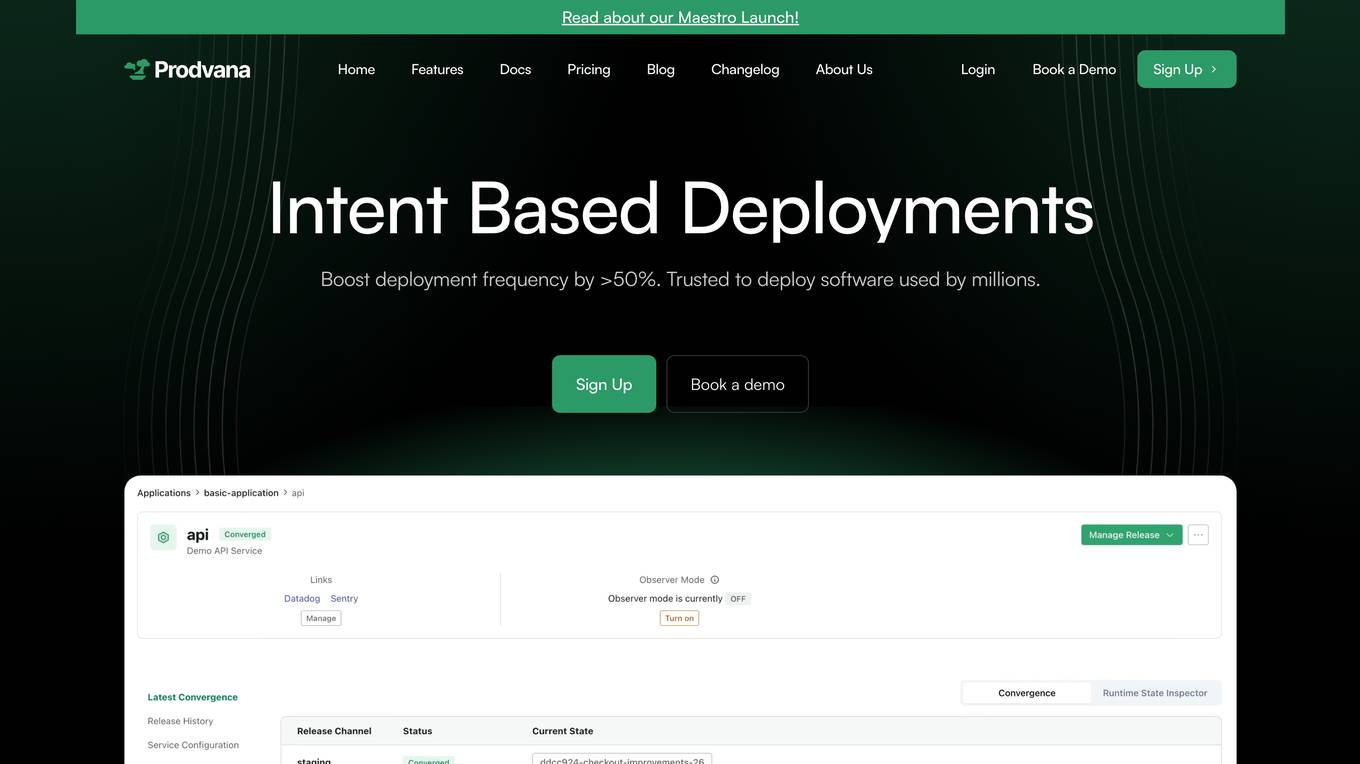

Prodvana

Prodvana is an intelligent deployment platform that helps businesses automate and streamline their software deployment process. It provides a variety of features to help businesses improve the speed, reliability, and security of their deployments. Prodvana is a cloud-based platform that can be used with any type of infrastructure, including on-premises, hybrid, and multi-cloud environments. It is also compatible with a wide range of DevOps tools and technologies. Prodvana's key features include:

- Intent-based deployments: Prodvana uses intent-based deployment technology to automate the deployment process. Businesses specify their deployment goals, and Prodvana automatically generates and executes the steps needed to achieve them. This can save a significant amount of time and effort.
- Guardrails for deployments: Prodvana provides a variety of guardrails to help businesses ensure the security and reliability of their deployments. These include approvals, database validations, automatic deployment validation, and simple interfaces for adding custom guardrails. This helps prevent errors and reduce the risk of outages.
- Frictionless DevEx: Prodvana provides a frictionless developer experience by tracking commits through the infrastructure, ensuring complete visibility beyond just Docker images. This helps developers quickly identify and resolve issues and makes it easier to collaborate with other team members.
- Intelligence with Clairvoyance: Prodvana's Clairvoyance feature gives businesses insight into the impact of their deployments before they are executed. This helps them make more informed decisions and avoid potential problems.
- Easy integrations: Prodvana integrates with a variety of DevOps tools and technologies, which makes it easy to use with existing workflows and processes.

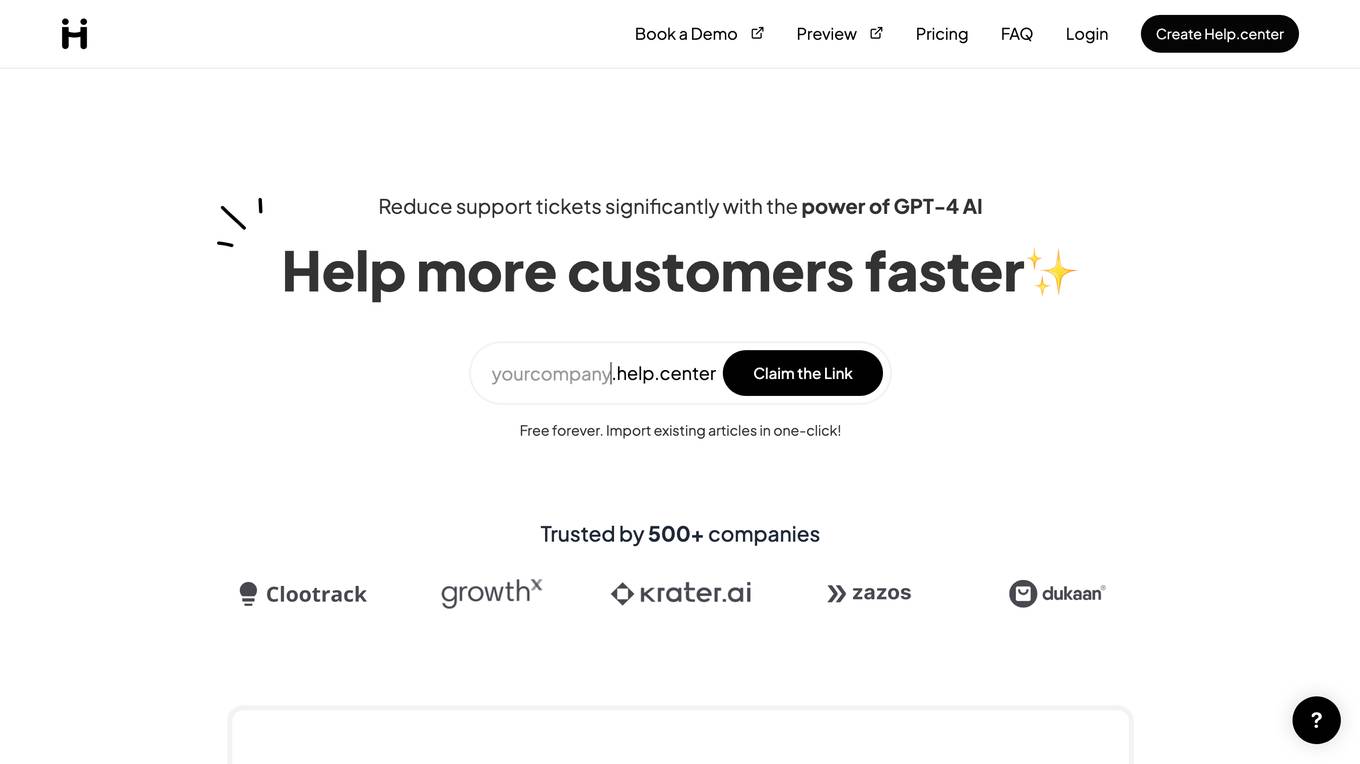

Help.center

Help.center is a customer support knowledge base powered by AI that empowers businesses to reduce support tickets significantly and help more customers faster. It offers AI chatbot and knowledge base features to enable self-service for customers, manage customer conversations efficiently, and improve customer satisfaction rates. The application is designed to provide 24x7 support, multilingual assistance, and automatic learning capabilities. Help.center is trusted by over 500 companies and offers a user-friendly interface for easy integration into product ecosystems.

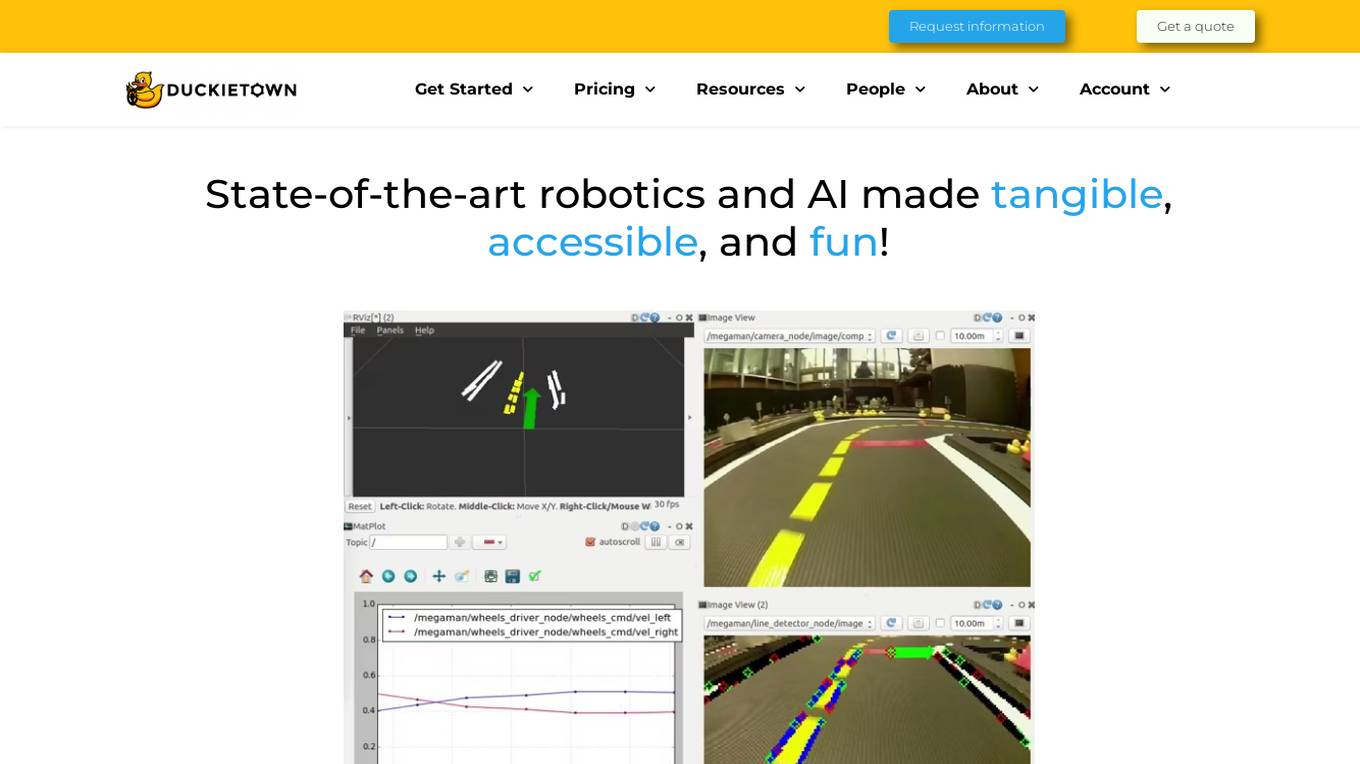

Duckietown

Duckietown is a platform for delivering cutting-edge robotics and AI learning experiences. It offers teaching resources to instructors, hands-on activities to learners, an accessible research platform to researchers, and a state-of-the-art ecosystem for professional training. Duckietown's mission is to make robotics and AI education state-of-the-art, hands-on, and accessible to all.

Humley

Humley is a Conversational AI platform that allows users to build and launch AI assistants in under an hour. The platform provides a no-code environment for creating self-serve experiences and managing AI outputs. Humley aims to revolutionize customer experiences and boost efficiencies by making Conversational AI accessible and safe for all users. With features like Knowledge Search, Build Flows, Integrate with Systems, Capture Feedback, and Multi-Channel Support, Humley Studio offers a comprehensive toolkit for creating engaging conversational experiences. The platform empowers businesses to deliver exceptional customer service, streamline access to AI models, and improve operational efficiencies.

Cresta AI

Cresta AI is an enterprise-grade Gen AI platform designed for the contact center, offering a suite of intelligent products that analyze conversations, provide real-time guidance to agents, and drive transformative results for Fortune 500 companies. The platform leverages generative AI to deliver targeted automation, personalized coaching, and AI-native management solutions, all trained on the user's data. Cresta's no-code command center empowers non-technical leaders to deploy AI models effortlessly, ensuring businesses can adapt and evolve seamlessly. With a focus on enhancing sales, customer care, retention, and collections processes, Cresta aims to revolutionize contact center operations with cutting-edge AI technology.

DiscuroAI

DiscuroAI is an all-in-one platform designed for developers to easily build, test, and consume complex AI workflows. Users can define their workflows in a user-friendly interface and execute them with a single API call. The platform integrates with GPT-3, DALL-E 2, and other OpenAI models, allowing users to chain prompts together and extract output in JSON format via the API. DiscuroAI also lets users build and test complex self-transforming AI workflows and datasets and monitor AI usage across workflows.

MagikKraft

MagikKraft is an AI-powered platform that simplifies complex controls by enabling users to create personalized sequences and actions for programmable devices like drones, automated appliances, and self-driving vehicles. Users can craft, simulate, and deploy customized recipes through the AI-powered tool, enhancing the potential of technology while prioritizing privacy, user control, and creative freedom.

Together AI

Together AI is an AI Acceleration Cloud platform that offers fast inference, fine-tuning, and training services. It provides self-service NVIDIA GPUs, model deployment on custom hardware, an AI chat app, a code execution sandbox, and tools to find the right model for specific use cases. The platform also includes a model library with open-source models, documentation for developers, and resources for advancing open-source AI. Together AI enables users to leverage pre-trained models, fine-tune them, or build custom models from scratch, catering to various generative AI needs.

Global Blockchain Show

The Global Blockchain Show is an annual event that brings together experts and enthusiasts in the blockchain and AI industries. The event features a variety of speakers, workshops, and exhibitions, and provides a platform for attendees to learn about the latest developments in these fields. The 2024 Global Blockchain Show will be held in Dubai, UAE, on April 16-17. The event will feature a keynote address from Sophia, the humanoid robot, as well as presentations from other leading experts in the blockchain and AI fields. Attendees will also have the opportunity to network with other professionals in the industry and learn about the latest products and services from leading companies.

EyePop.ai

EyePop.ai is an AI vision platform designed for innovators to create and own custom AI-powered vision models tailored to their visual data needs. The platform simplifies building these models through a fast, intuitive, and fully guided process that requires no coding or technical expertise. Users define their target, upload data, train their model, deploy it to run detection, and then iterate to improve results. EyePop.ai offers a pre-trained model library, a self-service training platform, and future-ready solutions to help users innovate faster, offer unique solutions, and make real-time decisions.

Coursera

Coursera is an online learning platform that offers courses, specializations, and degrees from top universities and companies. It provides a wide range of subjects, including business, computer science, data science, and more. Coursera also offers a variety of learning formats, including self-paced courses, live online classes, and guided projects. With Coursera, you can learn at your own pace and on your own schedule.

Tangram Vision

Tangram Vision is a company that provides sensor calibration tools and infrastructure for robotics and autonomous vehicles. Their products include MetriCal, a high-speed bundle adjustment software for precise sensor calibration, and AutoCal, an on-device, real-time calibration health check and adjustment tool. Tangram Vision also offers a high-resolution depth sensor called HiFi, which combines high-resolution depth data with high-powered AI capabilities. The company's mission is to accelerate the development and deployment of autonomous systems by providing the tools and infrastructure needed to ensure the accuracy and reliability of sensors.

AudioCodes VoiceAI Connect

AudioCodes VoiceAI Connect is a cloud-based platform that enables developers to build and deploy voicebots. It provides a range of features, including connectivity to any contact center or SIP trunk, support for any speech engine or bot framework, and the ability to reduce the cost of speech services by up to 40%. VoiceAI Connect is available as a fully managed service (Enterprise edition) and as a self-service SaaS solution (AudioCodes Live Hub) to support any deployment, integration, or regulatory needs.

Luxonis

Luxonis offers Visual AI solutions engineered for precision edge inference. It provides stereo depth cameras that let users perform advanced vision tasks on-device, reducing latency and bandwidth demands. With the open-source DepthAI API, users can create and deploy custom vision solutions that scale with their needs. Luxonis also offers real-world training data for self-improving vision intelligence, and its hardware is designed to keep operating through vibrations, temperature shifts, and extended use. The devices integrate advanced sensing capabilities, including cameras of up to 48MP, a wide field of view, IMUs, microphones, ToF, thermal, IR illumination, and active stereo.

Seldon

Seldon is an MLOps platform that helps enterprises deploy, monitor, and manage machine learning models at scale. It provides a range of features to help organizations accelerate model deployment, optimize infrastructure resource allocation, and manage models and risk. Seldon is trusted by the world's leading MLOps teams and has been used to install and manage over 10 million ML models. With Seldon, organizations can reduce deployment time from months to minutes, increase efficiency, and reduce infrastructure and cloud costs.

Mystic.ai

Mystic.ai is an AI tool designed to deploy and scale Machine Learning models with ease. It offers a fully managed Kubernetes platform that runs in your own cloud, allowing users to deploy ML models in their own Azure/AWS/GCP account or in a shared GPU cluster. Mystic.ai provides cost optimizations, fast inference, simpler developer experience, and performance optimizations to ensure high-performance AI model serving. With features like pay-as-you-go API, cloud integration with AWS/Azure/GCP, and a beautiful dashboard, Mystic.ai simplifies the deployment and management of ML models for data scientists and AI engineers.

Azure Static Web Apps

Azure Static Web Apps is a platform provided by Microsoft Azure for building and deploying modern web applications. It allows developers to easily host static web content and serverless APIs with seamless integration to popular frameworks like React, Angular, and Vue. With Azure Static Web Apps, developers can quickly set up continuous integration and deployment workflows, enabling them to focus on building great user experiences without worrying about infrastructure management.

1 - Open Source AI Tools

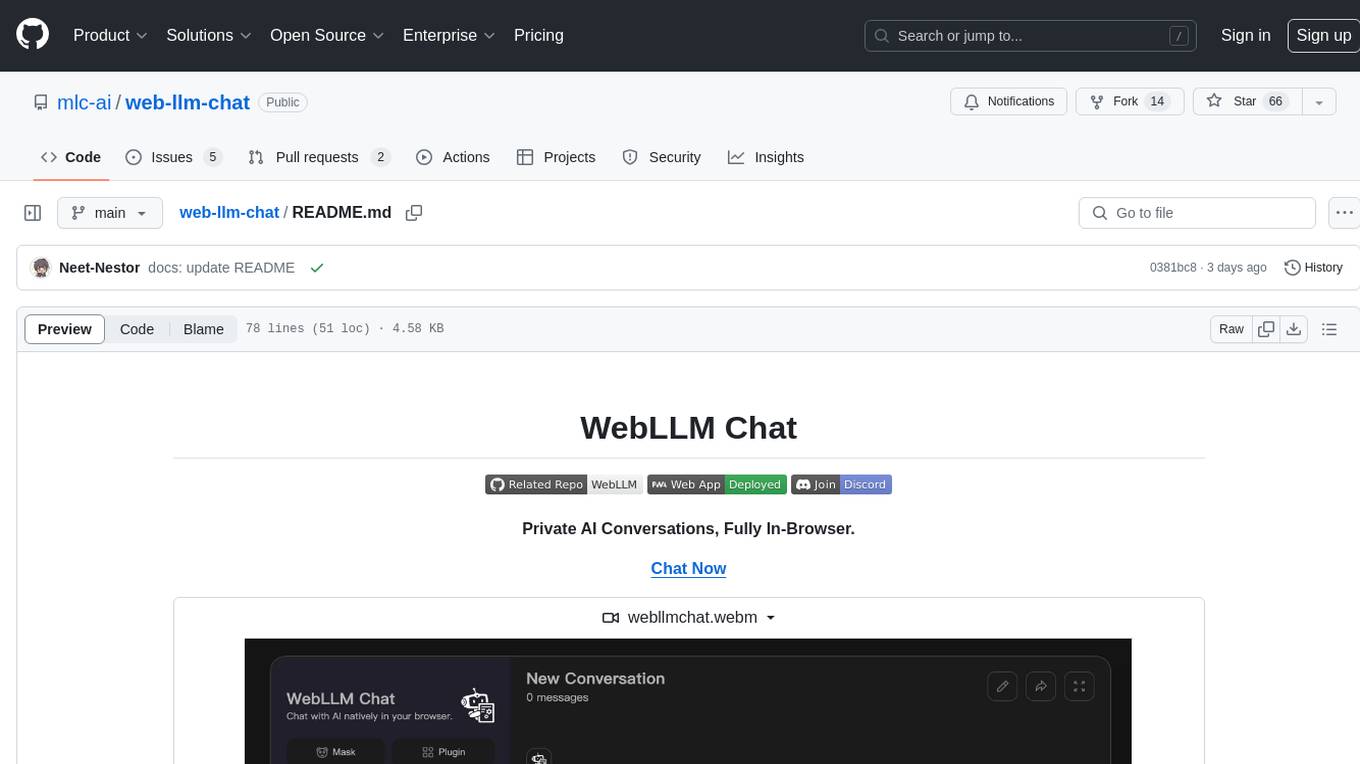

web-llm-chat

WebLLM Chat is a private AI chat interface that combines WebLLM with a user-friendly design, leveraging WebGPU to run large language models natively in your browser. It offers a browser-native AI experience with WebGPU acceleration, privacy because all data processing happens locally, offline accessibility, a user-friendly interface with markdown support, and open-source customization. The project aims to democratize AI technology by making powerful tools accessible directly to end users, enhancing the chat experience and broadening the scope for deploying self-hosted and customizable language models.

20 - OpenAI GPTs

Frontend Developer

AI front-end developer, expert in coding React, Next.js, Vue, Svelte, TypeScript, Gatsby, Angular, HTML, CSS, and JavaScript, and advanced in Flexbox, Tailwind, and Material Design. Mentors junior, intermediate, and senior front-end developers alike in coding and debugging. Let’s code, build & deploy a SaaS app.

Azure Arc Expert

Azure Arc expert providing guidance on architecture, deployment, and management.

Instructor GCP ML

Trainer for the GCP ML Engineer certification, with detailed answers and explanations.

Docker and Docker Swarm Assistant

Expert in Docker and Docker Swarm solutions and troubleshooting.

Cloudwise Consultant

Expert in cloud-native solutions, provides tailored tech advice and cost estimates.