Best AI tools for Conduct Evaluation

20 - AI tool Sites

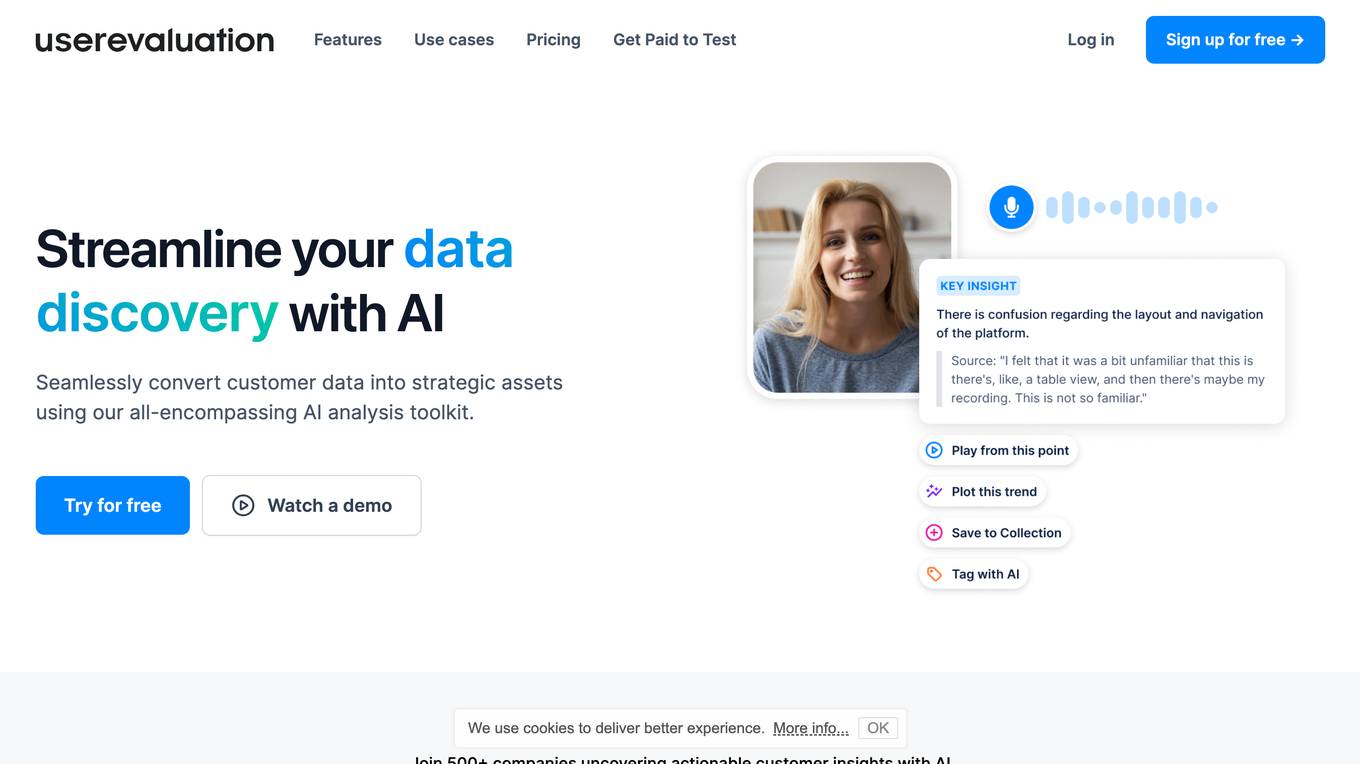

User Evaluation

User Evaluation is an AI-first user research platform that leverages AI technology to provide instant insights, comprehensive reports, and on-demand answers to enhance customer research. The platform offers features such as AI-driven data analysis, multilingual transcription, live timestamped notes, AI reports & presentations, and multimodal AI chat. User Evaluation empowers users to analyze qualitative and quantitative data, synthesize AI-generated recommendations, and ensure data security through encryption protocols. It is designed for design agencies, product managers, founders, and leaders seeking to accelerate innovation and shape exceptional product experiences.

DeepEval

DeepEval by Confident AI is a comprehensive LLM Evaluation Framework used by leading AI companies. It enables users to build reliable evaluation pipelines to test any AI system. With 50+ research-backed metrics, native multi-modal support, and auto-optimization of prompts, DeepEval offers a sophisticated evaluation ecosystem for AI applications. The framework covers unit-testing for LLMs, single and multi-turn evaluations, generation & simulation of test data, and state-of-the-art evaluation techniques like G-Eval and DAG. DeepEval is integrated with Pytest and supports various system architectures, making it a versatile tool for AI testing.
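The unit-testing idea described above can be sketched in plain Python. This is a minimal illustration and does not use DeepEval's actual API: `fake_llm`, `EvalCase`, `keyword_coverage`, and the 0.5 threshold are all hypothetical stand-ins chosen for the example, and the keyword metric is far simpler than research-backed metrics such as G-Eval.

```python
from dataclasses import dataclass


@dataclass
class EvalCase:
    """One evaluation case: a prompt and keywords the answer should mention."""
    prompt: str
    expected_keywords: list[str]


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model call; returns canned text."""
    canned = {
        "What is the capital of France?": "The capital of France is Paris.",
        "Name a primary color.": "Red is a primary color.",
    }
    return canned.get(prompt, "")


def keyword_coverage(output: str, expected_keywords: list[str]) -> float:
    """Toy metric: fraction of expected keywords present in the output."""
    if not expected_keywords:
        return 1.0
    text = output.lower()
    hits = sum(1 for kw in expected_keywords if kw.lower() in text)
    return hits / len(expected_keywords)


def run_case(case: EvalCase, threshold: float = 0.5) -> bool:
    """Score one case and report whether it meets the (assumed) threshold."""
    score = keyword_coverage(fake_llm(case.prompt), case.expected_keywords)
    return score >= threshold


CASES = [
    EvalCase("What is the capital of France?", ["Paris"]),
    EvalCase("Name a primary color.", ["red", "color"]),
]


def test_llm_outputs():
    """Pytest-style test: every case must meet the threshold."""
    for case in CASES:
        assert run_case(case), f"failed: {case.prompt}"
```

Because the test function follows the `test_` naming convention, a runner such as Pytest would collect it like any other unit test. In a real pipeline the canned responses would be replaced by calls to the system under test.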

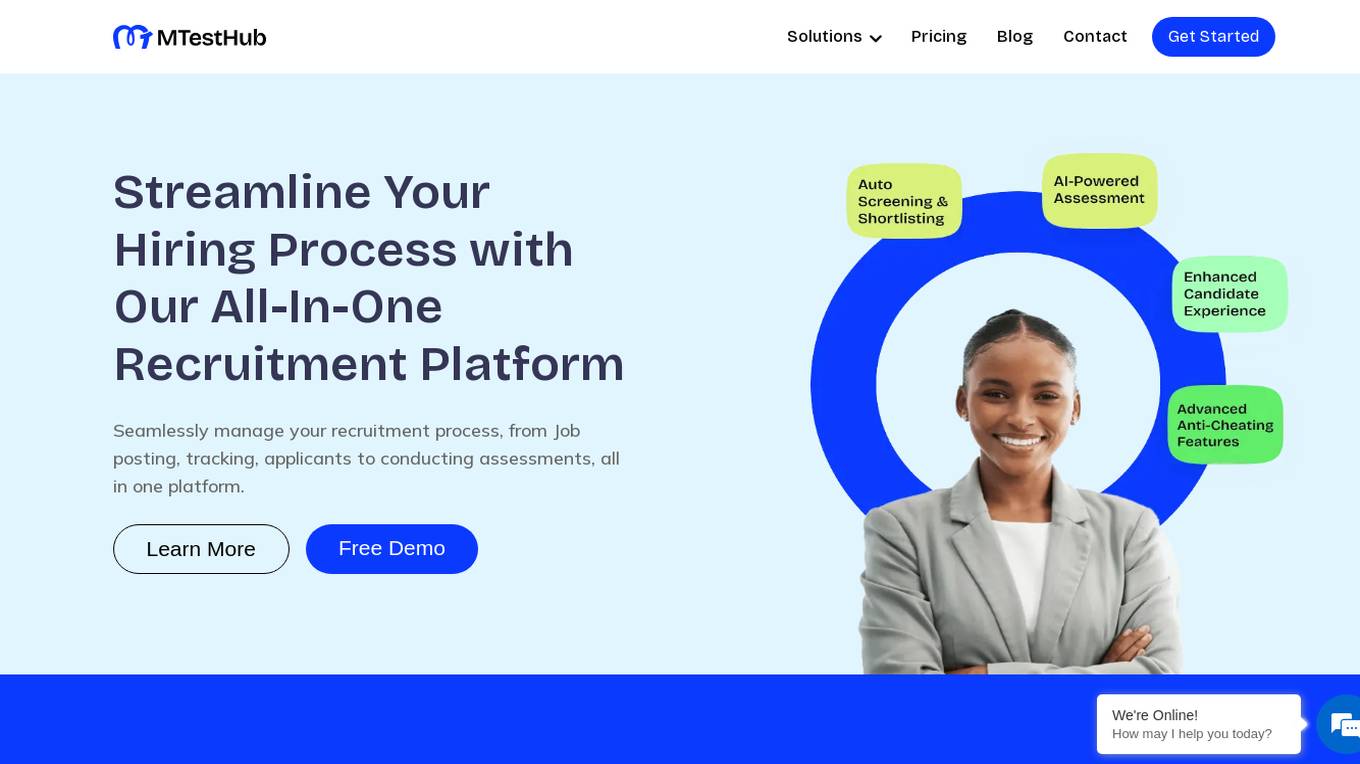

MTestHub

MTestHub is an all-in-one recruiting and assessment automation solution that streamlines the hiring process for organizations. It harnesses AI technology to identify top talent, provides an authentic online examination platform, and offers features such as auto screening, shortlisting, efficient interviews, and post-shortlisting actions. The platform aims to enhance recruitment efficiency, improve candidate experience, and reduce administrative burdens through automation and data-driven insights.

Parroview

Parroview is a revolutionary AI-powered user research platform that automates the process of conducting user interviews. It uses natural language processing (NLP) to engage with users in real-time conversations, asking follow-up questions and uncovering insights that would be difficult to obtain through traditional methods. Parroview is designed to be fully autonomous, allowing researchers to set up interviews and gather insights without the need for manual intervention. It supports multiple languages, making it accessible to a global audience. Parroview offers a range of features, including the ability to conduct interviews via text or voice, analyze insights in real-time, and generate detailed transcripts. It is suitable for a wide range of research needs, including product validation, consumer behavior analysis, post-purchase evaluations, brand perception studies, and customer persona development.

AILYZE

AILYZE is an AI tool designed for qualitative data collection and analysis. Users can upload various document formats in any language to generate codes, conduct thematic, frequency, content, and cross-group analysis, extract top quotes, and more. The tool also allows users to create surveys, utilize an AI voice interviewer, and recruit participants globally. AILYZE offers different plans with varying features and data security measures, including options for advanced analysis and AI interviewer add-ons. Additionally, users can tap into data scientists for detailed and customized analyses on a wide range of documents.

Snapteams

Snapteams is an AI-powered hiring assistant that streamlines the recruitment process by conducting real-time video interviews and candidate screening. It leverages AI technology to engage, interview, and assess top talent seamlessly, allowing employers to focus on evaluating candidates from the comfort of their desk.

ThitaHire

ThitaHire is an AI Interview Platform designed for recruiters to automate first-round interviews and provide structured decision-ready feedback. It offers a world-class AI interviewer for modern hiring teams, enabling a shift from manual screening to dependable AI interviews with better speed, consistent quality, and recruiter-friendly feedback. ThitaHire supports various interview types including software engineering, data science, AI/LLM, product, behavioral, HLD, and LLD rounds. The platform is trusted by top companies like Google, Meta, Amazon, Microsoft, Stripe, Atlassian, Databricks, and Flipkart.

JobSynergy

JobSynergy is an AI-powered platform that revolutionizes the hiring process by automating and conducting interviews at scale. It offers a real-world interview simulator that adapts dynamically to candidates' responses, custom questions and metrics evaluation, cheating detection using eye, voice, and screen monitoring, and detailed reports for better hiring decisions. The platform improves efficiency and candidate experience while ensuring security and integrity in the hiring process.

Effy AI

Effy AI is free, AI-powered performance management software for teams. It offers fast, stress-free 360 feedback and performance reviews, and advertises that a first 360 review can be run in 60 seconds. With Effy AI, you can collect reviews from different sources such as self, peer, manager, and subordinate evaluations. The platform also lets employees suggest particular peers and seek approval from their manager, giving them a voice in their reviews. Effy AI uses artificial intelligence to process reviewers' answers and generate comprehensive reports for each employee based on the review responses.

Gen AI Interviewer

Gen AI Interviewer is an AI-powered tool designed to conduct interviews. It utilizes artificial intelligence to simulate real interview scenarios and evaluate candidates' responses. By leveraging advanced algorithms, it provides valuable insights to recruiters and hiring managers, helping them make informed decisions in the hiring process. With Gen AI Interviewer, users can streamline their interview process, save time, and improve the overall efficiency of candidate evaluation.

Sereda.ai

Sereda.ai is an AI-powered platform designed to unleash a team's potential by bringing together all documents and knowledge into one place, conducting employee surveys and satisfaction ratings, facilitating performance reviews, and providing solutions to increase team productivity. The platform offers features such as a knowledge base, employee surveys, performance review tools, interactive learning courses, and an AI assistant for instant answers. Sereda.ai aims to streamline HR processes, improve employee training and evaluation, and enhance overall team productivity.

Xobin

Xobin is an AI tool that offers AI Interviews, a feature that revolutionizes the candidate assessment process. It utilizes a smart Copilot to conduct automated, role-specific interviews, providing real-time analysis of candidate responses. This innovation streamlines the recruitment process by reducing manual effort for recruiters and ensuring a structured and unbiased evaluation process. Xobin generates detailed reports that include questions asked, responses given, and insights into candidates' communication, reasoning, and domain expertise.

LEVI AI Recruiting Software

LEVI AI Recruiting Software is a modern recruitment automation platform powered by artificial intelligence. It revolutionizes the candidate evaluation and selection process by using advanced AI recruitment tools. LEVI assists in making data-driven hiring decisions, matches candidates to job requirements, conducts independent interviews, generates comprehensive reports, integrates with hiring systems, and enables informed and efficient hiring decisions. The application unlocks the full potential of machine learning models, eliminates bias in the hiring process, and automates candidate screening. LEVI's AI-powered recruitment tools change how candidate evaluations are performed through automated resume screening, candidate sourcing, and AI interview assessments.

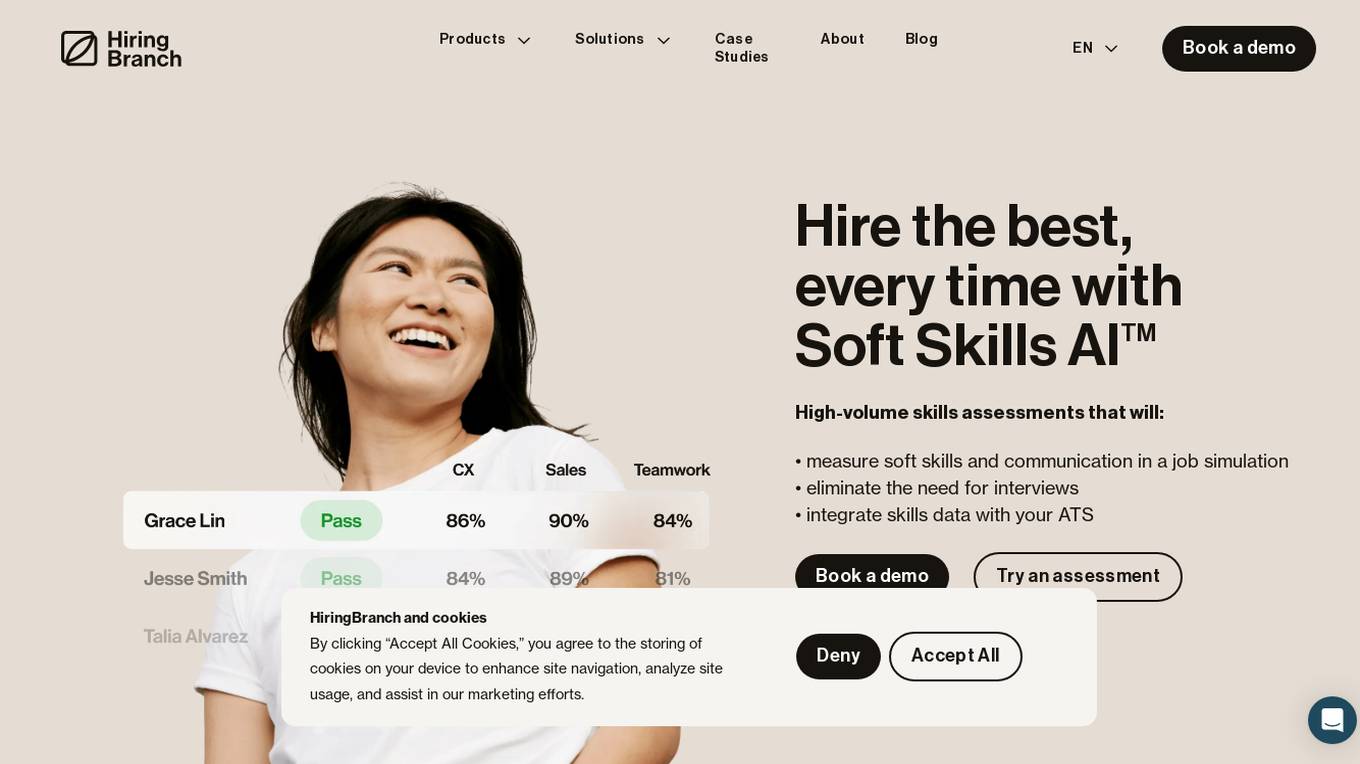

HiringBranch

HiringBranch is an AI-powered platform that offers high-volume skills assessments to help companies hire the best candidates efficiently. The platform accurately measures soft skills and communication through open-ended conversational assessments, eliminating the need for traditional interviews. HiringBranch's AI skills assessments are tailored to various industries such as Telecommunication, Retail, Banking & Insurance, and Contact Centers, providing real-time evaluation of role-critical skills. The platform aims to streamline the hiring process, reduce mis-hires, and improve retention rates for enterprises globally.

RoundOneAI

RoundOneAI is an AI-driven platform revolutionizing tech recruitment by offering unbiased and efficient candidate assessments, ensuring skill-based evaluations free from demographic biases. The platform streamlines the hiring process with tailored job descriptions, AI-powered interviews, and insightful analytics. RoundOneAI helps companies evaluate candidates simultaneously, make informed hiring decisions, and identify top talent efficiently.

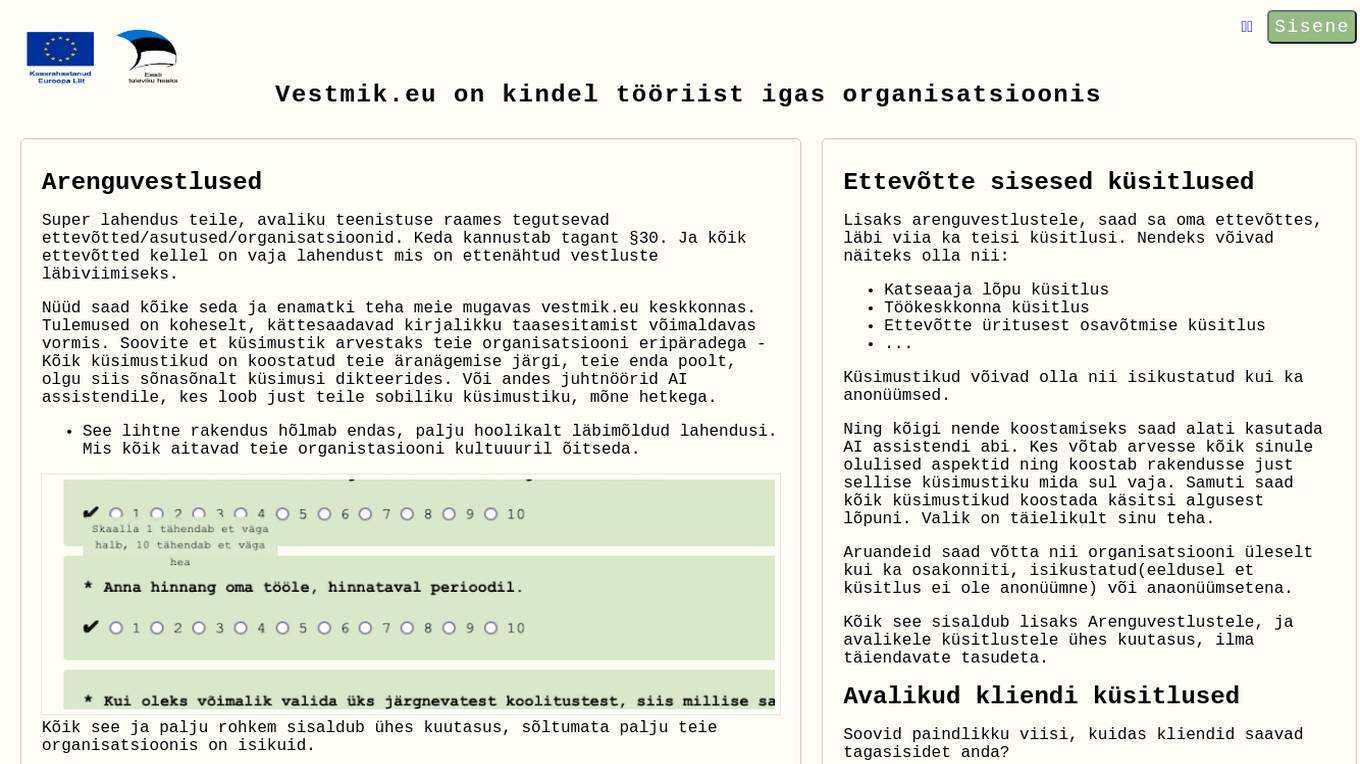

Vestmik.eu

Vestmik.eu is an AI tool designed for conducting development conversations, surveys, and questionnaires in organizations. It offers a comprehensive solution for companies, institutions, and organizations operating within the public sector. The platform allows users to create customized questionnaires tailored to their organization's specific needs, either manually or with the assistance of an AI assistant. Additionally, Vestmik.eu provides features for conducting internal and public surveys, as well as guided conversation processes for performance reviews. The tool aims to enhance organizational culture and streamline communication processes through its user-friendly interface and advanced functionalities.

Sereda.ai

Sereda.ai is an AI-powered platform designed to unleash a team's potential by offering solutions for employee knowledge management, surveys, performance reviews, learning, and more. It integrates artificial intelligence to streamline HR processes, improve employee engagement, and boost productivity. The platform provides a user-friendly interface, personalized settings, and automation features to enhance organizational efficiency and reduce costs.

GreetAI

GreetAI is an AI-powered platform that revolutionizes the hiring process by conducting AI video interviews to evaluate applicants efficiently. The platform provides insightful reports, customizable interview questions, and highlights key points to help recruiters make informed decisions. GreetAI offers features such as interview simulations, job post generation, AI video screenings, and detailed candidate performance metrics.

ShortlistIQ

ShortlistIQ is an AI recruiting tool that revolutionizes the candidate screening process by conducting first-round interviews using conversational AI. It automates over 80% of the time spent screening candidates, providing human-like scoring reports for every job candidate. The AI assistant engages candidates in a personalized and engaging way, ensuring fair assessments and revealing true candidate competence through strategic questioning. ShortlistIQ aims to streamline the recruitment process, decrease time to hire, and increase candidate satisfaction.

Sapia.ai

Sapia.ai is an AI hiring agent that revolutionizes the recruitment process by conducting structured interviews with candidates, evaluating real skills, and providing valuable insights at scale. Trusted by leading brands, Sapia.ai streamlines hiring processes, enhances candidate experience, and improves hiring outcomes through AI-driven solutions. The platform offers features such as chat and video interviews, interview scheduling, talent insights, and candidate engagement tools. With a focus on speed, fairness, and diversity, Sapia.ai helps organizations find the right talent efficiently and effectively.

1 - Open Source AI Tools

TempCompass

TempCompass is a benchmark designed to evaluate the temporal perception ability of Video LLMs. It encompasses a diverse set of temporal aspects and task formats to comprehensively assess the capability of Video LLMs in understanding videos. The benchmark includes conflicting videos to prevent models from relying on single-frame bias and language priors. Users can clone the repository, install required packages, prepare data, run inference using examples like Video-LLaVA and Gemini, and evaluate the performance of their models across different tasks such as Multi-Choice QA, Yes/No QA, Caption Matching, and Caption Generation.
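As a rough illustration of the final evaluation step, the sketch below computes per-task accuracy from model predictions. It is not TempCompass's own evaluation script: the `(task, prediction, answer)` record format, the task labels, and the sample values are assumptions made for the example, and exact-match scoring would only suit closed-form tasks such as Multi-Choice QA and Yes/No QA, not Caption Generation.

```python
from collections import defaultdict


def accuracy_by_task(records):
    """Return {task: fraction correct} from (task, prediction, answer) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for task, prediction, answer in records:
        total[task] += 1
        # Case-insensitive exact match after trimming whitespace.
        if prediction.strip().lower() == answer.strip().lower():
            correct[task] += 1
    return {task: correct[task] / total[task] for task in total}


# Hypothetical model outputs; real benchmark files will look different.
records = [
    ("multi-choice", "A", "A"),
    ("multi-choice", "B", "C"),
    ("yes_no", "yes", "Yes"),
    ("yes_no", "no", "no"),
]

for task, acc in accuracy_by_task(records).items():
    print(f"{task}: {acc:.2f}")
```

Reporting accuracy per task rather than as one pooled number keeps a strong result on one format from hiding a weak result on another.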

20 - OpenAI GPTs

Engineering Manager Coach

Guiding engineering managers with insights on team dynamics, development, and evaluations.

CIM Analyst

In-depth CIM analysis with a structured rating scale, offering detailed business evaluations.

Valves Cardio Echo Consultant

Consultant GPT for cardiologists, expert in the echocardiographic evaluation of heart valves and prosthetic valves.

Agile Consultant

Expert in Agile SDLC, helping teams get familiar with best practices and providing audit and evaluation services

Design Crit

I conduct design critiques focused on enhancing understanding and improvement.

IQ Test Assistant

An AI conducting 30-question IQ tests, assessing and providing detailed feedback.

MEICCA expert

Expert in education and learning assessment. Part of the research team of the MEICCA project

Evolutionary Psychologist

The evolutionary psychologist answers questions based on academic sources

Calidad en Educación Superior

I can advise on topics related to quality in higher education institutions (planning, self-assessment, accreditation, continuous improvement)

UK Visajob

Conduct various flexible analyses and inquiries based on official information about companies with work visa sponsorship qualifications.

Automation QA Interview Assistant

I provide Automation QA interview prep and conduct mock interviews.