Best AI Tools for Comparing LLMs

20 - AI tool Sites

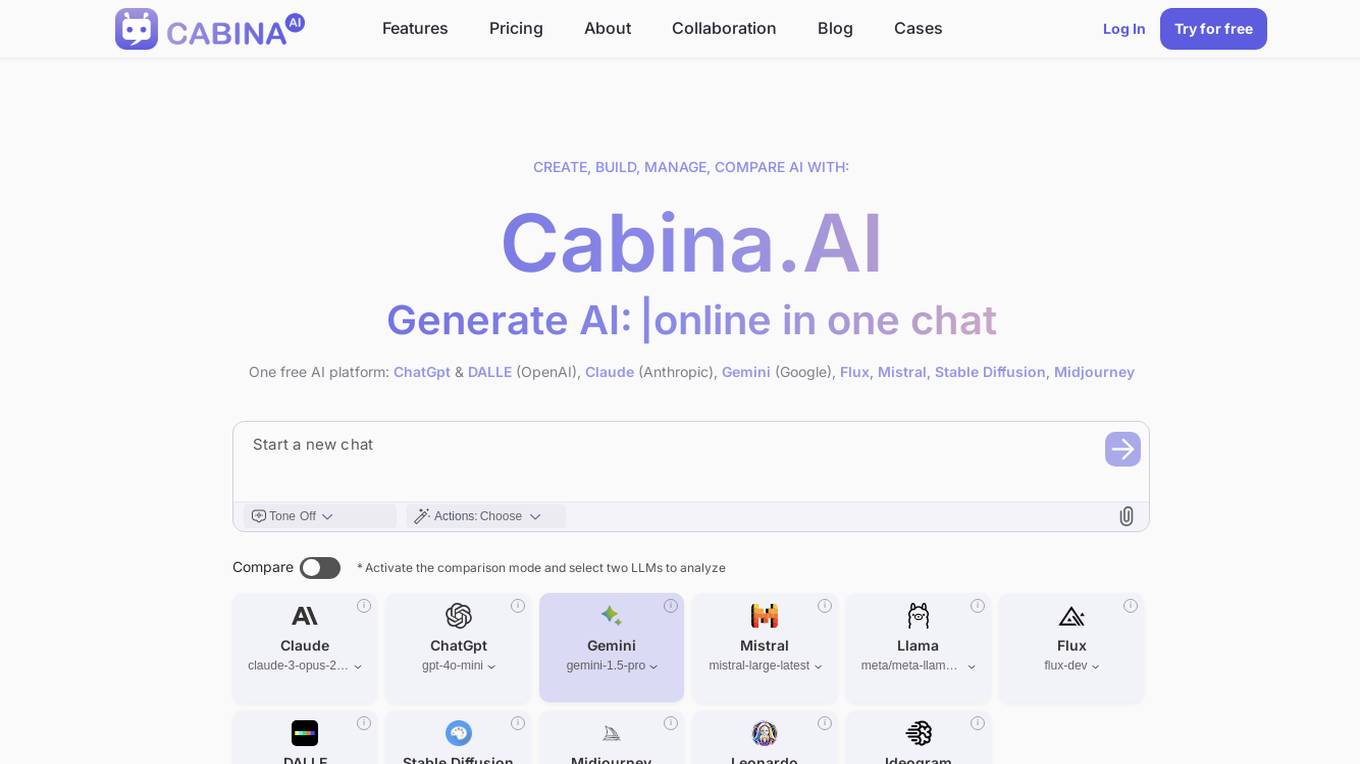

Cabina.AI

Cabina.AI is a free AI platform that allows users to generate content, text, and images online through a single chat interface. It offers a range of AI models such as ChatGPT, DALL·E, Claude, Gemini, Flux, Mistral, and more for tasks like content creation, research, and real-time task solving. Users can access different LLMs, compare results, and find the best solutions faster. Cabina.AI also provides personalized actions, organization of chats, and the ability to track various data points. With flexible pricing plans and a friendly community, Cabina.AI aims to be a universal tool for research and content creation.

LLM Clash

LLM Clash is a web-based application that allows users to compare the outputs of different large language models (LLMs) on a given task. Users can input a prompt and select which LLMs they want to compare. The application will then display the outputs of the LLMs side-by-side, allowing users to compare their strengths and weaknesses.
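
The side-by-side comparison described above can be sketched in a few lines of Python. This is a minimal illustration and not LLM Clash's actual implementation: `compare_models`, `format_side_by_side`, and the two stand-in model functions are hypothetical names, and each "model" is assumed to be any callable that takes a prompt and returns text.

```python
import textwrap
from typing import Callable, Dict


def compare_models(prompt: str, models: Dict[str, Callable[[str], str]]) -> Dict[str, str]:
    """Run the same prompt through every model and collect outputs by model name."""
    return {name: generate(prompt) for name, generate in models.items()}


def format_side_by_side(outputs: Dict[str, str], width: int = 30) -> str:
    """Render the outputs as fixed-width columns, one column per model."""
    names = list(outputs)
    header = " | ".join(name.ljust(width) for name in names)
    # Wrap each output so that it fits inside its column.
    columns = [textwrap.wrap(outputs[name], width) or [""] for name in names]
    height = max(len(column) for column in columns)
    rows = [
        " | ".join((column[i] if i < len(column) else "").ljust(width) for column in columns)
        for i in range(height)
    ]
    return "\n".join([header, "-" * len(header)] + rows)


# Hypothetical stand-ins for real LLM clients.
def echo_model(prompt: str) -> str:
    return f"You asked: {prompt}"


def shout_model(prompt: str) -> str:
    return prompt.upper()


if __name__ == "__main__":
    outputs = compare_models(
        "Explain what a tokenizer does",
        {"echo": echo_model, "shout": shout_model},
    )
    print(format_side_by_side(outputs))
```

In practice each callable would wrap a provider's client, so the comparison logic stays independent of any single vendor.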

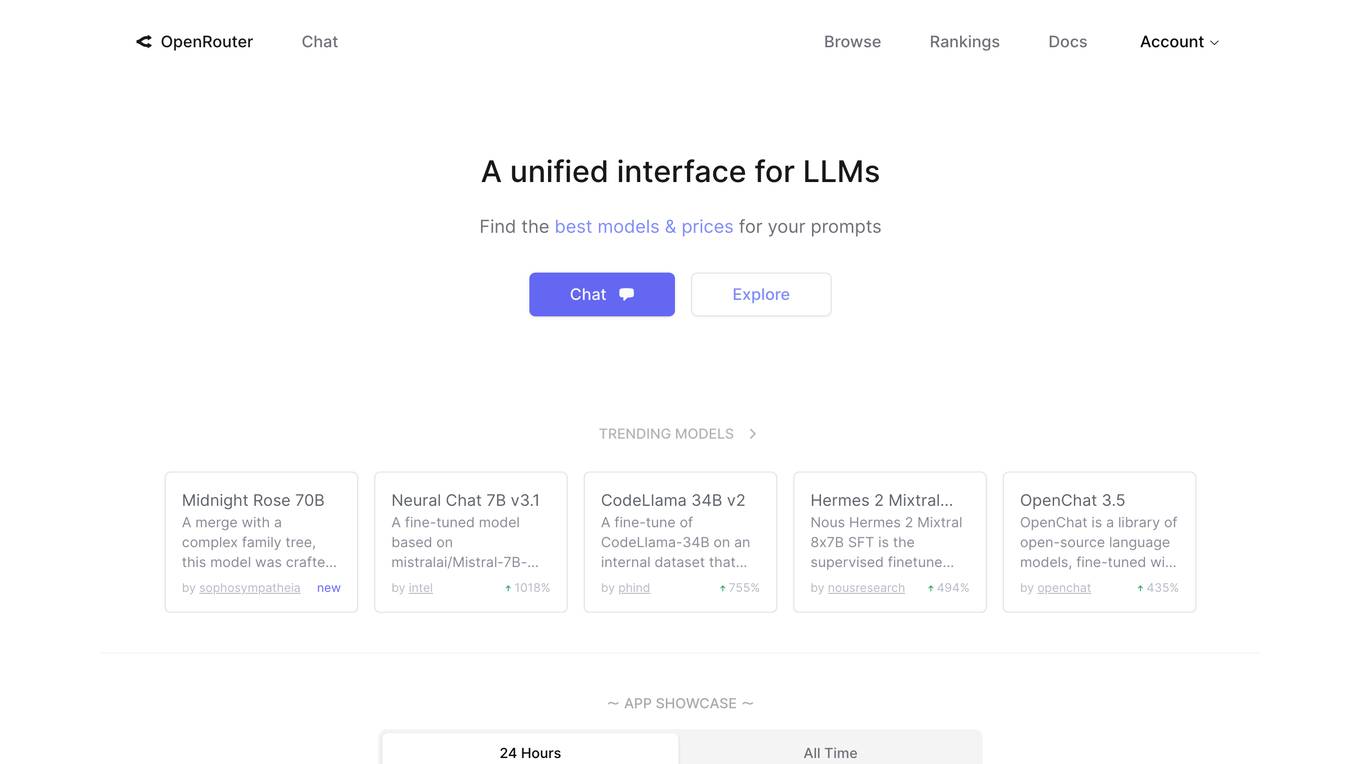

OpenRouter

OpenRouter is an AI tool that provides a unified interface for Large Language Models (LLMs). Users can find the best models and prices for various prompts related to roleplay, programming, marketing, technology, science, translation, legal, finance, health, trivia, and academia. The models listed on the platform include transformer-based models with multilingual, coding, mathematics, and reasoning capabilities, some of which feature SwiGLU activation, attention QKV bias, and grouped-query attention. OpenRouter allows users to interact with trending models, simulate the web, chat with multiple LLMs simultaneously, and engage in AI character chat and roleplay.

AI Tool Reviews

The website is a platform that provides comprehensive and unbiased reviews of various AI tools and applications. It aims to help users, especially small businesses, make informed decisions about selecting the right AI tools to enhance productivity and stay ahead of the competition. The site offers detailed comparisons, interviews with AI innovators, expert tips, and insights into the future of artificial intelligence. It also features blog posts on AI-related topics, free resources, and a newsletter for staying updated on the latest AI trends and tools.

NavamAI

NavamAI is a personal AI tool designed to supercharge productivity and workflow by providing fast and quality AI capabilities. It offers a rich UI within the command prompt, allowing users to interact with 15 language models and 7 providers seamlessly. NavamAI enables users to automate markdown workflows, generate situational apps, and create personalized AI applications with ease. With features like quick start commands, customizable prompts, and privacy controls, NavamAI empowers users to do more with less and make their AI experience their own.

ChatLabs

ChatLabs is an AI application that provides users with access to a variety of AI models for tasks such as chatting, writing, web searching, image generation, and more. Users can interact with AI assistants, browse the web, generate AI art, and utilize voice input features. The platform offers a prompt library, chat with files functionality, split-screen mode, and a Chrome extension for enhanced user experience.

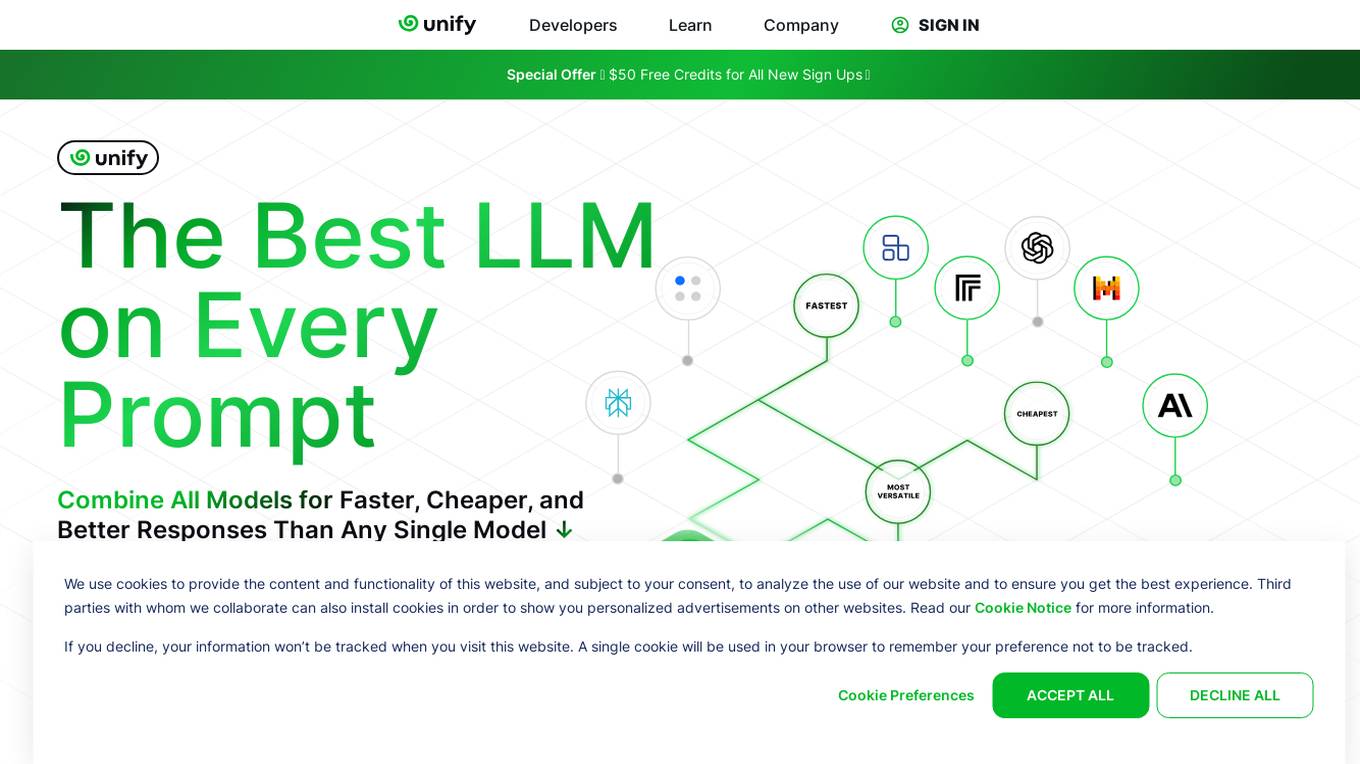

Unify

Unify is an AI tool that offers a unified platform for accessing and comparing various Large Language Models (LLMs) from different providers. It allows users to combine models for faster, cheaper, and better responses, optimizing for quality, speed, and cost-efficiency. Unify simplifies the complex task of selecting the best LLM by providing transparent benchmarks, personalized routing, and performance optimization tools.
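
One way to picture routing that trades off quality, speed, and cost is a weighted score per model. The sketch below is an assumption-laden illustration, not Unify's actual routing algorithm: `ModelStats`, `route`, the weights, and all numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class ModelStats:
    name: str
    quality: float    # benchmark score in [0, 1], higher is better (hypothetical)
    latency_s: float  # typical response time in seconds (hypothetical)
    cost_usd: float   # typical cost per request in USD (hypothetical)


def route(
    candidates: Iterable[ModelStats],
    w_quality: float = 1.0,
    w_latency: float = 0.1,
    w_cost: float = 10.0,
) -> ModelStats:
    """Pick the model with the best weighted quality/latency/cost trade-off."""

    def score(model: ModelStats) -> float:
        # Quality counts in favor of a model; latency and cost count against it.
        return (
            w_quality * model.quality
            - w_latency * model.latency_s
            - w_cost * model.cost_usd
        )

    return max(candidates, key=score)


if __name__ == "__main__":
    models = [
        ModelStats("large-model", quality=0.9, latency_s=2.0, cost_usd=0.02),
        ModelStats("small-model", quality=0.7, latency_s=0.5, cost_usd=0.002),
    ]
    print(route(models).name)                           # default weights
    print(route(models, w_latency=0.0, w_cost=0.0).name)  # quality only
```

Changing the weights is what makes the routing "personalized": a latency-sensitive application would raise `w_latency`, while a quality-first one would lower the cost and latency weights.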

Semaj AI

Semaj AI is an AI tool designed to simplify the process of generating quizzes and obtaining answers from various AI models. It allows users to create quizzes on any topic with customizable settings and export options. Additionally, users can chat with different AI models like GPT, Gemini, and Claude to get accurate and diverse responses. The platform aims to streamline the quiz creation process and provide access to cutting-edge AI technologies for enhanced learning and research purposes.

BrainChat

BrainChat is an AI application that enables teams to utilize ChatGPT and other Large Language Models (LLMs) in a structured, secure, and collaborative manner for work purposes. It offers organized and collaborative chats, tailored AI assistants for various job roles, private and safe infrastructure, multiple LLM options, and cost-efficient pricing compared to ChatGPT Team. BrainChat allows users to import chats from ChatGPT, offers real-time collaboration, and ensures data security and GDPR compliance.

Joia

Joia is a private ChatGPT alternative built for collaboration within teams. It provides secure access to various large language models (LLMs) like GPT-4, Claude, and Gemini, allowing teams to build and share internal AI chat applications. Joia prioritizes data security and cost control, and offers a more affordable option compared to ChatGPT for Teams, with savings of up to 70%. It enables users to experiment with different LLMs and create personalized chatbots for repetitive tasks, enhancing team collaboration and efficiency.

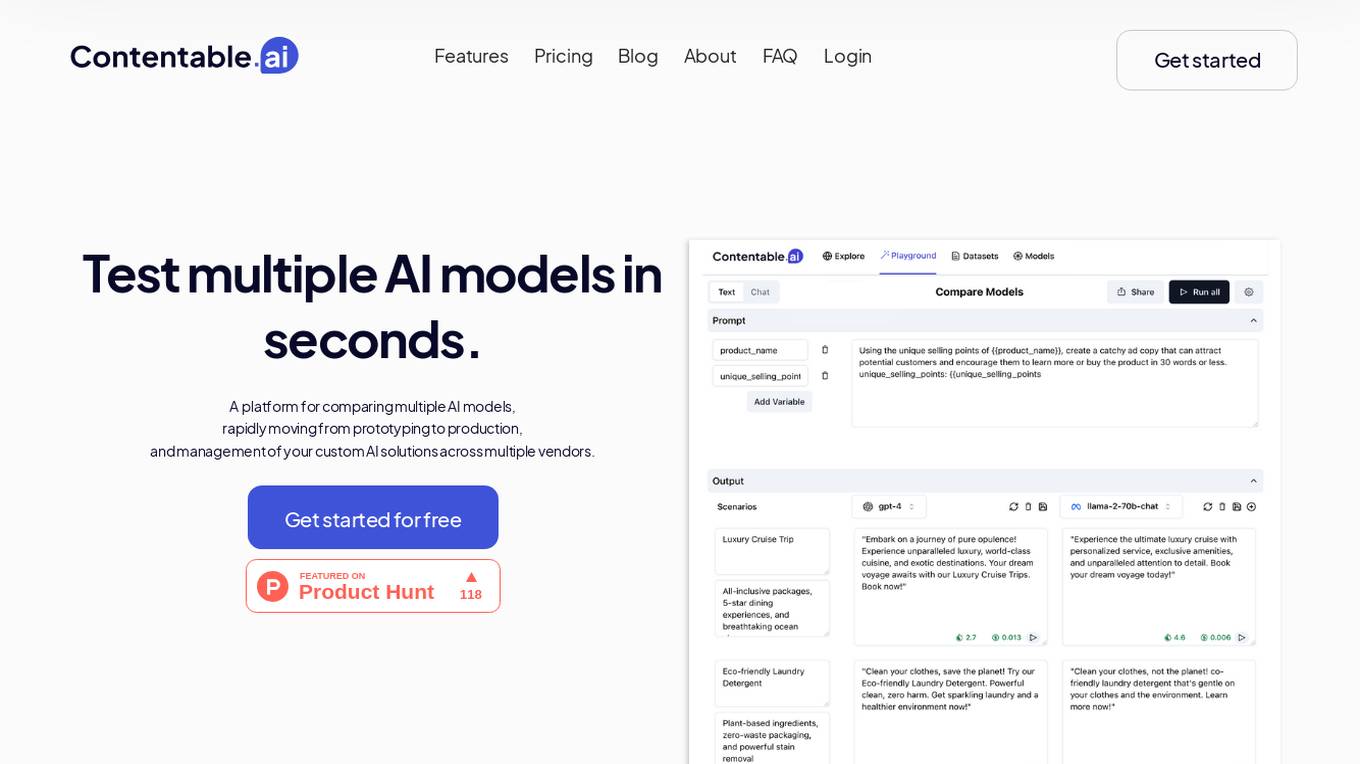

Contentable.ai

Contentable.ai is a platform for comparing multiple AI models, moving rapidly from prototyping to production, and managing custom AI solutions across multiple vendors. It allows users to test multiple AI models in seconds, compare models side-by-side across top AI providers, collaborate on AI models with their team seamlessly, design complex AI workflows without coding, and pay as they go.

Sofon

Sofon is a knowledge aggregation and curation platform that provides users with personalized insights on topics they care about. It aggregates and curates knowledge shared across 1,000+ articles, podcasts, and books, delivering a personalized stream of ideas to users. Sofon uses AI to compare ideas across hundreds of people on any question, saving users thousands of hours of curation. Users can indicate the people they want to learn from, and Sofon will curate insights across all their knowledge. Users can receive an idealetter, which is a unique combination of ideas across all the people they've selected around a common theme, delivered at an interval of their choice.

Prompt Octopus

Prompt Octopus is a free tool that allows you to compare multiple prompts side-by-side. You can add as many prompts as you need and view the responses in real-time. This can be helpful for fine-tuning your prompts and getting the best possible results from your AI model.

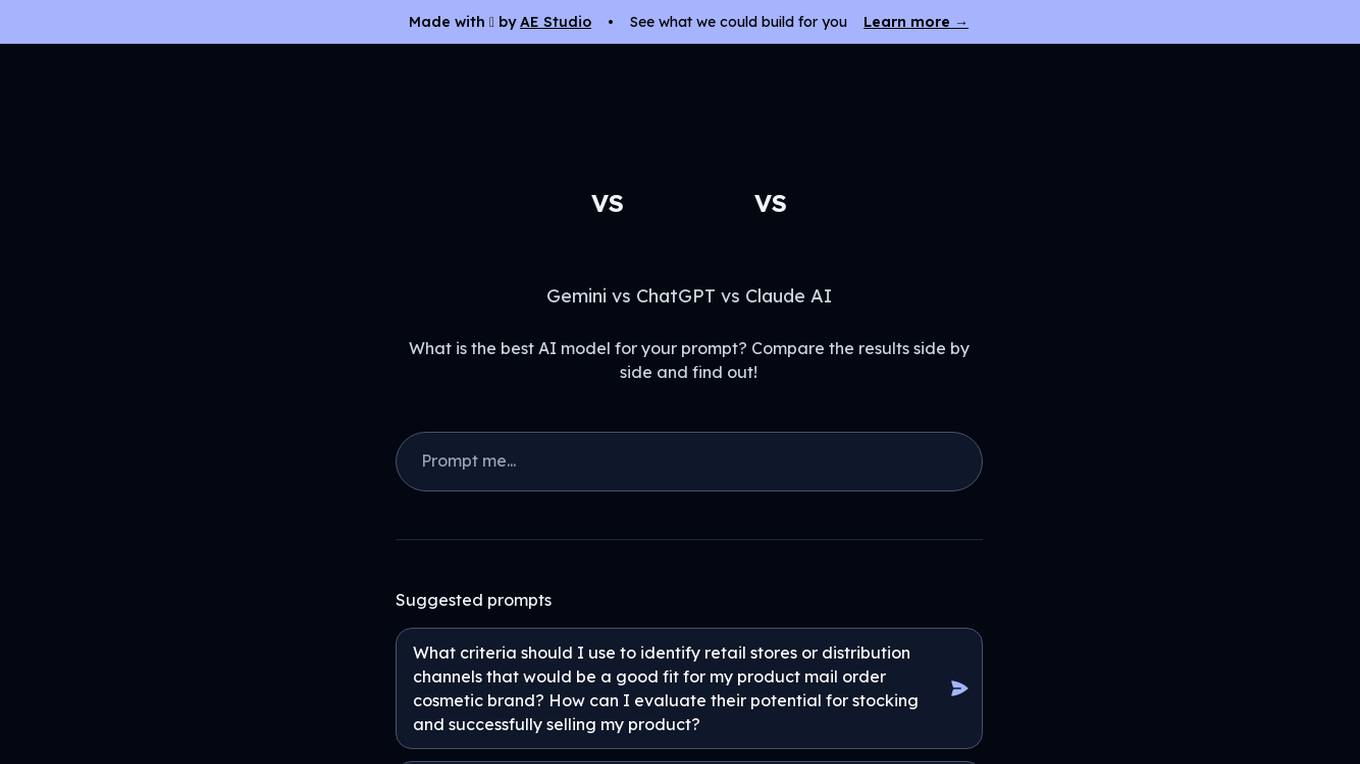

Gemini vs ChatGPT

Gemini is a multi-modal AI model, developed by Google. It is designed to understand and generate human language, and can be used for a variety of tasks, including question answering, translation, and dialogue generation. ChatGPT is a large language model, developed by OpenAI. It is also designed to understand and generate human language, and can be used for a variety of tasks, including question answering, translation, and dialogue generation.

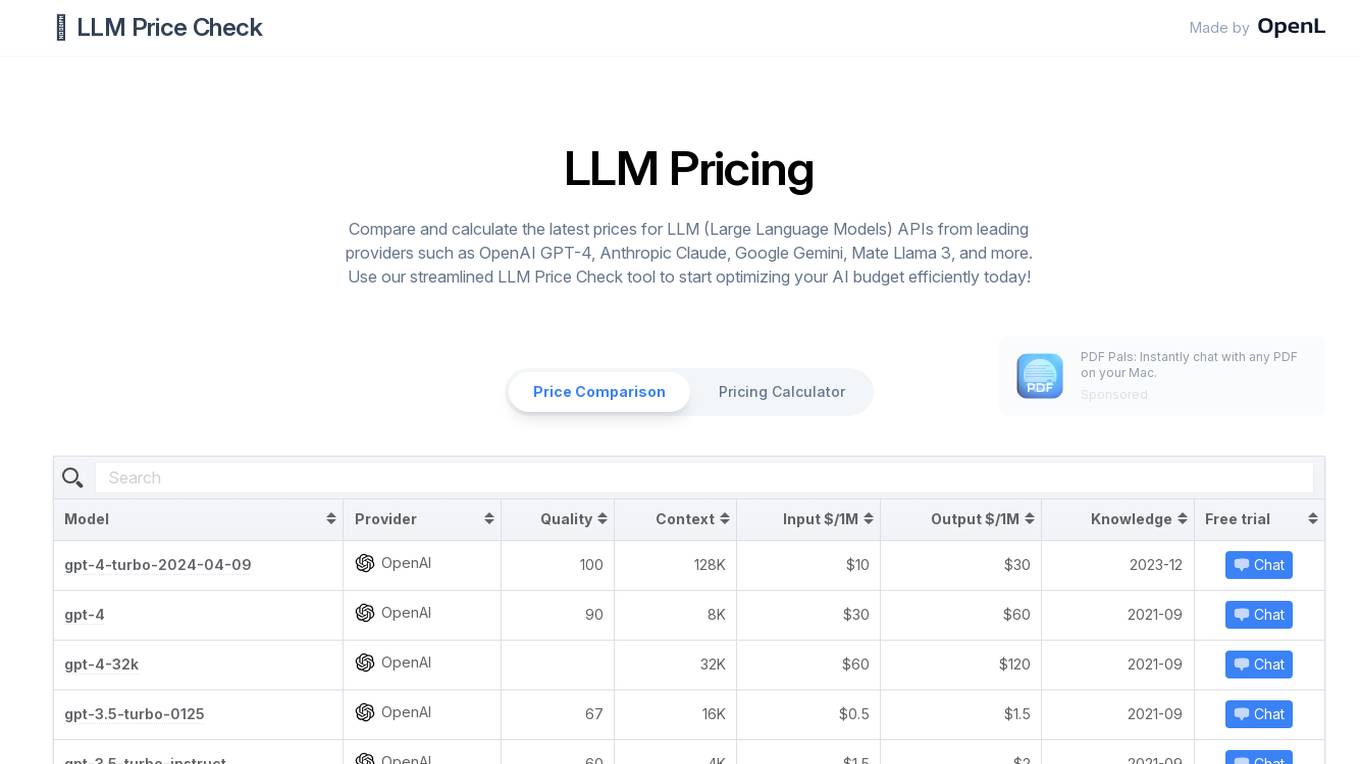

LLM Price Check

LLM Price Check is an AI tool designed to compare and calculate the latest prices for Large Language Model (LLM) APIs from leading providers such as OpenAI, Anthropic, Google, and more. Users can use the streamlined tool to optimize their AI budget efficiently by comparing pricing, sorting by various parameters, and searching for specific models. The tool provides a comprehensive overview of pricing information to help users make informed decisions when selecting an LLM API provider.
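
The underlying arithmetic is simple, assuming APIs are billed per million input and output tokens. The following sketch shows how such a comparison could be computed; `Pricing`, `request_cost`, `rank_by_cost`, the model names, and every price in it are hypothetical placeholders, not real provider rates.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Pricing:
    input_per_million: float   # USD per 1M input tokens
    output_per_million: float  # USD per 1M output tokens


def request_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request with the given token counts."""
    total = (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    )
    return total / 1_000_000


def rank_by_cost(
    prices: Dict[str, Pricing], input_tokens: int, output_tokens: int
) -> List[Tuple[str, float]]:
    """Return (model, cost) pairs sorted from cheapest to most expensive."""
    costs = {
        name: request_cost(pricing, input_tokens, output_tokens)
        for name, pricing in prices.items()
    }
    return sorted(costs.items(), key=lambda item: item[1])


# Hypothetical price table; real prices change often and must be looked up.
PRICES = {
    "model-a": Pricing(input_per_million=0.50, output_per_million=1.50),
    "model-b": Pricing(input_per_million=3.00, output_per_million=15.00),
}

if __name__ == "__main__":
    for name, cost in rank_by_cost(PRICES, input_tokens=2_000, output_tokens=500):
        print(f"{name}: ${cost:.5f} per request")
```

Because output tokens are often priced higher than input tokens, the cheapest model can differ between prompt-heavy and generation-heavy workloads, which is why the ranking takes both token counts as input.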

ChatPlayground AI

ChatPlayground AI is a versatile platform that allows users to compare multiple AI chatbots to obtain the best responses. With 14+ AI apps and features available, users can achieve better AI answers 73% of the time. The platform offers a comprehensive prompt library, real-time web search capabilities, image generation, history recall, document upload and analysis, and multilingual support. It caters to developers, data scientists, students, researchers, content creators, writers, and AI enthusiasts. Testimonials from users highlight the efficiency and creativity-enhancing benefits of using ChatPlayground AI.

thisorthis.ai

thisorthis.ai is an AI tool that allows users to compare generative AI models and AI model responses. It helps users analyze and evaluate different AI models to make informed decisions.

GetOData

GetOData is an AI-based data extraction tool designed for small-scale scraping. It allows users to discover and compare over 4,000 APIs for various use cases. The tool offers Apify Actors for extracting structured listings from any website and a Chrome Extension for seamless data extraction. With features like AI-based data extraction, side-by-side API comparisons, and automated scrolling for data collection, GetOData is a powerful tool for web scraping and data analysis.

Ai Tool Hunt

Ai Tool Hunt is a comprehensive directory of free AI tools, software, and websites. It provides users with a curated list of the best AI resources available online, empowering them to enhance their digital experiences and leverage the latest advancements in artificial intelligence. With Ai Tool Hunt, users can discover powerful AI tools for various tasks, including content creation, data analysis, image editing, language learning, and more. The platform offers detailed descriptions, user ratings, and easy access to these tools, making it a valuable resource for individuals and businesses seeking to integrate AI into their workflows.

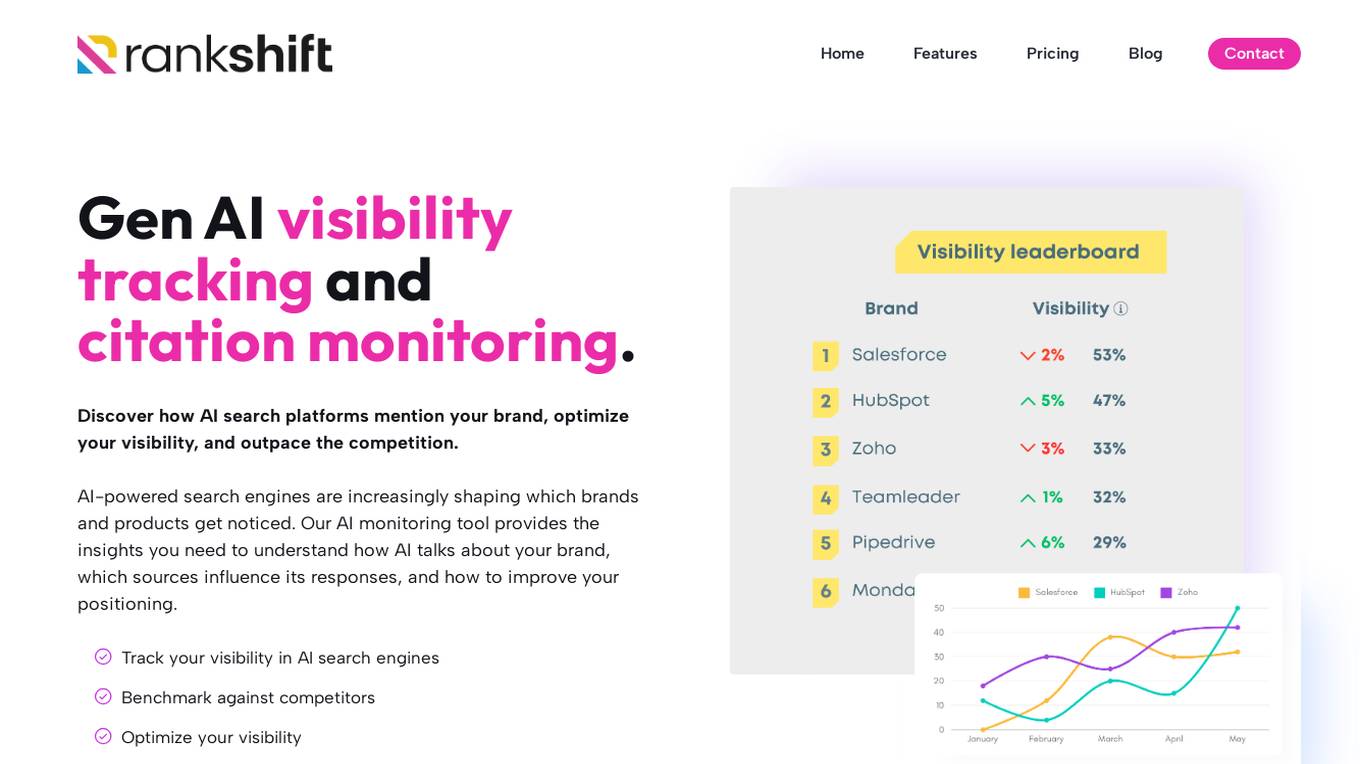

RANKSHIFT

RANKSHIFT is an AI brand visibility tracking tool that helps businesses monitor their visibility in AI search engines and optimize their presence. It provides insights on how AI platforms mention brands, compares visibility against competitors, and offers data-driven insights to improve AI search strategy. The tool tracks brand mentions, identifies sources influencing AI-generated answers, and helps businesses stay ahead in the competitive AI landscape.

4 - Open Source AI Tools

bench

Bench is a tool for evaluating LLMs for production use cases. It provides a standardized workflow for LLM evaluation with a common interface across tasks and use cases. Bench can be used to test whether open source LLMs can do as well as the top closed-source LLM API providers on specific data, and to translate the rankings on LLM leaderboards and benchmarks into scores that are relevant for actual use cases.

llm-autoeval

LLM AutoEval is a tool that simplifies the process of evaluating Large Language Models (LLMs) using a convenient Colab notebook. It automates the setup and execution of evaluations using RunPod, allowing users to customize evaluation parameters and generate summaries that can be uploaded to GitHub Gist for easy sharing and reference. LLM AutoEval supports various benchmark suites, including Nous, Lighteval, and Open LLM, enabling users to compare their results with existing models and leaderboards.

raga-llm-hub

Raga LLM Hub is a comprehensive evaluation toolkit for Large Language Models (LLMs) with over 100 metrics. It allows developers and organizations to evaluate and compare LLMs effectively, establishing guardrails for LLMs and Retrieval Augmented Generation (RAG) applications. The platform assesses aspects like Relevance & Understanding, Content Quality, Hallucination, Safety & Bias, Context Relevance, Guardrails, and Vulnerability scanning, along with Metric-Based Tests for quantitative analysis. It helps teams identify and fix issues throughout the LLM lifecycle, improving reliability and trustworthiness.

BTGenBot

BTGenBot is a tool that generates behavior trees for robots using lightweight large language models (LLMs) with a maximum of 7 billion parameters. It fine-tunes on a specific dataset, compares multiple LLMs, and evaluates generated behavior trees using various methods. The tool demonstrates the potential of LLMs with a limited number of parameters in creating effective and efficient robot behaviors.

20 - OpenAI GPTs

Best Spy Apps for Android (Q&A)

FREE tool to compare best spy apps for Android. Get answers to your questions and explore features, pricing, pros and cons of each spy app.

GPTValue

Compare the output quality of similar GPTs on the same question and identify the most valuable one.

TV Comparison | Comprehensive TV Database

Compare TV devices. Uncover the pros and cons of the latest TV models.

PerspectiveBot

Provide TOPIC & different views to compare: Gateway to Informed Comparisons. Harness AI-powered insights to analyze and score different viewpoints on any topic, delivering balanced, data-driven perspectives for smarter decision-making.

Calorie Count & Cut Cost: Food Data

Apples vs. Oranges? Optimize your low-calorie diet. Compare food items. Get tailored advice on satiating, nutritious, cost-effective food choices based on 240 items.

Best price kuwait

A customized GPT model for price comparison would search and compare product prices on websites in Kuwait, tailored to local markets and languages.

Software Comparison

I compare different software, providing detailed, balanced information.

Website Conversion by B12

I'll help you optimize your website for more conversions, and compare your site's CRO potential to competitors’.

Course Finder

Find the perfect online course in tech, business, marketing, programming, and more. Compare options from top platforms like Udemy, Coursera, and edX.

AI Hub

Your Gateway to AI Discovery – Ask, Compare, Learn. Explore AI tools and software with ease. Create AI Tech Stacks for your business and much more – Just ask, and AI Hub will do the rest!

🔵 GPT Boosted

GPT-5? | Enhanced version of GPT-4 Turbo. Don't believe it? Try and compare! | ver .001