EmotiVoice

EmotiVoice 😊: a Multi-Voice and Prompt-Controlled TTS Engine

Stars: 6695

EmotiVoice is a powerful and modern open-source text-to-speech engine that supports emotional synthesis, enabling users to create speech with a wide range of emotions such as happy, excited, sad, and angry. It offers over 2000 different voices in both English and Chinese. Users can access EmotiVoice through an easy-to-use web interface or a scripting interface for batch generation of results. The tool is continuously evolving with new features and updates, prioritizing community input and user feedback.

README:

EmotiVoice is a powerful and modern open-source text-to-speech engine that is available to you at no cost. EmotiVoice speaks both English and Chinese, and with over 2000 different voices (refer to the List of Voices for details). The most prominent feature is emotional synthesis, allowing you to create speech with a wide range of emotions, including happy, excited, sad, angry and others.

An easy-to-use web interface is provided. There is also a scripting interface for batch generation of results.

Here are a few samples that EmotiVoice generates:

A demo is hosted on Replicate, EmotiVoice.

-

[x] Tuning voice speed is now supported in 'OpenAI-compatible-TTS API', thanks to @john9405. #90 #67 #77

-

[x] The EmotiVoice app for Mac was released on December 28th, 2023. Just download and taste EmotiVoice's offerings!

-

[x] The EmotiVoice HTTP API was released on December 6th, 2023. Easier to start, faster to use, and with over 13,000 free calls. Additionally, users can explore more captivating voices provided by Zhiyun.

-

[x] Voice Cloning with your personal data has been released on December 13th, 2023, along with DataBaker Recipe and LJSpeech Recipe.

EmotiVoice prioritizes community input and user requests. We welcome your feedback!

The easiest way to try EmotiVoice is by running the docker image. You need a machine with a NVidia GPU. If you have not done so, set up NVidia container toolkit by following the instructions for Linux or Windows WSL2. Then EmotiVoice can be run with,

docker run -dp 127.0.0.1:8501:8501 syq163/emoti-voice:latestThe Docker image was updated on January 4th, 2024. If you have an older version, please update it by running the following commands:

docker pull syq163/emoti-voice:latest

docker run -dp 127.0.0.1:8501:8501 -p 127.0.0.1:8000:8000 syq163/emoti-voice:latestNow open your browser and navigate to http://localhost:8501 to start using EmotiVoice's powerful TTS capabilities.

Starting from this version, the 'OpenAI-compatible-TTS API' is now accessible via http://localhost:8000/.

conda create -n EmotiVoice python=3.8 -y

conda activate EmotiVoice

pip install torch torchaudio

pip install numpy numba scipy transformers soundfile yacs g2p_en jieba pypinyin pypinyin_dictWe recommend that users refer to the wiki page How to download the pretrained model files if they encounter any issues.

git lfs install

git lfs clone https://huggingface.co/WangZeJun/simbert-base-chinese WangZeJun/simbert-base-chineseor, you can run:

git clone https://www.modelscope.cn/syq163/WangZeJun.git- You can download the pretrained models by simply running the following command:

git clone https://www.modelscope.cn/syq163/outputs.git- The inference text format is

<speaker>|<style_prompt/emotion_prompt/content>|<phoneme>|<content>.

- inference text example:

8051|Happy|<sos/eos> [IH0] [M] [AA1] [T] engsp4 [V] [OY1] [S] engsp4 [AH0] engsp1 [M] [AH1] [L] [T] [IY0] engsp4 [V] [OY1] [S] engsp1 [AE1] [N] [D] engsp1 [P] [R] [AA1] [M] [P] [T] engsp4 [K] [AH0] [N] [T] [R] [OW1] [L] [D] engsp1 [T] [IY1] engsp4 [T] [IY1] engsp4 [EH1] [S] engsp1 [EH1] [N] [JH] [AH0] [N] . <sos/eos>|Emoti-Voice - a Multi-Voice and Prompt-Controlled T-T-S Engine.

-

You can get phonemes by

python frontend.py data/my_text.txt > data/my_text_for_tts.txt. -

Then run:

TEXT=data/inference/text

python inference_am_vocoder_joint.py \

--logdir prompt_tts_open_source_joint \

--config_folder config/joint \

--checkpoint g_00140000 \

--test_file $TEXTthe synthesized speech is under outputs/prompt_tts_open_source_joint/test_audio.

- Or if you just want to use the interactive TTS demo page, run:

pip install streamlit

streamlit run demo_page.pyThanks to @lewangdev for adding an OpenAI compatible API #60. To set it up, use the following command:

pip install fastapi pydub uvicorn[standard] pyrubberband

uvicorn openaiapi:app --reloadYou may find more information from our wiki page.

Voice Cloning with your personal data has been released on December 13th, 2023.

- Our future plan can be found in the ROADMAP file.

- The current implementation focuses on emotion/style control by prompts. It uses only pitch, speed, energy, and emotion as style factors, and does not use gender. But it is not complicated to change it to style/timbre control.

- Suggestions are welcome. You can file issues or @ydopensource on twitter.

Welcome to scan the QR code below and join the WeChat group.

- PromptTTS. The PromptTTS paper is a key basis of this project.

- LibriTTS. The LibriTTS dataset is used in training of EmotiVoice.

- HiFiTTS. The HiFi TTS dataset is used in training of EmotiVoice.

- ESPnet.

- WeTTS

- HiFi-GAN

- Transformers

- tacotron

- KAN-TTS

- StyleTTS

- Simbert

- cn2an. EmotiVoice incorporates cn2an for number processing.

EmotiVoice is provided under the Apache-2.0 License - see the LICENSE file for details.

The interactive page is provided under the User Agreement file.

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for EmotiVoice

Similar Open Source Tools

EmotiVoice

EmotiVoice is a powerful and modern open-source text-to-speech engine that supports emotional synthesis, enabling users to create speech with a wide range of emotions such as happy, excited, sad, and angry. It offers over 2000 different voices in both English and Chinese. Users can access EmotiVoice through an easy-to-use web interface or a scripting interface for batch generation of results. The tool is continuously evolving with new features and updates, prioritizing community input and user feedback.

stability-sdk

The stability-sdk is a Python package that provides a client implementation for interacting with the Stability API. This API allows users to generate images, upscale images, and animate images using a variety of different models and settings. The stability-sdk makes it easy to use the Stability API from Python code, and it provides a number of helpful features such as command line usage, support for multiple models, and the ability to filter artifacts by type.

instructor_ex

Instructor is a tool designed to structure outputs from OpenAI and other OSS LLMs by coaxing them to return JSON that maps to a provided Ecto schema. It allows for defining validation logic to guide LLMs in making corrections, and supports automatic retries. Instructor is primarily used with the OpenAI API but can be extended to work with other platforms. The tool simplifies usage by creating an ecto schema, defining a validation function, and making calls to chat_completion with instructions for the LLM. It also offers features like max_retries to fix validation errors iteratively.

gemini-next-chat

Gemini Next Chat is an open-source, extensible high-performance Gemini chatbot framework that supports one-click free deployment of private Gemini web applications. It provides a simple interface with image recognition and voice conversation, supports multi-modal models, talk mode, visual recognition, assistant market, support plugins, conversation list, full Markdown support, privacy and security, PWA support, well-designed UI, fast loading speed, static deployment, and multi-language support.

PySpur

PySpur is a graph-based editor designed for LLM workflows, offering modular building blocks for easy workflow creation and debugging at node level. It allows users to evaluate final performance and promises self-improvement features in the future. PySpur is easy-to-hack, supports JSON configs for workflow graphs, and is lightweight with minimal dependencies, making it a versatile tool for workflow management in the field of AI and machine learning.

KIVI

KIVI is a plug-and-play 2bit KV cache quantization algorithm optimizing memory usage by quantizing key cache per-channel and value cache per-token to 2bit. It enables LLMs to maintain quality while reducing memory usage, allowing larger batch sizes and increasing throughput in real LLM inference workloads.

RWKV-Runner

RWKV Runner is a project designed to simplify the usage of large language models by automating various processes. It provides a lightweight executable program and is compatible with the OpenAI API. Users can deploy the backend on a server and use the program as a client. The project offers features like model management, VRAM configurations, user-friendly chat interface, WebUI option, parameter configuration, model conversion tool, download management, LoRA Finetune, and multilingual localization. It can be used for various tasks such as chat, completion, composition, and model inspection.

thread

Thread is an AI-powered Jupyter alternative that integrates an AI copilot into your editing experience. It offers a familiar Jupyter Notebook editing experience with features like natural language code edits, generating cells to answer questions, context-aware chat sidebar, and automatic error explanations or fixes. The tool aims to enhance code editing and data exploration by providing a more interactive and intuitive experience for users. Thread can be used for free with Ollama or your own API key, and it runs locally for convenience and privacy.

aiogram_bot_template

This repository provides a template for easy asynchronous bot development for Telegram using Aiogram library. It includes setup instructions using Docker Compose, migration commands for TortoiseORM, and examples for working with TortoiseORM. The template aims to simplify the process of creating Telegram bots with features like FastAPI integration and Docker Compose setup. The repository also includes a list of tasks to be completed, such as changing SQLAlchemy to TortoiseORM, adding FastAPI for webhook, and setting up CI/CD examples.

WildBench

WildBench is a tool designed for benchmarking Large Language Models (LLMs) with challenging tasks sourced from real users in the wild. It provides a platform for evaluating the performance of various models on a range of tasks. Users can easily add new models to the benchmark by following the provided guidelines. The tool supports models from Hugging Face and other APIs, allowing for comprehensive evaluation and comparison. WildBench facilitates running inference and evaluation scripts, enabling users to contribute to the benchmark and collaborate on improving model performance.

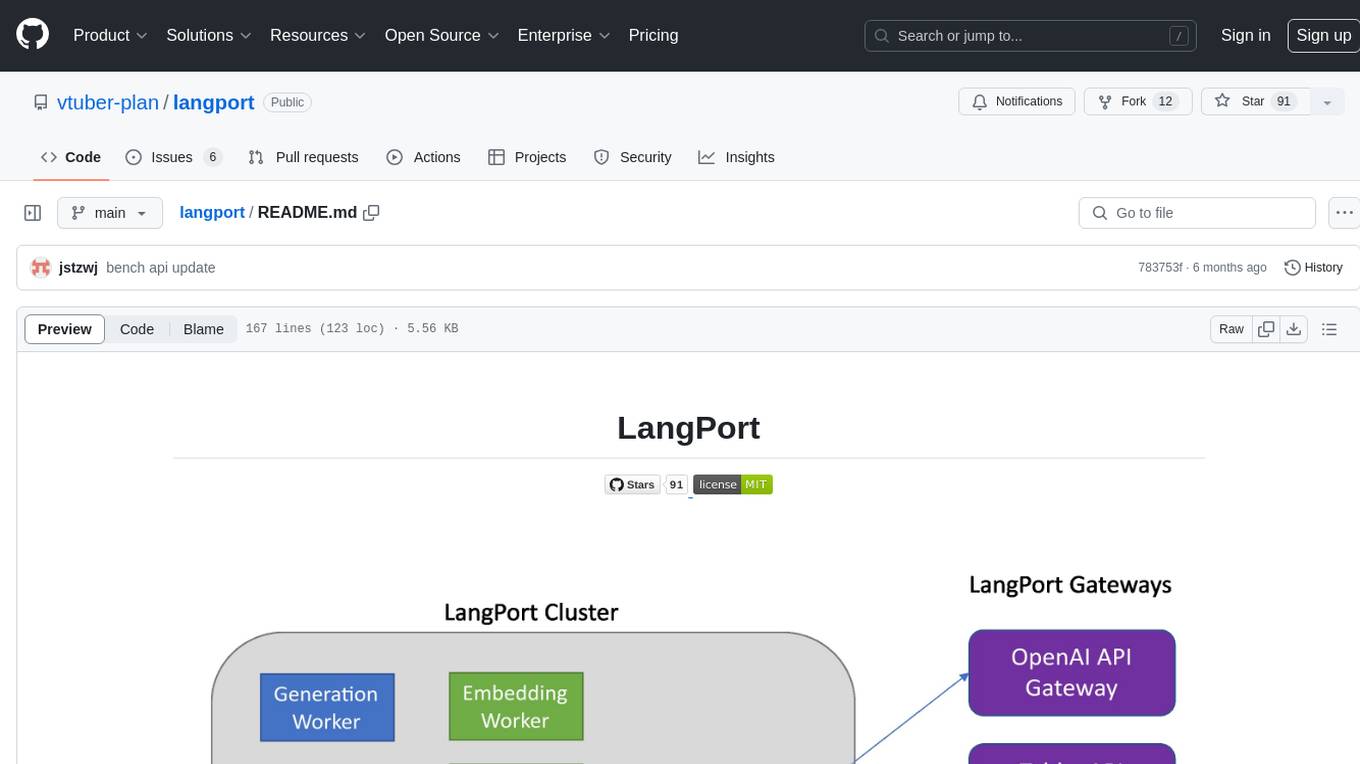

langport

LangPort is an open-source platform for serving large language models. It aims to provide a super fast LLM inference service with core features including Huggingface transformers support, distributed serving system, streaming generation, batch inference, and support for various model architectures. It offers compatibility with OpenAI, FauxPilot, HuggingFace, and Tabby APIs. The project supports model architectures like LLaMa, GLM, GPT2, and GPT Neo, and has been tested with models such as NingYu, Vicuna, ChatGLM, and WizardLM. LangPort also provides features like dynamic batch inference, int4 quantization, and generation logprobs parameter.

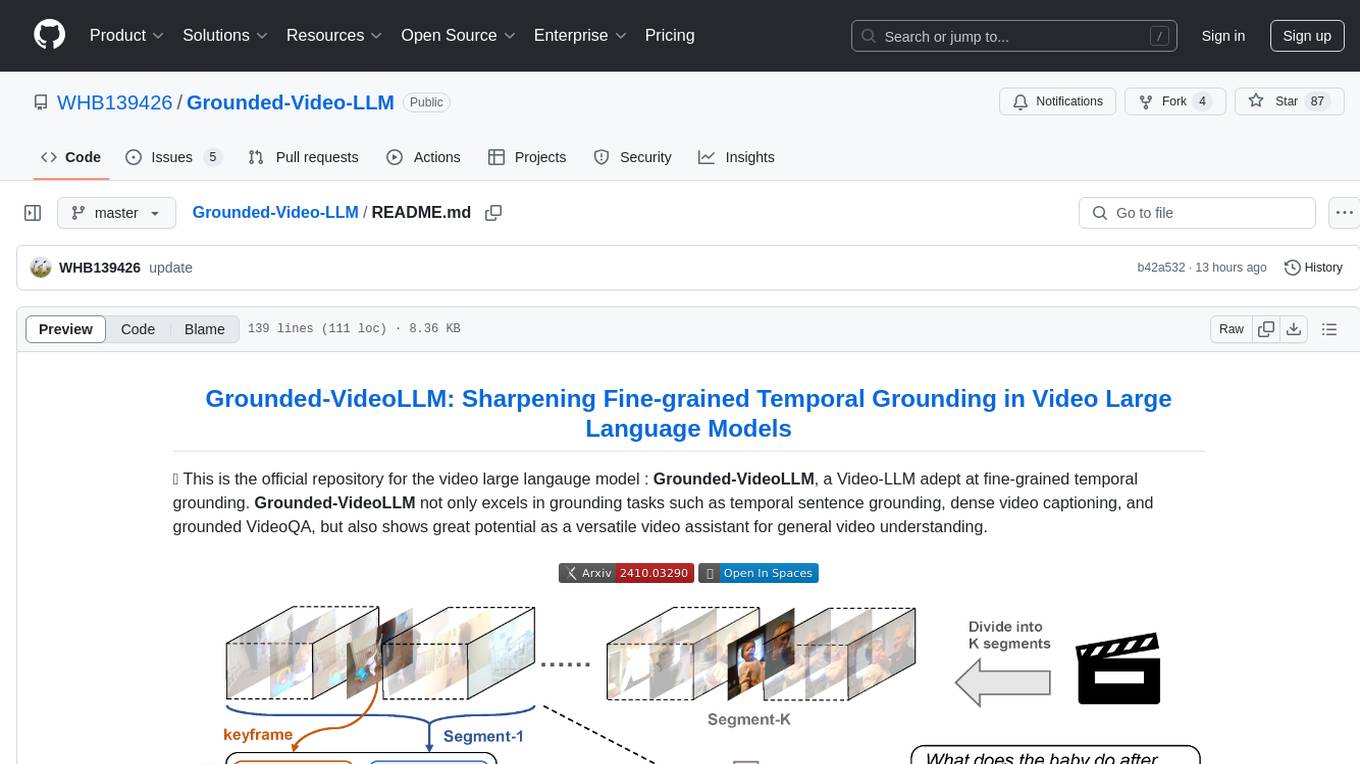

Grounded-Video-LLM

Grounded-VideoLLM is a Video Large Language Model specialized in fine-grained temporal grounding. It excels in tasks such as temporal sentence grounding, dense video captioning, and grounded VideoQA. The model incorporates an additional temporal stream, discrete temporal tokens with specific time knowledge, and a multi-stage training scheme. It shows potential as a versatile video assistant for general video understanding. The repository provides pretrained weights, inference scripts, and datasets for training. Users can run inference queries to get temporal information from videos and train the model from scratch.

ChatDev

ChatDev is a virtual software company powered by intelligent agents like CEO, CPO, CTO, programmer, reviewer, tester, and art designer. These agents collaborate to revolutionize the digital world through programming. The platform offers an easy-to-use, highly customizable, and extendable framework based on large language models, ideal for studying collective intelligence. ChatDev introduces innovative methods like Iterative Experience Refinement and Experiential Co-Learning to enhance software development efficiency. It supports features like incremental development, Docker integration, Git mode, and Human-Agent-Interaction mode. Users can customize ChatChain, Phase, and Role settings, and share their software creations easily. The project is open-source under the Apache 2.0 License and utilizes data licensed under CC BY-NC 4.0.

DemoGPT

DemoGPT is an all-in-one agent library that provides tools, prompts, frameworks, and LLM models for streamlined agent development. It leverages GPT-3.5-turbo to generate LangChain code, creating interactive Streamlit applications. The tool is designed for creating intelligent, interactive, and inclusive solutions in LLM-based application development. It offers model flexibility, iterative development, and a commitment to user engagement. Future enhancements include integrating Gorilla for autonomous API usage and adding a publicly available database for refining the generation process.

HPT

Hyper-Pretrained Transformers (HPT) is a novel multimodal LLM framework from HyperGAI, trained for vision-language models capable of understanding both textual and visual inputs. The repository contains the open-source implementation of inference code to reproduce the evaluation results of HPT Air on different benchmarks. HPT has achieved competitive results with state-of-the-art models on various multimodal LLM benchmarks. It offers models like HPT 1.5 Air and HPT 1.0 Air, providing efficient solutions for vision-and-language tasks.

ComfyUI-IF_AI_tools

ComfyUI-IF_AI_tools is a set of custom nodes for ComfyUI that allows you to generate prompts using a local Large Language Model (LLM) via Ollama. This tool enables you to enhance your image generation workflow by leveraging the power of language models.

For similar tasks

EmotiVoice

EmotiVoice is a powerful and modern open-source text-to-speech engine that supports emotional synthesis, enabling users to create speech with a wide range of emotions such as happy, excited, sad, and angry. It offers over 2000 different voices in both English and Chinese. Users can access EmotiVoice through an easy-to-use web interface or a scripting interface for batch generation of results. The tool is continuously evolving with new features and updates, prioritizing community input and user feedback.

For similar jobs

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

daily-poetry-image

Daily Chinese ancient poetry and AI-generated images powered by Bing DALL-E-3. GitHub Action triggers the process automatically. Poetry is provided by Today's Poem API. The website is built with Astro.

exif-photo-blog

EXIF Photo Blog is a full-stack photo blog application built with Next.js, Vercel, and Postgres. It features built-in authentication, photo upload with EXIF extraction, photo organization by tag, infinite scroll, light/dark mode, automatic OG image generation, a CMD-K menu with photo search, experimental support for AI-generated descriptions, and support for Fujifilm simulations. The application is easy to deploy to Vercel with just a few clicks and can be customized with a variety of environment variables.

SillyTavern

SillyTavern is a user interface you can install on your computer (and Android phones) that allows you to interact with text generation AIs and chat/roleplay with characters you or the community create. SillyTavern is a fork of TavernAI 1.2.8 which is under more active development and has added many major features. At this point, they can be thought of as completely independent programs.

Twitter-Insight-LLM

This project enables you to fetch liked tweets from Twitter (using Selenium), save it to JSON and Excel files, and perform initial data analysis and image captions. This is part of the initial steps for a larger personal project involving Large Language Models (LLMs).

AISuperDomain

Aila Desktop Application is a powerful tool that integrates multiple leading AI models into a single desktop application. It allows users to interact with various AI models simultaneously, providing diverse responses and insights to their inquiries. With its user-friendly interface and customizable features, Aila empowers users to engage with AI seamlessly and efficiently. Whether you're a researcher, student, or professional, Aila can enhance your AI interactions and streamline your workflow.

ChatGPT-On-CS

This project is an intelligent dialogue customer service tool based on a large model, which supports access to platforms such as WeChat, Qianniu, Bilibili, Douyin Enterprise, Douyin, Doudian, Weibo chat, Xiaohongshu professional account operation, Xiaohongshu, Zhihu, etc. You can choose GPT3.5/GPT4.0/ Lazy Treasure Box (more platforms will be supported in the future), which can process text, voice and pictures, and access external resources such as operating systems and the Internet through plug-ins, and support enterprise AI applications customized based on their own knowledge base.

obs-localvocal

LocalVocal is a live-streaming AI assistant plugin for OBS that allows you to transcribe audio speech into text and perform various language processing functions on the text using AI / LLMs (Large Language Models). It's privacy-first, with all data staying on your machine, and requires no GPU, cloud costs, network, or downtime.