Best AI tools for Tune Model Parameters

20 - AI tool Sites

Sapien.io

Sapien.io is a decentralized data foundry that offers data labeling services powered by a decentralized workforce and gamified platform. The platform provides high-quality training data for large language models through a human-in-the-loop labeling process, enabling fine-tuning of datasets to build performant AI models. Sapien combines AI and human intelligence to collect and annotate various data types for any model, offering customized data collection and labeling models across industries.

SD3 Medium

SD3 Medium is a text-to-image model developed by Stability AI that generates high-quality, photorealistic images from textual prompts. With 2 billion parameters, it is designed to balance image quality with resource efficiency. SD3 Medium is currently in a research preview phase, primarily catering to educational and creative purposes. Users can access the model through various licensing options and explore its capabilities via the Stability Platform.

HappyML

HappyML is an AI tool designed to assist users in machine learning tasks. It provides a user-friendly interface for running machine learning algorithms without the need for complex coding. With HappyML, users can easily build, train, and deploy machine learning models for various applications. The tool offers a range of features such as data preprocessing, model evaluation, hyperparameter tuning, and model deployment. HappyML simplifies the machine learning process, making it accessible to users with varying levels of expertise.
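To illustrate what hyperparameter tuning means in practice, here is a minimal grid-search sketch in plain Python. It is not HappyML's implementation or API; `grid_search`, `toy_validation_score`, and the search space are hypothetical names chosen for this example, and the scoring function stands in for "train a model and measure validation accuracy".

```python
from itertools import product


def grid_search(score_fn, grid):
    """Try every combination in `grid`; return (best_params, best_score)."""
    names = list(grid)
    best_params, best_score = None, float("-inf")
    for values in product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        score = score_fn(**params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


# Hypothetical stand-in for training a model and returning a validation score.
# It peaks at learning_rate=0.01 and batch_size=32.
def toy_validation_score(learning_rate, batch_size):
    return 1.0 - abs(learning_rate - 0.01) * 10 - abs(batch_size - 32) / 1000


search_space = {
    "learning_rate": [0.001, 0.01, 0.1],
    "batch_size": [16, 32, 64],
}
best_params, best_score = grid_search(toy_validation_score, search_space)
print(best_params, best_score)
```

Grid search is the simplest strategy and grows quickly with the number of parameters; tools in this category often use random or Bayesian search instead.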

Sylph AI

Sylph AI is an AI tool designed to maximize the potential of LLM applications by providing an auto-optimization library and an AI teammate to assist users in navigating complex LLM workflows. The tool aims to streamline the process of model fine-tuning, hyperparameter optimization, and auto-data labeling for LLM projects, ultimately enhancing productivity and efficiency for users.

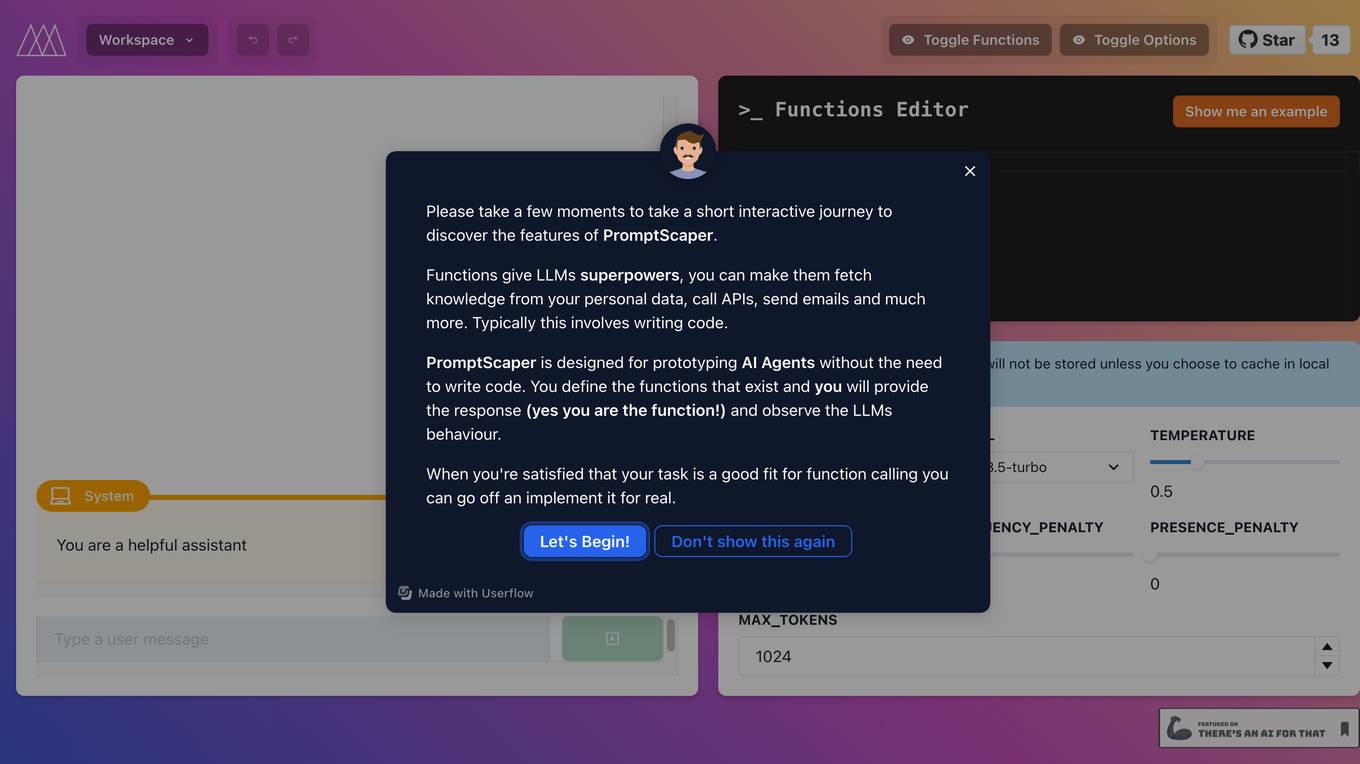

PromptScaper Workspace

PromptScaper Workspace is an AI tool designed to assist users in generating text using OpenAI's powerful language models. The tool provides a user-friendly interface for interacting with OpenAI's API to generate text based on specified parameters. Users can input prompts and customize various settings to fine-tune the generated text output. PromptScaper Workspace streamlines the process of leveraging advanced AI language models for text generation tasks, making it easier for users to create content efficiently.

FineTuneAIs.com

FineTuneAIs.com is a platform that specializes in custom AI model fine-tuning. Users can fine-tune their AI models to achieve better performance and accuracy.

poolside

poolside is an advanced foundational AI model designed specifically for software engineering challenges. It allows users to fine-tune the model on their own code, enabling it to understand project uniqueness and complexities that generic models can't grasp. The platform aims to empower teams to build better, faster, and happier by providing a personalized AI model that continuously improves. In addition to the AI model for writing code, poolside offers an intuitive editor assistant and an API for developers to leverage.

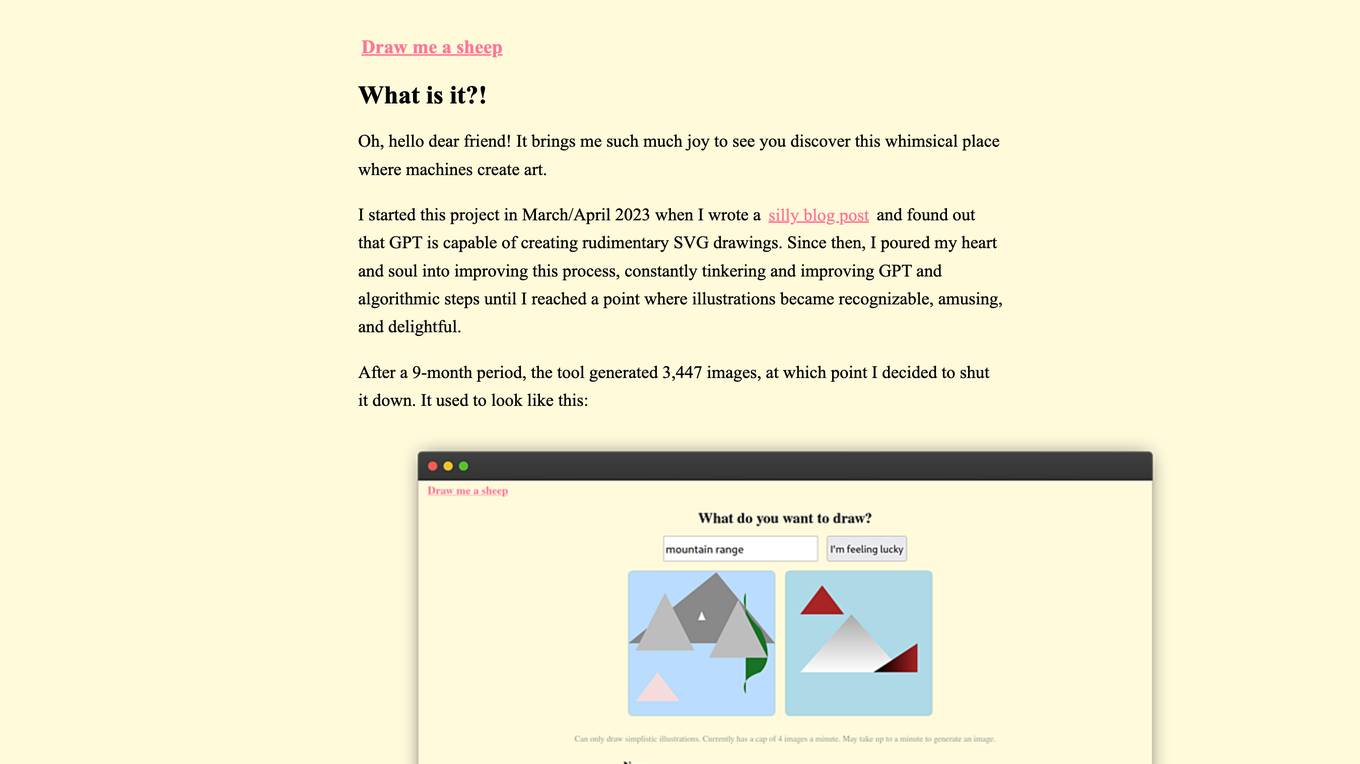

WhimsicalAI

WhimsicalAI is an AI tool that generated whimsical and delightful illustrations using GPT and algorithmic steps. The project started in March/April 2023 and evolved to produce recognizable and amusing SVG drawings. It generated 3,447 images over a 9-month period before being shut down. The collected data could be used to fine-tune a model for future projects.

Rocketbrew

Rocketbrew is an AI-powered tool that helps businesses automate their LinkedIn and email outreach. It uses a generative AI model to craft personalized messages for each lead, including follow-ups. Rocketbrew also allows users to easily tune the model with their own product description and value proposition. With Rocketbrew, businesses can save time and effort on prospecting and focus on closing more deals.

re:tune

re:tune is a no-code AI app solution that provides everything you need to transform your business with AI, from custom chatbots to autonomous agents. With re:tune, you can build chatbots for any use case, connect any data source, and integrate with all your favorite tools and platforms. re:tune is the missing platform to build your AI apps.

WriteGPT

WriteGPT is an AI-driven development partner that helps users write smarter, not harder. It offers a range of features to help users create highly personalized content, summarize articles and videos, organize their digital insights, and fine-tune their AI model. WriteGPT can be used for a variety of tasks, including sales, account management, social media, SEO, copywriting, studying, and development.

Fine-Tune AI

Fine-Tune AI is a tool that allows users to generate fine-tune data sets using prompts. This can be useful for a variety of tasks, such as improving the accuracy of machine learning models or creating new training data for AI applications.

Flux LoRA Model Library

Flux LoRA Model Library is an AI tool that provides a platform for finding and using Flux LoRA models suitable for various projects. Users can browse a catalog of popular Flux LoRA models and learn about FLUX models and LoRA (Low-Rank Adaptation) technology. The platform offers resources for fine-tuning models and ensuring responsible use of generated images.
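As a rough illustration of the LoRA idea mentioned above, the sketch below keeps a weight matrix W frozen and adds a scaled low-rank update, W + (alpha / r) * B A, where B and A are the small trainable matrices. It is a toy in plain Python, not the FLUX or any library's implementation; the function names and the example matrices are assumptions made for this example.

```python
def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_effective_weight(w, b, a, alpha, rank):
    """Return W + (alpha / rank) * (B @ A); the frozen W is not modified."""
    scale = alpha / rank
    delta = matmul(b, a)
    return [
        [w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
        for w_row, d_row in zip(w, delta)
    ]


def trainable_params(d_out, d_in, rank):
    """Parameter counts for full fine-tuning versus a rank-`rank` adapter."""
    return {"full": d_out * d_in, "lora": rank * (d_out + d_in)}


# Toy 2x3 frozen weight with a rank-1 adapter (B is 2x1, A is 1x3).
W = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
B = [[1.0], [2.0]]
A = [[0.5, 0.0, 1.0]]
W_eff = lora_effective_weight(W, B, A, alpha=2.0, rank=1)
print(W_eff)
print(trainable_params(4096, 4096, 8))
```

The parameter count shows why adapters are cheap to share: for a 4096 x 4096 layer, a rank-8 adapter trains 65,536 values instead of about 16.8 million.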

FaceTune.ai

FaceTune.ai is an AI-powered photo editing tool that allows users to enhance their selfies and portraits with various features such as skin smoothing, teeth whitening, and blemish removal. The application uses advanced algorithms to automatically detect facial features and make precise adjustments, resulting in professional-looking photos. With an intuitive interface and real-time editing capabilities, FaceTune.ai is a popular choice for individuals looking to improve their selfies before sharing them on social media.

Together AI

Together AI is an AI tool that offers a variety of generative AI services, including serverless models, fine-tuning capabilities, dedicated endpoints, and GPU clusters. Users can run or fine-tune leading open source models with only a few lines of code. The platform provides a range of functionalities for tasks such as chat, vision, text-to-speech, code/language reranking, and more. Together AI aims to simplify the process of utilizing AI models for various applications.

Predibase

Predibase is a platform for fine-tuning and serving Large Language Models (LLMs). It provides a cost-effective and efficient way to train and deploy LLMs for a variety of tasks, including classification, information extraction, customer sentiment analysis, customer support, code generation, and named entity recognition. Predibase is built on proven open-source technology, including LoRAX, Ludwig, and Horovod.

prompteasy.ai

Prompteasy.ai is an AI tool that allows users to fine-tune AI models in less than 5 minutes. It simplifies the process of training AI models on user data, making it as easy as having a conversation. Users can fully customize GPT by fine-tuning it to meet their specific needs. The tool offers data-driven customization, interactive AI coaching, and seamless model enhancement, providing users with a competitive edge and simplifying AI integration into their workflows.

Rawbot

Rawbot is an AI model comparison tool designed to simplify the selection process by enabling users to identify and understand the strengths and weaknesses of various AI models. It allows users to compare AI models based on performance optimization, strengths and weaknesses identification, customization and tuning, cost and efficiency analysis, and informed decision-making. Rawbot is a user-friendly platform that caters to researchers, developers, and business leaders, offering a comprehensive solution for selecting the best AI models tailored to specific needs.

Together AI

Together AI is an AI Acceleration Cloud platform that offers fast inference, fine-tuning, and training services. It provides self-service NVIDIA GPUs, model deployment on custom hardware, AI chat app, code execution sandbox, and tools to find the right model for specific use cases. The platform also includes a model library with open-source models, documentation for developers, and resources for advancing open-source AI. Together AI enables users to leverage pre-trained models, fine-tune them, or build custom models from scratch, catering to various generative AI needs.

Ultra AI

Ultra AI is an all-in-one AI command center for products, offering features such as multi-provider AI gateway, prompts manager, semantic caching, logs & analytics, model fallbacks, and rate limiting. It is designed to help users efficiently manage and utilize AI capabilities in their products. The platform is privacy-focused, fast, and provides quick support, making it a valuable tool for businesses looking to leverage AI technology.
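The "model fallbacks" feature listed above can be pictured with a short sketch: try each provider in order and return the first successful reply. This is only an illustration of the general pattern, not Ultra AI's API; `call_with_fallback`, `flaky_primary`, and `steady_backup` are hypothetical names, and the providers are local stand-ins rather than real model endpoints.

```python
def call_with_fallback(prompt, providers):
    """Try (name, callable) pairs in order; return (name, reply) from the first that succeeds."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real gateway would catch narrower error types
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Hypothetical stand-ins for two model providers.
def flaky_primary(prompt):
    raise TimeoutError("primary timed out")


def steady_backup(prompt):
    return f"echo: {prompt}"


used, reply = call_with_fallback(
    "hello",
    [("primary", flaky_primary), ("backup", steady_backup)],
)
print(used, reply)
```

A production gateway would typically add retries, timeouts, and logging around the same loop.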

1 - Open Source AI Tools

lighteval

LightEval is a lightweight LLM evaluation suite that Hugging Face has been using internally with the recently released LLM data processing library datatrove and LLM training library nanotron. We're releasing it with the community in the spirit of building in the open. Note that it is still at a very early stage, so don't expect 100% stability. In case of problems or questions, feel free to open an issue!

20 - OpenAI Gpts

HuggingFace Helper

A witty yet succinct guide for HuggingFace, offering technical assistance on using the platform - based on their Learning Hub

Pytorch Trainer GPT

Creates PyTorch code for training language models.

Tune Tailor: Playlist Pal

I find and create playlists based on mood, genre, and activities.

Text Tune Up GPT

I edit articles, improving clarity and respectfulness, maintaining your style.

The Name That Tune Game - from lyrics

Joyful music expert in song lyrics, offering trivia, insights, and engaging music discussions.

Joke Smith | Joke Edits for Standup Comedy

A witty editor to fine-tune stand-up comedy jokes.

Rewrite This Song: Lyrics Generator

I rewrite song lyrics to new themes, keeping the tune and essence of the original.

Dr. Tuning your Sim Racing doctor

Your quirky pit crew chief for top-notch sim racing advice

アダチさん12号(Oracle RDBMS篇)

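Ask questions about the Oracle RDBMS knowledge and know-how that Koichi Adachi accumulated during his time as a systems engineer (Oracle 7/8.1.6/8.1.7/9iR1/9iR2/10gR1/10gR2/11gR2/12c/SQL tuning). It can also create general-purpose sample questions for ChatGPT (GPT-4) based on the conversation.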

Drone Buddy

An FPV drone specialist aiding in building, tuning, and learning about the hobby.