Best AI tools for Scale Infrastructure

20 - AI tool Sites

Union.ai

Union.ai is an infrastructure platform designed for AI, ML, and data workloads. It offers a scalable MLOps platform that optimizes resources, reduces costs, and fosters collaboration among team members. Union.ai provides features such as declarative infrastructure, data lineage tracking, accelerated datasets, and more to streamline AI orchestration on Kubernetes. It aims to simplify the management of AI, ML, and data workflows in production environments by addressing complexities and offering cost-effective strategies.

Backend.AI

Backend.AI is an enterprise-scale cluster backend for AI frameworks that offers scalability, GPU virtualization, HPC optimization, and DGX-Ready software products. It provides a fast and efficient way to build, train, and serve AI models of any type and size, with flexible infrastructure options. Backend.AI aims to optimize backend resources, reduce costs, and simplify deployment for AI developers and researchers. The platform integrates seamlessly with existing tools and offers fractional GPU usage and a pay-as-you-play model to maximize resource utilization.

The AI Guild

The AI Guild is Europe's leading practitioner community in various AI-related fields such as Analytics Engineering, Data Science, Machine Learning, NLP, and more. It offers career support, exclusive connections, technical skills profiles, and growth opportunities for its members. Additionally, the AI Guild provides services for companies, including support in evaluating use cases, deploying to production, and scaling infrastructure.

Doctrine

Doctrine is an AI-powered application that allows users to add AI-powered Q&A features to their apps in minutes. It leverages knowledge from data or knowledge bases to answer user questions or embed AI features. With the ability to ingest content from various sources like websites, documents, and images, Doctrine simplifies the process of knowledge extraction and enables seamless integration of AI capabilities into applications.

OpenResty

The website appears to be displaying a '403 Forbidden' error message, which typically means that the user is not authorized to access the requested page. This error is often encountered when trying to access a webpage without the necessary permissions. The message 'openresty' suggests that the website may be using the OpenResty web platform. OpenResty is a web platform based on NGINX and Lua that is commonly used for building dynamic web applications. It provides a powerful and flexible environment for developing and deploying web services.

FinetuneFast

FinetuneFast is an AI tool designed to help developers, indie makers, and businesses efficiently fine-tune machine learning models, process data, and deploy AI solutions quickly. With pre-configured training scripts, efficient data loading pipelines, and one-click model deployment, FinetuneFast streamlines the process of building and deploying AI models, saving users valuable time and effort. The tool is user-friendly, accessible for ML beginners, and offers lifetime updates for continuous improvement.

CHAI AI

CHAI AI is a leading conversational AI platform that focuses on building AI solutions for quant traders. The platform has secured significant funding rounds to expand its computational capabilities and talent acquisition. CHAI AI offers a range of models and techniques, such as reinforcement learning with human feedback, model blending, and direct preference optimization, to enhance user engagement and retention. The platform aims to provide users with the ability to create their own ChatAIs and offers custom GPU orchestration for efficient inference. With a strong focus on user feedback and recognition, CHAI AI continues to innovate and improve its AI models to meet the demands of a growing user base.

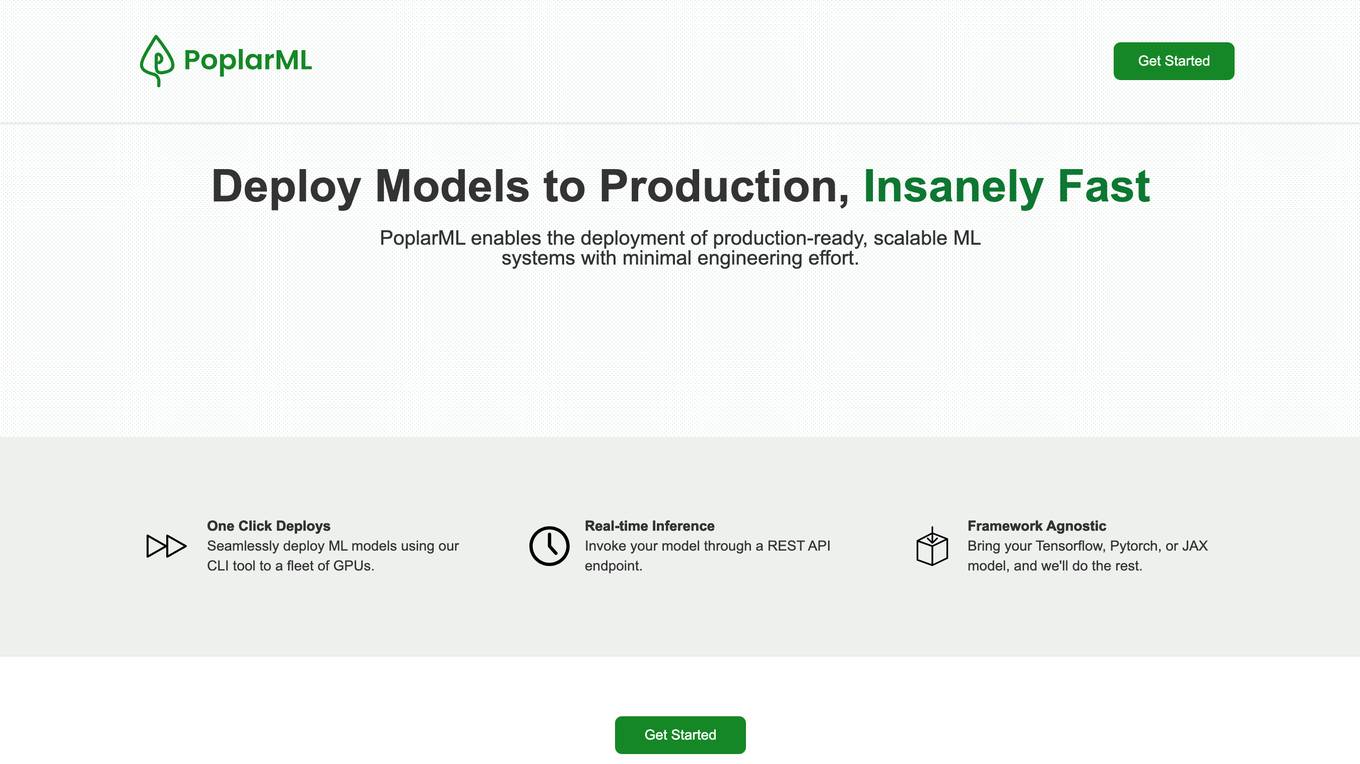

PoplarML

PoplarML is a platform that enables the deployment of production-ready, scalable ML systems with minimal engineering effort. It offers one-click deploys, real-time inference, and framework-agnostic support. With PoplarML, users can deploy ML models to a fleet of GPUs using a CLI tool and invoke their models through a REST API endpoint. The platform supports TensorFlow, PyTorch, and JAX models.

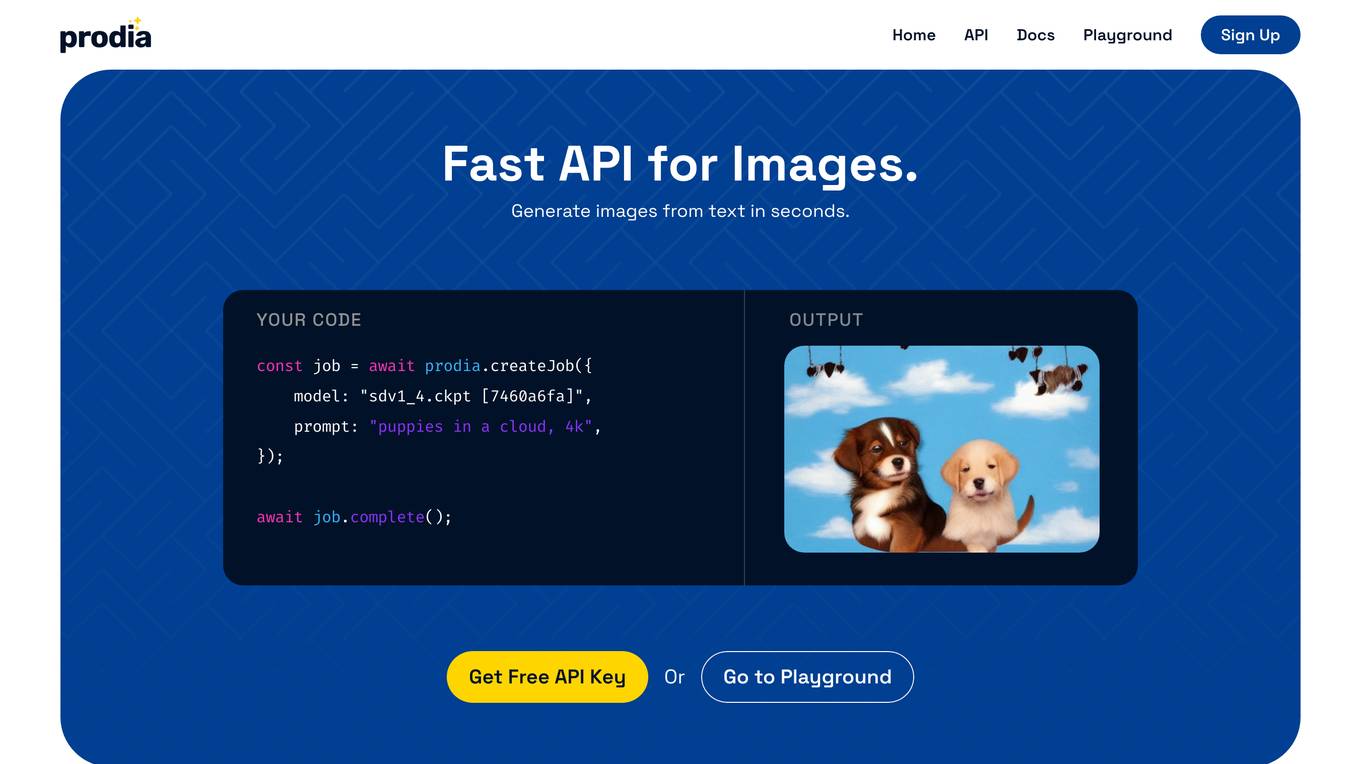

Prodia

Prodia is an API for generating images from text. It is fast, affordable, and scalable. With Prodia, you can create stunning visuals for your projects in seconds. Prodia is perfect for developers, designers, and anyone else who wants to add AI-powered image generation to their applications.

Pixis

Pixis is a codeless AI infrastructure designed for growth marketing, offering purpose-built AI solutions to scale demand generation. The platform leverages transparent AI infrastructure to optimize campaign results across platforms, with features such as targeting AI, creative AI, and performance AI. Pixis helps reduce customer acquisition cost, generate creative assets quickly, refine audience targeting, and deliver contextual communication in real-time. The platform also provides an AI savings calculator to estimate the returns from leveraging its codeless AI infrastructure for marketing. With success stories showcasing significant improvements in various marketing metrics, Pixis aims to empower businesses to unlock the capabilities of AI for enhanced performance and results.

Framer

Framer is a design tool used for creating interactive prototypes and designs. It allows users to visually design interfaces, interactions, and animations. With Framer, designers and developers can collaborate seamlessly to bring their ideas to life. The tool provides a range of features to streamline the design process and enhance creativity.

VeroCloud

VeroCloud is a platform offering tailored solutions for AI, HPC, and scalable growth. It provides cost-effective cloud solutions with guaranteed uptime, performance efficiency, and cost-saving models. Users can deploy HPC workloads seamlessly, configure environments as needed, and access optimized environments for GPU Cloud, HPC Compute, and Tally on Cloud. VeroCloud supports globally distributed endpoints, public and private image repos, and deployment of containers on a secure cloud. The platform also allows users to create and customize templates for seamless deployment across computing resources.

Mystic.ai

Mystic.ai is an AI tool designed to deploy and scale Machine Learning models with ease. It offers a fully managed Kubernetes platform that runs in your own cloud, allowing users to deploy ML models in their own Azure/AWS/GCP account or in a shared GPU cluster. Mystic.ai provides cost optimizations, fast inference, simpler developer experience, and performance optimizations to ensure high-performance AI model serving. With features like pay-as-you-go API, cloud integration with AWS/Azure/GCP, and a beautiful dashboard, Mystic.ai simplifies the deployment and management of ML models for data scientists and AI engineers.

Center for AI Safety (CAIS)

The Center for AI Safety (CAIS) is a research and field-building nonprofit organization based in San Francisco. It conducts research, runs advocacy projects, and provides resources to reduce societal-scale risks associated with artificial intelligence (AI). CAIS focuses on technical AI safety research and field-building projects, and offers a compute cluster for AI/ML safety projects. It aims for AI to be developed and used safely to benefit society, addressing inherent risks and advocating for safety standards.

Denvr DataWorks AI Cloud

Denvr DataWorks AI Cloud is a cloud-based AI platform that provides end-to-end AI solutions for businesses. It offers a range of features including high-performance GPUs, scalable infrastructure, ultra-efficient workflows, and cost efficiency. Denvr DataWorks is an NVIDIA Elite Partner for Compute, and its platform is used by leading AI companies to develop and deploy innovative AI solutions.

Heroku

Heroku is a cloud platform that enables developers to build, deliver, monitor, and scale applications quickly and easily. It supports multiple programming languages and provides a seamless deployment process. With Heroku, developers can focus on coding without worrying about infrastructure management.

Wizeline

Wizeline is an AI application that offers practical AI solutions for various industries such as media & entertainment, finance, healthcare, and retail. The application provides AI marketing, AI broadcast, and AI core services to help businesses boost revenue, enhance operational agility, and drive growth through AI-powered solutions. Wizeline excels in consultative thinking, AI innovation, and scaling operations with AI. The application is known for its deep industry expertise, real-world solutioning, and partnership with global tech leaders.

Inworld

Inworld is an AI framework designed for games and media, offering a production-ready framework for building AI agents with client-side logic and local model inference. It provides tools optimized for real-time data ingestion, low latency, and massive scale, enabling developers to create engaging and immersive experiences for users. Inworld allows for building custom AI agent pipelines, refining agent behavior and performance, and seamlessly transitioning from prototyping to production. With support for C++, Python, and game engines, Inworld aims to future-proof AI development by integrating 3rd-party components and foundational models to avoid vendor lock-in.

ColdIQ

ColdIQ is an AI-powered sales prospecting tool that helps B2B companies with revenue above $100k/month build outbound systems that sell for them. The tool offers end-to-end cold outreach campaign setup and management, email infrastructure setup and warmup, audience research and targeting, data scraping and enrichment, campaign optimization, sending automation, sales systems implementation, training on tool best practices, sales tool recommendations, free gap analysis, sales consulting, and copywriting frameworks. ColdIQ leverages AI to tailor messaging to each prospect, automate outreach, and flood calendars with opportunities.

StateSet

StateSet's Cloud Platform provides direct-to-consumer (DTC) merchants with the tools and infrastructure they need to build faster, more autonomous commerce operations. The platform includes a suite of AI-powered automation tools that can help merchants streamline their workflows, improve customer satisfaction, and reduce costs.

2 - Open Source AI Tools

game-server

A distributed Java game server based on chess, card, and MMORPG games, theoretically capable of infinitely scaling gateway, lobby, and game servers to accommodate player numbers. It implements a cluster registration center, common servers such as gateway, login, and backend server monitoring; encapsulates Redis cluster, MongoDB, and other database handling; encapsulates message queues, thread models, and tools such as data import. The gateway server uses Mina to encapsulate TCP, UDP, WebSocket, HTTP communication, allowing the framework to support multiple protocol clients for gaming. Each directory ending with 'scripts' contains script files for the corresponding projects.

gaia

Gaia is a powerful open-source tool for managing infrastructure as code. It allows users to define and provision cloud resources using simple configuration files. With Gaia, you can automate the deployment and scaling of your applications, ensuring consistency and reliability across your infrastructure. The tool supports multiple cloud providers and offers a user-friendly interface for managing your resources efficiently. Gaia simplifies the process of infrastructure management, making it easier for teams to collaborate and deploy applications seamlessly.

20 - OpenAI GPTs

ML Engineer GPT

I'm a Python and PyTorch expert with knowledge of ML infrastructure requirements ready to help you build and scale your ML projects.

R&D Process Scale-up Advisor

Optimizes production processes for efficient large-scale operations.

CIM Analyst

In-depth CIM analysis with a structured rating scale, offering detailed business evaluations.

Business Angel - Startup and Insights PRO

Business Angel provides expert startup guidance: funding, growth hacks, and pitch advice. Navigate the startup ecosystem, from seed to scale. Essential for entrepreneurs aiming for success. Master your strategy and launch with confidence. Your startup journey begins here!

Sysadmin

I help you with all your sysadmin tasks, from setting up your server to scaling your existing one. I can help you make sense of long lists of log files and suggest solutions to the problems they reveal.

Seabiscuit Launch Lander

Startup Strong Within 180 Days: Tailored advice for launching, promoting, and scaling businesses of all types. It covers all stages from pre-launch to post-launch and develops strategies including market research, branding, promotional tactics, and operational planning unique to your business. (v1.8)

Startup Advisor

Startup advisor guiding founders through detailed idea evaluation, product-market fit, business model, GTM, and scaling.