Best AI tools for< Generate Evaluation Datasets >

20 - AI tool Sites

Athina AI

Athina AI is a platform that provides research and guides for building safe and reliable AI products. It helps thousands of AI engineers in building safer products by offering tutorials, research papers, and evaluation techniques related to large language models. The platform focuses on safety, prompt engineering, hallucinations, and evaluation of AI models.

UpTrain

UpTrain is a full-stack LLMOps platform designed to help users confidently scale AI by providing a comprehensive solution for all production needs, from evaluation to experimentation to improvement. It offers diverse evaluations, automated regression testing, enriched datasets, and innovative techniques to generate high-quality scores. UpTrain is built for developers, compliant to data governance needs, cost-efficient, remarkably reliable, and open-source. It provides precision metrics, task understanding, safeguard systems, and covers a wide range of language features and quality aspects. The platform is suitable for developers, product managers, and business leaders looking to enhance their LLM applications.

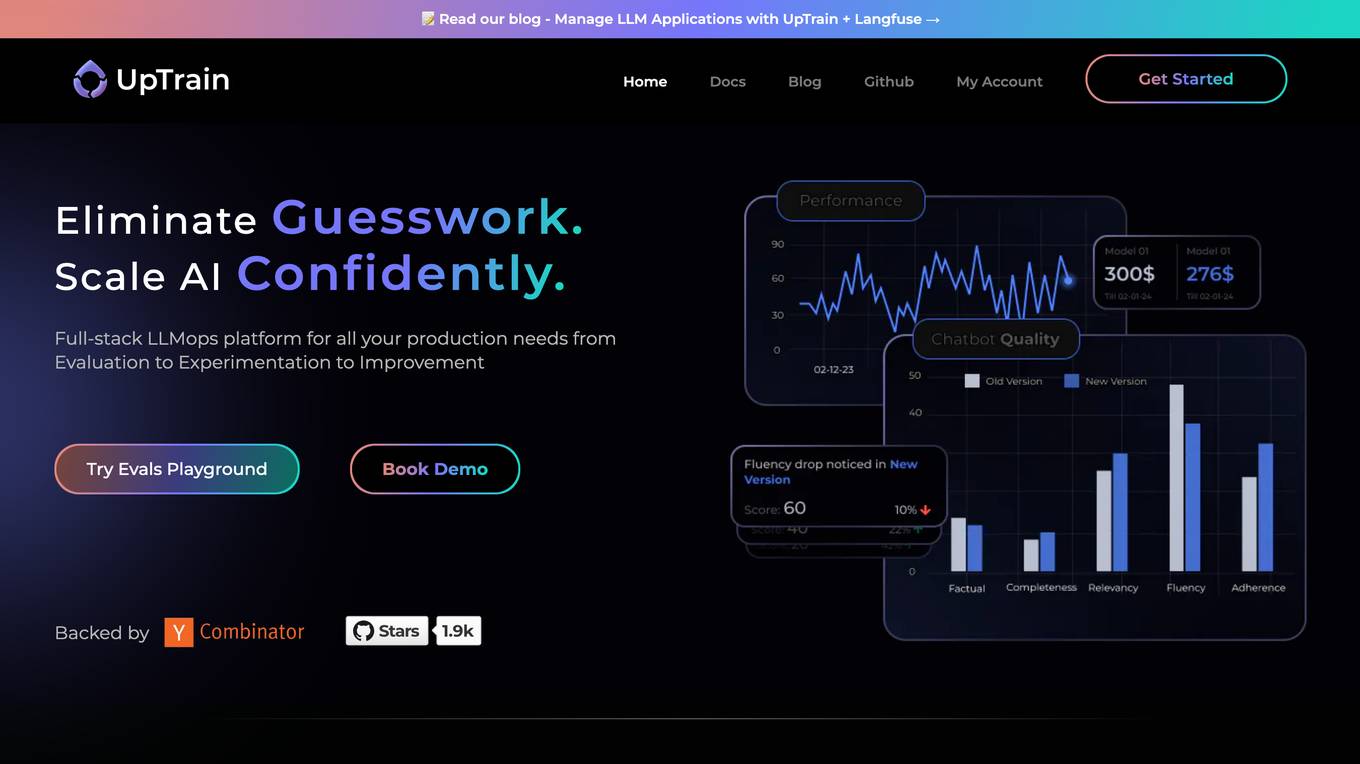

Welo Data

Welo Data is an AI tool that specializes in AI benchmarking, model assessment, and training high-quality datasets for AI models. The platform offers services such as supervised fine tuning, reinforcement learning with human feedback, data generation, expert evaluations, and data quality framework to support the development of world-class AI models. With over 27 years of experience, Welo Data combines language expertise and AI data to deliver exceptional training and performance evaluation solutions.

Vocera

Vocera is an AI voice agent testing tool that allows users to test and monitor voice AI agents efficiently. It enables users to launch voice agents in minutes, ensuring a seamless conversational experience. With features like testing against AI-generated datasets, simulating scenarios, and monitoring AI performance, Vocera helps in evaluating and improving voice agent interactions. The tool provides real-time insights, detailed logs, and trend analysis for optimal performance, along with instant notifications for errors and failures. Vocera is designed to work for everyone, offering an intuitive dashboard and data-driven decision-making for continuous improvement.

Confident AI

Confident AI is an open-source evaluation infrastructure for Large Language Models (LLMs). It provides a centralized platform to judge LLM applications, ensuring substantial benefits and addressing any weaknesses in LLM implementation. With Confident AI, companies can define ground truths to ensure their LLM is behaving as expected, evaluate performance against expected outputs to pinpoint areas for iterations, and utilize advanced diff tracking to guide towards the optimal LLM stack. The platform offers comprehensive analytics to identify areas of focus and features such as A/B testing, evaluation, output classification, reporting dashboard, dataset generation, and detailed monitoring to help productionize LLMs with confidence.

Surge AI

Surge AI is a data labeling platform that provides human-generated data for training and evaluating large language models (LLMs). It offers a global workforce of annotators who can label data in over 40 languages. Surge AI's platform is designed to be easy to use and integrates with popular machine learning tools and frameworks. The company's customers include leading AI companies, research labs, and startups.

Snapteams

Snapteams is an AI-powered hiring assistant that streamlines the recruitment process by conducting real-time video interviews and candidate screening. It leverages AI technology to engage, interview, and assess top talent seamlessly, allowing employers to focus on evaluating candidates from the comfort of their desk.

User Evaluation

User Evaluation is an AI-first user research platform that leverages AI technology to provide instant insights, comprehensive reports, and on-demand answers to enhance customer research. The platform offers features such as AI-driven data analysis, multilingual transcription, live timestamped notes, AI reports & presentations, and multimodal AI chat. User Evaluation empowers users to analyze qualitative and quantitative data, synthesize AI-generated recommendations, and ensure data security through encryption protocols. It is designed for design agencies, product managers, founders, and leaders seeking to accelerate innovation and shape exceptional product experiences.

DeepEval

DeepEval by Confident AI is a comprehensive LLM Evaluation Framework used by leading AI companies. It enables users to build reliable evaluation pipelines to test any AI system. With 50+ research-backed metrics, native multi-modal support, and auto-optimization of prompts, DeepEval offers a sophisticated evaluation ecosystem for AI applications. The framework covers unit-testing for LLMs, single and multi-turn evaluations, generation & simulation of test data, and state-of-the-art evaluation techniques like G-Eval and DAG. DeepEval is integrated with Pytest and supports various system architectures, making it a versatile tool for AI testing.

BenchLLM

BenchLLM is an AI tool designed for AI engineers to evaluate LLM-powered apps by running and evaluating models with a powerful CLI. It allows users to build test suites, choose evaluation strategies, and generate quality reports. The tool supports OpenAI, Langchain, and other APIs out of the box, offering automation, visualization of reports, and monitoring of model performance.

MindpoolAI

MindpoolAI is a tool that allows users to access multiple leading AI models with a single query. This means that users can get the answers they are looking for, spark ideas, and fuel their work, creativity, and curiosity. MindpoolAI is easy to use and does not require any technical expertise. Users simply need to enter their prompt and select the AI models they want to compare. MindpoolAI will then send the query to the selected models and present the results in an easy-to-understand format.

Adminer

Adminer is a comprehensive platform designed to assist e-commerce entrepreneurs in identifying, analyzing, and validating profitable products. It leverages artificial intelligence to provide users with data-driven insights, enabling them to make informed decisions and optimize their product offerings. Adminer's suite of features includes product research, market analysis, supplier evaluation, and automated copywriting, empowering users to streamline their operations and maximize their sales potential.

AILYZE

AILYZE is an AI tool designed for qualitative data collection and analysis. Users can upload various document formats in any language to generate codes, conduct thematic, frequency, content, and cross-group analysis, extract top quotes, and more. The tool also allows users to create surveys, utilize an AI voice interviewer, and recruit participants globally. AILYZE offers different plans with varying features and data security measures, including options for advanced analysis and AI interviewer add-ons. Additionally, users can tap into data scientists for detailed and customized analyses on a wide range of documents.

Codei

Codei is an AI-powered platform designed to help individuals land their dream software engineering job. It offers features such as application tracking, question generation, and code evaluation to assist users in honing their technical skills and preparing for interviews. Codei aims to provide personalized support and insights to help users succeed in the tech industry.

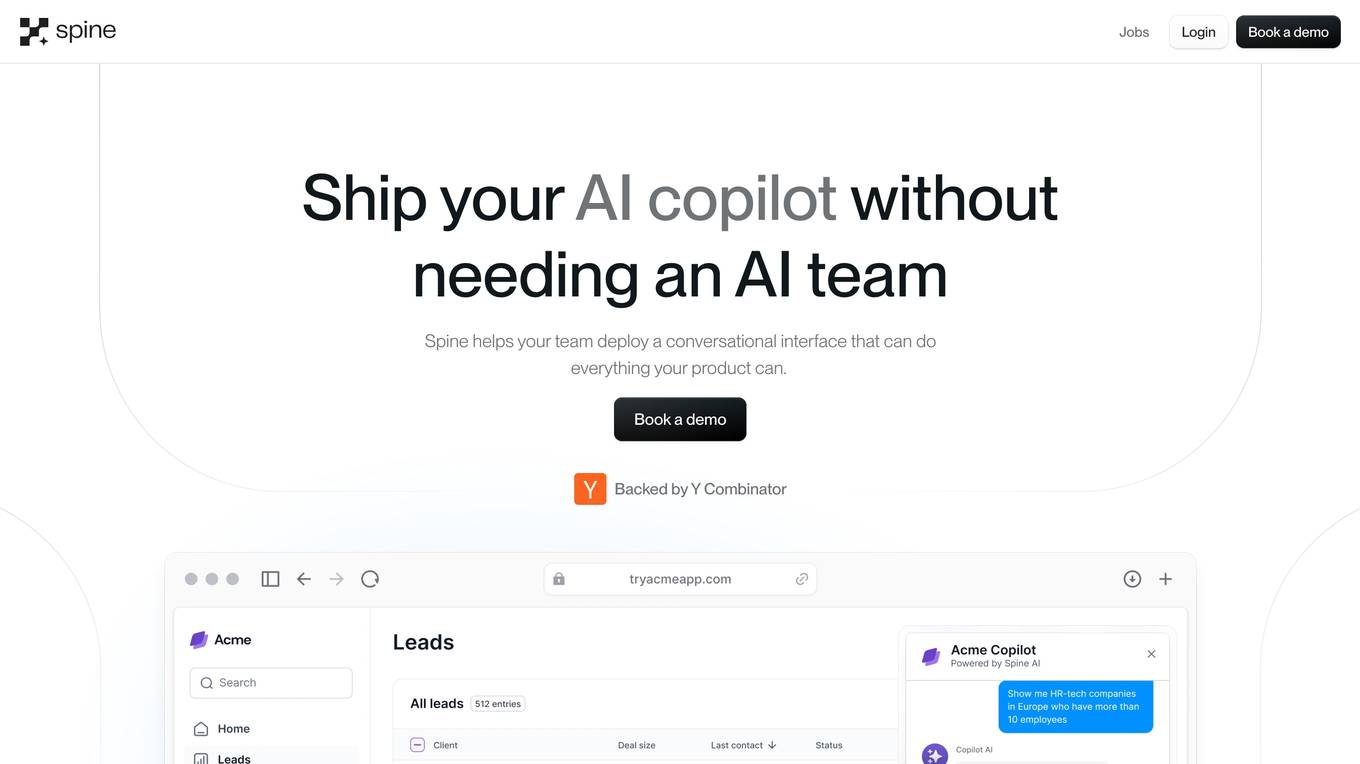

Spine AI

Spine AI is a reliable AI analyst tool that provides conversational analytics tailored to understand your business. It empowers decision-makers by offering customized insights, deep business intelligence, proactive notifications, and flexible dashboards. The tool is designed to help users make better decisions by leveraging a purpose-built Data Processing Unit (DPU) and a semantic layer for natural language interactions. With a focus on rigorous evaluation and security, Spine AI aims to deliver explainable and customizable AI solutions for businesses.

Reka

Reka is a cutting-edge AI application offering next-generation multimodal AI models that empower agents to see, hear, and speak. Their flagship model, Reka Core, competes with industry leaders like OpenAI and Google, showcasing top performance across various evaluation metrics. Reka's models are natively multimodal, capable of tasks such as generating textual descriptions from videos, translating speech, answering complex questions, writing code, and more. With advanced reasoning capabilities, Reka enables users to solve a wide range of complex problems. The application provides end-to-end support for 32 languages, image and video comprehension, multilingual understanding, tool use, function calling, and coding, as well as speech input and output.

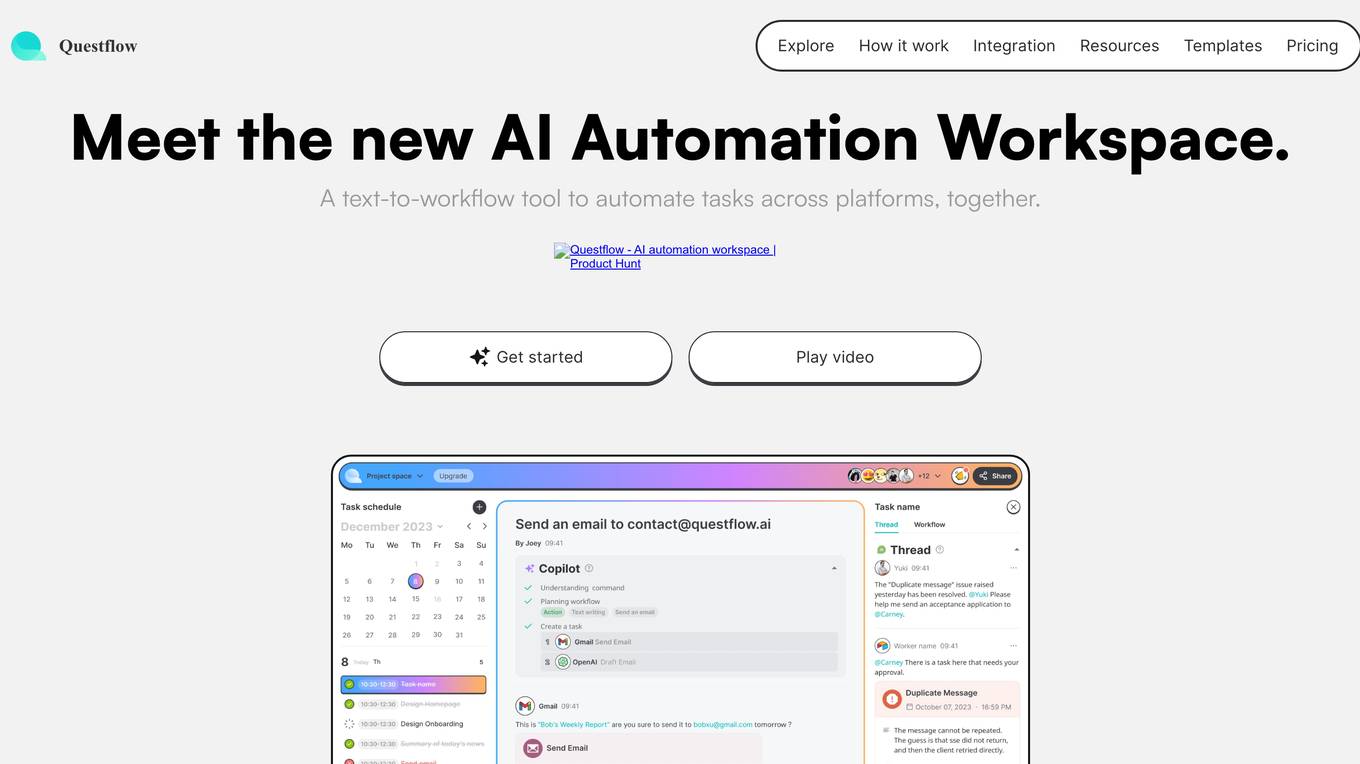

Questflow

Questflow is a decentralized AI agent economy platform that allows users to orchestrate multiple AI agents to gather insights, take action, and earn rewards autonomously. It serves as a co-pilot for work, helping knowledge workers automate repetitive tasks in a private, safety-first approach. The platform offers features such as multi-agent orchestration, user-friendly dashboard, visual reports, smart keyword generator, content evaluation, SEO goal setting, automated alerts, actionable SEO tips, regular SEO goal setting, and link optimization wizard.

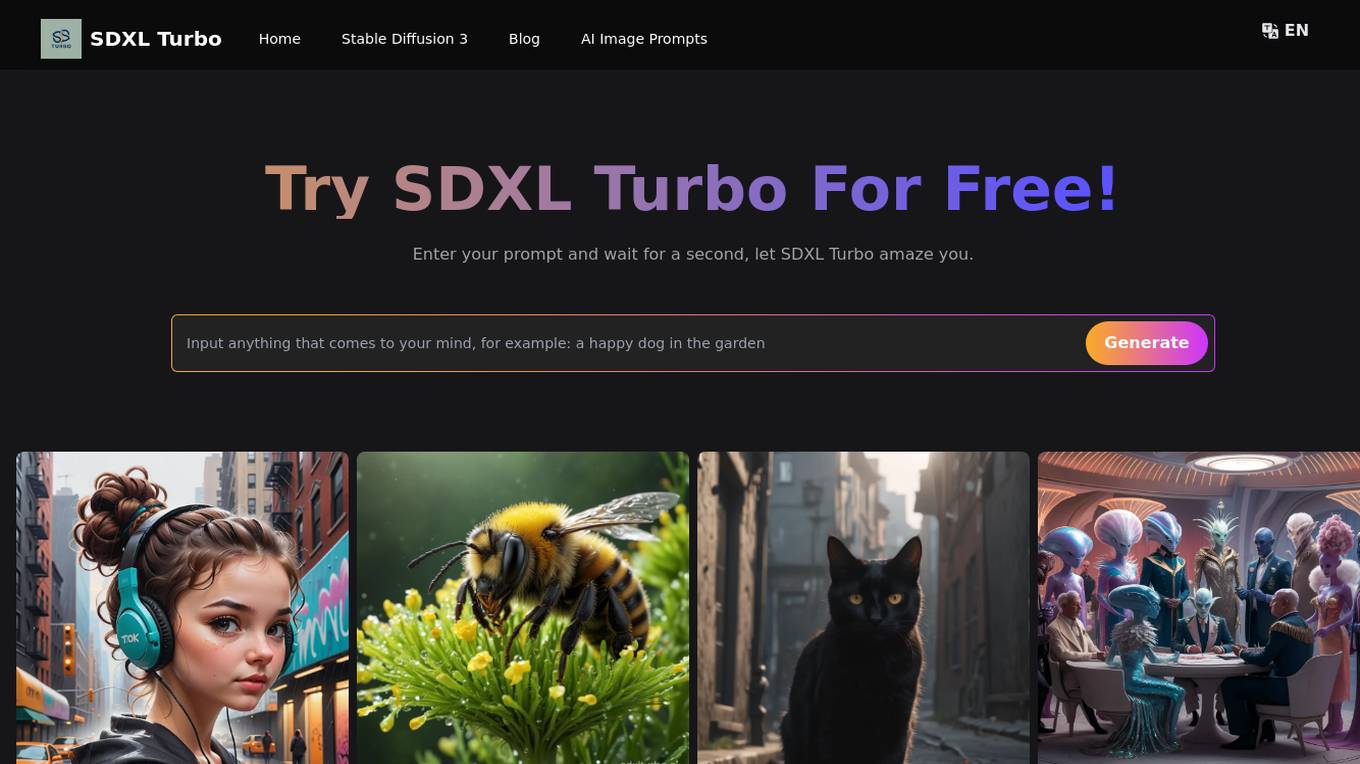

SDXL Turbo

SDXL Turbo is a cutting-edge text-to-image generation model that leverages Adversarial Diffusion Distillation (ADD) technology for high-quality, real-time image synthesis. Developed by Stability AI, SDXL Turbo is a distilled version of the SDXL 1.0 model, specifically trained for real-time synthesis. It excels in generating photorealistic images from text prompts in a single network evaluation, making it ideal for applications demanding speed and efficiency, such as video games, virtual reality, and instant content creation. SDXL Turbo is accessible to both professionals and hobbyists alike, with simple setup requirements and an intuitive interface. It presents unparalleled opportunities for research and development in advanced AI and image synthesis.

RagaAI Catalyst

RagaAI Catalyst is a sophisticated AI observability, monitoring, and evaluation platform designed to help users observe, evaluate, and debug AI agents at all stages of Agentic AI workflows. It offers features like visualizing trace data, instrumenting and monitoring tools and agents, enhancing AI performance, agentic testing, comprehensive trace logging, evaluation for each step of the agent, enterprise-grade experiment management, secure and reliable LLM outputs, finetuning with human feedback integration, defining custom evaluation logic, generating synthetic data, and optimizing LLM testing with speed and precision. The platform is trusted by AI leaders globally and provides a comprehensive suite of tools for AI developers and enterprises.

LEVI AI Recruiting Software

LEVI AI Recruiting Software is a modern recruitment automation platform powered by artificial intelligence. It revolutionizes the candidate evaluation and selection process by using advanced AI recruitment tools. LEVI assists in making data-driven hiring decisions, matches candidates to job requirements, conducts independent interviews, generates comprehensive reports, integrates with hiring systems, and enables informed and efficient hiring decisions. The application unlocks the full potential of machine learning models, eliminates bias in the hiring process, and automates candidate screening. LEVI's AI-powered recruitment tools change how candidate evaluations are performed through automated resume screening, candidate sourcing, and AI interview assessments.

1 - Open Source AI Tools

giskard

Giskard is an open-source Python library that automatically detects performance, bias & security issues in AI applications. The library covers LLM-based applications such as RAG agents, all the way to traditional ML models for tabular data.

20 - OpenAI Gpts

Project Post-Project Evaluation Advisor

Optimizes project outcomes through comprehensive post-project evaluations.

Content Evaluator

Analyzes and rates your writing using insights derived from studying LinkedIn influencers' top performing posts from the last 4 years.

API Evaluator Pro

Examines and evaluates public API documentation and offers detailed guidance for improvements, including AI usability

Financial Sentiment Analyst

A sentiment analysis tool for evaluating management-related texts.

GPT Searcher

Specializes in web searches for chat.openai.com using specific query format.

Angular Architect AI: Generate Angular Components

Generates Angular components based on requirements, with a focus on code-first responses.

🖌️ Line to Image: Generate The Evolved Prompt!

Transforms lines into detailed prompts for visual storytelling.