Best AI tools for deploying on edge devices

20 - AI Tool Sites

Qualcomm AI Hub

Qualcomm AI Hub is a platform for running AI models on Snapdragon® 8 Elite devices. It provides a collaborative ecosystem in which model makers, cloud providers, runtime partners, and SDK partners can deploy on-device AI solutions quickly. Users can bring their own models, optimize them for deployment, and access a variety of AI services and resources. The platform serves industries such as mobile, automotive, and IoT, offering a range of models and services for edge computing.

Picovoice

Picovoice is an on-device Voice AI and local LLM platform for enterprises. Its voice AI and LLM products include speech-to-text, noise suppression, speaker recognition, speech-to-index, and wake word detection, among others. Developers use Picovoice to build virtual assistants and other AI-powered products with compliance, reliability, and scalability in mind. Because data is processed locally rather than on third-party remote servers, enterprises keep control over data privacy and security. Picovoice positions this as a way to adapt quickly to changing customer needs.

Captur

Captur is an AI-powered platform for automating manual image review workflows through easy-to-use APIs. It gives end users real-time guidance, provides AI models for various image checks, and helps enterprises transform their operations. Captur's edge AI platform lets developers, product owners, and operations teams build, test, deploy, and iterate on AI models. Because the models run on-device, results arrive in real time and are not affected by network lag or weak signals. Captur positions itself as a complete solution for bringing edge AI into real-world operations, with features such as live guidance, quick scanning, confidence-building, and privacy verification.

Luxonis

Luxonis offers Visual AI solutions engineered for precise edge inference. Its stereo depth cameras let users run advanced vision tasks on-device, reducing latency and bandwidth demands. With the open-source DepthAI API, users can create and deploy custom vision solutions that scale with their needs. Luxonis also offers real-world training data for self-improving vision intelligence, and its hardware is designed to keep operating through vibration, temperature shifts, and extended use. The hardware integrates advanced sensing options, including cameras of up to 48 MP, a wide field of view, IMUs, microphones, ToF, thermal imaging, IR illumination, and active stereo.

Spot AI

Spot AI is an AI camera system and video surveillance platform that offers the AI Security Guard and AI Operations Assistant services. It aims to add security coverage without adding payroll, scale operators' performance, and deploy AI agents that standardize operations, coach teams, and take real-time actions around the clock. Use cases include live security deterrence, manufacturing optimization, and spotting downtime, hazards, and hidden costs. Spot AI turns critical moments captured on video into context-rich assessments, triggers automatic actions, and lets users analyze, dissect, grade, and share videos in seconds. Its offering includes premium IP cameras, a cloud dashboard for managing locations, and an intelligent video recorder with on-edge AI processing. The platform is trusted across industries to improve safety, efficiency, productivity, and security operations.

fsck.ai

fsck.ai is an AI-powered software creation kit that helps developers ship high-quality software faster. Its AI tools speed up code reviews and flag potential problems in code. It is similar to Copilot, but fully open-source, and can run locally or on a remote machine. Users can sign up for early access.

SiMa.ai

SiMa.ai offers high-performance, power-efficient, and scalable edge machine learning solutions for sectors such as automotive, industrial, healthcare, drones, and government. Its offerings include MLSoC™ boards, DevKit 2.0, Palette Software 1.2, and Edgematic™, which developers use to accelerate complete applications and deploy AI-enabled solutions. SiMa.ai's Machine Learning System on Chip (MLSoC) supports full-pipeline implementations of real-world ML solutions, positioning it as a platform for edge AI development.

Sarvam AI

Sarvam AI is an AI company focused on transformative research to develop, deploy, and distribute Generative AI applications in India. It aims to build efficient large language models for India's linguistic diversity and to enable new GenAI applications through bespoke enterprise models. Sarvam AI is also developing an enterprise-grade platform for building and evaluating GenAI apps, and contributes open-source models and datasets to accelerate AI innovation.

ThirdAI

ThirdAI is a production-ready platform for building and deploying AI applications quickly. Its AI/GenAI technology can run on any infrastructure, lowering the barriers to delivering production-grade AI solutions. With features such as enterprise SSO, built-in models, and a no-code interface, ThirdAI lets users create AI applications without specialized GPU servers or AI expertise. The platform covers the end-to-end workflow of building AI applications, allowing easy customization and deployment in various environments.

Wallaroo.AI

Wallaroo.AI is an AI inference platform offering production-grade inference microservices, optimized with OpenVINO, for cloud and edge AI deployments on CPUs and GPUs. It aims to provide ultrafast, turnkey inference for any model on any hardware, anywhere. The platform lets users deploy, manage, observe, and scale AI models, with the goal of reducing deployment costs and time-to-value.

Airship AI

Airship AI is an AI-driven surveillance platform for managing video, sensor, and other data. Customers rely on its services for real-time, actionable intelligence gathered from a wide range of deployed sensors, using edge and cloud-based analytics. These capabilities improve public safety and operational efficiency for both public-sector and commercial clients. Founded in 2006, Airship AI is U.S. owned and operated and headquartered in Redmond, Washington. Its product suite consists of three core offerings: Acropolis, the enterprise software stack; Command, the family of viewing clients; and Outpost, the edge hardware and software AI offerings.

Axelera AI

Axelera AI builds AI accelerators and AI processing units that aim to combine datacenter-level performance with edge efficiency. Its hardware and software solutions accelerate inference at the edge, bringing data insights and analytics to industries such as industrial manufacturing, retail, security, healthcare, smart cities, robotics, and agriculture, with a focus on computer vision. Axelera AI aims to boost the performance of AI applications with cost-effective, efficient inference chips that integrate into customers' products.

Synthreo

Synthreo is a multi-tenant AI automation platform built for Managed Service Providers (MSPs), helping businesses streamline operations, reduce costs, and grow through intelligent AI agents. Its agents automate routine tasks, support decision-making, and enable collaboration between human teams and digital labor, with the aim of improving operational efficiency and strategic growth for businesses of all sizes.

AI Superior

AI Superior is a Germany-based AI services company focused on end-to-end AI application development and AI consulting. It designs and builds web and mobile apps, as well as custom software products that rely on complex machine learning and AI models and algorithms. Its team includes Ph.D.-level data scientists and software engineers.

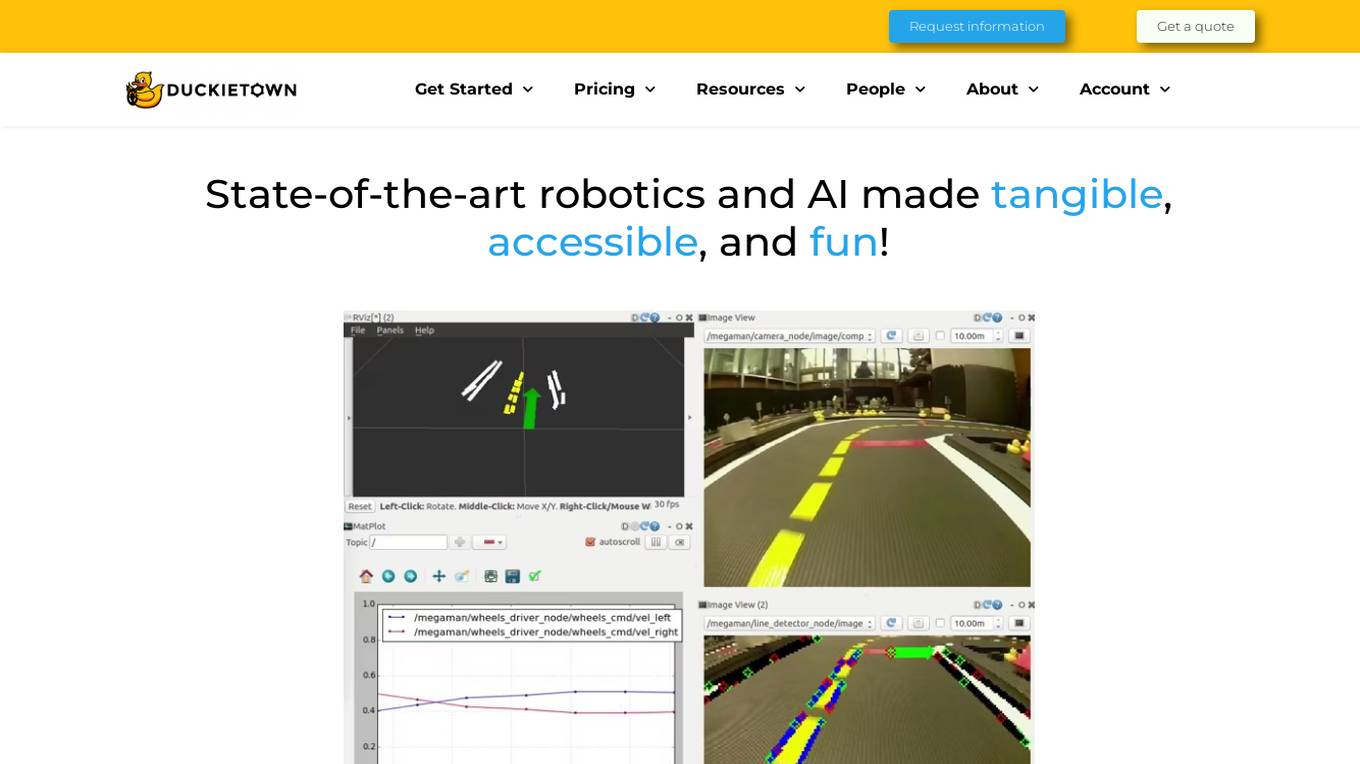

Duckietown

Duckietown is a platform for delivering cutting-edge robotics and AI learning experiences. It offers teaching resources to instructors, hands-on activities to learners, an accessible research platform to researchers, and a state-of-the-art ecosystem for professional training. Duckietown's mission is to make robotics and AI education state-of-the-art, hands-on, and accessible to all.

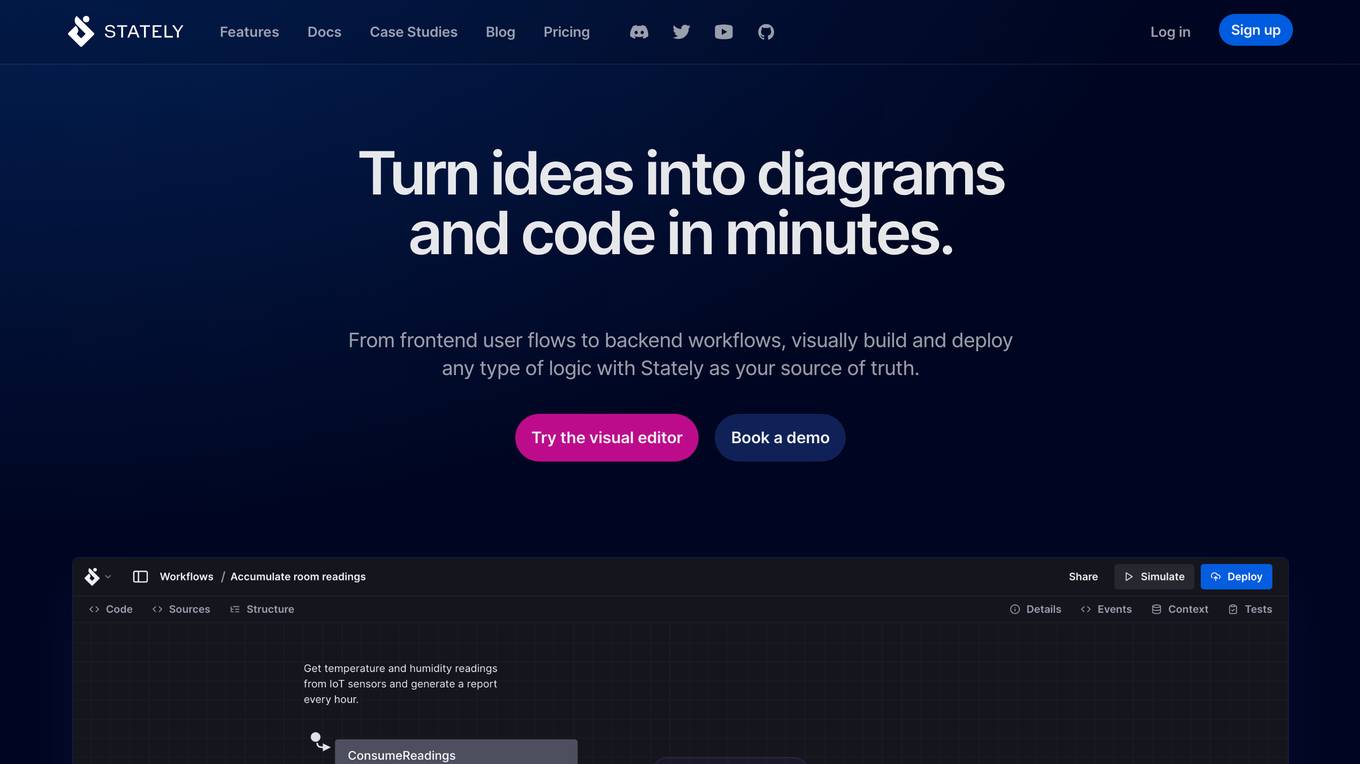

Stately

Stately is a visual logic builder for creating complex logic diagrams and code in minutes. Its drag-and-drop editor brings contributors of all backgrounds together to collaborate on code, diagrams, documentation, and test generation in one place. Stately also uses AI to assist in each phase of development, from scaffolding behavior and suggesting variants to surfacing edge cases and writing code. Updates flow in both directions between code and visualization, so users can work in whichever tool makes them most productive. Stately integrates with popular frameworks such as React, Vue, and Svelte, and supports event-driven programming, state machines, statecharts, and the actor model, keeping even complex logic predictable, robust, and visual.

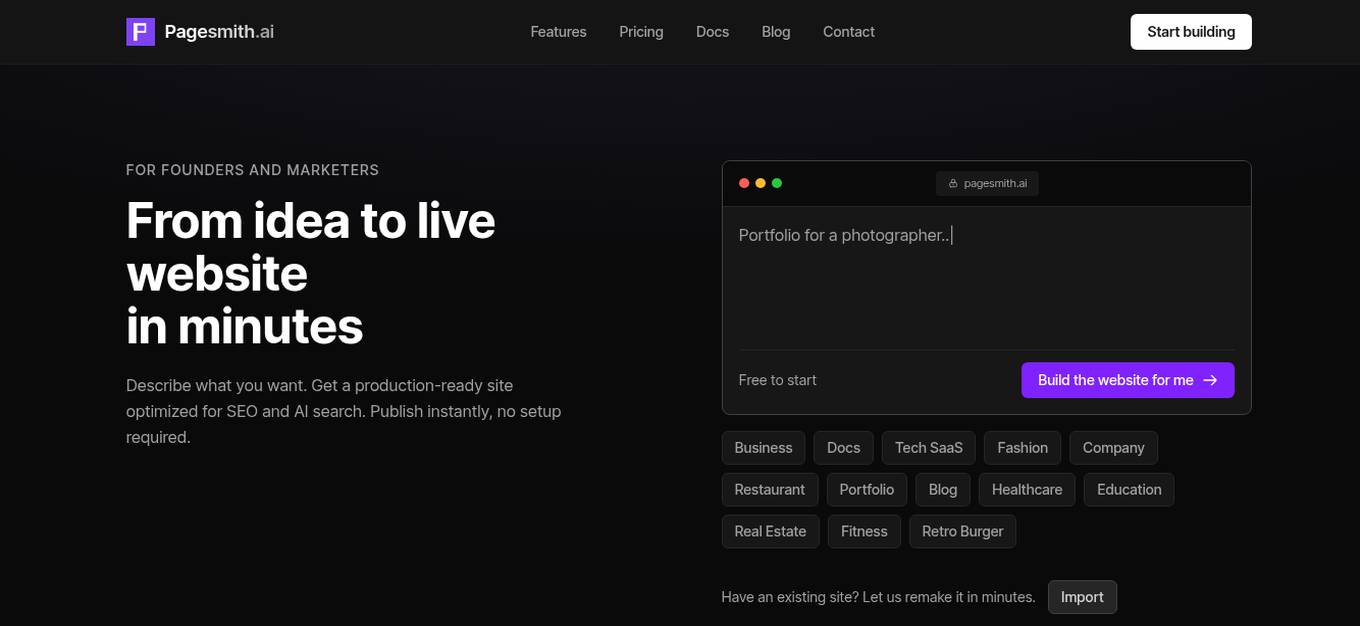

Pagesmith

Pagesmith is an AI website builder focused on creating websites optimized for the AI search era. Unlike most AI website builders, which produce visually appealing sites that are invisible to search engines, Pagesmith generates real HTML pages that search engines such as Google, as well as AI tools, can easily index. Features include global edge hosting, an MDX content editor, a built-in database, server-side rendering, and an AI editor for natural-language editing. Pagesmith lets users build production-ready websites with proper SEO and code ownership.

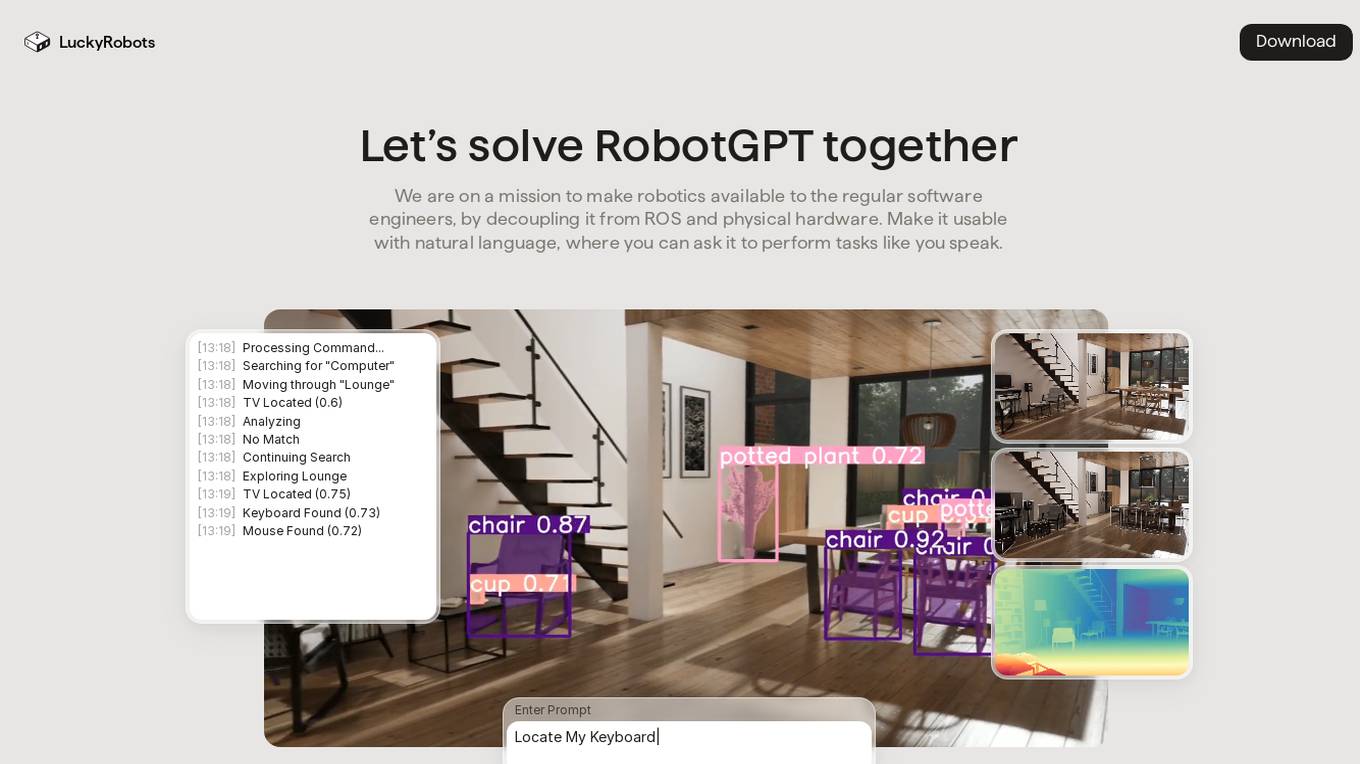

LuckyRobots

LuckyRobots is an AI tool that aims to make robotics accessible to software engineers through a simulation platform for deploying end-to-end AI models. Users can command robots in natural language, explore virtual environments, test robot models in realistic scenarios, and receive camera feeds for monitoring. The goal is to train AI models in realistic simulations so that they respond to natural-language input, offering a user-friendly approach to robotics development.

Nebius AI

Nebius AI is an AI-centric cloud platform designed to handle intensive workloads efficiently. It offers advanced features for a range of AI applications and projects, with an emphasis on high performance and security.

AI Safety Initiative

The AI Safety Initiative is a coalition of experts that develops AI guidance and tools to help organizations deploy safe, responsible, and compliant AI solutions. Drawing on vendor-neutral research, training programs, and global industry experts, it publishes AI best practices and tools, and offers certifications, training, and resources for navigating AI governance, compliance, and security. Its focus areas are AI technology, risk, governance, compliance, controls, and organizational responsibilities.

2 - Open Source AI Tools

Awesome-LLM-Quantization

Awesome-LLM-Quantization is a curated list of resources related to quantization techniques for Large Language Models (LLMs). Quantization is a crucial step in deploying LLMs on resource-constrained devices, such as mobile phones or edge devices, by reducing the model's size and computational requirements.
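
To illustrate where the size reduction comes from, here is a minimal sketch of symmetric per-tensor int8 quantization in plain Python: every float weight is divided by a single scale derived from the largest absolute weight, rounded, and stored as an integer in [-127, 127]. The function names `quantize_int8` and `dequantize_int8` are hypothetical and not taken from any resource in the list; real LLM quantization schemes typically use finer-grained (per-channel or per-group) scales, calibration data, and packed low-bit formats.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Hypothetical helper: map float weights to int8 values with one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # The largest-magnitude weight maps to +/-127; guard against an all-zero tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer and clamp to the symmetric int8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: List[int], scale: float) -> List[float]:
    """Hypothetical helper: recover approximate float weights from int8 values."""
    return [v * scale for v in q]


if __name__ == "__main__":
    weights = [0.52, -1.27, 0.003, 0.9, -0.41]
    q, scale = quantize_int8(weights)
    restored = dequantize_int8(q, scale)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print("int8 values:", q)
    print("scale:", scale)
    print("max abs error:", max_err)
```

Storing each weight as one byte instead of a four-byte float32 cuts weight storage roughly fourfold, at the cost of a rounding error of at most half the scale per weight.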

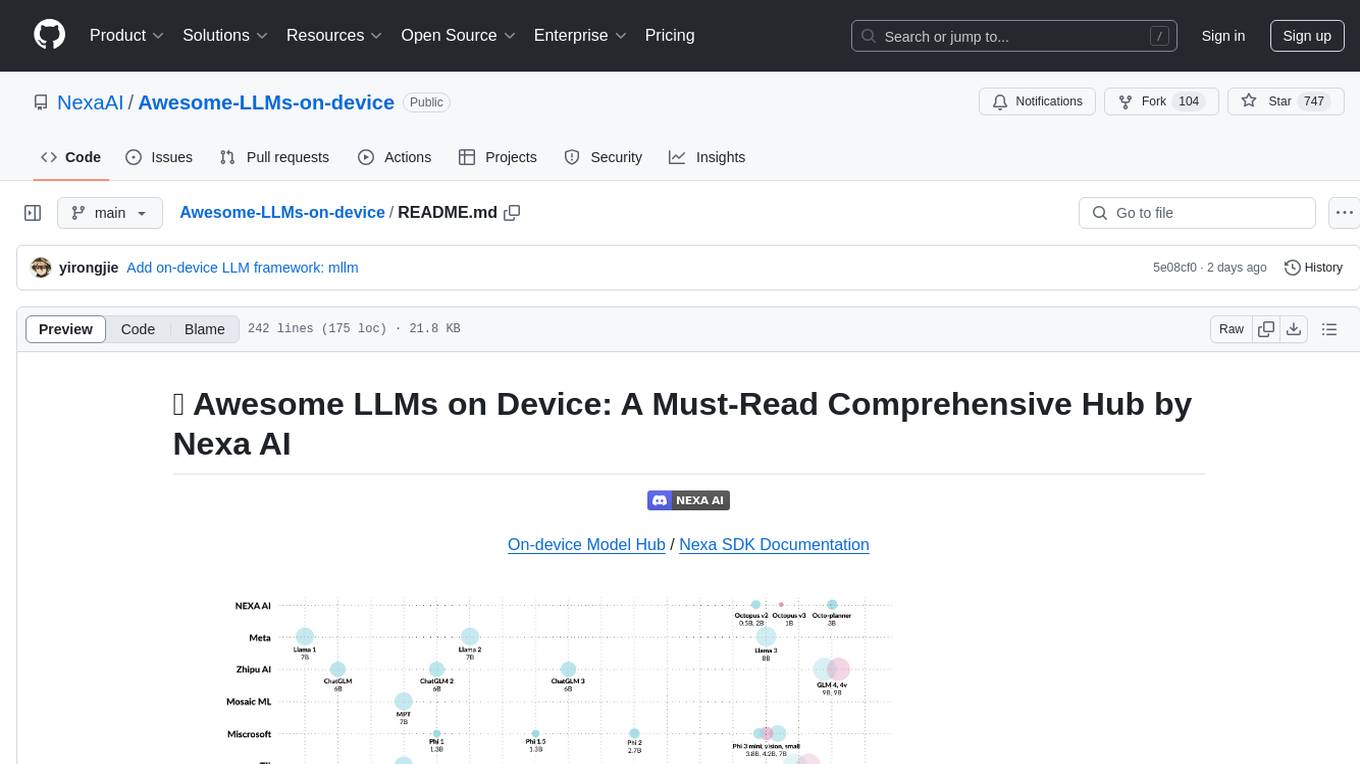

Awesome-LLMs-on-device

Awesome-LLMs-on-device is a repository that collects resources on Large Language Models (LLMs) designed for on-device deployment. It is aimed at researchers, developers, and learners who want to understand, use, or contribute to on-device LLMs.

20 - OpenAI GPTs

Rust on ESP32 Expert

Expert in Rust coding for ESP32, offering detailed programming and deployment guidance.

React on Rails Pro

Expert in Rails and React, focused on high-quality software development.

Azure Arc Expert

Azure Arc expert providing guidance on architecture, deployment, and management.

XRPL GPT

Build on the XRP Ledger with assistance from this GPT trained on extensive documentation and code samples.

Javascript Cloud services coding assistant

Expert on Google Cloud services with JavaScript.

Apple CoreML Complete Code Expert

A detailed expert trained on all 3,018 pages of Apple's CoreML documentation, offering complete coding solutions.

Auto Custom Actions GPT

This GPT helps with a single task: generating valid OpenAI schemas for Custom Actions in GPTs.