Best AI tools for < Deploy On Cloud >

20 - AI tool Sites

Leapwork

Leapwork is an AI-powered test automation platform that enables users to build, manage, maintain, and analyze complex data-driven testing across various applications, including AI apps. It offers a democratized testing approach with an intuitive visual interface, composable architecture, and generative AI capabilities. Leapwork supports testing of diverse application types, including web, mobile, and desktop applications as well as APIs. It allows for scalable testing with reusable test flows that adapt to changes in the application under test. Leapwork can be deployed in the cloud or on-premises, giving users full control.

Cirrascale Cloud Services

Cirrascale Cloud Services is an AI tool that offers cloud solutions for Artificial Intelligence applications. The platform provides a range of cloud services and products tailored for AI innovation, including NVIDIA GPU Cloud, AMD Instinct Series Cloud, Qualcomm Cloud, Graphcore, Cerebras, and SambaNova. Cirrascale's AI Innovation Cloud enables users to test and deploy on leading AI accelerators in one cloud, democratizing AI by delivering high-performance AI compute and scalable deep learning solutions. The platform also offers professional and managed services, tailored multi-GPU server options, and high-throughput storage and networking solutions to accelerate development, training, and inference workloads.

Hanabi.rest

Hanabi.rest is an AI-based API building platform that allows users to create REST APIs from natural language and screenshots using AI technology. Users can deploy the APIs on Cloudflare Workers and roll them out globally. The platform offers a live editor for testing database access and API endpoints, generates code compatible with various runtimes, and provides features like sharing APIs via URL, npm package integration, and CLI dump functionality. Hanabi.rest simplifies API design and deployment by leveraging natural language processing, image recognition, and v0.dev components.

LambdaTest

LambdaTest is a next-generation cloud platform for mobile app and cross-browser testing that offers a wide range of testing services. It allows users to perform manual live-interactive cross-browser testing, run Selenium, Cypress, and Playwright scripts on cloud-based infrastructure, and execute AI-powered automation testing. The platform also provides accessibility testing, a real device cloud, a visual regression cloud, and AI-powered test analytics. LambdaTest is trusted by over 2 million users globally and offers a unified digital experience testing cloud to accelerate go-to-market strategies.

Lamini

Lamini is an enterprise-level LLM platform that offers precise recall with Memory Tuning, enabling teams to achieve over 95% accuracy even with large amounts of specific data. It guarantees JSON output and delivers massive throughput for inference. Lamini is designed to be deployed anywhere, including air-gapped environments, and supports training and inference on Nvidia or AMD GPUs. The platform is known for its factual LLMs and reengineered decoder that ensures 100% schema accuracy in the JSON output.
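To make the idea of schema accuracy concrete, here is a minimal sketch of checking that a model's JSON output matches an expected schema. It does not use Lamini's API or describe how Lamini enforces its guarantee; `SCHEMA` and `conforms` are hypothetical names chosen for illustration, and only the Python standard library is used.

```python
import json

# Hypothetical schema for illustration: field name -> required Python type.
SCHEMA = {"name": str, "price": float, "in_stock": bool}


def conforms(raw: str, schema: dict) -> bool:
    """Return True if raw is a JSON object with exactly the schema's fields and types."""
    try:
        obj = json.loads(raw)
    except json.JSONDecodeError:
        return False
    # The output must be an object whose keys match the schema exactly.
    if not isinstance(obj, dict) or set(obj) != set(schema):
        return False
    # Strict type check: an integer such as 9 is not accepted where float is required.
    return all(type(obj[key]) is expected for key, expected in schema.items())


if __name__ == "__main__":
    print(conforms('{"name": "widget", "price": 9.5, "in_stock": true}', SCHEMA))
    print(conforms('{"name": "widget", "price": "cheap"}', SCHEMA))
```

A check like this runs after generation and can only reject bad output; a decoder that constrains generation, as the description above suggests, would aim to prevent such output from being produced in the first place.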

Qualcomm AI Hub

Qualcomm AI Hub is a platform that allows users to run AI models on Snapdragon® 8 Elite devices. It provides a collaborative ecosystem for model makers, cloud providers, runtime, and SDK partners to deploy on-device AI solutions quickly and efficiently. Users can bring their own models, optimize for deployment, and access a variety of AI services and resources. The platform caters to various industries such as mobile, automotive, and IoT, offering a range of models and services for edge computing.

Gretel.ai

Gretel.ai is a synthetic data platform purpose-built for AI applications. It allows users to generate synthetic datasets with the same characteristics as real data, enabling the improvement of AI models without compromising privacy. The platform offers APIs for generating anonymized and safe synthetic data, training generative AI models, and validating models with quality and privacy scores. Users can deploy Gretel for enterprise use cases and run it on various cloud platforms or in their own environment.

VeroCloud

VeroCloud is a platform offering tailored solutions for AI, HPC, and scalable growth. It provides cost-effective cloud solutions with guaranteed uptime, performance efficiency, and cost-saving models. Users can deploy HPC workloads seamlessly, configure environments as needed, and access optimized environments for GPU Cloud, HPC Compute, and Tally on Cloud. VeroCloud supports globally distributed endpoints, public and private image repos, and deployment of containers on secure cloud. The platform also allows users to create and customize templates for seamless deployment across computing resources.

Hopsworks

Hopsworks is an AI platform that offers a comprehensive solution for building, deploying, and monitoring machine learning systems. It provides features such as a Feature Store, real-time ML capabilities, and generative AI solutions. Hopsworks enables users to develop and deploy reliable AI systems, orchestrate and monitor models, and personalize machine learning models with private data. The platform supports batch and real-time ML tasks, with the flexibility to deploy on-premises or in the cloud.

Lyzr AI

Lyzr AI is a full-stack agent framework designed to build GenAI applications faster. It offers a range of AI agents for various tasks such as chatbots, knowledge search, summarization, content generation, and data analysis. The platform provides features like memory management, human-in-loop interaction, toxicity control, reinforcement learning, and custom RAG prompts. Lyzr AI ensures data privacy by running data locally on cloud servers. Enterprises and developers can easily configure, deploy, and manage AI agents using Lyzr's platform.

Ardor

Ardor is an AI tool that offers an all-in agentic software development lifecycle automation platform. It helps users build, deploy, and scale AI agents on the cloud efficiently and cost-effectively. With Ardor, users can start with a prompt, design AI agents visually, see their product get built, refine and iterate, and launch in minutes. The platform provides real-time collaboration features, simple pricing plans, and various tools like Ardor Copilot, AI Agent-Builder Canvas, Instant Build Messages, AI Debugger, Proactive Monitoring, Role-Based Access Control, and Single Sign-On.

Google Cloud

Google Cloud is a suite of cloud computing services that runs on the same infrastructure that Google uses for its own products. Its services include computing, storage, networking, databases, machine learning, and more. Google Cloud is designed to make it easy for businesses to develop and deploy applications in the cloud, with tools and services that cover everything from building and deploying applications to managing infrastructure. Google Cloud is also committed to sustainability and has a number of programs in place to reduce its environmental impact.

Salad

Salad is a distributed GPU cloud platform that offers fully managed and massively scalable services for AI applications. It advertises the lowest-priced AI transcription on the market and supports workloads such as image generation, voice AI, computer vision, data collection, and batch processing. Salad democratizes cloud computing by leveraging consumer GPUs to deliver cost-effective AI/ML inference at scale. The platform is trusted by hundreds of machine learning and data science teams for its affordability, scalability, and ease of deployment.

Pulumi

Pulumi is an AI-powered infrastructure as code tool that allows engineers to manage cloud infrastructure using various programming languages like Node.js, Python, Go, .NET, Java, and YAML. It offers features such as generative AI-powered cloud management, security enforcement through policies, automated deployment workflows, asset management, compliance remediation, and AI insights over the cloud. Pulumi helps teams provision, automate, and evolve cloud infrastructure, centralize and secure secrets management, and gain security, compliance, and cost insights across all cloud assets.

IBM Cloud

IBM Cloud is a cloud computing service offered by IBM that provides a suite of infrastructure and platform services. It allows businesses to build, deploy, and manage applications on the cloud, enabling them to scale and innovate rapidly. With a wide range of services including AI, analytics, blockchain, and IoT, IBM Cloud caters to various industries and use cases, empowering organizations to leverage cutting-edge technologies to drive digital transformation.

Activepieces

Activepieces is an open-source no-code business automation tool that allows users to securely deploy automation for various departments such as marketing, sales, operations, HR, finance, and IT teams. It offers a customizable and self-hosted solution, enabling users to put their work on autopilot. Activepieces stands out for its user experience, ease of integration, and control over hosting. The tool leverages AI automation to streamline tasks like content strategy, security compliance, sales outreach, and customer support. With a community-driven approach, Activepieces aims to make the automation world more open and accessible.

Restack

Restack is a developer tool and cloud infrastructure platform that enables users to build, launch, and scale AI products quickly and efficiently. With Restack, developers can go from local development to production in seconds, leveraging a variety of languages and frameworks. The platform offers templates, repository connections, and Dockerfile customization for seamless deployment. Restack Cloud provides cost-efficient scaling and GitHub integration for instant deployment. The platform simplifies the complexity of building and scaling AI applications, allowing users to move from code to production faster than ever before.

Helix AI

Helix AI is a private GenAI platform that enables users to build AI applications using open source models. The platform offers tools for RAG (Retrieval-Augmented Generation) and fine-tuning, allowing deployment on-premises or in a Virtual Private Cloud (VPC). Users can access curated models, utilize Helix API tools to connect internal and external APIs, embed Helix Assistants into websites/apps for chatbot functionality, write AI application logic in natural language, and benefit from the innovative RAG system for Q&A generation. Additionally, users can fine-tune models for domain-specific needs and deploy securely on Kubernetes or Docker in any cloud environment. Helix Cloud offers free and premium tiers with GPU priority, catering to individuals, students, educators, and companies of varying sizes.

Qubinets

Qubinets is a cloud data environment platform that provides building blocks for big data, AI, web, and mobile environments. It is an open-source, secure, and private platform with no lock-in that can be used on any cloud, including AWS, DigitalOcean, Google Cloud, and Microsoft Azure. Qubinets makes it easy to plan, build, and run data environments, and it saves time and money by reducing the grunt work of setup and provisioning.

Tracecat

Tracecat is an open-source security automation platform that helps you automate security alerts, build AI-assisted workflows, orchestrate alerts, and close cases fast. It is a Tines / Splunk SOAR alternative that is built for builders and is free to experiment with. You can deploy Tracecat on your own infrastructure or use Tracecat Cloud with no maintenance overhead. Tracecat is Apache-2.0 licensed, and the project describes itself as open vision, open community, and open development, so users can have a say in the future of security automation. Tracecat is no-code first, but you can also customize it in Python without vendor lock-in. Its click-and-drag workflow builder lets you automate SecOps by combining pre-built actions (API calls, webhooks, data transforms, AI tasks, and more) into workflows, with no code required. Tracecat also has a built-in case management system that lets you open cases directly from workflows and track and manage security incidents in one platform.

7 - Open Source AI Tools

langflow

Langflow is an open-source Python-powered visual framework designed for building multi-agent and RAG applications. It is fully customizable, language model agnostic, and vector store agnostic. Users can easily create flows by dragging components onto the canvas, connect them, and export the flow as a JSON file. Langflow also provides a command-line interface (CLI) for easy management and configuration, allowing users to customize the behavior of Langflow for development or specialized deployment scenarios. The tool can be deployed on various platforms such as Google Cloud Platform, Railway, and Render. Contributors are welcome to enhance the project on GitHub by following the contributing guidelines.
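Because flows can be exported as JSON, they can be inspected with ordinary tooling. The following is a minimal sketch that reads a flow and lists which component feeds which. The layout used here (a top-level `data` object with `nodes` and `edges`, each edge carrying `source` and `target` ids) is an assumption for illustration and an actual Langflow export may differ; `EXPORTED` and `summarize_flow` are hypothetical names.

```python
import json

# Hypothetical exported flow; the real export layout is an assumption here.
EXPORTED = json.dumps({
    "name": "demo-flow",
    "data": {
        "nodes": [{"id": "prompt-1"}, {"id": "llm-1"}, {"id": "output-1"}],
        "edges": [
            {"source": "prompt-1", "target": "llm-1"},
            {"source": "llm-1", "target": "output-1"},
        ],
    },
})


def summarize_flow(raw: str) -> dict:
    """Map each node id to the ids of the nodes it feeds into."""
    data = json.loads(raw)["data"]
    graph = {node["id"]: [] for node in data["nodes"]}
    for edge in data["edges"]:
        graph[edge["source"]].append(edge["target"])
    return graph


if __name__ == "__main__":
    for source, targets in summarize_flow(EXPORTED).items():
        print(source, "->", targets)
```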

AI-Video-Boilerplate-Simple

AI-video-boilerplate-simple is a free Live AI Video boilerplate for testing out live video AI experiments. It includes a simple Flask server that serves files, supports live video from various sources, and integrates with Roboflow for AI vision. Users can use this template for projects, research, business ideas, and homework. It is lightweight and can be deployed on popular cloud platforms like Replit, Vercel, DigitalOcean, or Heroku.

aspire-ai-chat-demo

Aspire AI Chat is a full-stack chat sample that combines modern technologies to deliver a ChatGPT-like experience. The backend API is built with ASP.NET Core and interacts with an LLM using Microsoft.Extensions.AI. It uses Entity Framework Core with CosmosDB for flexible, cloud-based NoSQL storage. The AI capabilities include using Ollama for local inference and switching to Azure OpenAI in production. The frontend UI is built with React, offering a modern and interactive chat experience.
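The switch between local inference and a hosted model can be pictured as a small configuration decision. The sketch below is written in Python for illustration only and is not the sample's ASP.NET Core code; `ChatBackend`, `select_backend`, and the variable names `APP_ENV`, `AZURE_OPENAI_ENDPOINT`, and `OLLAMA_URL` are hypothetical. The only fact relied on is that Ollama listens on port 11434 by default.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChatBackend:
    provider: str
    endpoint: str


def select_backend(env: dict) -> ChatBackend:
    """Pick Ollama for local development and Azure OpenAI in production."""
    if env.get("APP_ENV", "development") == "production":
        # Hypothetical variable name; raises KeyError if the endpoint is not configured.
        return ChatBackend("azure-openai", env["AZURE_OPENAI_ENDPOINT"])
    # Fall back to Ollama's default local address when no override is given.
    return ChatBackend("ollama", env.get("OLLAMA_URL", "http://localhost:11434"))


if __name__ == "__main__":
    print(select_backend({}))
    print(select_backend({
        "APP_ENV": "production",
        "AZURE_OPENAI_ENDPOINT": "https://example.invalid",
    }))
```

Keeping the decision in one function means the rest of the application can depend on a single backend description, whichever provider is active.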

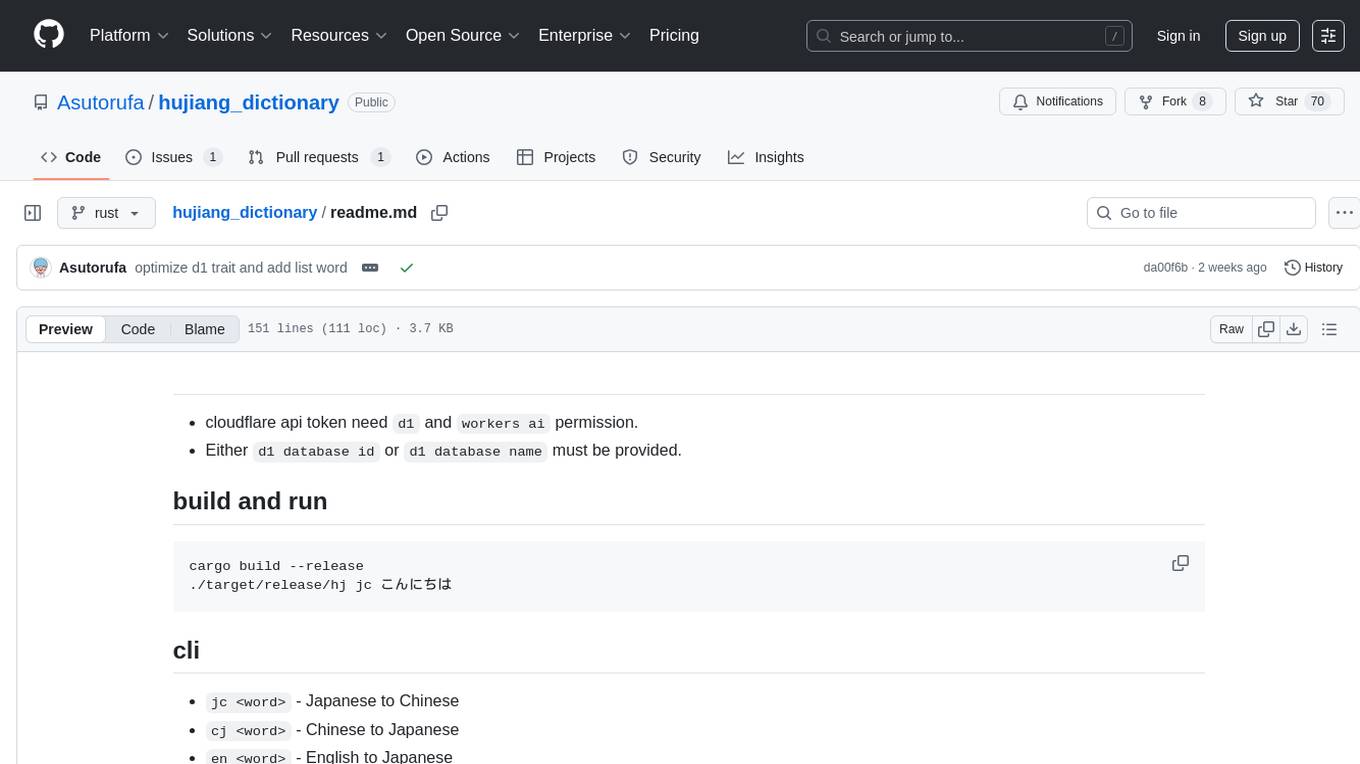

hujiang_dictionary

Hujiang Dictionary is a tool that provides translation services between Japanese, Chinese, and English. It supports various translation modes such as Japanese to Chinese, Chinese to Japanese, English to Japanese, and more. The tool utilizes cloud services like Telegram, Lambda, and Cloudflare Workers for different deployment options. Users can interact with the tool via a command-line interface (CLI) to perform translations and access online resources like weblio and Google Translate. Additionally, the tool offers a Telegram bot for users to access translation services conveniently. The tool also supports setting up and managing databases for storing translation data.

lm-engine

LM Engine is a research-grade, production-ready library for training large language models at scale. It provides support for multiple accelerators including NVIDIA GPUs, Google TPUs, and AWS Trainiums. Key features include multi-accelerator support, advanced distributed training, flexible model architectures, HuggingFace integration, training modes like pretraining and finetuning, custom kernels for high performance, experiment tracking, and efficient checkpointing.

OpenHands

OpenHands is a community focused on AI-driven development, offering a Software Agent SDK, CLI, Local GUI, Cloud deployment, and Enterprise solutions. The SDK is a Python library for defining and running agents, and the CLI provides an easy way to start using OpenHands. The Local GUI allows running agents on a laptop with a REST API, while the Cloud deployment offers hosted infrastructure with integrations. The Enterprise solution enables self-hosting via Kubernetes with extended support and access to the research team. OpenHands is available under the MIT license.

orla

Orla is a high performance agent execution engine that provides a simple unified API for developing and running agents. It can run as a service or standalone tool, orchestrating agentic workflows across multiple models, LLM backends, GPUs (or CPUs), and cloud instances. The tool sits above LLM backends including SGLang, Ollama, and vLLM.

20 - OpenAI Gpts

MS Learn GPT

Factual, clear guidance based on Microsoft Learn and GitHub Docs. Ask me about cloud native solutions, GitHub collaboration or certifications.

Javascript Cloud services coding assistant

Expert on Google Cloud services with JavaScript.

Azure Arc Expert

Azure Arc expert providing guidance on architecture, deployment, and management.

React on Rails Pro

Expert in Rails & React, focusing on high-standard software development.

Data Engineer Consultant

Guides in data engineering tasks with a focus on practical solutions.

Rust on ESP32 Expert

Expert in Rust coding for ESP32, offering detailed programming and deployment guidance.

XRPL GPT

Build on the XRP Ledger with assistance from this GPT trained on extensive documentation and code samples.

Apple CoreML Complete Code Expert

A detailed expert trained on all 3,018 pages of Apple CoreML, offering complete coding solutions. Saving time? https://www.buymeacoffee.com/parkerrex ☕️❤️