Best AI tools for Debug API

20 - AI tool Sites

GPT-Prompter

GPT-Prompter is a Chrome extension that lets users call the GPT-3, GPT-4, and ChatGPT APIs without navigating to the OpenAI website, incurring additional costs, or relying on intermediaries. The extension includes an assortment of pre-made, customizable prompts and conversations, as well as a playground-like interface that can be opened from any webpage, which saves users time and effort when working with GPT.

Chat Blackbox

Chat Blackbox is an AI tool that specializes in AI code generation, code chat, and code search. It provides a platform where users can interact with AI to generate code, discuss code-related topics, and search for specific code snippets. The tool leverages artificial intelligence algorithms to enhance the coding experience and streamline the development process. With Chat Blackbox, users can access a wide range of features to improve their coding skills and efficiency.

Testsigma

Testsigma is a cloud-based test automation platform that enables teams to create, execute, and maintain automated tests for web, mobile, and API applications. It offers a range of features including natural language processing (NLP)-based scripting, record-and-playback capabilities, data-driven testing, and AI-driven test maintenance. Testsigma integrates with popular CI/CD tools and provides a marketplace for add-ons and extensions. It is designed to simplify and accelerate the test automation process, making it accessible to testers of all skill levels.

Rainforest QA

Rainforest QA is an AI-powered test automation platform designed for SaaS startups to streamline and accelerate their testing processes. It offers AI-accelerated testing, no-code test automation, and expert QA services to help teams achieve reliable test coverage and faster release cycles. Rainforest QA's platform integrates with popular tools, provides detailed insights for easy debugging, and ensures visual-first testing for a seamless user experience. With a focus on automating end-to-end tests, Rainforest QA aims to eliminate QA bottlenecks and help teams ship bug-free code with confidence.

LangChain

LangChain is a framework for developing applications powered by large language models (LLMs). It simplifies every stage of the LLM application lifecycle, including development, productionization, and deployment. LangChain consists of open-source libraries such as langchain-core, langchain-community, and partner packages. It also includes LangGraph for building stateful agents and LangSmith for debugging and monitoring LLM applications.

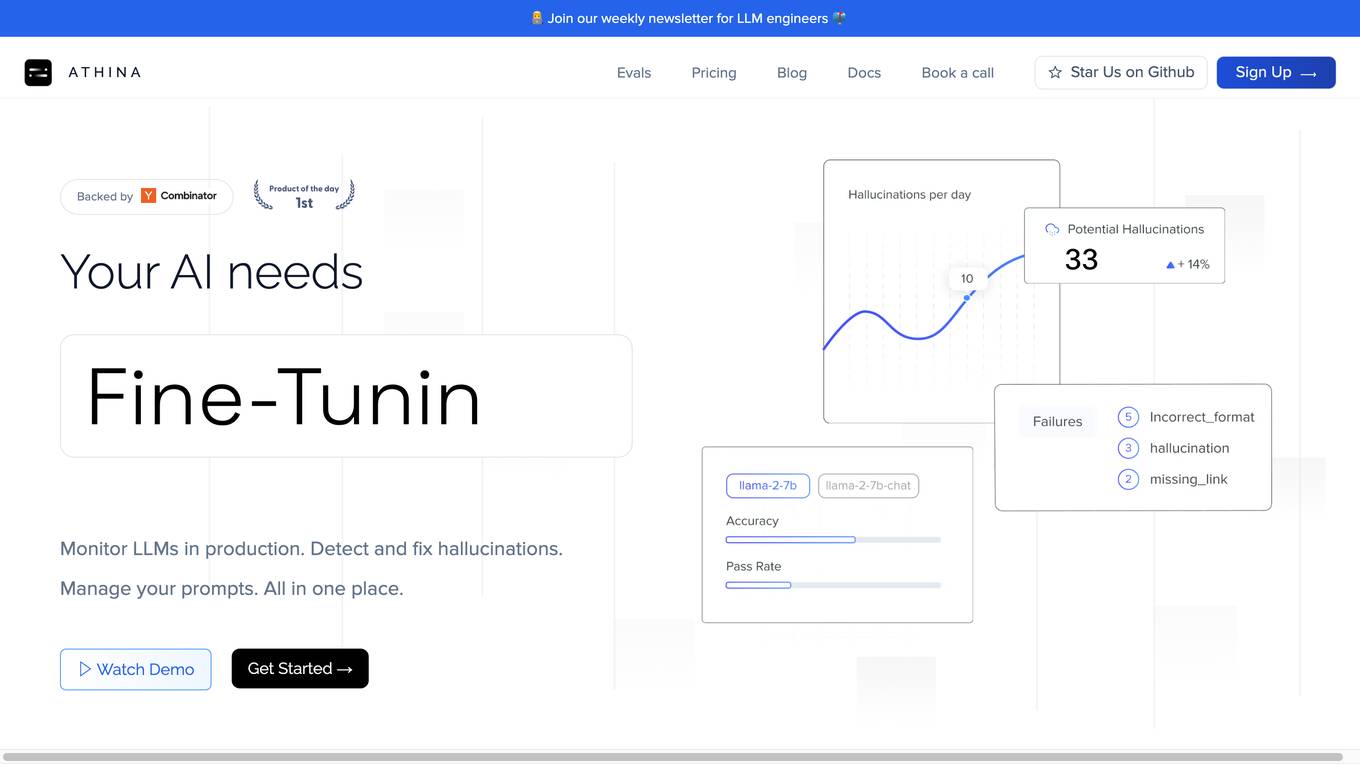

Athina AI

Athina AI is a comprehensive platform designed to monitor, debug, analyze, and improve the performance of Large Language Models (LLMs) in production environments. It provides a suite of tools and features that enable users to detect and fix hallucinations, evaluate output quality, analyze usage patterns, and optimize prompt management. Athina AI supports integration with various LLMs and offers a range of evaluation metrics, including context relevancy, harmfulness, summarization accuracy, and custom evaluations. It also provides a self-hosted solution for complete privacy and control, a GraphQL API for programmatic access to logs and evaluations, and support for multiple users and teams. Athina AI's mission is to empower organizations to harness the full potential of LLMs by ensuring their reliability, accuracy, and alignment with business objectives.

BoltAI

BoltAI is a ChatGPT app for Mac that integrates AI into your workflow, giving you access to ChatGPT directly within your favorite macOS apps. Its chat UI, AI commands, and inline AI capabilities are aimed at developers, content creators, students, and entrepreneurs who want AI assistance in their daily routine. BoltAI is customizable: you can create custom AI assistants, use a library of prompts, and tailor its features to your needs and preferences. On privacy and security, BoltAI operates locally on your device, with no data or prompts being stored or transmitted to external servers. Your OpenAI API key is stored in the Apple Keychain, and an automatic data detection feature redacts sensitive information. BoltAI receives regular updates and new features. It can help you write high-quality content, generate creative ideas, debug code, learn new concepts, and more.

Wordware

Wordware is an AI toolkit that empowers cross-functional teams to build reliable high-quality agents through rapid iteration. It combines the best aspects of software with the power of natural language, freeing users from traditional no-code tool constraints. With advanced technical capabilities, multiple LLM providers, one-click API deployment, and multimodal support, Wordware offers a seamless experience for AI app development and deployment.

Debug Sage

Debug Sage is a website designed to help users understand and troubleshoot errors in their software applications. The platform provides detailed insights into various types of errors, allowing users to identify and resolve issues efficiently. With a user-friendly interface, Debug Sage aims to streamline the debugging process for developers and software engineers. The website also offers resources and tools to enhance the overall debugging experience. By leveraging advanced technologies, Debug Sage empowers users to tackle complex errors with ease.

Whybug

Whybug is an AI tool designed to help developers debug their code by providing explanations for errors. By utilizing a large language model trained on data from StackExchange and other sources, Whybug can predict the causes of errors and suggest fixes. Users can simply paste an error message and receive detailed explanations on how to resolve the issue. The tool aims to streamline the debugging process and improve code quality.

New Relic

New Relic is an AI monitoring platform that offers an all-in-one observability solution for monitoring, debugging, and improving the entire technology stack. With over 30 capabilities and 750+ integrations, New Relic provides the power of AI to help users gain insights and optimize performance across various aspects of their infrastructure, applications, and digital experiences.

Langtrace AI

Langtrace AI is an open-source observability tool powered by Scale3 Labs that helps monitor, evaluate, and improve LLM (large language model) applications. It collects and analyzes traces and metrics to provide insights into the ML pipeline, and it is SOC 2 Type II certified. Langtrace supports popular LLMs, frameworks, and vector databases, offering end-to-end observability and the ability to build and deploy AI applications with confidence.

Rerun

Rerun is an SDK, time-series database, and visualizer for temporal and multimodal data. It is used in fields like robotics, spatial computing, 2D/3D simulation, and finance to verify, debug, and explain data. Rerun allows users to log data like tensors, point clouds, and text to create streams, visualize and interact with live and recorded streams, build layouts, customize visualizations, and extend data and UI functionalities. The application provides a composable data model, dynamic schemas, and custom views for enhanced data visualization and analysis.

Snaplet

Snaplet is a data management tool for developers that provides AI-generated dummy data for local development, end-to-end testing, and debugging. It uses a real programming language (TypeScript) to define and edit data, ensuring type safety and auto-completion. Snaplet understands database structures and relationships, automatically transforming personally identifiable information and seeding data accordingly. It integrates seamlessly into development workflows, providing data where it's needed most: on local machines, for CI/CD testing, and preview environments.

Langfuse

Langfuse is an AI tool that offers the Langfuse TypeScript SDK v4 for building and debugging LLM (large language model) applications. It provides features such as tracing, prompt management, evaluation, and metrics to enhance the performance of LLM applications. Langfuse is backed by a team of experts and offers integrations with various platforms and SDKs. The tool aims to simplify the development process of complex LLM applications and improve overall efficiency.

SourceAI

SourceAI is an AI-powered code generator that allows users to generate code in any programming language. It is easy to use, even for non-developers, and has a clear and intuitive interface. SourceAI is powered by GPT-3 and Codex. It can be used to generate code for a variety of tasks, including calculating the factorial of a number, finding the roots of a polynomial, and translating text from one language to another.

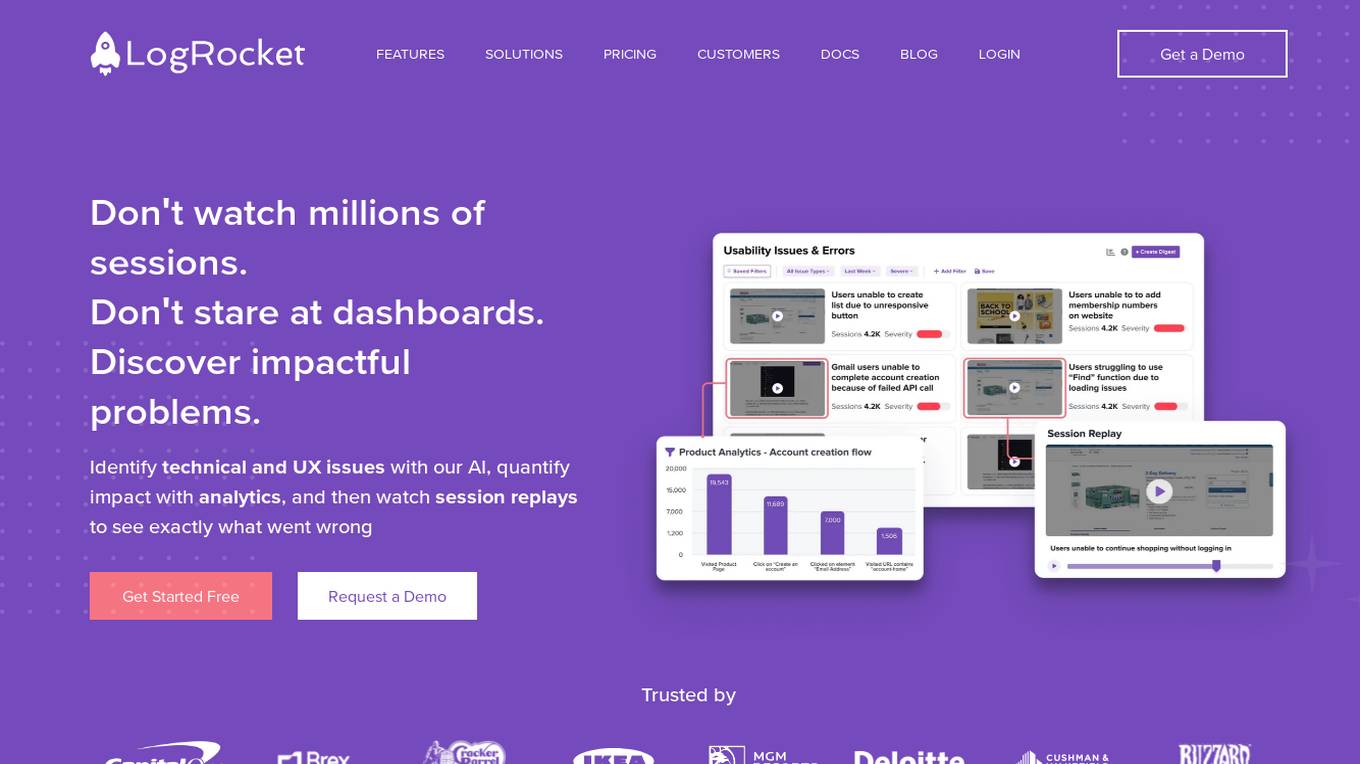

LogRocket

LogRocket is a session replay, product analytics, and issue detection platform that helps software teams deliver the best web and mobile experiences. With LogRocket, you can see exactly what users experienced on your app, as well as DOM playback, console and network logs, errors, and performance data. You can also surface the most impactful user issues with JavaScript errors, network errors, stack traces, automatic triaging, and alerting. LogRocket also provides product analytics to help you understand how users are interacting with your app, and UX analytics to help you visualize how users experience your app at both the individual and aggregate level.

CodeMate

CodeMate is an AI pair programmer tool designed to help developers write error-free code faster. It offers features like code navigation, understanding complex codebases, intuitive interface for smarter coding, instant debugging, code refactoring, and AI-powered code reviews. CodeMate supports all programming languages and provides suggestions for code optimizations. The tool ensures the security and privacy of user code and offers different pricing plans for individual developers, teams, and enterprises. Users can interact with their codebase, documentation, and Git repositories using CodeMate Chat. The tool aims to improve code quality and productivity by acting as a co-developer while programming.

RagaAI Catalyst

RagaAI Catalyst is a sophisticated AI observability, monitoring, and evaluation platform designed to help users observe, evaluate, and debug AI agents at all stages of Agentic AI workflows. It offers features like visualizing trace data, instrumenting and monitoring tools and agents, enhancing AI performance, agentic testing, comprehensive trace logging, evaluation for each step of the agent, enterprise-grade experiment management, secure and reliable LLM outputs, finetuning with human feedback integration, defining custom evaluation logic, generating synthetic data, and optimizing LLM testing with speed and precision. The platform is trusted by AI leaders globally and provides a comprehensive suite of tools for AI developers and enterprises.

Langtail

Langtail is a platform that helps developers build, test, and deploy AI-powered applications. It provides a suite of tools to help developers debug prompts, run tests, and monitor the performance of their AI models. Langtail also offers a community forum where developers can share tips and tricks, and get help from other users.

1 - Open Source AI Tools

ChatTTS-Forge

ChatTTS-Forge is a powerful text-to-speech generation tool that supports generating rich, long-form audio from text using an SSML-like syntax and provides comprehensive API services, suitable for various scenarios. It offers features such as batch generation, support for generating super long texts, style prompt injection, full API services, user-friendly debugging GUI, OpenAI-style API, Google-style API, support for SSML-like syntax, speaker management, style management, independent refine API, text normalization optimized for ChatTTS, and automatic detection and processing of markdown format text. The tool can be experienced and deployed online through HuggingFace Spaces, launched with one click on Colab, deployed using containers, or locally deployed after cloning the project, preparing models, and installing necessary dependencies.

20 - OpenAI GPTs

! KAI - Assistant Laravel API

KAI, your friendly and helpful assistant dedicated to developing an API in Laravel. ALL LANGUAGES

API Insights Guide

Your real-time guide to the OpenAI API, directly referencing official documentation.

Chrome Extension Dev V3

Enhance Chrome extension development: Get expert AI assistance in building great Chrome Extensions. Expert in JavaScript, HTML, CSS, and API integration. Streamline your coding and debugging. Helps you transition from Manifest V2 to Manifest V3.

OpenAPI Schema Builder

Assists with OpenAPI Schemas by providing JSON Schema format examples, debugging tips, and best practices.

![[latest] FastAPI GPT Screenshot](/screenshots_gpts/g-BhYCAfVXk.jpg)

[latest] FastAPI GPT

Up-to-date FastAPI coding assistant with knowledge of the latest version. Part of the [latest] GPTs family.

JAVA开发工程师 (Java Development Engineer)

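Proficient in all Java knowledge and frameworks, especially Spring Boot and Spring Cloud backend API development, as well as complete end-to-end CRUD code covering entity classes and MySQL. Solves any Java error and has the capabilities of a senior Java engineer.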

TypeScript Engineer

An expert TypeScript engineer to help you solve and debug problems together.