Best AI tools for Compare Results

20 - AI tool Sites

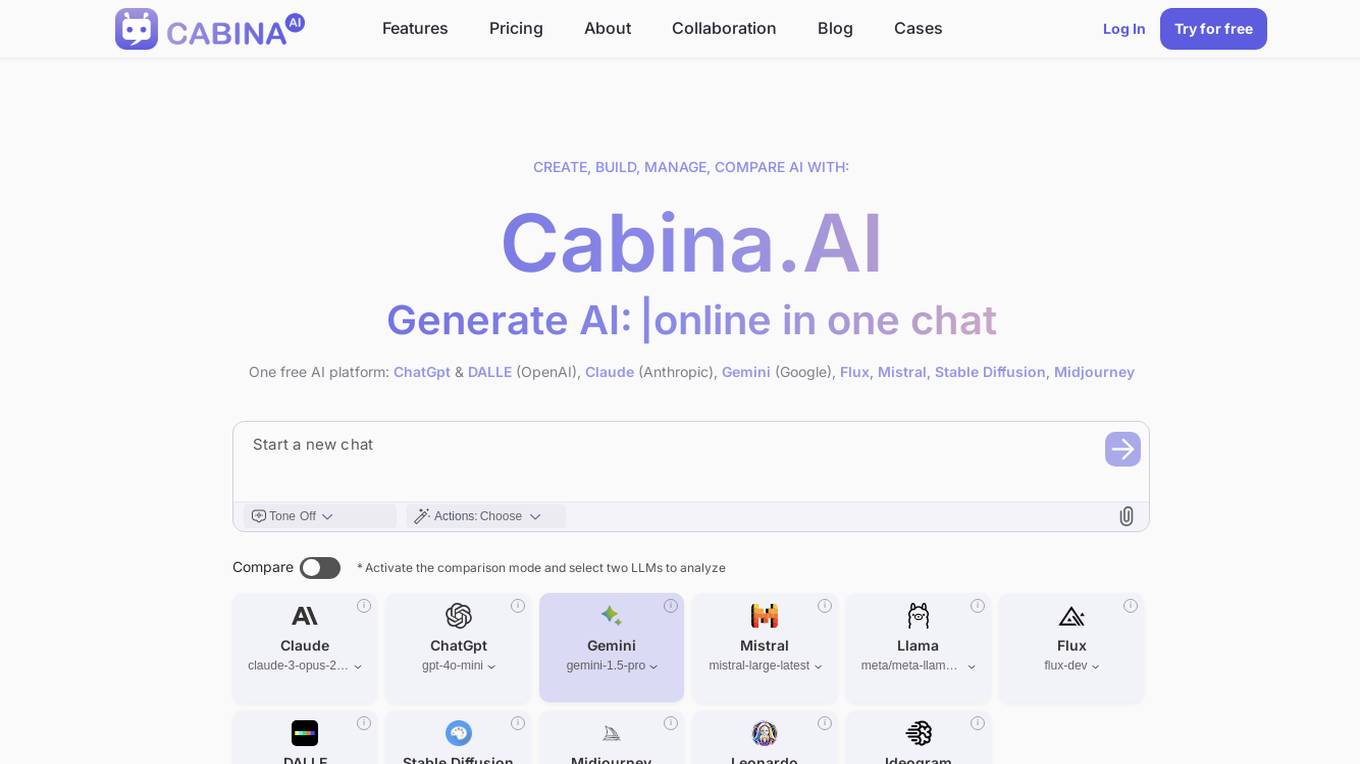

Cabina.AI

Cabina.AI is a free AI platform that allows users to generate content, text, and images online through a single chat interface. It offers a range of AI models such as ChatGPT, DALL-E, Claude, Gemini, Flux, Mistral, and more for tasks like content creation, research, and real-time task solving. Users can access different LLMs, compare results, and find the best solutions faster. Cabina.AI also provides personalized actions, organization of chats, and the ability to track various data points. With flexible pricing plans and a friendly community, Cabina.AI aims to be a universal tool for research and content creation.

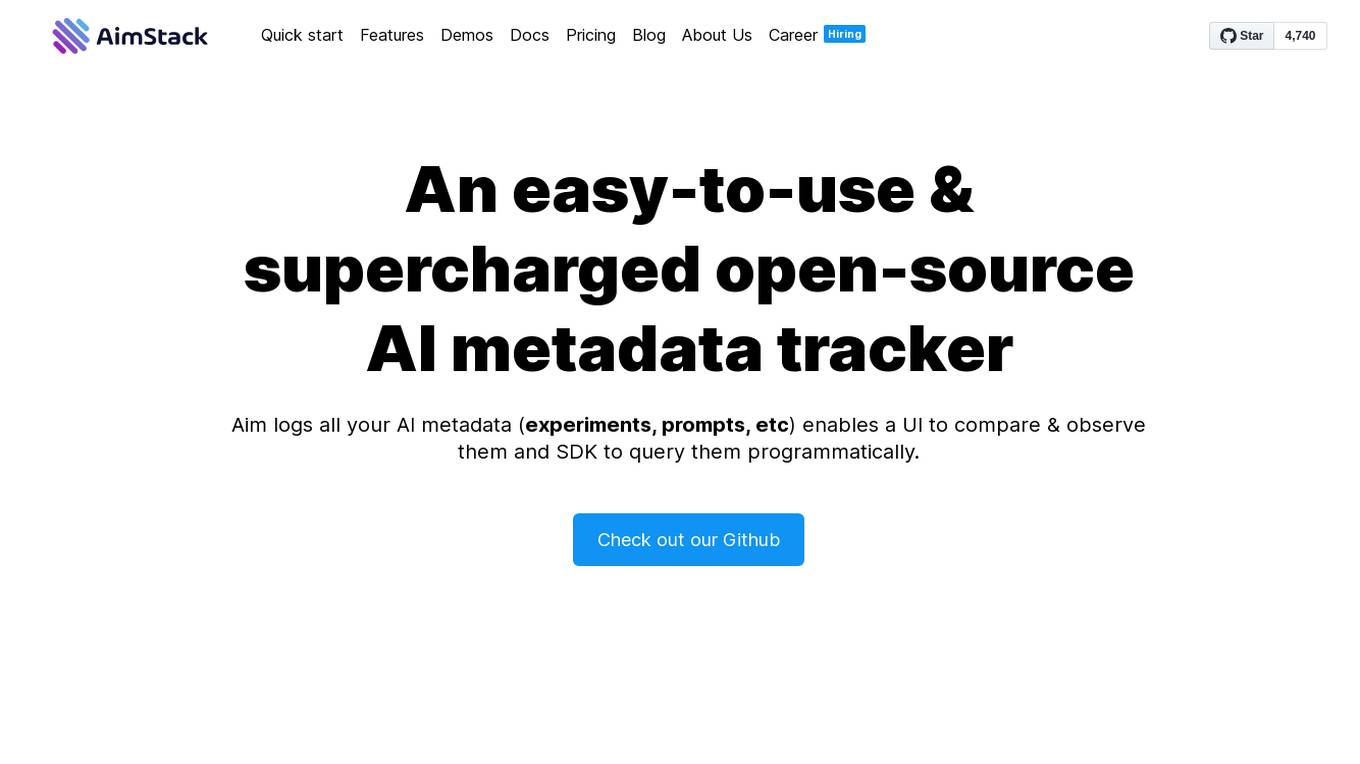

Aim

Aim is an open-source experiment tracker that logs your training runs, provides a beautiful UI to compare them, and exposes an API to query them programmatically. It integrates seamlessly with your favorite tools.

GlowPro

GlowPro is a cutting-edge AI-powered skin analysis and care application available for iOS and Android users. It offers personalized skin analysis, care recommendations, and real-time assessment to optimize skin health. Users can track their skin's progress, receive tailored skincare tips, and benefit from a personal AI skincare expert. GlowPro has received positive reviews for its transformative impact on skincare routines and ease of use.

MachineTranslation.com

MachineTranslation.com is a leading AI translation platform trusted by over 1,000,000 users worldwide. It offers accurate and secure translations for businesses, professionals, and individuals. With support for 270+ languages and various file types, the platform ensures high-quality translations with human review. Users can personalize translations, preserve original formatting, and compare results from multiple AI sources. MachineTranslation.com is committed to making AI translation accessible and reliable through advanced technology and a user-friendly interface.

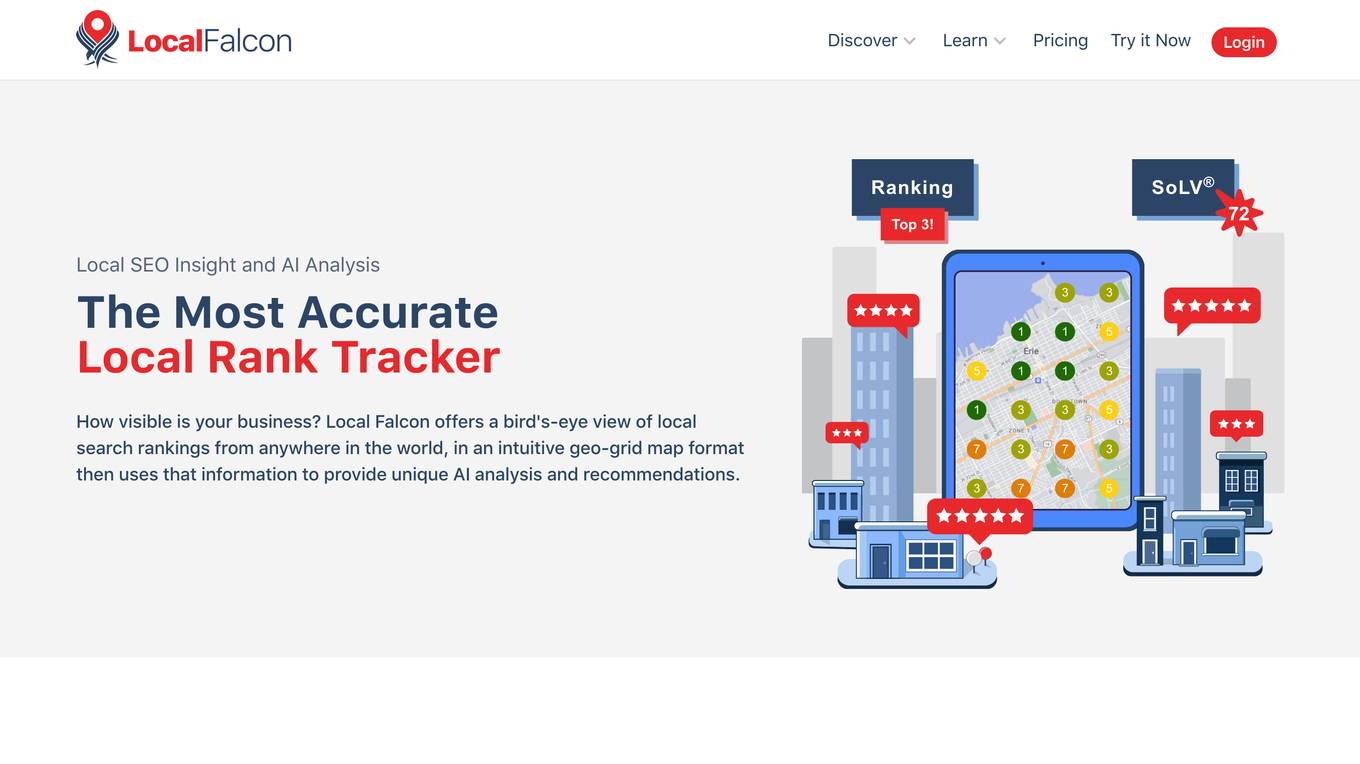

Local Falcon

Local Falcon is an AI-powered platform that offers local rank tracking and AI analysis to optimize search visibility. It provides insights on local rankings in Google and Apple Maps, as well as mentions from major AI platforms. With features like AI analysis, SoLV metric, and Falcon Assist, it helps businesses improve their local SEO performance and stay ahead of competitors.

LLM Clash

LLM Clash is a web-based application that allows users to compare the outputs of different large language models (LLMs) on a given task. Users can input a prompt and select which LLMs they want to compare. The application will then display the outputs of the LLMs side-by-side, allowing users to compare their strengths and weaknesses.

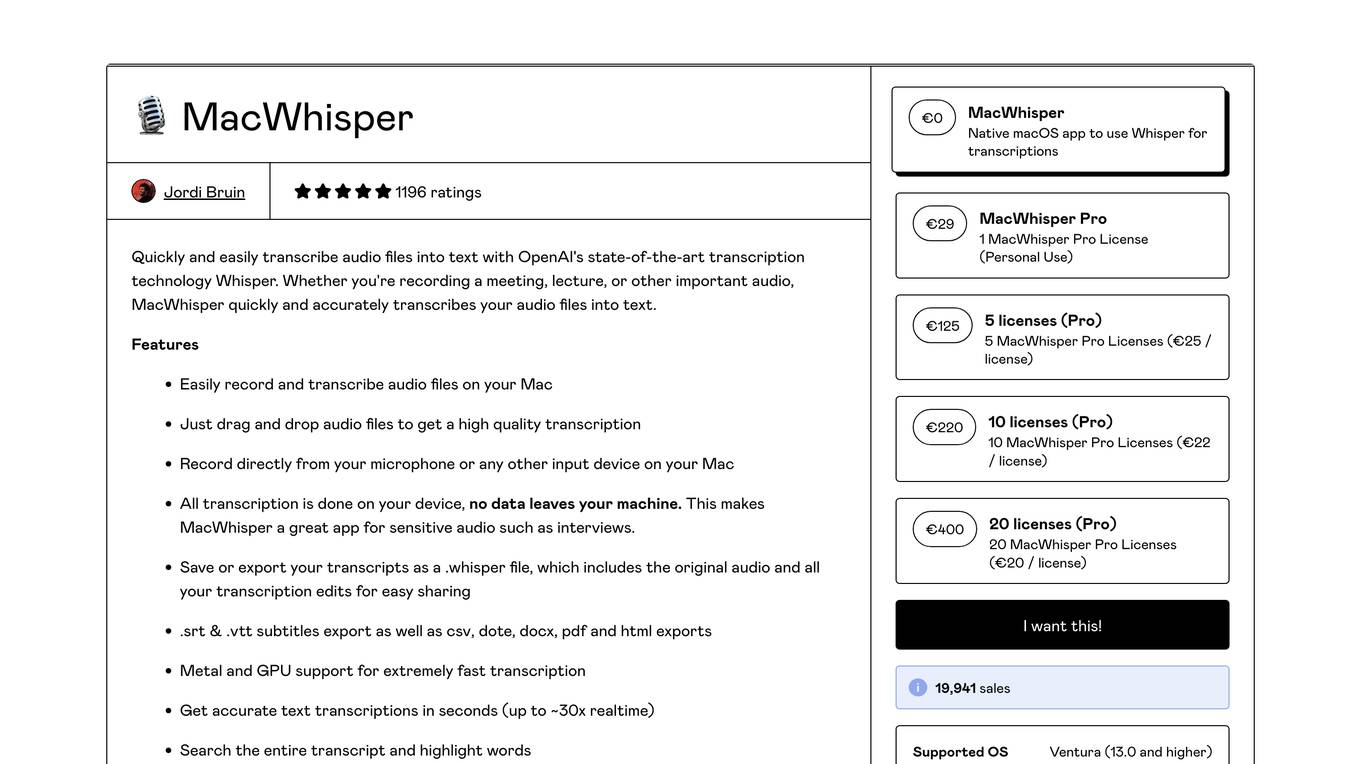

MacWhisper

MacWhisper is a native macOS application that utilizes OpenAI's Whisper technology for transcribing audio files into text. It offers a user-friendly interface for recording, transcribing, and editing audio, making it suitable for various use cases such as transcribing meetings, lectures, interviews, and podcasts. The application is designed to protect user privacy by performing all transcriptions locally on the device, ensuring that no data leaves the user's machine.

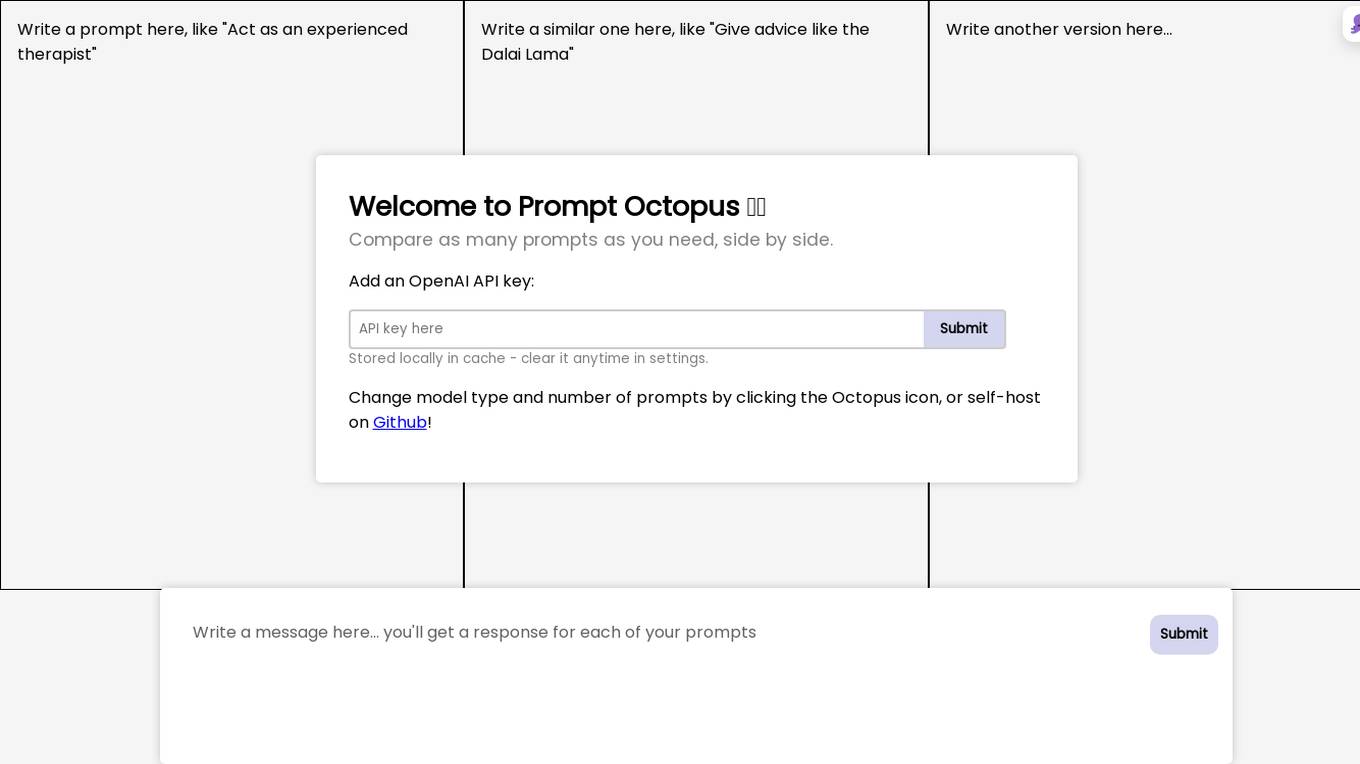

Prompt Octopus

Prompt Octopus is a free tool that allows you to compare multiple prompts side-by-side. You can add as many prompts as you need and view the responses in real-time. This can be helpful for fine-tuning your prompts and getting the best possible results from your AI model.

Neptune

Neptune is an MLOps stack component for experiment tracking. It allows users to track, compare, and share their models in one place. Neptune is used by scaling ML teams to skip days of debugging disorganized models, avoid long and messy model handovers, and start logging for free.

COPA

COPA is an AI sports betting predictions platform whose sports predictions are generated with artificial intelligence (AI). It offers predictive tools for major European football leagues, live scores, and odds comparison. Users can access match predictions, statistics, and insights to make informed betting choices. The platform aims to provide accurate forecasts at a low cost to sports fans globally.

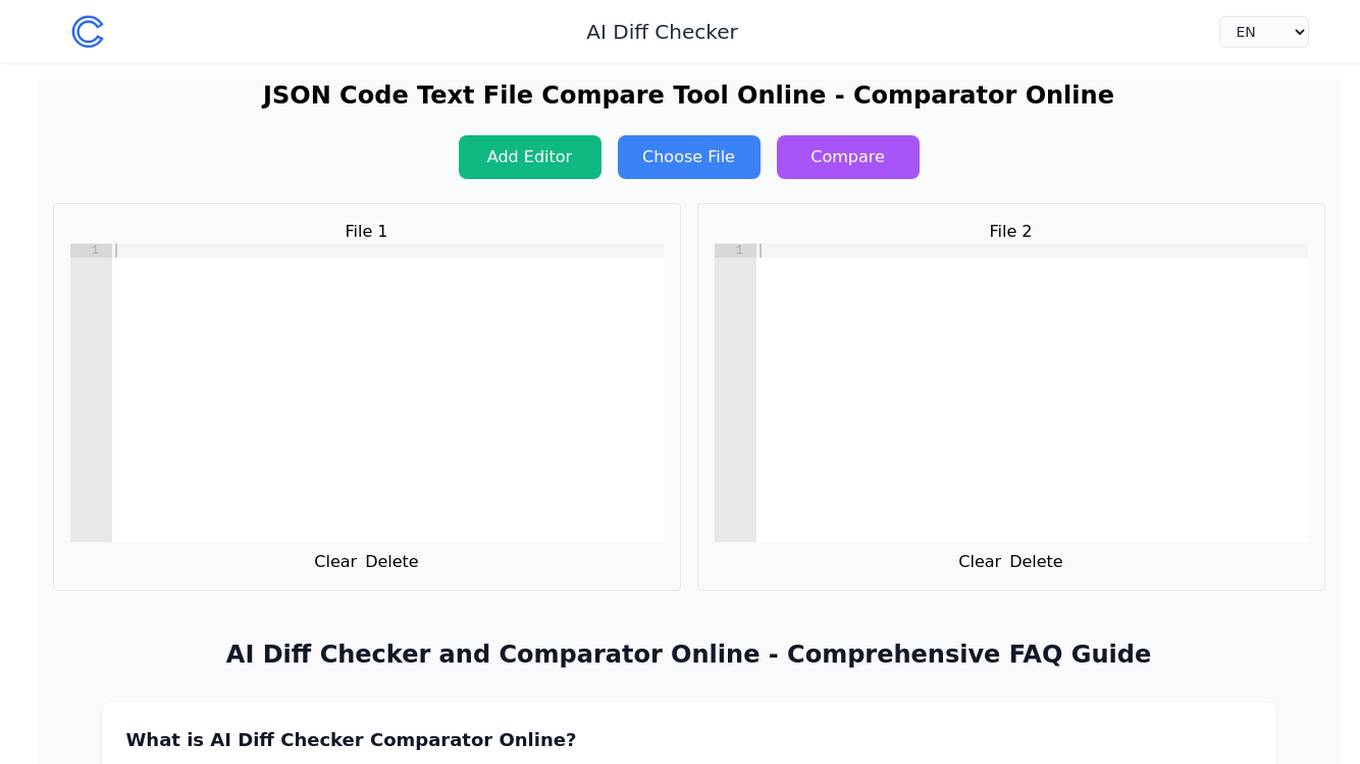

AI Diff Checker Comparator Online

The AI Diff Checker Comparator Online is an advanced online comparison tool that leverages AI technology to help users compare multiple text files, JSON files, and code files side by side. It offers both pairwise and baseline comparison modes, ensuring precise results. The tool processes files based on their content structure, supports various file types, and provides real-time editing capabilities. Users can benefit from its accurate comparison algorithms and innovative features, making it a powerful and easy-to-use solution for spotting differences between files.
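The difference between the two comparison modes can be illustrated with a minimal sketch built on Python's standard `difflib`. This is not the tool's implementation: it assumes that "baseline" means comparing every file against the first one and "pairwise" means comparing every unordered pair, and the helper names (`diff_lines`, `baseline_compare`, `pairwise_compare`) and sample files are hypothetical.

```python
import difflib
from itertools import combinations


def diff_lines(a_name, a_text, b_name, b_text):
    """Return a unified diff between two texts as a list of lines."""
    return list(difflib.unified_diff(
        a_text.splitlines(), b_text.splitlines(),
        fromfile=a_name, tofile=b_name, lineterm=""))


def baseline_compare(files):
    """Assumed 'baseline' mode: diff every file against the first one."""
    names = list(files)
    base = names[0]
    return {(base, other): diff_lines(base, files[base], other, files[other])
            for other in names[1:]}


def pairwise_compare(files):
    """Assumed 'pairwise' mode: diff every unordered pair of files."""
    return {(a, b): diff_lines(a, files[a], b, files[b])
            for a, b in combinations(files, 2)}


# Hypothetical sample inputs: v3 is identical to v1, v2 changes one line.
files = {
    "v1.txt": "alpha\nbeta\ngamma",
    "v2.txt": "alpha\nbeta\ndelta",
    "v3.txt": "alpha\nbeta\ngamma",
}

baseline = baseline_compare(files)
pairwise = pairwise_compare(files)

for pair, lines in pairwise.items():
    print(pair, "identical" if not lines else f"{len(lines)} diff lines")
```

Under these assumptions, baseline mode produces one diff per non-baseline file, while pairwise mode grows with the number of file pairs, so it surfaces differences between files that both match or both differ from the baseline.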

Wowzer AI

Wowzer AI is a multi-model image generation tool that allows users to create stunning images using top-tier AI models simultaneously. With more than 2 million images generated, Wowzer AI makes generative AI easy, fast, and fun. Users can explore and compare unique images from various AI models, enhancing their creative vision. The tool offers Prompt Enhancer to help users craft exceptional creations by generating results across multiple AI models at once. Wowzer AI provides a platform to perfect prompts, create unique images, and share selections at an affordable price.

Aim

Aim is an open-source, self-hosted AI metadata tracking tool designed to handle 100,000s of tracked metadata sequences. The two best-known applications of AI metadata tracking are experiment tracking and prompt engineering. Aim provides a performant and beautiful UI for exploring and comparing training runs and prompt sessions.

StarByFace

StarByFace is a celebrity look-alike face recognition application that allows users to upload a photo and find their celebrity doppelganger. The application uses a Neural Network to compare the uploaded photo with a database of celebrity faces and suggests the most similar matches. It ensures privacy by not storing uploaded photos and collecting minimal personal information for website usage data only.

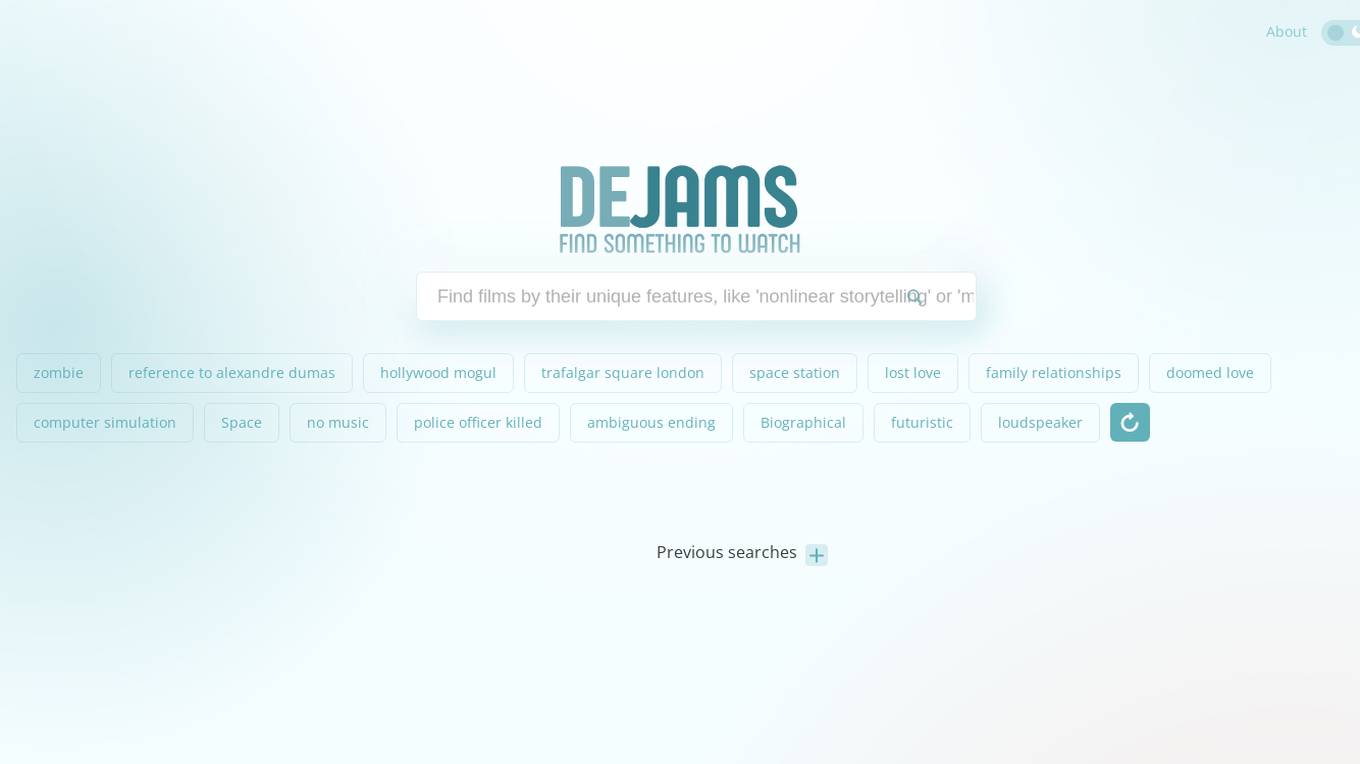

Dejams

Dejams is an AI-enhanced movie search engine that utilizes OpenAI to improve search results. It combines data from various sources such as themoviedb.org, rottentomatoes.com, and imdb.com, along with user-generated content. Dejams also integrates a widget from JustWatch.com to help users find where to watch movies. The website aims to provide the best movie search experience and welcomes user feedback for improvement.

Prompt Dev Tool

Prompt Dev Tool is an AI application designed to boost prompt engineering efficiency by helping users create, test, and optimize AI prompts for better results. It offers an intuitive interface, real-time feedback, model comparison, variable testing, prompt iteration, and advanced analytics. The tool is suitable for both beginners and experts, providing detailed insights to enhance AI interactions and improve outcomes.

Face Symmetry Test

Face Symmetry Test is an AI-powered tool that analyzes the symmetry of facial features by detecting key landmarks such as eyes, nose, mouth, and chin. Users can upload a photo to receive a personalized symmetry score, providing insights into the balance and proportion of their facial features. The tool uses advanced AI algorithms to ensure accurate results and offers guidelines for improving the accuracy of the analysis. Face Symmetry Test is free to use and prioritizes user privacy and security by securely processing uploaded photos without storing or sharing data with third parties.

LLMMM Marketing Monitor

LLMMM is an AI tool designed to monitor how AI models perceive and present brands. It offers real-time monitoring and cross-model insights to help brands understand their digital presence across various leading AI platforms. With automated analysis and lightning-fast results, LLMMM provides immediate visibility into how AI chatbots interpret brands. The tool focuses on brand intelligence, brand safety monitoring, misalignment detection, and cross-model brand intelligence. Users can create an account in minutes and access a range of features to track and analyze their brand's performance in the AI landscape.

Manus

Manus is a general AI agent that excels at various tasks in work and life, bridging minds and actions to deliver results. It integrates comprehensive travel information, conducts in-depth stock analysis, develops engaging educational content, generates structured comparison tables, and conducts comprehensive research across extensive networks. Manus works exclusively in the user's best interest, providing actionable insights, visualizations, and customized strategies to increase performance.

Pixian.AI

Pixian.AI is an AI tool that specializes in removing backgrounds from images. It offers a free service with no signup required, as well as a paid option for higher resolution images. The tool uses powerful GPUs and multi-core CPUs to analyze images and provide high-quality results. Pixian.AI aims to provide efficient and cost-effective AI image processing solutions to users, with a focus on quality and value.

4 - Open Source AI Tools

SwanLab

SwanLab is an open-source, lightweight AI experiment tracking tool that provides a platform for tracking, comparing, and collaborating on experiments, aiming to accelerate the research and development efficiency of AI teams by 100 times. It offers a friendly API and a beautiful interface, combining hyperparameter tracking, metric recording, online collaboration, experiment link sharing, real-time message notifications, and more. With SwanLab, researchers can document their training experiences and communicate seamlessly with collaborators, and machine learning engineers can develop models for production faster.

neptune-client

Neptune is a scalable experiment tracker for teams training foundation models. Log millions of runs, effortlessly monitor and visualize model training, and deploy on your infrastructure. Track 100% of metadata to accelerate AI breakthroughs. Log and display any framework and metadata type from any ML pipeline. Organize experiments with nested structures and custom dashboards. Compare results, visualize training, and optimize models quicker. Version models, review stages, and access production-ready models. Share results, manage users, and projects. Integrate with 25+ frameworks. Trusted by great companies to improve workflow.

aisuite

Aisuite is a simple, unified interface to multiple generative AI providers. It allows developers to easily interact with various large language model (LLM) providers like OpenAI, Anthropic, Azure, Google, AWS, and more through a standardized interface. The library focuses on chat completions and provides a thin wrapper around Python client libraries, enabling creators to test responses from different LLM providers without changing their code. Aisuite maximizes stability by using HTTP endpoints or SDKs for making calls to the providers. Users can install the base package or specific provider packages, set up API keys, and utilize the library to generate chat completion responses from different models.
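The idea of a thin, standardized wrapper over several providers can be sketched as follows. This is an illustration of the pattern only, not aisuite's actual API: the `UnifiedClient` class, the `fake_openai` and `fake_anthropic` functions, the model names, and the `provider:model` string convention used here are all assumptions made for the example, and the stub providers simply echo the prompt instead of calling a real service.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]
Provider = Callable[[str, List[Message]], str]


# Hypothetical stand-ins for real provider SDK calls; they only echo input.
def fake_openai(model: str, messages: List[Message]) -> str:
    return f"[openai/{model}] {messages[-1]['content']}"


def fake_anthropic(model: str, messages: List[Message]) -> str:
    return f"[anthropic/{model}] {messages[-1]['content']}"


class UnifiedClient:
    """Route a chat request to a provider chosen by a 'provider:model' string."""

    def __init__(self, providers: Dict[str, Provider]) -> None:
        self.providers = providers

    def complete(self, model: str, messages: List[Message]) -> str:
        provider_name, _, model_name = model.partition(":")
        if provider_name not in self.providers or not model_name:
            raise ValueError(f"unknown or malformed model id: {model!r}")
        return self.providers[provider_name](model_name, messages)


client = UnifiedClient({"openai": fake_openai, "anthropic": fake_anthropic})
messages = [{"role": "user", "content": "Say hi"}]

# The calling code stays the same; only the model string changes.
replies = {
    model: client.complete(model, messages)
    for model in ("openai:model-a", "anthropic:model-b")
}
for model, reply in replies.items():
    print(model, "->", reply)
```

Because the caller only varies the model string, swapping or comparing providers does not require touching the rest of the code, which is the property the description above attributes to a unified interface.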

AI-Shortcuts

AI Shortcuts is a browser extension designed to enhance the efficiency of using AI websites. It allows users to quickly send messages, open frequently used AI sites, compare generation results from multiple sites, and access AI content without the need for registration or membership. Users can configure their most frequently used AI sites and easily query selected text on webpages. The extension also features a tab mode for comparing results across multiple AI sites.

20 - OpenAI GPTs

GPT Search & Finderr

Optimized with advanced search operators for refined results. Specializing in finding and linking top custom GPTs from builders around the world. Version 0.3.0

Website Conversion by B12

I'll help you optimize your website for more conversions, and compare your site's CRO potential to competitors’.

New Zealand/Kiwi Lotto

Your Comprehensive Guide to Kiwi Lotto and Powerball: Explore Historical Numbers, Winnings, Odds, and Number Generation. Feel free to ask anything.

Best Spy Apps for Android (Q&A)

FREE tool to compare best spy apps for Android. Get answers to your questions and explore features, pricing, pros and cons of each spy app.

GPTValue

Compare the output quality of similar GPTs on the same question and identify the most valuable one.

TV Comparison | Comprehensive TV Database

Compare TV devices. Uncover the pros and cons of the latest TV models.

PerspectiveBot

Provide TOPIC & different views to compare: Gateway to Informed Comparisons. Harness AI-powered insights to analyze and score different viewpoints on any topic, delivering balanced, data-driven perspectives for smarter decision-making.

Calorie Count & Cut Cost: Food Data

Apples vs. Oranges? Optimize your low-calorie diet. Compare food items. Get tailored advice on satiating, nutritious, cost-effective food choices based on 240 items.

Best price kuwait

A customized GPT for price comparison that searches and compares product prices on websites in Kuwait, tailored to local markets and languages.

Software Comparison

I compare different software, providing detailed, balanced information.

Course Finder

Find the perfect online course in tech, business, marketing, programming, and more. Compare options from top platforms like Udemy, Coursera, and edX.

AI Hub

Your Gateway to AI Discovery – Ask, Compare, Learn. Explore AI tools and software with ease. Create AI Tech Stacks for your business and much more – Just ask, and AI Hub will do the rest!

🔵 GPT Boosted

GPT-5? | Enhanced version of GPT-4 Turbo. Don't believe it? Try and compare! | ver .001