Best AI tools for Build Containers

20 - AI Tool Sites

Intel Gaudi AI Accelerator Developer

The Intel Gaudi AI accelerator developer website provides resources, guidance, tools, and support for building, migrating, and optimizing AI models. It offers software, model references, libraries, containers, and tools for training and deploying Generative AI and Large Language Models. The site focuses on the Intel Gaudi accelerators, including tutorials, documentation, and support for developers to enhance AI model performance.

Microsoft Azure

Microsoft Azure is a cloud computing service offering a wide range of products and solutions for businesses and developers. Azure provides global infrastructure, FinOps, AI services, compute resources, containers, hybrid and multicloud solutions, analytics, application development, and more. It aims to empower users to innovate, modernize, and scale their applications and workloads efficiently on a secure and flexible cloud platform.

Microsoft Azure

Microsoft Azure is a cloud computing service that offers a wide range of products and solutions for businesses and developers. It provides services such as databases, analytics, compute, containers, hybrid cloud, AI, application development, and more. Azure aims to help organizations innovate, modernize, and scale their operations by leveraging the power of the cloud. With a focus on flexibility, performance, and security, Azure is designed to support a variety of workloads and use cases across different industries.

SiteRetriever

SiteRetriever is a self-hosted AI chatbot platform that enables users to build and deploy chatbots on their websites without any monthly fees. Because the solution is fully self-contained and self-hosted, users keep full control over data and costs. Its features include easy embedding, full customization, fast responses, and AI powered by advanced language models. Users can upload content, configure bot settings, and embed the chatbot on their site within minutes. Access is currently offered through a waitlist.

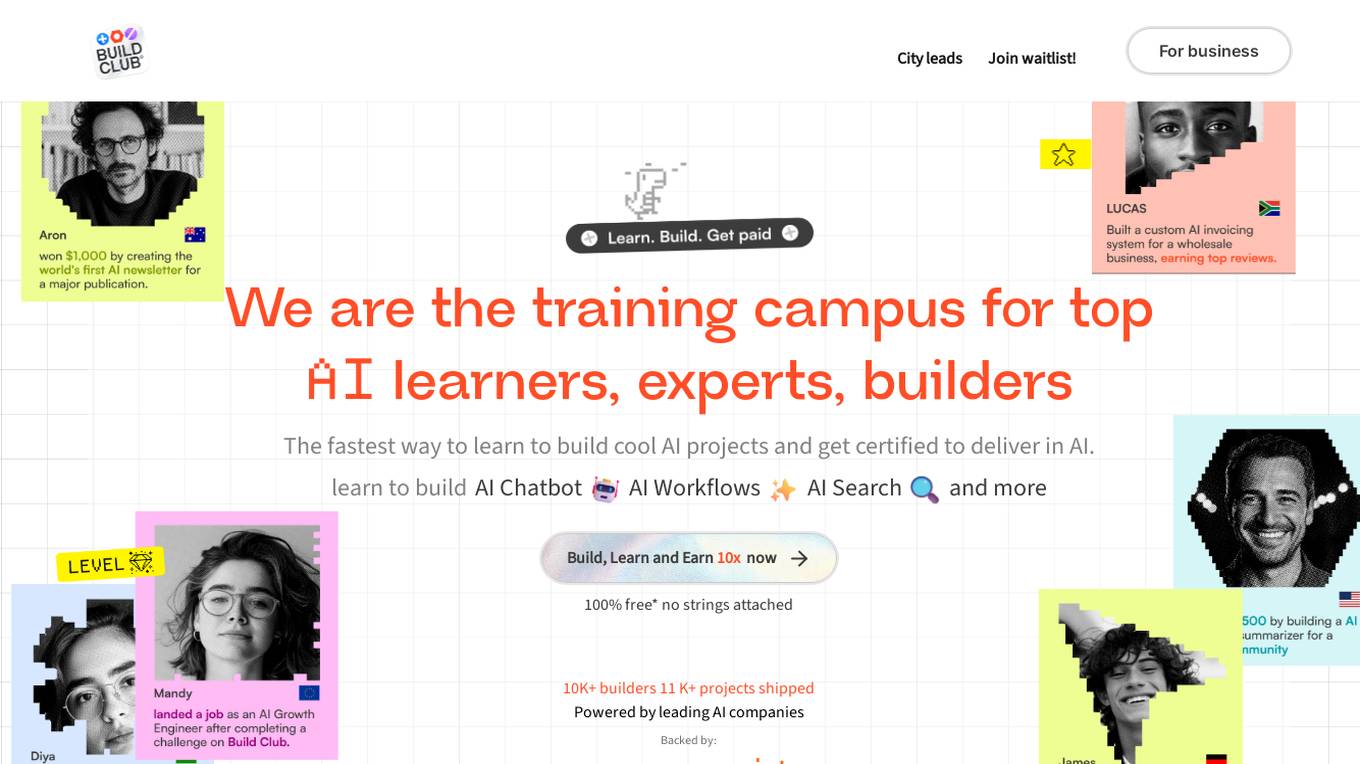

Build Club

Build Club is a leading training campus for AI learners, experts, and builders. It offers a platform where individuals can upskill into AI careers, get certified by top AI companies, learn the latest AI tools, and earn money by solving real problems. The community at Build Club consists of AI learners, engineers, consultants, and founders who collaborate on cutting-edge AI projects. The platform provides challenges, support, and resources to help individuals build AI projects and advance their skills in the field.

Unified DevOps platform to build AI applications

This is a unified DevOps platform for building AI applications. It provides a comprehensive set of tools and services to help developers build, deploy, and manage AI applications, including a code editor, a debugger, a profiler, and a deployment manager. It also provides access to AI services such as natural language processing, machine learning, and computer vision.

Build Chatbot

Build Chatbot is a no-code chatbot builder designed to simplify the process of creating chatbots. It enables users to build their chatbot without any coding knowledge, auto-train it with personalized content, and get the chatbot ready with an engaging UI. The platform offers various features to enhance user engagement, provide personalized responses, and streamline communication with website visitors. Build Chatbot aims to save time for both businesses and customers by making information easily accessible and transforming visitors into satisfied customers.

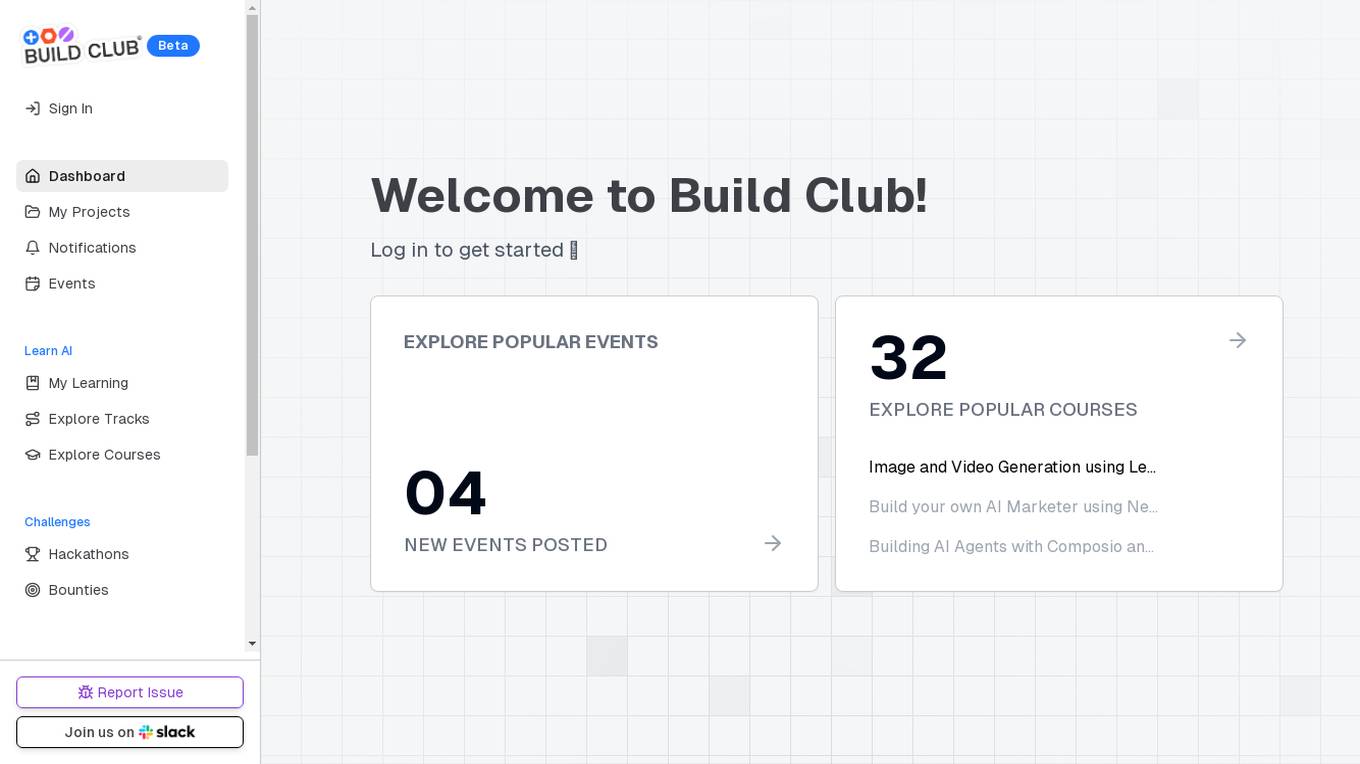

Build Club

Build Club is an AI tool designed to help individuals learn and explore various aspects of artificial intelligence. The platform offers a wide range of courses, challenges, hackathons, and community projects to enhance users' AI skills. Users can build AI models for tasks like image and video generation, AI marketing, and creating AI agents. Build Club aims to create a collaborative learning environment for AI enthusiasts to grow their knowledge and skills in the field of artificial intelligence.

Fabricate

Fabricate is an AI full-stack app builder that empowers users to create a wide range of applications quickly and efficiently. With Fabricate, users can build anything they imagine and launch their projects to market faster than ever before. The platform offers a user-friendly interface and a powerful set of tools to streamline the app development process, making it accessible to both beginners and experienced developers. Fabricate leverages AI technology to automate various aspects of app building, from design to deployment, enabling users to focus on their ideas rather than technical complexities.

Mimir

Mimir is an AI-native product management tool that helps users figure out what to build next by importing or uploading feedback, interviews, or metrics. It provides evidence-backed recommendations, refines them in chat, and generates AI agent-ready specs. Mimir stands out by creating GitHub issues from recommendations with complete specs and implementation tasks, enabling users to ship features in hours. The tool extracts structured insights, clusters them into themes, and generates prioritized recommendations based on product management best practices. Mimir learns from every interaction, aligning recommendations with the user's business context over time.

GitHub

GitHub is a collaborative platform that allows users to build and ship software efficiently. GitHub Copilot, an AI-powered tool, helps developers write better code by providing coding assistance, automating workflows, and enhancing security. The platform offers features such as instant dev environments, code review, code search, and collaboration tools. GitHub is widely used by enterprises, small and medium teams, startups, and nonprofits across various industries. It aims to simplify the development process, increase productivity, and improve the overall developer experience.

Google Cloud

Google Cloud is a suite of cloud computing services that runs on the same infrastructure Google uses for its own products. Its services include computing, storage, networking, databases, machine learning, and more. Google Cloud is designed to make it easy for businesses to develop and deploy applications in the cloud, with tools and services that cover everything from building and deploying applications to managing infrastructure. Google Cloud is also committed to sustainability and runs a number of programs to reduce its environmental impact.

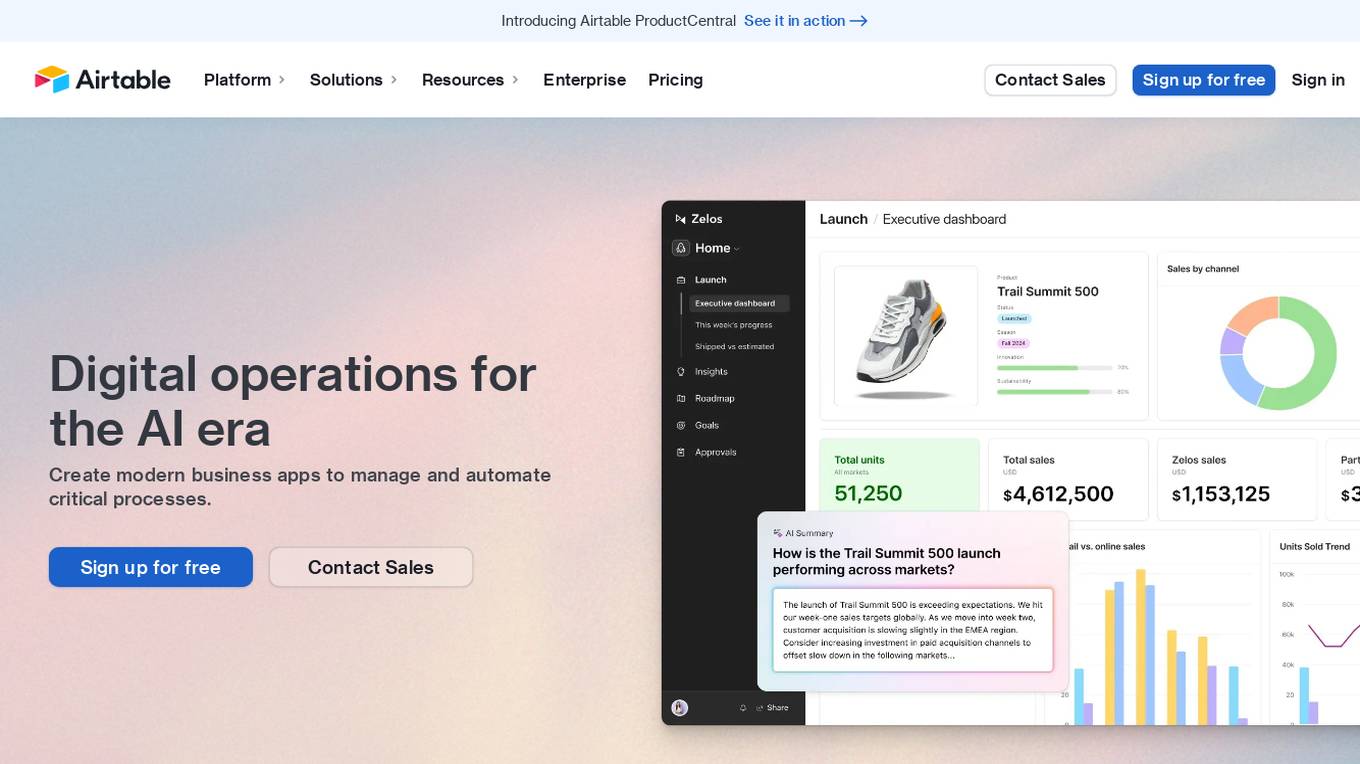

Airtable

Airtable is a next-gen app-building platform that enables teams to create custom business apps without the need for coding. It offers features like AI integration, connected data, automations, interface design, and data visualization. Airtable allows users to manage security, permissions, and data protection at scale. The platform also provides integrations with popular tools like Slack, Google Drive, and Salesforce, along with an extension marketplace for additional templates and apps. Users can streamline workflows, automate processes, and gain insights through reporting and analytics.

Gemini

Gemini is a large and powerful AI model developed by Google. It is designed to handle a wide variety of text and image reasoning tasks, and it can be used to build a variety of AI-powered applications. Gemini is available in three sizes: Ultra, Pro, and Nano. Ultra is the most capable model, but it is also the most expensive. Pro is the best performing model for a wide variety of tasks, and it is a good value for the price. Nano is the most efficient model, and it is designed for on-device use cases.

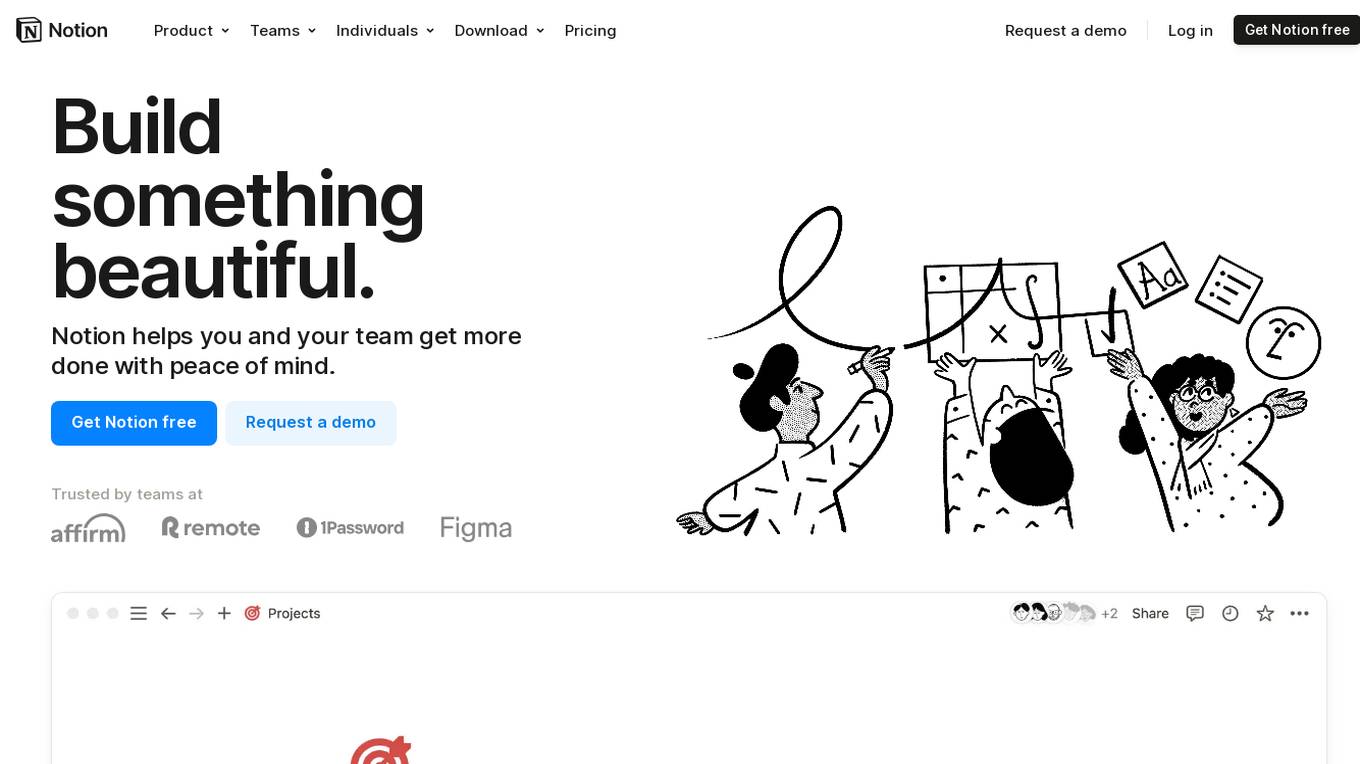

Notion

Notion is an AI-integrated workspace platform that combines wiki, docs, and project management functionalities in one tool. It offers a centralized hub for teams to collaborate, share knowledge, manage projects, and streamline workflows. With AI assistance, users can enhance their productivity by automating tasks, generating content, and finding information quickly. Notion aims to simplify work processes and empower teams to work more efficiently and creatively.

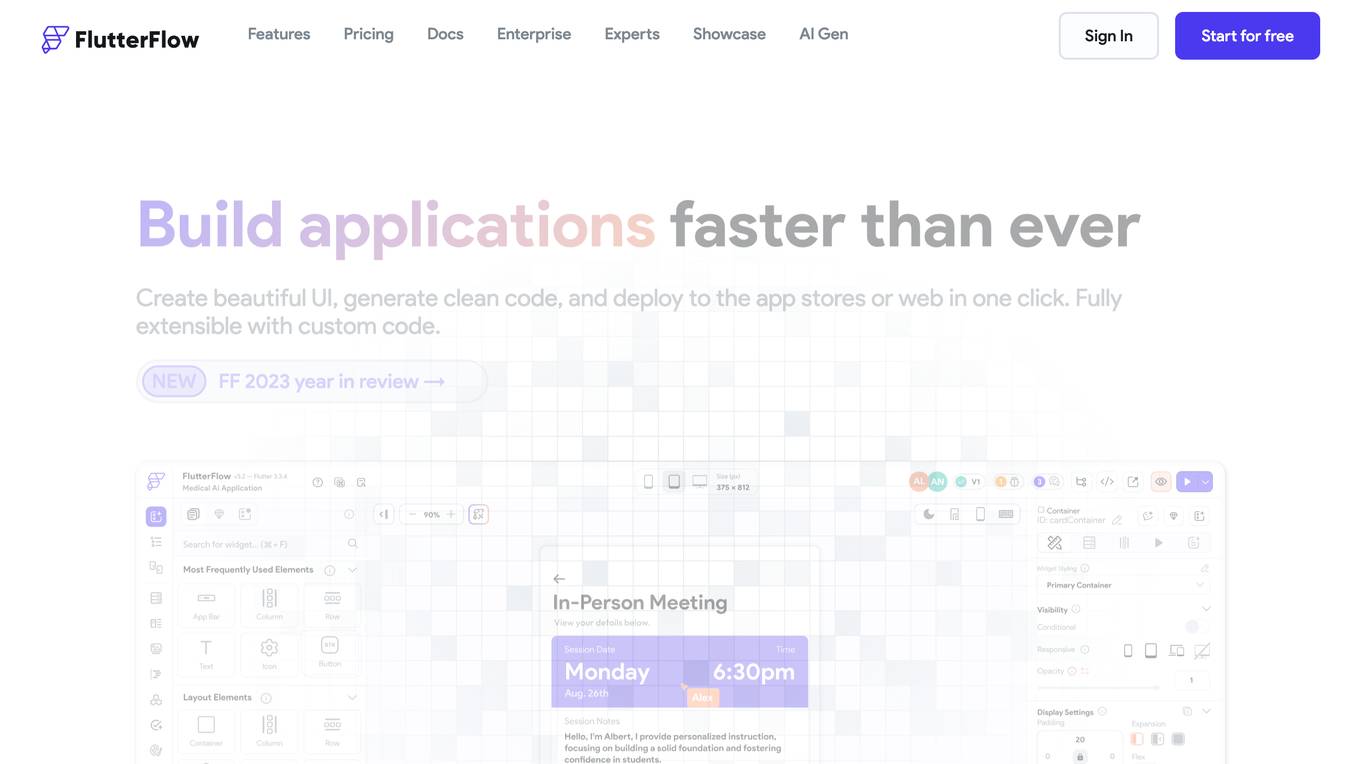

FlutterFlow

FlutterFlow is a low-code development platform that enables users to build cross-platform mobile and web applications without writing code. It provides a visual interface for designing user interfaces, connecting data, and implementing complex logic. FlutterFlow is trusted by users at leading companies around the world and has been used to build a wide range of applications, from simple prototypes to complex enterprise solutions.

Enhancv

Enhancv is an AI-powered online resume builder that helps users create professional resumes and cover letters tailored to their job applications. The tool offers a drag-and-drop resume builder with a variety of modern templates, a free resume checker that evaluates resumes for ATS-friendliness and provides actionable suggestions, and a resume tailoring feature. Enhancv also provides resume and CV examples written by experienced professionals. Users can download their resumes in PDF or TXT formats and store up to 30 documents in cloud storage.

Abacus.AI

Abacus.AI describes itself as the world's first AI platform where AI, not humans, builds applied AI agents and systems at scale. Using generative AI and other novel neural net techniques, the platform's AI can build LLM apps, generative AI agents, and predictive applied AI systems.

Bubble

Bubble is a no-code application development platform that allows users to build and deploy web and mobile applications without writing any code. It provides a visual interface for designing and developing applications, and it includes a library of pre-built components and templates that can be used to accelerate development. Bubble is suitable for a wide range of users, from beginners with no coding experience to experienced developers who want to build applications quickly and easily.

Zyro

Zyro is a website builder that allows users to create professional websites and online stores without any coding knowledge. It offers a range of features, including customizable templates, drag-and-drop editing, and AI-powered tools to help users brand and grow their businesses.

2 - Open Source AI Tools

spellbook-docker

The Spellbook Docker Compose repository contains the Docker Compose files for running the Spellbook AI Assistant stack. It requires ExLlama and an NVIDIA Ampere or better GPU for real-time results. The repository provides instructions for installing Docker, building and starting containers with or without a GPU, adding workers, installing NVIDIA drivers, forwarding ports, and performing a fresh installation. Users can follow the detailed guidelines to set up the Spellbook framework on Ubuntu 22, enabling them to run the UI, middleware, and additional workers for resource access.

ai-containers

This repository contains Dockerfiles, scripts, YAML files, Helm charts, and other assets used to scale out AI containers with versions of TensorFlow and PyTorch optimized for Intel platforms. Scaling is done with Python, Docker, Kubernetes, Kubeflow, cnvrg.io, Helm, and other container orchestration frameworks for use in the cloud and on-premises.

20 - OpenAI GPTs

rosGPT

Learn ROS/ROS2 at any level, from beginner to expert, with rosGPT, and build Docker containers to test your robot anywhere.

Build a Brand

Unique custom images based on your input. Just type ideas and the brand image is created.

Beam Eye Tracker Extension Copilot

Build extensions using the Eyeware Beam eye tracking SDK.

Business Model Canvas Strategist

Business Model Canvas creator: build and evaluate your business model.

League Champion Builder GPT

Build your own League of Legends-style champion with abilities, a backstory, and splash art.

RenovaTecno

Your tech buddy helping you refurbish or build a PC from scratch, tailored to your needs, budget, and language.

Gradle Expert

Your expert in Gradle build configuration, offering clear, practical advice.

XRPL GPT

Build on the XRP Ledger with assistance from this GPT trained on extensive documentation and code samples.