Best AI tools for Model Tuning

20 - AI tool Sites

FineTuneAIs.com

FineTuneAIs.com is a platform that specializes in custom AI model fine-tuning. Users can fine-tune their AI models to achieve better performance and accuracy.

IngestAI

IngestAI is a Silicon Valley-based startup that provides a toolbox for data preparation and model selection, powered by proprietary AI algorithms. The company's mission is to make AI accessible and affordable for businesses of all sizes. IngestAI's platform offers a turn-key service for AI builders seeking to optimize AI application development. The company identifies the model best suited to a customer's needs, with a focus on performance and reliability. IngestAI uses Deepmark AI, its proprietary software solution, to minimize the time required to identify and deploy the most effective AI solutions. IngestAI also provides data preparation services, transforming raw structured and unstructured data into high-quality, AI-ready formats so that AI models receive the best possible input. Beyond implementation, the company fine-tunes AI models to match the nuances of a customer's data and the specific demands of their industry. IngestAI evaluates each AI project, ensuring not only its successful launch but also its alignment with the customer's business goals.

Prem AI

Prem is an AI platform that helps developers and businesses build and fine-tune generative AI models. It offers a user-friendly development platform for developers to create AI solutions. For businesses, Prem provides tailored model fine-tuning and training to meet unique requirements, ensuring data sovereignty and ownership. Trusted by global companies, Prem promotes sovereign generative AI by simplifying complex AI tasks and giving customers full control over their intellectual capital. With a suite of foundational open-source small language models (SLMs), Prem offers business applications up-to-date research and customization options.

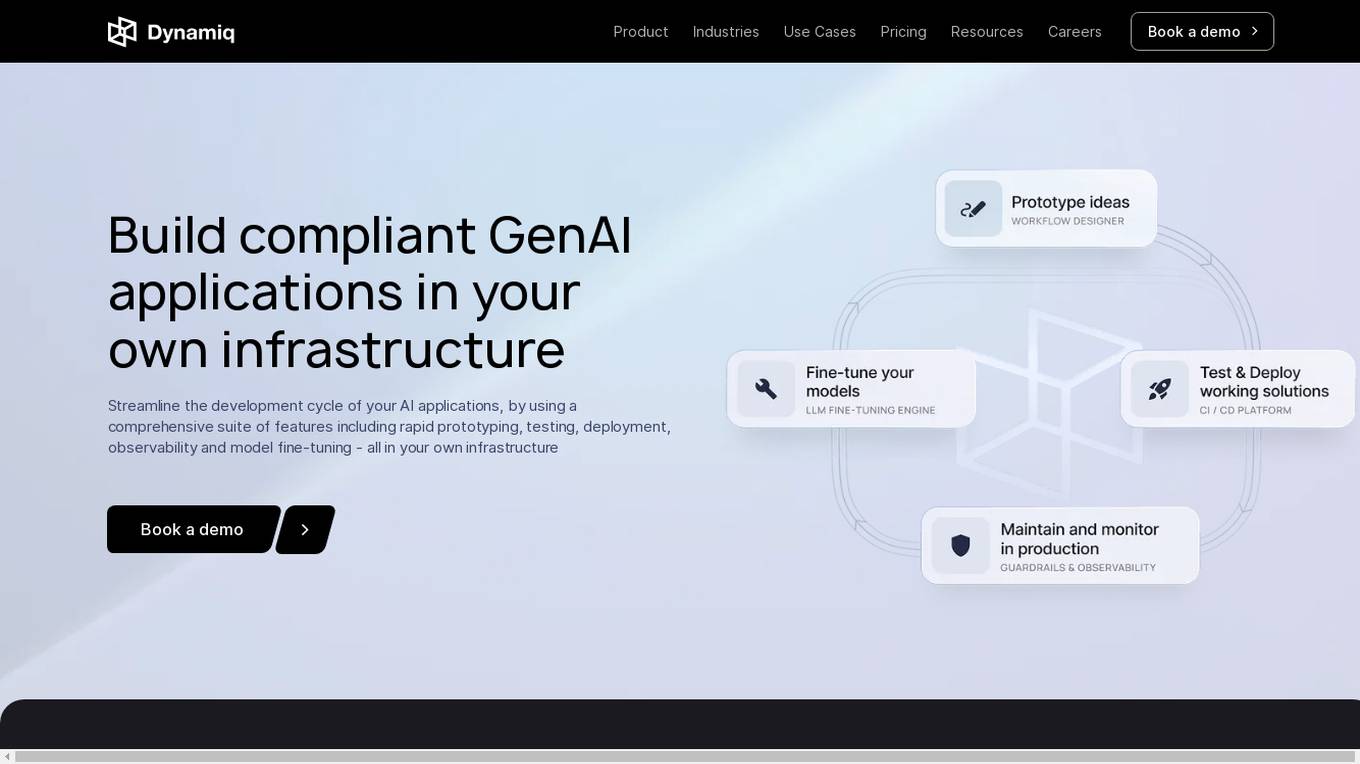

Dynamiq

Dynamiq is an operating platform for GenAI applications that enables users to build compliant GenAI applications in their own infrastructure. It offers a suite of features including rapid prototyping, testing, deployment, observability, and model fine-tuning. The platform helps streamline the development cycle of AI applications and provides tools for workflow automation, knowledge base management, and collaboration. Dynamiq is designed to improve productivity, reduce AI adoption costs, and help organizations deploy AI ahead of schedule.

Odin AI

Odin AI is a comprehensive AI platform that offers a range of tools and features to simplify and automate various tasks. It provides solutions for brand compliance, custom templates, guardrails, knowledge graph, model fine-tuning, conversational AI, task automation, meeting note-taking, chatbot building, and more. Odin AI aims to enhance productivity, streamline workflows, and improve decision-making across different industries and use cases.

Algo

Algo is a conversational AI chatbot that positions itself as different from ChatGPT. Algo is less verbose and more attuned to the user's needs, providing helpful and meaningful insights without a lot of excess chatter. Algo does not use your data for further training or model fine-tuning, and it is designed to keep all communication private and secure. You can delete your data at any time. This provides a higher level of control over personal information compared to ChatGPT, which is a public system and has no provision for data deletion. Beyond its conversational capabilities, Algo has built-in features that allow it to browse the web and create visuals using generative AI models.

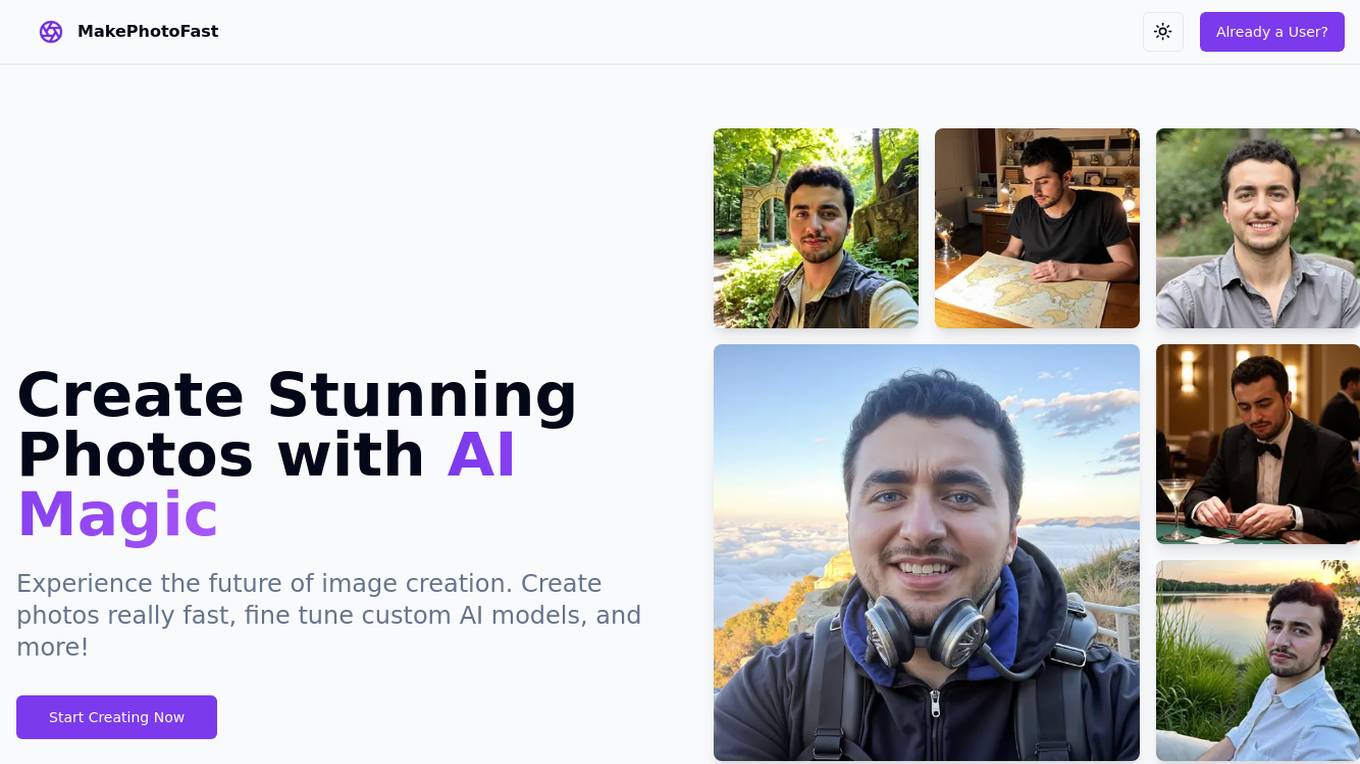

MakePhotoFast

MakePhotoFast is an AI-powered image generation tool that allows users to create stunning photos with advanced AI models. Users can enter a prompt or select an image package to generate images quickly, fine-tune custom AI models, and download and share the enhanced photos. The tool offers powerful features such as AI-powered image generation, custom AI model fine-tuning, and style package selection. With simple one-time pricing and no subscriptions, MakePhotoFast provides a user-friendly experience for creating unique and professional-looking images.

WhimsicalAI

WhimsicalAI is an AI tool that let users generate whimsical illustrations using GPT and algorithmic steps. The project started in March/April 2023 and evolved to create recognizable and amusing images. The tool generated 3,447 images over a 9-month period before being shut down. The collected data could be used for fine-tuning a model, although that fine-tuning work has not started yet.

Predibase

Predibase is a platform for fine-tuning and serving Large Language Models (LLMs). It provides a cost-effective and efficient way to train and deploy LLMs for a variety of tasks, including classification, information extraction, customer sentiment analysis, customer support, code generation, and named entity recognition. Predibase is built on proven open-source technology, including LoRAX, Ludwig, and Horovod.

Flux LoRA Model Library

Flux LoRA Model Library is an AI tool that provides a platform for finding and using Flux LoRA models suitable for various projects. Users can browse a catalog of popular Flux LoRA models and learn about FLUX models and LoRA (Low-Rank Adaptation) technology. The platform offers resources for fine-tuning models and ensuring responsible use of generated images.
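For readers unfamiliar with the Low-Rank Adaptation idea mentioned above, here is a minimal sketch in pure Python. It is not taken from the Flux LoRA Model Library or from any FLUX tooling. The function names, the tiny example matrices, and the 4096 x 4096 layer size are assumptions chosen for illustration. It shows the general LoRA formulation, in which a frozen weight W is adapted by adding a scaled product of two small trainable matrices B and A.

```python
# Minimal LoRA sketch in pure Python (illustrative only; all names are hypothetical).

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_effective_weight(w, b, a, alpha, rank):
    """Return W + (alpha / rank) * (B @ A), the weight a LoRA-adapted layer applies."""
    scale = alpha / rank
    delta = matmul(b, a)  # low-rank update with the same shape as W
    return [
        [w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
        for w_row, d_row in zip(w, delta)
    ]


def lora_param_counts(d_out, d_in, rank):
    """Compare trainable parameters: full fine-tuning vs. the two LoRA factors."""
    full = d_out * d_in
    lora = rank * (d_out + d_in)
    return full, lora


# Frozen 2x3 base weight and a rank-1 adapter (B is 2x1, A is 1x3).
W = [[1.0, 0.0, 0.0],
     [0.0, 1.0, 0.0]]
B = [[1.0], [2.0]]
A = [[0.5, 0.0, 1.0]]

W_eff = lora_effective_weight(W, B, A, alpha=1.0, rank=1)
full, lora = lora_param_counts(4096, 4096, 8)
print(W_eff)
print(f"full fine-tuning: {full} params, LoRA (rank 8): {lora} params")
```

Only B and A are trained, so the adapter file is far smaller than the base model. This is why LoRA models can be shared in a catalog and applied on top of a common base model.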

LaoZhang AI Blog

LaoZhang AI Blog is an AI technology blog that offers expert guides, best practices, and deep analysis on cutting-edge AI model integration techniques. Developers can explore and master core methods for API optimization and performance tuning, with a complete technical path from beginner to expert. The blog covers a wide range of topics such as AI video generation, AI tutorials, troubleshooting, API development, and more, providing valuable insights and resources for AI enthusiasts and professionals.

Lucy Edit AI

Lucy Edit AI is an open-source instruction-guided video editing model that allows users to transform videos with simple text instructions. It offers features such as text-guided editing, motion preservation, character transformations, object and scene editing, and high-fidelity transformations. The application is built on the Wan2.2 5B architecture and supports 81-frame video generation. Lucy Edit AI is suitable for creators of all levels, from hobbyists to professionals, and is free to use for any purpose, including commercial projects.

Together AI

Together AI is an AI tool that offers a variety of generative AI services, including serverless models, fine-tuning capabilities, dedicated endpoints, and GPU clusters. Users can run or fine-tune leading open source models with only a few lines of code. The platform provides a range of functionalities for tasks such as chat, vision, text-to-speech, code/language reranking, and more. Together AI aims to simplify the process of utilizing AI models for various applications.

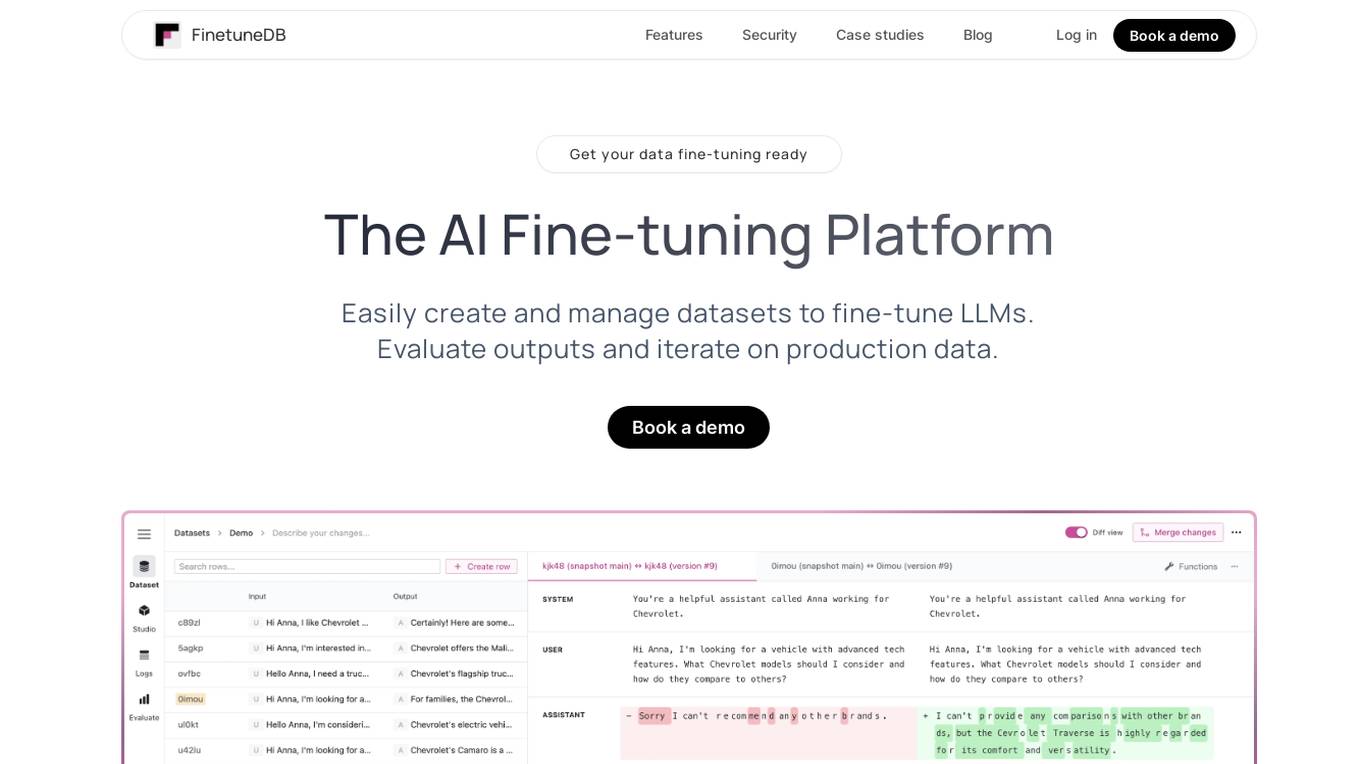

FinetuneDB

FinetuneDB is an AI fine-tuning platform that allows users to easily create and manage datasets to fine-tune LLMs, evaluate outputs, and iterate on production data. It integrates with open-source and proprietary foundation models, and provides a collaborative editor for building datasets. FinetuneDB also offers a variety of features for evaluating model performance, including human and AI feedback, automated evaluations, and model metrics tracking.

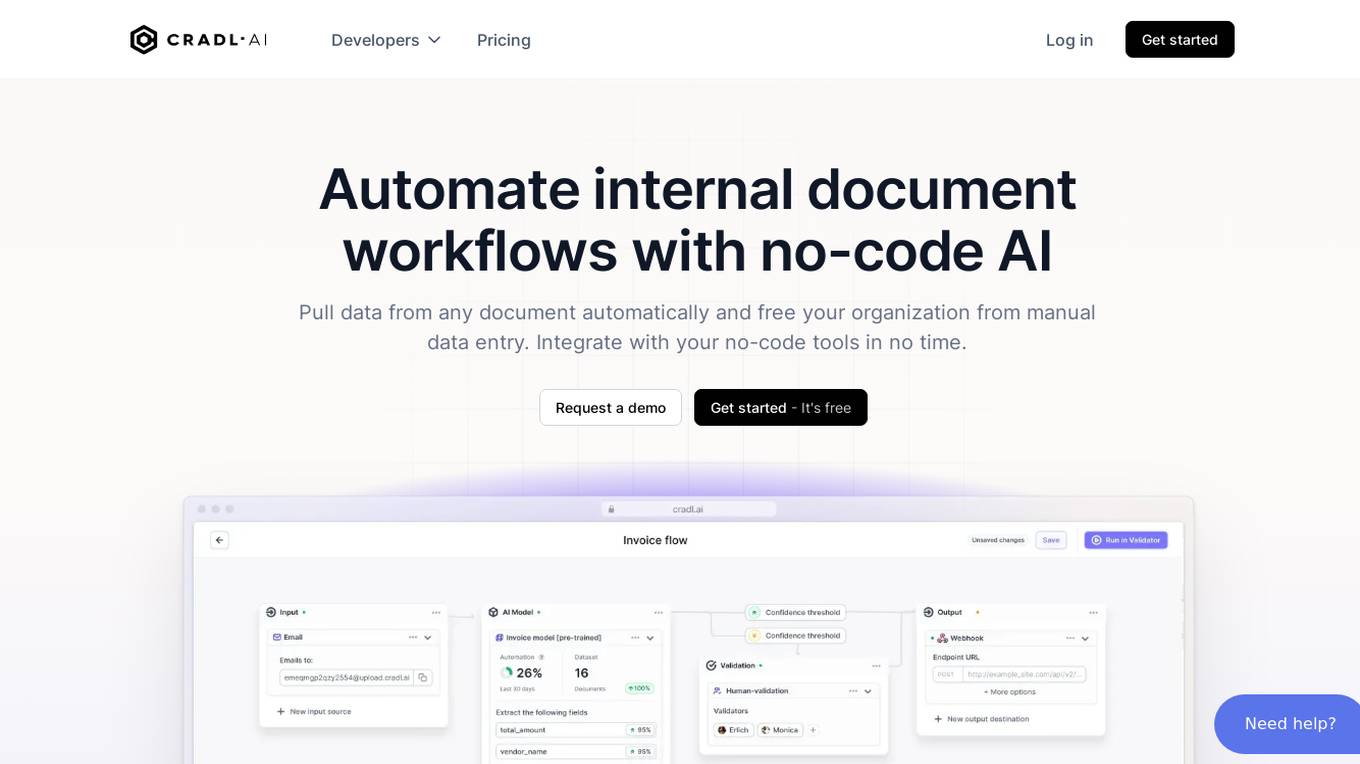

Cradl AI

Cradl AI is an AI-powered tool designed to automate document workflows with no-code AI. It enables users to extract data from any document automatically, integrate with no-code tools, and build custom AI models through an easy-to-use interface. The tool empowers automation teams across industries by extracting data from complex document layouts, regardless of language or structure. Cradl AI offers features such as line item extraction, fine-tuning AI models, human-in-the-loop validation, and seamless integration with automation tools. It is trusted by organizations for business-critical document automation, providing enterprise-level features like encrypted transmission, GDPR compliance, secure data handling, and auto-scaling.

Together AI

Together AI is an AI Acceleration Cloud platform that offers fast inference, fine-tuning, and training services. It provides self-service NVIDIA GPUs, model deployment on custom hardware, an AI chat app, a code execution sandbox, and tools to find the right model for specific use cases. The platform also includes a model library with open-source models, documentation for developers, and resources for advancing open-source AI. Together AI enables users to use pre-trained models, fine-tune them, or build custom models from scratch, catering to various generative AI needs.

Fine-Tune AI

Fine-Tune AI is a tool that allows users to generate fine-tuning datasets using prompts. This can be useful for a variety of tasks, such as improving the accuracy of machine learning models or creating new training data for AI applications.

poolside

poolside is an advanced foundational AI model designed specifically for software engineering challenges. It allows users to fine-tune the model on their own code, enabling it to understand project uniqueness and complexities that generic models can't grasp. The platform aims to empower teams to build better, faster, and happier by providing a personalized AI model that continuously improves. In addition to the AI model for writing code, poolside offers an intuitive editor assistant and an API for developers to leverage.

Anycores

Anycores is an AI tool designed to optimize the performance of deep neural networks and reduce the cost of running AI models in the cloud. It offers automated tuning, inference consultation, a zoo of optimized networks, and a platform for reducing AI model cost. Anycores focuses on faster execution, reducing inference time by more than 10x, and on reducing the model footprint at deployment. It is device agnostic, supporting Nvidia and AMD GPUs, Intel, ARM, and AMD CPUs, servers, and edge devices. The tool aims to provide highly optimized, low-footprint networks tailored to specific deployment scenarios.

Welo Data

Welo Data is an AI tool that specializes in AI benchmarking, model assessment, and high-quality training datasets for AI models. The platform offers services such as supervised fine-tuning, reinforcement learning from human feedback, data generation, expert evaluations, and a data quality framework to support the development of AI models. With over 27 years of experience, Welo Data combines language expertise and AI data to deliver training and performance evaluation solutions.

1 - Open Source Tools

lighteval

LightEval is a lightweight LLM evaluation suite that Hugging Face has been using internally with the recently released LLM data processing library datatrove and LLM training library nanotron. We're releasing it to the community in the spirit of building in the open. Note that it is still at a very early stage, so don't expect 100% stability. In case of problems or questions, feel free to open an issue!

20 - OpenAI Gpts

TuringGPT

The Turing Test, first named the imitation game by Alan Turing in 1950, is a measure of a machine's capacity to demonstrate intelligence that's either equal to or indistinguishable from human intelligence.

Seabiscuit Business Model Master

Discover A More Robust Business: Craft tailored value proposition statements, develop a comprehensive business model canvas, conduct detailed PESTLE analysis, and gain strategic insights on enhancing business model elements like scalability, cost structure, and market competition strategies. (v1.18)

Create A Business Model Canvas For Your Business

Let's get started by telling me about your business: What do you offer? Who do you serve? Need help with prompt engineering? Reach out on LinkedIn: StephenHnilica

Business Model Canvas Strategist

Business Model Canvas Creator - Build and evaluate your business model

BITE Model Analyzer by Dr. Steven Hassan

Discover if your group, relationship or organization uses specific methods to recruit and maintain control over people

EIA model

Generates environmental impact assessment (EIA) templates based on specific global locations and parameters.

Business Model Canvas Wizard

Help with building the Business Model Canvas for your initiative

Business Model Advisor

Business model expert that creates detailed reports based on business ideas.

AI Model NFT Marketplace - Joy Marketplace

Expert on AI Model NFT Marketplace, offering insights on blockchain tech and NFTs.