Best AI tools for Language Model Fine-tuning

20 - AI tool Sites

Predibase

Predibase is a platform for fine-tuning and serving Large Language Models (LLMs). It provides a cost-effective and efficient way to train and deploy LLMs for a variety of tasks, including classification, information extraction, customer sentiment analysis, customer support, code generation, and named entity recognition. Predibase is built on proven open-source technology, including LoRAX, Ludwig, and Horovod.

Odin AI

Odin AI is a comprehensive AI platform that offers a range of tools and features to simplify and automate various tasks. It provides solutions for brand compliance, custom templates, guardrails, knowledge graph, model fine-tuning, conversational AI, task automation, meeting note-taking, chatbot building, and more. Odin AI aims to enhance productivity, streamline workflows, and improve decision-making across different industries and use cases.

Algo

Algo is a conversational AI chatbot positioned as an alternative to ChatGPT. It is less verbose and more attuned to the user's needs, providing helpful and meaningful insights without excess chatter. Algo does not use your data for further training or model fine-tuning, and it is designed to keep all communication private and secure. You can delete your data at any time, which Algo presents as a higher level of control over personal information than ChatGPT offers. Beyond its conversational capabilities, Algo has built-in features that allow it to browse the web and create visuals using generative AI models.

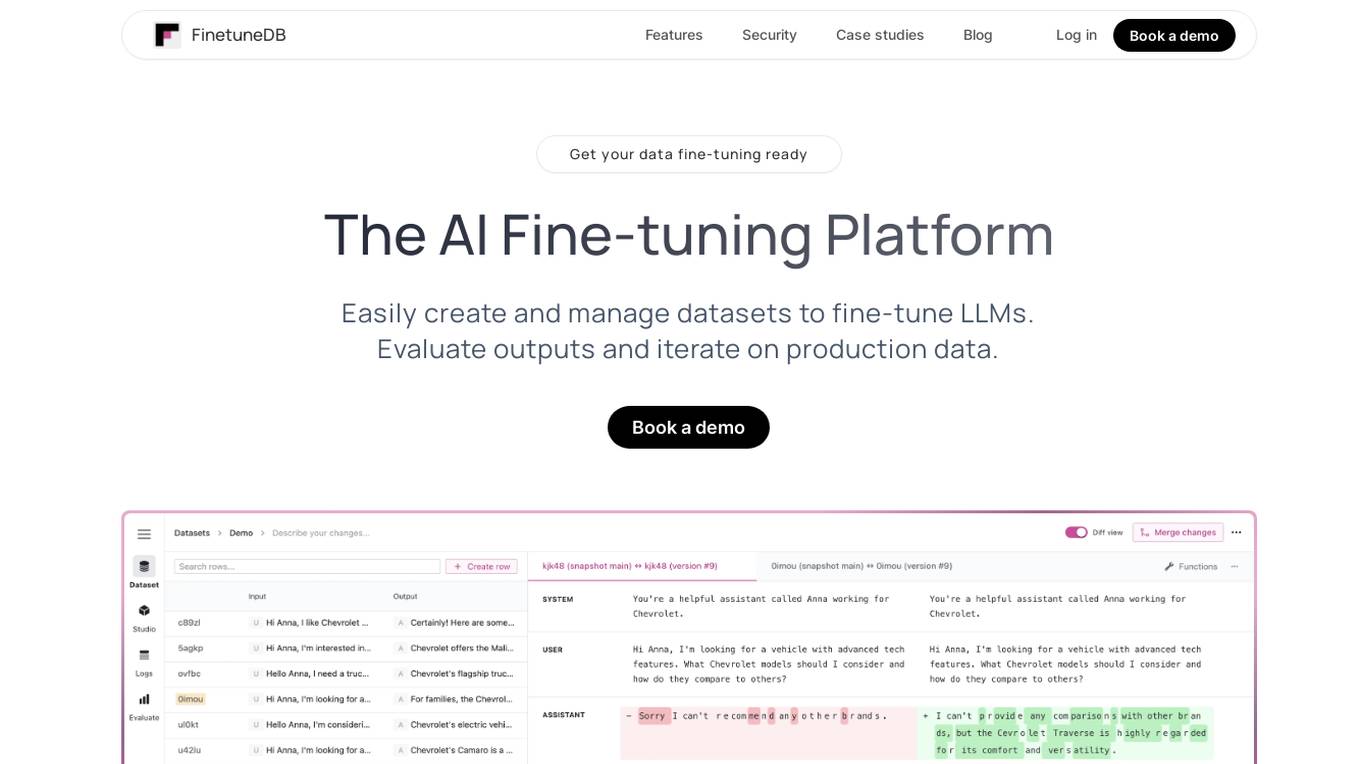

FinetuneDB

FinetuneDB is an AI fine-tuning platform that allows users to easily create and manage datasets to fine-tune LLMs, evaluate outputs, and iterate on production data. It integrates with open-source and proprietary foundation models, and provides a collaborative editor for building datasets. FinetuneDB also offers a variety of features for evaluating model performance, including human and AI feedback, automated evaluations, and model metrics tracking.

Together AI

Together AI is an AI tool that offers a variety of generative AI services, including serverless models, fine-tuning capabilities, dedicated endpoints, and GPU clusters. Users can run or fine-tune leading open source models with only a few lines of code. The platform provides a range of functionalities for tasks such as chat, vision, text-to-speech, code/language reranking, and more. Together AI aims to simplify the process of utilizing AI models for various applications.

Sapien.io

Sapien.io is a decentralized data foundry that offers data labeling services powered by a decentralized workforce and a gamified platform. The platform provides high-quality training data for large language models through a human-in-the-loop labeling process, supplying the datasets needed to fine-tune performant AI models. Sapien combines AI and human intelligence to collect and annotate various data types for any model, offering customized data collection and labeling models across industries.

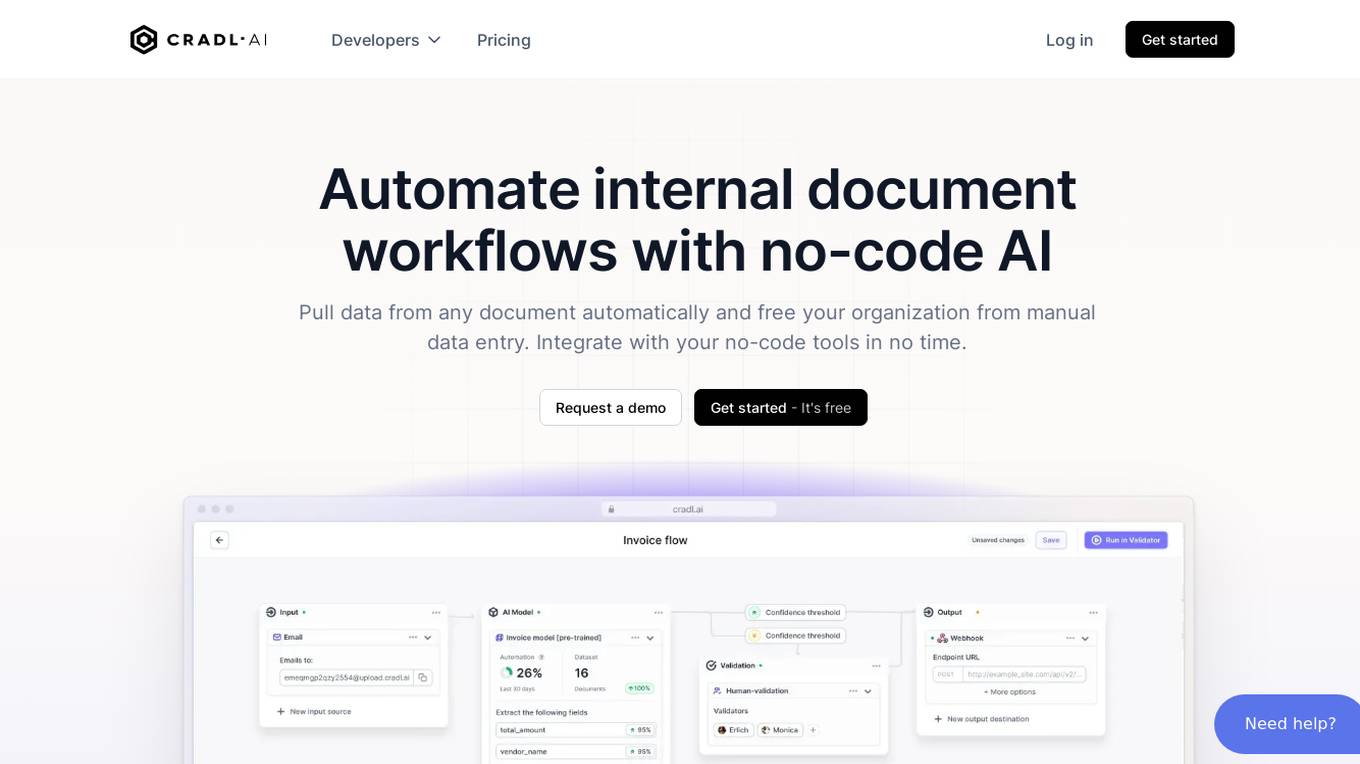

Cradl AI

Cradl AI is an AI-powered tool designed to automate document workflows with no-code AI. It enables users to extract data from any document automatically, integrate with no-code tools, and build custom AI models through an easy-to-use interface. The tool empowers automation teams across industries by extracting data from complex document layouts, regardless of language or structure. Cradl AI offers features such as line item extraction, fine-tuning AI models, human-in-the-loop validation, and seamless integration with automation tools. It is trusted by organizations for business-critical document automation, providing enterprise-level features like encrypted transmission, GDPR compliance, secure data handling, and auto-scaling.

Welo Data

Welo Data is an AI tool that specializes in AI benchmarking, model assessment, and high-quality training datasets for AI models. The platform offers services such as supervised fine-tuning, reinforcement learning with human feedback, data generation, expert evaluations, and a data quality framework to support the development of world-class AI models. With over 27 years of experience, Welo Data combines language expertise and AI data to deliver training and performance evaluation solutions.

LexEdge

LexEdge is an AI-powered legal practice management solution that revolutionizes how legal professionals handle their responsibilities. It offers advanced features like case tracking, client communications, AI chatbot assistance, document automation, task management, and detailed reporting and analytics. LexEdge enhances productivity, accuracy, and client satisfaction by leveraging technologies such as AI, large language models (LLM), retrieval-augmented generation (RAG), fine-tuning, and custom model training. It caters to solo practitioners, small and large law firms, and corporate legal departments, providing tailored solutions to meet their unique needs.

Tensoic AI

Tensoic AI is an AI tool for fine-tuning custom Large Language Models (LLMs) and running inference on them. It offers ultra-fast fine-tuning and inference capabilities for enterprise-grade LLMs, with a focus on use case-specific tasks. The tool is efficient, cost-effective, and easy to use, enabling users to outperform general-purpose LLMs using synthetic data. Tensoic AI generates small, powerful models that can run on consumer-grade hardware, making it suitable for a wide range of applications.

Entry Point AI

Entry Point AI is a modern AI optimization platform for fine-tuning proprietary and open-source language models. It provides a user-friendly interface to manage prompts, fine-tunes, and evaluations in one place. The platform enables users to optimize models from leading providers, train across providers, work collaboratively, write templates, import/export data, share models, and avoid common pitfalls associated with fine-tuning. Entry Point AI simplifies the fine-tuning process, making it accessible to users without the need for extensive data, infrastructure, or insider knowledge.

Framer

Framer is a design tool that allows users to create interactive prototypes for web and mobile applications. It provides a platform for designers to easily visualize and test their designs, helping them to iterate quickly and efficiently. With Framer, users can create animations, transitions, and interactions to bring their designs to life. The tool offers a range of features to enhance the design process, making it a valuable asset for designers looking to create engaging user experiences.

Airtrain

Airtrain is a no-code compute platform for Large Language Models (LLMs). It provides a user-friendly interface for fine-tuning, evaluating, and deploying custom AI models. Airtrain also offers a marketplace of pre-trained models that can be used for a variety of tasks, such as text generation, translation, and question answering.

Trieve

Trieve is an AI-first infrastructure API that offers search, recommendations, and RAG capabilities by combining language models with tools for fine-tuning ranking and relevance. It helps companies build unfair competitive advantages through their discovery experiences, powering over 30,000 discovery experiences across various categories. Trieve supports semantic vector search, BM25 & SPLADE full-text search, hybrid search, merchandising & relevance tuning, and sub-sentence highlighting. The platform is built on open-source models, ensuring data privacy, and offers self-hostable options for sensitive data and maximum performance.

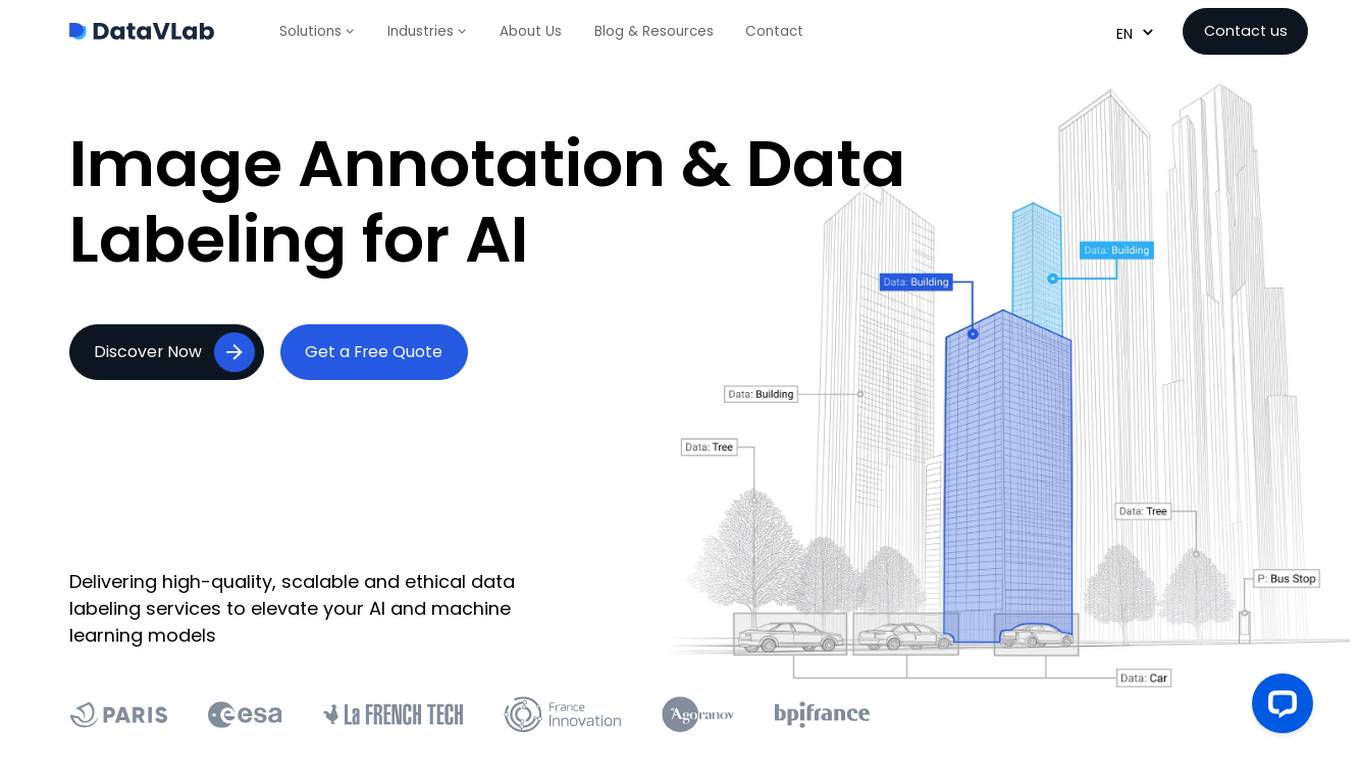

DataVLab Solutions

DataVLab Solutions is an AI-powered platform offering comprehensive image annotation and data labeling services for AI applications. They provide high-quality, scalable, and ethical data labeling solutions to enhance AI and machine learning models. With expertise in image, video, 3D annotation, custom AI projects, NLP, and text annotation, DataVLab Solutions caters to various industries such as energy infrastructure, autonomous vehicles, agriculture, medical, e-commerce, and insurance. Their advanced annotation process accelerates data labeling, ensuring precision and efficiency. Leveraging AI-driven tools, they offer tailor-made annotation workflows for unique AI challenges, delivering high-quality annotations for computer vision models, dynamic data, and spatial AI. The platform also provides training data and fine-tuning support for Large Language Models and generative AI applications.

hoopsAI

hoopsAI is a technology company focused on empowering retail investors in the stock market. Its platform uses large language models (LLMs) to provide personalized insights. Through continuous fine-tuning, it improves customization and precision, helping users make informed decisions and better understand the markets.

Helix AI

Helix AI is a private GenAI platform that enables users to build AI applications using open source models. The platform offers tools for RAG (Retrieval-Augmented Generation) and fine-tuning, allowing deployment on-premises or in a Virtual Private Cloud (VPC). Users can access curated models, utilize Helix API tools to connect internal and external APIs, embed Helix Assistants into websites/apps for chatbot functionality, write AI application logic in natural language, and benefit from the innovative RAG system for Q&A generation. Additionally, users can fine-tune models for domain-specific needs and deploy securely on Kubernetes or Docker in any cloud environment. Helix Cloud offers free and premium tiers with GPU priority, catering to individuals, students, educators, and companies of varying sizes.

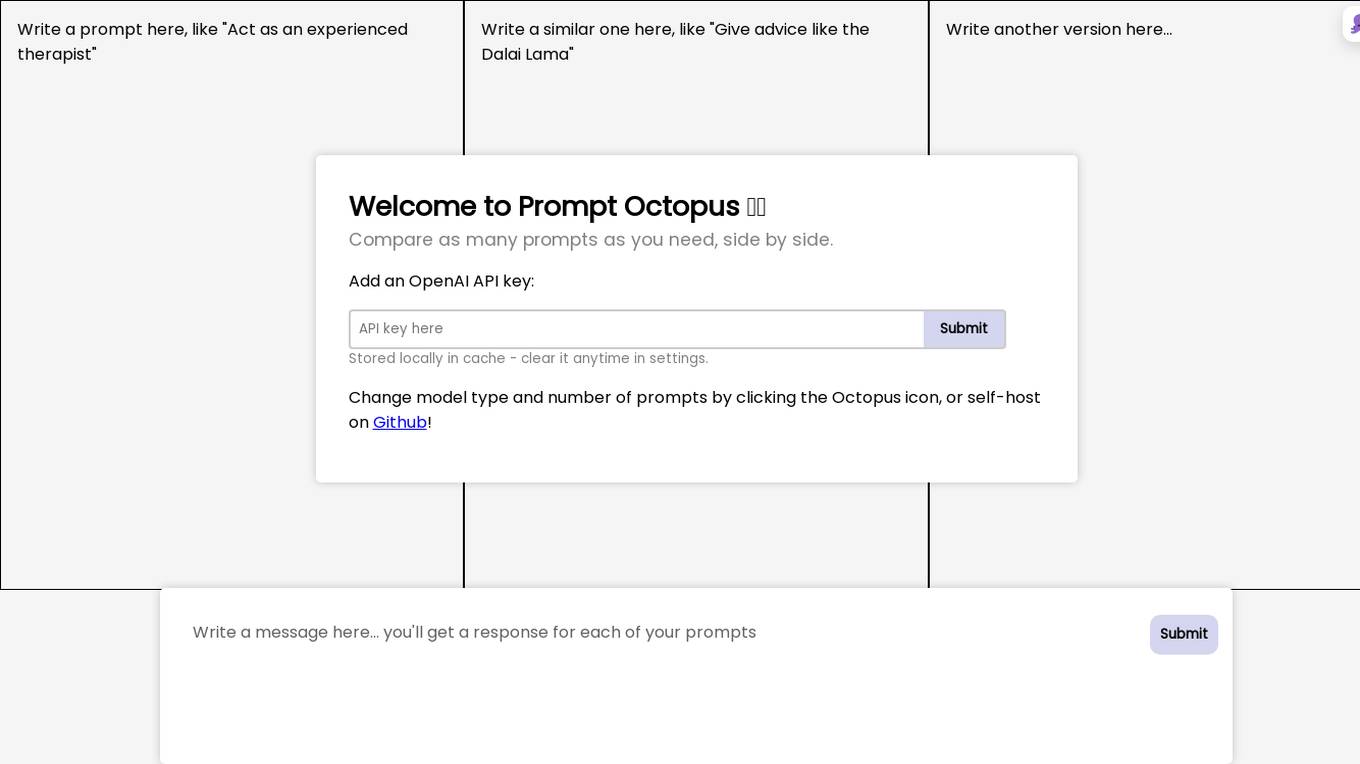

Prompt Octopus

Prompt Octopus is a free tool that allows you to compare multiple prompts side-by-side. You can add as many prompts as you need and view the responses in real-time. This can be helpful for fine-tuning your prompts and getting the best possible results from your AI model.

Fifi.ai

Fifi.ai is a managed AI cloud platform that provides users with the infrastructure and tools to deploy and run AI models. The platform is designed to be easy to use, with a focus on plug-and-play functionality. Fifi.ai also offers a range of customization and fine-tuning options, allowing users to tailor the platform to their specific needs. The platform is supported by a team of experts who can provide assistance with onboarding, API integration, and troubleshooting.

Unsloth

Unsloth is an AI tool designed to make fine-tuning large language models such as Llama-3, Mistral, Phi-3, and Gemma 2x faster while using 70% less memory, with no degradation in accuracy. Its documentation guides users through training custom models, covering essentials such as installing and updating Unsloth, creating datasets, and running and deploying models. Users can also integrate third-party tools and use platforms like Google Colab.

1 - Open Source Tools

LLM-Finetune-Guide

This project provides a comprehensive guide to fine-tuning large language models (LLMs) with efficient methods like LoRA and P-tuning V2. It includes detailed instructions, code examples, and performance benchmarks for various LLMs and fine-tuning techniques. The guide also covers data preparation, evaluation, prediction, and running inference on CPU environments. By leveraging this guide, users can effectively fine-tune LLMs for specific tasks and applications.
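
For readers unfamiliar with LoRA, the idea can be sketched in a few lines of plain Python. This is an illustrative sketch only, not code from LLM-Finetune-Guide: the names `matvec`, `lora_forward`, and `trainable_params` are hypothetical, and the scaling by `alpha / r` follows the common LoRA convention. The frozen weight matrix `W` stays untouched, and only two small matrices `A` (r x d_in) and `B` (d_out x r) are trained.

```python
# Illustrative LoRA sketch in plain Python (no external libraries).
# All function and parameter names are hypothetical, chosen for this example.

def matvec(M, v):
    """Multiply matrix M (list of rows) by vector v."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def lora_forward(x, W, A, B, alpha):
    """Return W x + (alpha / r) * B (A x), where r is the LoRA rank.

    W: frozen weights, shape d_out x d_in
    A: trainable,      shape r x d_in
    B: trainable,      shape d_out x r (usually initialized to zeros)
    """
    r = len(A)                           # rank = number of rows in A
    base = matvec(W, x)                  # frozen path
    update = matvec(B, matvec(A, x))     # low-rank path
    scale = alpha / r
    return [b + scale * u for b, u in zip(base, update)]

def trainable_params(d_out, d_in, r):
    """Number of trainable parameters in the LoRA adapter."""
    return r * (d_in + d_out)

if __name__ == "__main__":
    W = [[1.0, 0.0], [0.0, 1.0]]         # frozen 2x2 identity
    A = [[1.0, 1.0]]                     # rank 1
    B_zero = [[0.0], [0.0]]              # zero init: adapter has no effect yet
    print(lora_forward([2.0, 3.0], W, A, B_zero, alpha=2.0))
    # Adapter size versus full fine-tuning for a 4096 x 4096 layer at rank 8
    print(trainable_params(4096, 4096, 8), 4096 * 4096)
```

With `B` initialized to zeros the adapted layer starts out identical to the frozen one, which is why LoRA training begins from the base model's behavior. For a 4096 x 4096 layer at rank 8, the adapter trains 65,536 parameters instead of 16,777,216.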

20 - OpenAI GPTs

Pytorch Trainer GPT

Your purpose is to create the PyTorch code to train language models using PyTorch.

HuggingFace Helper

A witty yet succinct guide for HuggingFace, offering technical assistance on using the platform - based on their Learning Hub

Enough

As the smallest language model (SLM) chatbot in existence, Enough responds with only one word.

HackingPT

HackingPT is a specialized language model focused on cybersecurity and penetration testing, committed to providing precise and in-depth insights in these fields.

Discrete Mathematics

Precision-focused Language Model for Discrete Mathematics, ensuring unmatched accuracy and error avoidance.

OneWord GPT

SuccintBot delivers concise one-word answers, offering a unique twist on language model interactions with brevity at its core.

LFG GPT

Talk to Navigation with Large Language Models: Semantic Guesswork as a Heuristic for Planning (LFG)

Find Any GPT In The World

I help you find the perfect GPT model for your needs. From GPT Design, GPT Business, SEO, Content Creation or GPTs for Social Media we have you covered.